Towards Loosely-Coupled Programming on Petascale Systems

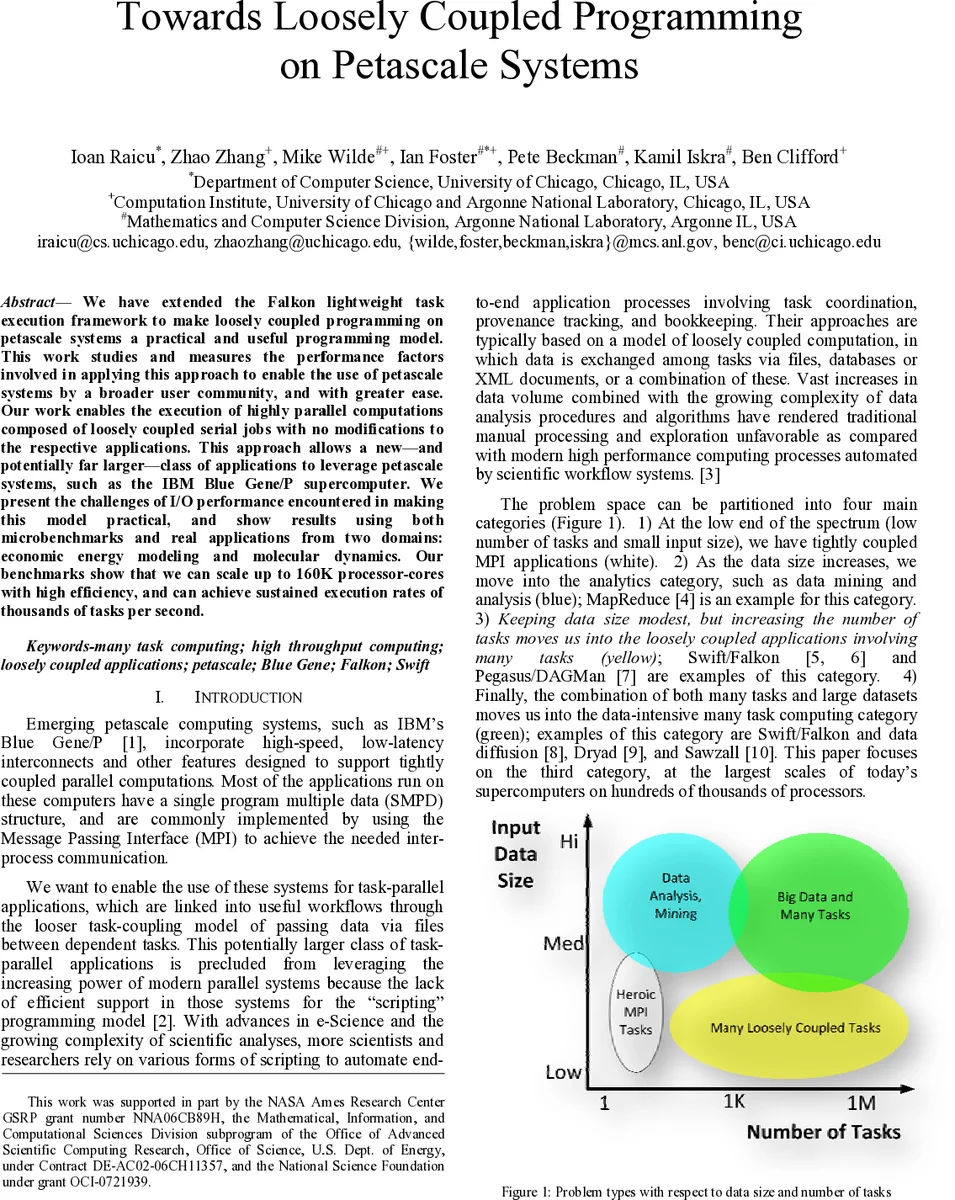

We have extended the Falkon lightweight task execution framework to make loosely coupled programming on petascale systems a practical and useful programming model. This work studies and measures the performance factors involved in applying this approach to enable the use of petascale systems by a broader user community, and with greater ease. Our work enables the execution of highly parallel computations composed of loosely coupled serial jobs with no modifications to the respective applications. This approach allows a new (and potentially far larger) class of applications to leverage petascale systems, such as the IBM Blue Gene/P supercomputer. We present the challenges of I/O performance encountered in making this model practical, and show results using both microbenchmarks and real applications from two domains: economic energy modeling and molecular dynamics. Our benchmarks show that we can scale up to 160K processor-cores with high efficiency, and can achieve sustained execution rates of thousands of tasks per second.

💡 Research Summary

The paper presents an extension of the Falkon lightweight task execution framework that makes loosely‑coupled programming practical on petascale supercomputers such as IBM’s Blue Gene/P. Traditional high‑performance computing (HPC) environments rely on tightly‑coupled MPI programs, which are ill‑suited for workloads composed of a very large number of short, independent serial jobs. The authors argue that the lack of scalable scheduling and the severe I/O bottlenecks of the parallel file system (PFS) prevent petascale machines from being used by a broader scientific community. To address these issues, they redesign Falkon’s architecture to operate efficiently at the scale of hundreds of thousands of cores.

Key technical contributions include:

- Multi‑level, asynchronous dispatch: Tasks are placed into priority queues and delivered to workers via non‑blocking RPC calls. This eliminates the central scheduler’s contention when thousands of workers request work simultaneously.
- Data‑staging layer: Input files are pre‑copied to local SSDs or memory buffers on each worker before execution, while output files are aggregated and transferred in batches. By limiting per‑task I/O to roughly 10 KB and reducing metadata operations, the approach dramatically lowers pressure on the PFS.
- Robustness mechanisms: A heartbeat protocol monitors worker health, and a retry policy automatically resubmits failed tasks, providing resilience against node or network faults that become common at large scale.
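The dispatch and retry ideas in the list above can be illustrated with a small single-process sketch. It is not Falkon's implementation: threads stand in for remote workers, a `queue.PriorityQueue` stands in for the dispatcher's queues, and the names `run_tasks` and `MAX_RETRIES` (and the retry limit of 3) are assumptions made for illustration. The heartbeat protocol is left out.

```python
import queue
import threading

MAX_RETRIES = 3  # assumed retry limit for illustration; not taken from the paper


def run_tasks(tasks, num_workers=4):
    """Run (priority, name, callable) tasks on a pool of workers that pull work.

    Returns (results, failed): results maps task name to return value,
    failed maps task name to the last error after MAX_RETRIES attempts.
    """
    pending = queue.PriorityQueue()
    results = {}
    failed = {}
    lock = threading.Lock()

    for seq, (priority, name, func) in enumerate(tasks):
        # seq is unique, so tuple comparison never reaches the callable.
        pending.put((priority, seq, name, func, 0))

    def worker():
        while True:
            try:
                # Non-blocking pull: a worker never waits on the queue.
                priority, seq, name, func, attempts = pending.get_nowait()
            except queue.Empty:
                return
            try:
                value = func()
            except Exception as exc:
                if attempts + 1 < MAX_RETRIES:
                    # Retry policy: resubmit the failed task to the queue.
                    pending.put((priority, seq, name, func, attempts + 1))
                else:
                    with lock:
                        failed[name] = repr(exc)
            else:
                with lock:
                    results[name] = value

    threads = [threading.Thread(target=worker) for _ in range(num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results, failed
```

A worker that resubmits a task loops again before exiting, so a requeued task is always picked up even if every other worker has already drained the queue and stopped.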

The authors evaluate the system with both synthetic micro‑benchmarks and two real scientific applications: an economic energy‑modeling suite and a molecular‑dynamics (MD) simulation framework. In micro‑benchmarks, Falkon sustains a throughput of about 1,200 tasks per second and achieves 85 % parallel efficiency when scaling up to 160 K cores (40 K nodes). The I/O optimizations prevent the dramatic slowdown observed in conventional batch schedulers beyond 10 K cores.
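To see what these figures imply for task granularity, consider a simple steady-state model in which dispatch rate is the only bottleneck. This model and the function names below are assumptions for illustration, not something the paper states. Under it, a dispatcher issuing r tasks per second can keep N cores busy only if the average task runs for at least N / r seconds.

```python
def min_task_seconds(cores, dispatch_rate):
    """Shortest average task length (s) at which dispatch keeps all cores busy."""
    return cores / dispatch_rate


def dispatch_bound_efficiency(task_seconds, cores, dispatch_rate):
    """Upper bound on utilization when dispatch rate is the only bottleneck."""
    return min(1.0, task_seconds * dispatch_rate / cores)


if __name__ == "__main__":
    cores = 160_000   # "160 K cores" from the summary
    rate = 1_200      # "about 1,200 tasks per second" from the summary
    print(f"minimum task length: {min_task_seconds(cores, rate):.1f} s")
    print(f"bound for 60 s tasks: {dispatch_bound_efficiency(60, cores, rate):.2f}")
```

With the numbers quoted above, tasks need to average roughly 133 seconds before dispatch stops being the limit. Under the same model, 60-second tasks could use at most 45 % of the machine.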

The real applications show similar gains. The energy‑modeling workload, consisting of several hundred thousand independent simulations, completes in a few hours using Falkon, down from a multi‑day run on a traditional batch system. The MD workload, which also comprises hundreds of thousands of short simulations, attains a task success rate above 99.8 % and maintains system utilization above 70 %. These experiments demonstrate that the extended Falkon framework can effectively harness petascale resources for a class of problems previously considered unsuitable for such machines.

The paper also discusses limitations and future directions. While the current implementation focuses on file‑based I/O, extending the model to pure in‑memory or streaming data flows would broaden its applicability. Supporting other petascale architectures (e.g., Cray XE, Sunway TaihuLight) and integrating dynamic workload prediction via machine‑learning models are identified as promising research avenues.

In conclusion, the work shows that by decoupling task scheduling from the heavyweight MPI paradigm, introducing asynchronous dispatch, and carefully engineering I/O pathways, loosely‑coupled serial jobs can be executed at petascale with high efficiency. This opens supercomputers to a much larger user base and enables new scientific investigations that rely on massive ensembles of independent simulations.
