Training a Probabilistic Graphical Model with Resistive Switching Electronic Synapses

Current large-scale implementations of deep learning and data mining require thousands of processors and massive amounts of off-chip memory, and consume gigajoules of energy. Emerging memory technologies such as nanoscale two-terminal resistive switching memory devices offer a compact, scalable, and low-power alternative that permits on-chip co-located processing and memory in a fine-grained, distributed parallel architecture. Here we report the first use of resistive switching memory devices for implementing and training a Restricted Boltzmann Machine (RBM), a generative probabilistic graphical model that is a key component for unsupervised learning in deep networks. We experimentally demonstrate a 45-synapse RBM realized with 90 resistive switching phase change memory (PCM) elements trained with a bio-inspired variant of the Contrastive Divergence (CD) algorithm, implementing Hebbian and anti-Hebbian weight updates. The resistive PCM devices show a two-fold to ten-fold reduction in error rate in a missing-pixel pattern completion task trained over 30 epochs, compared to the untrained case. Measured programming energy consumption is 6.1 nJ per epoch with the resistive switching PCM devices, a factor of ~150 lower than conventional processor-memory systems. We analyze and discuss the dependence of learning performance on cycle-to-cycle variations as well as the number of gradual levels in the PCM analog memory devices.

💡 Research Summary

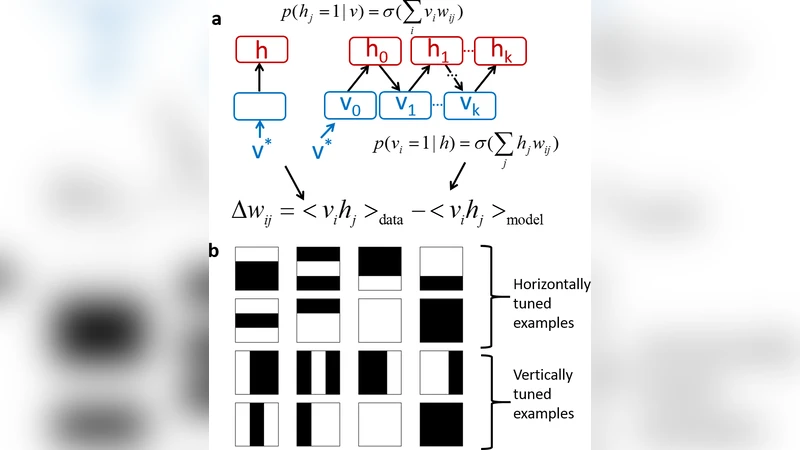

The paper addresses the growing energy and memory bandwidth challenges of large‑scale deep learning by leveraging nanoscale two‑terminal resistive switching devices, specifically phase‑change memory (PCM), as both storage and compute elements. The authors demonstrate, for the first time, the implementation and training of a Restricted Boltzmann Machine (RBM) – a generative probabilistic graphical model used for unsupervised learning – directly on PCM hardware.

Hardware architecture: A 45‑synapse RBM (45 visible‑hidden connections) is realized with 90 PCM cells, allocating two cells per weight so that the positive and negative contributions to the weight can each be stored as a conductance. PCM’s gradual set/reset capability enables analog weight updates by applying current pulses of controlled amplitude and duration. The authors calibrate pulse parameters to achieve fine‑grained resistance modulation while accounting for the intrinsic asymmetry between set (low‑resistance) and reset (high‑resistance) operations.
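The two-cells-per-weight arrangement can be illustrated with a minimal sketch. It assumes a common differential scheme in which the effective weight is the difference of the two cell conductances and each programming pulse moves one cell up by one gradual level. The class name `DifferentialSynapse`, the level count `N_LEVELS`, and the normalized conductance range are hypothetical choices for illustration, not values or circuits taken from the paper.

```python
from dataclasses import dataclass

# All constants below are illustrative assumptions, not measured device values.
N_LEVELS = 8                      # assumed number of gradual levels per cell
G_MIN, G_MAX = 0.0, 1.0           # assumed normalized conductance range
STEP = (G_MAX - G_MIN) / (N_LEVELS - 1)


@dataclass
class DifferentialSynapse:
    """One weight stored in two cells; weight = G_plus - G_minus (assumed scheme)."""
    level_plus: int = 0           # gradual level of the "positive" cell
    level_minus: int = 0          # gradual level of the "negative" cell

    def potentiate(self) -> None:
        # One pulse raises the positive cell by one level, saturating at the top.
        self.level_plus = min(self.level_plus + 1, N_LEVELS - 1)

    def depress(self) -> None:
        # One pulse raises the negative cell by one level, saturating at the top.
        self.level_minus = min(self.level_minus + 1, N_LEVELS - 1)

    def weight(self) -> float:
        # Effective signed weight is the conductance difference of the pair.
        return (self.level_plus - self.level_minus) * STEP


if __name__ == "__main__":
    syn = DifferentialSynapse()
    syn.potentiate()
    syn.potentiate()
    syn.depress()
    print(syn.weight())
```

With 45 such pairs the array would use 90 cells, matching the cell count reported above. Storing integer levels rather than raw conductances keeps the saturation behavior explicit in this sketch.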

Learning algorithm: A bio‑inspired variant of Contrastive Divergence (CD‑1) is employed. During the data (positive) phase, co‑active visible‑hidden unit pairs trigger a Hebbian‑type decrease in resistance (weight increase). In the reconstruction (negative) phase, the same pairs cause an anti‑Hebbian increase in resistance (weight decrease). This dual update scheme maps naturally onto PCM’s ability to both lower and raise resistance, and the difference between the two phases approximates the gradient of the RBM’s log‑likelihood.
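The Hebbian and anti-Hebbian phases described above can be sketched in software as a fixed-step CD-1 update. This is a simplified illustration under stated assumptions: binary units, no bias terms, and a constant step size per co-activation standing in for one programming pulse. The function names (`hebbian_delta`, `cd1_update`) and the network size in the usage example are hypothetical and are not the authors' implementation.

```python
import math
import random


def sigmoid(x):
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def sample_hidden(W, v, rng):
    # Sample binary hidden units given visible units (no biases, by assumption).
    n_hidden = len(W[0])
    probs = [sigmoid(sum(W[i][j] * v[i] for i in range(len(v))))
             for j in range(n_hidden)]
    return [1 if rng.random() < p else 0 for p in probs]


def sample_visible(W, h, rng):
    # Sample binary visible units given hidden units.
    probs = [sigmoid(sum(W[i][j] * h[j] for j in range(len(h))))
             for i in range(len(W))]
    return [1 if rng.random() < p else 0 for p in probs]


def hebbian_delta(v, h, v_rec, h_rec, step):
    # +step for pairs co-active in the data phase (Hebbian),
    # -step for pairs co-active in the reconstruction phase (anti-Hebbian).
    return [[step * (v[i] * h[j] - v_rec[i] * h_rec[j])
             for j in range(len(h))] for i in range(len(v))]


def cd1_update(W, v, step, rng):
    # One CD-1 step: data phase, one reconstruction, then the signed update.
    h = sample_hidden(W, v, rng)
    v_rec = sample_visible(W, h, rng)
    h_rec = sample_hidden(W, v_rec, rng)
    delta = hebbian_delta(v, h, v_rec, h_rec, step)
    return [[W[i][j] + delta[i][j] for j in range(len(h))]
            for i in range(len(v))]


if __name__ == "__main__":
    rng = random.Random(0)
    W = [[0.0, 0.0] for _ in range(3)]   # 3 visible x 2 hidden, arbitrary size
    W = cd1_update(W, [1, 0, 1], step=0.1, rng=rng)
    print(W)
```

A pair that is co-active in both phases receives one increase and one decrease, which cancel in this sketch. In hardware the two pulses need not cancel exactly, which is one way set/reset asymmetry can enter the learning dynamics.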

Experimental results: The RBM is trained for 30 epochs on a missing‑pixel pattern‑completion task. Compared with the untrained network, the trained PCM‑RBM reduces the average pixel‑error rate by a factor of 2–10, confirming that the analog weight updates are sufficiently accurate despite cycle‑to‑cycle resistance variations (≈0.12 Ω standard deviation). Energy measurements show a programming cost of 6.1 nJ per epoch, which is roughly 150× lower than the energy required for an equivalent training run on a conventional processor‑memory system (≈900 nJ/epoch).
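The energy figures above can be cross-checked with simple arithmetic. The sketch below uses only the reported 6.1 nJ per epoch, the approximate 150× factor, and the 30 training epochs. The total over all epochs assumes the per-epoch programming energy is constant, which the source does not state. The function names are hypothetical.

```python
PCM_ENERGY_PER_EPOCH_NJ = 6.1   # reported programming energy per epoch
REDUCTION_FACTOR = 150          # reported approximate advantage over conventional systems
EPOCHS = 30                     # reported number of training epochs


def conventional_energy_per_epoch_nj(pcm_nj=PCM_ENERGY_PER_EPOCH_NJ,
                                     factor=REDUCTION_FACTOR):
    # Implied conventional cost per epoch: PCM cost times the reduction factor.
    return pcm_nj * factor


def total_energy_nj(per_epoch_nj, epochs=EPOCHS):
    # Total over training, assuming constant energy per epoch (an assumption).
    return per_epoch_nj * epochs


if __name__ == "__main__":
    print(conventional_energy_per_epoch_nj())        # about 915 nJ per epoch
    print(total_energy_nj(PCM_ENERGY_PER_EPOCH_NJ))  # about 183 nJ over 30 epochs
```

The implied conventional cost of about 915 nJ per epoch is consistent with the rounded ≈900 nJ/epoch figure, given that the 150× factor is itself approximate.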

Device‑level analysis: The authors explore how the number of distinguishable conductance levels (“gradual levels”) influences learning performance. When the PCM can resolve 4–8 levels, the RBM achieves stable convergence; reducing the levels to 2–3 leads to severe quantization error and a sharp drop in reconstruction quality. Conversely, increasing the level count beyond 10 yields diminishing returns because the dominant source of error becomes stochastic resistance drift and cycle‑to‑cycle variability rather than quantization. This study highlights the trade‑off between analog resolution and device variability, suggesting an optimal design window for future neuromorphic PCM arrays.
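The trade-off between level count and variability can be illustrated with a toy model. The sketch stores a target weight by snapping it to one of a fixed number of evenly spaced levels and then adding Gaussian noise as a stand-in for cycle-to-cycle variation. The weight range, the noise model, and the function names are assumptions for illustration only. The model does not simulate the RBM or reproduce the paper's results.

```python
import random


def quantize(w, n_levels, w_min=-1.0, w_max=1.0):
    # Snap a target weight to the nearest of n_levels evenly spaced values.
    step = (w_max - w_min) / (n_levels - 1)
    clipped = min(max(w, w_min), w_max)
    idx = round((clipped - w_min) / step)
    return w_min + idx * step


def rms_error(n_levels, noise_std, n_samples=2000, seed=0):
    # RMS gap between target and stored weight under quantization plus
    # additive Gaussian noise (an assumed model of cycle-to-cycle variation).
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        w = rng.uniform(-1.0, 1.0)
        stored = quantize(w, n_levels) + rng.gauss(0.0, noise_std)
        total += (stored - w) ** 2
    return (total / n_samples) ** 0.5


if __name__ == "__main__":
    for levels in (2, 4, 8, 16, 64):
        print(levels, rms_error(levels, noise_std=0.2))
```

In this toy model the error falls quickly as levels are added at first, then flattens near the noise standard deviation. That mirrors the qualitative point above: once variability dominates, extra levels bring diminishing returns.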

Limitations and future work: While the 45‑synapse prototype validates the concept, scaling to the millions of parameters typical of modern deep networks will require dense PCM cross‑bars, efficient peripheral circuitry for pulse generation, and robust error‑correction or variability‑compensation schemes. The authors propose integrating on‑chip calibration, stochastic sampling directly in the resistive domain, and hierarchical network architectures to mitigate these challenges.

In summary, the work provides a compelling proof‑of‑concept that resistive switching memory can serve as a low‑power, high‑density substrate for training probabilistic graphical models. By co‑locating memory and computation, the PCM‑based RBM achieves orders‑of‑magnitude energy savings while maintaining competitive learning performance, marking a significant step toward truly neuromorphic, on‑chip learning systems.
