Active Authentication on Mobile Devices via Stylometry, Application Usage, Web Browsing, and GPS Location

Active authentication is the problem of continuously verifying the identity of a person based on behavioral aspects of their interaction with a computing device. In this study, we collect and analyze behavioral biometrics data from 200 subjects, each …

Authors: Lex Fridman, Steven Weber, Rachel Greenstadt, Moshe Kam

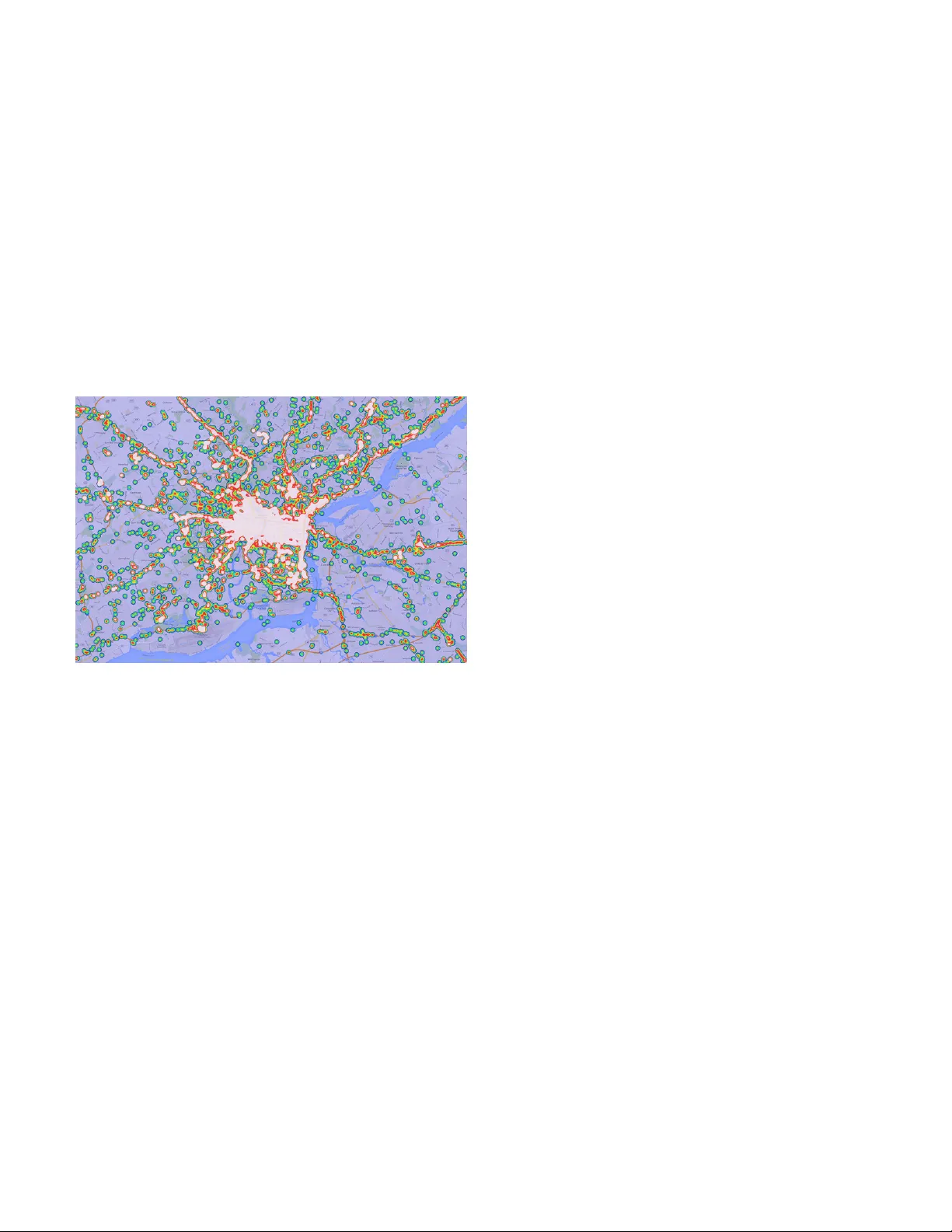

Lex Fridman∗, Steven Weber†, Rachel Greenstadt†, Moshe Kam‡

∗Massachusetts Institute of Technology, fridman@mit.edu
†Drexel University, {sweber@coe, greenie@cs}.drexel.edu
‡New Jersey Institute of Technology, moshe.kam@njit.edu

Abstract: Active authentication is the problem of continuously verifying the identity of a person based on behavioral aspects of their interaction with a computing device. In this study, we collect and analyze behavioral biometrics data from 200 subjects, each using their personal Android mobile device for a period of at least 30 days. This dataset is novel in the context of active authentication due to its size, duration, number of modalities, and absence of restrictions on tracked activity. The geographical colocation of the subjects in the study is representative of a large closed-world environment such as an organization, where the unauthorized user of a device is likely to be an insider threat, that is, someone coming from within the organization. We consider four biometric modalities: (1) text entered via soft keyboard, (2) applications used, (3) websites visited, and (4) physical location of the device as determined from GPS (when outdoors) or WiFi (when indoors). We implement and test a classifier for each modality and organize the classifiers as a parallel binary decision fusion architecture. We are able to characterize the performance of the system with respect to intruder detection time and to quantify the contribution of each modality to the overall performance.

Index Terms: Multimodal biometric systems, insider threat, intrusion detection, behavioral biometrics, decision fusion, active authentication, stylometry, GPS location, web browsing behavior, application usage patterns

I. INTRODUCTION

According to a 2013 Pew Internet Project study of 2076 people [1], 91% of American adults own a cellphone. Increasingly, people are using their phones to access and store sensitive data. The same study found that 81% of cellphone owners use their mobile device for texting, 52% use it for email, 49% use it for maps (enabling location services), and 29% use it for online banking. And yet, securing the data is often not taken seriously because of an inaccurate estimation of risk, as discussed in [2]. In particular, several studies have shown that a large percentage of smartphone owners do not lock their phone: 57% in [3], 33% in [4], 39% in [2], and 48% in this study.

Active authentication is an approach that monitors the behavioral biometric characteristics of a user's interaction with the device in order to secure the phone when the point-of-entry locking mechanism fails or is absent. In recent years, continuous authentication has been explored extensively on desktop computers, based either on a single biometric modality like mouse movement [5] or on a fusion of multiple modalities like keyboard dynamics, mouse movement, web browsing, and stylometry [6]. Unlike physical biometric devices such as fingerprint scanners or iris scanners, these systems rely on computer interface hardware, like the keyboard and mouse, that is already available with most computers.

In this paper, we consider the problem of active authentication on mobile devices, where the variety of available sensor data is much greater than on the desktop, but so is the variety of behavioral profiles, device form factors, and environments in which the device is used. Active authentication is the approach of verifying a user's identity continuously based on various sensors commonly available on the device.
We study four representative modalities: stylometry (text analysis), application usage patterns, web browsing behavior, and physical location of the device. These modalities were chosen, in part, due to their relatively low power consumption. In the remainder of the paper these four modalities will be referred to as TEXT, APP, WEB, and LOCATION, respectively.

We consider the trade-off between intruder detection time and detection error as measured by false accept rate (FAR) and false reject rate (FRR). The analysis is performed on a dataset, collected by the authors, of 200 subjects using their personal Android mobile device for a period of at least 30 days. To the best of our knowledge, this dataset is the first of its kind studied in the active authentication literature, due to its large size [7], the duration of tracked activity [8], and the absence of restrictions on usage patterns and on the form factor of the mobile device. The geographical colocation of the participants, in particular, makes the dataset a good representation of an environment such as a closed-world organization, where the unauthorized user of a particular device will most likely come from inside the organization.

We propose to use decision fusion in order to asynchronously integrate the four modalities and make serial authentication decisions. While we consider here a specific set of binary classifiers, the strength of our decision-level approach is that additional classifiers can be added without having to change the basic fusion rule. Moreover, it is easy to evaluate the marginal improvement that any added classifier brings to the overall performance of the system. We evaluate the multimodal continuous authentication system by characterizing the error rates of local classifier decisions, fused global decisions, and the contribution of each local classifier to the fused decision.
The novel aspects of our work include the scope of the dataset, the particular portfolio of behavioral biometrics in the context of mobile devices, and the extent of temporal performance analysis.

The remainder of the paper is structured as follows. In §II, we discuss the related work on multimodal biometric systems, active authentication on mobile devices, and each of the four behavioral biometrics considered in this paper. In §III, we discuss the 200-subject dataset that we collected and analyzed. In §IV, we discuss the four biometric modalities, their associated classifiers, and the decision fusion architecture. In §V, we present the performance of each individual classifier, the performance of the fusion system, and the contribution of each individual classifier to the fused decisions.

II. RELATED WORK

A. Multimodal Biometric Systems

The time window over which an active authentication system must make a binary decision is relatively short and thus contains a highly variable set of biometric information. Depending on the task the user is engaged in, some of the biometric classifiers may provide more data than others. For example, as the user chats with a friend via SMS, the text-based classifiers will be flooded with data, while the web-browsing-based classifiers may only get a few infrequent events. This motivates the recent work on multimodal authentication systems where the decisions of multiple classifiers are fused together [9]. In this way, the verification process is more robust to the dynamic nature of human-computer interaction. The current approaches to the fusion of classifiers center around max, min, median, or majority vote combinations [10]. When neural networks are used as classifiers, an ensemble of classifiers is constructed and fused based on different initializations of the neural network [11].
Several active authentication studies have utilized multimodal biometric systems, but all of them, to the best of our knowledge, (1) considered a smaller pool of subjects, (2) did not characterize the temporal performance of intruder detection, and (3) showed significantly worse overall performance than that achieved in our study.

Our approach in this paper is to apply the Chair-Varshney optimal fusion rule [12] to combine the available multimodal decisions. The strength of the decision-level fusion approach is that an arbitrary number of classifiers can be added without re-training the classifiers already in the system. This modular design allows multiple groups to contribute drastically different classification schemes, each lowering the error rate of the global decision.

B. Mobile Active Authentication

With the rise of smartphone usage, active authentication on mobile devices has begun to be studied in the last few years. The large number of available sensors makes for a rich feature space to explore. Ultimately, the question is the one that we ask in this paper: which modality contributes the most to a decision fusion system toward the goal of fast, accurate verification of identity?

Most of the studies focus on a single modality. For example, gait pattern was considered in [7], achieving an EER of 0.201 (20.1%) for 51 subjects during two short sessions in which each subject was tasked with walking down a hallway. Some studies have incorporated multiple modalities. For example, keystroke dynamics, stylometry, and behavioral profiling were considered in [13], achieving an EER of 0.033 (3.3%) for 30 simulated users. The data for these users was pieced together from different datasets.
To the best of our knowledge, the dataset that we collected and analyzed is unique in all its key aspects: its size (200 subjects), its duration (30+ days), and the size of the portfolio of modalities that were all tracked concurrently with a synchronized timestamp.

C. Stylometry, Web Browsing, Application Usage, Location

Stylometry is the study of linguistic style. It has been extensively applied to the problems of authorship attribution, identification, and verification. See [14] for a thorough summary of stylometric studies in each of these three problem domains along with their study parameters and the resulting accuracy. These studies traditionally use large sets of features (see Table II in [15]) in combination with support vector machines (SVMs), which have proven effective in high-dimensional feature spaces [16], even when the number of features exceeds the number of samples. Nevertheless, with these approaches, more than 500 words are often required in order to achieve adequately low error rates [17]. This makes them impractical for real-time active authentication on mobile devices, where text data comes in short bursts. Unlike the other three modalities, stylometry has been well investigated in the context of active authentication. Therefore, for this modality we do not reinvent the wheel; we implement the n-gram analysis approach presented in [14], which has been shown to work sufficiently well on short blocks of text.

Web browsing, application usage, and location have not been studied extensively in the context of active authentication. The following is a discussion of the few studies that we are aware of.

Web browsing behavior has been studied for the purpose of understanding user behavior, habits, and interests [18].
Web browsing as a source of behavioral biometric data was considered in [19], achieving an average identification FAR/FRR of 0.24 (24%) on a dataset of 14 desktop computer users. Application usage was considered in [8], where cellphone data (from 2004) from the MIT Reality Mining project [20] was used to achieve an EER of 0.1 (10%) based on a portfolio of metrics including application usage, call patterns, and location. Application usage and movement patterns have been studied as part of behavioral profiling in cellular networks [8], [21], [22]. However, these approaches use position data of lower resolution in time and space than that provided by GPS on smartphones. To the best of our knowledge, GPS traces have not been utilized in the literature for continuous authentication.

III. DATASET

The dataset used in this work contains behavioral biometrics data for 200 subjects. The collection of the data was carried out by the authors over a period of 5 months. The requirements of the study were that each subject was a student or employee of Drexel University and was an owner and an active user of an Android smartphone or tablet. The number of subjects with each major Android version and associated API level is listed in Table I. The Nexus 5 was the most popular device, with 10 subjects using it. The Samsung Galaxy S5 was the second most popular device, with 6 subjects using it.

| Android Version | API Level | Subjects |
|---|---|---|
| 4.4 | 19 | 143 |
| 4.1 | 16 | 16 |
| 4.3 | 18 | 15 |
| 4.2 | 17 | 9 |
| 4.0.4 | 15 | 5 |
| 2.3.6 | 10 | 4 |
| 4.0.3 | 15 | 3 |
| 2.3.5 | 10 | 3 |
| 2.2 | 8 | 2 |

TABLE I: The Android version and API level of the 200 devices that were part of the study.

A tracking application was installed on each subject's device and operated for a period of at least 30 days, until the subject came in to approve the collected data and have the tracking application uninstalled from their device. The following data modalities were tracked with 1-second resolution:

• Text typed via soft keyboard.
• Apps visited.
• Websites visited.
• Location (based on GPS or WiFi).

The key characteristics of this dataset are its large size (200 users), the duration of tracked activity (30+ days), and the geographical colocation of its participants in the Philadelphia area. Moreover, we did not place any restrictions on usage patterns, on the type of Android device, or on the Android OS version (see Table I).

Several challenges were encountered in the collection of the data. The biggest problem was battery drain. Due to the long duration of the study, we could not enable modalities whose tracking proved to drain the battery significantly. These modalities include front-facing video for eye tracking and face recognition, gyroscope, accelerometer, and touch gestures. Moreover, we had to reduce the GPS sampling frequency to once per minute on most of the devices.

| Event | Frequency |
|---|---|
| Text | 23,254,478 |
| App | 927,433 |
| Web | 210,322 |
| Location | 143,875 |

TABLE II: The number of events in the dataset associated with each of the four modalities considered in this paper. A TEXT event refers to a single character entered on the soft keyboard. An APP event refers to a new app receiving focus. A WEB event refers to a new URL entered in the URL box. A LOCATION event refers to a new sample of the device location, either from GPS or WiFi.

Table II shows statistics on each of the four investigated modalities in the corpus. The table contains data aggregated over all 200 users. The "frequency" here is a count of the number of instances of an action associated with that modality. As stated previously, the four modalities will be referred to as TEXT, APP, WEB, and LOCATION. For TEXT, the action is a single keystroke on the soft keyboard. For APP, the action is opening or bringing focus to a new app. For WEB, the action is visiting a new website. For LOCATION, no explicit action is taken by the user.
Rather, location is sampled regularly at intervals of 1 minute when GPS is enabled. As Table II suggests, TEXT events fire 1-2 orders of magnitude more frequently than the other three.

Fig. 1: The duration of time (in hours) that each of the 200 users actively interacted with their device. [Plot: duration of active interaction (hours) for the 200 users, ordered from least to most active.]

The data for each user is processed to remove idle periods when the device is not active. The threshold for what is considered an idle period is 5 minutes. For example, if the time between event A and event B is 20 minutes, with no other events in between, these 20 minutes are compressed down to 5 minutes. The date and time of the event are not changed, but the timestamp used in dividing the dataset for training and testing (see §V-A) is updated to reflect the new time between event A and event B. This compression of idle times is performed in order to regularize periods of activity for cross-validation that utilizes time-based windows, as described in §V-A. The resulting compressed timestamps are referred to as "active interaction". Fig. 1 shows the duration (in hours) of active interaction for each of the 200 users, ordered from least to most active.

Table III shows three top-20 lists: (1) the top-20 apps based on the amount of text that was typed inside each app, (2) the top-20 apps based on the number of times they received focus, and (3) the top-20 website domains based on the number of times a website associated with that domain was visited. These are aggregate measures across the dataset, intended to provide an intuition about its structure and content, but a top-20 list of the same form is used by the classifier models based on the WEB and APP features in §IV.

Fig. 2: An aggregate heatmap showing a selection from the dataset of GPS locations in the Philadelphia area.

Fig. 2 shows a heat map visualization of a selection from the dataset of GPS locations in the Philadelphia area. The subjects in the study resided in Philadelphia but traveled all over the United States and the world. There are two key characteristics of the GPS location data. First, it is relatively unique to each individual, even for people living in the same area of a city. Second, outside of occasional travel, it does not vary significantly from day to day. Human beings are creatures of habit, and inasmuch as location is a measure of habit, this idea is confirmed by the location data of the majority of the subjects in the study.

IV. CLASSIFICATION AND DECISION FUSION

A. Features and Classifiers

The four distinct biometric modalities considered in our analysis are (1) text entered via soft keyboard, (2) applications used, (3) websites visited, and (4) physical location of the device as determined from GPS (when outdoors) or WiFi (when indoors). We refer to these four modalities as TEXT, APP, WEB, and LOCATION, respectively. In this section we discuss the features that were extracted from the raw data of each modality, and the classifiers that were used to map these features into binary decision space.

A binary classifier is constructed for each of the 200 users and 4 modalities. In total, there are 800 classifiers, each producing either a probability $P(H_1)$ that a user is valid or a binary decision of 0 (invalid) or 1 (valid). The first class ($H_1$) for each classifier is trained on the valid user's data and the second class ($H_0$) is trained on the other 199 users' data. The training process is described in more detail in §V-A. For APP, WEB, and LOCATION, the classifier takes a single instance of the event and produces a probability.
For multiple events of the same modality, the set of probabilities is fused across time using maximum likelihood:

$$H^* = \underset{i \in \{0,1\}}{\operatorname{argmax}} \prod_{x_t \in \Omega} P(x_t \mid H_i), \tag{1}$$

where $\Omega = \{x_t \mid T_{\text{current}} - T(x_t) \le \omega\}$, $\omega$ is a fixed window size in seconds, $T(x_t)$ is the timestamp of event $x_t$, and $T_{\text{current}}$ is the current timestamp. The process of fusing classifier scores across time is illustrated in Fig. 3.

1) Text: As Table IIIa indicates, the apps into which text was entered on mobile devices varied, but the activity in the majority of cases was communication via SMS, MMS, WhatsApp, Facebook, Google Hangouts, and other chat apps. Therefore, TEXT events fired in short bursts. The tracking application captured the keys that were touched on the keyboard and not the autocorrected result. Therefore, the majority of the typed messages contained many misspellings and words that were erased in the final submitted message. In the case of SMS, we were also able to record the submitted result. For example, an SMS text that was submitted as "Sorry couldn't call back." had associated with it the following recorded keystrokes: "Sprry coyld cpuldn't vsll back." Classification based on the keys actually typed is, in principle, a better representation of the person's linguistic style, since it captures typing idiosyncrasies that autocorrect can conceal. As discussed in §II, we implemented a one-feature n-gram classifier from [14] that has been shown to work well on short messages. It works by analyzing the presence or absence of n-grams with respect to the training set.

2) App and Web: The APP and WEB classifier models we construct are identical in their structure. For the APP modality we use the app name as the unique identifier and count the number of times a user visits each app in the training set.
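
As an illustration of the temporal fusion rule in (1), the following Python sketch evaluates the windowed product in the log domain. It is a minimal example under stated assumptions and not the authors' C++ implementation: the function name `fuse_over_window`, the `(timestamp, p_h0, p_h1)` event layout, the probability floor `eps`, the exclusion of events newer than the current time, and the tie-breaking toward $H_0$ are all hypothetical choices made for illustration.

```python
import math


def fuse_over_window(events, t_current, omega, eps=1e-12):
    """Windowed maximum-likelihood decision in the spirit of Eq. (1).

    events:    iterable of (timestamp, p_h0, p_h1) tuples for ONE modality,
               where p_h0 = P(x_t | H0) and p_h1 = P(x_t | H1).
               (This tuple layout is an assumption of this sketch.)
    t_current: current timestamp, in seconds.
    omega:     window size, in seconds.
    eps:       floor applied before taking logs (assumption, avoids log(0)).

    Returns 1 if H1 (valid user) is more likely, 0 if H0 (intruder) is,
    and None if no event falls inside the window.
    """
    log_l0 = 0.0
    log_l1 = 0.0
    n_events = 0
    for t, p_h0, p_h1 in events:
        age = t_current - t
        # Omega = {x_t : T_current - T(x_t) <= omega}; future events are
        # also skipped here, which is an assumption of this sketch.
        if 0 <= age <= omega:
            log_l0 += math.log(max(p_h0, eps))
            log_l1 += math.log(max(p_h1, eps))
            n_events += 1
    if n_events == 0:
        return None
    # Ties are resolved in favor of H0 (assumption).
    return 1 if log_l1 > log_l0 else 0


if __name__ == "__main__":
    demo = [(0, 0.99, 0.01), (50, 0.2, 0.8), (55, 0.3, 0.7)]
    print(fuse_over_window(demo, t_current=60, omega=30))
```

Summing logarithms rather than multiplying raw probabilities avoids numerical underflow when many TEXT events fall inside a single window.
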
For the WEB modality we use the domain of the URL as the unique identifier and count the number of times a user visits each domain in the training set. Note that, for example, "m.facebook.com" is considered a different domain than "www.facebook.com" because the subdomain is different. In this section we refer to the app name and the web domain as an "entity". Table IIIb and Table IIIc show the top entities aggregated across all 200 users for APP and WEB, respectively.

| (a) App Name | Keys Per App | (b) App Name | Visits | (c) Website Domain | Visits |
|---|---|---|---|---|---|
| com.android.sms | 5,617,297 | TouchWiz home | 101,151 | www.google.com | 19,004 |
| com.android.mms | 5,552,079 | WhatsApp | 64,038 | m.facebook.com | 9,300 |
| com.whatsapp | 4,055,622 | Messaging | 60,015 | www.reddit.com | 4,348 |
| com.facebook.orca | 1,252,456 | Launcher | 39,113 | forums.huaren.us | 3,093 |
| com.google.android.talk | 1,147,295 | Facebook | 38,591 | learn.dcollege.net | 2,133 |
| com.infraware.polarisviewer4 | 990,319 | Google Search | 32,947 | en.m.wikipedia.org | 1,825 |
| com.android.chrome | 417,165 | Chrome | 32,032 | mail.drexel.edu | 1,520 |
| com.facebook.katana | 405,267 | Snapchat | 23,481 | one.drexel.edu | 1,472 |
| com.snapchat.android | 377,840 | System UI | 22,772 | login.drexel.edu | 1,462 |
| com.google.android.gm | 271,570 | Phone | 19,396 | likes.com | 1,361 |
| com.htc.sense.mms | 238,300 | Gmail | 19,329 | mail.google.com | 1,292 |
| com.tencent.mm | 221,461 | Messages | 19,154 | i.imgur.com | 1,132 |
| com.motorola.messaging | 203,649 | Contacts | 18,668 | www.amazon.com | 1,079 |
| com.android.calculator2 | 167,435 | Hangouts | 17,209 | netcontrol.irt.drexel.edu | 1,049 |
| com.verizon.messaging.vzmsgs | 137,339 | Home | 16,775 | www.facebook.com | 903 |
| com.groupme.android | 134,896 | HTC Sense | 16,325 | banner.drexel.edu | 902 |
| com.handcent.nextsms | 123,065 | YouTube | 14,552 | m.hupu.com | 824 |
| com.jb.gosms | 118,316 | Xperia Home | 13,639 | t.co | 801 |
| com.sonyericsson.conversations | 114,219 | Instagram | 13,146 | duapp2.drexel.edu | 786 |
| com.twitter.android | 92,605 | Settings | 12,675 | m.ign.com | 725 |

TABLE III: Top 20 apps ordered by (a) text entry and (b) visit frequency, and (c) top 20 websites ordered by visit frequency. These tables are provided to give insight into the structure and content of the dataset.

For each user, the classification model for the valid class is constructed by determining the top 20 entities visited by that user in the training set. The visit counts are then normalized so that the 20 frequency values sum to 1. The classification model for the invalid class is constructed by counting the number of visits by the other 199 users to those same 20 entities, so that for each of those entities we have both a probability that a valid user visits it and a probability that an invalid user visits it. The evaluation for each user, given the two empirical distributions, is performed by the maximum likelihood product in (1). Entities that do not appear in the top 20 are considered outliers and are ignored by this classifier.

3) Location: Location is specified as a pair of values: latitude and longitude. Classification is performed using support vector machines (SVMs) [23] with the radial basis function (RBF) as the kernel function. The SVM produces a classification score for each pair of latitude and longitude. This score is calibrated to form a probability using Platt scaling [24], which requires an extra logistic regression on the SVM scores via an additional cross-validation on the training data.

All of the code used in this paper was written by the authors except for the SVM classifier. Since the authentication system is written in C++, we used the Shark 3.0 machine learning library for the SVM implementation.

B. Decision Fusion

Decision fusion with distributed sensors is described by Tenney and Sandell in [25], who studied a parallel decision architecture. As described in [26], the system comprises $n$ local detectors, each making a decision about a binary hypothesis $(H_0, H_1)$, and a decision fusion center (DFC) that uses these local decisions $\{u_1, u_2, \ldots, u_n\}$ for a global decision about the hypothesis.
The $i$-th detector collects $K$ observations before it makes its decision, $u_i$. The decision is $u_i = 1$ if the detector decides in favor of $H_1$ and $u_i = -1$ if it decides in favor of $H_0$. The DFC collects the $n$ decisions of the local detectors and uses them in order to decide in favor of $H_0$ ($u = -1$) or in favor of $H_1$ ($u = 1$). Tenney and Sandell [25] and Reibman and Nolte [27] studied the design of the local detectors and the DFC with respect to a Bayesian cost, assuming the observations are independent conditioned on the hypothesis. The ensuing formulation derived the local and DFC decision rules to be used by the system components for optimizing the system-wide cost. The resulting design requires the use of likelihood ratio tests by the decision makers (local detectors and DFC) in the system. However, the thresholds used by these tests require the solution of a set of nonlinear coupled differential equations. In other words, the designs of the local decision makers and of the DFC are co-dependent. In most scenarios the resulting complexity renders the quest for an optimal design impractical.

Chair and Varshney in [12] developed the optimal fusion rule for the case where the local detectors are fixed and local observations are statistically independent conditioned on the hypothesis. Under these conditions, the decision fusion center is optimal given the performance characteristics of the fixed local decision makers. The result is a suboptimal (since the local detectors are fixed) but computationally efficient and scalable design. In this study we use the Chair-Varshney formulation. The parallel distributed fusion scheme (see Fig. 3) allows each classifier to observe an event, minimize the local risk, and make a local decision over the set of hypotheses based only on its own observations. Each classifier sends out a decision of the form:

$$u_i = \begin{cases} 1, & \text{if } H_1 \text{ is decided} \\ -1, & \text{if } H_0 \text{ is decided} \end{cases} \tag{2}$$

Fig. 3: The fusion architecture across time and across classifiers. The TEXT, APP, WEB, and LOCATION boxes indicate a firing of a single event associated with each of those modalities. Multiple classifier scores from the same modality are fused via (1) to produce a single local binary decision. Local binary decisions from each of the four modalities are fused via (4) to produce a single global binary decision. [Diagram: a timeline of text, app, web, and location events from the start of activity feeds classifiers C1 to C4, each of which sends a decision in $\{-1, 1\}$ to the fusion center, which outputs a decision in $\{-1, 1\}$.]

The fusion center combines these local decisions by minimizing the global Bayes' risk. The optimum decision rule performs the following likelihood ratio test

$$\frac{P(u_1, \ldots, u_n \mid H_1)}{P(u_1, \ldots, u_n \mid H_0)} \underset{H_0}{\overset{H_1}{\gtrless}} \frac{P_0}{P_1} = \tau \tag{3}$$

where the a priori probabilities of the binary hypotheses $H_1$ and $H_0$ are $P_1$ and $P_0$, respectively. In this case the general fusion rule proposed in [12] is

$$f(u_1, \ldots, u_n) = \begin{cases} 1, & \text{if } a_0 + \sum_{i=1}^{n} a_i u_i > 0 \\ -1, & \text{otherwise} \end{cases} \tag{4}$$

with $P_i^M$ and $P_i^F$ representing the False Rejection Rate (FRR) and False Acceptance Rate (FAR) of the $i$-th classifier, respectively. The optimum weights minimizing the global probability of error are given by

$$a_0 = \log \frac{P_1}{P_0} \tag{5}$$

$$a_i = \begin{cases} \log \dfrac{1 - P_i^M}{P_i^F}, & \text{if } u_i = 1 \\[2ex] \log \dfrac{1 - P_i^F}{P_i^M}, & \text{if } u_i = -1 \end{cases} \tag{6}$$

The threshold in (3) requires knowledge of the a priori probabilities of the hypotheses. In practice, these probabilities are not available, and the threshold $\tau$ is determined using different considerations, such as fixing the probability of false alarm or false rejection, as is done in §V-C.

V. RESULTS

A. Training, Characterization, Testing

The data of each of the 200 users' active interaction with the mobile device was divided into 5 equal-size folds (each covering 20% of the time span of the full set). We performed training of each classifier on the first three folds (60%).
We then tested their performance on the fourth fold. This phase is referred to as "characterization", because its sole purpose is to form estimates of FAR and FRR for use by the fusion algorithm. We then tested the performance of the classifiers, individually and as part of the fusion system, on the fifth fold. This phase is referred to as "testing", since this is the part that is used for evaluating the performance of the individual classifiers and the fusion system. The three phases of training, characterization, and testing as they relate to the data folds are shown in Fig. 4. The assignment of folds to phases is rotated as follows:

• Training on folds 1, 2, 3. Characterization on fold 4. Testing on fold 5.
• Training on folds 2, 3, 4. Characterization on fold 5. Testing on fold 1.
• Training on folds 3, 4, 5. Characterization on fold 1. Testing on fold 2.
• Training on folds 4, 5, 1. Characterization on fold 2. Testing on fold 3.
• Training on folds 5, 1, 2. Characterization on fold 3. Testing on fold 4.

Fig. 4: The three phases of processing the data to determine the individual performance of each classifier and the performance of the fusion system that combines some subset of these classifiers. [Diagram: for each user, 60% of the data is used for training, 20% for characterization, and 20% for testing; the binary classifier for a user separates that user (class 1: accept) from all other users (class 2: reject).]
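
The fold rotation listed above can be sketched in a few lines of Python. This is an illustrative sketch only: the function names `assign_fold` and `fold_rotation` are hypothetical, and mapping an event to a fold by its position within the user's compressed "active interaction" time span is an assumption based on the description of equal-size, time-based folds.

```python
def assign_fold(t, t_start, t_end, n_folds=5):
    """Map an active-interaction timestamp to a 1-based fold index.

    Each fold covers an equal share of the time span [t_start, t_end].
    (Splitting by time span is an assumption of this sketch.)
    """
    if t_end <= t_start:
        raise ValueError("t_end must be greater than t_start")
    fraction = (t - t_start) / (t_end - t_start)
    # Clamp so that t == t_end lands in the last fold.
    return min(int(fraction * n_folds), n_folds - 1) + 1


def fold_rotation(n_folds=5, n_train=3):
    """Yield (training_folds, characterization_fold, testing_fold).

    Folds are 1-based and rotate cyclically, so every fold is used
    exactly once for characterization and exactly once for testing.
    """
    if n_train + 2 > n_folds:
        raise ValueError("need one fold each for characterization and testing")
    for start in range(n_folds):
        order = [(start + k) % n_folds + 1 for k in range(n_folds)]
        yield order[:n_train], order[n_train], order[n_train + 1]


if __name__ == "__main__":
    for train, characterization, test in fold_rotation():
        print("train", train, "characterize", characterization, "test", test)
```

Keeping the characterization fold separate from both the training folds and the testing fold means the FAR and FRR estimates handed to the fusion rule are never computed on data the classifier was fitted to or evaluated on.
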
The common evaluation method used with each classifier for data fusion was to measure the error rates averaged across five experiments. In each experiment, the data of 3 folds was taken for training, 1 fold for characterization, and 1 fold for testing. The FAR and FRR computed during characterization were taken as input for the fusion system as a measurement of the expected performance of the classifiers. Therefore each experiment consisted of three phases: 1) train the classifier(s) using the training set, 2) determine FAR and FRR based on the characterization set, and 3) classify the windows in the test set.

B. Performance: Individual Classifiers

Fig. 5: FAR and FRR performance of the individual classifiers associated with each of the four modalities. Each bar represents the average error rate for a given modality and time window. Each of the 200 users has one classifier for each modality, so each bar provides a value that was averaged over 200 individual error rates. The error bars indicate the standard deviation across these 200 values. [Two bar-chart panels: False Accept Rate (FAR) and False Reject Rate (FRR), each from 0 to 0.5, versus time before decision (1 to 30 minutes), for Location, App, Text, and Web.]

The two conflicting objectives of an active authentication system are response time and performance. The less time the system waits before making an authentication decision, the higher the expected rate of error. As more behavioral biometric data trickles in, the system can, on average, make a classification decision with greater certainty.

This pattern of decreased error rates with an increased decision window can be observed in Fig. 5, which shows (for 10 different time windows) the FAR and FRR of the 4 classifiers averaged over the 200 users, with the error bars indicating the standard deviation.
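
Because the FAR and FRR estimated during characterization are the only inputs that the fusion rule in (4) to (6) needs, the link between the two steps can be sketched as follows. This is a hedged illustration rather than the authors' implementation: the function names `error_rates` and `chair_varshney`, the dictionary layout keyed by modality, the clipping constant `eps`, and the choice to fuse only those modalities that produced a decision in the current window are all assumptions of this sketch.

```python
import math


def error_rates(decisions, is_valid):
    """Estimate (FAR, FRR) from local decisions on a characterization fold.

    decisions: list of +1 (accept, H1) or -1 (reject, H0).
    is_valid:  list of bools, True when the window belongs to the valid user.
    """
    n_invalid = sum(1 for v in is_valid if not v)
    n_valid = len(is_valid) - n_invalid
    if n_invalid == 0 or n_valid == 0:
        raise ValueError("need windows from both valid and invalid users")
    false_accepts = sum(1 for d, v in zip(decisions, is_valid) if d == 1 and not v)
    false_rejects = sum(1 for d, v in zip(decisions, is_valid) if d == -1 and v)
    return false_accepts / n_invalid, false_rejects / n_valid


def chair_varshney(local_decisions, far, frr, p1=0.5, eps=1e-6):
    """Fuse local decisions u_i in {+1, -1} following Eqs. (4) to (6).

    local_decisions: dict modality -> u_i, for modalities that fired
                     (omitting silent modalities is an assumption here).
    far, frr:        dicts modality -> P_F (FAR) and P_M (FRR).
    p1:              prior P_1 of the valid-user hypothesis; P_0 = 1 - p1.
    eps:             clipping constant to keep the logs finite (assumption).
    """
    total = math.log(p1 / (1.0 - p1))  # a_0, Eq. (5)
    for name, u in local_decisions.items():
        p_f = min(max(far[name], eps), 1.0 - eps)
        p_m = min(max(frr[name], eps), 1.0 - eps)
        if u == 1:
            weight = math.log((1.0 - p_m) / p_f)  # Eq. (6), u_i = 1
        else:
            weight = math.log((1.0 - p_f) / p_m)  # Eq. (6), u_i = -1
        total += weight * u
    return 1 if total > 0 else -1  # Eq. (4)


if __name__ == "__main__":
    far = {"location": 0.10, "text": 0.16}
    frr = {"location": 0.05, "text": 0.11}
    print(chair_varshney({"location": 1, "text": -1}, far, frr))
```

With these illustrative rates, a lone accept from the more reliable classifier outweighs a reject from the less reliable one, which is the weighting behavior the rule is designed to provide.
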
The “testing fold” (see §V-A) is used for computing these error rates. The “characterization fold” does not affect these results, but is used only for the FAR/FRR estimation required by the decision fusion center in §V-C.

The “time before decision” is the time between the first event indicating activity and the first decision produced by the fusion system. This metric can be thought of as the “decision window size”. Events older than the time range covered by the time window are disregarded in the classification. If no event associated with the modality under consideration fires in a specific time window, no error is added to the average.

| Modality | Event Firing Rate (per hour) |
|----------|------------------------------|
| Text     | 557.8                        |
| App      | 23.2                         |
| Web      | 5.6                          |
| Location | 3.5                          |

TABLE IV: The rates at which an event associated with each modality “fires” per hour. On average, GPS location is provided only 3.5 times an hour.

There are two notable observations about the FAR/FRR plots in Fig. 5. First, the location modality provides the lowest error rates even though, on average across the dataset, it fires only 3.5 times an hour, as shown in Table IV. This means that classification on a single GPS coordinate is sufficient to correctly verify the user with an FAR of under 0.1 and an FRR of under 0.05. Second, the text modality converges to an FAR of 0.16 and an FRR of 0.11 after 30 minutes, which makes it one of the worst performers of the four modalities, even though it fires 557.8 times an hour on average. At the 30 minute mark, that firing rate equates to an average text block size of 279 characters (557.8 events per hour × 0.5 hours ≈ 279). An FAR/FRR of 0.16/0.11 with 279 character blocks improves on the error rates achieved in [14] with 500 character blocks, which in turn improved on the error rates achieved in prior work for small blocks of text (see [14] for a full reference list on short-text stylometric analysis).

C.
Performance: Decision Fusion

The events associated with each of the 4 modalities fire at very different rates, as shown in Table IV. Moreover, text events fire in bursts, while the location events fire at regularly spaced intervals when a GPS signal is available. The app and web events fire at varying degrees of burstiness depending on the user. Fig. 6 shows the distribution of the number of events that fire within each of the time windows. An important takeaway from these distributions is that most events come in bursts followed by periods of inactivity. This results in the counterintuitive fact that the 1 minute, 10 minute, and 30 minute windows have a similar distribution of the number of events that fire within them. This is why the decrease in error rates attained from waiting longer for a decision is not as significant as might be expected.

[Figure 6 plot: histogram of the Number of Events Fired in Time Window (1 through 13) on a probability scale of 0.00 to 0.30, for the 1 min, 10 min, and 30 min windows.]

Fig. 6: The distribution of the number of events that fire within a given time window. This is a long tail distribution, as non-zero probabilities of event frequencies above 13 extend to over 100. These outliers are excluded from this histogram plot in order to highlight the high-probability frequencies. Time windows in which no events fire are not included in this plot.

Asynchronous fusion of the classifications of events from each of the four modalities is robust to the irregular rates at which events fire. The decision fusion rule in (4) utilizes all the available biometric data, weighing each classifier according to its prior performance. Fig. 7 shows the receiver operating characteristic (ROC) curve trading off between FAR and FRR by varying the threshold parameter τ in (3).

[Figure 7 plot: False Reject Rate (FRR) against False Accept Rate (FAR), both on a scale of 0 to 0.2, with one curve each for the 1 min, 10 min, and 30 min windows.]

Fig.
7: The performance of the fusion system with 4 classifiers on the 200 subject dataset. The ROC curve shows the tradeoff between FAR and FRR achieved by varying the threshold parameter $a_0$ in (4). As the size of the decision window increases, the performance of the fusion system improves: the equal error rate (EER) drops from 0.05 using the 1 minute window to below 0.01 using the 30 minute window.

D. Contribution of Local Classifiers to Global Decision

[Figure 8 plot: Individual Sensor Contribution ($C_i$), on a scale of 0 to 1.4, against Time Before Decision (mins) at 1, 10, and 30 minutes, for the Location, App, Text, and Web classifiers.]

Fig. 8: Relative contribution of each of the 4 classifiers computed according to (7).

The performance of the fusion system that utilizes all four modalities of TEXT, APP, WEB, and LOCATION is described in the previous section. Besides this, we are able to use the fusion system to characterize the contribution of each of the local classifiers to the global decision. This is the central question we consider in the paper: what biometric modality is most helpful in verifying a person's identity under the constraint of a specific time window before the verification decision must be made? We measure the contribution $C_i$ of each of the four classifiers by evaluating the performance of the system with and without the classifier, and computing the contribution by:

$$C_i = \frac{E_i - E}{E_i} \tag{7}$$

where $E$ is the error rate computed by averaging the FAR and FRR of the fusion system using the full portfolio of 4 classifiers, $E_i$ is the error rate of the fusion system using all but the $i$-th classifier, and $C_i$ is the relative contribution of the $i$-th classifier, as shown in Fig. 8.

We consider the contribution of each classifier under three time windows of 1 minute, 10 minutes, and 30 minutes. Location contributes the most in all three cases, with the second biggest contributor being web browsing.
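The arithmetic of the contribution measure in (7) can be sketched in a few lines. This is only an illustration: the function names are hypothetical, and the error rates in the usage example are made-up numbers chosen to show the calculation, not results from the study.

```python
def average_error(far, frr):
    """Error rate E (or E_i): the mean of the FAR and FRR of a fusion system."""
    return (far + frr) / 2.0


def relative_contribution(error_without, error_full):
    """C_i = (E_i - E) / E_i, following (7).

    error_without -- E_i, error of the fusion system with classifier i left out
    error_full    -- E, error of the fusion system using all classifiers
    """
    if error_without == 0:
        raise ValueError("E_i is zero, so the ratio in (7) is undefined")
    return (error_without - error_full) / error_without


if __name__ == "__main__":
    # Hypothetical error rates, used only to illustrate the arithmetic.
    full = average_error(far=0.04, frr=0.06)            # E   = 0.05
    without_one = average_error(far=0.18, frr=0.22)     # E_i = 0.20
    print(relative_contribution(without_one, full))     # close to 0.75
```

A value near 1 means that removing the classifier makes the fused error much larger than it is with the full set, while a value near 0 means the remaining classifiers perform about as well without it.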
Text contributes the least for the small window of 1 minute, but its contribution improves for the larger windows. App usage is the least predictable contributor. One explanation for the APP modality contributing significantly under the short decision window is that the first app opened in a session is a strong and frequent indicator of identity. Therefore, its contribution is high for short decision windows.

VI. CONCLUSION

In this work, we proposed a parallel binary decision-level fusion architecture for classifiers based on four biometric modalities: text, application usage, web browsing, and location. Using this fusion method, we addressed the problem of active authentication and characterized its performance on a real-world dataset of 200 subjects, each using their personal Android mobile device for a period of at least 30 days. The authentication system achieved an equal error rate (EER) of 0.05 (5%) after 1 minute of user interaction with the device, and an EER of 0.01 (1%) after 30 minutes. We showed the performance of each individual classifier and its contribution to the fused global decision. The location-based classifier, while having the lowest firing rate, contributes the most to the performance of the fusion system.

Lex Fridman is a Postdoctoral Associate at the Massachusetts Institute of Technology. He received his BS, MS, and PhD from Drexel University. His research interests include machine learning, decision fusion, and numerical optimization.

Steven Weber received the BS degree in 1996 from Marquette University in Milwaukee, Wisconsin, and the MS and PhD degrees from the University of Texas at Austin in 1999 and 2003, respectively. He joined the Department of Electrical and Computer Engineering at Drexel University in 2003, where he is currently an associate professor.
His research interests are centered around mathematical modeling of computer and communication networks, specifically streaming multimedia and ad hoc networks. He is a senior member of the IEEE.

Rachel Greenstadt is an Associate Professor of Computer Science at Drexel University, where she researches the privacy and security properties of intelligent systems and the economics of electronic privacy and information security. Her work is at “layer 8” of the network: analyzing the content. She is a member of the DARPA Computer Science Study Group and she runs the Privacy, Security, and Automation Laboratory (PSAL), which is a vibrant group of ten researchers. The privacy research community has recognized her scholarship with the PET Award for Outstanding Research in Privacy Enhancing Technologies, the NSF CAREER Award, and the Andreas Pfitzmann Best Student Paper Award.

Moshe Kam received his BS in electrical engineering from Tel Aviv University in 1976 and his MSc and PhD from Drexel University in 1985 and 1987, respectively. He is the Dean of the Newark College of Engineering at the New Jersey Institute of Technology, and had served earlier (2007-2014) as the Robert Quinn professor and Department Head of Electrical and Computer Engineering at Drexel University. His professional interests are in system theory, detection and estimation, information assurance, robotics, navigation, and engineering education. In 2011 he served as President and CEO of IEEE, and at present he is a member of the Boards of Directors of ABET and the United Engineering Foundation (UEF). He is a fellow of the IEEE “for contributions to the theory of decision fusion and distributed detection.”

REFERENCES

[1] M. Duggan, “Cell phone activities 2013,” Cell, 2013.

[2] S. Egelman, S. Jain, R. S. Portnoff, K. Liao, S. Consolvo, and D.
Wagner, “Are you ready to lock?” in Proceedings of the 2014 ACM SIGSAC Conference on Computer and Communications Security. ACM, 2014, pp. 750–761.

[3] M. Harbach, E. von Zezschwitz, A. Fichtner, A. De Luca, and M. Smith, “It's a hard lock life: A field study of smartphone (un)locking behavior and risk perception,” in Symposium on Usable Privacy and Security (SOUPS), 2014.

[4] D. Van Bruggen, S. Liu, M. Kajzer, A. Striegel, C. R. Crowell, and J. D'Arcy, “Modifying smartphone user locking behavior,” in Proceedings of the Ninth Symposium on Usable Privacy and Security. ACM, 2013, p. 10.

[5] C. Shen, Z. Cai, X. Guan, and J. Wang, “On the effectiveness and applicability of mouse dynamics biometric for static authentication: A benchmark study,” in Biometrics (ICB), 2012 5th IAPR International Conference on. IEEE, 2012, pp. 378–383.

[6] A. Fridman, A. Stolerman, S. Acharya, P. Brennan, P. Juola, R. Greenstadt, and M. Kam, “Decision fusion for multimodal active authentication,” IEEE IT Professional, vol. 15, no. 4, July 2013.

[7] M. O. Derawi, C. Nickel, P. Bours, and C. Busch, “Unobtrusive user-authentication on mobile phones using biometric gait recognition,” in Intelligent Information Hiding and Multimedia Signal Processing (IIH-MSP), 2010 Sixth International Conference on. IEEE, 2010, pp. 306–311.

[8] F. Li, N. Clarke, M. Papadaki, and P. Dowland, “Active authentication for mobile devices utilising behaviour profiling,” International Journal of Information Security, vol. 13, no. 3, pp. 229–244, 2014.

[9] T. Sim, S. Zhang, R. Janakiraman, and S. Kumar, “Continuous verification using multimodal biometrics,” Pattern Analysis and Machine Intelligence, IEEE Transactions on, vol. 29, no. 4, pp. 687–700, 2007.

[10] J. Kittler, M. Hatef, R. Duin, and J. Matas, “On combining classifiers,” Pattern Analysis and Machine Intelligence, IEEE Transactions on, vol. 20, no. 3, pp. 226–239, 1998.

[11] C.-H. Chen and C.-Y.
Chen, “Optimal fusion of multimodal biometric authentication using wavelet probabilistic neural network,” in Consumer Electronics (ISCE), 2013 IEEE 17th International Symposium on. IEEE, 2013, pp. 55–56.

[12] Z. Chair and P. Varshney, “Optimal data fusion in multiple sensor detection systems,” Aerospace and Electronic Systems, IEEE Transactions on, vol. AES-22, no. 1, pp. 98–101, Jan. 1986.

[13] H. Saevanee, N. Clarke, S. Furnell, and V. Biscione, “Text-based active authentication for mobile devices,” in ICT Systems Security and Privacy Protection. Springer, 2014, pp. 99–112.

[14] M. L. Brocardo, I. Traore, S. Saad, and I. Woungang, “Authorship verification for short messages using stylometry,” in Computer, Information and Telecommunication Systems (CITS), 2013 International Conference on. IEEE, 2013, pp. 1–6.

[15] A. Abbasi and H. Chen, “Writeprints: A stylometric approach to identity-level identification and similarity detection in cyberspace,” ACM Transactions on Information Systems (TOIS), vol. 26, no. 2, p. 7, 2008.

[16] A. W. E. McDonald, S. Afroz, A. Caliskan, A. Stolerman, and R. Greenstadt, “Use fewer instances of the letter "i": Toward writing style anonymization,” in Lecture Notes in Computer Science, vol. 7384. Springer, 2012, pp. 299–318.

[17] A. Fridman, A. Stolerman, S. Acharya, P. Brennan, P. Juola, R. Greenstadt, and M. Kam, “Multi-modal decision fusion for continuous authentication,” Computers and Electrical Engineering, accepted, 2014.

[18] R. Yampolskiy, “Behavioral modeling: an overview,” American Journal of Applied Sciences, vol. 5, no. 5, pp. 496–503, 2008.

[19] M. Abramson and D. W. Aha, “User authentication from web browsing behavior,” in FLAIRS Conference, 2013.

[20] N. Eagle, A. S. Pentland, and D. Lazer, “Inferring friendship network structure by using mobile phone data,” Proceedings of the National Academy of Sciences, vol. 106, no. 36, pp. 15274–15278, 2009.
[21] B. Sun, F. Yu, K. Wu, and V. Leung, “Mobility-based anomaly detection in cellular mobile networks,” in Proceedings of the 3rd ACM Workshop on Wireless Security. ACM, 2004, pp. 61–69.

[22] J. Hall, M. Barbeau, and E. Kranakis, “Anomaly-based intrusion detection using mobility profiles of public transportation users,” in Wireless and Mobile Computing, Networking and Communications, 2005 (WiMob'2005), IEEE International Conference on, vol. 2. IEEE, 2005, pp. 17–24.

[23] S. Abe, Support Vector Machines for Pattern Classification. Springer, 2010.

[24] A. Niculescu-Mizil and R. Caruana, “Predicting good probabilities with supervised learning,” in Proceedings of the 22nd International Conference on Machine Learning. ACM, 2005, pp. 625–632.

[25] R. R. Tenney and N. R. Sandell, Jr., “Detection with distributed sensors,” IEEE Transactions on Aerospace and Electronic Systems, vol. AES-17, pp. 501–510, 1981.

[26] M. Kam, W. Chang, and Q. Zhu, “Hardware complexity of binary distributed detection systems with isolated local Bayesian detectors,” IEEE Transactions on Systems, Man and Cybernetics, vol. 21, pp. 565–571, 1991.

[27] A. R. Reibman and L. Nolte, “Optimal detection and performance of distributed sensor systems,” IEEE Transactions on Aerospace and Electronic Systems, vol. AES-23, pp. 24–30, 1987.
