Learning Deep Representation for Face Alignment with Auxiliary Attributes

In this study, we show that landmark detection, or face alignment, is not a single and independent problem. Instead, its robustness can be greatly improved with auxiliary information. Specifically, we jointly optimize landmark detection together with the recognition of heterogeneous but subtly correlated facial attributes, such as gender, expression, and appearance attributes. This is non-trivial since different attribute inference tasks have different learning difficulties and convergence rates. To address this problem, we formulate a novel tasks-constrained deep model, which not only learns the inter-task correlation but also employs dynamic task coefficients to facilitate optimization convergence when learning multiple complex tasks. Extensive evaluations show that the proposed task-constrained learning (i) outperforms existing face alignment methods, especially on faces with severe occlusion and pose variation, and (ii) drastically reduces model complexity compared to state-of-the-art methods based on cascaded deep models.

💡 Research Summary

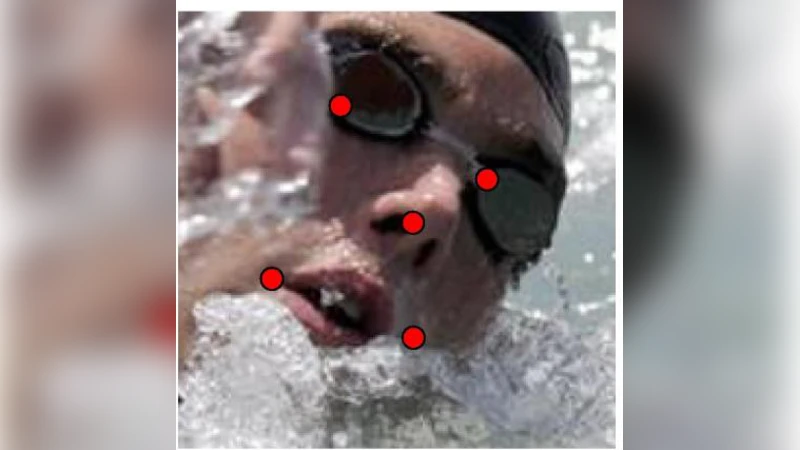

The paper introduces a deep learning framework called the Tasks‑Constrained Deep Convolutional Network (TCDCN) for facial landmark detection (face alignment). Unlike traditional approaches that treat alignment as an isolated regression problem, TCDCN jointly learns the main task of predicting landmark coordinates together with a set of heterogeneous auxiliary facial attributes (22 binary attributes such as gender, pose, expression, and glasses). The authors argue that these attributes are subtly correlated with landmark geometry and can provide useful regularization, especially under challenging conditions like heavy occlusion or large head rotations.

The network architecture takes a 60 × 60 grayscale face image as input and passes it through five convolution‑pooling layers, ending with a fully‑connected layer that produces a 256‑dimensional feature vector x. This vector is fed into a set of linear models: one for each landmark coordinate (regressed with a Gaussian noise model) and one for each attribute (modeled with logistic regression). All task‑specific weight vectors are stacked into a matrix W.
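The task heads described above can be illustrated with a minimal sketch in plain Python. It assumes that the Gaussian noise model reduces to a squared-error loss and that each attribute head is a single logistic unit; the function names (`head_losses`, `dot`, `sigmoid`) are hypothetical and are not taken from the paper.

```python
import math

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def dot(w, x):
    """Inner product of two equal-length sequences."""
    return sum(wi * xi for wi, xi in zip(w, x))

def head_losses(x, W_landmark, W_attr, y_landmark, y_attr):
    """Per-sample losses of the linear task heads on a shared feature x.

    x          : shared feature vector (256-d in the summary; any length here)
    W_landmark : one weight vector per landmark coordinate (assumed layout)
    W_attr     : one weight vector per binary attribute (assumed layout)
    Returns (regression_loss, list_of_attribute_cross_entropies).
    """
    # Gaussian noise model -> squared error, up to additive constants.
    reg = 0.5 * sum((dot(w, x) - y) ** 2
                    for w, y in zip(W_landmark, y_landmark))
    ce = []
    for w, y in zip(W_attr, y_attr):
        p = sigmoid(dot(w, x))
        p = min(max(p, 1e-12), 1.0 - 1e-12)  # guard against log(0)
        ce.append(-(y * math.log(p) + (1 - y) * math.log(1.0 - p)))
    return reg, ce
```

Keeping the attribute losses as a list rather than a single sum makes it straightforward to weight each auxiliary task separately later on.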

Two key innovations address the difficulty of multi‑task learning when tasks have different learning difficulties and convergence rates:

- Dynamic Task Coefficients (λₜ): Each auxiliary task is assigned a scalar weight that is automatically adjusted during training based on its validation error. If a task stops improving or starts over‑fitting, its λₜ is reduced, effectively performing a task‑wise early stopping. This prevents harmful gradients from poorly converging tasks from contaminating the main landmark detector.

- Inter‑Task Correlation Modeling: Rather than assuming independence among tasks, the authors place a matrix‑normal prior on W with a learned task covariance matrix Υ. This captures statistical relationships between tasks (e.g., pose and eye position) and encourages shared representations where appropriate while suppressing spurious correlations.
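The idea behind the dynamic task coefficients can be sketched as follows. The summary does not give the paper's actual update rule, so the multiplicative decay below is a stand-in heuristic, and `window`, `decay`, and `floor` are hypothetical parameters chosen only for illustration.

```python
def update_task_coefficient(lam, val_errors, window=3, decay=0.5, floor=0.0):
    """Shrink an auxiliary task's coefficient when its validation error stalls.

    lam        : current coefficient of the auxiliary task
    val_errors : validation errors of that task, oldest first
    window     : how many evaluations back to compare against (assumed)
    decay      : multiplicative shrink factor (assumed, not the paper's rule)
    """
    if len(val_errors) <= window:
        return lam  # not enough history to judge the trend
    past = val_errors[-window - 1]
    current = val_errors[-1]
    if current >= past:
        # No improvement over the window: down-weight this task.
        return max(floor, lam * decay)
    return lam

def combined_loss(main_loss, aux_losses, lams):
    """Main landmark loss plus coefficient-weighted auxiliary losses."""
    return main_loss + sum(l * a for l, a in zip(lams, aux_losses))
```

A coefficient that decays toward zero removes the task's contribution to the gradient, which is the sense in which the mechanism acts like task-wise early stopping.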

Training proceeds via an alternating optimization scheme. First, with the convolutional filters K fixed, the weight matrix W and covariance Υ are updated (closed‑form or gradient‑based). Then, with W and Υ fixed, the filters K are updated by back‑propagation of the combined loss, which includes landmark regression loss, attribute classification loss, the dynamic coefficients, and regularization terms for Υ. This loop repeats until convergence.
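As a rough guide, the combined loss could take a form like the one below. This is a sketch reconstructed from the description in this summary, not the paper's exact equation: the column layout of W, the cross-entropy symbol, the feature map f, and the omission of weighting constants and of any constraints on Υ are all assumptions.

$$
\min_{K,\,W,\,\Upsilon}\;
\sum_{i=1}^{N} \tfrac{1}{2}\,\bigl\lVert y_i^{r} - W_r^{\top} x_i \bigr\rVert_2^2
\;+\; \sum_{t=1}^{T} \lambda_t \sum_{i=1}^{N} \ell_{\mathrm{CE}}\bigl(y_i^{t},\, \sigma(w_t^{\top} x_i)\bigr)
\;+\; \operatorname{tr}\bigl(W\,\Upsilon^{-1} W^{\top}\bigr),
\qquad x_i = f(I_i;\, K)
$$

Here $W = [\,W_r,\, w_1, \dots, w_T\,]$ stacks the landmark regressors $W_r$ and the attribute classifiers $w_t$ as columns, $x_i$ is the shared feature of image $I_i$ computed with filters $K$, and $\sigma$ is the logistic function. The trace term is the part of the negative log of a matrix-normal prior that depends on the task covariance. In the alternating scheme, the first step minimizes over $W$ and $\Upsilon$ with $K$ held fixed, and the second step minimizes over $K$ with $W$ and $\Upsilon$ held fixed.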

Because dense landmark annotation is costly, the authors pre‑train the network on five sparse landmarks (eye centers, nose tip, mouth corners) and then fine‑tune it on dense configurations (68‑point 300‑W, 194‑point Helen). The pre‑training provides a good initialization that mitigates over‑fitting on small datasets.

Extensive experiments on three benchmark datasets, COFW (severe occlusion), 300‑W (varied pose and illumination), and Helen (high resolution), show that TCDCN outperforms state‑of‑the‑art methods, including the cascaded CNN of Sun et al., with 8‑12 % lower normalized mean error (NME). The advantage is most pronounced on heavily occluded or large‑yaw faces, where auxiliary attributes supply strong geometric cues. Moreover, TCDCN achieves these gains with roughly 30 % of the parameters of the cascaded models, enabling faster inference suitable for embedded or real‑time applications.
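For readers unfamiliar with the metric, a minimal sketch of NME for a single face is given below. It assumes normalization by the inter-ocular distance, which is a common convention in face alignment; the summary does not state which normalizer the paper uses, and the function name is hypothetical.

```python
import math

def normalized_mean_error(pred, truth, left_eye, right_eye):
    """Mean point-to-point landmark error divided by inter-ocular distance.

    pred, truth         : lists of (x, y) landmark coordinates, same order
    left_eye, right_eye : ground-truth eye positions used as the normalizer
                          (assumed convention, not confirmed by the summary)
    """
    if not pred or len(pred) != len(truth):
        raise ValueError("pred and truth must be non-empty and equally long")
    norm = math.hypot(left_eye[0] - right_eye[0], left_eye[1] - right_eye[1])
    if norm == 0:
        raise ValueError("eye positions must be distinct")
    total = sum(math.hypot(p[0] - t[0], p[1] - t[1])
                for p, t in zip(pred, truth))
    return total / (len(pred) * norm)
```

Dividing by a face-scale distance makes errors comparable across images of different resolution, which matters when mixing datasets such as 300‑W and Helen.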

Ablation studies confirm the importance of both components: removing dynamic task coefficients leads to instability and degraded landmark accuracy, while fixing the task covariance to an identity matrix reduces the benefit of attribute sharing. The analysis also reveals that not all attributes are equally helpful; pose and gender consistently improve performance, whereas some expression labels can become noisy and are down‑weighted automatically by the dynamic coefficients.

In summary, the paper makes three major contributions: (1) a unified deep architecture that jointly learns facial landmarks and multiple auxiliary attributes; (2) a principled mechanism—dynamic task coefficients—to balance tasks with heterogeneous learning dynamics; and (3) a matrix‑normal prior that captures inter‑task correlations, enhancing shared feature learning. The approach demonstrates that multi‑task deep learning, when equipped with adaptive weighting and correlation modeling, can substantially improve robustness and efficiency of face alignment systems, and it opens avenues for applying similar strategies to other computer‑vision problems involving heterogeneous auxiliary information.
