Secrecy via Sources and Channels

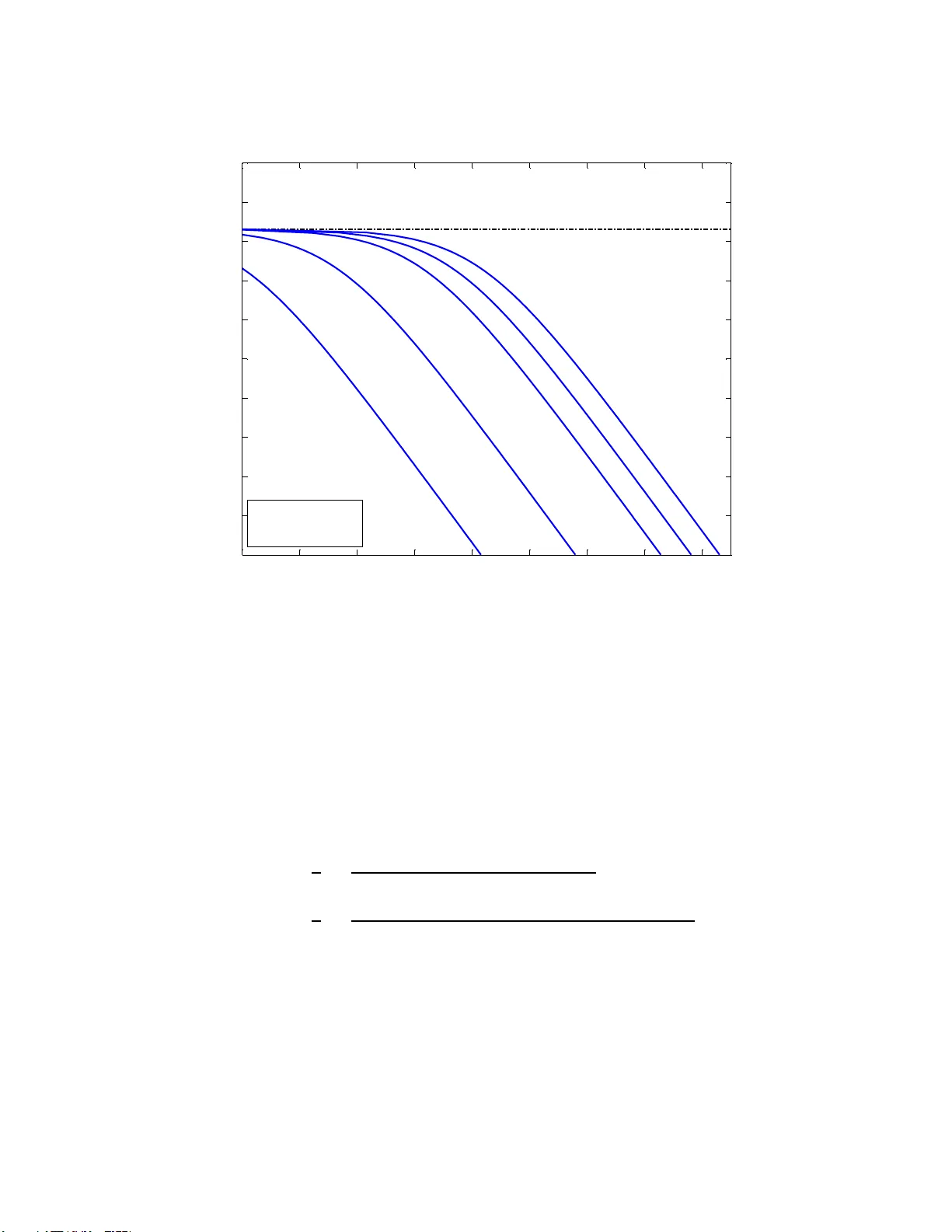

Alice and Bob want to share a secret key and to communicate an independent message, both of which they want to keep secret from an eavesdropper, Eve. We study this problem of secret communication and secret key generation when two resources are a…

Authors: Vinod M. Prabhakaran, Krishnan Eswaran, Kannan Ramch