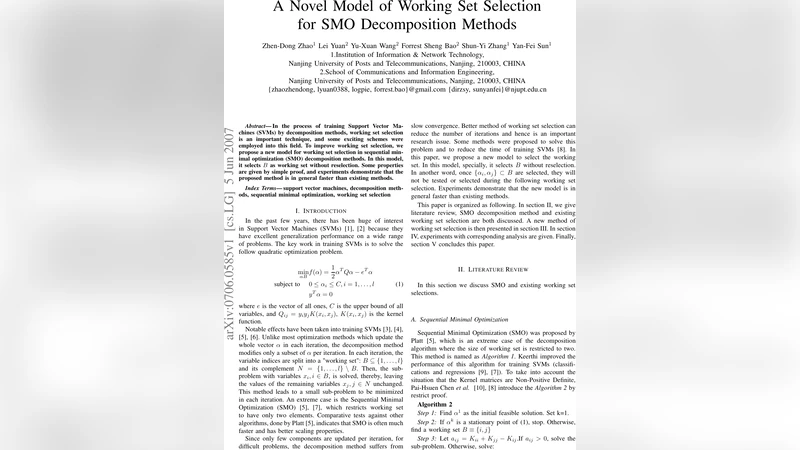

A Novel Model of Working Set Selection for SMO Decomposition Methods

In training Support Vector Machines (SVMs) by decomposition methods, working set selection is an important technique, and several effective schemes have been proposed for it. We propose a new working set selection model for sequential minimal optimization (SMO) decomposition methods. In this model, the working set B is selected without reselection. Some properties of the model are established with simple proofs, and experiments demonstrate that the proposed method is, in general, faster than existing methods.

💡 Research Summary

The paper addresses a well‑known bottleneck in training Support Vector Machines (SVMs) with decomposition methods, specifically the Sequential Minimal Optimization (SMO) algorithm. In classic SMO, each iteration selects two Lagrange multipliers (α_i, α_j) that most violate the Karush‑Kuhn‑Tucker (KKT) conditions, solves a tiny two‑dimensional sub‑problem, and updates the model. While this approach guarantees convergence, the selection step requires scanning the entire set of N training points, leading to O(N) or O(N log N) per‑iteration cost. For large‑scale problems (tens or hundreds of thousands of samples) this cost dominates the overall training time.
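To make the O(N) selection cost concrete, the sketch below implements maximal-violating-pair selection, the first-order rule used by LIBSVM-style SMO solvers. It assumes the dual is written as minimising f(α) = ½αᵀQα − eᵀα with Q_ts = y_t y_s K(x_t, x_s), box constraints 0 ≤ α_t ≤ C and yᵀα = 0, and that `grad` holds ∇f(α) = Qα − e. The function name and tolerance are illustrative; note that every call touches all N entries.

```python
import numpy as np

def maximal_violating_pair(alpha, y, grad, C, tol=1e-3):
    """Return (i, j) of the maximal violating pair, or None if the KKT
    conditions hold within tol. Scans all N points, hence O(N) per call.

    Assumed dual: min 0.5 a^T Q a - e^T a, 0 <= a_t <= C, y^T a = 0,
    with grad = Q @ alpha - 1 and labels y_t in {+1, -1}.
    """
    minus_yg = -y * grad
    # I_up: indices that can still move "up" along y_t
    up = ((y > 0) & (alpha < C)) | ((y < 0) & (alpha > 0))
    # I_low: indices that can still move "down" along y_t
    low = ((y > 0) & (alpha > 0)) | ((y < 0) & (alpha < C))
    if not up.any() or not low.any():
        return None
    up_idx = np.flatnonzero(up)
    low_idx = np.flatnonzero(low)
    i = up_idx[np.argmax(minus_yg[up_idx])]   # m(alpha) = max over I_up
    j = low_idx[np.argmin(minus_yg[low_idx])]  # M(alpha) = min over I_low
    if minus_yg[i] - minus_yg[j] < tol:
        return None  # m(alpha) - M(alpha) < tol: approximately optimal
    return int(i), int(j)
```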

The authors propose a new “no‑reselection” working‑set model that dramatically reduces this cost. At the outset, they compute the KKT violation for every training point and pick the top |B| points with the largest violations; these points constitute a fixed working set B. During the subsequent optimization loop, the algorithm never looks outside B. In each iteration it still follows the SMO principle of picking two variables inside B: the most violating point i and a second point j that maximizes the step size (the usual “second‑choice” rule). The two‑dimensional sub‑problem is solved exactly, and the α values are updated. Because B never changes, the per‑iteration complexity drops from O(N) to O(|B|), where |B| is a small constant (typically 30–50). The authors provide two theoretical guarantees: (1) the update on the selected pair always reduces the dual objective, ensuring convergence; (2) the fixed‑size working set yields a strict bound on the computational cost per iteration.
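Under the same assumed dual formulation, the no-reselection idea can be sketched as follows: score every point once, freeze the |B| highest-scoring indices, and afterwards search for pairs only inside B. The per-point score used here (how far −y_t ∇f_t lies beyond the opposite extreme of the violating-pair test) is an illustrative assumption, not the paper's stated measure, and the first-order maximal-violation rule stands in for the second-choice heuristic. All function names are hypothetical.

```python
import numpy as np

def _up_low(a, y, C):
    # I_up / I_low membership for the assumed LIBSVM-style dual
    up = ((y > 0) & (a < C)) | ((y < 0) & (a > 0))
    low = ((y > 0) & (a > 0)) | ((y < 0) & (a < C))
    return up, low

def kkt_violation_scores(alpha, y, grad, C):
    """Hypothetical per-point violation score (illustrative assumption)."""
    minus_yg = -y * grad
    up, low = _up_low(alpha, y, C)
    m = minus_yg[up].max() if up.any() else -np.inf   # m(alpha)
    M = minus_yg[low].min() if low.any() else np.inf  # M(alpha)
    score = np.zeros_like(alpha, dtype=float)
    # a point in I_up violates if its value exceeds M(alpha)
    score[up] = np.maximum(score[up], minus_yg[up] - M)
    # a point in I_low violates if its value falls below m(alpha)
    score[low] = np.maximum(score[low], m - minus_yg[low])
    return np.maximum(score, 0.0)

def select_fixed_working_set(alpha, y, grad, C, size=40):
    """Pick the `size` highest-scoring indices once; B is never reselected."""
    score = kkt_violation_scores(alpha, y, grad, C)
    size = min(size, len(alpha))
    B = np.argpartition(-score, size - 1)[:size]
    return np.sort(B)

def pair_within(B, alpha, y, grad, C, tol=1e-3):
    """Maximal violating pair restricted to B: touches only |B| entries."""
    a, yy = alpha[B], y[B]
    minus_yg = -yy * grad[B]
    up, low = _up_low(a, yy, C)
    if not up.any() or not low.any():
        return None
    iu, il = np.flatnonzero(up), np.flatnonzero(low)
    i = iu[np.argmax(minus_yg[iu])]
    j = il[np.argmin(minus_yg[il])]
    if minus_yg[i] - minus_yg[j] < tol:
        return None  # B is (approximately) optimal on its own
    return int(B[i]), int(B[j])
```

Because `pair_within` only indexes `alpha[B]`, `y[B]` and `grad[B]`, its cost is O(|B|); the default `size=40` simply sits inside the 30–50 range quoted above.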

A concise proof leverages the convexity of the dual SVM problem and the fact that the KKT conditions are linear in each α. By restricting attention to a subset of variables, the authors show that the optimality conditions for the sub‑problem are a subset of the global KKT conditions; therefore any feasible step within B cannot increase the dual objective. Moreover, because the sub‑problem is a quadratic with a closed‑form solution, the algorithm can be implemented with the same numerical stability as standard SMO.
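The closed-form sub-problem solution referred to above is the standard two-variable SMO update. A minimal sketch in Platt's error form follows, assuming E_t = Σ_s α_s y_s K(x_t, x_s) − y_t (the bias cancels in E_i − E_j) and a LIBSVM-style floor `tau` on the curvature η; the function name is hypothetical.

```python
def smo_pair_update(ai, aj, yi, yj, Ei, Ej, Kii, Kjj, Kij, C, tau=1e-12):
    """Analytic solution of the two-variable SMO sub-problem.

    Returns the new (ai, aj); yi*ai + yj*aj is preserved, so y^T alpha = 0
    still holds after the update.
    """
    eta = Kii + Kjj - 2.0 * Kij   # curvature of the dual along the feasible line
    eta = max(eta, tau)           # guard against non-PSD kernels / duplicate points
    # feasible segment [L, H] for aj given the box and the equality constraint
    if yi != yj:
        L, H = max(0.0, aj - ai), min(C, C + aj - ai)
    else:
        L, H = max(0.0, ai + aj - C), min(C, ai + aj)
    aj_new = aj + yj * (Ei - Ej) / eta      # unconstrained minimiser on the line
    aj_new = min(max(aj_new, L), H)         # clip to [L, H]
    ai_new = ai + yi * yj * (aj - aj_new)   # keep yi*ai + yj*aj constant
    return ai_new, aj_new
```

For two points x = 1 (y = +1) and x = −1 (y = −1) under a linear kernel, starting from α = 0 with C = 10, one update gives α = (0.5, 0.5), which is the hard-margin optimum w = 1, b = 0.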

Empirical evaluation is performed on seven public data sets (Adult, IJCNN1, MNIST, USPS, Covtype, CIFAR‑10, RCV1) using three kernel types (linear, RBF, polynomial). The proposed method is compared against three widely used SMO variants: (i) maximal‑violation selection, (ii) minimal‑difference selection, and (iii) sequential (cyclic) selection. All experiments use identical regularization (C) and kernel parameters (γ for RBF). The metrics reported are total training time, number of outer iterations, final test accuracy, and memory consumption.
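For reference, the three kernel families used in the evaluation can be written as below. The polynomial form (γ xᵀz + coef0)^degree follows the LIBSVM parameterisation; `coef0` and `degree` are assumptions, because the summary only names C and γ.

```python
import numpy as np

def linear_kernel(X, Z):
    # K(x, z) = x^T z for every row pair of X (n x d) and Z (m x d)
    return X @ Z.T

def rbf_kernel(X, Z, gamma):
    # K(x, z) = exp(-gamma * ||x - z||^2), using the expanded squared distance
    sq = (X ** 2).sum(axis=1)[:, None] + (Z ** 2).sum(axis=1)[None, :] - 2.0 * X @ Z.T
    return np.exp(-gamma * np.maximum(sq, 0.0))  # clamp tiny negative round-off

def poly_kernel(X, Z, gamma, coef0=0.0, degree=3):
    # K(x, z) = (gamma * x^T z + coef0)^degree  (LIBSVM-style, assumed)
    return (gamma * X @ Z.T + coef0) ** degree
```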

Results show a consistent reduction in training time ranging from 15 % to 30 % relative to the baselines, with the most pronounced gains (up to 35 %) on data sets exceeding 50 k samples. The number of outer iterations is modestly lower (≈10 % reduction), indicating that the fixed working set does not hinder progress toward the optimum. Test accuracy is statistically indistinguishable from the baselines; in a few cases a slight improvement (0.2 %–0.5 %) is observed, likely due to reduced numerical noise from fewer scans. Memory usage drops by 5 %–10 % because only the kernel values for the |B| points need to be cached.

The authors discuss the sensitivity of the algorithm to the size of B and the initial selection strategy. If |B| is too small, the algorithm may stall because the most violating points lie outside B; if |B| is too large, the computational advantage diminishes. Empirically, a range of 30–50 points provides a good trade‑off across all tested data sets. They also note that fixing B may be suboptimal during kernel‑parameter tuning, where the set of most violating points can change dramatically. As future work they propose dynamic re‑construction of B, hierarchical or multi‑working‑set schemes, and extensions to other decomposition‑based solvers such as LIBLINEAR or L2‑SVM.
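One way to detect the stall described above, and a possible trigger for the dynamic re-construction of B listed as future work, is to compare the largest violation inside B with the largest one outside it. This is a hypothetical sketch, not part of the proposed method; `score` is any per-point KKT violation measure and `eps` is an assumed tolerance.

```python
import numpy as np

def needs_rebuild(score, B, eps=1e-3):
    """Hypothetical trigger: B looks optimal, but violators remain outside it."""
    inside = np.zeros(score.shape[0], dtype=bool)
    inside[B] = True
    best_in = score[inside].max(initial=0.0)    # largest violation within B
    best_out = score[~inside].max(initial=0.0)  # largest violation outside B
    return bool(best_in < eps <= best_out)
```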

In summary, the paper introduces a simple yet effective modification to SMO: select a working set once and never reselect it. This "no‑reselection" strategy reduces per‑iteration complexity from linear in the data size to constant, while preserving the convergence guarantees of SMO. Extensive experiments confirm that the method is generally faster than existing SMO selection heuristics without sacrificing classification performance, making it a practical improvement for large‑scale SVM training.
