Sequence-to-Sequence Learning as Beam-Search Optimization

Sequence-to-Sequence (seq2seq) modeling has rapidly become an important general-purpose NLP tool that has proven effective for many text-generation and sequence-labeling tasks. Seq2seq builds on deep neural language modeling and inherits its remarkab…

Authors: Sam Wiseman, Alex, er M. Rush

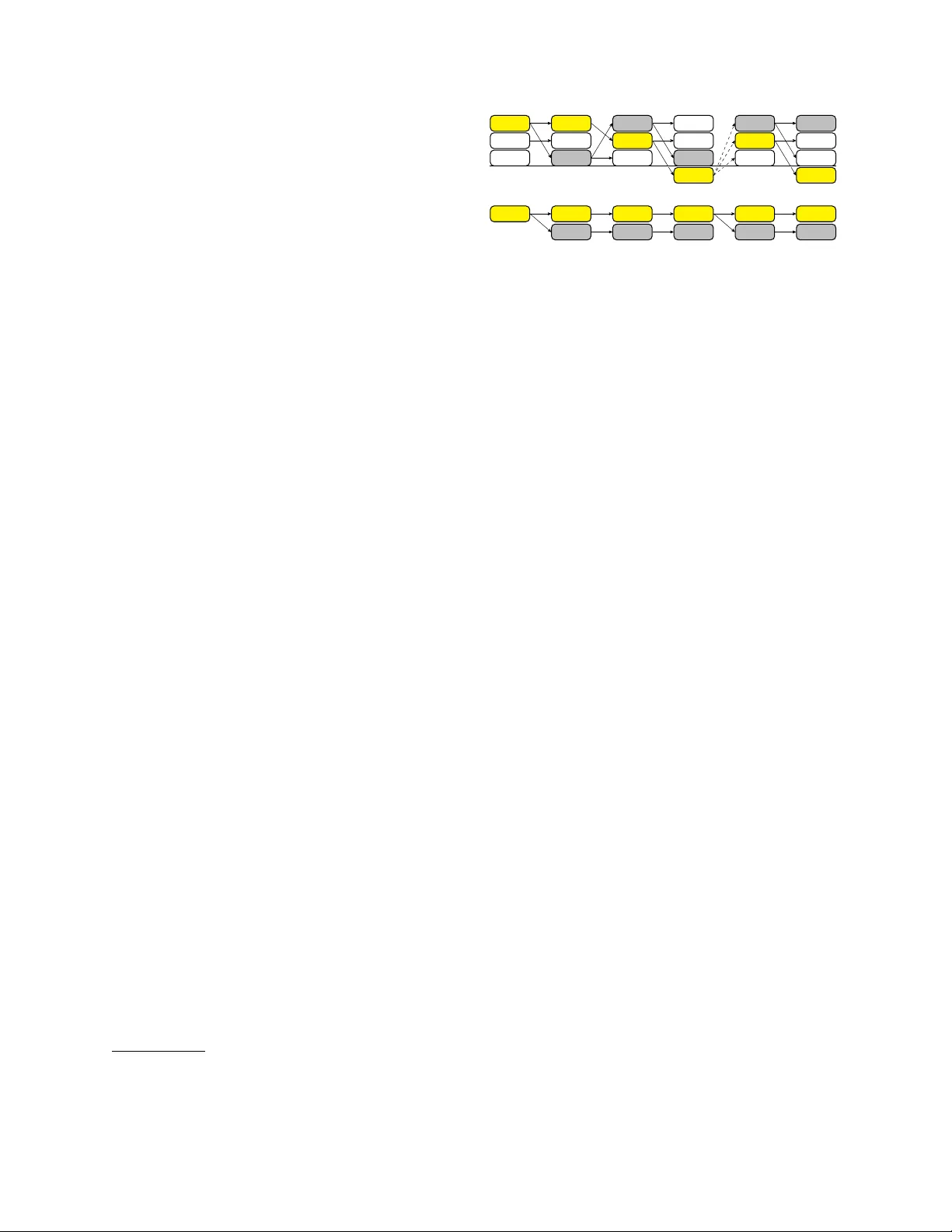

Sequence-to-Sequence Learning as Beam-Sear ch Optimization Sam Wiseman and Alexander M. Rush School of Engineering and Applied Sciences Harv ard Uni v ersity Cambridge, MA, USA { swiseman,srush } @seas.harvard.edu Abstract Sequence-to-Sequence (seq2seq) modeling has rapidly become an important general- purpose NLP tool that has pro v en ef fecti v e for many text-generation and sequence-labeling tasks. Seq2seq builds on deep neural language modeling and inherits its remarkable accurac y in estimating local, ne xt-word distrib utions. In this work, we introduce a model and beam- search training scheme, based on the work of Daum ´ e III and Marcu (2005), that e xtends seq2seq to learn global sequence scores. This structured approach av oids classical biases as- sociated with local training and unifies the training loss with the test-time usage, while preserving the proven model architecture of seq2seq and its efficient training approach. W e show that our system outperforms a highly- optimized attention-based seq2seq system and other baselines on three different sequence to sequence tasks: word ordering, parsing, and machine translation. 1 Introduction Sequence-to-Sequence learning with deep neural networks (herein, seq2seq) (Sutskev er et al., 2011; Sutske v er et al., 2014) has rapidly become a very useful and surprisingly general-purpose tool for nat- ural language processing. In addition to demon- strating impressiv e results for machine translation (Bahdanau et al., 2015), roughly the same model and training have also prov en to be useful for sen- tence compression (Filippov a et al., 2015), parsing (V inyals et al., 2015), and dialogue systems (Ser- ban et al., 2016), and they additionally underlie other text generation applications, such as image or video captioning (V enugopalan et al., 2015; Xu et al., 2015). The dominant approach to training a seq2seq sys- tem is as a conditional language model, with training maximizing the likelihood of each successive tar- get word conditioned on the input sequence and the gold history of target words. Thus, training uses a strictly word-le v el loss, usually cross-entropy ov er the tar get v ocab ulary . This approach has pro v en to be very effecti v e and ef ficient for training neural lan- guage models, and seq2seq models similarly obtain impressi ve perple xities for word-generation tasks. Notably , ho wever , seq2seq models are not used as conditional language models at test-time; they must instead generate fully-formed word sequences. In practice, generation is accomplished by searching ov er output sequences greedily or with beam search. In this conte xt, Ranzato et al. (2016) note that the combination of the training and generation scheme just described leads to at least two major issues: 1. Exposure Bias : the model is nev er exposed to its own errors during training, and so the in- ferred histories at test-time do not resemble the gold training histories. 2. Loss-Evaluation Mismatch : training uses a word-le v el loss, while at test-time we tar get improving sequence-le vel e v aluation metrics, such as BLEU (Papineni et al., 2002). W e might additionally add the concern of label bias (Lafferty et al., 2001) to the list, since word- probabilities at each time-step are locally normal- ized, guaranteeing that successors of incorrect his- tories receiv e the same mass as do the successors of the true history . In this work we dev elop a non-probabilistic vari- ant of the seq2seq model that can assign a score to any possible target sequence , and we propose a training procedure, inspired by the learning as search optimization (LaSO) framew ork of Daum ´ e III and Marcu (2005), that defines a loss function in terms of errors made during beam search. Fur- thermore, we provide an ef ficient algorithm to back- propagate through the beam-search procedure dur- ing seq2seq training. This approach offers a possible solution to each of the three aforementioned issues, while largely maintaining the model architecture and training ef- ficiency of standard seq2seq learning. Moreov er , by scoring sequences rather than words, our ap- proach also allows for enforcing hard-constraints on sequence generation at tr aining time . T o test out the ef fecti veness of the proposed approach, we dev elop a general-purpose seq2seq system with beam search optimization. W e run experiments on three very dif- ferent problems: word ordering, syntactic parsing, and machine translation, and compare to a highly- tuned seq2seq system with attention (Luong et al., 2015). The version with beam search optimization sho ws significant improvements on all three tasks, and particular improvements on tasks that require dif ficult search. 2 Related W ork The issues of exposure bias and label bias have re- cei ved much attention from authors in the structured prediction community , and we briefly revie w some of this work here. One prominent approach to com- bating exposure bias is that of SEARN (Daum ´ e III et al., 2009), a meta-training algorithm that learns a search policy in the form of a cost-sensitiv e classifier trained on examples generated from an interpolation of an oracle policy and the model’ s current (learned) policy . Thus, SEARN explicitly targets the mis- match between oracular training and non-oracular (often greedy) test-time inference by training on the output of the model’ s own policy . D Agger (Ross et al., 2011) is a similar approach, which differs in terms of how training examples are generated and aggregated, and there ha v e additionally been impor- tant refinements to this style of training over the past se veral years (Chang et al., 2015). When it comes to training RNNs, SEARN/D Agger has been applied under the name “scheduled sampling” (Bengio et al., 2015), which in v olves training an RNN to generate the t + 1 ’ st tok en in a target sequence after consum- ing either the true t ’ th token, or , with probability that increases throughout training, the predicted t ’th to- ken. Though technically possible, it is uncom- mon to use beam search when training with SEARN/D Agger . The early-update (Collins and Roark, 2004) and LaSO (Daum ´ e III and Marcu, 2005) training strategies, howe ver , explicitly ac- count for beam search, and describe strategies for updating parameters when the gold structure be- comes unreachable during search. Early update and LaSO dif fer primarily in that the former discards a training example after the first search error , whereas LaSO resumes searching after an error from a state that includes the gold partial structure. In the con- text of feed-forward neural network training, early update training has been recently explored in a feed- forward setting by Zhou et al. (2015) and Andor et al. (2016). Our work differs in that we adopt a LaSO-like paradigm (with some minor modifica- tions), and apply it to the training of seq2seq RNNs (rather than feed-forward networks). W e also note that W atanabe and Sumita (2015) apply maximum- violation training (Huang et al., 2012), which is sim- ilar to early-update, to a parsing model with recur- rent components, and that Y azdani and Henderson (2015) use beam-search in training a discriminativ e, locally normalized dependency parser with recurrent components. Recently authors hav e also proposed alleviating exposure bias using techniques from reinforcement learning. Ranzato et al. (2016) follow this ap- proach to train RNN decoders in a seq2seq model, and they obtain consistent improv ements in perfor- mance, e ven over models trained with scheduled sampling. As Daum ´ e III and Marcu (2005) note, LaSO is similar to reinforcement learning, except it does not require “exploration” in the same way . Such exploration may be unnecessary in supervised text-generation, since we typically know the gold partial sequences at each time-step. Shen et al. (2016) use minimum risk training (approximated by sampling) to address the issues of exposure bias and loss-e v aluation mismatch for seq2seq MT , and show impressi ve performance gains. Whereas exposure bias results from training in a certain way , label bias results from properties of the model itself. In particular , label bias is likely to affect structured models that make sub-structure predictions using locally-normalized scores. Be- cause the neural and non-neural literature on this point has recently been revie wed by Andor et al. (2016), we simply note here that RNN models are typically locally normalized, and we are unaware of any specifically seq2seq work with RNNs that does not use locally-normalized scores. The model we introduce here, ho we v er , is not locally normalized, and so should not suf fer from label bias. W e also note that there are some (non-seq2seq) exceptions to the trend of locally normalized RNNs, such as the work of Sak et al. (2014) and V oigtlaender et al. (2015), who train LSTMs in the context of HMMs for speech recognition using sequence-lev el objec- ti ves; their w ork does not consider search, ho we ver . 3 Background and Notation In the simplest seq2seq scenario, we are gi ven a col- lection of source-target sequence pairs and tasked with learning to generate target sequences from source sequences. For instance, we might view ma- chine translation in this way , where in particular we attempt to generate English sentences from (corre- sponding) French sentences. Seq2seq models are part of the broader class of “encoder -decoder” mod- els (Cho et al., 2014), which first use an encoding model to transform a source object into an encoded representation x . Many different sequential (and non-sequential) encoders have proven to be ef fec- ti ve for different source domains. In this work we are agnostic to the form of the encoding model, and simply assume an abstract source representation x . Once the input sequence is encoded, seq2seq models generate a target sequence using a decoder . The decoder is tasked with generating a target se- quence of words from a target vocab ulary V . In particular , words are generated sequentially by con- ditioning on the input representation x and on the pre viously generated words or history . W e use the notation w 1: T to refer to an arbitrary word sequence of length T , and the notation y 1: T to refer to the gold (i.e., correct) target w ord sequence for an input x . Most seq2seq systems utilize a recurrent neural network (RNN) for the decoder model. F ormally , a recurrent neural network is a parameterized non- linear function RNN that recursiv ely maps a se- quence of vectors to a sequence of hidden states. Let m 1 , . . . , m T be a sequence of T vectors, and let h 0 be some initial state vector . Applying an RNN to any such sequence yields hidden states h t at each time-step t , as follo ws: h t ← RNN ( m t , h t − 1 ; θ ) , where θ is the set of model parameters, which are shared over time. In this work, the vectors m t will always correspond to the embeddings of a tar- get word sequence w 1: T , and so we will also write h t ← RNN ( w t , h t − 1 ; θ ) , with w t standing in for its embedding. RNN decoders are typically trained to act as con- ditional language models. That is, one attempts to model the probability of the t ’th target word con- ditioned on x and the target history by stipulating that p ( w t | w 1: t − 1 , x ) = g ( w t , h t − 1 , x ) , for some pa- rameterized function g typically computed with an af fine layer followed by a softmax. In computing these probabilities, the state h t − 1 represents the tar- get history , and h 0 is typically set to be some func- tion of x . The complete model (including encoder) is trained, analogously to a neural language model, to minimize the cross-entropy loss at each time-step while conditioning on the gold history in the train- ing data. That is, the model is trained to minimize − ln Q T t =1 p ( y t | y 1: t − 1 , x ) . Once the decoder is trained, discrete se- quence generation can be performed by approx- imately maximizing the probability of the tar- get sequence under the conditional distrib ution, ˆ y 1: T = argb eam w 1: T Q T t =1 p ( w t | w 1: t − 1 , x ) , where we use the notation argb eam to emphasize that the decoding process requires heuristic search, since the RNN model is non-Mark ovian. In practice, a simple beam search procedure that explores K prospectiv e histories at each time-step has prov en to be an effec- ti ve decoding approach. Ho we ver , as noted above, decoding in this manner after conditional language- model style training potentially suf fers from the is- sues of exposure bias and label bias, which moti- v ates the work of this paper . 4 Beam Search Optimization W e begin by making one small change to the seq2seq modeling framework. Instead of predicting the probability of the next word, we instead learn to produce (non-probabilistic) scores for ranking se- quences. Define the score of a sequence consisting of history w 1: t − 1 follo wed by a single word w t as f ( w t , h t − 1 , x ) , where f is a parameterized function examining the current hidden-state of the relev ant RNN at time t − 1 as well as the input representa- tion x . In experiments, our f will have an identi- cal form to g but without the final softmax transfor- mation (which transforms unnormalized scores into probabilities), thereby allowing the model to av oid issues associated with the label bias problem. More importantly , we also modify how this model is trained. Ideally we would train by comparing the gold sequence to the highest-scoring complete sequence. Howe v er , because finding the argmax sequence according to this model is intractable, we propose to adopt a LaSO-like (Daum ´ e III and Marcu, 2005) scheme to train, which we will re- fer to as beam search optimization (BSO). In par- ticular , we define a loss that penalizes the gold se- quence falling off the beam during training. 1 The proposed training approach is a simple way to ex- pose the model to incorrect histories and to match the training procedure to test generation. Further- more we show that it can be implemented efficiently without changing the asymptotic run-time of train- ing, beyond a f actor of the beam size K . 4.1 Search-Based Loss W e no w formalize this notion of a search-based loss for RNN training. Assume we hav e a set S t of K candidate sequences of length t . W e can calculate a score for each sequence in S t using a scoring func- tion f parameterized with an RNN, as above, and we define the sequence ˆ y ( K ) 1: t ∈ S t to be the K ’th ranked 1 Using a non-probabilistic model further allows us to incur no loss (and thus require no update to parameters) when the gold sequence is on the beam; this contrasts with models based on a CRF loss, such as those of Andor et al. (2016) and Zhou et al. (2015), though in training those models are simply not updated when the gold sequence remains on the beam. sequence in S t according to f . That is, assuming distinct scores, |{ ˆ y ( k ) 1: t ∈ S t | f ( ˆ y ( k ) t , ˆ h ( k ) t − 1 ) > f ( ˆ y ( K ) t , ˆ h ( K ) t − 1 ) }| = K − 1 , where ˆ y ( k ) t is the t ’th token in ˆ y ( k ) 1: t , ˆ h ( k ) t − 1 is the RNN state corresponding to its t − 1 ’ st step, and where we hav e omitted the x argument to f for bre vity . W e no w define a loss function that gi v es loss each time the score of the gold prefix y 1: t does not exceed that of ˆ y ( K ) 1: t by a margin: L ( f ) = T X t =1 ∆( ˆ y ( K ) 1: t ) h 1 − f ( y t , h t − 1 ) + f ( ˆ y ( K ) t , ˆ h ( K ) t − 1 ) i . Abov e, the ∆( ˆ y ( K ) 1: t ) term denotes a mistake-specific cost-function, which allows us to scale the loss de- pending on the se verity of erroneously predicting ˆ y ( K ) 1: t ; it is assumed to return 0 when the margin re- quirement is satisfied, and a positi ve number other- wise. It is this term that allows us to use sequence- rather than word-lev el costs in training (addressing the 2nd issue in the introduction). For instance, when training a seq2seq model for machine trans- lation, it may be desirable to hav e ∆( ˆ y ( K ) 1: t ) be in- versely related to the partial sentence-lev el BLEU score of ˆ y ( K ) 1: t with y 1: t ; we experiment along these lines in Section 5.3. Finally , because we want the full gold sequence to be at the top of the beam at the end of search, when t = T we modify the loss to require the score of y 1: T to exceed the score of the highest ranked incorrect prediction by a margin. W e can optimize the loss L using a two-step pro- cess: (1) in a forward pass, we compute candidate sets S t and record margin violations (sequences with non-zero loss); (2) in a backward pass, we back- propagate the errors through the seq2seq RNNs. Un- like standard seq2seq training, the first-step requires running search (in our case beam search) to find margin violations. The second step can be done by adapting back-propagation through time (BPTT). W e ne xt discuss the details of this process. 4.2 Forward: Find V iolations In order to minimize this loss, we need to specify a procedure for constructing candidate sequences ˆ y ( k ) 1: t at each time step t so that we find margin viola- tions. W e follo w LaSO (rather than early-update 2 ; see Section 2) and build candidates in a recursiv e manner . If there was no margin violation at t − 1 , then S t is constructed using a standard beam search update. If there was a margin violation, S t is con- structed as the K best sequences assuming the gold history y 1: t − 1 through time-step t − 1 . Formally , assume the function succ maps a se- quence w 1: t − 1 ∈ V t − 1 to the set of all v alid se- quences of length t that can be formed by appending to it a valid word w ∈ V . In the simplest, uncon- strained case, we will hav e succ( w 1: t − 1 ) = { w 1: t − 1 , w | w ∈ V } . As an important aside, note that for some prob- lems it may be preferable to define a succ func- tion which imposes hard constraints on successor sequences. For instance, if we would like to use seq2seq models for parsing (by emitting a con- stituency or dependency structure encoded into a se- quence in some way), we will hav e hard constraints on the sequences the model can output, namely , that they represent v alid parses. While hard constraints such as these would be difficult to add to standard seq2seq at training time, in our framew ork they can naturally be added to the succ function, allowing us to train with hard constraints; we experiment along these lines in Section 5.3, where we refer to a model trained with constrained beam search as ConBSO. Having defined an appropriate succ function, we specify the candidate set as: S t = topK ( succ( y 1: t − 1 ) violation at t − 1 S K k =1 succ( ˆ y ( k ) 1: t − 1 ) otherwise , where we ha v e a mar gin violation at t − 1 iff f ( y t − 1 , h t − 2 ) < f ( ˆ y ( K ) t − 1 , ˆ h ( K ) t − 2 ) + 1 , and where topK considers the scores gi ven by f . This search procedure is illustrated in the top portion of Figure 1. In the forward pass of our training algorithm, sho wn as the first part of Algorithm 1, we run this version of beam search and collect all sequences and their hidden states that lead to losses. 2 W e found that training with early-update rather than (de- layed) LaSO did not work well, ev en after pre-training. Giv en the success of early-update in many NLP tasks this was some- what surprising. W e leave this question to future w ork. a red dog smells home today the dog dog barks quickly F riday red blue cat barks straight now runs today a red dog runs quic kly today blue dog barks home today Figure 1: T op: possible ˆ y ( k ) 1: t formed in training with a beam of size K = 3 and with gold sequence y 1:6 = “a red dog runs quickly today”. The gold sequence is high- lighted in yellow , and the predicted prefixes in volv ed in margin violations (at t = 4 and t = 6 ) are in gray . Note that time-step T = 6 uses a different loss criterion. Bot- tom: prefix es that actually participate in the loss, ar- ranged to illustrate the back-propagation process. 4.3 Backward: Merge Sequences Once we hav e collected margin violations we can run backpropagation to compute parameter updates. Assume a margin violation occurs at time-step t be- tween the predicted history ˆ y ( K ) 1: t and the gold his- tory y 1: t . As in standard seq2seq training we must back-propagate this error through the gold history; ho we ver , unlike seq2seq we also have a gradient for the wrongly predicted history . Recall that to back-propagate errors through an RNN we run a recursiv e backward procedure — denoted belo w by BRNN — at each time-step t , which accumulates the gradients of next-step and fu- ture losses with respect to h t . W e ha ve: ∇ h t L ← BRNN ( ∇ h t L t +1 , ∇ h t +1 L ) , where L t +1 is the loss at step t + 1 , deriving, for instance, from the score f ( y t +1 , h t ) . Running this BRNN procedure from t = T − 1 to t = 0 is known as back-propagation through time (BPTT). In determining the total computational cost of back-propagation here, first note that in the worst case there is one violation at each time-step, which leads to T independent, incorrect sequences. Since we need to call BRNN O ( T ) times for each se- quence, a naiv e strategy of running BPTT for each incorrect sequence would lead to an O ( T 2 ) back- ward pass, rather than the O ( T ) time required for the standard seq2seq approach. Fortunately , our combination of search-strategy and loss mak e it possible to efficiently share BRNN operations. This shared structure comes naturally from the LaSO update, which resets the beam in a con v enient way . W e informally illustrate the process in Figure 1. The top of the diagram shows a possible sequence of ˆ y ( k ) 1: t formed during search with a beam of size 3 for the target sequence y = “a red dog runs quickly today . ” When the gold sequence falls off the beam at t = 4 , search resumes with S 5 = succ( y 1:4 ) , and so all subsequent predicted sequences have y 1:4 as a prefix and are thus functions of h 4 . Moreov er , be- cause our loss function only in v olves the scores of the gold prefix and the violating prefix, we end up with the relatively simple computation tree shown at the bottom of Figure 1. It is evident that we can backpropagate in a single pass, accumulating gradi- ents from sequences that di verge from the gold at the time-step that precedes their div ergence. The second half of Algorithm 1 shows this explicitly for a single sequence, though it is straightforward to extend the algorithm to operate in batch. 3 5 Data and Methods W e run experiments on three dif ferent tasks, com- paring our approach to the seq2seq baseline, and to other rele v ant baselines. 5.1 Model While the method we describe applies to seq2seq RNNs in general, for all experiments we use the global attention model of Luong et al. (2015) — which consists of an LSTM (Hochreiter and Schmidhuber , 1997) encoder and an LSTM decoder with a global attention model — as both the base- line seq2seq model (i.e., as the model that computes the g in Section 3) and as the model that computes our sequence-scores f ( w t , h t − 1 , x ) . As in Luong et al. (2015), we also use “input feeding, ” which in v olv es feeding the attention distribution from the pre vious time-step into the decoder at the current step. This model architecture has been found to be highly performant for neural machine translation and other seq2seq tasks. 3 W e also note that because we do not update the parameters until after the T ’th search step, our training procedure differs slightly from LaSO (which is online), and in this aspect is essen- tially equiv alent to the “delayed LaSO update” of Bj ¨ orkelund and Kuhn (2014). Algorithm 1 Seq2seq Beam-Search Optimization 1: procedure B S O ( x , K tr , succ ) 2: /*F O RW A R D */ 3: Init empty storage ˆ y 1: T and ˆ h 1: T ; init S 1 4: r ← 0 ; v iol ations ← { 0 } 5: for t = 1 , . . . , T do 6: K = K tr if t 6 = T else arg max k : ˆ y ( k ) 1: t 6 = y 1: t f ( ˆ y ( k ) t , ˆ h ( k ) t − 1 ) 7: if f ( y t , h t − 1 ) < f ( ˆ y ( K ) t , ˆ h ( K ) t − 1 ) + 1 then 8: ˆ h r : t − 1 ← ˆ h ( K ) r : t − 1 9: ˆ y r +1: t ← ˆ y ( K ) r +1: t 10: Add t to v iol ations 11: r ← t 12: S t +1 ← topK(succ( y 1: t )) 13: else 14: S t +1 ← topK( S K k =1 succ( ˆ y ( k ) 1: t )) 15: /*B AC K W A R D */ 16: g rad h T ← 0 ; g r ad b h T ← 0 17: for t = T − 1 , . . . , 1 do 18: g rad h t ← BRNN ( ∇ h t L t +1 , g r ad h t +1 ) 19: g rad b h t ← BRNN ( ∇ b h t L t +1 , g r ad b h t +1 ) 20: if t − 1 ∈ v iol ations then 21: g rad h t ← g r ad h t + g r ad b h t 22: g rad b h t ← 0 T o distinguish the models we refer to our system as BSO (beam search optimization) and to the base- line as seq2seq. When we apply constrained training (as discussed in Section 4.2), we refer to the model as ConBSO. In providing results we also distinguish between the beam size K tr with which the model is trained, and the beam size K te which is used at test-time. In general, if we plan on ev aluating with a beam of size K te it makes sense to train with a beam of size K tr = K te + 1 , since our objectiv e requires the gold sequence to be scored higher than the last sequence on the beam. 5.2 Methodology Here we detail additional techniques we found nec- essary to ensure the model learned ef fectively . First, we found that the model failed to learn when trained from a random initialization. 4 W e therefore found it necessary to pre-train the model using a standard, word-le v el cross-entropy loss as described in Sec- 4 This may be because there is relatively little signal in the sparse, sequence-level gradient, but this point requires further in vestig ation. tion 3. The necessity of pre-training in this instance is consistent with the findings of other authors who train non-local neural models (Kingsbury , 2009; Sak et al., 2014; Andor et al., 2016; Ranzato et al., 2016). 5 Similarly , it is clear that the smaller the beam used in training is, the less room the model has to make erroneous predictions without running afoul of the margin loss. Accordingly , we also found it use- ful to use a “curriculum beam” strategy in training, whereby the size of the beam is increased gradually during training. In particular, giv en a desired train- ing beam size K tr , we began training with a beam of size 2, and increased it by 1 e very 2 epochs until reaching K tr . Finally , it has been established that dr opout (Sri- v astav a et al., 2014) regularization improves the per - formance of LSTMs (Pham et al., 2014; Zaremba et al., 2014), and in our experiments we run beam search under dropout. 6 For all experiments, we trained both seq2seq and BSO models with mini-batch Adagrad (Duchi et al., 2011) (using batches of size 64), and we renormal- ized all gradients so they did not exceed 5 before updating parameters. W e did not extensiv ely tune learning-rates, but we found initial rates of 0.02 for the encoder and decoder LSTMs, and a rate of 0.1 or 0.2 for the final linear layer (i.e., the layer tasked with making word-predictions at each time- step) to work well across all the tasks we consid- ered. Code implementing the experiments described belo w can be found at https://github.com/ harvardnlp/BSO . 7 5.3 T asks and Results Our experiments are primarily intended to ev aluate the effecti v eness of beam search optimization ov er standard seq2seq training. As such, we run exper- iments with the same model across three very dif- 5 Andor et al. (2016) found, howe v er , that pre-training only increased con v ergence-speed, b ut w as not necessary for obtain- ing good results. 6 Howe v er , it is important to ensure that the same mask ap- plied at each time-step of the forward search is also applied at the corresponding step of the backward pass. W e accomplish this by pre-computing masks for each time-step, and sharing them between the partial sequence LSTMs. 7 Our code is based on Y oon Kim’ s seq2seq code, https: //github.com/harvardnlp/seq2seq- attn . ferent problems: word ordering, dependency pars- ing, and machine translation. While we do not in- clude all the features and extensions necessary to reach state-of-the-art performance, e ven the baseline seq2seq model is generally quite performant. W ord Ordering The task of correctly ordering the words in a shuffled sentence has recently gained some attention as a way to test the (syntactic) capa- bilities of text-generation systems (Zhang and Clark, 2011; Zhang and Clark, 2015; Liu et al., 2015; Schmaltz et al., 2016). W e cast this task as seq2seq problem by vie wing a shuffled sentence as a source sentence, and the correctly ordered sentence as the target. While w ord ordering is a some what synthetic task, it has two interesting properties for our pur- poses. First, it is a task which plausibly requires search (due to the exponentially many possible or- derings), and, second, there is a clear hard constraint on output sequences, namely , that they be a permu- tation of the source sequence. For both the baseline and BSO models we enforce this constraint at test- time. Howe ver , we also experiment with constrain- ing the BSO model during training, as described in Section 4.2, by defining the succ function to only al- lo w successor sequences containing un-used words in the source sentence. For experiments, we use the same PTB dataset (with the standard training, dev elopment, and test splits) and ev aluation procedure as in Zhang and Clark (2015) and later work, with performance re- ported in terms of BLEU score with the correctly or - dered sentences. For all word-ordering experiments we use 2-layer encoder and decoder LSTMs, each with 256 hidden units, and dropout with a rate of 0.2 between LSTM layers. W e use simple 0/1 costs in defining the ∆ function. W e sho w our test-set results in T able 1. W e see that on this task there is a large improv ement at each beam size from switching to BSO, and a further im- prov ement from using the constrained model. Inspired by a similar analysis in Daum ´ e III and Marcu (2005), we further examine the relationship between K tr and K te when training with ConBSO in T able 2. W e see that larger K tr hurt greedy in- ference, b ut that results continue to impro ve, at least initially , when using a K te that is (somewhat) bigger than K tr − 1 . W ord Ordering (BLEU) K te = 1 K te = 5 K te = 10 seq2seq 25.2 29.8 31.0 BSO 28.0 33.2 34.3 ConBSO 28.6 34.3 34.5 LSTM-LM 15.4 - 26.8 T able 1: W ord ordering. BLEU Scores of seq2seq, BSO, constrained BSO, and a vanilla LSTM language model (from Schmaltz et al, 2016). All experiments above ha v e K tr = 6 . W ord Ordering Beam Size (BLEU) K te = 1 K te = 5 K te = 10 K tr = 2 30.59 31.23 30.26 K tr = 6 28.20 34.22 34.67 K tr = 11 26.88 34.42 34.88 seq2seq 26.11 30.20 31.04 T able 2: Beam-size experiments on w ord ordering de vel- opment set. All numbers reflect training with constraints (ConBSO). Dependency Parsing W e next apply our model to dependency parsing, which also has hard con- straints and plausibly benefits from search. W e treat dependency parsing with arc-standard transi- tions as a seq2seq task by attempting to map from a source sentence to a target sequence of source sentence words interleaved with the arc-standard, reduce-actions in its parse. For e xample, we attempt to map the source sentence But it was the Quotron problems that ... to the target sequence But it was @L SBJ @L DEP the Quotron problems @L NMOD @L NMOD that ... W e use the standard Penn T reebank dataset splits with Stanford dependency labels, and the standard U AS/LAS ev aluation metric (excluding punctua- tion) follo wing Chen and Manning (2014). All models thus see only the words in the source and, when decoding, the actions it has emitted so far; no other features are used. W e use 2-layer encoder and decoder LSTMs with 300 hidden units per layer Dependency P arsing (U AS/LAS) K te = 1 K te = 5 K te = 10 seq2seq 87.33/82.26 88.53/84.16 88.66/84.33 BSO 86.91/82.11 91.00/ 87.18 91.17/ 87.41 ConBSO 85.11/79.32 91.25 /86.92 91.57 /87.26 Andor 93.17/91.18 - - T able 3: Dependency parsing. U AS/LAS of seq2seq, BSO, ConBSO and baselines on PTB test set. Andor is the current state-of-the-art model for this data set (Andor et al. 2016), and we note that with a beam of size 32 the y obtain 94.41/92.55. All experiments above have K tr = 6 . and dropout with a rate of 0.3 between LSTM lay- ers. W e replace singleton words in the training set with an UNK token, normalize digits to a single symbol, and initialize word embeddings for both source and target words from the publicly av ailable word2vec (Mikolov et al., 2013) embeddings. W e use simple 0/1 costs in defining the ∆ function. As in the word-ordering case, we also e xperiment with modifying the succ function in order to train under hard constraints, namely , that the emitted tar- get sequence be a valid parse. In particular , we con- strain the output at each time-step to obey the stack constraint, and we ensure words in the source are emitted in order . W e sho w results on the test-set in T able 3. BSO and ConBSO both show significant improv ements ov er seq2seq, with ConBSO improving most on U AS, and BSO improving most on LAS. W e achieve a reasonable final score of 91.57 UAS, which lags behind the state-of-the-art, but is promising for a general-purpose, word-only model. T ranslation W e finally ev aluate our model on a small machine translation dataset, which allows us to experiment with a cost function that is not 0/1, and to consider other baselines that attempt to mit- igate exposure bias in the seq2seq setting. W e use the dataset from the work of Ranzato et al. (2016), which uses data from the German-to-English por- tion of the IWSL T 2014 machine translation ev al- uation campaign (Cettolo et al., 2014). The data comes from translated TED talks, and the dataset contains roughly 153K training sentences, 7K de vel- opment sentences, and 7K test sentences. W e use the same preprocessing and dataset splits as Ranzato et Machine T ranslation (BLEU) K te = 1 K te = 5 K te = 10 seq2seq 22.53 24.03 23.87 BSO, SB- ∆ 23.83 26.36 25.48 XENT 17.74 20.10 20.28 D AD 20.12 22.25 22.40 MIXER 20.73 21.81 21.83 T able 4: Machine translation experiments on test set; re- sults below middle line are from MIXER model of Ran- zato et al. (2016). SB- ∆ indicates sentence BLEU costs are used in defining ∆ . XENT is similar to our seq2seq model but with a con v olutional encoder and simpler at- tention. DAD trains seq2seq with scheduled sampling (Bengio et al., 2015). BSO, SB- ∆ experiments above hav e K tr = 6 . al. (2016), and like them we also use a single-layer LSTM decoder with 256 units. W e also use dropout with a rate of 0.2 between each LSTM layer . W e em- phasize, howe v er , that while our decoder LSTM is of the same size as that of Ranzato et al. (2016), our re- sults are not directly comparable, because we use an LSTM encoder (rather than a conv olutional encoder as they do), a slightly dif ferent attention mechanism, and input feeding (Luong et al., 2015). For our main MT results, we set ∆( ˆ y ( k ) 1: t ) to 1 − SB( ˆ y ( K ) r +1: t , y r +1: t ) , where r is the last margin violation and SB denotes smoothed, sentence-le vel BLEU (Chen and Cherry , 2014). This setting of ∆ should act to penalize erroneous predictions with a relati vely lo w sentence-le vel BLEU score more than those with a relatively high sentence-lev el BLEU score. In T able 4 we sho w our final results and those from Ranzato et al. (2016). 8 While we start with an improv ed baseline, we see similarly large increases in accuracy as those obtained by DAD and MIXER, in particular when K te > 1 . W e further in vestigate the utility of these sequence-le vel costs in T able 5, which compares us- ing sentence-lev el BLEU costs in defining ∆ with using 0/1 costs. W e see that the more sophisti- cated sequence-le vel costs hav e a moderate effect on BLEU score. 8 Some results from personal communication. Machine T ranslation (BLEU) K te = 1 K te = 5 K te = 10 0/1- ∆ 25.73 28.21 27.43 SB- ∆ 25.99 28.45 27.58 T able 5: BLEU scores obtained on the machine trans- lation development data when training with ∆( ˆ y ( k ) 1: t ) = 1 (top) and ∆( ˆ y ( k ) 1: t ) = 1 − SB( ˆ y ( K ) r +1: t , y r +1: t ) (bottom), and K tr = 6. Timing Gi ven Algorithm 1, we would expect training time to increase linearly with the size of the beam. On the abov e MT task, our highly tuned seq2seq baseline processes an av erage of 13,038 to- kens/second (including both source and target to- kens) on a GTX 970 GPU. For beams of size K tr = 2, 3, 4, 5, and 6, our implementation processes on a v erage 1,985, 1,768, 1,709, 1,521, and 1,458 to- kens/second, respecti vely . Thus, we appear to pay an initial constant factor of ≈ 3 . 3 due to the more complicated forward and backward passes, and then training scales with the size of the beam. Because we batch beam predictions on a GPU, howe v er , we find that in practice training time scales sub-linearly with the beam-size. 6 Conclusion W e hav e introduced a variant of seq2seq and an as- sociated beam search training scheme, which ad- dresses exposure bias as well as label bias, and moreov er allows for both training with sequence- le vel cost functions as well as with hard constraints. Future work will examine scaling this approach to much larger datasets. Acknowledgments W e thank Y oon Kim for helpful discussions and for providing the initial seq2seq code on which our im- plementations are based. W e thank Allen Schmaltz for help with the word ordering experiments. W e also gratefully acknowledge the support of a Google Research A ward. References [Andor et al.2016] Daniel Andor , Chris Alberti, David W eiss, Aliaksei Se veryn, Alessandro Presta, Kuzman Ganchev , Slav Petrov , and Michael Collins. 2016. Globally normalized transition-based neural networks. A CL . [Bahdanau et al.2015] Dzmitry Bahdanau, Kyunghyun Cho, and Y oshua Bengio. 2015. Neural machine translation by jointly learning to align and translate. In ICLR . [Bahdanau et al.2016] Dzmitry Bahdanau, Philemon Brakel, K elvin Xu, Anirudh Goyal, Ryan Lowe, Joelle Pineau, Aaron Courville, and Y oshua Bengio. 2016. An Actor-Critic Algorithm for Sequence Prediction. CoRR , abs/1607.07086. [Bengio et al.2015] Samy Bengio, Oriol V inyals, Navdeep Jaitly , and Noam Shazeer . 2015. Scheduled sampling for sequence prediction with recurrent neural networks. In Advances in Neural Information Pr ocessing Systems , pages 1171–1179. [Bj ¨ orkelund and Kuhn2014] Anders Bj ¨ orkelund and Jonas Kuhn. 2014. Learning structured perceptrons for coreference Resolution with Latent Antecedents and Non-local Features. A CL, Baltimor e, MD, USA, J une . [Cettolo et al.2014] Mauro Cettolo, Jan Niehues, Sebas- tian St ¨ uker , Luisa Bentiv ogli, and Marcello Federico. 2014. Report on the 11th iwslt ev aluation campaign. In Pr oceedings of IWSL T , 20014 . [Chang et al.2015] Kai-W ei Chang, Hal Daum ´ e III, John Langford, and Stephane Ross. 2015. Efficient pro- grammable learning to search. In Arxiv . [Chen and Cherry2014] Boxing Chen and Colin Cherry . 2014. A systematic comparison of smoothing tech- niques for sentence-lev el bleu. ACL 2014 , page 362. [Chen and Manning2014] Danqi Chen and Christopher D Manning. 2014. A fast and accurate dependency parser using neural networks. In EMNLP , pages 740– 750. [Cho et al.2014] KyungHyun Cho, Bart van Merrienboer , Dzmitry Bahdanau, and Y oshua Bengio. 2014. On the properties of neural machine translation: Encoder- decoder approaches. Eighth W orkshop on Syntax, Se- mantics and Structur e in Statistical T ranslation . [Collins and Roark2004] Michael Collins and Brian Roark. 2004. Incremental parsing with the perceptron algorithm. In Pr oceedings of the 42nd Annual Meet- ing on Association for Computational Linguistics , page 111. Association for Computational Linguistics. [Daum ´ e III and Marcu2005] Hal Daum ´ e III and Daniel Marcu. 2005. Learning as search optimization: ap- proximate lar ge mar gin methods for structured predic- tion. In Pr oceedings of the T wenty-Second Interna- tional Conference on Mac hine Learning (ICML 2005) , pages 169–176. [Daum ´ e III et al.2009] Hal Daum ´ e III, John Langford, and Daniel Marcu. 2009. Search-based structured pre- diction. Machine Learning , 75(3):297–325. [Duchi et al.2011] John Duchi, Elad Hazan, and Y oram Singer . 2011. Adaptive Subgradient Methods for On- line Learning and Stochastic Optimization. The Jour- nal of Machine Learning Resear ch , 12:2121–2159. [Filippov a et al.2015] Katja Filippov a, Enrique Alfon- seca, Carlos A Colmenares, Lukasz Kaiser , and Oriol V inyals. 2015. Sentence compression by deletion with lstms. In Pr oceedings of the 2015 Conference on Empirical Methods in Natural Language Pr ocessing , pages 360–368. [Hochreiter and Schmidhuber1997] Sepp Hochreiter and J ¨ urgen Schmidhuber . 1997. Long short-term memory . Neural Comput. , 9:1735–1780. [Huang et al.2012] Liang Huang, Suphan F ayong, and Y ang Guo. 2012. Structured perceptron with inexact search. In Proceedings of the 2012 Conference of the North American Chapter of the Association for Com- putational Linguistics: Human Language T echnolo- gies , pages 142–151. Association for Computational Linguistics. [Kingsbury2009] Brian Kingsbury . 2009. Lattice-based optimization of sequence classification criteria for neural-network acoustic modeling. In Acoustics, Speech and Signal Pr ocessing , 2009. ICASSP 2009. IEEE International Confer ence on , pages 3761–3764. IEEE. [Lafferty et al.2001] John D. Lafferty , Andre w McCal- lum, and Fernando C. N. Pereira. 2001. Condi- tional random fields: Probabilistic models for seg- menting and labeling sequence data. In Pr oceedings of the Eighteenth International Conference on Machine Learning (ICML 2001) , pages 282–289. [Liu et al.2015] Y ijia Liu, Y ue Zhang, W anxiang Che, and Bing Qin. 2015. T ransition-based syntactic lineariza- tion. In Pr oceedings of N AACL . [Luong et al.2015] Thang Luong, Hieu Pham, and Christopher D. Manning. 2015. Effecti ve approaches to attention-based neural machine translation. In Pr oceedings of the 2015 Confer ence on Empirical Methods in Natural Language Pr ocessing , EMNLP 2015 , pages 1412–1421. [Mikolov et al.2013] T omas Mikolov , Ilya Sutske v er , Kai Chen, Greg S Corrado, and Jeff Dean. 2013. Dis- tributed representations of words and phrases and their compositionality . In Advances in neural information pr ocessing systems , pages 3111–3119. [Papineni et al.2002] Kishore P apineni, Salim Roukos, T odd W ard, and W ei-Jing Zhu. 2002. Bleu: a method for automatic evaluation of machine translation. In Pr oceedings of the 40th annual meeting on association for computational linguistics , pages 311–318. Associ- ation for Computational Linguistics. [Pham et al.2014] V u Pham, Th ´ eodore Bluche, Christo- pher Kermorv ant, and J ´ er ˆ ome Louradour . 2014. Dropout improv es recurrent neural networks for hand- writing recognition. In F r ontier s in Handwriting Recognition (ICFHR), 2014 14th International Con- fer ence on , pages 285–290. IEEE. [Ranzato et al.2016] Marc’Aurelio Ranzato, Sumit Chopra, Michael Auli, and W ojciech Zaremba. 2016. Sequence le vel training with recurrent neural networks. ICLR . [Ross et al.2011] St ´ ephane Ross, Geoffre y J. Gordon, and Drew Bagnell. 2011. A reduction of imitation learn- ing and structured prediction to no-regret online learn- ing. In Pr oceedings of the F ourteenth International Confer ence on Artificial Intelligence and Statistics , pages 627–635. [Sak et al.2014] Hasim Sak, Oriol V inyals, Georg Heigold, Andrew W . Senior , Erik McDermott, Rajat Monga, and Mark Z. Mao. 2014. Sequence discrimi- nativ e distributed training of long short-term memory recurrent neural networks. In INTERSPEECH 2014 , pages 1209–1213. [Schmaltz et al.2016] Allen Schmaltz, Alexander M Rush, and Stuart M Shieber . 2016. W ord ordering without syntax. arXiv pr eprint arXiv:1604.08633 . [Serban et al.2016] Iulian Vlad Serban, Alessandro Sor- doni, Y oshua Bengio, Aaron C. Courville, and Joelle Pineau. 2016. Building end-to-end dialogue systems using generative hierarchical neural network models. In Pr oceedings of the Thirtieth AAAI Conference on Artificial Intelligence , pages 3776–3784. [Shen et al.2016] Shiqi Shen, Y ong Cheng, Zhongjun He, W ei He, Hua W u, Maosong Sun, and Y ang Liu. 2016. Minimum risk training for neural machine translation. In Pr oceedings of the 54th Annual Meeting of the As- sociation for Computational Linguistics, A CL 2016 . [Sriv astava et al.2014] Nitish Sri v asta va, Geof frey Hin- ton, Alex Krizhevsky , Ilya Sutskev er , and Ruslan Salakhutdinov . 2014. Dropout: A simple way to pre- vent neural networks from overfitting. The Journal of Machine Learning Resear ch , 15(1):1929–1958. [Sutske ver et al.2011] Ilya Sutsk e ver , James Martens, and Geoffre y E Hinton. 2011. Generating text with recur- rent neural networks. In Pr oceedings of the 28th In- ternational Confer ence on Machine Learning (ICML) , pages 1017–1024. [Sutske ver et al.2014] Ilya Sutske ver , Oriol V inyals, and Quoc VV Le. 2014. Sequence to sequence learning with neural networks. In Advances in Neural Informa- tion Pr ocessing Systems (NIPS) , pages 3104–3112. [V enugopalan et al.2015] Subhashini V enugopalan, Mar- cus Rohrbach, Jeffrey Donahue, Raymond J. Mooney , T re v or Darrell, and Kate Saenko. 2015. Sequence to sequence - video to text. In ICCV , pages 4534–4542. [V inyals et al.2015] Oriol V inyals, Łukasz Kaiser , T erry K oo, Slav Petrov , Ilya Sutskev er , and Geoffrey Hinton. 2015. Grammar as a foreign language. In Advances in Neural Information Processing Systems , pages 2755– 2763. [V oigtlaender et al.2015] Paul V oigtlaender , Patrick Doetsch, Simon W iesler , Ralf Schluter , and Hermann Ney . 2015. Sequence-discriminati ve training of recurrent neural networks. In Acoustics, Speech and Signal Pr ocessing (ICASSP), 2015 IEEE International Confer ence on , pages 2100–2104. IEEE. [W atanabe and Sumita2015] T aro W atanabe and Eiichiro Sumita. 2015. T ransition-based neural constituent parsing. Pr oceedings of A CL-IJCNLP . [Xu et al.2015] Kelvin Xu, Jimmy Ba, Ryan Kiros, Kyungh yun Cho, Aaron C. Courville, Ruslan Salakhutdinov , Richard S. Zemel, and Y oshua Bengio. 2015. Show , attend and tell: Neural image caption generation with visual attention. In ICML , pages 2048–2057. [Y azdani and Henderson2015] Majid Y azdani and James Henderson. 2015. Incremental recurrent neural net- work dependency parser with search-based discrimi- nativ e training. In Pr oceedings of the 19th Confer- ence on Computational Natural Language Learning, (CoNLL 2015) , pages 142–152. [Zaremba et al.2014] W ojciech Zaremba, Ilya Sutske v er , and Oriol V inyals. 2014. Recurrent neural network regularization. CoRR , abs/1409.2329. [Zhang and Clark2011] Y ue Zhang and Stephen Clark. 2011. Syntax-based grammaticality improvement us- ing ccg and guided search. In Pr oceedings of the Con- fer ence on Empirical Methods in Natural Language Pr ocessing , pages 1147–1157. Association for Com- putational Linguistics. [Zhang and Clark2015] Y ue Zhang and Stephen Clark. 2015. Discriminati v e syntax-based word order- ing for text generation. Computational Linguistics , 41(3):503–538. [Zhou et al.2015] Hao Zhou, Y ue Zhang, and Jiajun Chen. 2015. A neural probabilistic structured-prediction model for transition-based dependency parsing. In Pr oceedings of the 53rd Annual Meeting of the As- sociation for Computational Linguistics , pages 1213– 1222.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment