Normalizing Flows on Riemannian Manifolds

Authors: Mevlana C. Gemici, Danilo Rezende, Shakir Mohamed

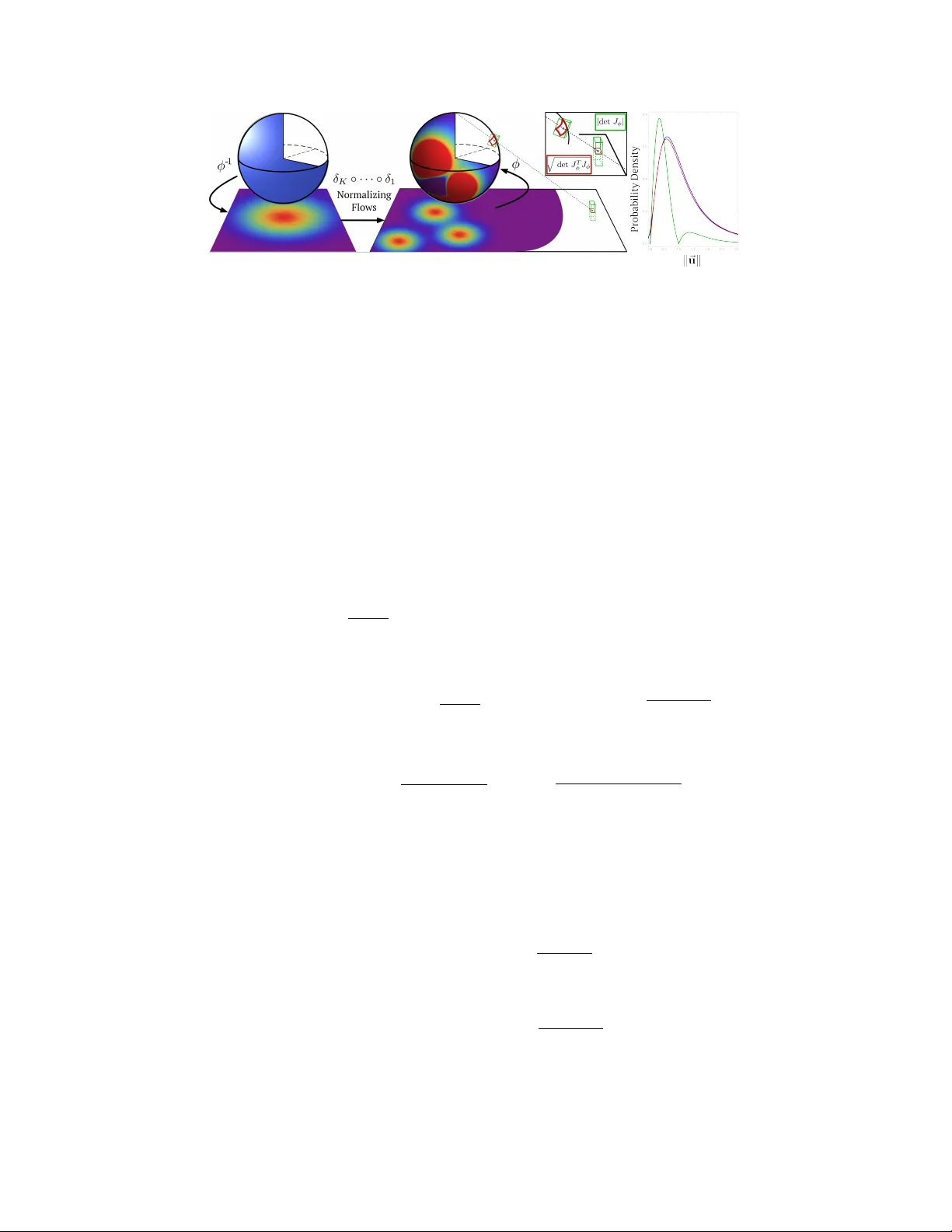

Mevlana C. Gemici, Google DeepMind, mevlana@google.com
Danilo J. Rezende, Google DeepMind, danilor@google.com
Shakir Mohamed, Google DeepMind, shakir@google.com

Abstract

We consider the problem of density estimation on Riemannian manifolds. Density estimation on manifolds has many applications in fluid mechanics, optics and plasma physics, and it often appears when dealing with angular variables (such as those used in protein folding, robot limbs and gene expression) and in directional statistics in general. In spite of the multitude of algorithms available for density estimation in the Euclidean spaces $\mathbb{R}^n$ that scale to large $n$ (e.g. normalizing flows, kernel methods and variational approximations), most of these methods are not immediately suitable for density estimation in more general Riemannian manifolds. We revisit techniques related to homeomorphisms from differential geometry for projecting densities to sub-manifolds and use them to generalize the idea of normalizing flows to more general Riemannian manifolds. The resulting algorithm is scalable, simple to implement and suitable for use with automatic differentiation. We demonstrate concrete examples of this method on the $n$-sphere $S^n$.

In recent years, there has been much interest in applying variational inference techniques to learning large-scale probabilistic models in various domains, such as images and text [1, 2, 3, 4, 5, 6]. One of the main issues in variational inference is finding the best approximation to an intractable posterior distribution of interest by searching through a class of known probability distributions. The class of approximations used is often limited, e.g. mean-field approximations, implying that no solution is ever able to resemble the true posterior distribution. This is a widely raised objection to variational methods: unlike MCMC, they may not recover the true posterior distribution even in the asymptotic regime.
To address this problem, recent work on Normalizing Flows [7], Inverse Autoregressive Flows [8], and others [9, 10] (referred to collectively as normalizing flows) has focused on developing scalable methods of constructing arbitrarily complex and flexible approximate posteriors from simple distributions using transformations parameterized by neural networks, which gives these models universal approximation capability in the asymptotic regime. In all of these works, the distributions of interest are restricted to be defined over high-dimensional Euclidean spaces. Many other distributions of interest in statistics are defined over special homeomorphic images of Euclidean spaces, such as the Beta and Dirichlet (n-simplex), the norm-truncated Gaussian (n-ball), and the wrapped Cauchy and von Mises-Fisher (n-sphere). These find little applicability in variational inference with large-scale probabilistic models due to limitations related to density complexity and gradient computation [11, 12, 13, 14]. Many such distributions are unimodal, and generating complicated distributions from them would require creating mixture densities or using auxiliary random variables. Mixture methods require further knowledge or tuning (e.g. the number of mixture components needed) and in general place a heavy computational burden on gradient computation, e.g. with quantile functions [15]. Further, mode complexity increases only linearly with the number of mixture components, as opposed to exponentially with normalizing flows. Conditioning on auxiliary variables [16], on the other hand, constrains the use of the created distribution, due to the need to integrate out the auxiliary factors in certain scenarios. In all of these methods, computing low-variance gradients is difficult because the simulation of random variables cannot in general be reparameterized (e.g. rejection sampling [17]).
In this work, we present methods that generalize previous work on improving variational inference in $\mathbb{R}^n$ using normalizing flows to Riemannian manifolds of interest such as spheres $S^n$, tori $T^n$ and their product topologies with $\mathbb{R}^n$, like infinite cylinders.

Figure 1: Left: Construction of a complex density on $S^n$ by first projecting the manifold to $\mathbb{R}^n$, transforming the density and projecting it back to $S^n$. Right: Illustration of transformed ($S^2 \to \mathbb{R}^2$) densities corresponding to a uniform density on the sphere. Blue: empirical density (obtained by Monte Carlo); Red: analytical density from equation (4); Green: density computed ignoring the intrinsic dimensionality of $S^n$.

These special manifolds $\mathcal{M} \subset \mathbb{R}^m$ are homeomorphic to the Euclidean space $\mathbb{R}^n$, where $n$ is the dimensionality of the tangent space of $\mathcal{M}$ at each point, once a set of measure zero (for the sphere, a single point) is removed; removing such a set does not affect densities. A homeomorphism is a continuous function between topological spaces with a continuous inverse (bijective and bicontinuous); it maps points in one space to the other in a unique and continuous manner. An example manifold is the unit 2-sphere, the surface of the unit ball, which is embedded in $\mathbb{R}^3$ and, with one point removed, is homeomorphic to $\mathbb{R}^2$ (see Figure 1).

In normalizing flows, the main result used for computing the density updates is the change-of-variables relation $d\vec x = |\det J_\phi|\, d\vec u$, which relates the differentials (infinitesimal volumes) of two equidimensional Euclidean spaces through the Jacobian of the function $\phi: \mathbb{R}^n \to \mathbb{R}^n$ that transforms one space into the other. This result only applies to transforms that preserve dimensionality. Transforms between an embedded manifold and its intrinsic Euclidean space do not preserve the dimensionality of the points, so the result above no longer applies: the Jacobians of such transforms $\phi: \mathbb{R}^n \to \mathbb{R}^m$ with $m > n$ are rectangular ($m \times n$), and an infinitesimal cube in $\mathbb{R}^n$ maps to an infinitesimal parallelepiped on the manifold.
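The volume of that parallelepiped is the square root of the Gram determinant $\det(J_\phi^T J_\phi)$, which is the factor the density formulas for $S^n$ rely on. The following is a minimal, dependency-free sketch in Python, not the authors' implementation: it computes this factor by central finite differences (standing in for automatic differentiation) for the inverse stereographic transform $\phi: \mathbb{R}^n \to S^n$, $\phi(\vec u) = [2\vec u/(\vec u^T\vec u+1),\ 1 - 2/(\vec u^T\vec u+1)]$, compares it with the closed form $(2/(\vec u^T \vec u + 1))^n$, and pulls a uniform density on $S^2$ back to $\mathbb{R}^2$, checked against a small Monte Carlo experiment in the spirit of Figure 1 (right). All function names, the step size `eps` and the sample count are illustrative assumptions.

```python
import math
import random


def inverse_stereographic(u):
    """Map u in R^n to x on S^n in R^(n+1); the north pole is never reached."""
    s = sum(ui * ui for ui in u) + 1.0  # u^T u + 1
    return [2.0 * ui / s for ui in u] + [1.0 - 2.0 / s]


def stereographic(x):
    """Inverse of inverse_stereographic: map x on S^n (x[-1] != 1) back to R^n."""
    return [xi / (1.0 - x[-1]) for xi in x[:-1]]


def jacobian(f, u, eps=1e-6):
    """Central finite-difference Jacobian of f at u, as an m x n list of rows."""
    n, m = len(u), len(f(u))
    J = [[0.0] * n for _ in range(m)]
    for j in range(n):
        up, um = list(u), list(u)
        up[j] += eps
        um[j] -= eps
        fp, fm = f(up), f(um)
        for i in range(m):
            J[i][j] = (fp[i] - fm[i]) / (2.0 * eps)
    return J


def det(A):
    """Determinant of a square matrix via Gaussian elimination with partial pivoting."""
    A = [row[:] for row in A]
    n, d = len(A), 1.0
    for k in range(n):
        p = max(range(k, n), key=lambda r: abs(A[r][k]))
        if A[p][k] == 0.0:
            return 0.0
        if p != k:
            A[k], A[p] = A[p], A[k]
            d = -d
        d *= A[k][k]
        for r in range(k + 1, n):
            factor = A[r][k] / A[k][k]
            for c in range(k, n):
                A[r][c] -= factor * A[k][c]
    return d


def volume_factor(f, u):
    """sqrt(det G) with G = J^T J; the rectangular (m x n) J has no determinant itself."""
    J = jacobian(f, u)
    n = len(u)
    G = [[sum(row[a] * row[b] for row in J) for b in range(n)] for a in range(n)]
    return math.sqrt(det(G))


def closed_form_factor(u):
    """Closed-form sqrt(det G) for the inverse stereographic map: (2 / (u^T u + 1))^n."""
    return (2.0 / (sum(ui * ui for ui in u) + 1.0)) ** len(u)


def uniform_sphere_density_on_plane(u):
    """p(u) = f(phi(u)) * sqrt(det G) for the uniform density f = 1/(4*pi) on S^2."""
    return (1.0 / (4.0 * math.pi)) * closed_form_factor(u)


# Usage: the finite-difference and closed-form volume factors should agree.
u0 = [0.3, -1.2]
print(volume_factor(inverse_stereographic, u0), closed_form_factor(u0))

# Monte Carlo check: uniform samples on S^2 (normalized Gaussians) projected to R^2.
# Integrating p(u) over the disk |u| <= R gives R^2 / (R^2 + 1), i.e. 0.5 for R = 1.
rng = random.Random(0)
n_samples, hits = 20_000, 0
for _ in range(n_samples):
    g = [rng.gauss(0.0, 1.0) for _ in range(3)]
    norm = math.sqrt(sum(gi * gi for gi in g))
    u_s = stereographic([gi / norm for gi in g])
    hits += int(u_s[0] ** 2 + u_s[1] ** 2 <= 1.0)
mc_fraction = hits / n_samples
print(mc_fraction)
```

For $n = 2$, `jacobian(inverse_stereographic, u)` is a $3 \times 2$ matrix, so $|\det J_\phi|$ is not even defined there; only the $2 \times 2$ metric $G = J_\phi^T J_\phi$ has a determinant. The unit disk in $\mathbb{R}^2$ is the image of the southern hemisphere, which is why it carries exactly half of the probability mass.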
The relation between the volume of the infinitesimal cube in $\mathbb{R}^n$ and that of its image parallelepiped on the manifold is given by $d\vec x = \sqrt{\det G}\, d\vec u$, where $G = J_\phi^T J_\phi$ is the metric induced by the embedding $\phi$ on the tangent space $T_{\vec x}\mathcal{M}$ [18, 19, 20]. The correct formula for integrating a density over $\mathcal{M}$ now becomes:

$$\int_{\mathcal{M} \subset \mathbb{R}^m} f(\vec x)\, d\vec x = \int_{\mathbb{R}^n} (f \circ \phi)(\vec u) \sqrt{\det G}\, d\vec u = \int_{\mathbb{R}^n} (f \circ \phi)(\vec u) \sqrt{\det J_\phi^T J_\phi}\, d\vec u \qquad (1)$$

The density update going from the manifold to the Euclidean space, $\vec x \in \mathcal{M} \to \vec u \in \mathbb{R}^n$, is then given by:

$$p(\vec u) = (f \circ \phi)(\vec u) \sqrt{\det J_\phi^T J_\phi(\vec u)} = f(\vec x) \sqrt{\det J_\phi^T J_\phi\big(\phi^{-1}(\vec x)\big)} \qquad (2)$$

As an application of this method on the $n$-sphere $S^n$, we introduce the Inverse Stereographic Transform, defined as:

$$\phi: \mathbb{R}^n \to S^n \subset \mathbb{R}^{n+1}, \qquad \vec x = \phi(\vec u) = \begin{bmatrix} 2\vec u / (\vec u^T \vec u + 1) \\ 1 - 2 / (\vec u^T \vec u + 1) \end{bmatrix} \qquad (3)$$

which maps $\mathbb{R}^n$ bijectively and bicontinuously onto $S^n$ minus the north pole $(0, \dots, 0, 1)$, a single point of measure zero. The determinant of the metric $G(\vec u)$ associated with this transformation is given by:

$$\det G(\vec u) = \det J_\phi(\vec u)^T J_\phi(\vec u) = \left( \frac{2}{\vec u^T \vec u + 1} \right)^{2n} \qquad (4)$$

Using these formulae, on the left side of Figure 1, we map a uniform density on $S^2$ to $\mathbb{R}^2$, enrich this density (e.g. using normalizing flows), and then map it back onto $S^2$ to obtain a multi-modal (or arbitrarily complex) density on the original sphere. On the right side of Figure 1, we show that the density update based on the Riemannian metric, i.e. $\sqrt{\det J_\phi^T J_\phi}$ (red), is correct and closely follows the kernel density estimate based on 500k samples (blue). We also show that using the generic volume transformation formula for dimensionality-preserving transforms, i.e. $|\det J_\phi|$ (green), leads to an erroneous density that does not resemble the empirical distribution of samples after the transformation.

References

[1] D. J. Rezende, S. Mohamed, and D. Wierstra. Stochastic backpropagation and approximate inference in deep generative models. In ICML, 2014.
[2] D. P. Kingma and M. Welling. Auto-encoding variational Bayes. In ICLR, 2014.
[3] Karol Gregor, Ivo Danihelka, Alex Graves, Danilo Jimenez Rezende, and Daan Wierstra. DRAW: A recurrent neural network for image generation. In ICML, 2015.
[4] S. M. Eslami, Nicolas Heess, Theophane Weber, Yuval Tassa, Koray Kavukcuoglu, and Geoffrey E. Hinton. Attend, infer, repeat: Fast scene understanding with generative models. arXiv preprint arXiv:1603.08575, 2016.
[5] Danilo Jimenez Rezende, Shakir Mohamed, Ivo Danihelka, Karol Gregor, and Daan Wierstra. One-shot generalization in deep generative models. In ICML, 2016.
[6] Matthew D. Hoffman, David M. Blei, Chong Wang, and John Paisley. Stochastic variational inference. Journal of Machine Learning Research, 14:1303–1347, 2013.
[7] Danilo Jimenez Rezende and Shakir Mohamed. Variational inference with normalizing flows. arXiv preprint arXiv:1505.05770, 2015.
[8] Diederik P. Kingma, Tim Salimans, and Max Welling. Improving variational inference with inverse autoregressive flow. CoRR, abs/1606.04934, 2016.
[9] Laurent Dinh, Jascha Sohl-Dickstein, and Samy Bengio. Density estimation using Real NVP. 2016.
[10] Tim Salimans, Diederik P. Kingma, and Max Welling. Markov chain Monte Carlo and variational inference: Bridging the gap. In Francis R. Bach and David M. Blei, editors, ICML, volume 37 of JMLR Workshop and Conference Proceedings, pages 1218–1226. JMLR.org, 2015.
[11] Arindam Banerjee, Inderjit S. Dhillon, Joydeep Ghosh, and Suvrit Sra. Clustering on the unit hypersphere using von Mises-Fisher distributions. J. Mach. Learn. Res., 6:1345–1382, December 2005.
[12] Siddharth Gopal and Yiming Yang. Von Mises-Fisher clustering models. In Tony Jebara and Eric P. Xing, editors, Proceedings of the 31st International Conference on Machine Learning (ICML-14), pages 154–162. JMLR Workshop and Conference Proceedings, 2014.
[13] Marco Fraccaro, Ulrich Paquet, and Ole Winther. Indexable probabilistic matrix factorization for maximum inner product search. In Proceedings of the Thirtieth AAAI Conference on Artificial Intelligence, February 12-17, 2016, Phoenix, Arizona, USA, pages 1554–1560, 2016.
[14] Arindam Banerjee, Inderjit Dhillon, Joydeep Ghosh, and Suvrit Sra. Generative model-based clustering of directional data. In Proceedings of the Ninth ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, KDD '03, pages 19–28, New York, NY, USA, 2003. ACM.
[15] Alex Graves. Stochastic backpropagation through mixture density distributions. CoRR, abs/1607.05690, 2016.
[16] Lars Maaloe, Casper Kaae Sonderby, Soren Kaae Sonderby, and Ole Winther. Auxiliary deep generative models. CoRR, abs/1602.05473, 2016.
[17] Christian A. Naesseth, Francisco J. R. Ruiz, Scott W. Linderman, and David M. Blei. Rejection sampling variational inference. 2016.
[18] Adi Ben-Israel. The change-of-variables formula using matrix volume. SIAM Journal on Matrix Analysis and Applications, 21(1):300–312, 1999.
[19] Adi Ben-Israel. An application of the matrix volume in probability. Linear Algebra and its Applications, 321(1):9–25, 2000.
[20] Marcel Berger and Bernard Gostiaux. Differential Geometry: Manifolds, Curves, and Surfaces, volume 115. Springer Science & Business Media, 2012.