Ways of Conditioning Generative Adversarial Networks


Authors: Hanock Kwak, Byoung-Tak Zhang

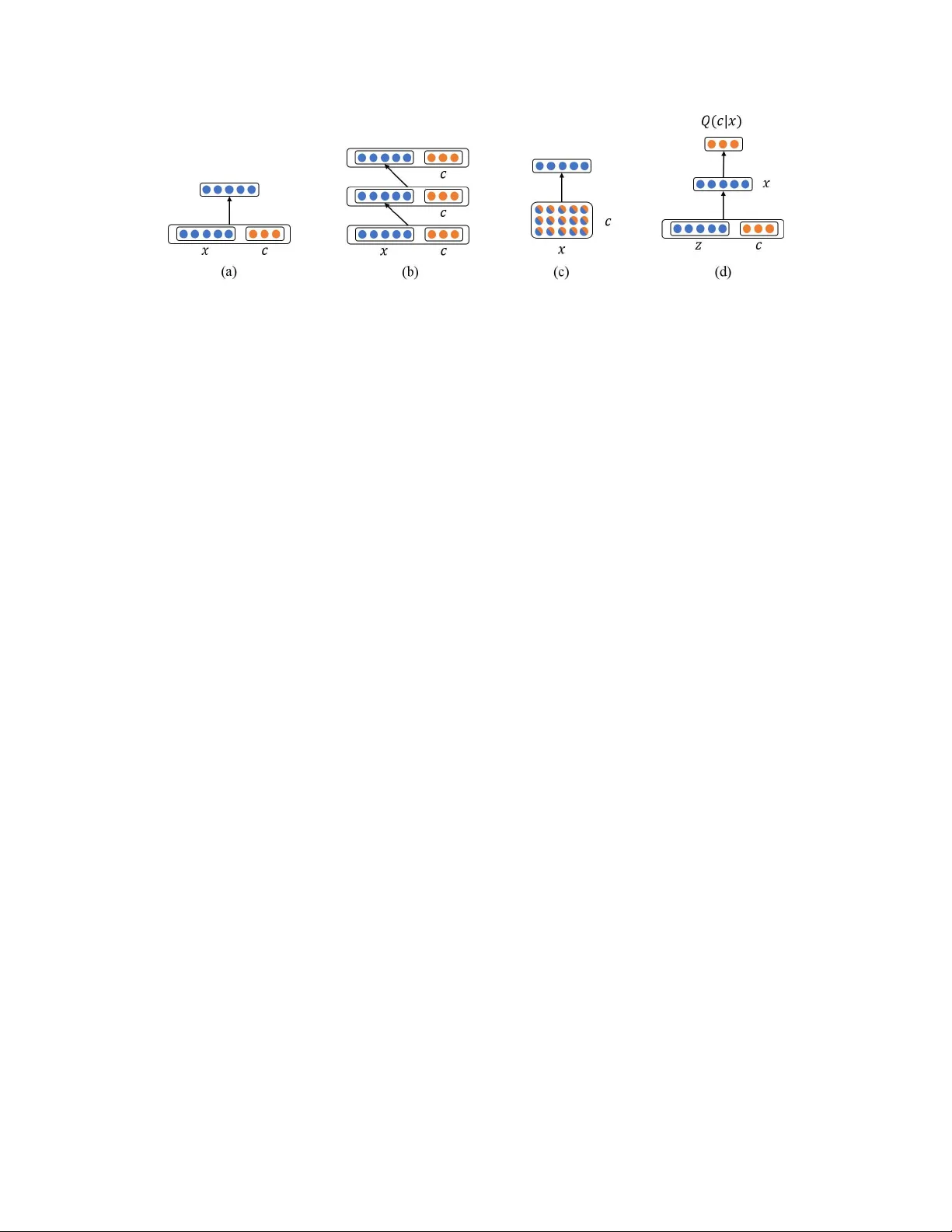

School of Computer Science and Engineering, Seoul National University, Seoul 151-744, Korea
{hnkwak, btzhang}@bi.snu.ac.kr

Abstract

GANs are generative models whose random samples realistically reflect natural images. They can also generate samples with specific attributes by concatenating a condition vector into the input, yet this area has not been well studied. We propose novel methods of conditioning generative adversarial networks (GANs) that achieve state-of-the-art results on MNIST and CIFAR-10. We mainly introduce two models: an information retrieving model that extracts conditional information from the samples, and a spatial bilinear pooling model that forms bilinear features derived from the spatial cross product of an image and a condition vector. These methods significantly enhance the log-likelihood of test data under the conditional distributions compared to concatenation-based methods.

1 Introduction

The goal of a generative model is to learn the underlying probability distribution of unlabeled data by disentangling the explanatory factors in the data [1, 2]. Such models are applicable to tasks such as classification, regression, visualization, and policy learning in reinforcement learning. The GAN [3] is a prominent generative model that has proven useful for realistic sample generation [4], semi-supervised learning [5], super-resolution [6], text-to-image synthesis [7], and image inpainting [8]. The potential of the GAN is supported by the theoretical result that, if the model has enough capacity, the learned distribution can converge to the real data distribution [3]. The representational power of the GAN was greatly enhanced by deep learning techniques [4], and various methods [5, 9] were introduced to stabilize the learning process.
Since the conditional GAN [10] was first introduced to generate samples of specific labels from a single generator, it has received little further study despite its practical use. For example, it was used to generate images from descriptive sentences [7] or attribute vectors [11]. Conditioned GANs learn a conditional probability distribution in which the condition can be any kind of auxiliary information describing the data. Mostly, this has been modeled by concatenating condition vectors into some layers of the generator and discriminator. Even though this forms a joint representation, it is hard for it to fully capture the complex associations between the two different modalities [12].

We introduce a small variant of bilinear pooling that provides multiplicative interaction between all elements of two vectors. To obtain a computationally efficient and spatially sensible bilinear operation between an image (an order-3 tensor) and a vector, we compute the cross product over the last dimension of the image, resulting in a new image with more channels.

If there were an oracle that could perfectly extract conditional information from any sample, we could train a GAN to generate samples from which the oracle recovers the given conditions. This is the information retrieving model, in which a pre-trained model plays the role of the oracle. In addition to the GAN objective, it maximizes a lower bound of the mutual information between a given condition and the extracted condition. This idea originates from infoGAN [13], an information-theoretic extension of the GAN that is able to learn disentangled representations in a completely unsupervised manner.

30th Conference on Neural Information Processing Systems (NIPS 2016), Barcelona, Spain.

2 Related Work

The conditional GAN [10] concatenates the condition vector into the input of both the generator and the discriminator.
Variants of this method have been successfully applied in [7, 11, 14]. [7] obtained visually discriminative vector representations of text descriptions and then concatenated them into every layer of the discriminator and into the noise vector of the generator. [11] used a similar method to generate face images from binary attribute vectors describing, for example, hair styles and face shapes. In [14], Structure-GAN generates surface normal maps, which are then concatenated into the noise vector of Style-GAN to put styles on those maps.

Spatial bilinear pooling was mainly inspired by studies on multimodal learning [15]. The key question in multimodal learning is how a model can uncover the correlated interaction of two vectors from different domains. To achieve this, various methods (vector concatenation [16], element-wise operations [17], factorized restricted Boltzmann machines (RBMs) [15], bilinear pooling [12, 18], etc.) have been proposed for numerous challenging tasks. RBM-based models require expensive MCMC sampling, which makes them difficult to scale to large datasets. Bilinear pooling is more expressive than vector concatenation or element-wise operations, but it is inefficient due to its quadratic complexity $O(n^2)$. To solve this problem, [19] reduced the space and time complexity of bilinear features using Tensor Sketch [20].

The information retrieving model uses the core algorithm of infoGAN [13], which recovers disentangled representations by maximizing the mutual information for inducing latent codes. In infoGAN, the input noise vector is decomposed into a source of incompressible noise and the latent code, and an auxiliary output of the discriminator retrieves the latent codes. InfoGAN uses Variational Information Maximization [21] to deal with the intractability of the mutual information.
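The variational bound that infoGAN [13] borrows from [21] can be derived in a few lines. In the derivation below, $Q(c \mid x)$ is any auxiliary distribution used in place of the intractable posterior $p(c \mid x)$. The notation is ours, chosen to match the rest of the paper.

$$
\begin{aligned}
I(c; x) &= H(c) - H(c \mid x) \\
&= H(c) + \mathbb{E}_{x}\, \mathbb{E}_{c \sim p(c \mid x)}[\log p(c \mid x)] \\
&= H(c) + \mathbb{E}_{x}\Big[\mathrm{KL}\big(p(\cdot \mid x) \,\|\, Q(\cdot \mid x)\big) + \mathbb{E}_{c \sim p(c \mid x)}[\log Q(c \mid x)]\Big] \\
&\ge H(c) + \mathbb{E}_{c,\, x}[\log Q(c \mid x)].
\end{aligned}
$$

Because the KL term is non-negative, the bound is tight when $Q$ equals the true posterior. When $H(c)$ is fixed, increasing $\mathbb{E}[\log Q(c \mid x)]$ raises this lower bound on $I(c; x)$.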
Unlike infoGAN, which randomly generates latent codes, we explicitly put condition information in the latent codes.

3 Model

Figure 1: Illustration of the four conditioned GANs: (a) CGAN, (b) FCGAN, (c) SBP, and (d) IRGAN. For simplicity of visualization, only a single pixel of $x$ is shown, virtually consisting of 5 channels.

3.1 Generative Adversarial Networks

The GAN [3] has two networks: a generator $G$ that tries to generate real data given noise $z \sim p_z(z)$, and a discriminator $D \in [0, 1]$ that classifies real data $x \sim p_{data}(x)$ and fake data $G(z)$. $D(x)$ represents the probability of $x$ being real data. The objective of $G$ is to fit the true data distribution by deceiving $D$, which is expressed as the following minimax game:

$$\min_{\theta_G} \max_{\theta_D} \; \mathbb{E}_{x \sim p_{data}(x)}[\log D(x)] + \mathbb{E}_{z \sim p_z(z)}[\log(1 - D(G(z)))], \tag{1}$$

where $\theta_G$ and $\theta_D$ are the parameters of $G$ and $D$, respectively. The parameters are updated by stochastic gradient descent (or ascent) algorithms.

3.2 Conditioned GAN

The objective of conditioned GANs is to fit the conditional probability distribution $p(x \mid c)$, where $c$ is a condition that describes $x$, learning it correctly from a labeled dataset $(x_1, c_1), (x_2, c_2), \ldots, (x_n, c_n)$. The generator $G(z, c)$ of a conditioned GAN has an additional input $c$, and all generators used in our experiments take $[z, c]$ as input, where $[\ldots]$ denotes vector concatenation. We can add $c$ to the input of the discriminator, $D(x, c)$, as well, or add regularization terms to guide the generator. If we use $D(x, c)$, the objective of the conditioned GAN is to optimize Equation 1 for each $c$:

$$\min_{\theta_G} \max_{\theta_D} \; \mathbb{E}_{c \sim p_{data}(c)} \Big[ \mathbb{E}_{x \sim p_{data}(x \mid c)}[\log D(x, c)] + \mathbb{E}_{z \sim p_z(z)}[\log(1 - D(G(z, c), c))] \Big], \tag{2}$$

where $D(x, c)$ represents the probability of $x$ being real data from condition $c$.
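Because each fake sample in Equation 2 is paired with a condition drawn from the data, the nested expectations can be estimated by plain minibatch averages over $(x, c)$ and $(G(z, c), c)$ pairs. The sketch below is an illustrative plain-Python estimator that takes discriminator probabilities as input. The function name `conditional_gan_value` and the `eps` clipping are our own assumptions, not part of the paper. $D$ is updated to increase this quantity and $G$ to decrease it; only the second term depends on $\theta_G$.

```python
import math
from typing import Sequence


def conditional_gan_value(d_real: Sequence[float], d_fake: Sequence[float],
                          eps: float = 1e-12) -> float:
    """Minibatch Monte Carlo estimate of the value in Eq. (2).

    d_real[i] = D(x_i, c_i) for a real pair from the dataset, so c_i ~ p_data(c)
    and x_i ~ p_data(x | c_i).
    d_fake[j] = D(G(z_j, c_j), c_j) with z_j ~ p_z(z) and c_j ~ p_data(c).
    eps clips probabilities to avoid log(0).
    """
    real_term = sum(math.log(max(p, eps)) for p in d_real) / len(d_real)
    fake_term = sum(math.log(max(1.0 - p, eps)) for p in d_fake) / len(d_fake)
    return real_term + fake_term


# A discriminator that cannot tell real from fake (D = 0.5 everywhere):
print(round(conditional_gan_value([0.5] * 4, [0.5] * 4), 6))  # -1.386294 (= -log 4)

# A discriminator that separates real from fake well scores closer to 0.
print(conditional_gan_value([0.9, 0.8], [0.1, 0.2]) > -math.log(4))  # True
```

The value -log 4 for an undecided discriminator matches the value of Equation 1 at its global optimum, where the generator distribution equals the data distribution [3].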
We can also train $G(z, c)$ to fit $p(x \mid c)$ by adding a regularization term $R(G)$ to the objective function:

$$\min_{\theta_G} \max_{\theta_D} \; \mathbb{E}_{x \sim p_{data}(x)}[\log D(x)] + \mathbb{E}_{z \sim p_z(z),\, c \sim p_{data}(c)}[\log(1 - D(G(z, c)))] + R(G). \tag{3}$$

3.3 Concatenation Methods

We compare our proposed models with two commonly used conditioned GANs. The first is the conditional GAN [10] (CGAN), which concatenates $c$ to the input $x$ of $D$, and the second is a fully conditional GAN (FCGAN), which concatenates $c$ to every layer of $D$, including $x$. When a layer in $D$ has dimension $n \times n \times d$, where $d$ is the depth, we replicate the vector $c$ spatially to match the $n \times n$ size of the feature map and perform a depth concatenation. All models, including ours, use the same structure for $G(z, c)$, where $c$ is only concatenated to $z$.

3.4 Spatial Bilinear Pooling

We propose Spatial Bilinear Pooling (SBP), which provides multiplicative interaction between all elements of two vectors. When an image has dimension $n \times n \times d$, SBP computes the cross (outer) product of each pixel ($1 \times 1 \times d$) of the image with $c$ and then gathers the resulting vectors spatially to form a new image.

3.5 Information Retrieving GAN

The Information Retrieving GAN (IRGAN) has an approximator $Q(c \mid x)$ that estimates $p(c \mid x)$ from $x$. The generator $G(z, c)$ of IRGAN maximizes $Q(c \mid G(z, c))$, thereby fitting the conditional distribution $p(x \mid c)$. In our experiments, the approximators are pre-trained classifiers for MNIST and CIFAR-10. IRGAN optimizes Equation 3, where $R(G)$ is defined by the lower bound [13] of the mutual information $I(c; G(z, c))$, weighted by $\lambda$:

$$R(G) = -\lambda \, \mathbb{E}_{c \sim p_{data}(c),\, x \sim G(z, c)}[\log Q(c \mid x)]. \tag{4}$$

In this paper we assume that the entropy $H(c)$ is constant and omit it from $R(G)$ for simplicity; since $c$ is drawn from the data distribution, $H(c)$ does not depend on $G$.
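The three conditioning mechanisms of Sections 3.3 to 3.5 can be compared side by side in a minimal plain-Python sketch, with no deep-learning framework. Several details are illustrative assumptions rather than specifics from the paper:

- Feature maps are $n \times n \times d$ nested lists in channels-last order, and $c$ is a length-$k$ vector.
- The function names are our own.
- The SBP output channel ordering is our own choice.
- Labels for $Q$ are encoded as integer class indices.

In CGAN, `tile_and_concat` would be applied only to the input $x$ of $D$; in FCGAN, it would be applied to every layer.

```python
import math
from typing import List, Sequence

Image = List[List[List[float]]]  # n x n x d nested lists, channels last


def tile_and_concat(fmap: Image, c: Sequence[float]) -> Image:
    """CGAN/FCGAN conditioning: replicate c at every pixel and append it along depth.
    n x n x d  ->  n x n x (d + k), where k = len(c)."""
    return [[list(pixel) + list(c) for pixel in row] for row in fmap]


def spatial_bilinear_pool(fmap: Image, c: Sequence[float]) -> Image:
    """SBP: outer product of each pixel's depth vector with c.
    n x n x d  ->  n x n x (d * k); output channel i * k + j holds pixel[i] * c[j]."""
    return [[[p_i * c_j for p_i in pixel for c_j in c] for pixel in row] for row in fmap]


def irgan_regularizer(q_probs: Sequence[Sequence[float]], labels: Sequence[int],
                      lam: float = 1.0, eps: float = 1e-12) -> float:
    """Minibatch estimate of Eq. (4): R(G) = -lam * mean_i log Q(c_i | x_i).

    q_probs[i]: class probabilities that a pre-trained classifier Q assigns to the
    generated sample x_i = G(z_i, c_i); labels[i]: the condition c_i (drawn from
    the data) as an integer class index. eps guards against log(0)."""
    total = sum(math.log(max(probs[label], eps)) for probs, label in zip(q_probs, labels))
    return -lam * total / len(labels)


fmap = [[[1.0, 2.0], [0.5, -1.0]],
        [[0.0, 3.0], [4.0, 1.0]]]   # 2 x 2 x 2 feature map
c = [0.0, 1.0, 0.0]                  # one-hot condition, k = 3

print(tile_and_concat(fmap, c)[0][0])        # [1.0, 2.0, 0.0, 1.0, 0.0]
print(spatial_bilinear_pool(fmap, c)[0][0])  # [0.0, 1.0, 0.0, 0.0, 2.0, 0.0]

# Two generated samples (labels 1 and 0); Q is undecided (0.5 / 0.5) on both.
print(round(irgan_regularizer([[0.5, 0.5], [0.5, 0.5]], [1, 0]), 6))  # 0.693147
```

The two conditioning operations grow the depth differently:

- Concatenation grows the depth additively ($d + k$).
- SBP grows it multiplicatively ($d \cdot k$). With a one-hot $c$, SBP keeps each pixel's features only in the channels paired with the active label and zeros the rest.

Minimizing $R(G)$ rewards samples that $Q$ assigns to their own condition. A classifier that is undecided between two labels gives $R(G) = \lambda \log 2$.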
The only difference from [13] is that $c$ is sampled from the data distribution rather than a predefined distribution, since the conditions $c$ are given explicitly by the datasets.

4 Experiments

We trained conditioned GANs on MNIST and CIFAR-10 using the techniques proposed for DCGAN [4]. All generators have an identical structure; the variations exist only in the discriminators and the auxiliary networks. We measured the log-likelihood of the test set data for each $c$ by fitting a Gaussian Parzen window to the samples generated from $G(z, c)$ [3]. The standard deviation $\sigma$ of the Gaussian was chosen on the validation set. The results are shown in Tables 1 and 2.

Table 1: Parzen window-based log-likelihood estimates on MNIST (columns are digit labels).

| Model | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 |
|---|---|---|---|---|---|---|---|---|---|---|
| CGAN | 124.7 | 358.0 | 35.7 | 56.9 | 106.7 | -10.6 | 113.7 | 183.5 | 53.1 | 166.2 |
| FCGAN | 28.7 | 531.4 | -11.9 | 52.8 | 101.1 | 51.7 | 81.6 | 221.0 | 20.0 | 187.1 |
| SBP | 153.0 | 643.7 | 81.6 | 124.6 | 173.2 | 121.4 | 197.2 | 287.5 | 83.1 | 248.7 |
| IRGAN | 154.0 | 749.7 | 68.7 | 137.3 | 208.8 | 130.8 | 214.8 | 296.5 | 97.7 | 268.4 |

Table 2: Parzen window-based log-likelihood estimates on CIFAR-10 (columns are class labels).

| Model | airplane | car | bird | cat | deer | dog | frog | horse | ship | truck |
|---|---|---|---|---|---|---|---|---|---|---|
| CGAN | 684.0 | 417.4 | 969.5 | 603.8 | 1064.5 | 547.8 | 929.9 | 554.9 | 771.1 | 373.5 |
| FCGAN | 760.2 | 384.7 | 1018.9 | 566.3 | 1099.5 | 538.5 | 985.9 | 558.5 | 793.2 | 383.7 |
| SBP | 847.7 | 471.3 | 1057.2 | 625.9 | 1180.9 | 577.1 | 974.7 | 606.8 | 872.9 | 460.6 |
| IRGAN | 721.8 | 391.1 | 1038.0 | 561.1 | 1027.9 | 586.4 | 831.4 | 509.0 | 744.4 | 365.9 |

IRGAN showed the best results on MNIST, with the highest estimate for 9 of the 10 labels. It did not perform well on CIFAR-10 because its classifier $Q(c \mid x)$ was inaccurate, reaching only 81% classification accuracy. SBP showed stable results on both datasets, outperforming CGAN and FCGAN on every label except frog on CIFAR-10.

5 Conclusions and Future Work

In this paper we proposed effective models that can fit the conditional distribution $p(x \mid c)$ more accurately.
However, more experiments on other complex conditions, such as multi-labels, text descriptions, and style embeddings, are needed to verify our models. Since the cross product of two long vectors is inefficient, compact bilinear pooling [19] is a promising alternative to spatial bilinear pooling when conditions are high-dimensional vectors.

References

[1] Yoshua Bengio. Learning deep architectures for AI. Foundations and Trends in Machine Learning, 2(1):1-127, 2009.

[2] L. Theis, A. van den Oord, and M. Bethge. A note on the evaluation of generative models. In Proceedings of the International Conference on Learning Representations (ICLR), 2016.

[3] Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. Generative adversarial nets. In Advances in Neural Information Processing Systems, pages 2672-2680, 2014.

[4] Alec Radford, Luke Metz, and Soumith Chintala. Unsupervised representation learning with deep convolutional generative adversarial networks. In Proceedings of the International Conference on Learning Representations (ICLR), 2015.

[5] Tim Salimans, Ian Goodfellow, Wojciech Zaremba, Vicki Cheung, Alec Radford, and Xi Chen. Improved techniques for training GANs. arXiv preprint arXiv:1606.03498, 2016.

[6] Christian Ledig, Lucas Theis, Ferenc Huszár, Jose Caballero, Andrew Aitken, Alykhan Tejani, Johannes Totz, Zehan Wang, and Wenzhe Shi. Photo-realistic single image super-resolution using a generative adversarial network. arXiv preprint arXiv:1609.04802, 2016.

[7] Scott Reed, Zeynep Akata, Xinchen Yan, Lajanugen Logeswaran, Bernt Schiele, and Honglak Lee. Generative adversarial text to image synthesis. In International Conference on Machine Learning (ICML), 2016.

[8] Deepak Pathak, Philipp Krähenbühl, Jeff Donahue, Trevor Darrell, and Alexei Efros. Context encoders: Feature learning by inpainting. In CVPR, 2016.

[9] Junbo Zhao, Michael Mathieu, and Yann LeCun. Energy-based generative adversarial network. arXiv preprint arXiv:1609.03126, 2016.

[10] Mehdi Mirza and Simon Osindero. Conditional generative adversarial nets. arXiv preprint arXiv:1411.1784, 2014.

[11] Anders Boesen Lindbo Larsen, Søren Kaae Sønderby, Hugo Larochelle, and Ole Winther. Autoencoding beyond pixels using a learned similarity metric. In Proceedings of the 33rd International Conference on Machine Learning, ICML 2016, New York City, NY, USA, June 19-24, 2016, pages 1558-1566, 2016.

[12] Dong Huk Park, Daylen Yang, Akira Fukui, Anna Rohrbach, Trevor Darrell, and Marcus Rohrbach. Multimodal compact bilinear pooling for visual question answering and visual grounding. In Conference on Empirical Methods in Natural Language Processing, 2016.

[13] Xi Chen, Yan Duan, Rein Houthooft, John Schulman, Ilya Sutskever, and Pieter Abbeel. InfoGAN: Interpretable representation learning by information maximizing generative adversarial nets. arXiv preprint arXiv:1606.03657, 2016.

[14] Xiaolong Wang and Abhinav Gupta. Generative image modeling using style and structure adversarial networks. In Computer Vision - ECCV 2016 - 14th European Conference, Amsterdam, The Netherlands, October 11-14, 2016, Proceedings, Part IV, pages 318-335, 2016.

[15] Jiquan Ngiam, Aditya Khosla, Mingyu Kim, Juhan Nam, Honglak Lee, and Andrew Y. Ng. Multimodal deep learning. In Proceedings of the 28th International Conference on Machine Learning (ICML-11), pages 689-696, 2011.

[16] Bolei Zhou, Yuandong Tian, Sainbayar Sukhbaatar, Arthur Szlam, and Rob Fergus. Simple baseline for visual question answering. arXiv preprint arXiv:1512.02167, 2015.

[17] Zichao Yang, Xiaodong He, Jianfeng Gao, Li Deng, and Alex Smola. Stacked attention networks for image question answering. arXiv preprint arXiv:1511.02274, 2015.

[18] Tsung-Yu Lin, Aruni RoyChowdhury, and Subhransu Maji. Bilinear CNN models for fine-grained visual recognition. In International Conference on Computer Vision (ICCV), 2015.

[19] Yang Gao, Oscar Beijbom, Ning Zhang, and Trevor Darrell. Compact bilinear pooling. In CVPR, 2016.

[20] Ninh Pham and Rasmus Pagh. Fast and scalable polynomial kernels via explicit feature maps. In Proceedings of the 19th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pages 239-247. ACM, 2013.

[21] David Barber and Felix Agakov. The IM algorithm: a variational approach to information maximization. In Advances in Neural Information Processing Systems 16: Proceedings of the 2003 Conference, volume 16, page 201. MIT Press, 2004.
