The Intelligent Voice 2016 Speaker Recognition System

This paper presents the Intelligent Voice (IV) system submitted to the NIST 2016 Speaker Recognition Evaluation (SRE). The primary emphasis of SRE this year was on developing speaker recognition technology which is robust for novel languages that are…

Authors: Abbas Khosravani, Cornelius Glackin, Nazim Dugan

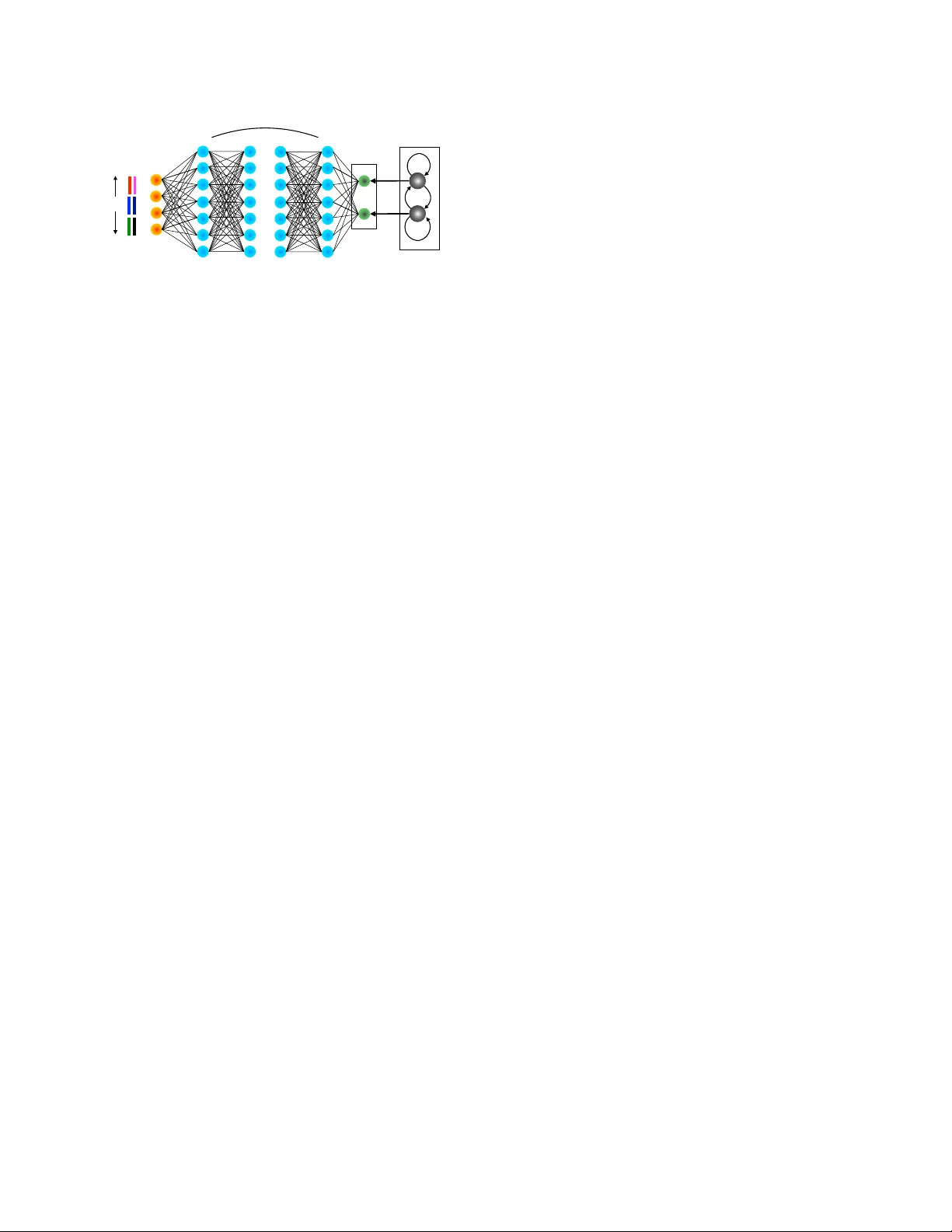

Abbas Khosravani, Cornelius Glackin, Nazim Dugan, Gérard Chollet, Nigel Cannings
Intelligent Voice Limited, St Clare House, 30-33 Minories, EC3N 1BP, London, UK

ABSTRACT

This paper presents the Intelligent Voice (IV) system submitted to the NIST 2016 Speaker Recognition Evaluation (SRE). The primary emphasis of SRE this year was on developing speaker recognition technology which is robust for novel languages that are much more heterogeneous than those used in the current state-of-the-art, using significantly less training data, that does not contain meta-data from those languages. The system is based on the state-of-the-art i-vector/PLDA which is developed on the fixed training condition, and the results are reported on the protocol defined on the development set of the challenge.

Index Terms: Speaker Recognition, Speech Processing

1. INTRODUCTION

Compared to previous years, the 2016 NIST speaker recognition evaluation (SRE) marked a major shift from English towards Austronesian and Chinese languages. The task, like previous years, is to perform speaker detection with the focus on telephone speech data recorded over a variety of handset types. The main challenges introduced in this evaluation are duration and language variability. The potential variation of languages addressed in this evaluation, the recording environment, and the variability of test segment duration influenced the design of our system. Our goal was to utilize recent advances in language normalization, domain adaptation, speech activity detection and session compensation techniques to mitigate the adverse bias introduced in this year's evaluation.

Over recent years, the i-vector representation of speech segments has been widely used by state-of-the-art speaker recognition systems [3].
The speaker recognition technology based on i-vectors currently dominates the research field due to its performance, low computational cost and the compatibility of i-vectors with machine learning techniques. This dominance is reflected by the recent NIST i-vector machine learning challenge [7], which was designed to find the most promising algorithmic approaches to speaker recognition specifically on the basis of i-vectors [11, 18, 23, 12]. The outstanding ability of DNNs for frame alignment, which has achieved remarkable performance in text-independent speaker recognition for English data [13, 9], failed to provide even comparable recognition performance to the traditional GMM. Therefore, we concentrated on the cepstral based GMM/i-vector system.

In this paper we outline the Intelligent Voice system, the techniques used, and the results obtained on the SRE 2016 development set that will mirror the evaluation condition, as well as the timing report. Section 2 describes the data used for the system training. The front-end and back-end processing of the system are presented in Sections 3 and 4 respectively. In Section 5, we describe the experimental evaluation of the system on the SRE 2016 development set. Finally, we present a timing analysis of the system in Section 6.

2. TRAINING CONDITION

The fixed training condition is used to build our speaker recognition system. Only conversational telephone speech data from datasets released through the Linguistic Data Consortium (LDC) have been used, including NIST SRE 2004-2010 and the Switchboard corpora (Switchboard Cellular Parts I and II, Switchboard2 Phase I, II and III), for different steps of system training. A more detailed description of the data used in the system training is presented in Table 1. We have also included the unlabelled set of 2472 telephone calls from both minor (Cebuano and Mandarin) and major (Tagalog and Cantonese) languages provided by NIST in the system training.
We will indicate when and how we used this set in the training in the following sections.

Table 1. The description of the data used for training the speaker recognition system.

| | #Langs | #Spks (Male) | #Spks (Female) | #Segs (Male) | #Segs (Female) |
|---|---|---|---|---|---|
| English | 1 | 1925 | 2603 | 19556 | 25835 |
| non-English | 34 | 274 | 489 | 1428 | 2657 |

3. FRONT-END PROCESSING

In this section we provide a description of the main steps in the front-end processing of our speaker recognition system, including speech activity detection, acoustic feature extraction and i-vector feature extraction.

Fig. 1. The architecture of our DNN-HMM speech activity detection. (Diagram: stacked filter-bank input of dimension 600, six hidden layers of 512 units, a softmax output layer, and an HMM with speech and pause states.)

3.1. Speech Activity Detection

The first stage of any speaker recognition system is to detect the speech content in an audio signal. An accurate speech activity detector (SAD) can improve the speaker recognition performance. Several techniques have been proposed for SAD, including unsupervised methods based on thresholding the signal energy, and supervised methods that train a speech/non-speech classifier such as support vector machines (SVM) [16] and Gaussian mixture models (GMMs) [17]. Hidden Markov models (HMMs) [19] have also been successful. Recently, it has been shown that DNN systems achieve impressive improvements in performance, especially at low signal to noise ratios (SNRs) [21]. In our work we have utilized a two-class DNN-HMM classifier to perform this task. The DNN-HMM hybrid configuration, with cross-entropy as the objective function, has been trained with the back-propagation algorithm. The softmax layer produces posterior probabilities for speech and non-speech, which were then converted into log-likelihoods. Using 2-state HMMs corresponding to speech and non-speech, frame-wise decisions are made by Viterbi decoding. As input to the network, we fed 40-dimensional filter-bank features along with 7 frames from each side. The network has 6 hidden layers with 512 units each.
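
As a rough illustration of the input splicing and frame-wise decoding described above, the following Python sketch stacks each 40-dimensional frame with 7 frames of context on each side (giving 15 × 40 = 600 inputs) and runs Viterbi decoding over a 2-state HMM. It is a minimal sketch and not the Kaldi-based implementation used in the paper: the edge padding, the division of posteriors by class priors, the uniform initial state distribution and the self-loop probability of 0.99 are all assumptions made for illustration.

```python
import numpy as np


def splice_frames(feats, context=7):
    """Stack each frame with `context` frames on each side (edges padded by repetition)."""
    num_frames = feats.shape[0]
    padded = np.pad(feats, ((context, context), (0, 0)), mode="edge")
    # Block k of the output holds the frame at relative offset k - context.
    return np.hstack([padded[k:k + num_frames] for k in range(2 * context + 1)])


def posteriors_to_loglikes(posteriors, priors):
    """Scaled log-likelihoods from DNN posteriors (assumed: divide by class priors)."""
    return np.log(posteriors) - np.log(priors)


def viterbi_two_state(log_likes, self_loop=0.99):
    """Most likely state path for a 2-state HMM (0 = non-speech, 1 = speech)."""
    num_frames = log_likes.shape[0]
    # Hypothetical transition matrix: a high self-loop probability smooths decisions.
    log_trans = np.log(np.array([[self_loop, 1.0 - self_loop],
                                 [1.0 - self_loop, self_loop]]))
    delta = np.full((num_frames, 2), -np.inf)
    backptr = np.zeros((num_frames, 2), dtype=int)
    delta[0] = np.log(0.5) + log_likes[0]
    for t in range(1, num_frames):
        # cand[i, j]: best score ending in state i at t-1, then moving to state j.
        cand = delta[t - 1][:, None] + log_trans
        backptr[t] = np.argmax(cand, axis=0)
        delta[t] = cand[backptr[t], [0, 1]] + log_likes[t]
    # Trace the best path backwards from the final frame.
    path = np.zeros(num_frames, dtype=int)
    path[-1] = int(np.argmax(delta[-1]))
    for t in range(num_frames - 1, 0, -1):
        path[t - 1] = backptr[t, path[t]]
    return path
```

With a high self-loop probability, an isolated frame that weakly favours the other class is absorbed into the surrounding segment, which is the smoothing effect one expects from HMM decoding over raw frame-wise posteriors.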

The architecture of our DNN-HMM SAD is shown in Figure 1. Approximately 100 hours of speech data from the Switchboard telephony data, with word alignments as ground-truth, were used to train our SAD. The DNN training is performed on an NVIDIA TITAN X GPU, using the Kaldi software [20]. Evaluated on 50 hours of telephone speech data from the same database, our DNN-HMM SAD indicated a frame-level misclassification (speech/non-speech) rate of 5.9%, whereas an energy-based SAD did not perform better than 20%.

3.2. Acoustic Features

For acoustic features we have experimented with different configurations of cepstral features. We have used 39-dimensional PLP features and 60-dimensional MFCC features (including their first and second order derivatives) as acoustic features. Moreover, our experiments indicated that the combination of these two feature sets performs particularly well in score fusion. Both PLP and MFCC are extracted at 8 kHz sample frequency using Kaldi [20] with 25 and 20 ms frame lengths, respectively, and a 10 ms overlap (other configurations are the same as the Kaldi defaults). For each utterance, the features are centered using a short-term (3 s window) cepstral mean and variance normalization (ST-CMVN). Finally, we employed our DNN-HMM speech activity detector (SAD) to drop non-speech frames.

3.3. i-Vector Features

Since the introduction of i-vectors in [3], the speaker recognition community has seen a significant increase in recognition performance. i-Vectors are low-dimensional representations of Baum-Welch statistics obtained with respect to a GMM, referred to as the universal background model (UBM), in a single subspace which includes all characteristics of speaker and inter-session variability, named the total variability matrix [3].
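
To make the notion of Baum-Welch statistics concrete, the sketch below computes the zeroth- and first-order statistics of a feature matrix with respect to a GMM. It is a simplified illustration under stated assumptions: it uses a diagonal-covariance GMM for brevity, whereas the system described in this paper uses a full-covariance UBM with 2048 Gaussians trained in Kaldi, and the function name and its `scale` argument are hypothetical. The `scale` argument mirrors the scaling of the statistics discussed in Section 3.3 (a factor of 0.33).

```python
import numpy as np


def baum_welch_stats(feats, weights, means, variances, scale=1.0):
    """Zeroth- and first-order statistics of `feats` w.r.t. a diagonal-covariance GMM.

    feats: (T, D) frames; weights: (C,); means, variances: (C, D).
    """
    # Per-frame, per-component Gaussian log density, shape (T, C).
    diff = feats[:, None, :] - means[None, :, :]
    log_dens = -0.5 * (np.sum(diff ** 2 / variances, axis=2)
                       + np.sum(np.log(2.0 * np.pi * variances), axis=1))
    # Frame posteriors (responsibilities), normalized in the log domain.
    log_post = np.log(weights) + log_dens
    log_post -= np.logaddexp.reduce(log_post, axis=1, keepdims=True)
    post = np.exp(log_post)
    zeroth = scale * post.sum(axis=0)      # N_c = sum_t gamma_t(c)
    first = scale * (post.T @ feats)       # F_c = sum_t gamma_t(c) x_t
    return zeroth, first
```

Because the posteriors of each frame sum to one, the zeroth-order statistics sum to the number of frames (times `scale`), which is a convenient sanity check when experimenting with such code.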

We trained on each acoustic feature a full covariance, gender-independent UBM with 2048 Gaussians followed by a 600-dimensional i-vector extractor to establish our MFCC- and PLP-based i-vector systems. The unlabelled set of development data was used in the training of both the UBM and the i-vector extractor. The open-source Kaldi software has been used for all these processing steps [20].

It has been shown that successive acoustic observation vectors tend to be highly correlated. This may be problematic for maximum a posteriori (MAP) estimation of i-vectors. To investigate this issue, scaling the zero and first order Baum-Welch statistics before presenting them to the i-vector extractor has been proposed. It turns out that a scale factor of 0.33 gives a slight edge, resulting in a better decision cost function [10]. This scaling factor has been applied in training the i-vector extractor as well as in testing.

4. BACK-END PROCESSING

This section describes the steps performed in the back-end processing of our speaker recognition system.

4.1. Nearest-neighbor Discriminant Analysis (NDA)

Nearest-neighbor discriminant analysis is a nonparametric discriminant analysis technique which was proposed in [4], and recently used in speaker recognition [22]. The nonparametric within- and between-class scatter matrices $\hat{S}_w$ and $\hat{S}_b$, respectively, are computed based on $k$ nearest neighbor sample information. The NDA transform is then formed using the eigenvectors of $\hat{S}_w^{-1}\hat{S}_b$. It has been shown that as the number of nearest neighbors $k$ approaches the number of samples in each class, NDA essentially becomes the LDA projection. Based on the finding in [22], NDA outperformed LDA due to its ability to capture the local structure and boundary information within and across different speakers. We applied a 600 × 400 NDA projection matrix computed using the 10 nearest sample information on centered i-vectors. The resulting dimensionality-reduced i-vectors are then whitened using both the training data and the unlabelled development set.

4.2. Short-Duration Variability Compensation

The enrolment condition of the development set is supposed to provide at least 60 seconds of speech data for each target speaker. Nevertheless, our SAD indicates that the speech content is as low as 26 seconds in some cases. The test segment duration, which ranges from 9 to 60 seconds of speech material, can result in poor performance for shorter segments. As indicated in Figure 2, more than one third of the test segments have a speech duration of less than 20 seconds. We have addressed this issue by proposing a short-duration variability compensation method. The proposed method works by first extracting, from each audio segment in the unlabelled development set, a partial excerpt of 10 seconds of speech material with a randomly selected starting point (Figure 3). Each audio file in the unlabelled development set, together with its extracted excerpt, yields two 400-dimensional i-vectors, one of them computed from at most 10 seconds of speech material. Considering each pair as one class, we computed a 400 × 390 LDA projection matrix to remove directions attributed to duration variability. Moreover, the projected i-vectors are also subjected to within-class covariance normalization (WCCN) using the same class labels.

Fig. 2. The duration of test segments in the development set after dropping non-speech frames. (Histogram; x-axis: Duration, y-axis: Frequency.)

Fig. 3. Partial excerpt of 10 second speech duration from an audio speech file.

4.3. Language Normalization

Language-source normalization is an effective technique for reducing language dependency in the state-of-the-art i-vector/PLDA speaker recognition system [14]. It can be implemented by extending SN-LDA [15] in order to mitigate variations that separate languages. This can be accomplished by using the language label to identify different sources during training. Language Normalized-LDA (LN-LDA) utilizes a language-normalized within-speaker scatter matrix $\hat{S}_W$, which is estimated as the variability not captured by the between-speaker scatter matrix,

$$\hat{S}_W = S_T - \hat{S}_B, \tag{1}$$

where $S_T$ and $\hat{S}_B$ are the total scatter and normalized between-speaker scatter matrices respectively, and are formulated as follows:

$$S_T = \sum_{n=1}^{N} w_n w_n^T, \tag{2}$$

where $N$ is the total number of i-vectors, and

$$\hat{S}_B = \sum_{l=1}^{L} \sum_{s=1}^{S_l} n_s^l \left(\bar{w}_s^l - \bar{w}^l\right)\left(\bar{w}_s^l - \bar{w}^l\right)^T, \tag{3}$$

where $L$ is the number of languages in the training set, $S_l$ is the number of speakers in language $l$, $\bar{w}_s^l$ is the mean of the $n_s^l$ i-vectors from speaker $s$ and language $l$, and finally $\bar{w}^l$ is the mean of all i-vectors in language $l$. We applied a 390 × 300 SN-LDA projection matrix to reduce the i-vector dimensions down to 300.

4.4. PLDA

Probabilistic Linear Discriminant Analysis (PLDA) provides a powerful mechanism to distinguish between-speaker variability, separating sources which characterize speaker information from all other sources of undesired variability that characterize distortions. Since i-vectors are assumed to be generated by some generative model, we can break them down into statistically independent speaker and session components with Gaussian distributions [5, 8]. Although it has been shown that their distribution follows Student's t rather than Gaussian [8], length normalizing the entire set of i-vectors as a pre-processing step can approximately Gaussianize their distributions [5] and as a result improve the performance of Gaussian PLDA to that of heavy-tailed PLDA [8]. A standard Gaussian PLDA assumes that an i-vector $w$ is modelled according to

$$w = m + Vy + \varepsilon, \tag{4}$$

where $m$ is the mean of the i-vectors, the columns of the matrix $V$ contain the basis for the between-speaker subspace, the latent identity variable $y \sim \mathcal{N}(0, I)$ denotes the speaker factor that represents the identity of the speaker, and the residual $\varepsilon$, which is normally distributed with zero mean and full covariance matrix $\Sigma$, represents within-speaker variability.

For each acoustic feature we have trained two PLDA models. The first, the out-domain PLDA ($V_{out}$, $\Sigma_{out}$), is trained using the training set presented in Table 1, and the second, the in-domain PLDA ($V_{in}$, $\Sigma_{in}$), was trained using the unlabelled development set. Our efforts to cluster the development set (e.g. using the out-domain PLDA) were not very successful, as it appears that almost all of the segments are uttered by different speakers. Therefore, each i-vector was considered to be uttered by one speaker. We also set the number of speaker factors to 200.

4.5. Domain Adaptation

Domain adaptation has gained considerable attention with the aim of compensating for cross-speech-source variability of in-domain and out-of-domain data. The framework presented in [6] for unsupervised adaptation of out-domain PLDA parameters resulted in better performance for in-domain data. Using the in-domain and out-domain PLDA models trained in Section 4.4, we interpolated their parameters as follows:

$$V_{adapt} = \alpha V_{in} + (1 - \alpha) V_{out}, \qquad \Sigma_{adapt} = \alpha \Sigma_{in} + (1 - \alpha) \Sigma_{out}. \tag{5}$$

We chose $\alpha = 0.10$ for making our submission.

4.6. Score Computation and Normalization

For the one-segment enrolment condition, the speaker model is the length-normalized i-vector of that segment. For the three-segment enrolment condition, we simply used the length-normalized mean vector of the length-normalized i-vectors as the speaker model. Each speaker model is tested against each test segment as in the trial list.
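
The parameter interpolation of Eq. (5) and the construction of the speaker model described above are simple enough to sketch in a few lines of Python. This is only an illustrative sketch: the function names are hypothetical, and the PLDA parameters are assumed to be available as NumPy arrays of matching shapes.

```python
import numpy as np


def interpolate_plda(V_in, Sigma_in, V_out, Sigma_out, alpha=0.10):
    """Interpolate in-domain and out-domain PLDA parameters as in Eq. (5)."""
    V_adapt = alpha * V_in + (1.0 - alpha) * V_out
    Sigma_adapt = alpha * Sigma_in + (1.0 - alpha) * Sigma_out
    return V_adapt, Sigma_adapt


def length_normalize(x):
    """Scale each vector (last axis) to unit Euclidean norm."""
    return x / np.linalg.norm(x, axis=-1, keepdims=True)


def speaker_model(enrol_ivectors):
    """Length-normalized mean of the length-normalized enrolment i-vectors.

    With a single enrolment i-vector this reduces to its length-normalized form.
    """
    enrol = np.atleast_2d(enrol_ivectors)
    return length_normalize(length_normalize(enrol).mean(axis=0))
```

With `alpha=0.10`, the adapted parameters stay close to the out-domain model, which has far more labelled training data, while still being shifted towards the in-domain statistics.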

For each pair of trial i-vectors $w_1$ and $w_2$, the PLDA score is computed as

$$s = w_1^T Q w_1 + w_2^T Q w_2 + 2 w_1^T P w_2 + c, \tag{6}$$

in which

$$Q = S_T^{-1} - \left(S_T - S_B S_T^{-1} S_B\right)^{-1}, \tag{7}$$

$$P = S_T^{-1} S_B \left(S_T - S_B S_T^{-1} S_B\right)^{-1}, \tag{8}$$

and $S_B = V_{adapt} V_{adapt}^T$ and $S_T = S_B + \Sigma_{adapt}$. It has been shown, and confirmed in our experiments, that score normalization can have a great impact on the performance of the recognition system. We used the symmetric s-norm proposed in [8], which normalizes the score $s$ of the pair $(w_1, w_2)$ using the formula

$$\hat{s} = \frac{s - \mu_1}{\sigma_1} + \frac{s - \mu_2}{\sigma_2}, \tag{9}$$

where the means $\mu_1$, $\mu_2$ and standard deviations $\sigma_1$, $\sigma_2$ are computed by matching $w_1$ and $w_2$, respectively, against the unlabelled set as the impostor speakers.

4.7. Quality Measure Function

It has been shown that there is a dependency between the value of the $C_{det}^{min}$ threshold and the duration of both enrolment and test segments. Applying the quality measure function (QMF) [18] enabled us to compensate for the shift in the $C_{det}^{min}$ threshold due to the differences in speech duration. We conducted some experiments to estimate the dependency of the $C_{det}^{min}$ threshold shift on the duration of the test segment and used the following QMF for PLDA verification scores:

$$QMF(t) = -0.2\sqrt{t}, \tag{10}$$

where $t$ is the duration of the test segment in seconds.

4.8. Calibration

In the literature, the performance of speaker recognition is usually reported in terms of the calibration-insensitive equal error rate (EER) or the minimum decision cost function ($C_{det}^{min}$). However, in real applications of speaker recognition there is a need to present recognition results in terms of calibrated log-likelihood-ratios. We have utilized the BOSARIS Toolkit [1] for calibration of scores. $C_{det}^{min}$ provides an ideal reference value for judging calibration. If $C_{det} - C_{det}^{min}$ is minimized, then the system can be said to be well calibrated.
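
Returning briefly to the scoring of Section 4.6, the PLDA score of Eqs. (6) to (8) and the symmetric s-norm of Eq. (9) can be sketched in Python as follows. This is a minimal sketch rather than the authors' implementation: the function names are hypothetical, the constant $c$ defaults to zero, the cohort is assumed to be a matrix with one impostor i-vector per row, and the two normalized terms of the s-norm are added, following the symmetric normalization of [8].

```python
import numpy as np


def plda_scoring_matrices(V, Sigma):
    """Build Q and P of Eqs. (7) and (8) from PLDA parameters V and Sigma."""
    S_B = V @ V.T                      # between-speaker covariance
    S_T = S_B + Sigma                  # total covariance
    S_T_inv = np.linalg.inv(S_T)
    M = np.linalg.inv(S_T - S_B @ S_T_inv @ S_B)
    Q = S_T_inv - M
    P = S_T_inv @ S_B @ M
    return Q, P


def plda_score(w1, w2, Q, P, c=0.0):
    """PLDA score of Eq. (6) for a pair of i-vectors."""
    return w1 @ Q @ w1 + w2 @ Q @ w2 + 2.0 * (w1 @ P @ w2) + c


def s_norm(w1, w2, cohort, Q, P):
    """Symmetric score normalization of Eq. (9) against an impostor cohort."""
    s = plda_score(w1, w2, Q, P)
    s1 = np.array([plda_score(w1, z, Q, P) for z in cohort])
    s2 = np.array([plda_score(w2, z, Q, P) for z in cohort])
    return (s - s1.mean()) / s1.std() + (s - s2.mean()) / s2.std()
```

Both the raw score and the normalized score are symmetric in the two i-vectors, so swapping the enrolment and test roles leaves the result unchanged.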

The choice of target probability ($P_{tar}$) had a great impact on the performance of the calibration. We set $P_{tar} = 0.0001$ for our primary submission, which performed the best on the development set. For our secondary submission $P_{tar} = 0.001$ was used.

5. RESULTS AND DISCUSSION

In this section we present the results obtained on the protocol provided by NIST on the development set, which is supposed to mirror that of the evaluation set. The results are shown in Table 2. The first part of the table indicates the result obtained by the primary system. As can be seen, the fusion of MFCC and PLP (a simple sum of both MFCC and PLP scores) resulted in a relative improvement of almost 10%, as compared to MFCC alone, in terms of both $C_{det}$ and $C_{det}^{min}$.

In order to quantify the contribution of the different system components we have defined different scenarios. In scenario A, we have analysed the effect of using LDA instead of NDA. As can be seen from the results, LDA outperforms NDA in the case of PLP; however, in fusion we can see that NDA resulted in better performance in terms of the primary metric. In scenario B, we analysed the effect of using the short-duration compensation technique proposed in Section 4.2. Results indicate superior performance using this technique. In scenario C, we investigated the effects of language normalization on the performance of the system. If we replace LN-LDA with simple LDA, we can see performance degradation in MFCC as well as fusion; however, PLP seems not to be adversely affected. The effect of using QMF is also investigated in scenario D. Finally, in scenario E, we can see the major improvement obtained through the use of the domain adaptation technique explained in Section 4.5.

Table 2. Performance comparison of the Intelligent Voice speaker recognition system with various analyses on the development protocol of NIST SRE 2016.

| System | Acoustic Features | Unequalized EER | Unequalized $C_{det}^{min}$ | Unequalized $C_{det}$ | Equalized EER | Equalized $C_{det}^{min}$ | Equalized $C_{det}$ |
|---|---|---|---|---|---|---|---|
| Primary | MFCC | 16.49 | 0.6633 | 0.6754 | 15.83 | 0.6650 | 0.6749 |
| Primary | PLP | 17.87 | 0.6857 | 0.6977 | 16.84 | 0.6914 | 0.6982 |
| Primary | Fusion | 16.04 | 0.6012 | 0.6107 | 14.93 | 0.6011 | 0.6267 |
| Scenario A | MFCC | 16.82 | 0.6658 | 0.6794 | 16.42 | 0.6890 | 0.7021 |
| Scenario A | PLP | 16.98 | 0.6691 | 0.6881 | 16.28 | 0.6903 | 0.7092 |
| Scenario A | Fusion | 15.73 | 0.6153 | 0.6369 | 15.12 | 0.6587 | 0.6964 |
| Scenario B | MFCC | 16.55 | 0.6735 | 0.6880 | 16.10 | 0.6755 | 0.6945 |
| Scenario B | PLP | 18.27 | 0.6938 | 0.7141 | 16.97 | 0.7018 | 0.7299 |
| Scenario B | Fusion | 16.31 | 0.6075 | 0.6299 | 14.70 | 0.6259 | 0.6482 |
| Scenario C | MFCC | 17.08 | 0.6767 | 0.6889 | 16.77 | 0.6677 | 0.6927 |
| Scenario C | PLP | 17.98 | 0.6857 | 0.6968 | 17.21 | 0.7001 | 0.7192 |
| Scenario C | Fusion | 16.59 | 0.6176 | 0.6264 | 15.70 | 0.6363 | 0.6680 |
| Scenario D | MFCC | 17.42 | 0.6694 | 0.6833 | 16.54 | 0.6639 | 0.6820 |
| Scenario D | PLP | 18.49 | 0.6851 | 0.7062 | 17.46 | 0.6852 | 0.7054 |
| Scenario D | Fusion | 17.03 | 0.6171 | 0.6315 | 15.73 | 0.6243 | 0.6410 |
| Scenario E | MFCC | 16.65 | 0.6976 | 0.7124 | 16.24 | 0.6972 | 0.7122 |
| Scenario E | PLP | 18.48 | 0.7182 | 0.7324 | 17.49 | 0.7263 | 0.7480 |
| Scenario E | Fusion | 16.82 | 0.6343 | 0.6500 | 15.52 | 0.6471 | 0.6737 |

For our secondary submission, we incorporated a disjoint portion of the labelled development set (10 out of 20 speakers) in both LN-LDA and in-domain PLDA training.
We evaluated the system on almost 6k out of 24k trials from the other portion to avoid any over-fitting, which is particularly important for the domain adaptation technique. This resulted in a relative improvement of 11% compared to the primary system in terms of the primary metric. However, the results can be misleading, since the recording condition may be the same for all speakers in the development set.

6. TIME ANALYSIS

This section reports on the CPU execution time (single threaded) and the amount of memory used to process a single trial, which includes the time for creating models from the enrolment data and the time needed for processing the test segments. The analysis was performed on an Intel(R) Xeon(R) CPU E5-2670 2.60GHz. The results are shown in Table 3. We used the time command in Unix to report these results. The user time is the actual CPU time used in executing the process (single thread). The real time is the wall clock time (the elapsed time including time slices used by other processes and the time the process spends blocked). The system time is the amount of CPU time spent in the kernel within the process. We have also reported the memory allocated for each stage of execution.

Table 3. CPU execution time and the amount of memory required to process a single trial.

| | segment dur | speech dur | stage | user time | system time | real time | memory (MB) |
|---|---|---|---|---|---|---|---|
| Enrolment | 140s | 60s | features | 0.99s | 0.02s | 1.02s | 12 |
| Enrolment | 140s | 60s | SAD | 8.10s | 0.23s | 2.26s | 25.0 |
| Enrolment | 140s | 60s | i-vectors | 27.47s | 2.20s | 7.83s | 4,014 |
| Test | 36s | 25s | features | 0.52s | 0.01s | 0.54s | 7.5 |
| Test | 36s | 25s | SAD | 2.26s | 0.09s | 0.94s | 25.35 |
| Test | 36s | 25s | i-vectors | 26.02s | 2.25s | 7.9s | 4,013 |

The most computationally intensive stage is the extraction of i-vectors (both MFCC- and PLP-based i-vectors), which also depends on the duration of the segments. For enrolment, we have reported the time required to extract a model from a segment with a duration of 140 seconds and a speech duration of 60 seconds.
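
For readers who want to collect comparable user, system and real times programmatically, the following Python sketch measures a child process in a way similar to the Unix time command. It is an illustrative sketch only, not the procedure used for Table 3: the helper name `time_command` is hypothetical, the standard-library `resource` module is available on Unix-like systems only, and `ru_maxrss` is reported in kilobytes on Linux and covers the largest child process seen so far.

```python
import resource
import subprocess
import sys
import time


def time_command(argv):
    """Run `argv` as a child process and report its user, system and real time."""
    before = resource.getrusage(resource.RUSAGE_CHILDREN)
    start = time.perf_counter()
    subprocess.run(argv, check=True)
    real = time.perf_counter() - start
    after = resource.getrusage(resource.RUSAGE_CHILDREN)
    return {
        "user": after.ru_utime - before.ru_utime,    # CPU time in user mode
        "system": after.ru_stime - before.ru_stime,  # CPU time in the kernel
        "real": real,                                # wall clock time
        "max_rss": after.ru_maxrss,                  # peak resident set size of children
    }


if __name__ == "__main__":
    # Example: time a trivial Python invocation.
    print(time_command([sys.executable, "-c", "pass"]))
```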

The time and memory required for front-end processing are negligible compared to the i-vector extraction stage, since they only include matrix operations. The time required for our SAD is also reported, which increases linearly with the duration of the segment.

7. CONCLUSIONS AND PERSPECTIVES

We have presented the Intelligent Voice speaker recognition system used for the NIST 2016 speaker recognition evaluation. Our system is based on a score fusion of MFCC- and PLP-based i-vector/PLDA systems. We have described the main components of the system, including acoustic feature extraction, speech activity detection and i-vector extraction as front-end processing, and language normalization, short-duration compensation, channel compensation and domain adaptation as back-end processing. For our future work, we intend to use the ALISP segmentation technique [2] in order to extract meaningful acoustic units so as to train supervised GMM or DNN models.

8. REFERENCES

[1] N. Brümmer and E. de Villiers. The BOSARIS toolkit user guide: Theory, algorithms and code for binary classifier score processing. Documentation of BOSARIS toolkit, 2011.
[2] G. Chollet, J. Černocký, A. Constantinescu, S. Deligne, and F. Bimbot. Toward ALISP: A proposal for automatic language independent speech processing. In Computational Models of Speech Pattern Processing, pages 375–388. Springer, 1999.
[3] N. Dehak, P. Kenny, R. Dehak, P. Dumouchel, and P. Ouellet. Front-end factor analysis for speaker verification. IEEE Transactions on Audio, Speech, and Language Processing, 19(4):788–798, 2011.
[4] K. Fukunaga and J. Mantock. Nonparametric discriminant analysis. IEEE Transactions on Pattern Analysis and Machine Intelligence, (6):671–678, 1983.
[5] D. Garcia-Romero and C. Y. Espy-Wilson. Analysis of i-vector length normalization in speaker recognition systems. In INTERSPEECH, pages 249–252, 2011.
[6] D. Garcia-Romero, A. McCree, S. Shum, N. Brummer, and C. Vaquero. Unsupervised domain adaptation for i-vector speaker recognition. In Proceedings of Odyssey, The Speaker and Language Recognition Workshop, Joensuu, Finland, 2014.
[7] C. S. Greenberg, D. Bansé, G. R. Doddington, D. Garcia-Romero, J. J. Godfrey, T. Kinnunen, A. F. Martin, A. McCree, M. Przybocki, and D. A. Reynolds. The NIST 2014 speaker recognition i-vector machine learning challenge. In Proceedings of Odyssey, The Speaker and Language Recognition Workshop, Joensuu, Finland, 2014.
[8] P. Kenny. Bayesian speaker verification with heavy-tailed priors. In Proceedings of Odyssey, The Speaker and Language Recognition Workshop, page 14, 2010.
[9] P. Kenny, V. Gupta, T. Stafylakis, P. Ouellet, and J. Alam. Deep neural networks for extracting Baum-Welch statistics for speaker recognition. In Proceedings of Odyssey, The Speaker and Language Recognition Workshop, pages 293–298, Joensuu, Finland, 2014.
[10] P. Kenny, T. Stafylakis, P. Ouellet, M. J. Alam, and P. Dumouchel. PLDA for speaker verification with utterances of arbitrary duration. In 2013 IEEE International Conference on Acoustics, Speech and Signal Processing, pages 7649–7653. IEEE, 2013.
[11] A. Khosravani and M. Homayounpour. Linearly constrained minimum variance for robust i-vector based speaker recognition. In Proceedings of Odyssey, The Speaker and Language Recognition Workshop, pages 249–253, Joensuu, Finland, 2014.
[12] E. Khoury, L. El Shafey, M. Ferras, and S. Marcel. Hierarchical speaker clustering methods for the NIST i-vector challenge. In Proceedings of Odyssey, The Speaker and Language Recognition Workshop, Joensuu, Finland, 2014.
[13] Y. Lei, N. Scheffer, L. Ferrer, and M. McLaren. A novel scheme for speaker recognition using a phonetically-aware deep neural network. In 2014 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 1695–1699. IEEE, 2014.
[14] M. McLaren, M. I. Mandasari, and D. A. van Leeuwen. Source normalization for language-independent speaker recognition using i-vectors. In Proceedings of Odyssey, The Speaker and Language Recognition Workshop, pages 55–61, Singapore, 2012.
[15] M. McLaren and D. van Leeuwen. Source-normalized LDA for robust speaker recognition using i-vectors from multiple speech sources. IEEE Transactions on Audio, Speech, and Language Processing, 20(3):755–766, 2012.
[16] N. Mesgarani, M. Slaney, and S. A. Shamma. Discrimination of speech from nonspeech based on multiscale spectro-temporal modulations. IEEE Transactions on Audio, Speech, and Language Processing, 14(3):920–930, 2006.
[17] T. Ng, B. Zhang, L. Nguyen, S. Matsoukas, X. Zhou, N. Mesgarani, K. Veselý, and P. Matejka. Developing a speech activity detection system for the DARPA RATS program. In INTERSPEECH, pages 1969–1972, 2012.
[18] S. Novoselov, T. Pekhovsky, and K. Simonchik. STC speaker recognition system for the NIST i-vector challenge. In Proceedings of Odyssey, The Speaker and Language Recognition Workshop, pages 231–240, Joensuu, Finland, 2014.
[19] T. Pfau, D. P. Ellis, and A. Stolcke. Multispeaker speech activity detection for the ICSI meeting recorder. In IEEE Workshop on Automatic Speech Recognition and Understanding (ASRU'01), pages 107–110. IEEE, 2001.
[20] D. Povey, A. Ghoshal, G. Boulianne, L. Burget, O. Glembek, N. Goel, M. Hannemann, P. Motlicek, Y. Qian, P. Schwarz, et al. The Kaldi speech recognition toolkit. In IEEE 2011 Workshop on Automatic Speech Recognition and Understanding. IEEE Signal Processing Society, 2011.
[21] N. Ryant, M. Liberman, and J. Yuan. Speech activity detection on YouTube using deep neural networks. In INTERSPEECH, pages 728–731, 2013.
[22] S. O. Sadjadi, S. Ganapathy, and J. Pelecanos. The IBM 2016 speaker recognition system. In Odyssey 2016: The Speaker and Language Recognition Workshop, pages 174–180, Bilbao, Spain, June 21-24 2016.
[23] B. Vesnicer, J. Zganec-Gros, S. Dobrisek, and V. Struc. Incorporating duration information into i-vector-based speaker-recognition systems. In Proceedings of Odyssey, The Speaker and Language Recognition Workshop, pages 241–248, Joensuu, Finland, 2014.
