Building Machines That Learn and Think Like People

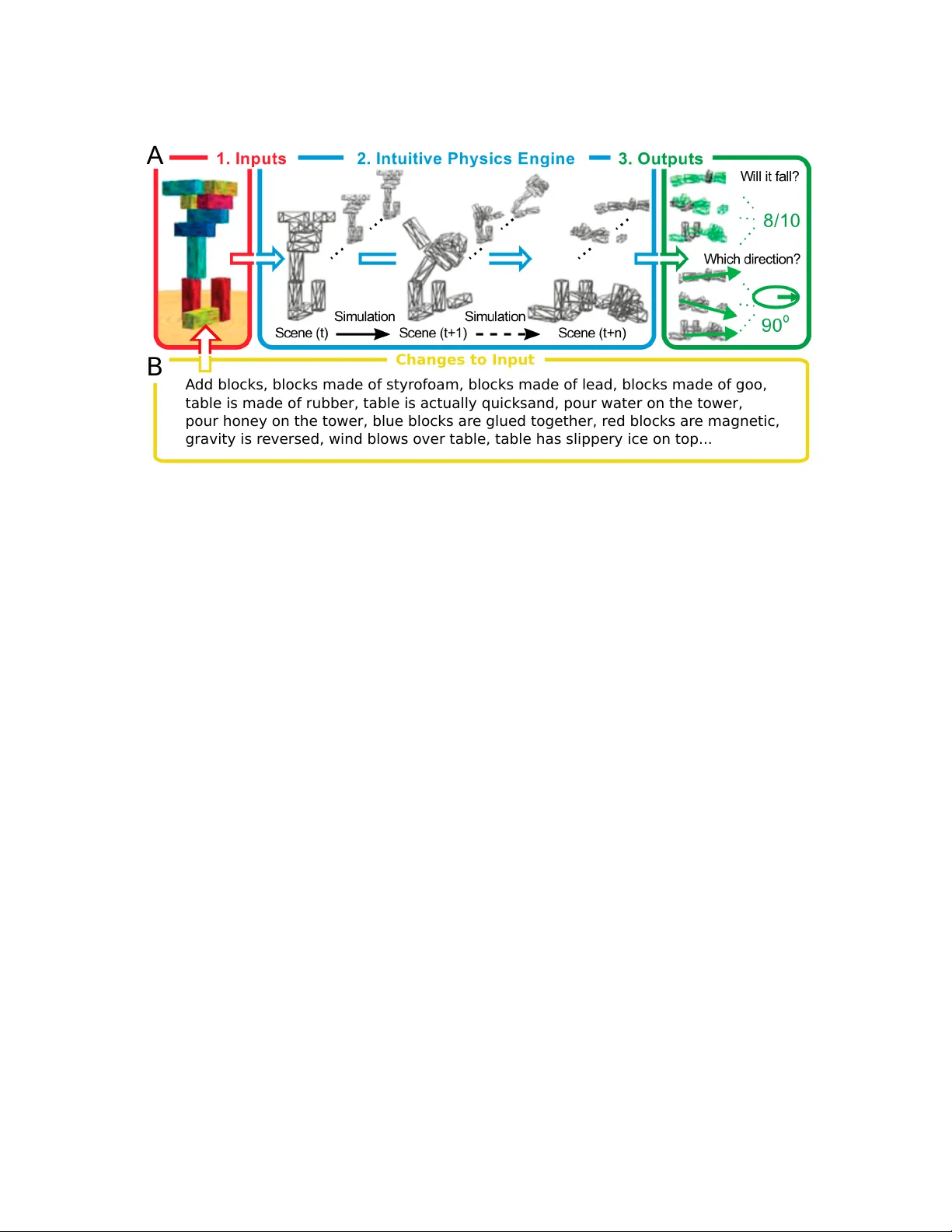

Recent progress in artificial intelligence (AI) has renewed interest in building systems that learn and think like people. Many advances have come from using deep neural networks trained end-to-end in tasks such as object recognition, video games, an…

Authors: Brenden M. Lake, Tomer D. Ullman, Joshua B. Tenenbaum