Large Scale Parallel Computations in R through Elemental

Even though in recent years the scale of statistical analysis problems has increased tremendously, many statistical software tools are still limited to single-node computations. However, statistical analyses are largely based on dense linear algebra …

Authors: Rodrigo Canales, Elmar Peise, Paolo Bientinesi

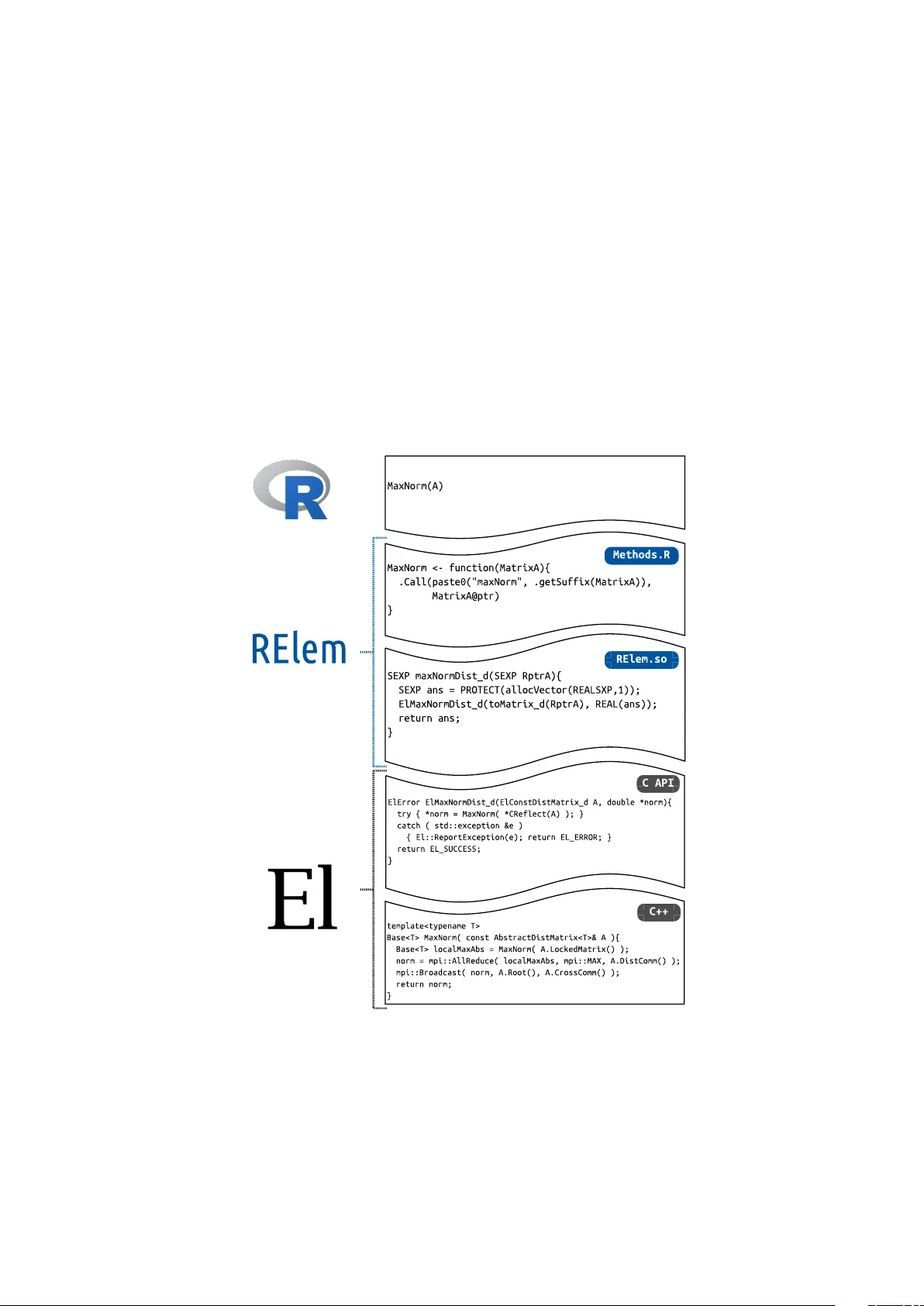

J S S Journal of Statistical Software MMMMMM YYYY, V olume VV, Issue II. http://www.jstatsoft.or g/ Large Scale P arallel Computations in R through Elemen tal Ro drigo Canales R WTH Aachen Univ ersit y Elmar P eise R WTH Aachen Univ ersit y P aolo Bien tinesi R WTH Aachen Univ ersit y Abstract Ev en though in recent year s the scale of statistical analysis problems has increased tremendously , man y statistical soft w are tools are sti ll limited to single-node computat ions. Ho wev er, statistical analyses are largely based on dense linear algebra operations, which ha ve b een deeply studied, optimized and parallelized in the high-p erformance-computing comm unity . T o make high-p erformance distributed computations a v ailable for statistical analysis, and thus enable large scale statistical computations, w e in tro duce RElem , an op en source pac k age that integrates the distributed dense linear algebra library Ele mental in to R . While on the one hand, RElem pro vides dire ct wrappers of Elemental ’s routines, on the other hand, it o verloads v arious op erators and functions to provide an en tirely nativ e R experience for distributed computations. W e show case ho w simple it is to p ort existing R programs to RElem and demonstrate that RElem indeed allo ws to scale beyond the single-node limitation of R with the full p erformance of Elemental without any ov erhead. Keywor ds : Linear Algebra, Elemen tal, Distributed, Parallel, R. 1. In tro duction In man y scien tific fields ranging from Chemistry and Biology to Meteorology , data-sets of increasing size are analyzed with statistical metho ds on a daily basis. Suc h analyses are often p erformed with the help of R , an op en-source soft ware and programming language fo cused on and designed for statistical computations. R is supported by both a large commu nity and CRAN, a w ell-dev elop ed con tribution system with o v er 9000 op en-source pac k ages. R ’s user- base has steadily gro wn since its first release in 1993 and in many disciplines it has b ecome the 2 RElem de-facto standard for statistical computations. Ho wev er, statistical analyses are constantly ev olving, most notably , in the size of their databases. In fields like computational biology , it is quite common that analyses are p erformed on workstations , and tak e da ys to complete. Other problems are simply to o large to fit in ev en mo dern main memories. In the field of scientific computing, which is often only lo osely connected with the statistical computing comm unity , large scale problems are routinely solved through distributed parallel computing: In clusters, the com bined resources and pro cessing p o wer of multiple intercon- nected computers mak e pro cessing large data-sets feasible and fast. F or dense linear algebra op erations, which are at the core of statistical computing, there exist highly optimized libraries for b oth shared-memory and distributed systems. While R already makes use of suc h optimized libraries, namely BLAS 1 and LAP A CK 2 , for single-no de computations, it cannot profit from mo dern distributed memory libraries for larger data-sets. In this pap er, we ov ercome this limitation b y in tegrating R with the state-of-the- art distributed linear algebra library Elemental : W e prop ose the R -pack age RElem . RElem pro vides the functionality and p erformance of Elemen tal in R and thereby enables large scale computations in native R . RElem not only provi des wrappers to Elemen tal , but replicates R ’s op erators and metho ds, allowing the use of native R scripts in distributed computing no des. The remainder of this pap er is structured as follo ws: In Sec. 2 w e summarize curren tly a v ailable distributed computing pac k ages for R and provide an o verview of the distributed Elemen tal library . 2 . In Sec. 3 we then present how RElem connects R and Elemental in and describ e its usage in Sec. 4 . Finally , in Sec. 5 we demonstrate the p erformance and large-scale capabilities of RElem and, in Sec. 6 draw conclusions and p oten tial future directions. 2. Distributed parallelism in R With the adv en t of m ulti-core pro cessors, R b egan to em brace shared memory parallelism. Sev eral built-in routines use m ulti-threading capabilities or rely on parallel high-p erformance k ernels, such as the BLAS . On the con trary , distributed parallelism is not natively av ailable in R . There are sev eral pac k ages that address this issue and enable m ulti-no de execution in R . Most of these pack ages are built on top of the Message Passing Interface ( MPI ), whic h enables the comm unication b et w een different pro cesses. MPI enables the Single Program Multiple Data (SPMD) parallel programming paradigm, where all computing no des execute the same program using a p ortion of the global data. Pro cesses sync hronize with each other using messages, which can b e p oin t-to-p oin t or collectiv e among pro cesses. A lo w-lev el int erface to this parallelism mo del in R is provided by RMPI , a pack age that exp oses MPI routines in R but leav es the parallelization task to the user. Other pac k ages suc h as Snow and Snowf all apply one function to or p erform reductions on a whole distributed data set in parallel. These pac k ages ach ieve go o d p erformance and their use is straigh tforw ard for simple parallelization tasks suc h as n umerical integration ( Schmi db erger, Morgan, Eddelbuettel, Y u, Tierney , and Mansmann 2009 ). 1 Basic Linear Algebra Subprograms 2 Linear Algebra P ACKage Journal of Statistical Softw are 3 The pac k age collection Pr o gr amming Big Data in R ( pb dR) ( Ostroucho v, Chen, Schmidt, and P atel 2012 ) provides mechanisms and routines to handle big amounts of data in distributed en vironments . In particular, the module p dbDMA T offers distributed dense linear algebra functionalit y based on the ScaLAP A CK library (a distributed memory parallelization of LA- P A CK ) ( Choi, Dongarra, Pozo, and W alk er 1992 ). It also implements nativ e R interfaces to and op erators on distributed matrices, making it accessible without extensiv e knowledge of distributed computing in general or ScaLAP A CK in particular. Ho wev er, in the field of parallel dense linear algebra, ScaLAP A CK (1992) is no w adays seen as a legacy library that is still widely used, but no longer represents the state of the art. F or instance, it do es not contain more recen t developmen ts on distributed linear algebra for key routines, such as eigenv alue decomposition ( Gutheil, M ¨ unc hhalfen, and Grotendorst 2014 ). T o pro vide R users access to the state of the art in dense linear algebra, we therefore see the necessit y to provide a more newer alternativ e. F or this purp ose, we use Elemental ( P oulson, Mark er, v an de Geijn, Hammond, and Romero 2013 ), a mo dern distributed linear algebra library that incorp orates the latest algorithms and mo dern programming standards. Elemen tal is a recen t (2013) and actively developed library . Since its op en source license (BSD) allo ws it to b e included in proprietary soft ware, it is an ideal basis for distributed computations b oth in academia and industry . In con trast to legacy libraries such as BLAS , LAP A CK , and ScaLAP A CK , Elemen tal embr aces mo dern programming paradigms to pro vide a simpler ob ject-orien ted programming interface. A t the cen ter of this interface are distributed matrices, which encapsulate not only their data but also their size and distributed storage format. All of Elemen tal ’s computational and input/output routines implicitly op erate on these formats, p erforming optimized matrix comm unications, replications, and redistributions as necessary , while keeping the memory o verhead minimal. Hence, while the distributed storage formats are fully transparent and accessible by the user, kno wledge of such is not required to use the library . T o extend the av ailabilit y of Elemen tal b ey ond its native C++ environmen t, recent releases include C and Python in terfaces that co ver the ma jorit y of the library’s functionalit y . T o also mak e the p ow erful functionality and features of Elemen tal a v ailable to the statistical programming communi ty , w e embrace the task of int egrating the library with R . 3. RElem This section introduces RElem , our nov el interf ace pac k age that provides Elemen tal features and functionalit y in native R . W e b egin with an o v erview of RElem ’s design and structure and in the follo wing subsections fo cus on its implemen tation. The R programming language is in part so widely used in statistical computing and data analysis not so m uch b ecause of its inherent feature set but b ecause of its large and well main tained pack age system and simple extensibilit y . It can nativ ely interface with F ortran and C routines, and separate pack ages provide in teraction with other languages suc h as Java and C++ ( Eddelbuettel and F ran¸ cois 2011 ). Our first attempt to connect R with Elemental w as to use the in terface co de generator SWIG ( Beazley 1996 ) to directly int erface with Elemental ’s core C++ interface. Ho wev er, w e so on ran in to complications due to the extensiv e use of templating and C++11 features within Elemen tal . As an alternative, we turned to Elemental ’s C interface, whic h also serves as the 4 RElem basis for the library’s Python interface. With C as a starting point, there are tw o in terfacing mechanism s provided b y R : On the one hand, the routine .C operates as a simple C wrapper that rein terprets R data as basic C data t yp es; on the other hand, the routine .Call pro vides a low-lev el interface to compiled C ob ject files. As arguments, .Call passes and op erates on internal R ob jects, the so-called SEXP C structures. Since Elemental op erates on matrices and other complex ob jects that are neither basic C data- t yp es nor known to R , the .C in terface is not applicable and w e ha v e to employ R ’s .Call in terface. This means that a separate la yer b et ween R ’s int ernal SEXP ob jects and Elemen tal ’s C interface is required. Ho wev er, this also allo ws to directly benefit from m uc h of R ’s features, suc h as ob ject metho ds, garbage collection, and t yp e chec king. Figure 1: RElem ’s integration sc heme. In the follo wing, we presen t the lay ered structure of RElem from b ottom to top as depicted in Fig. 1 : W e begin with a short description of Elemental ’s C interf ace and describe its mapping to R ’s internal SEXP format. W e then provide an ov erview of the R wrapp ers using the Journal of Statistical Softw are 5 .Call in terface: The definition of Elemen tal matrix types and routines in R , as w ell as the represen tation of Elemental ’s ob ject-orien ted metho ds of matrix classes. W e conclude with the extension Elemental ’s functionality by ov erloading R op erators and functions to provide an interface v ery similar to nativ e R . 3.1. Elemen tal’s C interface T o provide a simple interface to languages other than C++ , Elemen tal offers a C compatibility API and headers. In this API, Elemen tal ’s templated class system is translated into fully t yp ed C structures and functions: • The templated classes are instantiated with El as a prefix (corresp onding to the C++ namespace El ), and suffixes indicating the data-types, e.g., El Matrix_d for a lo cal double-precision matrix. The instantiations are implemen ted as anon ymous structures that are essentially pointers to the C++ ob jects. • T emplated routines are similarly instantiated , e.g., the C++ function MaxNorm(A) in C b ecomes ElMaxNormDist_c(A, &val) for distributed single-precision complex matrix. C++ return v alues are implemen ted as the last argument b y reference, and each routine returns an error co de represen ting errors thro wn in the corresp onding C++ routine. • Instance methods are implemen ted as routines that tak e a reference to the instance as a first argumen t; e.g., A.Width() b ecomes ElMatrixWidth_i(A) for lo cal integer matrices. 3.2. RElem’s C-lay er Since RElem relies on R ’s .Call routine to int eract with Elemen tal , the construction of a C - la yer that connects R ’s SEXP structures and Elemental ’s C interface is necessary . This la yer con tains a wrapp er for each of Elemen tal ’s C -routines. In these wrapp ers, we already simplify some abstractions from the C in terface by implemen ting return v alues as such, instead of final argumen ts by reference. S E X P m a x N o r m D i s t _ d ( S E X P R p t r A ) { S E X P a n s = P R O T E C T ( a l l o c V e c t o r ( R E A L S X P , 1 ) ) ; E l M a x N o r m D i s t _ d ( t o D i s t M a t r i x _ d ( R p t r A ) , R E A L ( a n s ) ) ; U N P R O T E C T ( 1 ) ; r e t u r n a n s ; } Listing 1: maxNormDist_d in RElem ’s C -lay er. As an example, listing 1 shows the definition for maxNormDist_d that serves as a wrapp er for Elemen tal ’s ElMaxNormDist_d . The routine begins b y allo cating a double-precision return v alue using R ’s in ternal allo cator and types 3 . It then in v okes Elemental ’s C interface, passing argumen ts extracted from the SEXP ob jects: toDistMatrix_d is an RElem routine that casts the external p oin ter stored in a SEXP ob ject to a ElDistMatrix_d , and REAL is an R macro 3 The PROTECT and UNPROTECT are necessary to indicate to R ’s garbage collection that the allo cated ob ject is in use. 6 RElem that pro vides a p ointer to the double precision slot in a SEXP . Finally , the SEXP repr esenting the result is returned. 3.3. R Matrix and F unction W rapp ers The C -lay er describ ed ab o ve allows to load and access Elemental ’s C interface in R through the .Call syn tax. In order to offer not a mere cop y of Elemental ’s C in terface but mirror its original ob ject-orien ted C++ functionalit y in R , RElem con tains wrapp ers for all Elemen tal data types (e.g., matrices) and routines (e.g., MaxNorm ). Just as Elemen tal , RElem represents both lo cal and distributed matrices (as w ell as few other ob jects) as classes 4 . Each of these classes contains 1) an external p ointer to an Elemen tal ob ject, and 2) a datat yp e indicator needed to select the corresponding typed C -lay er routines. E l D i s t M a t r i x < - s e t C l a s s ( " E l D i s t M a t r i x " , r e p r e s e n t a t i o n ( p t r = " e x t e r n a l p t r " , d a t a t y p e = " c h a r a c t e r " ) ) Listing 2: R class ElDistMatrix . As an example, listing 2 presen ts the definition of the class ElDistMatrix . In addition to the class name, the class declaration routine SetClass is passed the class “representation” con taining its attributes: The external p oin ter ptr references the Elemental matrix as a C p ointer and the character datatype indicates the type of data in this matrix, i.e., double- precision real ( d ) or complex ( z ), or in teger-precision ( i ). Note that, in contras t to Eleme ntal ’s C in terface, which has separate structures and routines for eac h data-t yp e, thanks to this mec hanism RElem has only one distributed matrix class that encapsulates the data-type as an attribute. M a x N o r m < - f u n c t i o n ( M a t r i x A ) { . C a l l ( p a s t e 0 ( " m a x N o r m " , . g e t S u f f i x ( M a t r i x A ) ) , M a t r i x A @ p t r ) } Listing 3: R wrapp er for MaxNorm . As sho wn in the example of MaxNorm in listing 3 , T o translate Elemen tal functions on matrices to R , we need to use the .Call int erface. The name of the function called in RElem ’s C - la yer is constructed from tw o parts: the name MaxNorm and a type-dep endent suffix that is constructed from the information encapsulated in the matrix ob ject by .getSuffix . E.g., for a complex distributed matrix, the result would b e MaxNormDist_z , while for a real lo cal matrix, it w ould b e MaxNorm_d . The selected C -function is then in v oked with the external p oin ter to the Elemen tal matrix ( MatrixA@ptr ) as an argument. The return v alue of the C -function and thus .Call is implicitly returned by the R wrapp er. 4 W e use R ’s recommended S4 ob ject system. Journal of Statistical Softw are 7 s e t M e t h o d ( " i n i t i a l i z e " , s i g n a t u r e ( . O b j e c t = " E l D i s t M a t r i x " ) , f u n c t i o n ( . O b j e c t , t a g = " d " , c o l D i s t = " M C " , r o w D i s t = " M R " , g r i d = n e w ( " E l G r i d " ) ) { . O b j e c t @ d a t a t y p e < - t a g . O b j e c t @ p t r < - . C a l l ( p a s t e 0 ( " n e w D i s t M a t r i x S p e c i f i c _ " , t a g ) , c o l D i s t , r o w D i s t , g r i d @ p t r ) r e g . f i n a l i z e r ( . O b j e c t @ p t r , . E l D e s t r o y ( t a g , " d e s t r o y D i s t M a t r i x " ) ) r e t u r n ( . O b j e c t ) } ) Listing 4: DistMatrix constructor. Class constructors are implemen ted by ov erloading R ’s initialize function as shown for DistMatrix in listing 4 . Of the constructor’s four arguments, the first ( tag ) indicates the data t yp e and determines the correct C -lay er constructor, while the remaining three relate to Elemen tal ’s data distribution and are passed directly to this constructor and further to Elemen tal ’s C interface. Ho w ever, all argumen ts are optional: By de fault, matrices use double- precision ( d ) as a data-type and the remaining argumen ts map to the default C++ argument and template v alues. The Elemental ob ject is initialized through the C -lay er constructor and the corresp onding C -lay er destructor is asso ciated to the instance to integrate with R ’s garbage collection. Finally the newly created instance is returned. 3.4. Matrix Metho ds Metho ds on matrix instances, suc h as A.Height() , which yields the matrix heigh t, require a slight ly different treatmen t from functions that op erate on suc h matrices as arguments. What makes the issue surprisingly complicated (from a programming language p oint of view) is that R do es not ha ve a direct equiv alent to instance metho ds and, in particular, the dot . is not a reserved symbol in R , i.e., it can app ear as part of a v ariable name. T o ov ercome this shortcoming, we use the same approach as man y other R libraries and resort to the dollar c haracter $ as a substitute for the dot . in C++ . T ec hnically , $ is a binary op erator in R that takes the left and right hand side as argumen ts (suc h as + ), i.e., A$arg is equiv alent to $(A, arg) . s e t M e t h o d ( " $ " , s i g n a t u r e ( x = " E l D i s t M a t r i x " ) , f u n c t i o n ( x , n a m e , . . . ) { i d < - p m a t c h ( n a m e , n a m e s ( D i s t M a t r i x F u n c t i o n s ) ) i f ( i s . n a ( i d ) ) { s t o p ( p a s t e ( " f u n c t i o n " , n a m e , " n o t f o u n d \ n " ) ) } r o u t i n e < - D i s t M a t r i x F u n c t i o n s [ [ i d ] ] f u n c t i o n ( . . . ) { r o u t i n e ( x , . . . ) 8 RElem } } ) Listing 5: Metho d accessor $ for ElDistMatrix . D i s t M a t r i x F u n c t i o n s < - l i s t ( " G e t " = M a t r i x G e t , " S e t " = M a t r i x S e t , " H e i g h t " = M a t r i x H e i g h t , " W i d t h " = M a t r i x W i d t h , " L D i m " = M a t r i x L D i m , " E m p t y " = M a t r i x E m p t y , . . . ) Listing 6: Mapping of instance metho ds to functions for ElDistMatrix (excerpt). Listing 5 shows how setMethod 5 o verloads the op erator $ for the class ElDistMatrix : The asso ciated function receiv es as arguments b oth the matrix instance and the name of the metho d. It first looks up the metho d name in DistMatrixFunctions (listing 6 ) returning an R function (up on failure an error is thro wn); the matc hed function is implemen ted as part of the R -lay er, as described in the previous section. The op erator function for $ returns an anon ymous function that wraps the matched function, passing the matrix instance as a first argumen t and an y additional argumen ts (mask ed as ... ). 3.5. Operator and F unction Overloading While the classes, functions, and metho ds describ ed abov e are already sufficien t to cov er Elemen tal ’s full functionality , RElem further ov erloads several R functions and op erators to simplify many operations and provi de a native experience to R users. s e t M e t h o d ( " + " , s i g n a t u r e ( e 1 = " E l D i s t M a t r i x " , e 2 = " E l D i s t M a t r i x " ) , f u n c t i o n ( e 1 , e 2 ) { i f ( e 1 @ d a t a t y p e ! = e 2 @ d a t a t y p e ) s t o p ( " M a t r i c e s m u s t h a v e t h e s a m e d a t a t y p e " ) i f ( e 1 $ H e i g h t ( ) ! = e 2 $ H e i g h t ( ) & & e 1 $ W i d t h ( ) ! = e 2 $ W i d t h ( ) ) s t o p ( " M a t r i c e s m u s t h a v e t h e s a m e s i z e " ) m a t A < - D i s t M a t r i x ( e 1 @ d a t a t y p e ) C o p y ( e 1 , m a t A ) A x p y ( 1 . 0 , e 2 , m a t A ) m a t A } ) Listing 7: Op erator + for ElDistMatrix . 5 setMethod tak es three argumen ts: a metho d name, a signature sp ecifying the argumen ts and their ty p es, and a function. Journal of Statistical Softw are 9 The ov erloading arithmetic op erators, suc h as + , %*% and * 6 through setMethod is v ery similar to the already o v erloaded op erator $ , y et requires a few additional argumen t c hec ks and a memory allo cation for the return v alue. The ov erloading of + for ElDistMatrix is shown in listing 7 : The op erator receives tw o distributed matrices; first, it is ensured that b oth the matrix data-types and their sizes matc h; then a new matrix is created and initialized with a cop y of the first op erand; finally , the actual computation is p erformed b y Axpy , an Elemen tal function named after the BLAS counterpart that scales (in this case by 1.0) and adds one matrix to another; the new matrix is returned. s e t M e t h o d ( " [ " , s i g n a t u r e ( x = " E l D i s t M a t r i x " ) , f u n c t i o n ( x , i , j , . . . ) { i f ( l e n g t h ( i ) = = 1 & & l e n g t h ( j ) = = 1 ) r e t u r n ( M a t r i x G e t ( x , i - 1 , j - 1 ) ) g < - G r i d ( ) D i s t M a t r i x G r i d ( x , g ) V < - D i s t M a t r i x ( g r i d = g , t a g = x @ d a t a t y p e ) ; V i e w N o r m a l ( V , x , i [ 1 ] - 1 , t a i l ( i , 1 ) , j [ 1 ] - 1 , t a i l ( j , 1 ) ) V } ) Listing 8: Indexing ov erloading in R for ElDistMatrix . In R , arrays and tables con b e indexed not only to access single elements but to address p ortions of such ob jects; e.g., indexing allo ws to extract certain ro ws or columns from matrices. Since this is also a very common op eration in dense linear algebra, Elemen tal pro vides so- called “matrix views” . W e use this feature to o v erload R ’s indexing op erator [ as sho w for ElDistMatrix in listing 8 : If the pro vided indices i and j identify a single matrix elemen t, this is returned as a scalar; otherwise, a submatrix is extracted. s e t M e t h o d ( " p r i n t " , s i g n a t u r e ( x = " E l D i s t M a t r i x " ) , f u n c t i o n ( x ) { P r i n t ( x ) } ) Listing 9: Over loading of R ’s print for ElDistMatrix . Nativ e R methods are o verloaded very similarly , as shown for print and ElDistMatrix in- stances in listing 9 : The o verload simply maps R ’s print to RElem ’s Print wrapp er function. s e t M e t h o d ( " p r c o m p " , s i g n a t u r e ( x = " E l D i s t M a t r i x " ) , f u n c t i o n ( x , r e t x = T R U E , c e n t e r = T R U E , s c a l e . = F A L S E , t o l = N U L L , . . . ) { x _ c e n t e r e d < - s c a l e ( x , c e n t e r , s c a l e . ) 6 matrix-matrix and element-wise multiplication. 10 RElem x _ c e n t e r < - a t t r ( x _ c e n t e r e d , " s c a l e d : c e n t e r " ) s < - s v d ( x _ c e n t e r e d , n u = 0 ) s $ d < - ( 1 / s q r t ( x $ H e i g h t ( ) - 1 ) ) * s $ d l i s t ( s d e v = s $ d , r o t a t i o n = s $ v , c e n t e r = x _ c e n t e r ) } ) Listing 10: Over loading of R ’s SVD-based PCA routine prcomp for ElDistMatrix . Other core R op erations, s uch as the principal comp onen t analysis (PCA), do not hav e a direct coun terpart in Elemental , but can b e assem bled from Elemen tal routines. F or instance, the PCA routine prcomp , whic h is based on the singular v alue decomp osition (SVD) of the n × m dataset X with n observ ations of m v ariables, is o v erloaded in RElem as outlined in listing 10 : First, scale , which is also ov erloaded in RElem , centers (and optionally scales) the input X to ensure that the av erage of eac h observed v ariable is 0: ˜ X = X − ¯ X ; the cen ter ¯ X is extracted since it is one of prcomp ’s list of return v alues. No w svd p erforms the core singular v alue decomp osition U Σ V T = ˜ X through Elemental ’s SVD . While the principle comp onents are directly given b y the right singular vectors V stored in s$d , the v ariance σ for each v ector is computed from the singular v alues (diagonal elemen ts of Σ), and then stored in s$v as σ = Σ √ n − 1 . The returned data structure consists of the principle comp onen ts, their v ariance, and the matrix cen ter. 4. Usage This section offers a brief introduction to RElem from the user’s persp ective: W e start b y describing RElem ’s installation pro cess, then introduce its parallel execution across mul tiple pro cesses, and conclude by comparing distributed RElem scripts with nativ e R , and with Elemen tal . 4.1. Installat ion RElem is provided as a standard R pack age and installed through the command R C M D I N S T A L L R E l e m . z i p Since Elemental is not distributed and compiled along with RElem , the installation script searc hes the file system for a v alid Elemental installation and compiles RElem accordingly using Elemen tal headers. RElem has b een successfully installed and tested on b oth Linux and OS X. 7 4.2. P arallel Launch Since Elemen tal is based on MPI and is typ ically launched in parallel with mpirun , so is RElem : mpirun is used to run one instance of R p er process, each of which receives the same input file. Elemental is loaded lo cally on each pro cess and p erforms all interaction with MPI — the R instances are at no p oin t confronted with commun ication and are actually una ware 7 Windo ws is curren tly not supported by Elemental but is also uncommon on distributed memory cluster systems. Journal of Statistical Softw are 11 that they are running in parallel. A user migh t for instance run an RElem script myScript.R on 64 pro cesses using m p i r u n - n 6 4 R - f m y S c r i p t . R 4.3. Syn tax and Interface The comp onen t to successfully run RElem is the R script that uses it. T o giv e an impression not only of how RElem is used but also of how it compares to pure R and Elemental , let us start with a simple example in pure R that loads a Hermitian matrix from a file data.txt , and computes its eigendecomp osition, storing the result (eigenv alues and eigen vectors) in the structure ans : A < - r e a d . t a b l e ( " d a t a . t x t " ) a n s < - e i g e n ( A ) Listing 11: Pure R eigen decomp osition. T o conv ert the ab o ve co de to use RElem ’s distributed matrices, only few c hanges are necessary: l i b r a r y ( R e l e m ) A < - r e a d . t a b l e . d i s t ( " d a t a . t x t " ) a n s < - e i g e n ( A ) Listing 12: RElem eigen decomp osition. Besides loading RElem , the script only needs the additi on of .dist to the read.table routine, whic h then loads the passed file as ElDistMatrix . If we compare the ab o ve RElem script with a C++ co de p erforming the same op eration, we notice that the RElem version is considerably shorter: # i n c l u d e " E l . h p p " u s i n g n a m e s p a c e E l ; i n t m a i n ( i n t a r g c , c h a r * a r g v [ ] ) { I n i t i a l i z e ( a r g c , a r g v ) ; D i s t M a t r i x < d o u b l e > A , w , X ; R e a d ( A , " d a t a . t x t " ) ; H e r m i t i a n E i g ( L O W E R , A , w , X ) ; r e t u r n 0 ; } Listing 13: Elemen tal C++ eigen decomp osition. 5. P erformance and Scalabilit y In this chapter w e presen t the p erformance and scalability of RElem ; w e compare it with b oth pure R and Elemental and sho w computations only made p ossible in R through RElem . W e demonstrate that RElem translates b oth Elemental ’s performance and scalability directly to R with minimal ov erhead. Our test environme nt is a computing cluster of no des equipp ed with tw o 12-core Intel Xeon 12 RElem E5-2680 pro cessors (Haswell microarch itecture) and 64GB of main memory . W e use R v er- sion 3.1.0, Elemental v ersion 0.85, and link to In tel’s Math Kernel Library ( MKL ) v ersion 11.3. 5.1. P erformance: R vs. RElem W e begin with a performance comparison on a single node (24 cores) betw een R linked to m ulti-threaded MKL , and RElem using Elemental ’s MPI -based parallelization; as test-cases w e use tw o basic linear algebra op erations: the multiplication of tw o 6 , 000 × 6 , 000 matrices ( gemm , the central and most optimized op eration in dense linear algebra) and the solution of a linear system with 12 , 000 unknowns and 1 , 000 right-hand sides. 0 5 10 15 20 25 30 35 40 0 5 10 15 20 25 Running time [s] Processes / Threads Pure R vs RElem Gemm - R Gemm - RElem Solve - R Solve - RElem Figure 2: Execution time in R and RElem . Figure 2 presents the execution time of these t wo operations for b oth R and RElem , with increasing num b er of cores (threads in R , pro cesses in RElem ): Up to small differences, the libraries are on-par with one another, as neither is clearly faster than the other. Note that an alternativ e would b e to run one instance of RElem p er no de and rely on m ulti- threaded mkl for shared memory parallel p erformance. 5.2. Scalabilit y: R vs. RElem The main goal of RElem is to extend R ’s processing capabilities beyond the memory and com- pute limitations of a single note. Hence, in the following, we consider a Principle Comp onent Analysis ( prcomp ) with 5 , 000 v ariables and b et w een 125 , 000 and 875 , 000 observ ations, i.e., an input matrix that is b etw een 4.66GB and 41.90GB. Figure 3 presents the execution time of the PCA of both R and RElem for increasing problem sizes. First off, already on a single no de, RElem is b etw een 18% and 43% faster than native R since Elemental offers a faster singular v alue decomp osition algorithm than MKL . F urther- more, since during the PCA computation multiple copies of the input matrix are created, b oth Journal of Statistical Softw are 13 0 50 100 150 200 250 300 350 400 0 5 10 15 20 25 30 35 40 45 Running time [s] Matrix Size [GB] Scaling Principal component Analysis R 24 Threads RElem 24 Procs RElem 48 Procs RElem 72 Procs RElem 96 Procs Figure 3: PCA Execution time in R and RElem . V ertical lines: memory limitations p er no de. R and RElem can only solve problems of up to 12GB in size (roughly 320 , 000 observ ations) on a single no de. While this limitation cannot p ossibly b e o vercome in native R , it is easily addressed in RElem by adding more pro cesses. In addition to sp eeding up single-no de anal- yses, each added compute no de allows to linearly increase the size of the pro cessed problem b y 12GB. 5.3. Ov erhead ov er Elementa l The previous exp eriment s hav e shown the p erformance and scalability adv antages of RElem compared to R ; in the follo wing, w e consider RElem as an interface to Elemen tal and study its ov erhead in execution time and memory consumption. W e b egin b y analyzing the execution time ov erhead of RElem ov er Elemen tal : F or this pur- p ose, we consider the multiplication of tw o matrices of size 12 , 000 × 12 , 000 and the solution of a linear system with 20000 unknowns and 1000 righ t-hand sides (b oth problems take up around 3GB). Figure 4 shows the execution ti me of these t wo op erations for b oth REl em and Elemental : The t wo differen t setups are virtually identical, sho wing that RElem causes no relev ant ov erhead o ver Elemen tal for large computations. This b eha vior w as exp ected since RElem only intro- duces v ery ligh t-weigh t wrapping lay ers around Elemen tal ’s core functions. Measurements of simple query routines such as A.Width() (In RElem : A$Width() ) confirm this observ ation and suggest a marginal ov erhead of, on av erage, 0.6ms p er function call. Next, w e study the memory ov erhead of RElem ov er Elemen tal ; for this purp ose, we con- sider a principal comp onen t analysis with 5000 v ariables and b etw een 125 , 000 and 725 , 000 observ ations (b etw een 3.66GB and 27.00GB) on three compute no des (72 cores). Figure 5 presen ts the peak memory requirement s of this computation per pro cess for b oth RElem and Elemen tal : Indep endent of the problem size, the memory ov erhead of RElem is constant at ab out 30MB p er pro cess. This corresponds to the memory fo otprin t of the 14 RElem 0 20 40 60 80 100 120 140 10 15 20 25 30 35 40 45 50 Running time [s] Processes Overhead RElem Gemm - RElem Gemm - Elemental Solve - RElem Solve - Elemental Figure 4: Perfo rmance comparison of RElem with Elemental . 0 0.5 1 1.5 2 0 5 10 15 20 25 30 Peak Memory per process [GB] Matrix Size [GB] PCA - Memory Overhead RElem RElem Elemental Figure 5: Memory requirements for distributed PCA with 72 pro cesses. R envi ronment and its libraries, y et for large scale computations on gigab ytes of data is negligible. 6. Conclusions The presen ted pac k age RElem enables large scale distributed computations in R through the state-of-the-art linear algebra library Elemental . RElem allo ws R users to tac kle enormous problems on compute clusters that pure R cannot p ossibly pro cess b eing limited to a single shared memory pro cessor. Journal of Statistical Softw are 15 By not only translating Elemen tal ’s in terface to R but giving it a native R feeling by o ver- loading v arious op erators and functions, we created a pack age that b oth offers full access to Elemen tal for exp erienced users, and allows for a seamless transition to distributed memory computations for R users. Nonetheless, RElem is a light-w eigh t in terface from R to Elemen tal that in b oth execution time and memory requirements introduces virtually no o verhead at all. W e demonstrated b oth RElem ’s p erformance and its scalabilit y b ey ond the memory-limitations of a single compute no des. RElem is a v ailable b oth on GitHub under the open-source MIT licence ( https://github. com/HPAC/RElem ). F uture plans for RElem include the support for sparse linear algebra routines a v ailable in Elemen tal . Ac kno wledgment s Financial supp ort from The Aac hen Institute for Adv anced Study in Computational Engineer- ing Science (AICES) through the Deutsche F orsc hungsgemeinsc haft (DFG) gran t GSC 111 is gratefully ac knowledged. The authors thank Jack Poulson for his tec hnical supp ort on Elemen tal. References Beazley DM (1996). “SWIG: An Easy to Use T o ol for Integrating Scripting Languages with C and C++.” In Pr o c e e dings of the 4th Confer enc e on USENIX Tcl/Tk Workshop, 1996 - V olume 4 , TCL TK’96, pp. 15–15. USENIX Asso ciation, Berkeley , CA, USA. URL http: //dl.acm.org/citation.c fm?id=1267498.1267513 . Choi J, Dongarra JJ, P ozo R, W alk er DW (1992). “ScaLAP A CK: A scalable linear algebra library for distributed memory concurren t computers.” In F r ontiers of Massively Par al lel Computation, 1992., F ourth Symp osium on the , pp. 120–127. IEEE. Eddelbuettel D, F ran¸ cois R (2011). “Rcpp: Seamless R and C++ In tegration.” Journal of Statistic al Softwar e , 40 (8), 1–18. URL http://www.jstatsoft.org/v40/i08/ . Gutheil I, M ¨ unc hhalfen JF, Grotendorst J (2014). “Per formance of Dense Eigensolvers on BlueGene/Q.” In Par al lel Pr o c essing and Applie d Mathematics , pp. 26–35. Springer. Ostrouc hov G, Chen W C, Schmidt D, Pat el P (2012). “Programming with Big Data in R.” http://r- pbd.org/ . Accessed: 2014-11-04. P oulson J, Marker B, v an de Geijn RA, Hammond JR, Romero NA (2013). “Elemen tal: A New F ramew ork for Distributed Memory Dense Matrix Computations.” ACM T r ans. Math. Softw. , 39 (2), 13:1–13:24. ISSN 0098-3500. doi:10.1145/2427023.2427 030 . URL http://doi.acm.org/10.1 145/2427023.2427030 . Sc hmidb erger M, Morgan M, Eddelbuettel D, Y u H, Tierney L, Mansmann U (2009). “State- of-the-art in Parallel Computing with R.” Journal of Statistic al Softwar e , 47 (1). 16 RElem Affiliation: Ro drigo Canales Aac hen Institute for Adv anced Study in Computational Engineering Science R WTH Aachen Univ ersit y Sc hinkelstr . 2 52062 Aachen, German y E-mail: canales@aices.rwth-a achen.de URL: http://hpac.rwth- aachen.de Journal of Statistical Software http://www.jstatsoft.or g/ published by the American Statistical Asso ciation http://www.amstat.org/ V olume VV, Issue I I Submitte d: yyyy-mm-dd MMMMMM YYYY A c c epte d: yyyy-mm-dd

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment