Voice Conversion using Convolutional Neural Networks

The human auditory system is able to distinguish the vocal source of thousands of speakers, yet not much is known about what features the auditory system uses to do this. Fourier Transforms are capable of capturing the pitch and harmonic structure of…

Authors: Shariq Mobin, Joan Bruna

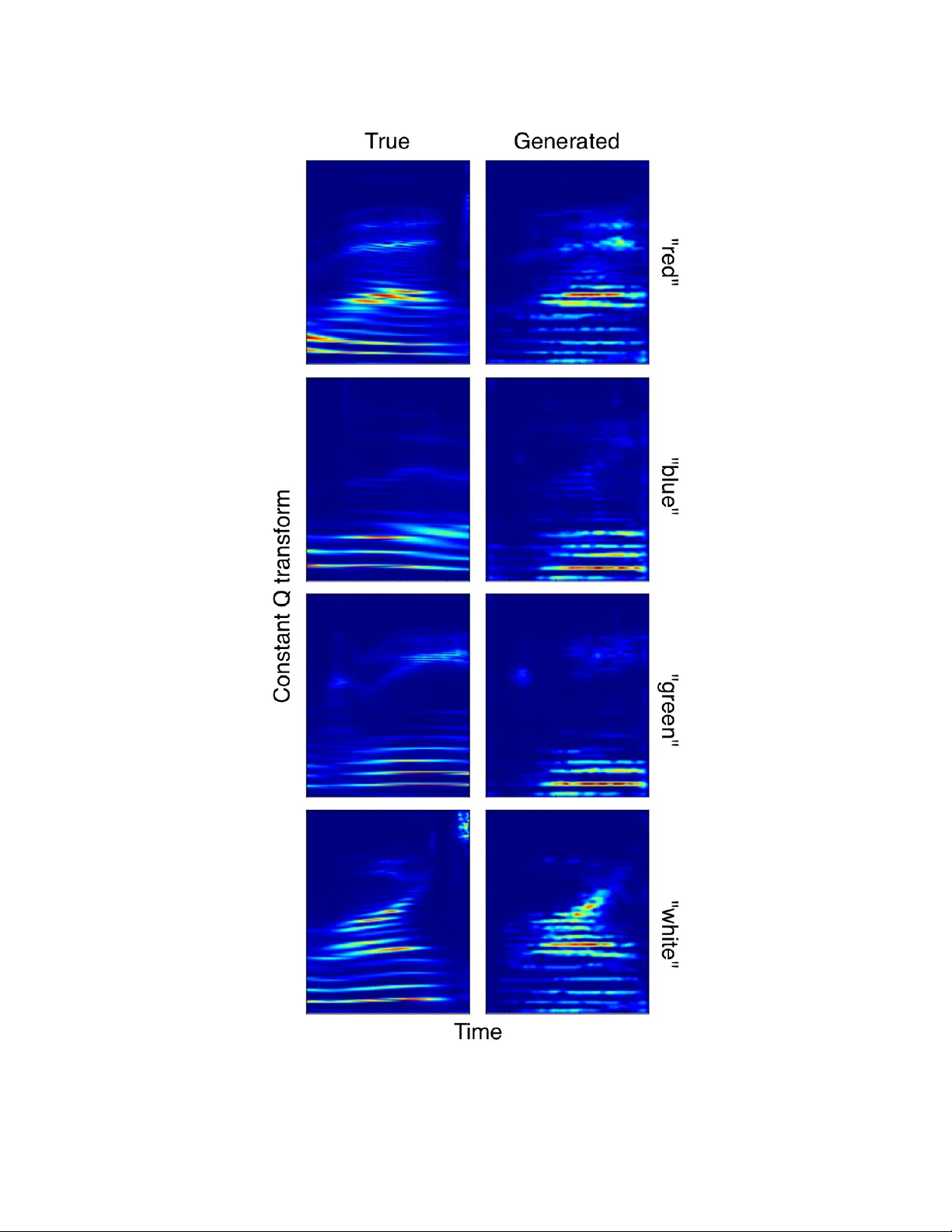

Shariq A. Mobin
Redwood Center for Theoretical Neuroscience, UC Berkeley
shariq.mobin@gmail.com

Joan Bruna
Department of Statistics, UC Berkeley
joan.bruna@gmail.com

Abstract

The human auditory system is able to distinguish the vocal source of thousands of speakers, yet not much is known about what features the auditory system uses to do this. Fourier transforms are capable of capturing the pitch and harmonic structure of the speaker, but this alone proves insufficient for identifying speakers uniquely. The remaining structure, often referred to as timbre, is critical to identifying speakers, but little is understood about it. In this paper we use recent advances in neural networks to transform the voice of one speaker into that of another by changing not only the pitch of the speaker but also the timbre. We review generative models built with neural networks as well as architectures for creating neural networks that learn analogies. Our preliminary results converting voices from one speaker to another are encouraging.

1 Introduction

When audiologists describe what makes one person's voice sound different from another's, they refer first to the pitch of the speakers and second to their timbre. While pitch is well described by the harmonic structure, timbre is described broadly as everything besides pitch and intensity. Sounds with identical pitches can often sound completely different. For example, we can tell the difference between a trumpet and a piano playing the same pitch because the timbre of each instrument gives rise to a different perception of the sound.

One can think about vocal signals as an entanglement of two factors: what the speaker is saying and who is saying it. The vocal signal is a non-stationary process, which makes disentangling these two factors very difficult.
In this paper we explore whether it is possible to hold one of these two factors constant and vary the other. That is, we examine whether it is possible to convert the speaker of a vocal signal while holding the spoken word constant. In [4] it was shown that, using auditory representations inspired by the brain, it was possible to interpolate sound along the timbre axis between a trumpet and a piano. However, that model was hand-engineered; in this work we seek to learn the transformational operator using neural networks.

2 Background

2.1 Constant-Q Transform

In theory, we could train our neural network with the raw audio waveform as input. However, the signal processing community typically works with some form of frequency analysis of the waveform, since this transformation makes the harmonic structure of the signal explicit. Here, we apply a constant-Q wavelet transformation (CQT) to the audio signal. This transformation has a number of desirable properties, the most important of which are:

1. The transformation uses logarithmic scaling in frequency. This is very useful when the sound waveform spans many octaves, as in the case of waveforms from the human vocal system.
2. The CQT has very high temporal resolution and low spectral resolution for high frequencies, whereas the converse is true for low frequencies. This is very similar to the transformation the basilar membrane of the cochlea performs on the sound waveform.

2.2 Deep Visual Analogy Making

Deep Visual Analogy Networks (VANs) [3] are a recent neural network architecture that has achieved impressive results rotating sprites in the image domain. The goal of the network is to make analogies: "A is to B as C is to D". That is, given A, B, and C as input we would like to predict D. An example would be: "groom is to bride as king is to queen". The approach taken by this model is to learn an embedding of the input such that solving these analogies is easy, e.g. linear:

$$\Phi(D) - \Phi(C) \approx \Phi(B) - \Phi(A)$$

This embedding is visualized in Figure 1. In practice the relationship does not have to be linear; it can be further approximated by more neural network layers, as in the case of our model. A visualization of the network can be seen in Figure 2.

Figure 1: By learning an embedding operator, Φ, we are able to linearize the analogy "A is to B as C is to D".

Figure 2: A visualization of the Convolutional Neural Network used in the Visual Analogy Network.

Here our objective function is:

$$E = \sum_{(a,b,c,d)} \frac{1}{2} \left\lVert d - g\big(\Phi(b) - \Phi(a) + \Phi(c)\big) \right\rVert^{2}$$

where g denotes the decoder network that maps an embedding back to the input space.

2.3 Generative Adversarial Networks

Generative adversarial networks (GANs) [2] are a recent neural network architecture that yields very good generative models. These networks have been used in the image domain to create very convincing images of a variety of objects [1]. The basic idea is to use one neural network as a generator and another neural network as a discriminator. The networks are adversarial in the sense that the generative model tries to imitate some true distribution, e.g. of images, while the discriminative network tries to classify samples as coming from the true distribution or from the generative (fake) distribution. This is illustrated in Figure 3.

Figure 3: A visualization of the Generative Adversarial Network idea.

The goal is then to solve a minimax problem, where β parameterizes the generator and θ the discriminator:

$$\min_{\beta} \max_{\theta} \Big[ \mathbb{E}_{x \sim p(a)} \log p_{\theta}(y = \text{real} \mid x) + \mathbb{E}_{x \sim p_{\beta}(a)} \log p_{\theta}(y = \text{fake} \mid x) \Big]$$

In practice, optimizing these networks is very difficult and relies on many tricks.

3 Model

Here, we combine the ideas from Deep Visual Analogy Networks (VANs) and Generative Adversarial Networks (GANs) to create a model capable of voice conversion. A VAN serves as our generative model in the GAN sense.
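
To make the generator's training signal concrete, the analogy objective of Section 2.2 can be sketched for a single tuple (a, b, c, d) as below. This is a minimal illustration in plain Python, not the authors' Torch implementation: `analogy_loss`, `toy_phi`, and `toy_g` are hypothetical names, and the toy encoder and decoder are simple scalings standing in for the convolutional networks.

```python
from typing import Callable, List, Sequence

Vector = Sequence[float]


def analogy_loss(
    a: Vector,
    b: Vector,
    c: Vector,
    d: Vector,
    phi: Callable[[Vector], List[float]],
    g: Callable[[Vector], List[float]],
) -> float:
    """Return 0.5 * ||d - g(phi(b) - phi(a) + phi(c))||^2 for one tuple."""
    ea, eb, ec = phi(a), phi(b), phi(c)
    # Apply the a -> b offset to c in embedding space.
    query = [y - x + z for x, y, z in zip(ea, eb, ec)]
    prediction = g(query)
    return 0.5 * sum((t - p) ** 2 for t, p in zip(d, prediction))


# Hypothetical stand-ins for the encoder and decoder: an invertible scaling.
def toy_phi(x: Vector) -> List[float]:
    return [2.0 * v for v in x]


def toy_g(z: Vector) -> List[float]:
    return [0.5 * v for v in z]


a = [0.0, 1.0]
b = [1.0, 1.0]
c = [0.0, 3.0]
d = [1.0, 3.0]  # chosen so that d - c equals b - a

loss_consistent = analogy_loss(a, b, c, d, toy_phi, toy_g)
loss_mismatch = analogy_loss(a, b, c, [0.0, 3.0], toy_phi, toy_g)
```

With these toy stand-ins, `loss_consistent` is 0.0 because the analogy holds exactly in the embedding space, while `loss_mismatch` is 0.5 because the first coordinate of the prediction is off by one. Summing this quantity over all training tuples gives the objective E.
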
The discriminator of our GAN is then implemented by a classifier that distinguishes not only real from fake CQT samples but also which speaker and word category the sample belongs to. This can be summarized by the new minimax equation:

$$\min_{\beta} \max_{\theta} \Bigg[ \sum_{(w,s)} \Big( \mathbb{E}_{x \sim p(a \mid W = w, S = s)} \log p_{\theta}(W = w, S = s \mid x) + \mathbb{E}_{x \sim p_{\beta}(a \mid W = w, S = s)} \log p_{\theta}(W = \text{fake}, S = \text{fake} \mid x) \Big) \Bigg]$$

Note that, in order to weight the classifier towards distinguishing fake words and speakers, we bias half of the samples in a batch to be from the generative model. The sampling is otherwise uniform over the speaker and word. Our code can be viewed at https://github.com/ShariqM/smcnn. The model parameters are found in models/cnn.lua.

3.1 Results

Our results can be seen in Figure 4. While the model is able to capture the harmonic structure of the speaker well, the frequency resolution is somewhat poor. This is likely an artifact of upsampling in the decoding phase. Note that this data is from the training set and there is only 1 speaker and 4 words in the data set. The audio samples can be heard at the following links:

• https://dl.dropboxusercontent.com/u/7518467/Bruna/model2/GAN_results/results_red.wav
• https://dl.dropboxusercontent.com/u/7518467/Bruna/model2/GAN_results/results_blue.wav
• https://dl.dropboxusercontent.com/u/7518467/Bruna/model2/GAN_results/results_green.wav
• https://dl.dropboxusercontent.com/u/7518467/Bruna/model2/GAN_results/results_white.wav

In each file, a sample from the true distribution comes first, followed by one from our generative model.

3.2 Conclusion

We began by developing algorithms to transfer the timbre of one speaker to another. Our algorithms were able to produce speech that occasionally sounded perceptually similar to the target speaker, but work remains to be done.
Training Generative Adversarial Networks has proven very difficult in practice, and more time will need to be spent understanding how best to optimize the Conditional Generative Adversarial Network model developed here.

Figure 4: The left column corresponds to samples from the training data of Speaker 2 saying the color indicated on the row. The right column corresponds to generated samples from our model of the same speaker and color.

References

[1] Emily L. Denton, Soumith Chintala, Rob Fergus, et al. Deep generative image models using a Laplacian pyramid of adversarial networks. In Advances in Neural Information Processing Systems, pages 1486–1494, 2015.
[2] Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. Generative adversarial nets. In Advances in Neural Information Processing Systems, pages 2672–2680, 2014.
[3] Scott E. Reed, Yi Zhang, Yuting Zhang, and Honglak Lee. Deep visual analogy-making. In Advances in Neural Information Processing Systems, pages 1252–1260, 2015.
[4] Dmitry N. Zotkin, Shihab A. Shamma, Powen Ru, Ramani Duraiswami, and Larry S. Davis. Pitch and timbre manipulations using cortical representation of sound. In Multimedia and Expo, 2003. ICME'03. Proceedings. 2003 International Conference on, volume 3, pages III–381. IEEE, 2003.
