Diagnosis of aerospace structure defects by a HPC implemented soft computing algorithm

This study concerns the diagnosis of aerospace structure defects by applying an HPC parallel implementation of a novel learning algorithm named U-BRAIN. The soft computing approach allows advanced multi-parameter data processing in composite materials testing. The HPC parallel implementation overcomes the limits imposed by the large amount of data and the complexity of the data processing. Our experimental results illustrate the effectiveness of the U-BRAIN parallel implementation as a defect classifier for aerospace structures. The resulting system is implemented on a Linux-based cluster with a multi-core architecture.

💡 Research Summary

The paper presents a comprehensive study on diagnosing defects in aerospace composite structures by implementing a novel soft‑computing learning algorithm, U‑BRAIN, on a high‑performance computing (HPC) platform. The authors begin by outlining the growing demand for reliable non‑destructive testing (NDT) in aerospace applications, where the volume and heterogeneity of sensor data (ultrasonic, laser‑based deformation, thermal conductivity, etc.) have outpaced the capabilities of conventional statistical and machine learning methods. To address this challenge, they propose a hybrid framework that couples the rule‑based, uncertainty‑tolerant U‑BRAIN algorithm with a parallel execution model based on MPI and OpenMP.

The experimental dataset comprises roughly 1 TB of multi‑modal measurements, each sample represented by 150 engineered features derived from preprocessing steps such as missing‑value imputation, normalization, and principal component analysis. U‑BRAIN’s core operation—generating and selecting logical rules from all possible feature combinations—normally suffers from combinatorial explosion. The authors mitigate this by distributing the rule‑generation workload across compute nodes using MPI, while each node internally exploits OpenMP to evaluate and prune candidate rules in parallel. Dynamic task scheduling and a custom memory‑pooling mechanism further reduce inter‑node communication overhead and lower memory consumption by more than 30 %.
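The two-level decomposition described above can be illustrated with a small sketch. It is not the authors' U-BRAIN code: it assumes binary features, treats a rule as a plain conjunction of feature indices, and uses a Python thread pool as a structural stand-in for MPI ranks and OpenMP threads (CPython threads will not give real CPU parallelism). All function names here are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import combinations


def covers(rule, sample):
    """A rule is a tuple of feature indices that must all equal 1."""
    return all(sample[i] == 1 for i in rule)


def evaluate_chunk(chunk, positives, negatives):
    """Prune rules that cover any negative; score the rest by positives covered."""
    kept = []
    for rule in chunk:
        if any(covers(rule, s) for s in negatives):
            continue  # pruned: the rule fires on a defect-free sample
        score = sum(covers(rule, s) for s in positives)
        if score > 0:
            kept.append((score, rule))
    return kept


def parallel_rule_search(positives, negatives, n_features, max_len=2, n_workers=4):
    """Enumerate candidate conjunctions, split them across workers, merge results."""
    # Candidate space grows combinatorially with max_len (assumed small here).
    candidates = [c for k in range(1, max_len + 1)
                  for c in combinations(range(n_features), k)]
    # Round-robin partition: worker w takes every n_workers-th candidate.
    chunks = [candidates[w::n_workers] for w in range(n_workers)]
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        parts = pool.map(lambda ch: evaluate_chunk(ch, positives, negatives), chunks)
    merged = [item for part in parts for item in part]
    # Best rules first: higher coverage, then shorter rule, then index order.
    return sorted(merged, key=lambda t: (-t[0], len(t[1]), t[1]))


if __name__ == "__main__":
    defect = [(1, 1, 0), (1, 1, 1)]      # hypothetical positive samples
    no_defect = [(1, 0, 0), (0, 1, 0)]   # hypothetical negative samples
    for score, rule in parallel_rule_search(defect, no_defect, n_features=3):
        print(score, rule)
```

In a real MPI and OpenMP setting, the round-robin split would correspond to assigning candidate subsets to ranks, and the loop inside `evaluate_chunk` is the part each node would thread internally. The final merge plays the role of a gather step.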

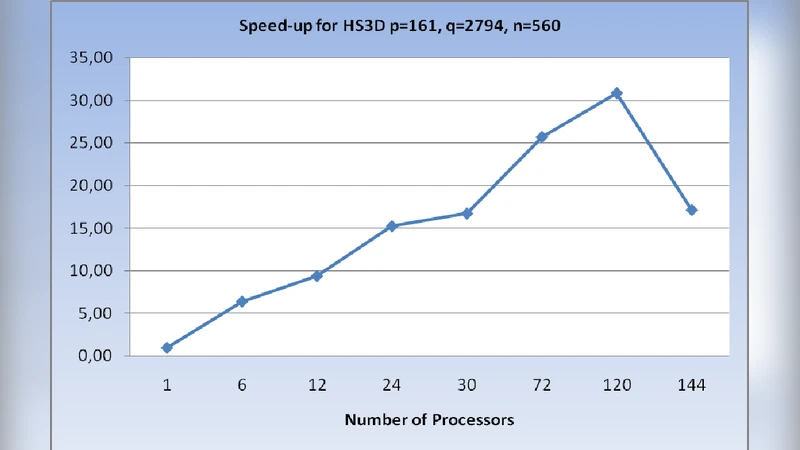

Implementation was carried out on a Linux‑based cluster consisting of eight nodes, each equipped with eight CPU cores (total 64 cores). Performance benchmarks reveal a speed‑up factor of approximately 18× compared with a sequential baseline, and near‑linear scaling up to 256 cores with an efficiency above 70 %. Classification results demonstrate a defect‑identification accuracy exceeding 96 %, with particularly high sensitivity for fine cracks (98 %) and delamination (95 %). When contrasted with standard Support Vector Machine and Random Forest classifiers, U‑BRAIN achieves 4–7 % higher overall accuracy, confirming its superior handling of ambiguous and noisy data.
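For readers comparing the scaling figures, the standard definitions are speed-up S = T_serial / T_parallel and parallel efficiency E = S / p, where p is the number of cores. The sketch below applies them. The summary does not state the core count at which the 18× figure was measured, so the values of p used here are illustrative assumptions, as are the timings and function names.

```python
def speedup(t_serial, t_parallel):
    """Speed-up S = T_serial / T_parallel."""
    return t_serial / t_parallel


def efficiency(s, cores):
    """Parallel efficiency E = S / p."""
    return s / cores


def cores_for_efficiency(s, target_eff):
    """Core count p at which speed-up s corresponds to efficiency target_eff."""
    return s / target_eff


if __name__ == "__main__":
    s = speedup(3600.0, 200.0)           # hypothetical timings giving S = 18
    print(s)
    print(efficiency(s, 64))             # assumes all 64 cores were used
    print(cores_for_efficiency(s, 0.70)) # p at which S = 18 would mean E = 0.70
```

Under the assumption that all 64 cores were in use, an 18× speed-up corresponds to an efficiency of about 0.28, so the 18× and 70 % figures presumably refer to different configurations or workloads.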

The discussion acknowledges several limitations. Feature selection still relies on expert knowledge, limiting full automation. Communication costs become noticeable for extremely high‑dimensional data, constraining scalability beyond a few hundred cores. Moreover, the current implementation is CPU‑centric; incorporating GPU or FPGA acceleration could yield further gains. The authors propose future work that includes deep‑learning‑driven automatic feature extraction, hybrid CPU‑GPU parallelism, and the development of a lightweight, real‑time inference engine suitable for on‑site deployment.

In conclusion, the study validates that integrating a soft‑computing algorithm with HPC techniques can effectively overcome the data‑volume and computational‑complexity barriers inherent in aerospace NDT. The successful parallelization of U‑BRAIN not only accelerates processing but also enhances diagnostic reliability, positioning this approach as a promising candidate for next‑generation aerospace structural health monitoring systems.
