On the Expressive Power of Deep Learning: A Tensor Analysis

It has long been conjectured that hypothesis spaces suitable for data that is compositional in nature, such as text or images, may be more efficiently represented with deep hierarchical networks than with shallow ones. Despite the vast empirical evid…

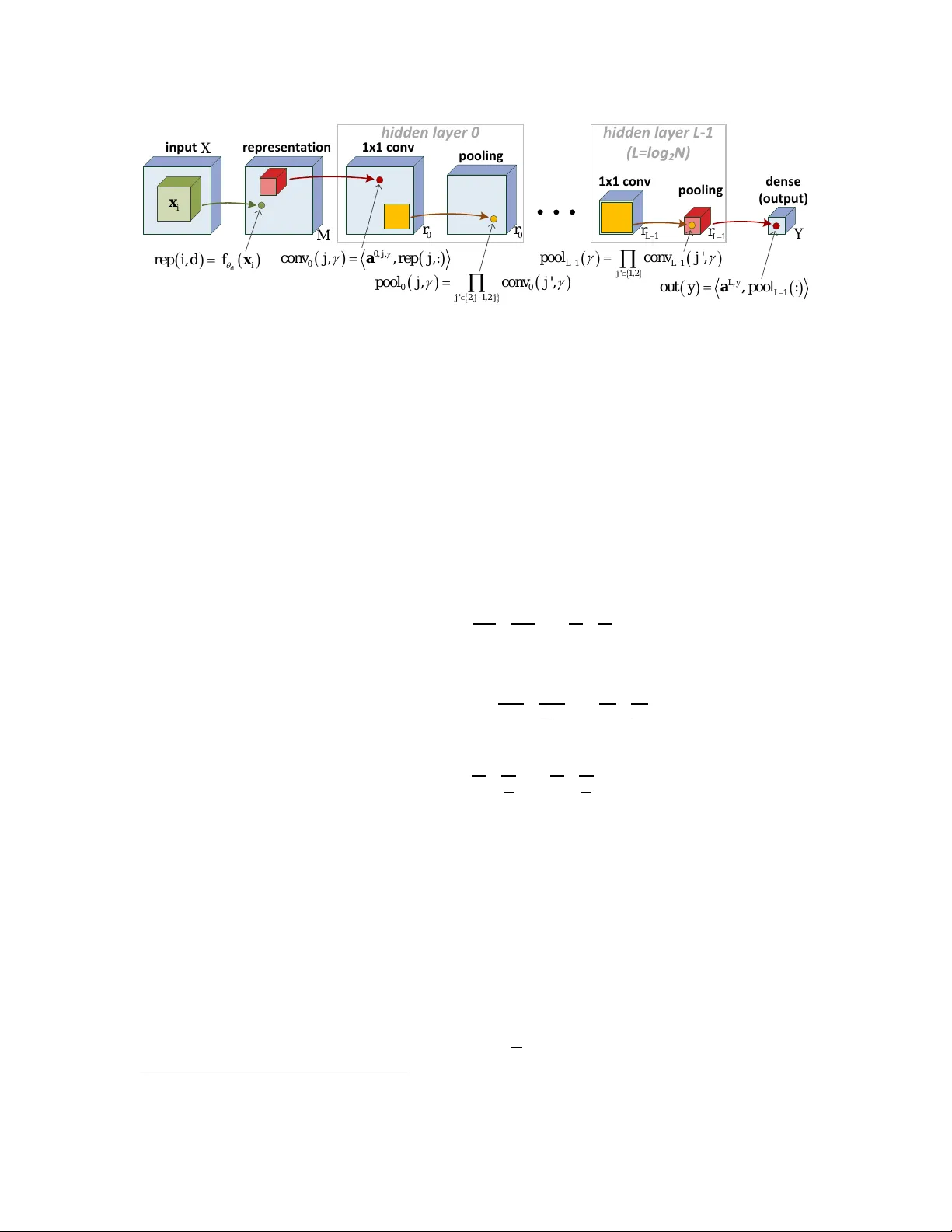

Authors: Nadav Cohen, Or Sharir, Amnon Shashua