Big Data Analytics in Cloud environment using Hadoop

Big Data management is currently a major problem. Big Data is growing very rapidly, and its varied characteristics make it difficult to manage. This manuscript focuses on Big Data analytics in a cloud environment using Hadoop. We classify Big Data according to four characteristics: Volume, Value, Variety, and Velocity, and we assign separate nodes to process the data based on these characteristics. The input data are classified and routed to the appropriate processing node. Finally, the outputs of all nodes are combined to produce the final result. Hadoop is used both to partition the data and to process it.
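To make the "partition and process" step concrete, here is a minimal single-process sketch of the MapReduce pattern the abstract relies on. The partition function uses the same formula as Hadoop's default `HashPartitioner`, `(key.hashCode() & Integer.MAX_VALUE) % numReduceTasks`, with a Python re-implementation of a Java-style `String.hashCode()`. This is an assumption-laden illustration, not the authors' code: real Hadoop runs map and reduce tasks on separate nodes, and partition numbers depend on the key class's own `hashCode()`, so assignments for Hadoop `Text` keys may differ. The log format, `status_mapper`, and `sum_reducer` are hypothetical.

```python
from collections import defaultdict
from typing import Callable, Dict, Iterable, Iterator, List, Tuple

def java_string_hash(s: str) -> int:
    """32-bit polynomial hash in the style of Java's String.hashCode() (BMP characters only)."""
    h = 0
    for ch in s:
        h = (31 * h + ord(ch)) & 0xFFFFFFFF
    # Reinterpret the unsigned 32-bit value as a signed Java int.
    return h - (1 << 32) if h >= (1 << 31) else h

def hash_partition(key: str, num_reducers: int) -> int:
    # Same formula as Hadoop's HashPartitioner: clear the sign bit, then take the modulo.
    return (java_string_hash(key) & 0x7FFFFFFF) % num_reducers

Mapper = Callable[[str], Iterator[Tuple[str, int]]]
Reducer = Callable[[str, List[int]], int]

def run_mapreduce(records: Iterable[str], mapper: Mapper, reducer: Reducer,
                  num_reducers: int = 4) -> Dict[str, int]:
    # Map + shuffle: route every emitted (key, value) pair to its reducer partition.
    partitions: List[Dict[str, List[int]]] = [defaultdict(list) for _ in range(num_reducers)]
    for record in records:
        for key, value in mapper(record):
            partitions[hash_partition(key, num_reducers)][key].append(value)
    # Reduce: each partition is processed independently, keys in sorted order.
    output: Dict[str, int] = {}
    for part in partitions:
        for key in sorted(part):
            output[key] = reducer(key, part[key])
    return output

# Hypothetical job: count HTTP status codes in "ip status path" log lines.
def status_mapper(line: str) -> Iterator[Tuple[str, int]]:
    fields = line.split()
    if len(fields) >= 2:  # skip malformed lines
        yield fields[1], 1

def sum_reducer(key: str, values: List[int]) -> int:
    return sum(values)

logs = ["10.0.0.1 200 /index", "10.0.0.2 404 /missing", "10.0.0.3 200 /home", "bad"]
counts = run_mapreduce(logs, status_mapper, sum_reducer)
```

Because every occurrence of a key hashes to the same partition, one reducer sees all values for that key, which is what lets the reduce phase run in parallel without coordination.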

💡 Research Summary

The paper addresses the growing challenge of managing and analyzing massive data sets by proposing a cloud‑based framework that leverages Hadoop for distributed storage and processing. The authors adopt the well‑known “4V” model—Volume, Value, Variety, and Velocity—to classify incoming data and route it to specialized processing nodes, each optimized for a particular characteristic.

In the first stage, a classification module examines metadata, sample content, and predefined rules to decide whether a data item belongs to the high‑volume batch category, requires low‑latency handling, exhibits heterogeneous formats, or is intended for value‑driven analytics. Once classified, the data are dispatched to one of four logical nodes:

- Volume Node – Stores data in HDFS and runs conventional MapReduce jobs for large‑scale batch analytics.

- Velocity Node – Intended for high‑speed streams; the authors mention the possibility of integrating Spark Streaming or Flink on YARN, but the actual implementation reverts to batch MapReduce, limiting true real‑time capability.

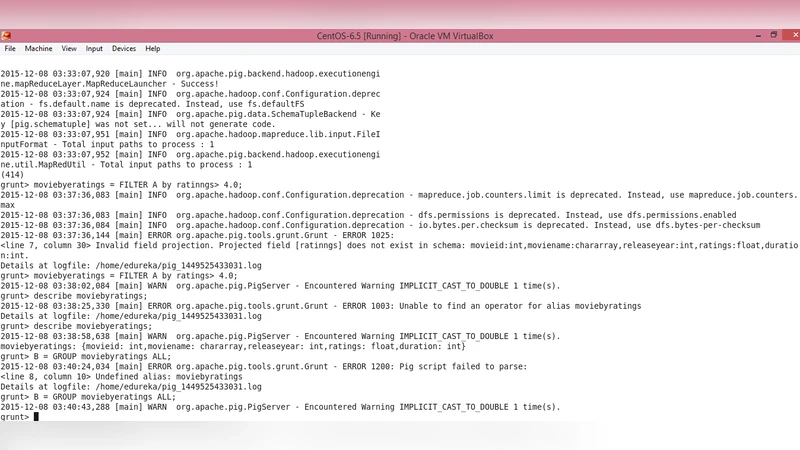

- Variety Node – Handles mixed data formats (structured relational tables, semi‑structured JSON/XML, unstructured text) using a combination of Hive, Pig, and custom parsers.

- Value Node – Focuses on business‑oriented processing, applying machine‑learning models or domain‑specific algorithms and attaching weight metadata to the results.
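The classification-and-dispatch step can be sketched as a small rule-based router. The summary does not give the authors' actual rules, so the metadata fields (`size_bytes`, `arrival_rate_per_s`, `fmt`, `business_priority`), the thresholds, and the rule precedence below are hypothetical choices made only to illustrate routing by the 4V characteristics.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List

class Node(Enum):
    VOLUME = "volume"
    VELOCITY = "velocity"
    VARIETY = "variety"
    VALUE = "value"

@dataclass
class DataItem:
    # Hypothetical metadata a classifier might inspect.
    name: str
    size_bytes: int
    arrival_rate_per_s: float  # records per second; 0 for static files
    fmt: str                   # e.g. "csv", "json", "xml", "text"
    business_priority: bool = False

# Hypothetical thresholds, for illustration only.
STREAM_RATE_THRESHOLD = 100.0    # records/s
LARGE_BATCH_THRESHOLD = 1 << 40  # 1 TiB
STRUCTURED_FORMATS = {"csv", "parquet", "orc"}

def classify(item: DataItem) -> Node:
    """Pick one logical node; earlier rules take precedence."""
    if item.arrival_rate_per_s >= STREAM_RATE_THRESHOLD:
        return Node.VELOCITY  # latency-sensitive streams first
    if item.business_priority:
        return Node.VALUE     # flagged for value-driven analytics
    if item.size_bytes >= LARGE_BATCH_THRESHOLD:
        return Node.VOLUME    # very large inputs go to batch MapReduce
    if item.fmt not in STRUCTURED_FORMATS:
        return Node.VARIETY   # semi-structured or unstructured input
    return Node.VOLUME        # remaining structured data: batch analytics

def route(items: List[DataItem]) -> Dict[Node, List[str]]:
    # Build one dispatch queue per node and append each item's name to its queue.
    queues: Dict[Node, List[str]] = {node: [] for node in Node}
    for item in items:
        queues[classify(item)].append(item.name)
    return queues

items = [
    DataItem("web-logs.txt", 10 * (1 << 40), 0.0, "text"),
    DataItem("sensor-feed", 0, 1500.0, "json"),
    DataItem("reviews.xml", 2 << 30, 0.0, "xml"),
    DataItem("sales.csv", 5 << 30, 0.0, "csv", business_priority=True),
]
queues = route(items)
```

Single-label routing keeps each item on exactly one node, which simplifies aggregation; an item that is both large and streaming, for example, is handled only by the Velocity rule here, and a real design would have to decide how such overlaps are resolved.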

After node‑level processing, a central aggregation module collects intermediate outputs. This module performs result validation, duplicate elimination, weight‑based aggregation, and final report generation. The aggregated results are exposed through dashboards or APIs for downstream decision‑making.
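The aggregation steps listed above (validation, duplicate elimination, weight-based aggregation) might look like the following sketch. The record shape `(record_id, metric, value, weight)`, the validation rule, and the choice of a weighted mean are hypothetical; the summary does not specify how the authors define or combine weights.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple

# Hypothetical partial result emitted by a node: (record_id, metric, value, weight).
Result = Tuple[str, str, float, float]

def is_valid(r: Result) -> bool:
    # Validation: reject non-finite values and non-positive weights.
    _, _, value, weight = r
    return math.isfinite(value) and math.isfinite(weight) and weight > 0

def aggregate(node_outputs: Iterable[List[Result]]) -> Dict[str, float]:
    seen: Set[Tuple[str, str]] = set()
    weighted_sum: Dict[str, float] = defaultdict(float)
    total_weight: Dict[str, float] = defaultdict(float)
    for output in node_outputs:
        for r in output:
            record_id, metric, value, weight = r
            # Drop invalid rows and rows already reported by an earlier node (first one wins).
            if not is_valid(r) or (record_id, metric) in seen:
                continue
            seen.add((record_id, metric))
            weighted_sum[metric] += value * weight
            total_weight[metric] += weight
    # Weighted mean per metric.
    return {m: weighted_sum[m] / total_weight[m] for m in weighted_sum}

volume_out = [("r1", "score", 120.0, 3.0), ("r2", "score", 80.0, 1.0)]
velocity_out = [("r2", "score", 80.0, 1.0), ("r3", "score", float("nan"), 1.0)]
report = aggregate([volume_out, velocity_out])  # (120*3 + 80*1) / 4 = 110.0
```

Deduplicating on `(record_id, metric)` assumes records carry a globally unique identifier across nodes; without one, duplicate elimination during aggregation is not well defined.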

The experimental setup consists of a modest Hadoop cluster (four nodes, each with 8 CPU cores, 32 GB RAM, and 4 TB HDD). Test data include a 10 TB log corpus (batch), a 5 GB/hour sensor stream (velocity), and 2 TB of mixed‑format files (variety). The authors report per‑node processing times and overall pipeline latency, but they do not provide a direct comparison with a traditional single‑cluster Hadoop deployment, nor do they quantify network overhead, cost efficiency, or scalability under increased load.
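A quick capacity check on the reported setup is informative. It assumes HDFS's default replication factor of 3 (`dfs.replication`), decimal units, and no compression. The summary states neither the replication factor nor whether compression was used, and the figures ignore space reserved for non-DFS use and intermediate MapReduce output.

```latex
\begin{aligned}
C_{\text{raw}} &= 4 \times 4\ \text{TB} = 16\ \text{TB} \\
C_{\text{usable}} &= \frac{C_{\text{raw}}}{r} = \frac{16\ \text{TB}}{3} \approx 5.3\ \text{TB} \;<\; 10\ \text{TB (log corpus)} \\
\lambda_{\text{stream}} &= \frac{5\ \text{GB}}{3600\ \text{s}} \approx 1.39\ \text{MB/s},
\qquad 24\ \text{h} \times 5\ \text{GB/h} = 120\ \text{GB/day}\ \ (360\ \text{GB/day at } r = 3)
\end{aligned}
```

Under these assumptions the 10 TB corpus would not fit in HDFS at default replication, so the experiment must have used reduced replication, compression, or only part of the corpus at a time; the summary does not say which.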

In the discussion, the authors claim that the 4V‑driven node segregation simplifies big‑data management and improves resource utilization. They acknowledge that the current prototype is preliminary and outline future work: deeper integration of true streaming engines, richer machine‑learning pipelines, automated scheduling, and dynamic resource allocation.

Critical assessment reveals several strengths and weaknesses. The conceptual division of workloads by 4V characteristics is intuitive and aligns with industry practices of separating batch and streaming workloads. However, the lack of concrete implementation details—especially for the Velocity node—means the framework does not yet deliver on low‑latency promises. The Variety node’s reliance on Hive and Pig without schema‑evolution handling may struggle with rapidly changing data formats. The Value node’s description is vague; there is no discussion of model training, evaluation, or how business value is quantified.
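One way to close the schema-evolution gap is to normalize every incoming record to a canonical schema before it reaches Hive or Pig. The sketch below is a hypothetical illustration: the field names, alias table, and defaults are invented. Production systems more often rely on a schema registry or on self-describing formats such as Avro, whose schema-resolution rules handle added fields (via defaults), removed fields, and renamed fields (via aliases).

```python
import copy
import json
from typing import Any, Dict

# Hypothetical canonical schema: field name -> default used when a producer omits it.
CANONICAL_DEFAULTS: Dict[str, Any] = {
    "device_id": None, "timestamp": None, "temperature_c": None, "tags": [],
}
# Hypothetical aliases for fields renamed across producer versions.
ALIASES: Dict[str, str] = {"deviceId": "device_id", "ts": "timestamp", "temp": "temperature_c"}

def normalize(raw: Dict[str, Any]) -> Dict[str, Any]:
    """Map one record onto the canonical schema, tolerating renamed, missing, and extra fields."""
    record = copy.deepcopy(CANONICAL_DEFAULTS)  # fresh defaults, no shared mutable lists
    extras: Dict[str, Any] = {}
    for key, value in raw.items():
        canonical = ALIASES.get(key, key)
        if canonical in CANONICAL_DEFAULTS:
            record[canonical] = value
        else:
            extras[key] = value  # keep unknown fields instead of failing the load
    record["_extras"] = extras
    return record

old_style = json.loads('{"deviceId": "s-17", "ts": 1700000000, "temp": 21.5}')
new_style = json.loads('{"device_id": "s-18", "timestamp": 1700000060, "humidity": 40}')
a = normalize(old_style)
b = normalize(new_style)
```

Keeping unknown fields in `_extras` rather than discarding them lets a later schema version promote them to first-class columns without re-ingesting the raw data.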

Performance evaluation is another weak point. Without baseline benchmarks, it is impossible to judge whether the multi‑node approach yields measurable gains in throughput, latency, or cost. Moreover, the paper does not address fault tolerance across nodes, data consistency during aggregation, or security considerations in a multi‑tenant cloud environment.
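A baseline comparison needs only a few summary statistics per configuration. This hypothetical helper computes throughput (input size over mean job time), median and 95th-percentile job latency, and median speedup over a baseline from repeated wall-clock measurements. The timings in the usage lines are placeholders, not results from the paper.

```python
import statistics
from dataclasses import dataclass
from typing import List

@dataclass
class RunSummary:
    throughput_gb_per_s: float
    p50_s: float
    p95_s: float

def summarize(data_gb: float, latencies_s: List[float]) -> RunSummary:
    """Summarize repeated runs (at least two) that each process `data_gb` of input."""
    # n=20 yields 19 cut points: index 9 is the median, index 18 the 95th percentile.
    q = statistics.quantiles(latencies_s, n=20, method="inclusive")
    mean_s = statistics.fmean(latencies_s)
    return RunSummary(data_gb / mean_s, q[9], q[18])

def speedup(baseline: RunSummary, candidate: RunSummary) -> float:
    # Ratio of median latencies; > 1.0 means the candidate is faster.
    return baseline.p50_s / candidate.p50_s

# Placeholder timings in seconds, for illustration only.
baseline = summarize(100.0, [20, 22, 24, 26, 28])
candidate = summarize(100.0, [10, 12, 14, 16, 18])
```

Reporting tail latency alongside the median matters for the Velocity node in particular, since a framework can improve average throughput while still missing low-latency targets.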

Overall, the paper presents an interesting high‑level architecture that maps the 4V model onto a Hadoop‑centric processing pipeline. To become a compelling contribution, future versions should (1) implement genuine streaming processing for Velocity, (2) provide detailed schema‑management strategies for Variety, (3) define clear value‑extraction workflows with measurable KPIs, and (4) conduct rigorous, quantitative experiments comparing the proposed system against established big‑data platforms. Such enhancements would substantiate the claim that a 4V‑aware, cloud‑native Hadoop framework can effectively tame the complexities of modern big‑data analytics.
