Reproducible Experiments for Comparing Apache Flink and Apache Spark on Public Clouds

Big data processing is a hot topic in today’s computer science world. There is a significant demand for analysing big data to satisfy many requirements of many industries. Emergence of the Kappa architecture created a strong requirement for a highly capable and efficient data processing engine. Therefore data processing engines such as Apache Flink and Apache Spark emerged in open source world to fulfil that efficient and high performing data processing requirement. There are many available benchmarks to evaluate those two data processing engines. But complex deployment patterns and dependencies make those benchmarks very difficult to reproduce by our own. This project has two main goals. They are making few of community accepted benchmarks easily reproducible on cloud and validate the performance claimed by those studies.

💡 Research Summary

The paper presents a systematic approach to reproducibly compare the performance of Apache Flink and Apache Spark on public cloud infrastructures, using the Karamel orchestration engine to automate deployment and experiment execution. Recognizing that existing benchmarks for these data‑processing engines suffer from complex deployment procedures and hidden dependencies, the authors aim to (1) make several community‑accepted benchmarks easily reproducible on cloud platforms, and (2) validate the performance claims made in prior studies.

The authors first introduce Karamel, a web‑based tool that combines a domain‑specific language (DSL) for describing cluster configurations with Chef cookbooks that encapsulate software installation steps. Karamel builds an execution directed‑acyclic graph (DAG) from declared dependencies, allowing parallel provisioning of nodes and sequential execution of benchmark phases. The tool supports multiple cloud providers (e.g., AWS EC2, Google Cloud) and can be driven through a graphical UI or a plain‑text DSL file, making it accessible to both novice and expert users.

Two categories of benchmarks are integrated: a batch benchmark (Terasort) and two streaming benchmarks (Yahoo Streaming and Intel HiBench Streaming). For the batch workload, the authors use Hadoop’s Teragen to generate 200 GB, 400 GB, and 600 GB of synthetic data on an HDFS cluster. The generated data serve as input for Flink‑based and Spark‑based Terasort implementations originally adapted from Dongwong Kim’s code. For streaming, Yahoo Streaming simulates an advertising campaign, producing continuous events via Clojure scripts, while HiBench Streaming provides a suite of seven micro‑benchmarks (e.g., word count, grep). Both streaming suites require auxiliary Kafka and Zookeeper clusters; the authors also develop Flink equivalents for HiBench’s micro‑benchmarks, which were originally only available for Spark and Storm.

The experimental environment consists of Amazon EC2 spot instances to reduce cost. Master nodes run on m3.xlarge instances (4 vCPU, 16 GB RAM), and worker nodes on i2.4xlarge instances (16 vCPU, 122 GB RAM). The authors allocate substantial memory to each engine to reflect their in‑memory processing nature: Spark is configured with 100 GB executor memory and 8 GB driver memory; Flink receives 100 GB task‑manager heap and 8 GB job‑manager heap. These settings are exposed in the Karamel DSL and can be altered via the UI without editing code.

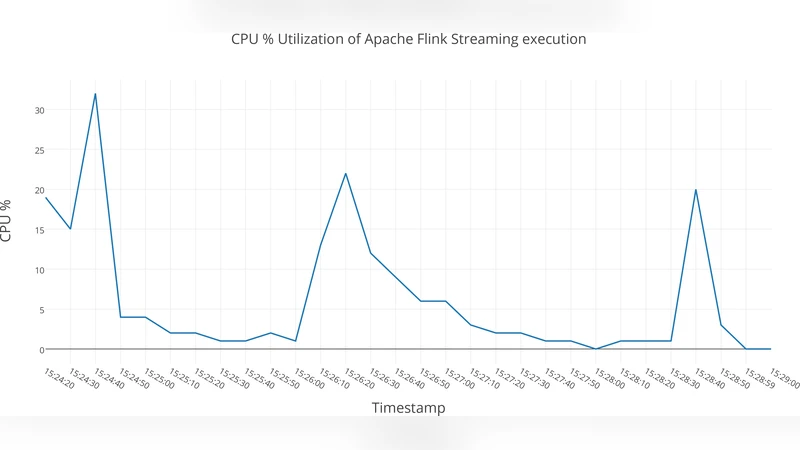

Performance data are collected at two levels. Application‑level metrics (execution time, latency, throughput) are obtained directly from the benchmark programs. System‑level metrics (CPU frequency and load, memory usage, network traffic, disk I/O) are gathered using the lightweight tool collectl, which the authors wrap in a custom “collectl‑monitoring” repository for automated start‑stop and report generation. Four reports (CPU, memory, network, disk) are produced for each run.

Results for the batch Terasort show near‑linear scaling of execution time with data size for both engines. With the generous memory allocations, Flink marginally outperforms Spark on the largest dataset (600 GB), suggesting that Flink’s task scheduling may be slightly more efficient under these conditions. In the streaming experiments, Spark achieves higher throughput in the Yahoo advertising scenario, whereas Flink maintains lower and more stable latency across varying event rates. The HiBench streaming micro‑benchmarks confirm similar trends, with Spark generally faster on compute‑heavy tasks and Flink excelling on latency‑sensitive workloads.

The study demonstrates that Karamel dramatically simplifies the reproducibility of complex big‑data experiments: a single configuration file defines the entire stack (Hadoop for data generation, Spark, Flink, Kafka, Zookeeper, Redis, etc.), and the same file can be reused across clouds or modified to explore different parameter spaces. All cookbooks and DSL files are publicly released on GitHub, enabling other researchers to replicate the experiments or extend them with new workloads, cluster sizes, or cloud providers.

Limitations acknowledged by the authors include reliance on spot instances, which can be terminated unexpectedly, potentially affecting result consistency; a focus on memory‑intensive workloads, leaving I/O‑bound or mixed workloads unexamined; and the fact that the streaming benchmarks, while realistic, still abstract away many production‑level concerns such as multi‑tenant interference and fault tolerance under node failures. Future work is suggested to broaden the benchmark suite, test on additional cloud platforms, and incorporate more diverse workload characteristics.

In conclusion, the paper provides a valuable, open‑source framework for reproducible performance evaluation of Flink and Spark on public clouds, offering detailed methodology, automated tooling, and empirical insights that can serve both the research community and industry practitioners seeking to make informed decisions about big‑data processing engines.

Comments & Academic Discussion

Loading comments...

Leave a Comment