Determination of signal-to-noise ratio on the base of information-entropic analysis

In this paper we propose a new algorithm for determining the signal-to-noise ratio (SNR). SNR is a quantitative measure widely used in science and engineering. Methods for determining SNR generally rely on an experimentally measured noise power level, or on some conditional noise criterion specified for the signal processing task. In the present work we describe a method for determining the SNR of chaotic and stochastic signals when the power levels of the signal and the noise are unknown. To this end we define information as the difference between unconditional and conditional entropy. Our theoretical results are confirmed by the analysis of signals described by nonlinear maps and of signals formed by superposing harmonic and stochastic components.

💡 Research Summary

The paper introduces a novel algorithm for estimating the signal‑to‑noise ratio (SNR) that does not rely on explicit knowledge of signal or noise power. Traditional SNR estimation methods either require a separate measurement of noise power or depend on a predefined noise criterion, which limits their applicability to chaotic, stochastic, or otherwise non‑linear signals where power levels are ambiguous. To overcome this, the authors adopt an information‑theoretic viewpoint: they define “information” as the difference between unconditional entropy H(X) of the observed composite signal X and the conditional entropy H(X|Y) given a model‑based estimate Y of the underlying clean signal. This entropy difference ΔH = H(X) – H(X|Y) quantifies how much structure the signal contributes beyond the randomness attributed to noise.
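As a minimal sketch of the quantity ΔH = H(X) – H(X|Y), the following estimates both entropies from paired sequences of discrete symbols, using the identity H(X|Y) = H(X, Y) – H(Y). The function names are illustrative and not taken from the paper, and the plug-in (frequency-count) estimator is an assumption.

```python
import math
from collections import Counter


def entropy(symbols):
    """Plug-in Shannon entropy in bits of a sequence of hashable symbols."""
    n = len(symbols)
    return -sum((c / n) * math.log2(c / n) for c in Counter(symbols).values())


def conditional_entropy(x, y):
    """H(X|Y) = H(X, Y) - H(Y), estimated from paired samples."""
    return entropy(list(zip(x, y))) - entropy(list(y))


def information(x, y):
    """Delta H = H(X) - H(X|Y): the uncertainty about X removed by knowing Y."""
    return entropy(list(x)) - conditional_entropy(x, y)
```

When Y reproduces X exactly, H(X|Y) vanishes and ΔH equals H(X); when Y carries no information about X, ΔH is zero.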

Mathematically, the composite signal s(t) = u(t) + n(t), where u(t) is the underlying signal and n(t) the noise, is discretized and its empirical probability distribution p(s) is obtained via a histogram or kernel density estimate. Here s plays the role of X and the predicted signal ŭ the role of Y in the definition of ΔH. The unconditional entropy H(s) = –∑p(s)log p(s) captures total uncertainty, while the conditional entropy H(s|ŭ) is computed using the predicted signal ŭ(t) generated by a non‑linear map (e.g., logistic, T‑bifurcation) or another deterministic model. The prediction error is treated as noise, allowing the conditional distribution p(s|ŭ) to be formed and its entropy evaluated. The resulting ΔH is then mapped to a decibel‑scale SNR through a scaling relation such as SNR = 10·log10(ΔH/σ_n²), where σ_n² is an estimate of the noise variance obtained from the residuals. This mapping is intended to keep the result comparable with the conventional SNR definition while preserving the advantages of an entropy‑based metric.
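The histogram route to H(s) and the example decibel mapping can be sketched as follows. This is an illustration under stated assumptions: equal-width bins, a default of 16 bins, and the helper names are choices made here, and the mapping is only the example relation quoted above, not a confirmed calibration from the paper.

```python
import math
from collections import Counter


def discretize(signal, n_bins=16):
    """Map each sample to an equal-width bin index in [0, n_bins - 1]."""
    lo, hi = min(signal), max(signal)
    width = (hi - lo) / n_bins or 1.0  # fall back to 1.0 for a constant signal
    return [min(int((v - lo) / width), n_bins - 1) for v in signal]


def histogram_entropy(signal, n_bins=16):
    """H(s) = -sum p(s) log2 p(s), with p(s) taken from bin frequencies."""
    n = len(signal)
    counts = Counter(discretize(signal, n_bins))
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def entropy_snr_db(delta_h, noise_var):
    """Example mapping from the text: 10 * log10(delta_H / sigma_n^2)."""
    if delta_h <= 0 or noise_var <= 0:
        raise ValueError("delta_h and noise_var must both be positive")
    return 10.0 * math.log10(delta_h / noise_var)
```

Entropy is reported in bits here; a different logarithm base only rescales ΔH by a constant factor.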

The algorithm proceeds in four steps: (1) segment the input into fixed‑length windows and build empirical PDFs for each segment; (2) generate a signal estimate ŭ(t) using a chosen non‑linear dynamical model and compute the residuals; (3) calculate H(s) and H(s|ŭ) for each window, yielding ΔH; (4) apply the calibrated scaling function to convert ΔH into an SNR value expressed in dB.
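The four steps above can be sketched as a small sliding-window routine. Everything specific here is an assumption made for illustration: the one-step logistic predictor with r = 3.9, the 8 equal-width bins, the window and hop lengths, and the optional `scale` callback standing in for the calibrated scaling function, which the summary does not specify.

```python
import math
import random
from collections import Counter


def logistic_series(n, r=3.9, x0=0.3):
    """Generate n samples of the logistic map x -> r * x * (1 - x)."""
    xs, x = [], x0
    for _ in range(n):
        xs.append(x)
        x = r * x * (1.0 - x)
    return xs


def _bins(values, n_bins):
    lo, hi = min(values), max(values)
    width = (hi - lo) / n_bins or 1.0
    return [min(int((v - lo) / width), n_bins - 1) for v in values]


def _entropy(symbols):
    n = len(symbols)
    return -sum((c / n) * math.log2(c / n) for c in Counter(symbols).values())


def window_delta_h(window, r=3.9, n_bins=8):
    """Steps 2-3 for one window: predict, form residuals, return (delta_H, residual variance)."""
    predicted = [r * x * (1.0 - x) for x in window[:-1]]  # assumed one-step model
    observed = window[1:]
    residuals = [o - p for o, p in zip(observed, predicted)]
    s_bins, u_bins = _bins(observed, n_bins), _bins(predicted, n_bins)
    h_s = _entropy(s_bins)
    h_s_given_u = _entropy(list(zip(s_bins, u_bins))) - _entropy(u_bins)
    mean = sum(residuals) / len(residuals)
    var = sum((e - mean) ** 2 for e in residuals) / len(residuals)
    return h_s - h_s_given_u, var


def sliding_estimates(signal, window_len=256, hop=128, scale=None):
    """Steps 1 and 4: slide a window and optionally apply a scaling function."""
    out = []
    for start in range(0, len(signal) - window_len + 1, hop):
        delta_h, var = window_delta_h(signal[start:start + window_len])
        out.append(scale(delta_h, var) if scale else (delta_h, var))
    return out


# Usage: compare a clean chaotic series with a noisy copy of it.
clean = logistic_series(1024)
rng = random.Random(0)
noisy = [x + rng.gauss(0.0, 0.3) for x in clean]
print(sliding_estimates(clean)[0], sliding_estimates(noisy)[0])
```

For the clean series the prediction is exact, so H(s|ŭ) is zero and ΔH equals H(s); added noise lowers ΔH and raises the residual variance.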

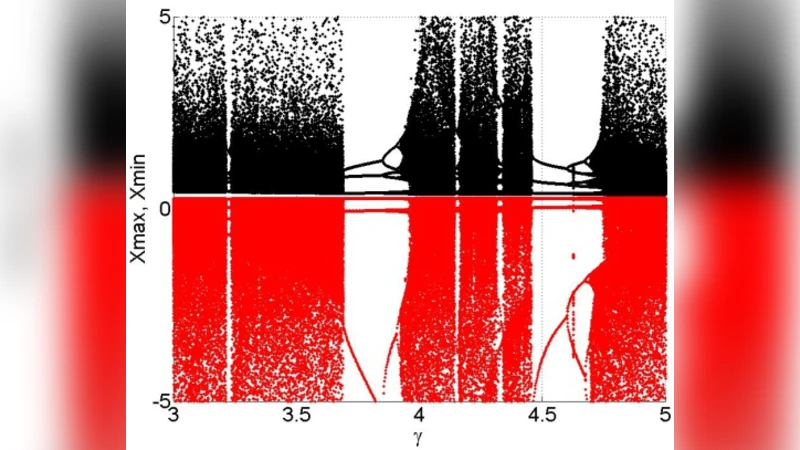

To validate the approach, three test cases are examined. First, purely chaotic signals generated by the logistic map are mixed with white Gaussian noise. The entropy‑based SNR estimates match power‑spectral SNR values within 2 dB across a wide range of noise levels, demonstrating high accuracy even when the signal’s power is unknown. Second, a harmonic sinusoid superposed with white noise is analyzed; the method reliably recovers SNR values, especially in low‑signal‑power regimes where traditional power measurements become unreliable. Third, a non‑stationary scenario with time‑varying signal and noise powers is simulated. By recomputing ΔH for each sliding window, the algorithm tracks rapid SNR fluctuations in real time, highlighting its adaptability to dynamic environments.
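A test signal of the first kind, together with a power-based reference SNR to compare against, might be built as in the sketch below. The map parameter r = 3.9, the seed, and the use of time-domain variance as signal power are assumptions made here; the summary does not state how the paper's reference values were computed beyond calling them power‑spectral.

```python
import math
import random


def logistic_series(n, r=3.9, x0=0.3):
    """Generate n samples of the logistic map x -> r * x * (1 - x)."""
    xs, x = [], x0
    for _ in range(n):
        xs.append(x)
        x = r * x * (1.0 - x)
    return xs


def add_white_noise(clean, target_snr_db, seed=0):
    """Add white Gaussian noise scaled so that the power SNR is close to target_snr_db."""
    rng = random.Random(seed)
    mean = sum(clean) / len(clean)
    p_signal = sum((x - mean) ** 2 for x in clean) / len(clean)
    sigma = math.sqrt(p_signal / 10.0 ** (target_snr_db / 10.0))
    noise = [rng.gauss(0.0, sigma) for _ in clean]
    return [x + e for x, e in zip(clean, noise)], noise


def reference_snr_db(clean, noise):
    """Power-based SNR in dB, computed from the known signal and noise components."""
    mean = sum(clean) / len(clean)
    p_signal = sum((x - mean) ** 2 for x in clean) / len(clean)
    p_noise = sum(e * e for e in noise) / len(noise)
    return 10.0 * math.log10(p_signal / p_noise)
```

Because the components are known in simulation, `reference_snr_db` gives the ground truth against which an entropy-based estimate, which sees only the mixed signal, can be checked.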

The study’s contributions are twofold. Conceptually, it reframes SNR as an information‑theoretic quantity, allowing estimation without explicit power measurements. Practically, it provides a concrete, implementable procedure that works for chaotic, stochastic, and mixed signals, extending SNR analysis to domains such as nonlinear dynamical systems, biomedical recordings (EEG, ECG), and communication channels with complex interference.

Nevertheless, the authors acknowledge limitations. Entropy estimation is sensitive to the amount of data, the choice of histogram bin size or kernel bandwidth, and the fidelity of the underlying signal model. Inadequate parameter selection can bias ΔH and consequently the SNR estimate. Future work should therefore focus on robust, data‑adaptive density estimation techniques, model‑selection strategies for the conditional predictor, and systematic calibration of the scaling function across diverse signal classes. With these refinements, the entropy‑based SNR metric promises a versatile alternative to conventional power‑based methods, particularly in settings where signal and noise characteristics are intrinsically intertwined and difficult to separate.
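The sensitivity to bin size is easy to reproduce. The sketch below, which assumes equal-width bins and a synthetic Gaussian sample chosen only for illustration, computes the histogram entropy of the same data at several bin counts.

```python
import math
import random
from collections import Counter


def binned_entropy(samples, n_bins):
    """Histogram entropy in bits using n_bins equal-width bins."""
    lo, hi = min(samples), max(samples)
    width = (hi - lo) / n_bins or 1.0
    idx = [min(int((v - lo) / width), n_bins - 1) for v in samples]
    n = len(samples)
    return -sum((c / n) * math.log2(c / n) for c in Counter(idx).values())


rng = random.Random(1)
samples = [rng.gauss(0.0, 1.0) for _ in range(2000)]
for n_bins in (4, 16, 64, 256):
    # The estimate grows with the bin count even though the data are unchanged.
    print(n_bins, round(binned_entropy(samples, n_bins), 3))
```

Since the estimate depends on the discretization, H(s) and H(s|ŭ) should be computed with the same binning, and any scaling function would need to be calibrated for that binning.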
