Scalable Estimation of Precision Maps in a MapReduce Framework

This paper presents a large-scale strip adjustment method for LiDAR mobile mapping data, yielding highly precise maps. It uses several concepts to achieve scalability. First, an efficient graph-based pre-segmentation is used, which directly operates on LiDAR scan strip data, rather than on point clouds. Second, observation equations are obtained from a dense matching, which is formulated in terms of an estimation of a latent map. As a result of this formulation, the number of observation equations is not quadratic, but rather linear in the number of scan strips. Third, the dynamic Bayes network, which results from all observation and condition equations, is partitioned into two sub-networks. Consequently, the estimation matrices for all position and orientation corrections are linear instead of quadratic in the number of unknowns and can be solved very efficiently using an alternating least squares approach. It is shown how this approach can be mapped to a standard key/value MapReduce implementation, where each of the processing nodes operates independently on small chunks of data, leading to essentially linear scalability. Results are demonstrated for a dataset of one billion measured LiDAR points and 278,000 unknowns, leading to maps with a precision of a few millimeters.

💡 Research Summary

The paper introduces a scalable strip‑adjustment framework for massive mobile LiDAR datasets that achieves sub‑centimeter map precision while maintaining near‑linear computational complexity. In a conventional formulation, overlapping scan strips are matched against one another, so the number of observation equations can grow quadratically with the number of strips, which becomes prohibitive in memory and runtime when the point count reaches billions. The authors address this bottleneck through three interlocking innovations.

First, they operate directly on raw scan strips rather than on a pre‑generated point cloud. Each strip is represented as a node in a graph, and overlapping relationships between strips become edges. A lightweight graph‑based pre‑segmentation clusters strips that share sufficient overlap, allowing the algorithm to process each cluster independently. Because the segmentation works on strip metadata (time, pose, scan direction) instead of on millions of points, the preprocessing cost is dramatically reduced and the data can be partitioned into small, balanced chunks suitable for distributed execution.
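The strip graph described above can be sketched in a few lines. This is only an illustration, assuming strips are numbered 0..n-1 and that a list of sufficiently overlapping strip pairs has already been derived from the strip metadata. The function name `cluster_strips` and the example pairs are hypothetical and not taken from the paper.

```python
from collections import defaultdict

def cluster_strips(num_strips, overlaps):
    """Group strips into connected components of the overlap graph.

    num_strips: number of scan strips (the graph nodes).
    overlaps:   iterable of (a, b) strip-index pairs judged to overlap
                sufficiently (the graph edges); how that judgment is made
                is outside this sketch.
    """
    parent = list(range(num_strips))

    def find(i):
        # Union-find lookup with path halving.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for a, b in overlaps:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    clusters = defaultdict(list)
    for strip in range(num_strips):
        clusters[find(strip)].append(strip)
    return sorted(clusters.values())

# Hypothetical example: six strips, three overlapping pairs.
overlaps = [(0, 1), (1, 2), (3, 4)]
clusters = cluster_strips(6, overlaps)
print(clusters)  # [[0, 1, 2], [3, 4], [5]]
```

Each resulting cluster touches only strip indices, not individual points, which is why this kind of step stays cheap compared with point-cloud processing.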

Second, the observation model is reformulated in terms of a latent map. Instead of matching every strip against every other strip it overlaps, the method estimates the alignment of each strip to an implicit shared surface. The latent map acts as a common reference, and each strip contributes a set of linear observation equations that describe how its measured points deviate from that reference after applying a pose correction. Because each strip is tied only to the latent map, the total number of observation equations grows linearly with the number of strips N, i.e. O(N), rather than quadratically, O(N²), as it would with pairwise strip‑to‑strip matching. This linear growth is the key to handling a billion‑point dataset without exhausting memory.
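The counting argument can be made concrete with a small sketch. It compares a worst case in which every strip is matched against every other strip with a formulation in which each strip is matched once against a shared latent map. Both function names are hypothetical, and real overlap patterns would fall somewhere below the pairwise worst case.

```python
def pairwise_equation_sets(n_strips):
    """Worst-case number of strip-to-strip matches: every unordered pair."""
    return n_strips * (n_strips - 1) // 2

def latent_map_equation_sets(n_strips):
    """One set of observation equations per strip, against the latent map."""
    return n_strips

n = 1000
print(pairwise_equation_sets(n))    # 499500
print(latent_map_equation_sets(n))  # 1000
```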

Third, the full dynamic Bayesian network that couples pose corrections and latent‑map constraints is split into two sub‑networks: a pose‑correction sub‑network and a map‑consistency sub‑network. Both sub‑networks are linear least‑squares problems. The authors solve them alternately using an Alternating Least Squares (ALS) scheme: after fixing the map, they solve for pose corrections; after updating poses, they solve for the map parameters, and repeat until convergence. Because each sub‑network’s normal equations involve only the unknowns of that sub‑network, the resulting matrices are sparse and have dimensions that scale linearly with the number of unknowns. Consequently, the computational cost of each ALS iteration is O(M) where M is the number of pose parameters (≈278 000 in the experiments).
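The alternating scheme can be illustrated on a deliberately tiny 1‑D toy problem. This is not the authors' model. It assumes each observation is a map‑cell height plus a constant per‑strip offset, `z = m[cell] + c[strip]`, so that each sub‑problem has a closed‑form least‑squares solution (a mean). The function name, the data, and the zero‑mean gauge constraint are all assumptions made for this sketch.

```python
def alternating_least_squares(obs, n_strips, n_cells, iterations=50):
    """Toy ALS for the model z = m[cell] + c[strip].

    obs: list of (strip, cell, z) observations.
    Returns (m, c): estimated map heights and per-strip offsets.
    """
    c = [0.0] * n_strips
    m = [0.0] * n_cells
    for _ in range(iterations):
        # Map step: offsets fixed, each cell is the mean corrected height.
        sums, counts = [0.0] * n_cells, [0] * n_cells
        for s, j, z in obs:
            sums[j] += z - c[s]
            counts[j] += 1
        m = [sums[j] / counts[j] if counts[j] else m[j] for j in range(n_cells)]

        # Pose step: map fixed, each offset is the mean residual of its strip.
        sums, counts = [0.0] * n_strips, [0] * n_strips
        for s, j, z in obs:
            sums[s] += z - m[j]
            counts[s] += 1
        c = [sums[s] / counts[s] if counts[s] else c[s] for s in range(n_strips)]

        # Gauge fix (assumption): a common shift of all offsets is not
        # observable, so force the offsets to have zero mean.
        mean_c = sum(c) / n_strips
        c = [v - mean_c for v in c]
        m = [v + mean_c for v in m]
    return m, c

# Hypothetical noise-free data: 4 map cells, 3 strips with zero-mean offsets.
true_map = [10.0, 11.0, 12.0, 13.0]
true_offsets = [0.02, -0.01, -0.01]
coverage = {0: [0, 1, 2], 1: [1, 2, 3], 2: [0, 2, 3]}  # cells seen by each strip
obs = [(s, j, true_map[j] + true_offsets[s])
       for s, cells in coverage.items() for j in cells]

m_est, c_est = alternating_least_squares(obs, n_strips=3, n_cells=4)
```

Each half‑step touches every observation once and never forms a joint system over map and pose unknowns, which is the property that keeps the per‑iteration cost low in this toy setting.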

The algorithmic pipeline maps naturally onto a standard key/value MapReduce paradigm. In the Map phase, each processing node receives a small strip chunk, builds its local observation and condition equations, and emits a compact representation (keyed by strip or by sub‑network identifier). In the Reduce phase, all local contributions for a given key are aggregated to form the global normal equations for the corresponding sub‑network. After a Reduce step, the updated global parameters are broadcast back to the Map workers for the next ALS iteration. Because the Map tasks are embarrassingly parallel and the Reduce tasks involve only matrix summations, inter‑node communication is minimal, and the overall workflow exhibits essentially linear scalability with the number of cluster nodes.
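The Map and Reduce roles described above can be sketched in plain Python, without any actual Hadoop API. In this hedged example, each chunk of observation rows `(a, b)` is turned into a partial normal‑equation contribution `(AᵀA, Aᵀb)` under a key, and the reducer sums contributions per key. The key name `"pose"`, the helper names, and the two‑parameter line‑fit data are hypothetical stand‑ins for the real sub‑network equations.

```python
def map_chunk(key, rows):
    """Map: build the partial normal equations (A^T A, A^T b) of one chunk."""
    n = len(rows[0][0])
    ata = [[0.0] * n for _ in range(n)]
    atb = [0.0] * n
    for a, b in rows:
        for i in range(n):
            atb[i] += a[i] * b
            for j in range(n):
                ata[i][j] += a[i] * a[j]
    return key, (ata, atb)

def reduce_contributions(pairs):
    """Reduce: sum all partial contributions that share a key."""
    grouped = {}
    for key, (ata, atb) in pairs:
        if key not in grouped:
            grouped[key] = ([row[:] for row in ata], atb[:])
            continue
        acc_ata, acc_atb = grouped[key]
        for i in range(len(atb)):
            acc_atb[i] += atb[i]
            for j in range(len(atb)):
                acc_ata[i][j] += ata[i][j]
    return grouped

def solve_2x2(ata, atb):
    """Solve a 2x2 normal-equation system by Cramer's rule."""
    det = ata[0][0] * ata[1][1] - ata[0][1] * ata[1][0]
    x0 = (atb[0] * ata[1][1] - ata[0][1] * atb[1]) / det
    x1 = (ata[0][0] * atb[1] - ata[1][0] * atb[0]) / det
    return [x0, x1]

# Hypothetical data: rows ([x, 1], b) sampled from b = 2*x + 1, in two chunks.
chunks = [
    [([0.0, 1.0], 1.0), ([1.0, 1.0], 3.0)],
    [([2.0, 1.0], 5.0), ([3.0, 1.0], 7.0)],
]
emitted = [map_chunk("pose", chunk) for chunk in chunks]  # independent Map tasks
ata, atb = reduce_contributions(emitted)["pose"]          # Reduce: summation only
solution = solve_2x2(ata, atb)
print(solution)  # [2.0, 1.0]
```

Because the reducer only adds small matrices, the chunks can be processed in any order and on any node, which is the property the summary relies on for near‑linear scaling.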

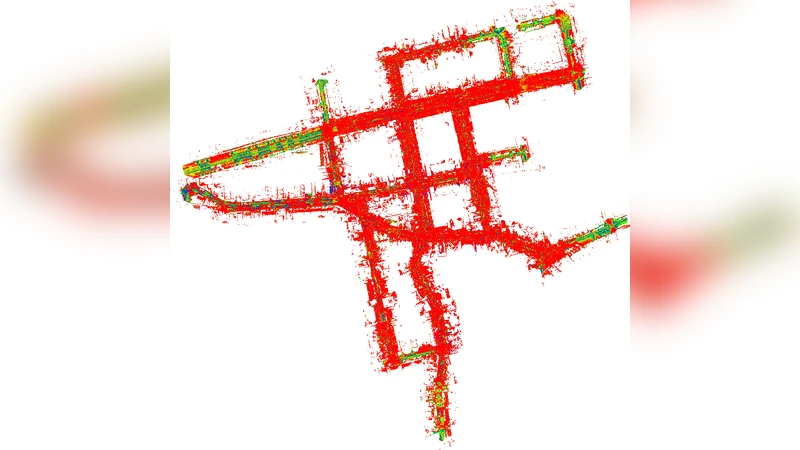

The authors validate the approach on a real‑world dataset comprising one billion LiDAR points collected from a mobile mapping vehicle over an urban area. The problem involves 278 000 unknown pose corrections (three translations and three rotations per strip). Using a 48‑node Hadoop cluster, the full optimization converges in roughly three hours, producing a final map whose root‑mean‑square error is on the order of 3 mm—well within the requirements of high‑precision surveying and autonomous‑vehicle localization. Scaling experiments demonstrate that doubling the number of nodes roughly halves the runtime, confirming the near‑linear speed‑up predicted by the theoretical analysis.

The paper’s contributions are threefold: (1) a graph‑based pre‑segmentation that eliminates the need for full point‑cloud generation; (2) a latent‑map formulation that reduces observation equations from quadratic to linear complexity; (3) a decomposition of the dynamic Bayesian network into two linear sub‑problems solvable by ALS, which together enable an efficient MapReduce implementation.

Limitations are acknowledged. The latent‑map model assumes locally linear relationships between strips and the underlying surface, which may degrade in highly non‑planar terrain or in the presence of abrupt elevation changes. Moreover, the reliance on a batch‑oriented MapReduce engine introduces disk I/O overhead; future work could explore in‑memory streaming frameworks such as Apache Spark or Flink to further reduce latency. Extending the method to incorporate non‑linear sensor error models or to fuse additional modalities (e.g., GNSS, IMU) is also identified as a promising direction.

In summary, the work delivers a practical, scalable solution for precision map generation from massive mobile LiDAR surveys. By reconceptualizing the problem as a linear, graph‑driven optimization that fits cleanly into a MapReduce workflow, the authors demonstrate that sub‑centimeter accuracy is attainable even on datasets that would be infeasible for traditional point‑cloud‑centric pipelines. Since the reported pipeline is a batch process, its immediate benefit is high‑precision mapping at very large scale, which is relevant to autonomous navigation, infrastructure monitoring, and large‑scale geospatial analytics. Lower‑latency operation would depend on the streaming extensions noted above.
