Nonparametric Bayesian Topic Modelling with the Hierarchical Pitman-Yor Processes

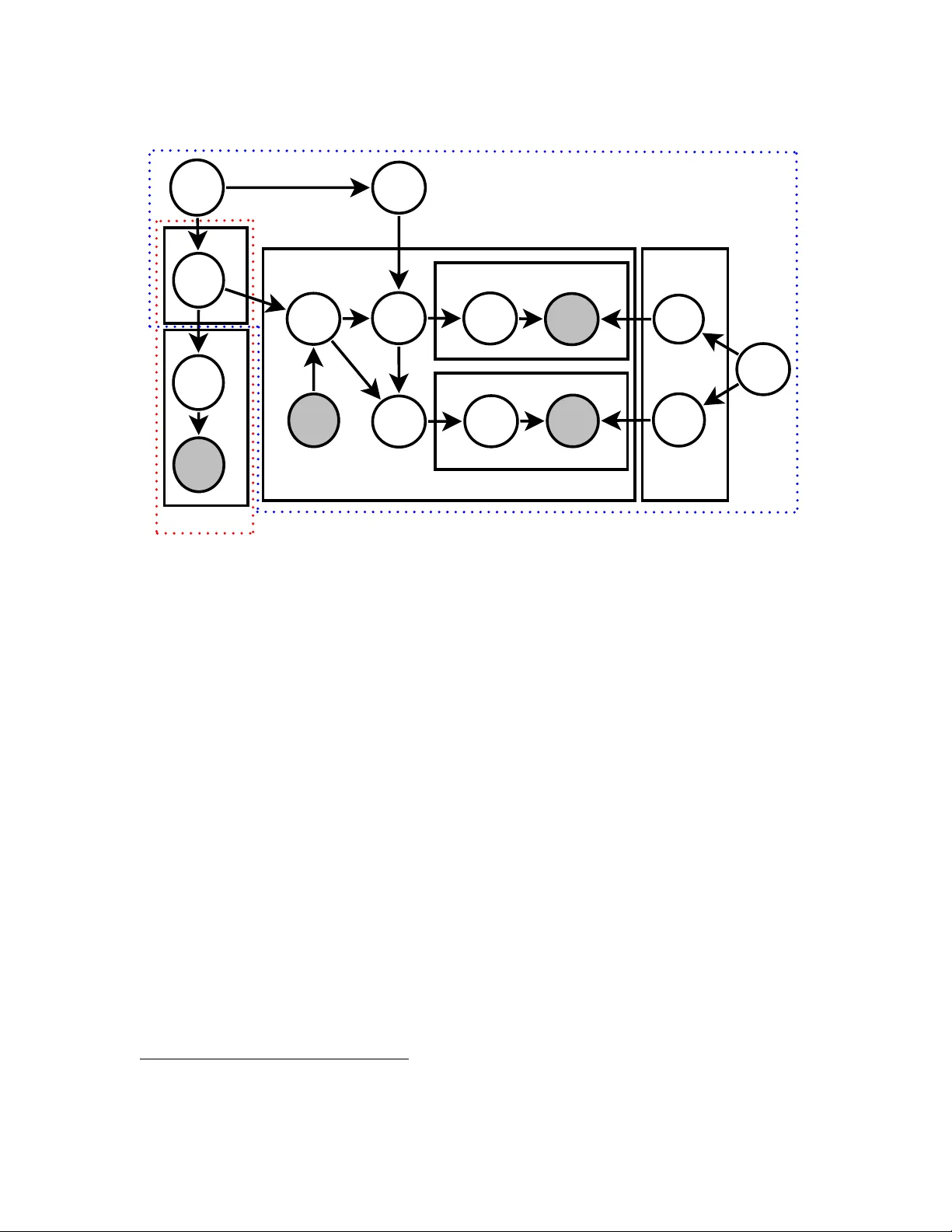

The Dirichlet process and its extension, the Pitman-Yor process, are stochastic processes that each take a probability distribution as a parameter. These processes can be stacked to form a hierarchical nonparametric Bayesian model. In this article, we p…

Authors: Kar Wai Lim, Wray Buntine, Changyou Chen
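
To make the abstract's description concrete, the following is a minimal sketch of drawing from a single Pitman-Yor process through its Chinese restaurant representation. It is an illustration under stated assumptions, not the authors' implementation: the function name `sample_pitman_yor_crp`, the argument names, and the choice of a uniform base distribution in the usage lines are all hypothetical. With `n` customers seated at `K` tables, the next customer joins table `k` with probability proportional to `n_k - discount` and opens a new table, drawing a fresh value from the base distribution, with probability proportional to `concentration + K * discount`.

```python
import random


def sample_pitman_yor_crp(n_customers, discount, concentration, base_sampler, rng=None):
    """Draw n_customers values via the Pitman-Yor Chinese restaurant process.

    base_sampler(rng) is a hypothetical callable that returns one draw from
    the base distribution, the distribution the process takes as a parameter.
    Returns (draws, table_counts).
    """
    if not 0 <= discount < 1:
        raise ValueError("discount must lie in [0, 1)")
    if concentration <= -discount:
        raise ValueError("concentration must exceed -discount")
    rng = rng or random.Random()

    table_counts = []  # number of customers seated at each table
    table_dishes = []  # value (dish) served at each table
    draws = []

    for _ in range(n_customers):
        k = len(table_counts)
        if k == 0:
            # The first customer always opens a new table.
            choice = 0
        else:
            # Existing table j has weight n_j - discount; a new table has
            # weight concentration + k * discount. The weights sum to
            # concentration + (customers seated so far).
            weights = [count - discount for count in table_counts]
            weights.append(concentration + k * discount)
            choice = rng.choices(range(k + 1), weights=weights)[0]

        if choice == k:
            table_counts.append(1)
            table_dishes.append(base_sampler(rng))
        else:
            table_counts[choice] += 1
        draws.append(table_dishes[choice])

    return draws, table_counts


# Usage sketch: a uniform base distribution on [0, 1), chosen only for illustration.
draws, table_counts = sample_pitman_yor_crp(
    200, discount=0.5, concentration=1.0,
    base_sampler=lambda r: r.random(), rng=random.Random(0),
)
print(len(table_counts), "tables for", len(draws), "draws")
```

Setting `discount=0` recovers the Chinese restaurant process of the Dirichlet process. In a hierarchical model, one would assume that `base_sampler` itself draws from a parent process of the same kind, which is what stacking amounts to in this representation.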