Bibliographic Analysis on Research Publications using Authors, Categorical Labels and the Citation Network

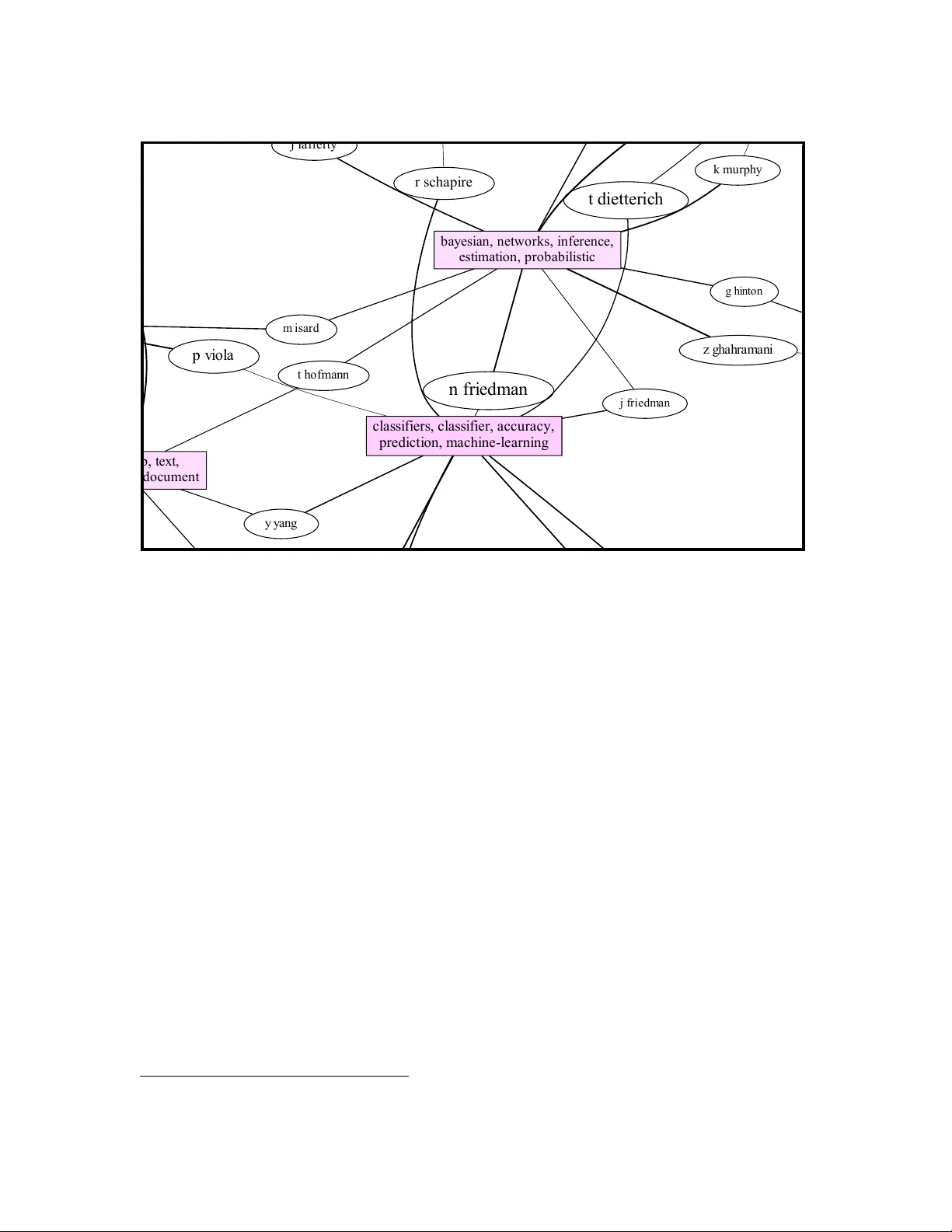

Bibliographic analysis considers, among other things, authors' research areas, the citation network and the paper content. In this paper, we combine these three in a topic model that produces a bibliographic model of authors, topics and documents, …

Authors: Kar Wai Lim, Wray Buntine