AMOS: An Automated Model Order Selection Algorithm for Spectral Graph Clustering

One of the longstanding problems in spectral graph clustering (SGC) is the so-called model order selection problem: automated selection of the correct number of clusters. This is equivalent to the problem of finding the number of connected components…

Authors: Pin-Yu Chen, Thibaut Gensollen, Alfred O. Hero III

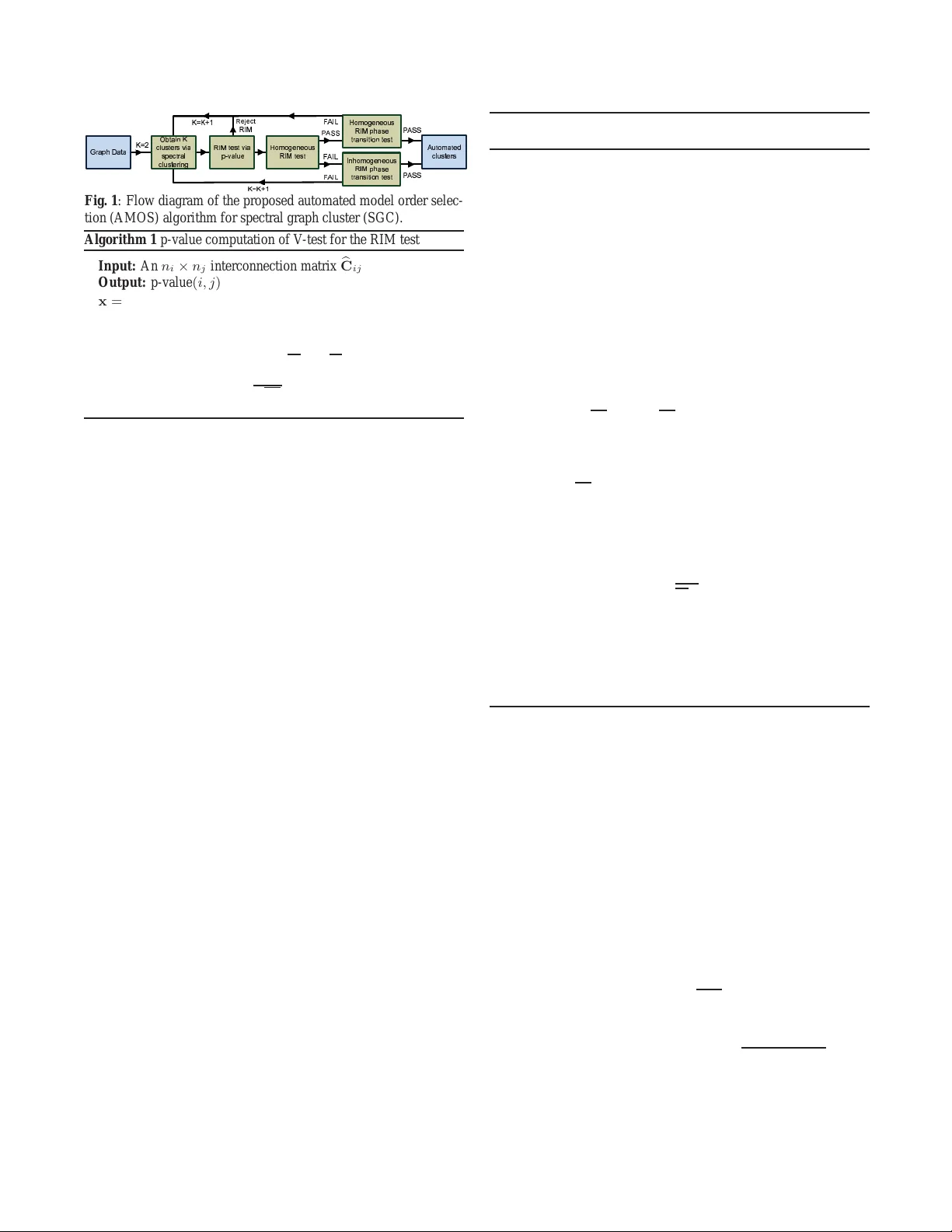

AMOS: AN A UT OMA TED MODEL ORDER SELECTION ALGORITHM FOR SPECTRAL GRAPH CLUSTERING Pin-Y u Chen Thibaut Gensollen Alfr ed O. Her o III, F ellow , IEEE Department of Electrical Engineering and Computer Science, Univer sity of Michigan, Ann Arbor , USA { pinyu, thibautg, hero } @umich.edu ABSTRA CT One of the lon gstanding problems in spec tral g raph clustering (SGC) is the so-called model order selection problem: automated selection of t he correct number of clusters. This is equiv alent to the problem of finding the number of connected components or communities in an undirected graph. In this paper , we propose AMOS, an automated model orde r selection algorithm for SGC. Based on a recent analy- sis of clustering reliability for SGC under the random interconnec- tion model, AMOS works by increm entally inc reasing the number o f clusters, estimating the quality of identified clusters, and providing a series of clustering reliability tests. Consequently , AMOS outputs clusters of mi nimal model order with statistical clustering reliabil- ity guarantees. Comparing to th ree other automated graph clustering methods on real-wo rld da tasets, AMOS sho ws su perior p erformance in terms of multiple externa l and internal clustering metrics. 1. INTRODUCTION Undirected graphs are widely used for network data analysis, whe re nodes represent entities or data samples, and the existence and strength of edges represe nt relations or af finity between nodes. The goal of graph clustering is to group the nodes into clusters of high similarit y . Applications of graph clustering, also known as community detection [1, 2], include but are not limited to g raph sig- nal processing [3–11], multiv ariate data clustering [12–14], image segmen tation [15, 16], and netw ork vulnerability assessment [17]. Spectral clustering [12–14] is a popular method for graph clus- tering, which we refer to as spectral graph cl ustering (SGC). It w orks by transforming the graph adjacenc y matrix into a graph Laplacian matrix [18], computing its eigendecom position, and performing K- means clustering [19] on the eigen v ectors to partition the n odes into clusters. Alt hough heuristic methods hav e been proposed to auto- matically select the number of clusters [12, 1 3, 20], rigorous theo ret- ical justifi cations on the selection of the number of ei gen vectors for clustering are sti ll lacking and lit tle is known abou t the capabilities and limitations of spectral clustering on graphs. Based on a recent de velopment of clustering r eliability analysis for SGC under the random interconnection model (RIM) [21], we propose a nov el automated mo del order selection (AMOS ) algorithm for SGC. AMOS works by incrementally i ncreasing the number of clusters, estimating the quality of identified clusters, and providing a series of clustering reliability tests. Consequently , AMOS outputs clusters of mi nimal model order with statistical clustering reliabil- ity gu arantees. Comparing the clustering performa nce on real-world This work w as p artial ly supported by Army Research Of fi ce gr ant W911NF-15-1-0479 and the Consortium for V erificati on T echnology under Departmen t of Energy Nati onal Nuc lear Securit y Administr ation a ward num- ber de-na0 002534. datasets, AM OS outperforms three o ther au tomated grap h clustering methods in terms of multiple external and internal clustering metr ics. 2. RELA TED WORK Most existing model selection algorithms specify an upper bound K max on the number K of clusters and then select K based on op- timizing some objectiv e function, e.g., the goodness of fit of the k - cluster model for k = 2 , . . . , K max . In [12], the objecti ve is to minimize the sum of cluster-wise Euclidean distances between each data point and the centroid obtained from K-means cluster- ing. In [20], the objectiv e is to maximize the gap between the K -th largest and the ( K + 1) -th largest eigen value. In [13], the authors propose to minimize an objecti ve function that is associated with the cost of aligning the eigenv ectors with a canonical coordinate system. In [22], t he authors propose to iteratively divide a cluster based on the leading eigenv ector of the modularity matrix until no significant impro vemen t in the mod ularity measure can be achiev ed. The Louv ain method in [23] uses a greedy algorithm for modularity maximization. In [ 24, 25], the authors propose to use the eigen v ec- tors of the nonbacktracking matrix for graph clustering, where the number of clusters i s determined by t he number of real eigen v alues with magnitude larger than th e square root of the largest eigen value . The proposed AMOS algorithm not only automatically selects the number of clusters but also provides multi-stage statistical tests for e v aluating clustering reliability of SGC. 3. THEORETICAL FRAMEWORK FOR AMOS 3.1. Random interconnection model (RIM) Consider an undirected graph where its co nnecti vity structure i s rep - resented by an n × n b inary symmetric adjacenc y matrix A , where n is the number of nodes in the graph. [ A ] uv = 1 if there exists an edge between the node pair ( u, v ), and otherwise [ A ] uv = 0 . An un- weighted undirected graph is completely specified by its adjacency matrix A , while a weighted undirected graph is specified by a non- negati ve matrix W , where nonzero entries denote the edge weights. Assume there are K clusters in the graph and denote the size of cluster k by n k . The size of the l argest and smallest cluster is de- noted by n max and n min , respectiv ely . Let A k denote the n k × n k adjacenc y matrix representing the internal edge connections in clus- ter k and let C ij ( i, j ∈ { 1 , 2 , . . . , K } , i 6 = j ) be an n i × n j matrix representing the adjacenc y matrix of inter-cluster edge connections between t he cluster pair ( i, j ). The matri x A k is symmetric and C ij = C T j i for all i 6 = j . The random interconnection m odel (RIM) [21] assumes t hat: (1) the adjacency matrix A k is associated with a connected graph of n k nodes but is otherwise arbitrary; (2) the K ( K − 1) / 2 matrices { C ij } i>j are random mutually independ ent, and each C ij has i.i.d. Bernoulli distributed entries with Bernoulli parameter p ij ∈ [0 , 1] . (3) For undirected weighted graphs the edge weight of each inter- cluster edge between clusters i and j is independently drawn from a common nonnegati v e distribution wi th mean W ij and bounded fourth moment. In particular , W e call this model a homo geneo us RIM when all random i nterconnection s hav e equal probability and mean edge weight, i .e., p ij = p and W ij = W for all i 6 = j . Otherwise, the model is called an inhomo ge neous RIM. 3.2. Sp ectral graph clustering (SGC) The graph Laplacian matrix of the entire graph i s defined as L = S − W , where S = diag ( W1 n ) is a diagonal matrix and 1 n ( 0 n ) is the n × 1 column vector of ones (zeros). Similarly , the graph Laplacian matrix accounting for the within-cluster edges of clu ster k is denoted by L k . W e also denote the i -th smallest eigen v alue of L by λ i ( L ) and define the partial eigen v alue sum S 2: K ( L ) = P K i =2 λ i ( L ) . T o partition the nodes i n t he graph into K ( K ≥ 2 ) clusters, spectral clustering [14] uses the K eigen vectors { u k } K k =1 associated wit h the K smallest eigen v alues of L . Each node can be vie wed as a K - dimensional vector in the subspace spanned by these eigen v ectors. K-means clustering [19] is then implem ented on the K -dimensional vectors to grou p the nodes into K clusters. Throughout this paper we assume the graph is connected, oth- erwise the connected components can be easily found and the pro- posed algorithm can be applied t o each connected component sepa- rately . If the g raph is co nnected, b y the de finition of t he graph Lap la- cian matrix L , the smallest eigen v ector u 1 is a constant vector and λ i ( L ) > 0 ∀ i ≥ 2 . As a result, for connected undirected graphs, it suf fices to use the K − 1 eigen v ectors { u k } K k =2 of L for SGC. In particular , these K − 1 eigenv ectors are represented by the colu mns of the eigen vector matrix Y = [ u 2 , u 3 , . . . , u K ] ∈ R n × ( K − 1) . 3.3. Ph ase transitions under homogeneous RIM Let Y = [ Y T 1 , Y T 2 , . . . , Y T K ] T be the cluster partit ioned eigen ve c- tor matrix associated with L for S GC, where Y k ∈ R n k × ( K − 1) with its rows indexing the nodes in cluster k . Under the homogeneous RIM, let t = p · W be the inter-cluster edge connecti vity parameter . Fixing the wi thin-cluster edge connections and v arying t , Theorem 1 below shows that there exists a critical value t ∗ that separates the behav ior of Y for t he cases of t < t ∗ and t > t ∗ . Theorem 1. Under the h omog eneous R IM wit h parameter t = p · W , ther e exists a critical value t ∗ such t hat the following holds almost sur ely as n k → ∞ ∀ k ∈ { 1 , 2 , . . . , K } and n min n max → c > 0 : (a) If t < t ∗ , Y k = 1 n k 1 T K − 1 V k = v k 1 1 n k , v k 2 1 n k , . . . , v k K − 1 1 n k , ∀ k ; If t > t ∗ , Y T k 1 n k = 0 K − 1 , ∀ k ; If t = t ∗ , Y k = 1 n k 1 T K − 1 V k or Y T k 1 n k = 0 K − 1 , ∀ k , wher e V k = diag ( v k 1 , v k 2 , . . . , v k K − 1 ) ∈ R ( K − 1) × ( K − 1) . In particular , when t < t ∗ , Y has the following pr operties: (a-1) The columns of Y k ar e constant vectors. (a-2) E ach column of Y has at least tw o nonzer o cluster-wise con- stant components, and these constants have alternating signs such that their weighted sum equals 0 (i.e., P k n k v k j = 0 , ∀ j ∈ { 1 , 2 , . . . , K − 1 } ). (a-3) No two columns of Y have the same sign on t he cluster-wise nonzer o components. Furthermor e, t ∗ satisfies: (b) t LB ≤ t ∗ ≤ t UB , wher e t LB = min k ∈{ 1 , 2 ,...,K } S 2: K ( L k ) ( K − 1) n max ; t UB = min k ∈{ 1 , 2 ,...,K } S 2: K ( L k ) ( K − 1) n min . In particular , t LB = t UB when c = 1 . Theorem 1 (a) sh o ws that there exists a critical value t ∗ that sep - arates the beha vior of the rows of Y into two r egimes: (1) when t < t ∗ , based on conditions (a-1) to (a-3), the rows of each Y k is identical and cluster-wise distinct such t hat S GC can be successful. (2) when t > t ∗ , the row sum of each Y k is zero, and the incoher- ence of the entries in Y k make it i mpossible for SGC to separate the clusters. T heorem 1 (b) provid es closed-form upper and lower bounds on t he criti cal value t ∗ , and these two bounds become tight when e very cluster has identical size (i.e., c = 1 ). 3.4. Phase transitions under inhomogeneous RIM W e can extend the phase transiti on analysis of the homogeneou s RIM to the inhomogeneo us RIM. Let Y ∈ R n × ( K − 1) be t he eigen vector matrix of L under the inhomogeneous RIM, and let e Y ∈ R n × ( K − 1) be the eigen v ector matrix of the graph Lapla- cian e L of another random grap h, independen t of L , generated by a homogeneous RIM w ith cluster interconnectivity parameter t . W e can specify the distance between the subspaces spanned by the columns of Y and e Y by inspecting their principal an- gles [14] . Since Y and e Y both have orthonormal columns, the vector v of K − 1 principal angles between their column spaces i s v = [cos − 1 σ 1 ( Y T e Y ) , . . . , cos − 1 σ K − 1 ( Y T e Y )] T , where σ k ( M ) is the k -th largest singular v alue of real rectangular matri x M . Let Θ ( Y , e Y ) = diag ( v ) , and let sin Θ ( Y , e Y ) be defined entrywise. When t < t ∗ , the follo wing th eorem pro vides an upper bound on the Frobenius norm of sin Θ ( Y , e Y ) , denoted by k sin Θ ( Y , e Y ) k F . Theorem 2. Under the inhomogen eous RIM wit h intercon nection para meters { t ij = p ij · W ij } , let t ∗ be the critical thresh old value for the homog eneou s RIM specified b y Theor em 1, and define δ t,n = min { t, | λ K +1 ( L n ) − t |} . F or a fixed t , if t < t ∗ and δ t,n → δ t > 0 as n k → ∞ ∀ k ∈ { 1 , 2 , . . . , K } , the following statement holds almost sur ely as n k → ∞ ∀ k and n min n max → c > 0 : k sin Θ ( Y , e Y ) k F ≤ k L − e L k F nδ t . Furthermor e, let t max = max i 6 = j t ij . If t max < t ∗ , then k sin Θ ( Y , e Y ) k F ≤ min t ≤ t max k L − e L k F nδ t . By T heorem 1, since under the homogen eous RIM the rows of e Y has cluster-wise separability when t < t ∗ , Theorem 2 sho ws that under the inhomogeneou s RIM cluster-wise separa- bility in Y can still be expected provided that the subspace distance k sin Θ ( Y , e Y ) k F is small and t < t ∗ . Moreov er , if t max < t ∗ , we can obtain a tighter upper bound on k sin Θ ( Y , e Y ) k F . These two theorems serve as the cornerstone of the prop osed AMOS algorithm, and the proofs are gi ven in the e xtended version [21]. 4. A UTOMA TED MODEL ORDER SELECTION (AMOS) ALGORITHM FOR SPECTRAL GRAPH CLUSTERING Based on the theoretical frame work in Sec. 3, we propose an auto- mated mod el order selec tion (AMOS) a lgorithm for automated clus- ter assignment for SGC. The flow diagram of AMOS i s displayed in ! " # $ % & ' ' ( # $ # ! # # # $ # ! # # ( # $ # ! # & ' ' & ' ' Fig. 1 : Flow diagram of the proposed automated model order selec- tion (AMOS) algorithm for spectral graph cluster (SGC). Algorithm 1 p-v alue computation of V -test for the RIM test Input: An n i × n j interconnection matrix b C ij Output: p-value ( i, j ) x = b C ij 1 n j (# of nonzero entries of each row in b C ij ) y = n j 1 n i − x (# of zero entries of each row in b C ij ) X = x T x − x T 1 n i and Y = y T y − y T 1 n i . N = n i n j ( n j − 1) and V = √ X + √ Y 2 . Compute test statistic Z = V − N √ 2 N Compute p-v alue ( i, j )= 2 · min { Φ( Z ) , 1 − Φ( Z ) } Fig. 1 , and the algo rithm is summarized in Algorithm 2. The AMOS codes can be do wnloaded from https://github .com/tgenso l/AMOS. AMOS works by iteratively increasing the numb er of clusters K and performing multi-stage statistical clustering reliability tests un- til the identified clusters are deemed reliable. T he stati stical tests in AMOS are implemented in two phases. The fi rst phase is to test the RIM ass umption based on the interconnecti vity pattern of each clus- ter (Sec. 4.1), and the second phase is t o test the homoge neity and v ariation o f the interconnectivity parameter p ij for e very cluster pair i and j in addition to making comparisons to t he critical phase tran- sition t hreshold (S ec. 4.2). The proofs of the established stat istical clustering reliability tests are gi ven in the e xtended version [21]. The input graph data of AMOS is a matrix representing a con- nected undirected weighted graph. For each iteration in K , SGC is implemented to produc e K clusters { b G k } K k =1 , where b G k is the k -th identified cluster with number of n odes b n k and number of edges b m k . 4.1. RIM test via p-value fo r local homogeneity testing Giv en clusters { b G k } K k =1 obtained from SGC with model order K , let b C ij be the b n i × b n j interconnection matrix of between -cluster edges connecting clusters i and j . The goal o f local homogeneity testing is to compute a p-v alue to test the hypothesis tha t t he identified clusters satisfy the RIM. More spec ifically , we a re testing the null hypothesis that b C ij is a r ealization of a random matrix with i.i.d. Bernoulli en- tries (RIM) and the alternati v e hy pothesis that b C ij is a r ealization of a random matrix wit h independent Bernoulli entries (not RIM), for all i 6 = j , i > j . T o compute a p-value f or t he RIM test we use the V -test [26] for homogeneity t esting of the row sums of each intercon- nection matrix b C ij . Specifically , the V -t est tests t hat th e rows of b C ij are all identically distr ibuted. For any b C ij the t est statistic Z of the V -test con verges to a standard normal distribution as n i , n j → ∞ , and the p-v alue for the hypothe sis that the ro w sums of b C ij are i.i.d. is p-v alue ( i, j ) = 2 · min { Φ( Z ) , 1 − Φ( Z ) } , where Φ( · ) is the cu- mulativ e distribution function (cdf) of the standard normal distribu- tion. The proposed V -test procedure is summarized in Algorithm 1. The RIM test o n b C ij rejects the null hy pothesis if p-value ( i, j ) ≤ η , where η i s the desired single comparison significance level. T he AMOS algorithm won ’t proceed to the phase transition test stage (Sec. 4.2) unless ev ery b C ij passes the RIM test. Algorithm 2 Automated model order selection (AMOS) algorithm for spectral graph clustering (SGC) Input: a connected undirected weighted graph, p-v alue signifi- cance lev el η , RIM confidence interval param eters α , α ′ Output: number of clusters K and identified clusters { b G k } K k =1 Initialization: K = 2 . Flag = 1 . while Flag = 1 do Obtain K clusters { b G k } K k =1 via spectral clustering ( ∗ ) # Local homog eneity testing # fo r i = 1 t o K do fo r j = i + 1 to K d o Calculate p-v alue( i, j ) from Algorithm 1. if p-v alue( i, j ) ≤ η then Reject RIM Go back to ( ∗ ) with K = K + 1 . end if end fo r end fo r Estimate b p , c W , { b p ij } , { c W ij } , and b t LB specified in Sec. 4.2. # Homog eneous RIM test # if b p l ies within the confidence interva l in (1) then # Homog eneous RIM phase transition test # if b p · c W < b t LB then Flag = 0 . else Go back to ( ∗ ) with K = K + 1 . end if else if b p does not lie within th e confidence interv al in (1) then # Inhomo geneo us RIM phase transition test # if Q K i =1 Q K j = i +1 F ij b t LB c W ij , b p ij ≥ 1 − α ′ then Flag = 0 . else Go back to ( ∗ ) with K = K + 1 . end if end if end while Output K clusters { b G k } K k =1 . 4.2. Phase transition tests Once the i dentified clusters { b G k } K k =1 pass the RIM test, one can em- pirically determine the reliability of the clustering results using the phase transition analysis in Sec. 3. AMOS first t ests the assumption of homogeneous R IM, and performs the homog eneous RIM phase transition test by comparing the empirical estimate b t of the inter- connecti vity pa rameter t with the empirical esti mate b t LB of the lo wer bound t LB on t ∗ based on Theorem 1. If the test on the assumption of homogen eous RIM fails, AMOS then performs the inhomog eneous RIM phase transition test by comparing the empirical estimate b t max of t max with b t LB based on Theorem 2. • Homogeneous RIM test : The homogene ous RIM test is summa- rized as fo llows. Given clusters { b G k } K k =1 , we estimate the intercon- necti vity parameters { b p ij } by b p ij = b m ij b n i b n j , where b m ij is the number of inter-cluster edges between clusters i and j , and b p ij is the max- imum likelihood estimator (MLE) of p ij . Under the homogene ous RIM, the estimate of the parameter p is b p = 2( m − P K k =1 b m k ) n 2 − P K k =1 b n 2 k , where b m k is the number of within-cluster edges of cluster k and m is the total number of edges in t he graph. A generalized log-likelihood ra- tio test (GLR T) is used to test the validity of th e homogeneo us RIM. By the Wilk’ s t heorem [31], an asymptotic 100 (1 − α )% confidence Dataset Node Edge Ground truth IEEE reliability test system (R TS) [27] 73 po wer stations 108 po wer lines 3 po wer subsystems Hibernia Internet backbon e map [28] 55 cities 162 connections American & Europe cities Cogent Internet backbon e map [28] 197 cities 243 connections American & Europe cities Minnesota road map [29] 2640 intersections 3302 roads None Faceboo k [30] 4039 users 88234 friendships None T able 1 : Summary of real-world datasets. interv al for p in an assumed homogeneous RIM is ( p : ξ ( K 2 ) − 1 , 1 − α 2 ≤ 2 K X i =1 K X j = i +1 I { b p ij ∈ (0 , 1) } [ b m ij ln b p ij (1) +( b n i b n j − b m ij ) ln( 1 − b p ij )] − 2 m − K X k =1 b m k ! ln p − " n 2 − K X k =1 b n 2 k − 2 m − K X k =1 b m k !# ln(1 − p ) ≤ ξ ( K 2 ) − 1 , α 2 ) , where ξ q,α is the upper α -th quan tile of the central chi-square distri- bution with degree of freedom q . The clusters pass the ho mogeneous RIM test if b p is wit hin the confidence interv al specified in (1). • Homogeneous RIM phase transi tion test : By Theorem 1, if the i dentified clusters follow t he homogeneous RIM, then they are deemed reliable whe n b t < b t LB , where b t = b p · c W , c W is the average of all between-cluster ed ge weights, and b t LB = min k ∈{ 1 , 2 ,...,K } S 2: K ( b L k ) ( K − 1) b n max . • Inhomogeneous RIM p hase transition test : If the clusters fail the homogeneous RIM t est, we then use the maximum of MLEs of t ij ’ s, denoted by b t max = max i>j b t ij , as a test stati stic for testing the null hypothesis H 0 : b t max < t LB against the alternative hypo the- sis H 1 : b t max ≥ t LB . The test accepts H 0 if b t max < t LB and hence by Theorem 2 the identified clusters are deemed reliable. Using the Anscombe transformation on the b p ij ’ s fo r v ariance stabilization [32], let A ij ( x ) = sin − 1 s x + c ′ b n i b n j 1+ 2 c ′ b n i b n j , where c ′ = 3 8 . Under the null hypothesis that b t max < t LB , from [33 , T heorem 2.1], an asymp- totic 100(1 − α ′ )% confidence interv al for b t max is [0 , ψ ] , where ψ ( α ′ , { b t ij } ) is a function of the precision parameter α ′ ∈ [0 , 1] and { b t ij } . Furthermore, it ca n be shown that verifying ψ < b t LB is equ iv - alent to checkin g the condition K Y i =1 K Y j = i +1 F ij b t LB c W ij , b p ij ! ≥ 1 − α ′ , (2) where F ij ( x, b p ij ) = Φ p 4 b n i b n j + 2 · ( A ij ( x ) − A ij ( b p ij )) · I { b p ij ∈ (0 , 1) } + I { b p ij

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment