Robust Fusion of Multi-Band Images with Different Spatial and Spectral Resolutions for Change Detection

Archetypal scenarios for change detection generally consider two images acquired through sensors of the same modality. However, in some specific cases such as emergency situations, the only images available may be those acquired through different kin…

Authors: Vinicius Ferraris, Nicolas Dobigeon, Qi Wei

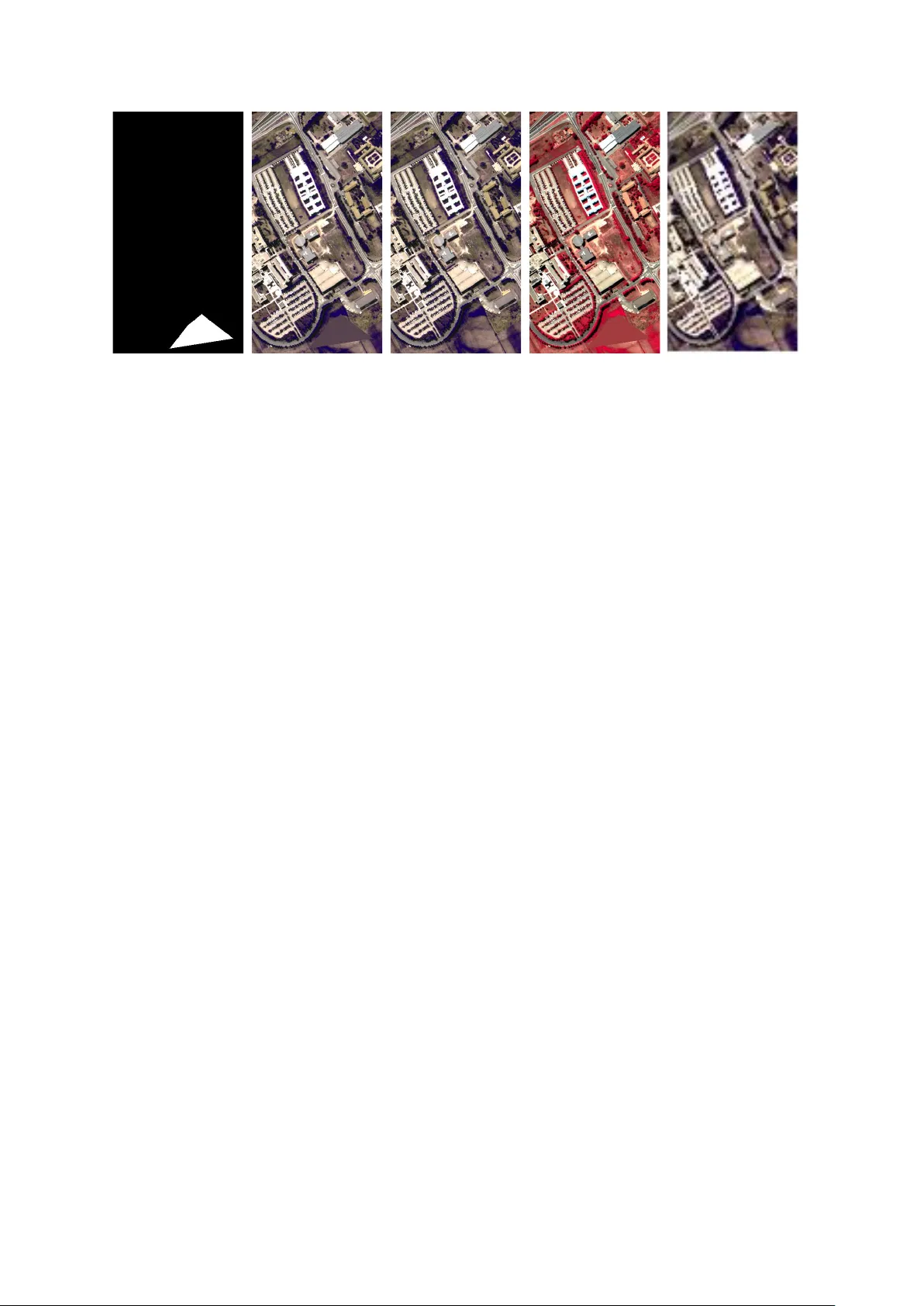

1 Rob ust fusion of multi-band images with dif ferent spatial and spectral resolutions for change detection V inicius Ferraris, Nicolas Dobigeon, Senior Member , IEEE , Qi W ei, Member , IEEE , and Marie Chabert Abstract Archetypal scenarios for change detection generally consider two images acquired through sen- sors of the same modality . Howe ver , in some specific cases such as emergency situations, the only images av ailable may be those acquired through different kinds of sensors. More precisely , this paper addresses the problem of detecting changes between two multi-band optical images characterized by different spatial and spectral resolutions. This sensor dissimilarity introduces additional issues in the context of operational change detection. T o alleviate these issues, classical change detection methods are applied after independent preprocessing steps (e.g., resampling) used to get the same spatial and spectral resolutions for the pair of observ ed images. Nevertheless, these preprocessing steps tend to throw away relev ant information. Con versely , in this paper, we propose a method that more effecti vely uses the av ailable information by modeling the two observed images as spatial and spectral versions of two (unobserved) latent images characterized by the same high spatial and high spectral resolutions. As they cover the same scene, these latent images are expected to be globally similar except for possible changes in sparse spatial locations. Thus, the change detection task is en visioned through a robust multi-band image fusion method which enforces the differences between the estimated latent images to be spatially sparse. This robust fusion problem is formulated as an in verse problem which is iterati vely solv ed using an efficient block-coordinate descent algorithm. The proposed method is applied to real panchormatic/multispectral and hyperspectral images with Part of this work has been submitted to the IEEE Int. Conf. Acoust., Speech and Signal Process. (ICASSP), 2017 [1]. Part of this work has been supported by Coordenação de Aperfeiçoamento de Ensino Superior (CAPES), Brazil, and EU FP7 through the ERANETMED JC-W A TER Program, MapInvPlnt Project ANR-15-NMED-0002-02. V . Ferraris, N. Dobigeon and M. Chabert are with Univ ersity of T oulouse, IRIT/INP-ENSEEIHT , France (email: {vinicius.ferraris, nicolas.dobigeon, marie.chabert}@enseeiht.fr). Q. W ei is with Department of Engineering, University of Cambridge, CB2 1PZ, Cambridge, UK (email: qw245@cam.ac.uk). September 21, 2016 DRAFT 2 simulated realistic changes. A comparison with state-of-the-art change detection methods evidences the accuracy of the proposed strategy . Index T erms Change detection, image fusion, different resolutions, hyperspectral imagery , multispectral im- agery . I . I N T R O D U C T I O N Remote sensing is a reliable technique for Earth surface monitoring and observ ation [2], [3]. One of the most important applications using remotely sensed data is the so-called change detection (CD) problem. CD has many definitions and it is generally considered as the ability of analyzing two or more multi-date (i.e., acquired at different time instants) and possibly multi-source (i.e., acquired by dif ferent sensors) images of the same scene to detect areas where potential changes hav e occurred [4], [5]. Because of the increasing number of satellites and of ne w policies for data distribution, more multi-temporal data becomes av ailable. While it increases the amount of information on the present scene, it highlights some additional issues when designing operational change detection techniques. Each remotely sensed observation image is intimately connected to the acquisition modality pro- viding a particular excerpt of the observed scene according to the sensor specifications. For instance, optical images are generally well suited to map horizontal structures, e.g., land-co ver type at large scales [6]. More particularly , remote sensing images acquired by multi-band optical sensors can be classified according to their spectral and spatial resolutions. The spectral resolution is related to the capability in sensing the electromagnetic spectrum. This term can also refer to the number of spectral bands [3], [7], which generally leads to a commonly adopted classification of these images: panchr omatic (P AN) images, characterized by a low spectral resolution, multispectral (MS) and hyperspectr al (HS) images which sense part of the spectrum with higher precision. Alternati vely , multi-band optical images can be classified with respect to (w .r .t.) their spatial resolution [3], [6]. The concept of spatial resolution should be understand as the capability of representing the smallest object that can be resolved up to a specific pixel size. Images having small resolution size and finer details are generally identified as of (high r esolution (HR) in contrast to low r esolution (LR) images where only coarse features are observable. Because of the ph ysical limitations of optical passiv e sensors, multi-band optical images suffer from a trade-of f between spectral and spatial resolution [2], [8]. T o ensure that any sensor has sufficient amount of energy to guarantee a proper acquisition (in terms of, e.g., signal-to-noise ratio), one of the resolutions must be decreased allowing the other to be increased. For this reason, P AN images are generally characterized by higher spatial resolution and lower spectral resolution than MS or a HS images. September 21, 2016 DRAFT 3 Optical images hav e been the most studied remote sensing modality for CD since the widely admitted additiv e Gaussian modeling of optical optical sensor noises allows CD techniques to be implemented through a simple operation of image differencing [4], [5]. Originally designed for single- band images, CD differencing methods hav e been adapted to handle multi-band images by considering spectral change vectors [9], [10] and transform analysis [11], [12]. The possibility of detecting changes by exploiting both spatial and spectral information is one of the greatest advantages of these multi- band images. Nev ertheless, images of same modality are not always av ailable. In some specific scenarios, for instance consecutive to natural disasters, the av ailability of data imposes observ ation images acquired through different kind of sensors. Such disadvantageous emergenc y situations yet require fast, flexible and accurate methods able to handle also the incompatibilities introduced by the each sensor modality [13]–[16]. Most of the CD classical methods do not support differences in resolutions. Generally , each observed image is independently preprocessed in order to get the same resolution and then classical CD techniques are applied. Howe ver , independent resampling operations do not take into account the pair of observed images and even throw a way important information. Recently , a general CD framework has been proposed in [17] to deal with multi-band images with dif ferent spatial and spectral resolutions based on a 3 -step procedure (fusion, prediction, detection). Instead of independently preprocessing each observed image, this approach consists in recovering a latent (i.e., unobserv ed) HR-HS image containing changed and unchanged regions by fusing both observed images. Then, it predicts pseudo-observ ed images by artificially degrading the estimated HR- HS latent image using the same forward models underlying the actually observed images. As the pairs of predicted and observ ed observ ations hav e the same spatial and spectral resolutions, an y classical multi-band CD method can be finally applied to build a change map. Albeit significantly improving detection performance when compared to crude methods relying on independent preprocessing, the 3 -step sequential formulation appears to be non-optimal for the following twofold reasons: i) any inaccuracies in the fusion step are propagated throughout the subsequent degradation and detection steps, ii) relev ant information re garding the change may be lost during the prediction steps, since it consists in spatially or spectrally degrading the latent images to estimate the pseudo-observed images. Thus, significant improvements in terms of change detection performance may be expected provided one is able to overcome both limitations. In this paper , capitalizing on the general framew ork dev eloped in [17], we show that the CD task can be formulated as a particular instance of the multi-band image fusion problem. Ho wev er , contrary to the 3 -step procedure in [17], the proposed approach jointly estimates a couple of distinct HR-HS latent images corresponding to the two acquisition times as well as the change image. Since the two HR-HS latent images are supposed to represent the same scene, they are expected to share a high le vel of similarity or , equi v alently , to differ only in a few spatial locations. Thus, akin to numerous September 21, 2016 DRAFT 4 robust factorizing models such as robust principal component analysis [18] and rob ust nonnegati ve matrix factorization [19], the tw o observed images are jointly approximated by a standard linear decomposition model complemented with an HR-HS outlier term corresponding to the change image. This so-called CD-driv en r obust fusion of multi-band images is formulated as an in verse problem where, in particular , the outlier term is characterized by a spatial sparsity-inducing regularization. The resulting objective function is solved through the use of a block coordinate descent (BCD) algorithm, which iterati vely optimizes w .r .t. one latent image and the change image. Remarkably , optimizing w .r .t. the latent image boils down to a classical multi-band image fusion step and can be efficiently conducted following the algorithmic solutions proposed in [20]. The CD map can be finally generated from the recov ered HR-HS change image. The paper is or ganized as follows. Section II formulates the change detection problem for multi- band optical image. Section III presents the solution for the formulated problem based on robust fusion. The simulation strategy as well as the results and considerations are present in Section IV. I I . F R O M C H A N G E D E T E C T I O N T O R O B U S T F U S I O N A. Generic forward model Let us consider the image formation process as a sequence of transformations, denoted T [ · ] , of the original scene into an output image. The output image of a particular sensor is referred to as the observed image and denoted Y ∈ R n λ × m where m and n λ are the numbers of pix els and spectral bands in the observed image, respecti vely . It provides a limited version of the original scene with characteristics imposed by the image signal processor (ISP) characterizing the sensor . The original scene can be con veniently represented by an (unknown) latent image of higher spatial and spectral resolutions, X ∈ R m λ × n , where n ≥ m and m λ ≥ n λ are the numbers of pixels and spectral bands, respecti vely , related to the observed image following Y = T [ X ] . (1) The intrinsic sequence of transformations of the sensor over the latent image X can be typically classified as spectral or spatial degradations. On one hand, spatial degradations are related to the spatial characteristics of the sensor such as sampling scheme and optical transfer function. On the other hand, spectral degradations refer to the wa velength sensitivity and the spectral sampling. There are many ways to represent the degradation process. In this paper , is is considered as a sequence of linear operations leading to the follo wing generic forward model [21]–[23] Y = LXR + N (2) where September 21, 2016 DRAFT 5 • L ∈ R n λ × m λ is the spectral degradation matrix, • R ∈ R n × m is the spatial degradation matrix, • N is the additive term comprising sensor noise and modeling errors. In (2), the left-multiplying matrix L ∈ R n λ × m λ degrades the latent image by combination of some spectral bands for each pixel while the right-multiplying matrix R ∈ R n × m degrades the latent image by linear combination of pixels within the same spectral band. The former degradation corresponds to a spectral resolution reduction with respect to the latent image X as in [20], [22], [23]. In practice, this degradation models an intrinsic characteristic of the sensor , namely the spectral response. It can be either learned by cross-calibration or known a priori [23], [24]. Con versely , the spatial degradation matrix R models the combination of different transformations which are specific of the sensor architecture taking into account external factors including wrap, blurring, translation and decimation [20], [24], [25]. In this work, since geometrical transformations such as wrap and translations can be corrected using image co-re gistration techniques in pre-processing steps, only a spatially in variant blurring and a decimation (i.e., subsampling) will be considered. A space- in variant blur can be modeled by a symmetric con volution kernel associated with a sparse symmetric T oeplitz matrix B ∈ R n × n which operates a cyclic con volution on the each individual band [26]. The decimation operation, denoted by the n × m matrix S , corresponds to a uniform downsampling operator 1 of factor d = d r × d c with m = n/d ones on the block diagonal and zeros elsewhere, such that S T S = I m [20]. T o summarize, the overall spatial degradation process corresponds to the matrix composition R = BS ∈ R n × m . The noise corrupting multi-band optical images is generally modeled as additive and Gaussian [2], [5], [20], [27]. Thus the noise matrix N in (2) is assumed to be distributed according to the follo wing matrix normal distribution 2 N ∼ MN n λ ,m ( 0 n λ × m , Λ , Π ) . (3) The row cov ariance matrix Λ carries information regarding the between-band spectral correlation. Follo wing [20], in what follows, this cov ariance matrix Λ will be assumed to be diagonal, which implies that the noise is independent from one band to the other and characterized by a specific v ariance in each band. Conv ersely , the column co variance matrix Π models the noise correlation 1 The corresponding operator S T represents an upsampling transformation by zero-interpolation from m to n . 2 The probability density function p ( X | M , Σ r , Σ r ) of a matrix normal distribution MN r,c ( M , Σ r , Σ c ) is given by [28] p ( X | M , Σ r , Σ r ) = exp ( − 1 2 tr [ Σ − 1 c ( X − M ) T Σ − 1 r ( X − M ) ]) (2 π ) rc/ 2 | Σ c | r/ 2 | Σ r | c/ 2 where M ∈ R r × c is the mean matrix, Σ r ∈ R r × r is the row covariance matrix and Σ c ∈ R c × c is the column covariance matrix. September 21, 2016 DRAFT 6 w .r .t. to the pix el locations. Follo wing a widely admitted hypothesis of the literature, this matrix is assumed to be identity , Π = I m , to reflect the fact the noise is spatially independent. In real applications, both matrices Λ and Π can be estimated by previous calibrations [24]. B. Pr oblem statement Let us denote t j and t i the acquisition times of two co-registered multi-band optical images. It is not assumed any specific information about time ordering, either t i < t j or t i > t j are possible cases. Hence, without loss of generality , the HR-P AN/MS image acquired at time t i is assumed to be a low spectral resolution (i.e., P AN or MS) image of high spatial resolution denoted Y t i HR ∈ R n λ × n . The image acquired at time t j is a LR-HS image denoted Y t j LR ∈ R m λ × m . The problem addressed in this paper consists of detecting significant changes between these two images. This is a challenging task mainly due to the spatial and spectral resolution dissimilarity which prevents any use of simple yet efficient dif ferencing operation [4], [5]. T o alle viate this issue, this work proposes to generalize the CD framework introduced in [17]. More precisely , following the widely admitted forward model described in Section II-A and adopting consistent notations, the observed images Y t i HR and Y t j LR can be related to two HR-HS latent images X t i and X t j , respectiv ely , as follo ws Y t i HR = LX t i + N HR (4a) Y t j LR = X t j BS + N LR . (4b) Note that (4a) and (4b) are a specific double instance of (2). Indeed, the HR-P AN/MS (resp., LR-HS) image Y t i HR (resp., Y t j LR ) is assumed to be only a spectrally (resp., spatially) degraded version of the HR-HS latent image X t i (resp., X t i ) such that both latent images X t i ∈ R m λ × n and X t j ∈ R m λ × n share the same spectral and spatial resolutions which correspond to the highest resolutions of both observed images. Thereby , provided these two latent images can be ef ficiently inferred, any classical dif ferencing technique can be subsequently implemented on them to detect changes, notably at a high spatial resolution. More specifically , it w ould consist of ev aluating an HR-HS change image denoted ∆ X = [∆ x 1 , . . . , ∆ x n ] that would gather information related to any change between the two observed images ∆ X = X t i − X t j (5) where ∆ x p ∈ R m λ denotes the spectral change vector in the p th pixel ( p = 1 , . . . , n ). This spectral change image can be exploited by conducting a pixel-wise change vector analysis (CV A) [29] which exhibits the polar coordinates (i.e., magnitude and direction) of the spectral change vectors. T o spatially locate the changes, a natural approach consists of monitoring the information contained in the magnitude part of this representation [9], [10], [30], by considering the corresponding HR September 21, 2016 DRAFT 7 spectral change energy image e = [ e 1 , . . . , e n ] ∈ R n (6) with e p = k ∆ x p k 2 , p = 1 , . . . , n. (7) When the CD problem in the p th pixel is formulated as the binary hypothesis testing H 0 ,p : no change occurs in the p th pixel H 1 ,p : a change occurs in the p th pixel (8) the pixel-wise statistical test can be written for a given threshold τ as e p H 1 ,p ≷ H 0 ,p τ . (9) The final binary HR CD map denoted d = [ d 1 , . . . , d n ] ∈ { 0 , 1 } n can be deriv ed as d p = 1 if e p ≥ τ ( H 1 ,p ) 0 otherwise ( H 0 ,p ) . (10) When complementary information needs to be extracted from the change image ∆ X , e.g., to identify dif ferent types of changes, the whole polar representation (i.e., both magnitude and direction) can be fully exploited [9], [10]. As a consequence, to solve the multi-band image CD problem, the key issue lies in the joint estimation of the pair of HR-HS latent images X t i , X t j from the forward model (4) or , equi valently , the joint estimation of one of this latent image and the difference image, e.g., X t j , ∆ X . The next paragraph shows that this problem can be formulated as a particular instance of multi-band image fusion. C. Robust multi-band image fusion Linear forw ard models similar to (4) ha ve been extensiv ely in vestigated in the image processing literature for v arious applications. When a unique LR-HS image Y t j LR has been observed at time t j , recov ering the HR-HS latent image X t j from the direct model (4b) can be cast as a superresolution problem [31], [32]. Besides, when a complementary HR-P AN/MS image Y t i HR of lower spectral resolution (i.e., P AN or MS) has been simultaneously acquired at time t i = t j under (4a), the two corresponding latent images are expected to represent exactly the same scene, i.e., ∆ X = 0 or , equi valently , X t i = X t j = X where the time index can be omitted. In such scenario, estimating the common HR-HS latent image X from the two observed images Y HR and Y LR is a multi-band image fusion problem addressed in [20]–[23], [26], [33]–[35], also referred to as MS or HS pansharpening in some specific cases [27]. Whether the problem consists in increasing the resolution of a single image or fusing multiple images of different spatial and spectral resolutions, the underlying objecti ve consists in compensating the energy trade-of f of optical sensors to get highly spatially and spectrally resolved September 21, 2016 DRAFT 8 images. Those problems are often formulated as an in verse problem, which is generally ill-posed or , at least, ill-conditioned. T o overcome this issue, a classical approach consists of penalizing the data fitting terms deriv ed from the linear models (4) and the noise statistics (3) with additional regularizing terms exploiting an y prior information on the latent image. V arious penalizations ha ve been considered in the literature, including T ikhonov regularizations expressed in the image domain [21], [36] or a in a transformed (e.g., gradient) domain [37], [38], dictionary- or patch-based regularizations [26], [31], total variation (TV) [23], [39] or regularizations based on sparse wav elet representations [40], [41]. In this work, we propose to follow a similar route by addressing, in a first step, the CD problem as a linear inv erse problem deri ved from (4). Howe ver , the CD problem addressed here differs from the computational imaging problems discussed above by the fact that two distinct HR-HS latent images X t i and X t j need to be inferred, which makes the in verse problem highly ill-posed. Howe ver , this particular applicati ve scenario of CD yields a natural reparametrization where rele vant prior kno wledge can be con veniently exploited. More precisely , since the two HR-HS latent images are related to the same scene observed at two time instants, they are expected to share a high level of similarity , i.e., the change image ∆ X is expected to be spatially sparse. Thus, instead of jointly estimating the pair X t i , X t j of HR-HS latent images, we take benefit from this crucial information to re write the joint observ ation model (4) as a function of X t j , ∆ X , i.e., Y t i HR = L X t j + ∆ X + N HR (11a) Y t j LR = X t j BS + N LR . (11b) It is worthy to note that this dual observ ation model parametrized by the new pair X t j , ∆ X of images to be inferred can be straightforwardly associated with a particular instance of the multi-band image fusion discussed earlier . Indeed, giv en the HR-HS change image ∆ X and the HR-P AN/MS image observed at time t i , an HR-P AN/MS corr ected image denoted Y t j cHR that would be acquired by the HR-P AN/MS sensor at time t j can be defined as Y t j cHR = Y t i HR − L ∆ X . (12) In such case, the HR forward model (11a) can be easily rewritten, leading to Y t i cHR = LX t j + N HR (13a) Y t j LR = X t j BS + N LR . (13b) This observ ation model (13) defines a standard multi-band image fusion problem for the LR-HS observed image Y t j LR and the corrected HR-P AN/MS image Y t j cHR . Consequently , since the change image ∆ X can be considered as an outlier term, akin to those encountered in several rob ust factorizing models such as robust principal component analysis (RPCA) [18] and robust nonnegati ve factorization September 21, 2016 DRAFT 9 [19] which relies on a similar sparse outlier term, the joint observation model (11) naturally defines a so-called r obust fusion scheme whose objectiv e function is detailed in the next paragraph. D. Robust fusion objective function Because of the additiv e nature and the statistical properties of the noise N HR and N LR , both observed images Y t i HR and Y t j LR can be assumed matrix normally distributed Y t i HR | X t j , ∆ X ∼ MN n λ ,n L X t j + ∆ X , Λ HR , I n Y t j LR | X t j ∼ MN m λ ,m X t j BS , Λ LR , I m . Besides, since both observations are acquired by different modality sensors, the noise, which is sensor-dependent, can be assumed statistically independent. Thus, Y t i HR | X t j , ∆ X and Y t j LR | X t j are also statistically independent and the joint likelihood function p ( Y t i HR , Y t j LR | X t j , ∆ X ) can be written as a simple product of the conditional distributions p ( Y t i HR | X t j , ∆ X ) and p ( Y t j LR | X t j ) . A Bayesian formulation of the rob ust multi-band image fusion problem allows prior information to be introduced to regularize the underlying estimation problem [42]. Bayesian estimators can be deri ved from the joint posterior distribution p ( X t j , ∆ X | Y t i HR , Y t j LR ) ∝ p ( Y t i HR , Y t j LR | X t j , ∆ X ) p ( X t i ) p (∆ X ) (14) where p ( X t i ) and p (∆ X ) correspond to the prior distributions associated with the latent and change HR-HS images, respecti vely , assumed to be a priori independent. Under a maximum a posteriori (MAP) paradigm, the joint MAP estimator n ˆ X t j MAP , ∆ ˆ X MAP o can be deriv ed by minimizing the negati ve log-posterior, leading to the following minimization problem n ˆ X t i MAP , ∆ ˆ X MAP o ∈ Argmin X t j , ∆ X J X t j , ∆ X (15) with J X t j , ∆ X = 1 2 Λ − 1 2 HR Y t i HR − L X t j + ∆ X 2 F + 1 2 Λ − 1 2 LR Y t j LR − X t j BS 2 F + λφ 1 X t j + γ φ 2 (∆ X ) . (16) The regularizing functions φ 1 ( · ) and φ 2 ( · ) can be related to the negati ve log-prior distributions of the HR-HS latent and change images, respectively , and the parameters λ and γ tune the amount of corresponding penalizations in the overall objective function J ( X t j , ∆ X ) . These functions should be carefully designed to exploit any prior knowledge regarding the parameters of interest. As discussed in Section II-C, numerous regularizations can be advocated for the HR-HS latent image X t j . In this work, a Tikhono v regularization proposed in [21] has been adopted φ 1 X t j = X t j − ¯ X t j 2 F (17) September 21, 2016 DRAFT 10 where ¯ X t j refers to a crude estimate of X t j , e.g., resulting from a naiv e spatial interpolation of the observed LR-HS image Y t j LR . This choice has been proven to maintain computational efficiency while providing accurate results [27]. Additionally , a subspace-based representation can also be adopted to enforce X t j to liv e in a previously identified subspace, as advocated in [43] and [23]. Con versely and more critically , a specific attention should be paid to the regularizing function φ 2 ( · ) . This function should reflect the fact that most of the pixels are expected to remain unchanged in X t i and X t j , i.e., most of the columns of the change image ∆ X are expected to be null vectors. This noticeable property can be easily translated by promoting the sparsity of the spectral change energy image e defined by (6). As a consequence, the regularizing function φ 2 ( · ) is chosen as the sparsity-inducing ` 1 -norm of the change energy image e or , equiv alently , as the ` 2 , 1 -norm of the change image φ 2 (∆ X ) = k ∆ X k 2 , 1 = n X p =1 k ∆ x p k 2 . (18) This regularization is a specific instance of the non-ov erlapping group-lasso penalization [44] which has been considered in various applications to promote structured sparsity [19], [45]–[50]. The next section describes an iterativ e algorithm which solves the minimization problem in (15). I I I . M I N I M I Z A T I O N A L G O R I T H M Computing the joint MAP estimator of the HR-HS latent image X t j at time t j and of the change image ∆ X can be achieved by solving the minimization problem in (15). Howe ver , no closed-form solution can be deri ved for this problem. Thus this section presents a minimization algorithm which iterati vely con ver ges to this solution. It consists in sequentially solving the problem w .r .t. to each indi vidual v ariables X t j and ∆ X . This block coordinate descent algorithm is summarized in Algo. 1 whose main steps (fusion and correction) are detailed in what follows. Algorithm 1 BCD algorithm for robust multi-band image fusion Input: Y t j LR , Y t i HR , L , B , S , Λ HR , Λ LR . 1: Set ∆ X 1 . 2: f or k = 1 , . . . , K do 3: X t j k +1 = arg min X t j J ( X t j , ∆ X k ) 4: ∆ X k +1 = arg min ∆ X J ( X t j k +1 , ∆ X ) 5: end for Output: ˆ X t j MAP , X t j K +1 and ∆ ˆ X MAP , ∆ ˆ X K +1 September 21, 2016 DRAFT 11 A. Fusion: optimization w .r .t X t j At the k th iteration of the BCD algorithm, let assume that the current value of the HR-HS change image is denoted ∆ X k . As suggested in Section II-C, an HR-P AN/MS corrected image Y t j cHR ,k that would be observed at time t j gi ven the HR-P AN/MS image Y t i HR observed at time t i and the HR-HS change image ∆ X k can be introduced as Y t j cHR ,k = Y t i HR − L ∆ X k . (19) Updating the current value of the HR-HS latent image consists in minimizing w .r .t. X t j the partial function J 1 X t j , J X t j , ∆ X k = Λ − 1 2 LR Y t j LR − X t j BS 2 F + Λ − 1 2 HR Y t j cHR ,k − LX t j 2 F + λφ 1 X t j . (20) As noticed earlier , this sub-problem boils do wn to the multi-band image fusion which has receiv ed considerable attention in the recent image processing and remote sensing literature [20], [21], [23], [26], [27], [43]. The two difficulties arising from this formulation lies in the high dimension of the optimization problem and in the fact that the sub-sampling operator S pre vents any fast resolution in the frequency domain by diagonalization of the spatial degradation matrix R = BS . Howe ver , with the particular choice (17) of the regularization function φ 1 ( · ) adopted in this paper , a closed-form solution can still be deri ved and efficiently implemented. It consists in solving a matrix Sylvester equation [20] of the form C 1 X t j + X t j C 2 = C 3 (21) where the matrices C 1 , C 2 and C 3 depend on the quantities in volved in the problem, i.e., the virtual and observed images, the degradation operators, the noise cov ariance matrices and the spatially interpolated image defined in (17) (see [20] for more details). Note that when a more complex regularization function φ 1 ( · ) is considered (e.g., TV or sparse representation ov er a dictionary), iterati ve algorithmic strategies can be adopted to approximate the minimizer of J 1 X t j . B. Corr ection: optimization w .r .t ∆ X Follo wing the same strategy as in [17], let introduce the pr edicted HR-P AN/MS image Y t j pHR ,k = LX t j k (22) that would be observed at time index t j by the HR-P AN/MS sensor given its spectral response L and the current state of the HR-HS latent image X t j k at the k th iteration of the BCD algorithm. Similarly to (5), the predicted HR-P AN/MS change image can thus be defined as ∆ Y pHR ,k = Y t i HR − Y t j pHR ,k . (23) September 21, 2016 DRAFT 12 The objecti ve function (16) w .r .t ∆ X is then rewritten by combining (22) and (23) with (16), leading to J 2 (∆ X ) , J ( X t j k , ∆ X ) = Λ − 1 2 HR (∆ Y pHR ,k − L ∆ X ) 2 F + γ φ 2 (∆ X ) . (24) W ith the specific CD-driv en choice of φ 2 ( · ) in (18), minimizing J 2 (∆ X ) is an ` 2 , 1 -penalized least square problem. It is characterized by the sum of a con ve x and differentiable data fitting term with β -Lipschitz continuous gradient ∇ f ( · ) f (∆ X ) , Λ − 1 2 HR (∆ Y pHR ,k − L ∆ X ) 2 F (25) and a con vex but non-smooth penalization g (∆ X ) , γ φ 2 (∆ X ) = γ k ∆ X k 2 , 1 . (26) V arious algorithms ha ve been proposed to solv e such con vex optimization problems including forward- backward splitting [51], [52], Douglas-Rachford splitting [52], [53] and alternating direction method of multipliers [54], [55]. Since the proximal operator related to g ( · ) can be efficiently computed (see belo w), in this work, we propose to resort to an iterativ e forward-backward algorithm which has sho wn to provide the fastest yet reliable results. This algorithmic scheme is summarized in Algo. 2. It relies on a forward step which consists in conducting a gradient descent using the data-fitting function f ( · ) in (25), and a backward step relying on the proximal mapping associated with the penalizing function g ( · ) in (26). Algorithm 2 Correction step: forward-backward algorithm Input: ∆ X k , ∆ Y pHR ,k , Λ HR , L , { η j } J j =1 Set V 1 , ∆ X k 2: f or j = 1 , . . . , J do % forward step 4: U j +1 = V j − η j ∇ f ( V j ) % backwar d step 6: V j +1 = pro x η j g ( U j +1 ) end for Output: ∆ X k +1 , V J +1 Since the HR-P AN/MS observed image has only a few spectral bands (e.g., n λ ∼ 10 ), the spectral degradation matrix L ∈ R n λ × m λ is a fat (and generally full-ro w rank) matrix. Thus, the corresponding gradient operator ∇ f ( · ) defining the forward step (see line 4 of Algo. 2) can be easily and ef ficiently September 21, 2016 DRAFT 13 computed. Con versely , the proximal operator associated with g ( · ) in (26) and required during the backward step (see line 6 of Algo. 2) is defined as prox η g ( U ) = arg min Z γ k Z k 2 , 1 + 1 2 η k Z − U k 2 F (27) for some η > 0 . The function g ( U ) in (26) can be split as P n p =1 g p ( u p ) with, for each column, g p ( · ) = γ k·k 2 . Based on the separability property of proximal operators [55], the operator (27) can be decomposed and computed for each pixel location p ( p = 1 , . . . , n ) as prox η g ( U ) p = prox η g p ( u p ) (28) where the notations [ · ] p stands for the p th column. Thus, only the proximal operator associated with the Euclidean distance induced by the ` 2 -norm is necessary . The Moreau decomposition [55] u p = prox η g ( u p ) + η prox η − 1 g ∗ p η − 1 u p (29) establishes a relationship between the proximal operators of the function g p ( · ) and its conjugate g ∗ p ( · ) . When the function g ( · ) is a general norm, its conjugate corresponds to the indicator function into the ball B defined by its dual norm [48], [55], leading to prox η g ( u p ) = u p − η P B u p η (30) where P B ( · ) denotes the projection. When g ( · ) is defined by (26), since the ` 2 -norm is self-dual, this projection is P B ( u p ) = γ u p k u p k 2 if k u p k 2 > γ u p otherwise. (31) Consequently , replacing (31) in (30), the proximal operator associated with the function g p ( · ) in (28) is prox η g p ( u p ) = 1 − η γ k u p k 2 u p if k u p k 2 > η γ 0 otherwise. (32) T o conclude, the correction procedure can be interpreted as first a gradient descent step for spectral deblurring of the HR-HS change image from the HR-P AN/MS predicted change image (forward step), follo wed by a soft-thresholding of the resulting HR-HS change image to promote spatial sparsity (backward step). I V . E X P E R I M E N T S A. Simulation framework Real dataset for assessing performance of CD algorithms is rarely av ailable. Indeed, this assessment requires a couple of images acquired at tw o different dates, geometrically and radiometrically pre- corrected, presenting changes and, for the scenario considered in this paper , coming from two September 21, 2016 DRAFT 14 (a) d HR (b) X t i (c) X t j (d) Y t i HR (e) Y t j LR Fig. 1: One particular simulation configuration: (a) the HR change mask d HR , (b)-(c) the HR-HS latent images X t i and X t j , (d)-(e) the spectrally degraded version HR-MS observed image Y t i HR and the spatially degraded LR-HS observed image Y t j LR . Note that, in this particular configuration, the change-inducing function ϑ t j ( · , d HR ) is the identity operator (i.e., A t j = A ref ) since it does not apply any change into the corresponding HR-HS latent image X t j while the function ϑ t i ( · , d HR ) includes a triangular re gion of pixels in X t i af fected by changes. Moreo ver , the HR observed image is here an MS image. dif ferent optical sensors. T o alleviate this issue, inspired by the well-known W ald’ s ev aluation protocol dedicated to pansharpening algorithms [56], a frame work has been proposed in [17] to assess the performance of CD algorithms when dealing with optical images of different spatial and spectral resolutions. This frame work only requires a single HR-HS reference image X ref and generates a pair of latent HR-HS images X t i and X t j resulting from a unmixing-mixing process. This process allo ws synthetic yet realistic changes to be incorporated within one of these latent images, w .r .t. a pre-defined binary reference HR change mask d HR ∈ R n locating the pixels af fected by these changes and further used to assess the performance of the CD algorithms. This procedure allows v arious physically-inspired changes to be considered, e.g., by tuning the relative abundance of a each endmember or replacing one of them my another . This protocol is briefly described below (see [17] for more details). 1) Refer ence image: The HR-HS reference image X ref used in the experiments reported in this paper is a 610 × 330 × 115 HS image of the Pavia Univ ersity , Italy , acquired by the reflectiv e optics system imaging spectrometer (R OSIS) sensor . This image has undergone a pre-precessing to smooth the atmospheric effects of v apor water absorption by removing some bands. Thus the final HR-HS reference image is of size 610 × 330 × 93 . September 21, 2016 DRAFT 15 2) Generating the changes: Using the same procedure proposed in [17], the HR-HS reference image X ref ∈ R m λ × n has been linearly unmixed to define the reference matrix M ref ∈ R m λ × R of R endmember spectral signatures and the corresponding reference abundance matrix A ref ∈ R R × n such that X ref ≈ M ref A ref . The two latent HR-HS images X t i and X t j are then computed as linear mixture of the endmembers in M ref with corresponding ab undance matrices A t i and A t j , respecti vely , deri ved from the reference abundances A ref and the change mask d HR , i.e., X t i = M ref A t i and X t j = M ref A t j with A t i = ϑ t i A ref , d HR and A t j = ϑ t j A ref , d HR where the two change-inducing functions ϑ t · : R R × n × R n → R R × n are defined to simulate realistic changes in some pixels of the HR-HS latent images. Three sets of 75 predefined change masks hav e been designed according to three specific change rules introduced in [17]. For each simulated pair X t i , X t j , one of the tw o functions ϑ t · ( · , d HR ) is defined as a “no-change” operator , i.e., ϑ t · ( A ref , d HR ) = A ref , which leads to an ov erall set of 450 simulated pairs X t i , X t j of HR-HS latent images. 3) Generating the observed images: The HR-P AN/MS observed image Y t i HR is obtained by spec- trally degrading the corresponding HR-HS latent image X t i . T wo scenarios are considered. Scenario 1 consists in av eraging the first 43 bands of the HR-HS latent image to produce an HR-P AN image. Con versely , Scenario 2 considers an HR-MS image by spectrally filtering the HR-HS latent image X t i with a 4 -band LANDSA T -like spectral response. Moreov er , to generate a spatially degraded image Y t j LR , the respecti ve latent image X t i has been blurred by a 5 × 5 Gaussian kernel and subsequently equally do wn-sampled in the vertical and horizontal directions with a down-sampling ratio d = 5 . T o illustrate, Fig. 1 shows one of the 450 simulation configurations used during the experiments. B. Compar ed methods The proposed robust fusion-based CD technique has been compared to four methods able to deal with optical images of dif ferent spatial and spectral resolutions. The first one has been proposed in [17] and also relies on a fusion-based approach. Up to the authors’ knowledge, it was the first operational CD technique able to operate with multi-band optical images of different spatial and spectral images. Contrary to the model (4) proposed in this paper , it consists in recov ering a common latent image by fusing the two observed images and then predicting an HR-P AN/MS image ˆ Y F ,t i HR from the underlying forward model. An HR-P AN/MS change image ∆ Y F ,t i HR has been then computed as in (5) from the pair of HR-P AN/MS observed and predicted images n Y t i HR , ˆ Y F ,t i HR o . Finally , as September 21, 2016 DRAFT 16 recommended in [17], a spatially-regularized CV A (sCV A) similar to the decision rule detailed in Section II-B has been conducted on ∆ Y F ,t i HR to produce an estimated HR CD mask denoted ˆ d F . The second method aims at producing an HR-P AN/MS predicted image by successiv e spatial superresolution and spectral degradation. More precisely , an HR-HS latent image is first recovered by conducting a band-wise spatial superresolution of the observed LR-HS Y t j LR follo wing the fast method in [32]. Then this latent image is spectrally degraded according to produce an HR-P AN/MS predicted image ˆ Y SD ,t j HR . Similarly to the pre vious fusion-based method, sCV A has been finally conducted on the pair n Y t i HR , ˆ Y SD ,t j HR o to produce an HR CD mask denoted ˆ d SD . The third CD method applies the same procedure with a rev erse order of spatial superresolution and spectral de gradation, and produces produces an HR change mask denoted ˆ d DS from the pair of HR-P AN/MS images n Y t i HR , ˆ Y DS ,t j HR o . The fourth CD method, referred to as the worst-case (WC) as in [17], build a LR change mask ˆ d WC by crudely conducting a sCV A on a spatially degraded version of the HR-P AN/MS image and a spectrally degraded version of the LR-HS image. C. F igur es-of-merit The CD performances of these four methods, as well as the performance of the proposed rob ust fusion-based method whose HR change mask is denoted ˆ d RF , ha ve been visually assessed from empirical recei ver operating characteristics (R OC), representing the estimated pixel-wise probability of detection ( PD ) as a function of the probability of false alarm ( PF A ). Moreover , two quantitativ e criteria deri ved from these R OC curves hav e been computed, namely , i ) the area under the curve (A UC), corresponding to the integral of the ROC curve and ii ) the distance between the no detection point ( P F A = 1 , PD = 0) and the point at the interception of the R OC curve with the diagonal line defined by PF A = 1 − PD . For both metrics, greater the criterion, better the detection. T ABLE I: Scenarios 1 & 2: quantitative detection performance (A UC and distance). ˆ d RF ˆ d RF ˆ d WC ˆ d DS ˆ d SD Scenario 1 A UC 0 . 9936 0 . 9853 0 . 9777 0 . 8623 0 . 8469 Dist. 0 . 9896 0 . 9578 0 . 9249 0 . 7832 0 . 7716 Scenario 2 A UC 0 . 9974 0 . 9919 0 . 9809 0 . 8881 0 . 8915 Dist. 0 . 9944 0 . 9590 0 . 9356 0 . 8141 0 . 8197 D. Results 1) Scenario 1 (HR-P AN vs. LR-HS): The ROC curv es depicted in Fig. 2 with corresponding metrics in T able I (first two rows) correspond to the CD results obtained from a pair of HR-P AN and LR-HS September 21, 2016 DRAFT 17 PFA 0 0.2 0.4 0.6 0.8 1 PD 0 0.2 0.4 0.6 0.8 1 ˆ d RF ˆ d F ˆ d WC ˆ d DS ˆ d SD Fig. 2: Scenario 1 (HR-P AN vs. LR-HS): R OC curves. observed images. Clearly , the proposed robust fusion-based CD technique outperforms the four other CD techniques. More importantly , it provides almost perfect detections ev en for very low PF A, i.e., for very lo w energy changes. Note that the CD mask d WC estimated by the worst-case method is defined at an LR. 2) Scenario 2 (HR-MS vs. LR-HS): Applying the same procedure of Scenario 1 but now considering an HR-MS observed image instead of the HR-P AN observ ed image leads to very similar ov erall performance. The R OC plot is depicted in Fig. 3 with corresponding metrics in T able I (last two ro ws). As in Scenario 1, comparing curves in Fig. 2 shows that the proposed method offers a higher precision ev en when analyzing a lower spectral resolution HR observed image. As an additional result, for Scenario 2, Fig. 4 compares the abilities of detecting changes of September 21, 2016 DRAFT 18 PFA 0 0.2 0.4 0.6 0.8 1 PD 0 0.2 0.4 0.6 0.8 1 ˆ d RF ˆ d F ˆ d WC ˆ d DS ˆ d SD Fig. 3: Scenario 2 (HR-MS vs. LR-HS): R OC curves. decreasing size of the proposed method against the fusion-based CD method [17] and the worst-case CD method. Figure 4(a) and 4(b) shows the observed image pair Y t i HR and Y t j LR containing multiple changes with size varying from 1 × 1 - pixel to 61 × 61 -pixels, with the corresponding change mask d HR presented in Fig. 4(c). Figures 4(d) and 4(e) present the change masks ˆ d F and ˆ d WC recov ered by the two competing methods, respectively , while the CD mask ˆ d RF recov ered by the proposed robust fusion-based method is reported in Fig. 4(f) sho ws the proposed CD. For each technique, the decision threshold τ required in the CV A in (10) has been tuned to reach the higher distance v alue in the corresponding ROC curves. The first advantage of the proposed method is a significant decrease of the number of false alarm which are due to propagated errors when implementing the two other methods. Moreover , these results prov es once again that the proposed method achiev es a better September 21, 2016 DRAFT 19 detection rate with a higher resolution, ev en when considering e xtremely localized change regions. Remaining false alarms only occur near edges between change and no-change regions of small size due to the difference of spatial resolutions and the width of the blur k ernel. Note also that the CD mask estimated by the worst-case method is of coarse scale since based on the comparison of two LR-MS images. (a) Y t i HR (b) Y t j LR (c) d HR (d) ˆ d F (e) ˆ d WC (f) ˆ d RF Fig. 4: CD precision for Scenario 2 (HR-MS vs. LR-HS): (a) HR-MS observed image Y t i HR , (b) LR-HS observed image Y t j LR , (c) actual change mask d HR , (d) change mask ˆ d F estimated by the fusion-based approach [17], (e) change mask ˆ d WC estimated by the worst-case approach, (f) change mask ˆ d RF estimated by the proposed robust fusion-based approach. V . C O N C L U S I O N This paper proposed a robust fusion-based change detection technique to handle two multi-band optical observed images of different spatial and spectral resolutions. The technique w as based on the definition of two high resolution hyperspectral latent images related to the observ ed images via a double physically-inspired forward model. The dif ference between these tw o latent images was September 21, 2016 DRAFT 20 assumed to be spatially sparse, implicitly locating the changes at a high resolution scale. Inferring these two latent images was formulated as an in verse problem which w as solv ed within a 2 -step iterati ve scheme. This algorithmic strategy amounted to solve a standard fusion problem and an ` 2 , 1 - penalized spectral deblurring step. Contrary to the methods already proposed in the literature, modeling errors were not anymore propagate in-between steps. A simulation protocol allowed the performance of the proposed technique in terms of detection and precision to be assessed and compared with the performance of various algorithms. The detection rate as well as the accuracy of this method was clearly impro ved by this robust-fusion based algorithm. Future works include the detection of change between optical and non-optical data. R E F E R E N C E S [1] V . Ferraris, N. Dobigeon, Q. W ei, and M. Chabert, “Change detection between multi-band images using a robust fusion-based approach, ” in Pr oc. IEEE Int. Conf. Acoust., Speech and Signal Pr ocess. (ICASSP) , 2016, submitted. [2] C. Elachi and J. V an Zyl, Introduction to the physics and techniques of r emote sensing , 2nd ed., ser . Wile y series in remote sensing. Hoboken, N.J.: W iley-Interscience, 2006. [3] J. B. Campbell and R. H. W ynne, Introduction to remote sensing , 5th ed. New Y ork: Guilford Press, 2011. [4] A. Singh, “Review Article Digital change detection techniques using remotely-sensed data, ” Int. J. Remote Sens. , vol. 10, no. 6, pp. 989–1003, June 1989. [5] F . Bovolo and L. Bruzzone, “The time variable in data fusion: A change detection perspectiv e, ” IEEE Geosci. Remote Sens. Mag. , vol. 3, no. 3, pp. 8–26, Sept. 2015. [6] M. Dalla Mura, S. Prasad, F . Pacifici, P . Gamba, J. Chanussot, and J. A. Benediktsson, “Challenges and Opportunities of Multimodality and Data Fusion in Remote Sensing, ” Pr oc. IEEE , vol. 103, no. 9, pp. 1585–1601, Sept. 2015. [7] D. Landgrebe, “Hyperspectral image data analysis, ” IEEE Signal Pr ocess. Mag. , vol. 19, no. 1, pp. 17–28, 2002. [8] J. C. Price, “Spectral band selection for visible-near infrared remote sensing: spectral-spatial resolution tradeoffs, ” IEEE T rans. Geosci. Remote Sens. , vol. 35, no. 5, pp. 1277–1285, 1997. [9] F . Bo volo and L. Bruzzone, “ A theoretical framework for unsupervised change detection based on change v ector analysis in the polar domain, ” IEEE T rans. Geosci. Remote Sens. , vol. 45, no. 1, pp. 218–236, Jan. 2007. [10] F . Bov olo, S. Marchesi, and L. Bruzzone, “ A frame work for automatic and unsupervised detection of multiple changes in multitemporal images, ” IEEE T rans. Geosci. Remote Sens. , v ol. 50, no. 6, pp. 2196–2212, June 2012. [11] A. A. Nielsen, K. Conradsen, and J. J. Simpson, “Multi variate alteration detection (MAD) and MAF postprocessing in multispectral, bitemporal image data: New approaches to change detection studies, ” Remote Sens. Envir onment , vol. 64, no. 1, pp. 1–19, 1998. [12] A. A. Nielsen, “The Regularized Iteratively Reweighted MAD Method for Change Detection in Multi- and Hyperspectral Data, ” IEEE T rans. Image Pr ocess. , vol. 16, no. 2, pp. 463–478, Feb. 2007. [13] J. Inglada, “Similarity measures for multisensor remote sensing images, ” in Pr oc. IEEE Int. Conf. Geosci. Remote Sens. (IGARSS) , vol. 1. IEEE, 2002, pp. 104–106. [14] V . Alberga, M. Idrissa, V . Lacroix, and J. Inglada, “Performance estimation of similarity measures of multi-sensor images for change detection applications, ” in Pr oc. IEEE Int. W orkshop Analysis Multitemporal Remote Sensing Images (MultiT emp) . Leuven: IEEE, 2007, pp. 1 – 5. [15] G. Mercier, G. Moser , and S. Serpico, “Conditional copula for change detection on heterogeneous sar data, ” in Pr oc. IEEE Int. Conf. Geosci. Remote Sens. (IGARSS) . IEEE, 2007, pp. 2394–2397. September 21, 2016 DRAFT 21 [16] J. Prendes, M. Chabert, F . Pascal, A. Giros, and J.-Y . T ourneret, “ A new multiv ariate statistical model for change detection in images acquired by homogeneous and heterogeneous sensors, ” IEEE T rans. Image Pr ocess. , v ol. 24, no. 3, pp. 799–812, 2015. [17] V . Ferraris, N. Dobigeon, Q. W ei, and M. Chabert, “Detecting changes between optical images of different spatial and spectral resolutions: a fusion-based approach, ” 2016, submitted. [18] E. J. Candés, X. Li, Y . Ma, and J. Wright, “Robust principal component analysis?” Journal of the ACM (JA CM) , vol. 58, no. 3, p. 11, 2011. [19] C. Févotte and N. Dobigeon, “Nonlinear hyperspectral unmixing with robust nonnegati ve matrix factorization, ” IEEE T rans. Image Pr ocess. , vol. 24, no. 12, pp. 4810–4819, 2015. [20] Q. W ei, N. Dobigeon, and J.-Y . T ourneret, “Fast Fusion of Multi-Band Images Based on Solving a Sylvester Equation, ” IEEE T rans. Image Pr ocess. , vol. 24, no. 11, pp. 4109–4121, Nov . 2015. [21] ——, “Bayesian Fusion of Multi-Band Images, ” IEEE J. Sel. T opics Signal Process. , vol. 9, no. 6, pp. 1117–1127, Sept. 2015. [22] N. Y okoya, T . Y airi, and A. Iwasaki, “Coupled nonnegati ve matrix factorization unmixing for hyperspectral and multispectral data fusion, ” IEEE T rans. Geosci. Remote Sens. , v ol. 50, no. 2, pp. 528–537, Feb . 2012. [23] M. Simões, J. Bioucas Dias, L. Almeida, and J. Chanussot, “ A conv ex formulation for hyperspectral image superresolution via subspace-based regularization, ” IEEE T rans. Geosci. Remote Sens. , vol. 6, no. 53, pp. 3373–3388, June 2015. [24] N. Y okoya, N. Mayumi, and A. Iwasaki, “Cross-Calibration for Data Fusion of EO-1/Hyperion and T erra/ASTER, ” IEEE J. Sel. T opics Appl. Earth Observations Remote Sens. , vol. 6, no. 2, pp. 419–426, April 2013. [25] F . Heide, O. Gallo, M. Steinber ger, J. Liu, Y .-T . Tsai, W . Heidrich, M. Rouf, K. Egiazarian, D. Pajak, J. Kautz, D. Reddy , and K. Pulli, “FlexISP: A Flexible Camera Image Processing Framew ork, ” ACM T ransactions on Graphics (TOG) - Proceedings of A CM SIGGRAPH Asia 2014 , vol. 33, no. 6, 2014. [26] Q. W ei, J. Bioucas-Dias, N. Dobigeon, and J.-Y . T ourneret, “Hyperspectral and multispectral image fusion based on a sparse representation, ” IEEE T rans. Geosci. Remote Sens. , v ol. 53, no. 7, pp. 3658–3668, 2015. [27] L. Loncan, L. B. de Almeida, J. M. Bioucas-Dias, X. Briottet, J. Chanussot, N. Dobigeon, S. Fabre, W . Liao, G. A. Licciardi, M. Simoes, J.-Y . T ourneret, M. A. V eganzones, G. V iv one, Q. W ei, and N. Y okoya, “Hyperspectral pansharpening: A revie w , ” IEEE Geosci. Remote Sens. Mag . , vol. 3, no. 3, pp. 27–46, Sept. 2015. [28] A. K. Gupta and D. K. Nagar , Matrix V ariate Distribution , ser . Monographs and Surveys in Pure and Applied Mathematics. Chapman and Hall, 1999, no. 104. [29] R. D. Johnson and E. S. Kasischke, “Change vector analysis: A technique for the multispectral monitoring of land cov er and condition, ” Int. J. Remote Sens. , v ol. 19, no. 3, pp. 411–426, Jan. 1998. [30] A. Singh, “Digital change detection techniques using remotely-sensed data, ” Int. J. Remote Sens. , vol. 10, no. 6, pp. 989–1003, 1989. [31] Jianchao Y ang, J. Wright, T . S. Huang, and Y i Ma, “Image super-resolution via sparse representation, ” IEEE T rans. Image Process. , vol. 19, no. 11, pp. 2861–2873, Nov . 2010. [32] N. Zhao, Q. W ei, A. Basarab, N. Dobigeon, D. Kouame, and J.-Y . T ourneret, “Fast Single Image Super-Resolution Using a New Analytical Solution for ` 2 – ` 2 Problems, ” IEEE T rans. Image Pr ocess. , vol. 25, no. 8, pp. 3683–3697, Aug. 2016. [33] R. C. Hardie, M. T . Eismann, and G. L. Wilson, “MAP estimation for hyperspectral image resolution enhancement using an auxiliary sensor, ” IEEE T rans. Image Pr ocess. , vol. 13, no. 9, pp. 1174–1184, Sept. 2004. [34] M. T . Eismann and R. C. Hardie, “Hyperspectral resolution enhancement using high-resolution multispectral imagery with arbitrary response functions, ” IEEE T rans. Image Pr ocess. , vol. 43, no. 3, pp. 455–465, March 2005. September 21, 2016 DRAFT 22 [35] Y . Zhang, S. De Backer , and P . Scheunders, “Noise-resistant wav elet-based Bayesian fusion of multispectral and hyperspectral images, ” IEEE T rans. Geosci. Remote Sens. , vol. 47, no. 11, pp. 3834–3843, Nov . 2009. [36] M. Ebrahimi and E. R. Vrscay , “Regularization schemes in volving self-similarity in imaging inverse problems, ” in J. Physics: Conf. Series , 2008. [37] Y .-W . T ai, S. Liu, M. S. Brown, and S. Lin, “Super resolution using edge prior and single image detail synthesis, ” in Pr oc. IEEE Conference on Computer V ision and P attern Recognition (CVPR) , 2010, pp. 2400 – 2407. [38] J. Sun, J. Sun, Z. Xu, and H.-Y . Shum, “Gradient profile prior and its applications in image super-resolution and enhancement, ” IEEE T rans. Image Pr ocess. , vol. 20, no. 6, pp. 1529 – 1542, 2011. [39] H. A. Aly and E. Dubois, “Image up-sampling using total-variation regularization with a new observation model, ” IEEE T rans. Image Pr ocess. , vol. 14, no. 10, pp. 1647–1659, 2005. [40] C. V . Jiji, M. V . Joshi, and S. Chaudhuri, “Single-frame image super-resolution using learned wa velet coefficients, ” Int. J. Imaging Syst. T echnol. , vol. 14, pp. 105–112, 2004. [41] J. M. Bioucas-Dias, “Bayesian wa velet-based image decon volution: A GEM algorithm exploiting a class of heavy-tailed priors, ” IEEE T rans. Image Pr ocess. , vol. 15, no. 4, pp. 937–951, 2006. [42] J. Idier , Bayesian appr oach to in verse problems , J. Idier, Ed. ISTE ; Wile y , 2008. [43] Q. W ei, N. Dobigeon, and J.-Y . T ourneret, “Bayesian fusion of multispectral and hyperspectral images using a block coordinate descent method, ” in Pr oc. IEEE GRSS W orkshop Hyperspectral Image SIgnal Pr ocess.: Evolution in Remote Sens. (WHISPERS) , 2015. [44] F . Bach, R. Jenatton, J. Mairal, and G. Obozinski, Con vex Optimization with Spar sity-Inducing Norms , ser . Optimization for Machine Learning. MIT Press, 2011. [45] S. Cotter, B. Rao, Kjersti Engan, and K. Kreutz-Delgado, “Sparse solutions to linear in verse problems with multiple measurement vectors, ” IEEE T rans. Signal Process. , vol. 53, no. 7, pp. 2477–2488, July 2005. [46] C. Ding, D. Zhou, X. He, and H. Zha, “R1-PCA: rotational in variant L1-norm principal component analysis for robust subspace factorization, ” in Pr oc. Int. Conf. Machine Learning (ICML) , 2006, pp. 281–288. [47] J. Liu, S. Ji, and J. Y e, “Multi-task feature learning via efficient L2,1-norm minimization, ” Uncertainty in Artificial Intelligence , 2009. [48] S. Wright, R. Nowak, and M. Figueiredo, “Sparse Reconstruction by Separable Approximation, ” IEEE T rans. Signal Pr ocess. , vol. 57, no. 7, pp. 2479–2493, July 2009. [49] F . Nie, H. Huang, X. Cai, and C. H. Ding, “Efficient and robust feature selection via joint l2, 1-norms minimization, ” in Advances in neural information processing systems , 2010, pp. 1813–1821. [50] H. Lu, X. Long, and J. Lv , “ A fast algorithm for recovery of jointly sparse vectors based on the alternating direction methods, ” in International Confer ence on Artificial Intelligence and Statistics , 2011, pp. 461–469. [51] P . L. Combettes and V . R. W ajs, “Signal recovery by proximal forward-backw ard splitting, ” Multiscale Modeling & Simulation , vol. 4, no. 4, pp. 1168–1200, 2005. [52] P . L. Combettes and J.-C. Pesquet, “Proximal Splitting Methods in Signal Processing, ” Fixed-P oint Algorithm for In verse Pr oblems in Science and Engineering , no. Springer Optimization and Its Applications, 2011. [53] ——, “ A Douglas-Rachford splitting approach to nonsmooth conv ex variational signal recovery , ” IEEE J. Sel. T opics Signal Pr ocess. , vol. 1, no. 4, pp. 564–574, 2007. [54] S. Bo yd, “Distributed optimization and statistical learning via the alternating direction method of multipliers, ” F oundations and T r ends in Machine Learning , vol. 3, no. 1, pp. 1–122, 2010. [55] N. Parikh and S. Boyd, “Proximal Algorithms, ” F oundations and T rends in Optimization , vol. 1, no. 3, pp. 123–231, 2013. [56] L. W ald, T . Ranchin, and M. Mangolini, “Fusion of satellite images of different spatial resolutions: assessing the quality of resulting images, ” Photogrammetric engineering and r emote sensing , vol. 63, no. 6, pp. 691–699, 1997. September 21, 2016 DRAFT

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment