Computing Functions of Random Variables via Reproducing Kernel Hilbert Space Representations

We describe a method to perform functional operations on probability distributions of random variables. The method uses reproducing kernel Hilbert space representations of probability distributions, and it is applicable to all operations which can be…

Authors: Bernhard Sch"olkopf, Krikamol Mu, et

Computing Functions of Random V ariables via Repr oducing K er nel Hilbert Space Repr esentations Bernhard Sch ¨ olkopf ∗ Krikamol Muandet ∗ Kenji Fukumizu † Jonas Peters ‡ September 5, 2018 Abstract W e describe a method to perform functional operations on probability distri- butions of random variables. The method uses reproducing kernel Hilbert space representations of probability distributions, and it is applicable to all operations which can be applied to points drawn from the respectiv e distributions. W e refer to our approach as kernel probabilistic pr ogramming . W e illustrate it on synthetic data, and show how it can be used for nonparametric structural equation models, with an application to causal inference. 1 Intr oduction Data types, deriv ed structures, and associated operations play a crucial role for pro- gramming languages and the computations we carry out using them. Choosing a data type, such as Integer , Float, or String, determines the possible values, as well as the operations that an object permits. Operations typically return their results in the form of data types. Composite or deri v ed data types may be constructed from simpler ones, along with specialised operations applicable to them. The goal of the present paper is to propose a way to represent distrib utions ov er data types, and to generalize operations built originally for the data types to operations ap- plicable to those distributions. Our approach is nonparametric and thus not concerned with what distribution models make sense on which statistical data type (e.g., binary , ordinal, categorical). It is also general, in the sense that in principle, it applies to all data types and functional operations. The price to pay for this generality is that • our approach will, in most cases, pro vide approximate results only; howe v er , we include a statistical analysis of the con ver gence properties of our approximations, and ∗ Max Planck Institute for Intelligent Systems, 72076 T ¨ ubingen, Germany † Institute for Statistical Mathematics, 10-3 Midori-cho, T achikawa, T okyo, Japan ‡ ETH Z ¨ urich, Seminar f ¨ ur Statistik, 8092 Z ¨ urich, Switzerland 1 • for each data type inv olv ed (as either input or output), we require a positiv e definite kernel capturing a notion of similarity between two objects of that type. Some of our results require, moreover , that the kernels be characteristic in the sense that they lead to injecti v e mappings into associated Hilbert spaces. In a nutshell, our approach represents distributions over objects as elements of a Hilbert space generated by a kernel, and describes ho w those elements are updated by opera- tions av ailable for sample points. If the kernel is tri vial in the sense that each distinct object is only similar to itself, the method reduces to a Monte Carlo approach where the operations are applied to sample points which are propagated to the next step. Com- putationally , we represent the Hilbert space elements as finite weighted sets of objects, and all operations reduce to finite expansions in terms of kernel functions between objects. The remainder of the present article is organized as follows. After describing the necessary preliminaries, we provide an exposition of our approach (Section 3). Sec- tion 4 analyses an application to the problem of cause-effect inference using structural equation models. W e conclude with a limited set of experimental results. 2 K er nel Maps 2.1 Positi ve definite k er nels The concept of representing probability distributions in a reproducing kernel Hilbert space (RKHS) has recently attracted attention in statistical inference and machine learning [2, 36]. One of the advantages of this approach is that it allows us to apply RKHS methods to probability distributions, often with strong theoretical guarantees [44, 45]. It has been applied successfully in many domains such as graphical models [38, 39], two-sample testing [11], domain adaptation [16, 13, 23], and supervised learn- ing on probability distributions [24, 48]. W e be gin by briefly revie wing these methods, starting with some prerequisites. W e assume that our input data { x 1 , . . . , x m } liv e in a nonempty set X and are gen- erated i.i.d. by a random experiment with Borel probability distribution p . By k , we denote a positive definite kernel on X × X , i.e., a symmetric function k : X × X → R (1) ( x , x 0 ) 7→ k ( x , x 0 ) (2) satisfying the following nonne gati vity condition: for any m ∈ N , and a 1 , . . . , a m ∈ R , m ∑ i , j = 1 a i a j k ( x i , x j ) ≥ 0 . (3) If equality in (3) implies that a 1 = · · · = a m = 0, the kernel is called strictly positive definite. 2 2.2 K ernel maps f or points Kernel methods in machine learning, such as Support V ector Machines or Kernel PCA, are based on mapping the data into a reproducing kernel Hilbert space (RKHS) H [3, 34, 35, 15, 47], Φ : X → H (4) x 7→ Φ ( x ) , (5) where the featur e map (or kernel map ) Φ satisfies k ( x , x 0 ) = h Φ ( x ) , Φ ( x 0 ) i (6) for all x , x 0 ∈ X . One can show that ev ery k taking the form (6) is positive definite, and ev ery positiv e definite k allo ws the construction of H and Φ satisfying (6). The canonical feature map, which is what by default we think of whene ver we write Φ , is Φ : X → R X (7) x 7→ k ( x , . ) , (8) with an inner product satisfying the r epr oducing kernel pr operty k ( x , x 0 ) = h k ( x , . ) , k ( x 0 , . ) i . (9) Mapping observ ations x ∈ X into a Hilbert space is rather con v enient in some cases. If the original domain X has no linear structure to begin with (e.g., if the x are strings or graphs), then the Hilbert space representation provides us with the possibility to construct geometric algorithms by using the inner product of H . Moreover , ev en if X is a linear space in its own right, it can be helpful to use a nonlinear feature map in order to construct algorithms that are linear in H while corresponding to nonlinear methods in X . 2.3 K ernel maps f or sets and distributions One can generalize the map Φ to accept as inputs not only single points, b ut also sets of points or distributions. It was pointed out that the kernel map of a set of points X : = { x 1 , . . . , x m } , µ [ X ] : = 1 m m ∑ i = 1 Φ ( x i ) , (10) corresponds to a kernel density estimator in the input domain [33, 32], provided the kernel is nonnegati ve and integrates to 1. Howe ver , the map (10) can be applied for all positive definite kernels, including ones that take negati v e v alues or that are not normalized. Moreov er , the fact that µ [ X ] li ves in an RKHS and the use of the associ- ated inner product and norm will hav e a number of subsequent adv antages. For these reasons, it would be misleading to think of (10) simply as a kernel density estimator . The kernel map of a distrib ution p can be defined as the expectation of the feature map [2, 36, 11], µ [ p ] : = E x ∼ p [ Φ ( x )] , (11) 3 k ( x , x 0 ) = h x , x 0 i mean of X k ( x , x 0 ) = ( h x , x 0 i + 1 ) n moments of X up to order n ∈ N k ( x , x 0 ) strictly p.d. all of X (i.e., µ injective) T able 1: What information about a sample X does the kernel map µ [ X ] (see (10)) contain? k ( x , x 0 ) = h x , x 0 i expectation of p k ( x , x 0 ) = ( h x , x 0 i + 1 ) n moments of p up to order n ∈ N k ( x , x 0 ) characteristic/uni versal all of p (i.e., µ injectiv e) T able 2: What information about p does the kernel map µ [ p ] (see (11)) contain? For the notions of characteristic/uni versal kernels, see [46, 8, 7]; an example thereof is the Gaussian kernel (26). where we overload the symbol µ and assume, here and below , that p is a Borel proba- bility measure, and E x , x 0 ∼ p [ k ( x , x 0 )] < ∞ . (12) A suf ficient condition for this to hold is the assumption that there e xists an M ∈ R such that k k ( x , . ) k ≤ M < ∞ , (13) or equiv alently k ( x , x ) ≤ M 2 , on the support of p . Kernel maps for sets of points or distributions are sometimes referred to as kernel mean maps to distinguish them from the original kernel map. Note, ho we ver , that they include the kernel map of a point as a special case, so there is some justification in using the same term. If p = p X is the law of a random variable X , we sometimes write µ [ X ] instead of µ [ p ] . In all cases it is important to understand what information we retain, and what we lose, when representing an object by its kernel map. W e summarize the kno wn results [47, 8, 36, 11, 45] in T ables 1 and 2. W e conclude this section with a discussion of ho w to use kernel mean maps. T o this end, first assume that Φ is injectiv e, which is the case if k is strictly positiv e definite (see T able 1) or characteristic/univ ersal (see T able 2). P articular cases include the moment generating function of a R V with distribution p , M p ( . ) = E x ∼ p h e h x , · i i , (14) which equals (11) for k ( x , x 0 ) = e h x , x 0 i using (8). W e can use the map to test for equality of data sets, k µ [ X ] − µ [ X 0 ] k = 0 ⇐ ⇒ X = X 0 , (15) or distributions, k µ [ p ] − µ [ p 0 ] k = 0 ⇐ ⇒ p = p 0 . (16) 4 T wo applications of this idea lead to tests for homogeneity and independence . In the latter case, we estimate k µ [ p x p y ] − µ [ p xy ] k [1, 12]; in the former case, we estimate k µ [ p ] − µ [ p 0 ] k [11]. Estimators for these applications can be constructed in terms of the empirical mean estimator (the kernel mean of the empirical distrib ution) µ [ ˆ p m ] = 1 m m ∑ i = 1 Φ ( x i ) = µ [ X ] , (17) where X = { x 1 , . . . , x m } is an i.i.d. sample from p (cf. (10)). As an aside, note that using ideas from James-Stein estimation [17], we can construct shrinkage estimators that improv e upon the standard empirical estimator (see e.g., [25, 26]). One can sho w that µ [ ˆ p m ] con verges at rate m − 1 / 2 (cf. [36] and [37, Theorem 27]): Theorem 1 Assume that k f k ∞ ≤ 1 for all f ∈ H with k f k H ≤ 1 . Then with proba- bility at least 1 − δ , k µ [ ˆ p m ] − µ [ p ] k H ≤ 2 m E h √ tr K i + r 2 ln ( 2 / δ ) m , (18) wher e K i j : = k ( x i , x j ) . Independent of the requirement of injectivity , µ can be used to compute expecta- tions of arbitrary functions f living in the RKHS, using the identity E x ∼ p [ f ( x )] = h µ [ p ] , f i , (19) which follows from the f act that k represents point ev aluation in the RKHS, f ( x ) = h k ( x , . ) , f i . (20) A small RKHS, such as the one spanned by the linear kernel k ( x , x 0 ) = h x , x 0 i , (21) may not contain the functions we are interested in. If, on the other hand, our RKHS is sufficiently rich (e.g., associated with a universal kernel [46]), we can use (19) to approximate, for instance, the probability of any interval ( a , b ) on a bounded domain, by approximating the indicator function I ( a , b ) as a kernel expansion ∑ n i = 1 a i k ( x i , . ) , and substituting the latter into (19). See [19] for further discussion. Alternativ ely , if p has a density , we can estimate it using methods such as reproducing kernel moment matching and combinations with kernel density estimation [41, 19]. This shows that the map is not a one-way road: we can map our objects of interest into the RKHS, perform linear algebra and geometry on them [34], and at the end answer questions of interest. In the next section, we shall take this a step further , and discuss how to implement rather general operations in the RKHS. Before doing so, we mention two additional applications of kernel maps. W e can map conditional distributions and perform Bayesian updates [8, 51, 9], and we can connect kernel maps to Fourier optics, leading to a physical realization as Fraunhofer diffraction [14]. 5 3 Computing Functions of Independent Random V ari- ables 3.1 Introduction and Earlier W ork A random v ariable (R V) is a measurable function mapping possible outcomes of an un- derlying random experiment to a set E (often, E ⊂ R d , b ut our approach will be more general). The probability measure of the random e xperiment induces the distribution of the random variable. W e will below not deal with the underlying probability space ex- plicitly , and instead directly start from random v ariables X , Y with distributions p X , p Y and values in X , Y . Suppose we hav e access to (data from) p X and p Y , and we want to compute the distribution of the random variable f ( X , Y ) , where f is a measurable function defined on X × Y . For instance, if our operation is addition f ( X , Y ) = X + Y , and the distributions p X and p Y hav e densities, we can compute the density of the distribution of f ( X , Y ) by con v olving those densities. If the distributions of X and Y belong to some para- metric class, such as a class of distributions with Gaussian density functions, and if the arithmetic expression is elementary , then closed form solutions for certain fav or - able combinations exist. At the other end of the spectrum, we can resort to numerical integration or sampling to approximate f ( X , Y ) . Arithmetic operations on random variables are abundant in science and engineer- ing. Measurements in real-world systems are subject to uncertainty , and thus subse- quent arithmetic operations on these measurements are operations on random variables. An example due to [42] is signal amplification. Consider a set of n amplifiers connected together in a serial fashion. If the amplification of the i -th amplifier is denoted by X i , then the total amplification, denoted by Y , is Y = X 1 · X 2 · · · X n , i.e., a product of n random variables. A well-established framew ork for arithmetic operation on independent random variables (iR Vs) relies on inte gral transform methods [42]. The above example of addition already suggests that Fourier transforms may help, and indeed, people hav e used transforms such as the ones due to Fourier and Mellin to deriv e the distribution function of either the sum, difference, product, or quotient of iR Vs [5, 43, 30, 42]. [49] proposes an approximation using Laguerre polynomials, and a notion of en velopes bounding the cumulati ve distribution function. This framework also allows for the treatment of dependent random v ariables, but the bounds can become very loose after repeated operations. [21] approximate the probability distrib utions of the input random variables as mixture models (using uniform and Gaussian distributions), and apply the computations to all mixture components. [18] considers a numerical approach to implement arithmetic operations on iR Vs, representing the distributions using piecewise Chebyshev approximations. This lends itself well to the use of approximation methods that perform well as long as the func- tions are well-behaved. Finally , Monte Carlo approaches can be used as well, and are popular in scientific applications (see e.g., [6]). The goal of the present paper is to develop a deri v ed data type representing a distri- bution over another data type, and to generalize the av ailable computational operations 6 to this data type, at least approximately . This would allow us to conv eniently handle error propagation as in the example discussed earlier . It w ould also help us perform in- ference inv olving conditional distrib utions of such variables giv en observed data. The latter is the main topic of a subject area that has recently begun to attract attention, pr obabilistic pr ogr amming [10]. A variety of probabilistic programming languages has been proposed [50, 27, 4]. T o emphasize the central role that kernel maps play in our approach, we refer to it as kernel pr obabilistic pr ogr amming (KPP) . 3.2 Computing Functions of Independent Random V ariables using K ernel Maps The key idea of KPP is to provide a consistent estimator of the kernel map of an ex- pression in v olving operations on random variables. This is done by applying the ex- pression to the sample points, and showing that the resulting kernel expansion has the desired property . Operations in volving more than one R V will increase the size of the expansion, but we can resort to existing RKHS approximation methods to keep the complexity of the resulting expansion limited, which is advisable in particular if we want to use it as a basis for further operations. The benefits of KPP are three-fold. First, we do not make parametric assumptions on the distributions associated with the random variables. Second, our approach applies not only to real-valued random v ari- ables, but also to multiv ariate random variables, structured data, functional data, and other domains, as long as positiv e definite kernels can be defined on the data. Finally , it does not require explicit density estimation as an intermediate step, which is difficult in high dimensions. W e begin by describing the basic idea. Let f be a function of two independent R Vs X , Y taking values in the sets X , Y , and suppose we are gi ven i.i.d. m -samples x 1 , . . . , x m and y 1 , . . . , y m . W e are interested in the distribution of f ( X , Y ) , and seek to estimate its representation µ [ f ( X , Y )] : = E [ Φ ( f ( X , Y ))] in the RKHS as 1 m 2 m ∑ i , j = 1 Φ ( f ( x i , y j )) . (22) Although x 1 , . . . , x m ∼ p X and y 1 , . . . , y m ∼ p Y are i.i.d. observ ations, this does not im- ply that the { f ( x i , y j ) | i , j = 1 , . . . , m } form an i.i.d. m 2 -sample from f ( X , Y ) , since — loosely speaking — each x i (and each y j ) leaves a footprint in m of the observations, leading to a (possibly weak) dependency . Therefore, Theorem 1 does not imply that (22) is consistent. W e need to do some additional work: Theorem 2 Given two independent random variables X , Y with values in X , Y , mu- tually independent i.i.d. samples x 1 , . . . , x m and y 1 , . . . , y n , a measurable function f : X × Y → Z , and a positive definite kernel on Z × Z with RKHS map Φ , then 1 mn m ∑ i = 1 n ∑ j = 1 Φ ( f ( x i , y j )) (23) is an unbiased and consistent estimator of µ [ f ( X , Y )] . 7 Mor eover , we have con ver gence in pr obability 1 mn m ∑ i = 1 n ∑ j = 1 Φ ( f ( x i , y j )) − E [ Φ ( f ( X , Y ))] = O p 1 √ m + 1 √ n , ( m , n → ∞ ) . (24) As an aside, note that (23) is an RKHS valued tw o-sample U-statistic. Pr oof For any i , j , we have E [ Φ ( f ( x i , y j ))] = E [ Φ ( f ( X , Y ))] , hence (23) is unbiased. The con vergence (24) can be obtained as a corollary to Theorem 3, and the proof is omitted here. 3.3 A ppr oximating Expansions T o keep computational cost limited, we need to use approximations when performing multi-step operations. If for instance, the outcome of the first step takes the form (23), then we already hav e m · n terms, and subsequent steps would further increase the number of terms, thus quickly becoming computationally prohibitiv e. W e can do so by using the methods described in Chapter 18 of [34]. They fall in two categories. In reduced set selection methods, we provide a set of expansion points (e.g., all points f ( x i , y j ) in (23)), and the approximation method sparsifies the vector of ex- pansion coefficients. This can be for instance done by solving eigen value problems or linear programs. Reduced set construction methods, on the other hand, construct ne w expansion points. In the simplest case, they proceed by sequentially finding approxi- mate pre-images of RKHS elements. They tend to be computationally more demanding and suffer from local minima; ho wever , they can lead to sparser expansions. Either way , we will end up with an approximation p ∑ k = 1 γ k Φ ( z k ) (25) of (23), where usually p m · n . Here, the z k are either a subset of the f ( x i , y j ) , or other points from Z . It is instructi ve to consider some special cases. For simplicity , assume that Z = R d . If we use a Gaussian kernel k ( x , x 0 ) = exp ( − k x − x 0 k 2 / ( 2 σ 2 )) (26) whose bandwidth σ is much smaller than the closest pair of sample points, then the points mapped into the RKHS will be almost orthogonal and there is no way to sparsify a kernel expansion such as (23) without incurring a lar ge RKHS error . In this case, we can identify the estimator with the sample itself, and KPP reduces to a Monte Carlo method. If, on the other hand, we use a linear kernel k ( z , z 0 ) = h z , z 0 i on Z = R d , then Φ is the identity map and the expansion (23) collapses to one real number, i.e., we would effectiv ely represent f ( X , Y ) by its mean for any further processing. By choosing kernels that lie ‘in between’ these two extremes, we retain a varying amount of information which we can thus tune to our wishes, see T able 1. 8 3.4 Computing Functions of RKHS A ppr oximations More generally , consider approximations of kernel means µ [ X ] and µ [ Y ] ˆ µ [ X ] : = m 0 ∑ i = 1 α i Φ x ( x 0 i ) , ˆ µ [ Y ] : = n 0 ∑ j = 1 β j Φ y ( y 0 j ) . (27) In our case, we think of (27) as RKHS-norm approximations of the outcome of pre- vious operations performed on random v ariables. Such approximations typically hav e coefficients α ∈ R n 0 and β ∈ R m 0 that are not uniform, that may not sum to one, and that may take neg ati ve v alues [34], e.g., for conditional mean maps [40, 9]. W e propose to approximate the kernel mean µ [ f ( X , Y )] by the estimator ˆ µ [ f ( X , Y )] : = 1 ∑ m 0 i = 1 α i ∑ n 0 j = 1 β j m 0 ∑ i = 1 n 0 ∑ j = 1 α i β j Φ z ( f ( x 0 i , y 0 j )) , (28) where the feature map Φ z defined on Z , the range of f , may be different from both Φ x and Φ y . The expansion has m 0 · n 0 terms, which we can subsequently approximate more compactly in the form (25), ready for the next operation. Note that (28) contains (23) as a special case. One of the adv antages of our approach is that (23) and (28) apply for general data types. In other words, X , Y , Z need not be vector spaces — they may be arbitrary nonempty sets, as long as positiv e definite kernels can be defined on them. Con ver gence analysis in an idealized setting W e analyze the conv ergence of (28) under the assumption that the expansion points are actually samples x 1 , . . . , x m from X and y 1 , . . . , y n from Y , which is for instance the case if the expansions (27) are the result of reduced set selection methods (cf. Section 3.3). Moreover , we assume that the expansion coefficients α 1 , . . . , α m and β 1 , . . . , β n are constants, i.e., independent of the samples. The following proposition gives a suf ficient condition for the approximations in (27) to con ver ge. Note that below , the coefficients α 1 , . . . , α m depend on the sample size m , but for simplicity we refrain from writing them as α 1 , m , . . . , α m , m ; likewise, for β 1 , . . . , β n . W e make this remark to ensure that readers are not puzzled by the below statement that ∑ m i = 1 α 2 i → 0 as m → ∞ . Proposition 1 Let x 1 , . . . , x m be an i.i.d. sample and ( α i ) m i = 1 be constants with ∑ m i = 1 α i = 1 . Assume E [ k ( X , X )] > E [ k ( X , ˜ X )] , wher e X and ˜ X are independent copies of x i . Then, the con ver gence E m ∑ i = 1 α i Φ ( x i ) − µ [ X ] 2 → 0 ( m → ∞ ) holds true if and only if ∑ m i = 1 α 2 i → 0 as m → ∞ . Pr oof From the expansion E m ∑ i = 1 α i Φ ( x i ) − µ [ X ] 2 9 = m ∑ i , s = 1 α i α s E [ k ( x i , x s )] − 2 m ∑ i = 1 α i E [ k ( x i , X )] + E [ k ( X , ˜ X )] = 1 − ∑ i α i 2 E [ k ( X , ˜ X )] + ∑ i α 2 i n E [ k ( X , X )] − E [ k ( X , ˜ X )] o , the assertion is straightforward. The ne xt result shows that if our approximations (27) conv erge in the sense of Proposition 1, then the estimator (28) (with expansion coefficients summing to 1) is consistent. Theorem 3 Let x 1 , . . . , x m and y 1 , . . . , y n be mutually independent i.i.d. samples, and the constants ( α i ) m i = 1 , ( β j ) n j = 1 satisfy ∑ m i = 1 α i = ∑ n j = 1 β j = 1 . Assume ∑ m i = 1 α 2 i and ∑ n j = 1 β 2 j con ver ge to zer o as n , m → ∞ . Then m ∑ i = 1 n ∑ j = 1 α i β j Φ ( f ( x i , y j )) − µ [ f ( X , Y )] = O p r ∑ i α 2 i + r ∑ j β 2 j ! as m , n → ∞ . Pr oof By expanding and taking expectations, one can see that E m ∑ i = 1 n ∑ j = 1 α i β j Φ ( f ( x i , y j )) − E [ Φ ( f ( X , Y ))] 2 equals m ∑ i = 1 n ∑ j = 1 α 2 i β 2 j E [ k ( f ( X , Y ) , f ( X , Y ))] + ∑ s 6 = i ∑ j α i α s β 2 j E [ k ( f ( X , Y ) , f ( ˜ X , Y ))] + ∑ i ∑ t 6 = j α 2 i β j β t E [ k ( f ( X , Y ) , f ( X , ˜ Y ))] + ∑ s 6 = i ∑ t 6 = j α i α s β j β t E [ k ( f ( X , Y ) , f ( ˜ X , ˜ Y ))] − 2 ∑ i ∑ j α i β j E [ k ( f ( X , Y ) , f ( ˜ X , ˜ Y ))] + E [ k ( f ( X , Y ) , f ( ˜ X , ˜ Y ))] = ∑ i α 2 i ∑ j β 2 j E [ k ( f ( X , Y ) , f ( X , Y ))] + ( 1 − ∑ i α i ∑ j β j 2 + ∑ i α 2 i ∑ j β 2 j − ∑ i α 2 i ( ∑ j β j ) 2 − ( ∑ i α i ) 2 ∑ j β 2 j ) E [ k ( f ( X , Y ) , f ( ˜ X , ˜ Y ))] + ( ∑ i α i ) 2 − ∑ i α 2 i ∑ j β 2 j E [ k ( f ( X , Y ) , f ( ˜ X , Y ))] 10 + ∑ i α 2 i ( ∑ j β j ) 2 − ∑ j β 2 j E [ k ( f ( X , Y ) , f ( X , ˜ Y ))] = ∑ i α 2 i ∑ j β 2 j E [ k ( f ( X , Y ) , f ( X , Y ))] + ( ∑ i α 2 i ∑ j β 2 j − ∑ i α 2 i − ∑ j β 2 j ) E [ k ( f ( X , Y ) , f ( ˜ X , ˜ Y ))] + 1 − ∑ i α 2 i ∑ j β 2 j E [ k ( f ( X , Y ) , f ( ˜ X , Y ))] + ∑ i α 2 i 1 − ∑ j β 2 j E [ k ( f ( X , Y ) , f ( X , ˜ Y ))] , which implies the norm in the assertion of the theorem con v erges to zero at O p q ∑ i α 2 i + q ∑ j β 2 j under the assumptions on α i and β j . Here ( ˜ X , ˜ Y ) is an inde- pendent copy of ( X , Y ) . This concludes the proof. Note that in the simplest case, where α i = 1 / m and β j = 1 / n , we have ∑ i α 2 i = 1 / m and ∑ j β 2 j = 1 / n , which pro v es Theorem 2. It is also easy to see from the proof that we do not strictly need ∑ i α i = ∑ j β j = 1 — for the estimator to be consistent, it suffices if the sums con v erge to 1. For a suf ficient condition for this con vergence, see [19]. More general expansion sets T o conclude our discussion of the estimator (28), we turn to the case where the expansions (27) are computed by reduced set construction, i.e., they are not necessarily expressed in terms of samples from X and Y . This is more difficult, and we do not pro vide a formal result, b ut just a qualitati ve discussion. T o this end, suppose the approximations (27) satisfy m 0 ∑ i = 1 α i = 1 and for all i , α i > 0 , (29) n 0 ∑ j = 1 β j = 1 and for all j , β j > 0 , (30) and we approximate µ [ f ( X , Y )] by the quantity (28). W e assume that (27) are good approximations of the kernel means of two unknown random v ariables X and Y ; we also assume that f and the kernel mean map along with its inv erse are continuous. W e hav e no samples from X and Y , b ut we can turn (27) into sample estimates based on artificial samples X and Y , for which we can then appeal to our estimator from Theorem 2. T o this end, denote by X 0 = ( x 0 1 , . . . , x 0 m 0 ) and Y 0 = ( y 0 1 , . . . , y 0 n 0 ) the expansion points in (27). W e construct a sample X = ( x 1 , x 2 , . . . ) whose kernel mean is close to ∑ m 0 i = 1 α i Φ x ( x 0 i ) as follows: for each i , the point x 0 i appears in X with multiplicity b m · α i c , i.e., the largest integer not e xceeding m · α i . This leads to a sample of size at most m . Note, moreover , that the multiplicity of x 0 i , divided by m , dif fers from α i by at most 1 / m , so ef fecti vely we hav e quantized the α i coefficients to this accurac y . 11 Since m 0 is constant, this implies that for any ε > 0, we can choose m large enough to ensure that 1 m m ∑ 1 = 1 Φ x ( x i ) − m 0 ∑ i = 1 α i Φ x ( x 0 i ) 2 < ε . (31) W e may thus work with 1 m ∑ m 1 = 1 Φ x ( x i ) , which for strictly positi ve definite kernels corresponds uniquely to the sample X = ( x 1 , . . . , x m ) . By the same argument, we ob- tain a sample Y = ( y 1 , . . . , y n ) approximating the second expansion. Substituting both samples into the estimator from Theorem 2 leads to ˆ µ [ f ( X , Y )] = 1 ∑ m 0 i = 1 ˆ α i ∑ n 0 j = 1 ˆ β j m 0 ∑ i = 1 n 0 ∑ j = 1 ˆ α i ˆ β j Φ z ( f ( x 0 i , y 0 j )) , (32) where ˆ α i = b m · α i c / m , and ˆ β i = b n · β i c / n . By choosing sufficiently large m , n , this becomes an arbitrarily good approximation (in the RKHS norm) of the proposed es- timator (28). Note, ho we ver , that we cannot claim based on this argument that this estimator is consistent, not the least since Theorem 2 in the stated form requires i.i.d. samples. Larger sets of random variables W ithout analysis, we include the estimator for the case of more than two variables: Let g be a measurable function of jointly independent R Vs U j ( j = 1 , . . . , p ) . Giv en i.i.d. observ ations u j 1 , . . . , u j m ∼ U j , we hav e 1 m p m ∑ m 1 ,..., m p = 1 Φ g ( u 1 m 1 , . . . , u p m p ) m → ∞ − − − → µ [ g ( U 1 , . . . , U p )] . (33) in probability . Here, in order to keep notation simple, we hav e assumed that the samples sizes for each R V are identical. As above, we note that (i) g need not be real-v alued, it can take values in some set Z for which we hav e a (possibly characteristic) positive definite kernel; (ii) we can extend this to general kernel expansions like (28); and (iii) if we use Gaussian kernels with width tending to 0, we can think of the abov e as a sampling method. 4 Dependent R Vs and Structural Equation Models For dependent R Vs, the proposed estimators are not applicable. One way to handle dependent R Vs is to appeal to the fact that any joint distribution of random variables can be written as a structural equation model with independent noises. This leads to an interesting application of our method to the field of causal inference. W e consider a model X i = f i ( P A i , U i ) , for i = 1 , . . . , p , with jointly independent noise terms U 1 , . . . , U p . Such models arise for instance in causal inference [28]. Each random v ariable X i is computed as a function f i of its noise term U i and its parents P A i in an underlying directed acyclic graph (D A G). Every graphical model w .r .t. a D A G can be expressed as such a structural equation model with suitable functions and noise terms (e.g., [29]). 12 If we recursiv ely substitute the parent equations, we can express each X i as a func- tion of only the independent noise terms U 1 , . . . , U p , X i = g i ( U 1 , . . . , U p ) . (34) Since we know how to compute functions of independent R Vs, we can try to test such a model (assuming knowledge of all inv olv ed quantities) by estimating the distance between RKHS images, ∆ = k µ [ X i ] − µ [ g i ( U 1 , . . . , U p )] k 2 (35) using the estimator described in (33) (we discuss the bi v ariate case in Theorem 4). It may be unrealistic to assume we hav e access to all quantities. Howe ver , there is a special case where this is con vei vable, which we will presently discuss. This is the case of additiv e noise models [29] Y = f ( X ) + U , with X ⊥ ⊥ U . (36) Such models are of interest for cause-effect inference since it is kno wn [29] that in the generic case, a model (36) can only be fit in one direction, i.e., if (36) holds true, then we cannot simultaneously hav e X = g ( Y ) + V , with Y ⊥ ⊥ V . (37) T o measure how well (36) fits the data, we define an estimator ∆ em p : = 1 m m ∑ i = 1 Φ ( y i ) − 1 m 2 m ∑ i , j = 1 Φ ( f ( x i ) + u j ) 2 . (38) Analogously , we define the estimator in the backward direction ∆ bw em p : = 1 m m ∑ i = 1 Φ ( x i ) − 1 m 2 m ∑ i , j = 1 Φ ( g ( y i ) + v j ) 2 . (39) Here, we assume that we are gi ven the conditional mean functions f : x 7→ E [ Y | X = x ] and g : y 7→ E [ X | Y = y ] . In practice, we would apply the following procedure: we are giv en a sample ( x 1 , y 1 ) , . . . , ( x m , y m ) . W e estimate the function f as well as the residual noise terms u 1 , . . . , u m by regression, and like wise for the backward function g [29]. Strictly speaking, we need to use separate subsamples to estimate function and noise terms, respecti vely , see [20]. Below , we show that ∆ em p con v erges to 0 for additiv e noise models (36). For the incorrect model (37), howe ver , ∆ bw em p will in the generic case not con verge to zero. W e can thus use the comparison of both values for deciding causal direction. Theorem 4 Suppose x 1 , . . . , x m and u 1 , . . . , u m ar e mutually independent i.i.d. samples, and y i = f ( x i ) + u i . Assume further that the dir ection of the additive noise model is identifiable [29] and the kernel for x is char acteristic. W e then have ∆ em p → 0 and (40) 13 ∆ bw em p 6→ 0 (41) in pr obability as m → ∞ . Pr oof Equation (40) follows from Theorem 2 since k 1 m ∑ m i = 1 Φ ( y i ) − µ [ Y ] k → 0 and k 1 m 2 ∑ m 2 i , j = 1 Φ ( f ( x i ) + u j ) − µ [ Y ] k → 0 (all conv er gences in this proof are in probability). T o prove (41), we assume that ∆ bw em p → 0 which implies 1 m 2 m ∑ i , j = 1 Φ ( g ( y i ) + v j ) − µ [ X ] → 0 . (42) The key idea is to introduce a random variable ˜ V that has the same distrib ution as V but is independent of Y and to consider the follo wing decomposition of the sum appearing in (42): 1 m 2 m ∑ i , j = 1 Φ ( g ( y i ) + v j ) = 1 m 2 m ∑ i = 1 Φ ( g ( y i ) + v i ) + 1 m 2 m ∑ i = 1 m − 1 ∑ k = 1 Φ ( g ( y i ) + v i + k ) = 1 m 1 m m ∑ i = 1 Φ ( x i ) + 1 m m − 1 ∑ k = 1 1 m m ∑ i = 1 Φ ( g ( y i ) + v i + k ) = : A m + B m , where the index for v is interpreted modulo m , for instance, v m + 3 : = v 3 . Since v i + k = x i + k − g ( y i + k ) is independent of y i , it further follows from Theorem 2 that k A m − 1 m µ [ X ] k → 0 and k B m − m − 1 m µ [ g ( Y ) + ˜ V ] k → 0. Therefore, A m + B m − 1 m µ [ X ] − m − 1 m µ [ g ( Y ) + ˜ V ] → 0 . T ogether with (42) this implies µ [ X ] − 1 m µ [ X ] − m − 1 m µ [ g ( Y ) + ˜ V ] → 0 and therefore µ [ g ( Y ) + ˜ V ] = µ [ X ] . Since the kernel is characteristic, this implies g ( Y ) + ˜ V = X (in distribution), with Y ⊥ ⊥ ˜ V , which contradicts the identifiability of the additive noise model. As an aside, note that Theorem 4 would not hold if in (39) we were to estimate µ [ g ( Y ) + V ] by 1 m ∑ m i = 1 Φ ( g ( y i ) + v i ) instead of 1 m 2 ∑ m i , j = 1 Φ ( g ( y i ) + v j ) . 14 5 Experiments 5.1 Synthetic data W e consider basic arithmetic expressions that in v olv e multiplication X · Y , division X / Y , and exponentiation X Y on two independent scalar R Vs X and Y . Letting p X = N ( 3 , 0 . 5 ) and p Y = N ( 4 , 0 . 5 ) , we draw i.i.d. samples X = { x 1 , . . . , x m } and Y = { y 1 , . . . , y m } from p X and p Y . In the experiment, we are interested in the conv ergence (in RKHS norm) of our estimators to µ [ f ( X , Y )] . Since we do not have access to the latter , we use an indepen- dent sample to construct a proxy ˆ µ [ f ( X , Y )] = ( 1 /` 2 ) ∑ ` i , j = 1 Φ z ( f ( x i , y j )) . W e found that ` = 100 led to a suf ficiently good approximation. Next, we compare the three estimators, referred to as µ 1 , µ 2 and µ 3 below , for sample sizes m ranging from 10 to 50: 1. The sample-based estimator (23) 2. The estimator (28) based on approximations of the k ernel means, taking the form ˆ µ [ X ] : = ∑ m 0 i = 1 α i Φ x ( x i ) and ˆ µ [ Y ] : = ∑ m 0 j = 1 β j Φ y ( y j ) of µ [ X ] and µ [ Y ] , respec- tiv ely . W e used the simplest possible reduced set selection method: we randomly subsampled subsets of size m 0 ≈ 0 . 4 · m from X and Y , and optimized the coef- ficients { α 1 , . . . , α m 0 } and { β 1 , . . . , β m 0 } to best approximate the original kernel means (based on X and Y ) in the RKHS norm [34, Section 18.3]. 3. Analogously to the case of one variable (17), we may also look at the estimator ˆ µ 3 [ X , Y ] : = ( 1 / m ) ∑ m i = 1 Φ z ( f ( x i , y i )) , which sums only over m mutually indepen- dent terms, i.e., a small fraction of all terms of (23). For i = 1 , 2 , 3, we ev aluate the estimates ˆ µ i [ f ( X , Y )] using the error measure L = k ˆ µ i [ f ( X , Y )] − ˆ µ [ f ( X , Y )] k 2 . (43) W e use (6) to ev aluate L in terms of kernels. In all cases, we employ a Gaussian RBF kernel (26) whose bandwidth parameter is chosen using the median heuristic, setting σ to the median of the pairwise distances of distinct data points [12]. Figure 1 depicts the error (43) as a function of sample size m . For all operations, the error decreases as sample size increases. Note that Φ z is dif ferent across the three operations, resulting in different scales of the a v erage error in Figure 1. 5.2 Causal discovery via functions of k er nel means W e also apply our KPP approach to biv ariate causal inference problem (cf. Section 4). That is, given a pair of real-valued random v ariables X and Y with joint distribution p X Y , we are interested in identifying whether X causes Y (denote as X → Y ) or Y causes X (denote as Y → X ) based on observational data. W e assume an additi ve noise model E = f ( C ) + U with C ⊥ ⊥ U where C , E , U denote cause, effect, and residual (or “unexplained”) variable, respectiv ely . Belo w we present a preliminary result on the CauseEffectPairs benchmark data set [22]. 15 10 20 30 40 50 0 0.05 0.1 0.15 0.2 multiplication sa m pl e si ze ( m ) error ˆ µ 1 ˆ µ 2 ˆ µ 3 10 20 30 40 50 0 0.01 0.02 0.03 0.04 division sa m pl e si ze ( m ) error ˆ µ 1 ˆ µ 2 ˆ µ 3 10 20 30 40 50 0 0.02 0.04 0.06 0.08 0.1 exponentiation sa m pl e si ze ( m ) error ˆ µ 1 ˆ µ 2 ˆ µ 3 Figure 1: Error of the proposed estimators for three arithmetic operations — multipli- cation X · Y , division X / Y , and exponentiation X Y — as a function of sample size m . The error reported is an av erage of 30 repetitions of the simulations. The expensi ve estimator ˆ µ 1 (see (23)) performs best. The approximation ˆ µ 2 (see (28)) works well as sample sizes increase. For each causal pair ( X , Y ) = { ( x 1 , y 1 ) , . . . , ( x m , y m ) } , we estimate functions y ≈ f ( x ) and x ≈ g ( y ) as least-squares fits using degree 4 polynomials. W e illustrate one example in Figure 2a. Next, we compute the residuals in both directions as u i = y i − f ( x i ) and v j = x j − g ( y j ) . 1 Finally , we compute scores ∆ X → Y and ∆ Y → X by ∆ X → Y : = 1 m m ∑ i = 1 Φ ( y i ) − 1 m 2 m ∑ i , j = 1 Φ ( f ( x i ) + u j ) 2 , ∆ Y → X : = 1 m m ∑ i = 1 Φ ( x i ) − 1 m 2 m ∑ i , j = 1 Φ ( g ( y i ) + v j ) 2 . Follo wing Theorem 4, we can use the comparison between ∆ X → Y and ∆ Y → X to infer the causal direction. Specifically , we decide that X → Y if ∆ X → Y < ∆ Y → X , and that Y → X otherwise. In this experiment, we also use a Gaussian RBF kernel whose bandwidth parameter is chosen using the median heuristic. T o speed up the computation of ∆ X → Y and ∆ Y → X , we adopted a finite approximation of the feature map using 100 random Fourier features (see [31] for details). W e allow the method to abstain whenev er the two values are closer than δ > 0. By increasing δ , we can compute the method’ s accuracy as a function of a decision rate (i.e., the fraction of decisions that our method is forced to make) ranging from 100% to 0%. Figure 2b depicts the accuracy versus the decision rate for the 81 pairs in the CauseEffectPairs benchmark collection. The method achiev es an accuracy of 80%, which is significantly better than random guessing, when forced to infer the causal direction of all 81 pairs. 1 For simplicity , this was done using the same data; but cf. our discussion follo wing (38). 16 X Y P air 78 ( X → Y ) y=f(x) x=g(y) (a) Pair 78 and re gressors f , g 0 2 0 4 0 6 0 8 0 1 0 0 d e c i si o n r a t e ( % ) 0 2 0 4 0 6 0 8 0 1 0 0 a c c u r a c y ( % ) (b) the accuracy curv e Figure 2: (a) Scatter plot of the data of causal pair 78 in the CauseEffectPairs benchmarks, along with t he forward and backward function fits, y = f ( x ) and x = g ( y ) . (b) Accuracy of cause-effect decisions on all the 81 pairs in the CauseEffectPairs benchmarks. . 6 Conclusions W e have de veloped a kernel-based approach to compute functional operations on ran- dom variables taking values in arbitrary domains. W e have proposed estimators for RKHS representations of those quantities, ev aluated the approach on synthetic data, and showed how it can be used for cause-effect inference. While the results are en- couraging, the material presented in this article only describes the main ideas, and much remains to be done. W e believ e there is significant potential for a unified per- spectiv e on probabilistic programming based on the described methods, and hope that some of the open problems will be addressed in future work. Acknowledgements Thanks to Dominik Janzing, Le Song and Ilya T olstikhin for dicussions and comments. Refer ences [1] Bach, F .R., Jordan, M.I.: K ernel independent component analysis. Journal of Machine Learning Research 3 , 1–48 (2002) [2] Berlinet, A., Agnan, T .C.: Reproducing Kernel Hilbert Spaces in Probabilit y and Statistics. Kluwer Academic Publishers (2004) [3] Boser , B.E., Guyon, I.M., V apnik, V .N.: A training algorithm for optimal margin classi- fiers. In: Proceedings of the Fifth Annual W orkshop on Computational Learning Theory , COL T ’92, pp. 144–152. ACM, Ne w Y ork, NY , USA (1992) [4] Cassel, J.: Probabilistic programming with stochastic memoization: Implementing non- parametric bayesian inference. Mathematica Journal 16 (2014) 17 [5] Epstein, B.: Some applications of the Mellin transform in statistics. The Annals of Math- ematical Statistics 19 (3), 370–379 (1948) [6] Ferson, S.: What Monte Carlo methods cannot do. Human and Ecological Risk Assess- ment: An International Journal 2 (4), 990–1007 (1996). DOI 10.1080/10807039609383659 [7] Fukumizu, K., Bach, F ., Jordan, M.I.: Kernel dimension reduction in regression. Annals of Statistics 37 (4), 1871–1905 (2009) [8] Fukumizu, K., Gretton, A., Sun, X., Sch ¨ olkopf, B.: Kernel measures of conditional depen- dence. In: J.C. Platt, D. K oller , Y . Singer , S. Roweis (eds.) Advances in Neural Information Processing Systems, vol. 20, pp. 489–496. Curran, Red Hook, NY , USA (2008) [9] Fukumizu, K., Song, L., Gretton, A.: Kernel Bayes’ rule: Bayesian inference with positiv e definite kernels. Journal of Machine Learning Research 14 , 3753–3783 (2013) [10] Gordon, A.D., Henzinger , T .A., Nori, A.V ., Rajamani, S.K.: Probabilistic programming. In: International Conference on Software Engineering (ICSE, FOSE track) (2014) [11] Gretton, A., Borgwardt, K.M., Rasch, M.J., Sch ¨ olkopf, B., Smola, A.: A kernel two- sample test. Journal of Machine Learning Research 13 , 723–773 (2012) [12] Gretton, A., Bousquet, O., Smola, A.J., Sch ¨ olkopf, B.: Measuring statistical dependence with Hilbert-Schmidt norms. In: S. Jain, H.U. Simon, E. T omita (eds.) Algorithmic Learn- ing Theory: 16th International Conference, pp. 63–78. Springer , Berlin, German y (2005) [13] Gretton, A., Smola, A.J., Huang, J., Schmittfull, M., Borgwardt, K., Sch ¨ olkopf, B.: Co- variate shift by kernel mean matching. In: J.Q. Candela, M. Sugiyama, A. Schwaighofer , N.D. Lawrence (eds.) Dataset Shift in Machine Learning, pp. 131–160. MIT Press, Cam- bridge, MA, USA (2009) [14] Harmeling, S., Hirsch, M., Schlkopf, B.: On a link between kernel mean maps and Fraun- hofer diffraction, with an application to super-resolution beyond the diffraction limit. In: CVPR, pp. 1083–1090. IEEE (2013) [15] Hofmann, T ., Sch ¨ olkopf, B., Smola, A.J.: K ernel methods in machine learning. Annals of Statistics 36 (3), 1171–1220 (2008). URL [16] Huang, J., Smola, A., Gretton, A., Borgwardt, K., Sch ¨ olkopf, B.: Correcting sample se- lection bias by unlabeled data. In: Advances in Neural Information Processing Systems (NIPS), pp. 601–608. MIT Press, Cambridge, MA, USA (2007) [17] James, W ., Stein, J.: Estimation with quadratic loss. In: Proceedings of the Third Berke- ley Symposium on Mathematical Statistics and Probability , pp. 361–379. University of California Press (1961) [18] Jarosze wicz, S., Korzen, M.: Arithmetic operations on independent random variables: A numerical approach. SIAM J. Scientific Computing 34 (3) (2012) [19] Kanagaw a, M., Fukumizu, K.: Recov ering distributions from Gaussian RKHS embed- dings. In: JMLR W&CP 33 (Proc. AIST A TS 2014), pp. 457–465 (2014) [20] Kpotufe, S., Sgouritsa, E., Janzing, D., Sch ¨ olkopf, B.: Consistency of causal inference under the additiv e noise model. In: ICML (2014) 18 [21] Milios, D.: Probability distrib utions as program variables. Master’ s thesis, School of In- formatics, Univ ersity of Edinb urgh (2009) [22] Mooij, J.M., Peters, J., Janzing, D., Zscheischler , J., Sch ¨ olkopf, B.: Distinguishing cause from effect using observ ational data: methods and benchmarks. arXiv:1412.3773 (2014) [23] Muandet, K., Balduzzi, D., Sch ¨ olkopf, B.: Domain generalization via in variant feature representation. In: ICML, pp. 10–18 (2013) [24] Muandet, K., Fukumizu, K., Dinuzzo, F ., Sch ¨ olkopf, B.: Learning from distributions via support measure machines. In: P . Bartlett, F . Pereira, C. Bur ges, L. Bottou, K. W einberger (eds.) Advances in Neural Information Processing Systems 25, pp. 10–18 (2012) [25] Muandet, K., Fukumizu, K., Sriperumbudur , B., Gretton, A., Sch ¨ olkopf, B.: Kernel mean estimation and Stein effect. In: E.P . Xing, T . Jebara (eds.) Proceedings of the 31st Inter- national Conference on Machine Learning, W&CP 32 (1), pp. 10–18. Journal of Machine Learning Research (2014) [26] Muandet, K., Sriperumbudur , B., Sch ¨ olkopf, B.: Kernel mean estimation via spectral fil- tering. In: Z. Ghahramani, M. W elling, C. Cortes, N. Lawrence, K. W einberger (eds.) Advances in Neural Information Processing Systems 27, pp. 1–9. Curran Associates, Inc. (2014) [27] Paige, B., W ood, F .: A compilation target for probabilistic programming languages. In: Journal of Machine Learning Research; ICML 2014, pp. 1935–1943 (2014) [28] Pearl, J.: Causality: Models, Reasoning, and Inference, 2nd edn. Cambridge University Press, New Y ork, NY (2009) [29] Peters, J., Mooij, J., Janzing, D., Sch ¨ olkopf, B.: Causal discovery with continuous additive noise models. Journal of Machine Learning Research 15 , 2009–2053 (2014) [30] Prasad, R.D.: Probability distributions of algebraic functions of independent random vari- ables. SIAM Journal on Applied Mathematics 18 (3), 614–626 (1970) [31] Rahimi, A., Recht, B.: Random features for large-scale kernel machines. In: Advances in Neural Information Processing Systems (2007) [32] Sch ¨ olkopf, B., Platt, J.C., Shawe-T aylor , J.C., Smola, A.J., W illiamson, R.C.: Estimating the support of a high-dimensional distribution. Neural Computation 13 (7), 1443–1471 (2001) [33] Sch ¨ olkopf, B., Smola, A.J.: Learning with Kernels: Support V ector Machines, Regulariza- tion, Optimization, and Beyond. MIT Press, Cambridge, MA, USA (2001) [34] Sch ¨ olkopf, B., Smola, A.J.: Learning with Kernels. MIT Press, Cambridge, MA, USA (2002). URL http://www.learning- with- kernels.org [35] Shawe-T aylor, J., Cristianini, N.: Kernel Methods for Pattern Analysis. Cambridge Uni- versity Press, Cambridge, UK (2004) [36] Smola, A., Gretton, A., Song, L., Sch ¨ olkopf, B.: A Hilbert space embedding for distribu- tions. In: Proc. Algorithmic Learning Theory , pp. 13–31. Springer-V erlag (2007) 19 [37] Song, L.: Learning via Hilbert space embedding of distributions. Ph.D. thesis, The School of Information T echnologies, The Univ ersity of Sydney (2008) [38] Song, L., Boots, B., Siddiqi, S.M., Gordon, G., Smola, A.J.: Hilbert space embeddings of hidden Marko v models. In: Proceedings of the 27th International Conference on Machine Learning (ICML) (2010) [39] Song, L., Gretton, A., Bickson, D., Lo w , Y ., Guestrin, C.: Kernel belief propagation. In: Proceedings of the 14th International Conference on Artificial Intelligence and Statistics (AIST A TS) (2011) [40] Song, L., Huang, J., Smola, A., Fukumizu, K.: Hilbert space embeddings of conditional distributions with applications to dynamical systems. In: ICML (2009) [41] Song, L., Zhang, X., Smola, A.J., Gretton, A., Sch ¨ olkopf, B.: T ailoring density estimation via reproducing kernel moment matching. In: W .W . Cohen, A. McCallum, S. Roweis (eds.) Proceedings of the 25th International Conference on Machine Learning, pp. 992– 999. A CM Press, Ne w Y ork (2008) [42] Springer , M.: The algebra of random v ariables. W iley series in probability and mathemat- ical statistics. W iley (1979) [43] Springer , M.D., Thompson, W .E.: The distribution of products of independent random variables. SIAM Journal on Applied Mathematics 14 (3), 511–526 (1966) [44] Sriperumbudur , B.K., Gretton, A., Fukumizu, K., Lanckriet, G., Sch ¨ olkopf, B.: Injective Hilbert space embeddings of probability measures. In: The 21st Annual Conference on Learning Theory (COL T) (2008) [45] Sriperumbudur , B.K., Gretton, A., Fukumizu, K., Sch ¨ olkopf, B., Lanckriet, G.: Hilbert space embeddings and metrics on probability measures. Journal of Machine Learning Research 99 , 1517–1561 (2010) [46] Steinwart, I.: On the influence of the kernel on the consistency of support vector machines. Journal of Machine Learning Research 2 , 67–93 (2002) [47] Steinwart, I., Christmann, A.: Support V ector Machines. Springer (2008) [48] Szab ´ o, Z., Gretton, A., P ´ oczos, B., Sriperumbudur , B.: T wo-stage sampled learning theory on distributions. arXiv:1402.1754 (2014) [49] W illiamson, R.C.: Probabilistic arithmetic. Ph.D. thesis, Department of Electrical Engi- neering, Univ ersity of Queensland, St. Lucia, Queensland, Australia, (1989) [50] W ood, F ., v an de Meent, J.W ., Mansinghka, V .: A new approach to probabilistic program- ming inference. In: Artificial Intelligence and Statistics (2014) [51] Zhang, K., Peters, J., Janzing, D., Sch ¨ olkopf, B.: Kernel-based Conditional Independence T est and Application in Causal Discovery . In: F . Cozman, A. Pfeffer (eds.) 27th Con- ference on Uncertainty in Artificial Intelligence (UAI 2011), pp. 804–813. A U AI Press, Corvallis, OR, USA (2011) 20

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

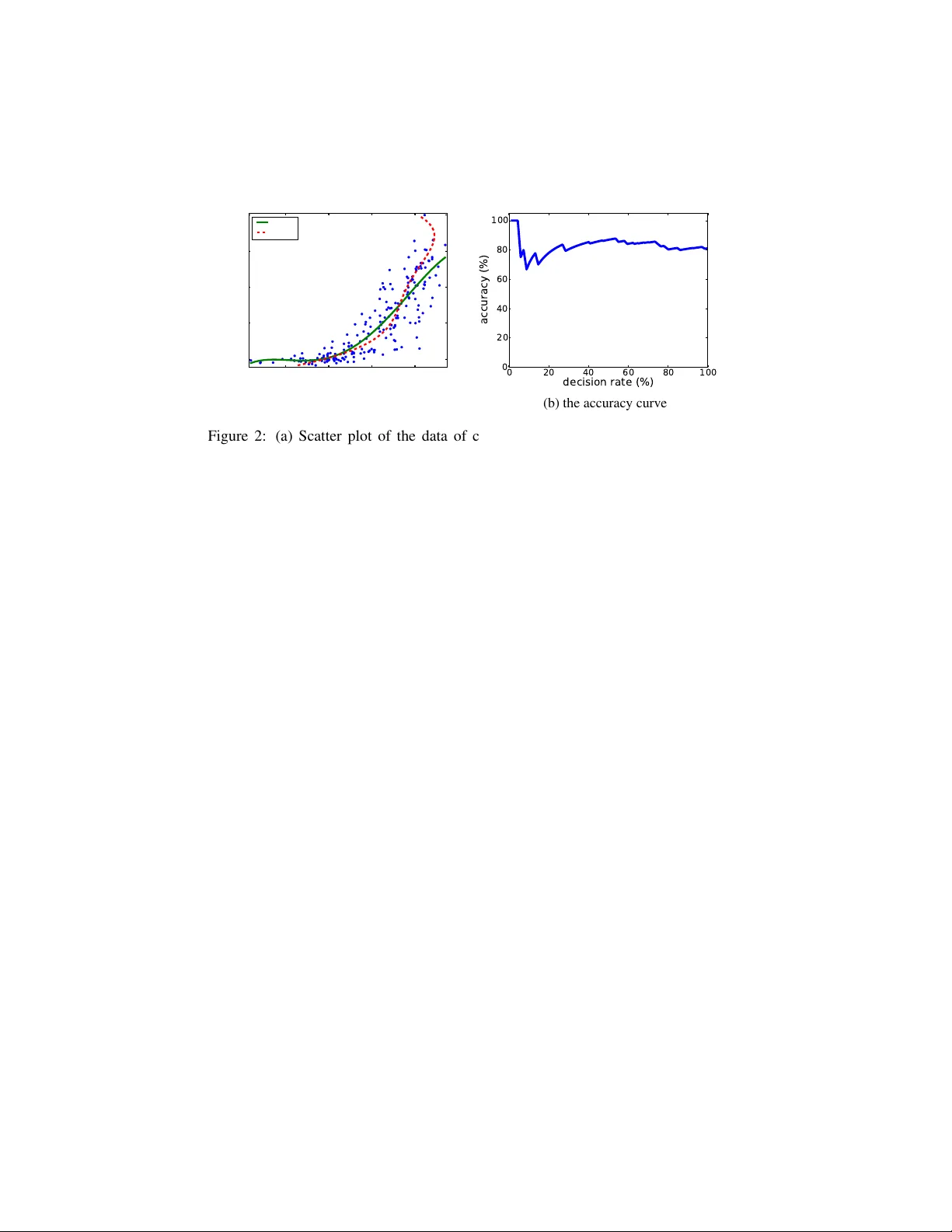

Leave a Comment