Basic models and questions in statistical network analysis

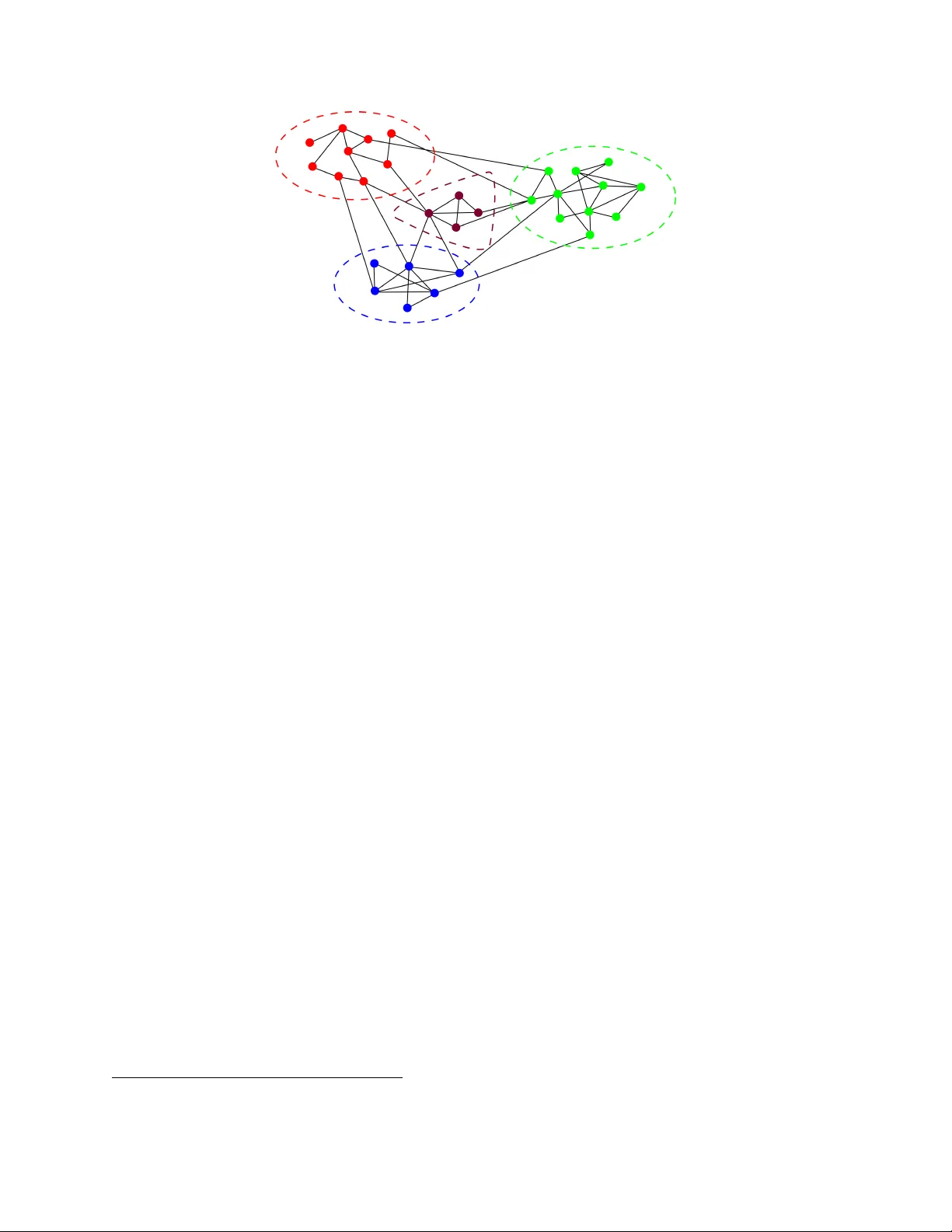

Extracting information from large graphs has become an important statistical problem since network data is now common in various fields. In this minicourse we will investigate the most natural statistical questions for three canonical probabilistic m…

Authors: Miklos Z. Racz, Sebastien Bubeck