Distributed Online Optimization in Dynamic Environments Using Mirror Descent

This work addresses decentralized online optimization in non-stationary environments. A network of agents aim to track the minimizer of a global time-varying convex function. The minimizer evolves according to a known dynamics corrupted by an unknown…

Authors: Shahin Shahrampour, Ali Jadbabaie

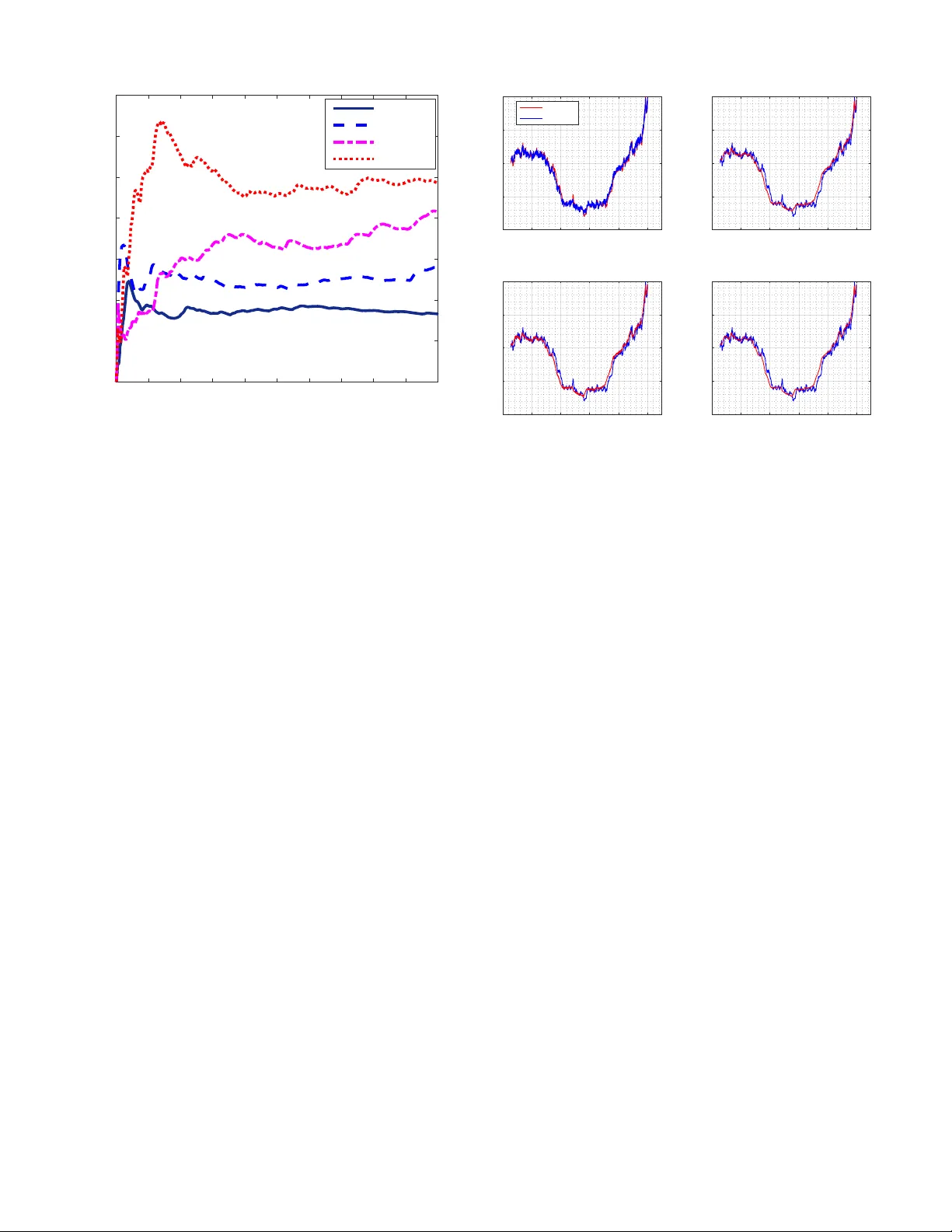

Abstract: This work addresses decentralized online optimization in non-stationary environments. A network of agents aims to track the minimizer of a global time-varying convex function. The minimizer evolves according to known dynamics corrupted by unknown, unstructured noise. At each time, the global function can be cast as a sum of a finite number of local functions, each of which is assigned to one agent in the network. Moreover, the local functions become available to agents sequentially, and agents do not have prior knowledge of future cost functions. Therefore, agents must communicate with each other to build an online approximation of the global function. We propose a decentralized variation of the celebrated Mirror Descent, developed by Nemirovski and Yudin. Using the notion of Bregman divergence in lieu of Euclidean distance for projection, Mirror Descent has been shown to be a powerful tool in large-scale optimization. Our algorithm builds on Mirror Descent, while ensuring that agents perform a consensus step to follow the global function and take into account the dynamics of the global minimizer. To measure the performance of the proposed online algorithm, we compare it to its offline counterpart, where the global functions are available a priori. The gap between the two is called dynamic regret. We establish a regret bound that scales inversely in the spectral gap of the network and, more notably, represents the deviation of the minimizer sequence with respect to the given dynamics. We then show that our results subsume a number of results in distributed optimization. We demonstrate the application of our method to decentralized tracking of dynamic parameters and verify the results via numerical experiments.

I. INTRODUCTION

Distributed convex optimization has received a great deal of interest in science and engineering. Classical engineering problems such as decentralized tracking, estimation, and detection are optimization problems in essence [1]–[7], and early studies on parallel and distributed computation date back three decades to the seminal works [8]–[10]. In any decentralized scheme, the objective is to perform a global task assigned to a number of agents in a network. Each individual agent has limited resources or partial information about the task. As a result, agents engage in local interactions to complement their insufficient knowledge and accomplish the global task. The use of decentralized techniques has increased rapidly, since they impose a low computational burden on agents and are robust to node failures, as opposed to centralized algorithms, which rely heavily on a single information-processing unit.

The authors gratefully acknowledge the support of ONR BRC Program on Decentralized, Online Optimization. Shahin Shahrampour is with the Department of Electrical Engineering at Harvard University, Cambridge, MA 02138 USA (e-mail: shahin@seas.harvard.edu). Ali Jadbabaie is with the Institute for Data, Systems, and Society at Massachusetts Institute of Technology, Cambridge, MA 02139 USA (e-mail: jadbabai@mit.edu).

In distributed optimization, the main task is often the minimization of a global convex function, written as the sum of local convex functions, where each agent holds a private copy of one specific local function. Then, based on a communication protocol, agents exchange local gradients to minimize the global cost function. Decentralized optimization is a mature discipline in addressing problems dealing with time-invariant cost functions [11]–[17]. However, in many real-world applications, cost functions vary over time.
Consider, for instance, the problem of tracking a moving target, where the goal is to follow the position, velocity, and acceleration of the target. One should tackle the problem by minimizing a loss function defined with respect to these parameters; however, since they are time-variant, the cost function becomes dynamic.

When the problem framework is dynamic in nature, there are two key challenges one needs to consider:
1) Agents often observe the local cost functions in an online or sequential fashion, i.e., the local functions are revealed only after the agents make their instantaneous decisions at each round, and the agents are unaware of future cost functions. In the last ten years, this problem (in the centralized domain) has been the main focus of the online optimization field in the machine learning community [18].
2) Any online algorithm should mimic the performance of its offline counterpart, and the gap between the two is called regret. The most stringent benchmark is an offline problem that aims to track the minimizer of the global cost function over time, which brings forward the notion of dynamic regret [19]. It is well known that this benchmark makes the problem intractable in the worst case. However, as studied in centralized online optimization [19]–[22], the hardness of the problem can be characterized via a complexity measure that captures the variation in the minimizer sequence.

In this paper, we aim to address the above directions simultaneously. We consider an online optimization problem, where the global cost is realized sequentially, and the objective is to track the minimizer of the function. The dynamics of the minimizer are common knowledge, but the time-varying minimizer sequence can deviate from these dynamics due to unstructured noise. At each time step, the global function can be cast as a sum of local functions, each of which is associated with one agent.
Therefore, agents need to exchange information to solve the global problem.

Our multi-agent tracking setup is reminiscent of distributed Kalman filtering [23]. However, there are fundamental distinctions in our approach: (i) We do not assume that the minimizer sequence is corrupted with Gaussian noise. Nor do we assume that this noise has a statistical distribution. Instead, we consider an adversarial-noise model with unknown structure. (ii) Agents' observations are not necessarily linear; in fact, the observations are local gradients, which are potentially non-linear. Furthermore, our focus is on finite-horizon analysis rather than asymptotic results. We also note that our setup differs from distributed particle filtering [24], as it is online and agents receive only one observation per iteration. Moreover, we reiterate that the noise does not follow any particular statistical distribution.

For this setup, we propose a decentralized version of the well-known Mirror Descent¹, developed by Nemirovski and Yudin [25]. Using the notion of Bregman divergence in lieu of Euclidean distance for projection, Mirror Descent has been shown to be a powerful tool in large-scale optimization. Our algorithm consists of three interleaved updates: (i) each agent follows the local gradient while staying close to previous estimates in the local neighborhood; (ii) agents take into account the dynamics of the minimizer sequence; (iii) agents average their estimates in their local neighborhood in a consensus step.

Motivated by centralized online optimization, we use the notion of dynamic regret to characterize the difference between our online decentralized algorithm and its offline centralized version. We establish a regret bound that scales inversely in the spectral gap of the network and, more notably, represents the deviation of the minimizer sequence with respect to the given dynamics.
That is, it highlights the impact of the arbitrary noise driving the dynamical model of the minimizer. We further consider stochastic optimization, where agents observe only noisy versions of their local gradients, and we prove that in this case our regret bound holds in expectation.

Our main theoretical contribution is a comprehensive analysis of networked online optimization in dynamic settings. Our results subsume two important classes of decentralized optimization in the literature: (i) decentralized optimization of time-invariant objectives, and (ii) decentralized optimization of time-variant objectives over fixed variables. This generalization is a consequence of allowing dynamics in both the objective and the variable.

We finally show that our algorithm is applicable to decentralized tracking of dynamic parameters. In fact, we show that the problem can be posed as the minimization of the square loss using the Euclidean distance as the Bregman divergence. We then empirically verify that the tracking quality depends on how well the parameter follows its given dynamics.

¹ Algorithms relying on Gradient Descent minimize Euclidean distance in the projection step. Mirror Descent generalizes the projection step using the concept of Bregman divergence [25], [26]. Euclidean distance is a special Bregman divergence that reduces Mirror Descent to Gradient Descent. Kullback-Leibler divergence is another well-known type of Bregman divergence (see e.g. [27] for more details on Bregman divergence).

A. Related Literature

This work is related to two distinct bodies of literature: (i) decentralized optimization, and (ii) online optimization in dynamic environments. Our goal in this work is to bridge the two and provide a general framework for decentralized online optimization in non-stationary environments.
Below, we provide an overview of the works related to both areas.

Decentralized Optimization: There are a host of results in the literature on decentralized optimization for time-invariant functions. The seminal work [11] studies distributed subgradient methods over time-varying networks and provides convergence analysis. The effect of stochastic gradients is then considered in [13]. Shi et al. [17] prove fast convergence rates for Lipschitz-differentiable objectives by adding a correction term to the decentralized gradient descent algorithm. Of particular relevance to this work is [28], where decentralized mirror descent has been developed for the case in which agents receive the gradients with a delay. More recently, the application of mirror descent to saddle point problems has been studied in [29]. Moreover, Rabbat [30] proposes a decentralized mirror descent for stochastic composite optimization problems and provides guarantees for strongly convex regularizers. In [31], Raginsky and Bouvrie investigate distributed stochastic mirror descent in the continuous-time domain. On the other hand, Duchi et al. [14] study dual averaging for distributed optimization and provide a comprehensive analysis of the impact of network parameters on the problem. The extension of dual averaging to online distributed optimization is considered in [32]. Mateos-Núñez and Cortés [33] consider online optimization using subgradient descent of local functions, where the graph structure is time-varying. In [34], a decentralized variant of Nesterov's primal-dual algorithm is proposed for online optimization. Finally, in [35], distributed online optimization is studied for strongly convex objective functions over time-varying networks.
Online Optimization in Dynamic Environments: In online optimization, the benchmark can be defined abstractly in terms of a time-varying sequence, a particular case of which is the minimizer sequence of a time-varying cost function. Several versions of the problem have been studied in the machine learning literature for the centralized case. In [19], Zinkevich develops the celebrated online gradient descent and considers its extension to time-varying sequences. The authors of [20] generalize this idea to study time-varying sequences following given dynamics. Besbes et al. [21] restrict their attention to minima sequences and introduce a complexity measure for the problem in terms of the variation in cost functions. For the same problem, the authors of [22] develop an adaptive algorithm whose regret bound is expressed in terms of the variation of both the functions and the minima sequence, while in [36] an improved rate is derived for strongly convex objectives. Moreover, online dynamic optimization with linear objectives is discussed in [37]. Fazlyab et al. [38] consider interior point methods and provide continuous-time analysis for the problem. Finally, Yang et al. [39] provide optimal bounds for the case when the minimizer belongs to the feasible set.

B. Organization

The paper is organized as follows. The notation, problem formulation, assumptions, and algorithm are described in Section II. In Section III, we provide our theoretical results characterizing the behavior of the dynamic regret. Section IV is dedicated to the application of our method to decentralized tracking of dynamic parameters. Section V concludes, and the proofs are given in Section VI (Appendix).

II. PROBLEM FORMULATION AND ALGORITHM

Notation: We use the following notation in the exposition of our results:

$[n]$: the set $\{1, 2, \dots, n\}$ for any integer $n$
$x^\top$: transpose of the vector $x$
$x(k)$: the $k$-th element of vector $x$
$I_n$: identity matrix of size $n$
$\Delta_d$: the $d$-dimensional probability simplex
$\langle \cdot, \cdot \rangle$: standard inner product operator
$\mathbb{E}[\cdot]$: expectation operator
$\|\cdot\|_p$: $p$-norm operator
$\|\cdot\|_*$: the dual norm of $\|\cdot\|$
$\lambda_i(W)$: the $i$-th largest eigenvalue of matrix $W$
$\sigma_i(W)$: the $i$-th largest singular value of matrix $W$

Throughout the paper, all vectors are in column format.

A. Decentralized Optimization in Dynamic Environments

In this work, we consider an optimization problem involving a global convex function. We let $\mathcal{X}$ be a convex set and represent the global function at time $t$ by $f_t : \mathcal{X} \to \mathbb{R}$. The global function is time-variant, and the goal is to track the minimizer of $f_t(\cdot)$, denoted by $x^\star_t$. We address a finite-time problem whose offline and centralized version can be posed as follows:

$$\underset{x_1,\dots,x_T}{\text{minimize}} \ \sum_{t=1}^{T} f_t(x_t) \quad \text{subject to} \quad x_t \in \mathcal{X}, \ t \in [T]. \tag{1}$$

However, we want to solve the problem in an online and decentralized fashion. In particular, the global function at each time $t$ can be written as the average of $n$ local functions,

$$f_t(x) := \frac{1}{n} \sum_{i=1}^{n} f_{i,t}(x), \tag{2}$$

where $f_{i,t} : \mathcal{X} \to \mathbb{R}$ is a local convex function on $\mathcal{X}$ for all $i \in [n]$. We have a network of $n$ agents facing two challenges in solving problem (1): (i) agent $j \in [n]$ receives private information only about $f_{j,t}(\cdot)$ and does not have access to the global function $f_t(\cdot)$, which is common to decentralized schemes; (ii) the functions are revealed to agents sequentially along the time horizon, i.e., at any time instance $s$, agent $j$ has observed $f_{j,t}(\cdot)$ for $t < s$, whereas the agent does not know $f_{j,t}(\cdot)$ for $s \le t \le T$, which is common to online settings.
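As a concrete illustration of (1) and (2), consider hypothetical quadratic local losses $f_{i,t}(x) = \frac{1}{2}\|x - b_{i,t}\|_2^2$ with $\mathcal{X} = \mathbb{R}^d$. This is an assumption made only for the sketch: on an unbounded set these losses are not Lipschitz, so Assumption 1 below would not hold globally. Because (1) has no coupling across time, it splits into $T$ separate problems, and the minimizer $x^\star_t$ of the average (2) is simply the mean of the $b_{i,t}$. The snippet below (NumPy assumed; `global_loss` and `offline_benchmark` are illustrative names, not from the paper) computes this offline, centralized benchmark, which needs every local function at once, precisely the information the agents lack.

```python
import numpy as np


def global_loss(x, b_t):
    """f_t(x) in (2) for hypothetical local losses f_{i,t}(x) = 0.5 * ||x - b_{i,t}||^2.

    x has shape (d,); b_t has shape (n, d), one row per agent.
    """
    local = 0.5 * np.sum((x - b_t) ** 2, axis=1)  # f_{i,t}(x) for each agent i
    return np.mean(local)                          # (1/n) * sum_i f_{i,t}(x)


def offline_benchmark(b):
    """Offline, centralized solution of (1), assuming X = R^d.

    b has shape (T, n, d). Problem (1) decouples over t, and for these
    quadratics the minimizer of f_t is the average of the b_{i,t}.
    """
    x_star = b.mean(axis=1)                        # x*_t for every t, shape (T, d)
    value = sum(global_loss(x_star[t], b[t]) for t in range(b.shape[0]))
    return x_star, value


rng = np.random.default_rng(0)
b = rng.standard_normal((5, 4, 3))                 # T = 5 rounds, n = 4 agents, d = 3
x_star, value = offline_benchmark(b)
print(x_star.shape, round(float(value), 4))
```

In the online, decentralized version, agent $j$ at round $s$ would only see its own rows `b[t, j]` for $t < s$.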
The agents can exchange information with one another, and their relationship is encoded via an undirected graph $\mathcal{G} = (\mathcal{V}, \mathcal{E})$, where $\mathcal{V} = [n]$ denotes the set of nodes (agents), and $\mathcal{E}$ is the set of edges (links between agents). Each agent $i$ assigns a positive weight $[W]_{ij}$ to the information received from agent $j \neq i$. Hence, the set of neighbors of agent $i$ is defined as $\mathcal{N}_i := \{j : [W]_{ij} > 0\}$.

Note that our framework subsumes two important classes of decentralized optimization in the literature:
1) Existing methods often consider time-invariant objectives (see e.g. [11], [14], [28]). This is simply the special case where $f_t(x) = f(x)$ and $x_t = x$ in (1).
2) Online algorithms deal with time-varying functions, but often the network's objective is to minimize the temporal average of $\{f_t(x)\}_{t=1}^T$ over a fixed variable $x$ (see e.g. [32], [33]). This can be captured by our setup when $x_t = x$ in (1).

To exhibit the online nature of the problem, the latter class above is usually reformulated using a popular performance metric called regret. Since in that setup $x_t = x$ for $t \in [T]$, denoting $x^\star := \operatorname{argmin}_{x \in \mathcal{X}} \sum_{t=1}^T f_t(x)$, the optimal value of problem (1) becomes $\sum_{t=1}^T f_t(x^\star)$. Then, the goal of the online algorithm is to mimic its offline version by minimizing the regret, defined as

$$\mathrm{Reg}^s_T = \frac{1}{n}\sum_{i=1}^n \sum_{t=1}^T f_t(x_{i,t}) - \sum_{t=1}^T f_t(x^\star), \tag{3}$$

where $x_{i,t}$ is the estimate of agent $i$ for $x^\star$ at time $t$. Moreover, the superscript "s" reiterates the fact that the benchmark is the minimum of the sum $\sum_{t=1}^T f_t(x)$ over a static (fixed) comparator variable $x$ that resides in the set $\mathcal{X}$. In this setup, a successful algorithm incurs sub-linear regret, which, when normalized by $T$, asymptotically closes the gap between the online algorithm and the offline algorithm.
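The static regret (3) can be evaluated directly once the whole sequence of local functions is known. The sketch below reuses the hypothetical quadratic losses $f_{i,t}(x) = \frac{1}{2}\|x - b_{i,t}\|_2^2$ with $\mathcal{X} = \mathbb{R}^d$ (an assumption), for which the fixed comparator $x^\star = \operatorname{argmin}_x \sum_t f_t(x)$ is the mean of all $b_{i,t}$. The array layout `estimates[t, i]` $= x_{i,t}$ and the function names are illustrative choices, not part of the paper.

```python
import numpy as np


def global_loss(x, b_t):
    """f_t(x) = (1/n) * sum_i 0.5 * ||x - b_{i,t}||^2 (hypothetical quadratic losses)."""
    return 0.5 * np.mean(np.sum((x - b_t) ** 2, axis=1))


def static_regret(estimates, b):
    """Reg^s_T in (3).

    estimates[t, i] holds x_{i,t} and b[t, i] holds b_{i,t}; both have shape (T, n, d).
    """
    T, n, d = estimates.shape
    # Fixed comparator: for these quadratics, argmin_x sum_t f_t(x) is the mean of all b_{i,t}.
    x_fixed = b.reshape(-1, d).mean(axis=0)
    online = sum(global_loss(estimates[t, i], b[t])
                 for t in range(T) for i in range(n)) / n
    offline = sum(global_loss(x_fixed, b[t]) for t in range(T))
    return online - offline


rng = np.random.default_rng(0)
b = rng.standard_normal((6, 4, 2))                                # T = 6, n = 4, d = 2
idle = np.zeros_like(b)                                           # every agent always plays x = 0
per_round = np.repeat(b.mean(axis=1, keepdims=True), 4, axis=1)   # x_{i,t} = x*_t
print(static_regret(idle, b), static_regret(per_round, b))
```

The second value is never positive: playing each round's own minimizer beats the best fixed comparator, which is why the dynamic benchmark in (4) is more stringent than (3).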
By contrast, the focal point of this paper is the scenario where functions and comparator variables evolve simultaneously, i.e., the variables $\{x_t\}_{t=1}^T$ are not constrained to be fixed in (1). Let $x^\star_t := \operatorname{argmin}_{x \in \mathcal{X}} f_t(x)$ be the minimizer of the global function at time $t$. Then, the optimal value of problem (1) is simply $\sum_{t=1}^T f_t(x^\star_t)$. Therefore, to capture the online nature of problem (1), we reformulate it using the notion of dynamic regret as

$$\mathrm{Reg}^d_T = \frac{1}{n}\sum_{i=1}^n \sum_{t=1}^T f_t(x_{i,t}) - \sum_{t=1}^T f_t(x^\star_t), \tag{4}$$

where $x_{i,t}$ is the estimate of agent $i$ for $x^\star_t$ at time $t$. The goal is to minimize the dynamic regret, which measures the gap between the online algorithm and its offline version. The superscript "d" indicates that the benchmark is the sum of minima $\sum_{t=1}^T f_t(x^\star_t)$, characterized by the dynamic variables $\{x^\star_t\}_{t=1}^T$ that lie in the set $\mathcal{X}$.

It is well known that the more stringent benchmark in the dynamic setup makes the problem intractable in the worst case, i.e., achieving sub-linear regret could be impossible. However, as studied in centralized online optimization [20]–[22], we would like to characterize the hardness of the problem via a complexity measure that captures the pattern of the minimizer sequence $\{x^\star_t\}_{t=1}^T$. More specifically, assuming that the dynamics matrix $A$ is common knowledge in the network and that

$$x^\star_{t+1} = A x^\star_t + v_t, \tag{5}$$

we want to prove a regret bound in terms of

$$C_T := \sum_{t=1}^T \left\| x^\star_{t+1} - A x^\star_t \right\| = \sum_{t=1}^T \|v_t\|, \tag{6}$$

which represents the deviation of the minimizer sequence with respect to the dynamics $A$. Note that generalizing the results to the time-variant case, i.e., when $A$ is replaced by $A_t$ in (5), is straightforward.

The problem setup (1) coupled with the dynamics given in (5) is reminiscent of distributed Kalman filtering [23]. However, there are fundamental distinctions here: (i) The mismatch noise $v_t$ is neither Gaussian nor of known statistical distribution.
It can be thought of as adversarial noise with unknown structure, which represents the deviation from the dynamics². (ii) Agents' observations are not necessarily linear; in fact, the observations are local gradients of $\{f_{i,t}(\cdot)\}_{t=1}^T$ and are non-linear when the objective is not quadratic. Furthermore, another implicit distinction in this work is our focus on finite-time analysis rather than asymptotic results. We note that our framework also differs from distributed particle filtering [24], since agents receive only one observation per iteration, and the mismatch noise $v_t$ has no structure or distribution.

With that in mind, to solve the online consensus optimization (4), we propose to decentralize the Mirror Descent algorithm [25] and to analyze it in a dynamic framework. The appealing feature of Mirror Descent is the extension of the projection step using Bregman divergence in lieu of Euclidean distance, which makes the algorithm applicable to a wide range of problems. Before defining Bregman divergence and elaborating on the algorithm, we state a couple of standard assumptions in the context of decentralized optimization.

Assumption 1. For any $i \in [n]$, the function $f_{i,t}(\cdot)$ is Lipschitz continuous on $\mathcal{X}$ with a uniform constant $L$. That is,
$$|f_{i,t}(x) - f_{i,t}(y)| \le L\|x - y\|,$$
for any $x, y \in \mathcal{X}$. This further implies³ that the gradient of $f_{i,t}(\cdot)$, denoted by $\nabla f_{i,t}(\cdot)$, is uniformly bounded on $\mathcal{X}$ by the constant $L$, i.e., we have $\|\nabla f_{i,t}(\cdot)\|_* \le L$.

Assumption 2. The network is connected, i.e., there exists a path from any agent $i \in [n]$ to any agent $j \in [n]$. Also, the matrix $W$ is doubly stochastic⁴ with positive diagonal. That is,
$$\sum_{i=1}^n [W]_{ij} = \sum_{j=1}^n [W]_{ij} = 1.$$

The connectivity constraint in Assumption 2 guarantees the information flow in the network. It implies that the eigenvalue $\lambda_1(W) = 1$ is unique and that the other eigenvalues of $W$ are strictly less than one in magnitude [40].
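Assumption 2 and the quantity $\sigma_2(W)$, which later enters Lemma 1 and Theorem 3, are easy to check numerically for a candidate weight matrix. The sketch below (NumPy assumed) builds a hypothetical "lazy ring" matrix with weight 1/2 on each agent itself and 1/4 on each of its two neighbors, tests double stochasticity, the positive diagonal, and connectivity, and computes $\sigma_2(W)$. The weight choice and the helper names are illustrative assumptions, not prescribed by the paper.

```python
import numpy as np


def lazy_ring(n):
    """Hypothetical ring weights: 1/2 on self, 1/4 on each neighbor (requires n >= 3)."""
    W = 0.5 * np.eye(n)
    for i in range(n):
        W[i, (i - 1) % n] += 0.25
        W[i, (i + 1) % n] += 0.25
    return W


def satisfies_assumption_2(W, tol=1e-10):
    """Doubly stochastic, positive diagonal, and connected communication graph."""
    n = W.shape[0]
    doubly_stochastic = (np.allclose(W.sum(axis=0), 1.0, atol=tol)
                         and np.allclose(W.sum(axis=1), 1.0, atol=tol))
    positive_diagonal = bool(np.all(np.diag(W) > 0))
    # An undirected graph is connected iff every entry of (I + adjacency)^(n-1) is positive.
    adjacency = (W > 0).astype(float)
    reach = np.linalg.matrix_power(np.eye(n) + adjacency, n - 1)
    connected = bool(np.all(reach > 0))
    return doubly_stochastic and positive_diagonal and connected


def sigma_2(W):
    """Second largest singular value of W (np.linalg.svd returns them in descending order)."""
    return np.linalg.svd(W, compute_uv=False)[1]


ring = lazy_ring(8)
complete = np.full((8, 8), 1.0 / 8)                          # all-to-all averaging
print(satisfies_assumption_2(ring), sigma_2(ring))           # equals 1/2 + cos(2*pi/8)/2
print(satisfies_assumption_2(complete), sigma_2(complete))   # zero up to rounding
```

For this ring, $\sigma_2(W) = \frac{1}{2} + \frac{1}{2}\cos(2\pi/n)$ approaches 1 as $n$ grows, so the spectral gap $1 - \sigma_2(W)$ shrinks and the bound of Corollary 4 degrades through its $1/(1-\sigma_2(W))$ factor; for the complete graph, $\sigma_2(W) = 0$.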
² In online learning, the focus is not on the distribution of data. Instead, data is thought to be generated arbitrarily, and its effect is observed through the loss functions [18].

³ This relationship is standard; see e.g. Lemma 2.6 in [18] for more details.

⁴ For the sake of simplicity, we assume that the topology is time-invariant and $W$ is fixed. The extension of the problem to time-varying topology is straightforward, as previously investigated in the literature (see e.g. [11], [14], [28]).

B. Decentralized Online Mirror Descent

The development of Mirror Descent relies on the Bregman divergence outlined in this section. Consider a convex set $\mathcal{X}$ in a Banach space $\mathcal{B}$, and let $\mathcal{R} : \mathcal{B} \to \mathbb{R}$ denote a 1-strongly convex function on $\mathcal{X}$ with respect to a norm $\|\cdot\|$. That is,
$$\mathcal{R}(x) \ge \mathcal{R}(y) + \langle \nabla\mathcal{R}(y), x - y \rangle + \frac{1}{2}\|x - y\|^2,$$
for any $x, y \in \mathcal{X}$. Then, the Bregman divergence $\mathcal{D}_\mathcal{R}(\cdot,\cdot)$ with respect to the function $\mathcal{R}(\cdot)$ is defined as follows:
$$\mathcal{D}_\mathcal{R}(x, y) := \mathcal{R}(x) - \mathcal{R}(y) - \langle x - y, \nabla\mathcal{R}(y) \rangle.$$
Combining the two relations above yields an important property of the Bregman divergence: for any $x, y \in \mathcal{X}$,
$$\mathcal{D}_\mathcal{R}(x, y) \ge \frac{1}{2}\|x - y\|^2, \tag{7}$$
due to the strong convexity of $\mathcal{R}(\cdot)$. Two well-known examples of Bregman divergence are the Euclidean distance and the Kullback-Leibler (KL) divergence, generated from $\mathcal{R}(x) = \frac{1}{2}\|x\|_2^2$ and $\mathcal{R}(x) = \sum_{i=1}^d \big(x(i)\log x(i) - x(i)\big)$, respectively.

Assumption 3. Let $x$ and $\{y_i\}_{i=1}^n$ be vectors in $\mathbb{R}^d$. The Bregman divergence satisfies separate convexity in the following sense:
$$\mathcal{D}_\mathcal{R}\Big(x, \sum_{i=1}^n \alpha(i)\, y_i\Big) \le \sum_{i=1}^n \alpha(i)\, \mathcal{D}_\mathcal{R}(x, y_i),$$
where $\alpha \in \Delta_n$ lies on the $n$-dimensional simplex.

The assumption is satisfied for commonly used Bregman divergences. For instance, the Euclidean distance evidently satisfies the condition. The KL divergence also satisfies it; we refer the reader to Theorem 6.4 in [27] for the proof.

Assumption 4.
The Bregman divergence satisfies a Lipschitz condition of the form
$$|\mathcal{D}_\mathcal{R}(x, z) - \mathcal{D}_\mathcal{R}(y, z)| \le K\|x - y\|,$$
for all $x, y, z \in \mathcal{X}$.

When the function $\mathcal{R}$ is Lipschitz on $\mathcal{X}$, the Lipschitz condition on the Bregman divergence is automatically satisfied. Again, for the Euclidean distance the assumption evidently holds. In the particular case of the KL divergence, the condition can be achieved by mixing in a uniform distribution to avoid the boundary. More specifically, consider $\mathcal{R}(x) = \sum_{i=1}^d \big(x(i)\log x(i) - x(i)\big)$, for which $|\nabla\mathcal{R}(x)| = |\sum_{i=1}^d \log x(i)| \le d\log T$ as long as $x \in \{\mu : \sum_{i=1}^d \mu(i) = 1;\ \mu(i) \ge \frac{1}{T},\ \forall i \in [d]\}$. Therefore, in this case the constant $K$ is of order $O(\log T)$ (see e.g. [22] for more comments on the assumption).

We are now ready to propose a three-step algorithm to solve the optimization problem formulated in terms of dynamic regret in (4). Let us define $\nabla_{i,t} := \nabla f_{i,t}(x_{i,t})$ as shorthand for the local gradients. Noting the dynamic framework, we develop the decentralized online mirror descent via the following updates⁵:
$$\hat{x}_{i,t+1} = \operatorname*{argmin}_{x \in \mathcal{X}} \big\{ \eta_t \langle x, \nabla_{i,t} \rangle + \mathcal{D}_\mathcal{R}(x, y_{i,t}) \big\}, \tag{8a}$$
$$x_{i,t} = A\hat{x}_{i,t}, \quad \text{and} \quad y_{i,t} = \sum_{j=1}^n [W]_{ij}\, x_{j,t}, \tag{8b}$$
where $\{\eta_t\}_{t=1}^T$ is the step-size sequence, and $A \in \mathbb{R}^{d \times d}$ is the given dynamics in (5), which is common knowledge. Recall that $x_{i,t} \in \mathbb{R}^d$ represents the estimate of agent $i$ for the global minimizer $x^\star_t$ at time $t$. The step-size sequence is positive and non-increasing. Our proposed methodology can also be recognized as the decentralized variant of the Dynamic Mirror Descent algorithm in [20], though we restrict our attention to linear dynamics. The update (8a) allows the algorithm to follow the private gradient while staying close to the previous estimates in the local neighborhood. This closeness is achieved in the sense of minimizing the Bregman divergence.
On the other hand, the first update in (8b) takes into account the potential dynamics that the minimizer sequence follows, and the second update in (8b) is the consensus term averaging the estimates in the local neighborhood.

Assumption 5. The mapping $A$ is assumed to be non-expansive. That is, the condition
$$\mathcal{D}_\mathcal{R}(Ax, Ay) \le \mathcal{D}_\mathcal{R}(x, y)$$
holds for all $x, y \in \mathcal{X}$, and $\|A\| \le 1$.

The assumption postulates a natural constraint on the mapping $A$: it does not allow the effect of a poor prediction (at one step) to be amplified as the algorithm moves forward.

III. THEORETICAL RESULTS

In this section, we state our theoretical results and their consequences. The proofs are presented later in the Appendix (Section VI). Our main result (Theorem 3) proves a bound on the dynamic regret, which captures the deviation of the minimizer trajectory from the dynamics $A$ (tracking error) as well as the decentralization cost (network error). After stating the theorem, we show that our result recovers previous rates on decentralized optimization (static regret) once the tracking error is removed. It also recovers previous rates on centralized online optimization in dynamic settings when the network error is factored out. Therefore, we establish that our generalization is bona fide.

A. Preliminary Results

We start with a convergence result on the local estimates, which presents an upper bound on the deviation of the local estimates at each iteration from their consensual value. A similar result has been proven in [28] for time-invariant functions without dynamics; however, the following lemma extends that result to the online setting and takes into account the dynamics $A$ in (8b).

⁵ The algorithm is initialized at $x_{i,t} = 0$ to avoid clutter in the analysis. In general, any initialization could work for the algorithm.

Lemma 1.
(Network Error) Let $\mathcal{X}$ be a convex set in a Banach space $\mathcal{B}$, let $\mathcal{R} : \mathcal{B} \to \mathbb{R}$ denote a 1-strongly convex function on $\mathcal{X}$ with respect to a norm $\|\cdot\|$, and let $\mathcal{D}_\mathcal{R}(\cdot,\cdot)$ represent the Bregman divergence with respect to $\mathcal{R}$. Furthermore, assume that the local functions are Lipschitz continuous (Assumption 1), the matrix $W$ is doubly stochastic (Assumption 2), and the mapping $A$ is non-expansive (Assumption 5). Then, the local estimates $\{x_{i,t}\}_{t=1}^T$ generated by the updates (8a)-(8b) satisfy
$$\|x_{i,t+1} - \bar{x}_{t+1}\| \le L\sqrt{n} \sum_{\tau=0}^{t} \eta_\tau\, \sigma_2^{\,t-\tau}(W),$$
for any $i \in [n]$, where $\bar{x}_t := \frac{1}{n}\sum_{i=1}^n x_{i,t}$.

It turns out that the error bound depends on the network parameter $\sigma_2(W)$ and the step-size sequence $\{\eta_t\}_{t=1}^T$. It is well known that a smaller $\sigma_2(W)$ brings the estimates closer to their average by speeding up the mixing rate (see e.g. results of [14]). For instance, when the communication is all-to-all, i.e., the graph is complete, $\sigma_2(W) = 0$ and the mixing rate is the fastest possible, since each agent receives the private gradients of all others after a single iteration of delay. On the other hand, a usual diminishing step-size, which asymptotically goes to zero, can guarantee asymptotic closeness; however, such a step-size sequence is better suited to static than to dynamic environments. We will discuss the choice of step-size carefully when we state our main result. Before that, we need another lemma.

Lemma 2. (Tracking Error) Let $\mathcal{X}$ be a convex set in a Banach space $\mathcal{B}$, let $\mathcal{R} : \mathcal{B} \to \mathbb{R}$ denote a 1-strongly convex function on $\mathcal{X}$ with respect to a norm $\|\cdot\|$, and let $\mathcal{D}_\mathcal{R}(\cdot,\cdot)$ represent the Bregman divergence with respect to $\mathcal{R}$. Furthermore, assume that the matrix $W$ is doubly stochastic (Assumption 2), the Bregman divergence satisfies separate convexity and the Lipschitz condition (Assumptions 3-4), and the mapping $A$ is non-expansive (Assumption 5).
Then, it holds that
$$\frac{1}{n}\sum_{i=1}^n \sum_{t=1}^T \left[\frac{1}{\eta_t}\mathcal{D}_\mathcal{R}(x^\star_t, y_{i,t}) - \frac{1}{\eta_t}\mathcal{D}_\mathcal{R}(x^\star_t, \hat{x}_{i,t+1})\right] \le \frac{2R^2}{\eta_{T+1}} + \sum_{t=1}^T \frac{1}{\eta_{t+1}}\left\|x^\star_{t+1} - A x^\star_t\right\|,$$
where $R^2 := \sup_{x,y \in \mathcal{X}} \mathcal{D}_\mathcal{R}(x, y)$.

In the update (8a), each agent $i$ calculates $\hat{x}_{i,t+1}$ while staying close to $y_{i,t}$ by minimizing the Bregman divergence. Lemma 2 bounds the difference between these two quantities when each is compared with $x^\star_t$ through the Bregman divergence. The relation of the left-hand side to the dynamic regret is not immediate; it becomes clear in the analysis. However, the term $\|x^\star_{t+1} - A x^\star_t\| = \|v_t\|$ in the bound highlights the impact of the mismatch noise $v_t$ on the tracking quality. Lemmata 1 and 2 disclose the critical parameters involved in the regret bound. We carefully discuss the consequences of these bounds in the subsequent section.

B. Finite-horizon Performance: Regret Bound

We now state our main result on the non-asymptotic performance of the decentralized online mirror descent in dynamic environments. The succeeding theorem provides the regret bound in the general case, and it is followed by a corollary characterizing the regret rate for the optimized fixed step-size. In particular, the theorem uses the results in the previous section to present an upper bound on the dynamic regret decomposed into tracking and network errors.

Theorem 3. Let $\mathcal{X}$ be a convex set in a Banach space $\mathcal{B}$, let $\mathcal{R} : \mathcal{B} \to \mathbb{R}$ denote a 1-strongly convex function on $\mathcal{X}$ with respect to a norm $\|\cdot\|$, and let $\mathcal{D}_\mathcal{R}(\cdot,\cdot)$ represent the Bregman divergence with respect to $\mathcal{R}$. Furthermore, assume that the local functions are Lipschitz continuous (Assumption 1), the matrix $W$ is doubly stochastic (Assumption 2), the Bregman divergence satisfies separate convexity and the Lipschitz condition (Assumptions 3-4), and the mapping $A$ is non-expansive (Assumption 5).
Then, using the local estimates $\{x_{i,t}\}_{t=1}^T$ generated by the updates (8a)-(8b), the regret (4) can be bounded as
$$\mathrm{Reg}^d_T \le E_{\mathrm{Track}} + E_{\mathrm{Net}},$$
where
$$E_{\mathrm{Track}} := \frac{2R^2}{\eta_{T+1}} + \sum_{t=1}^T \frac{K}{\eta_{t+1}}\left\|x^\star_{t+1} - A x^\star_t\right\| + \frac{L^2}{2}\sum_{t=1}^T \eta_t,$$
and
$$E_{\mathrm{Net}} := 4L^2\sqrt{n}\sum_{t=1}^T \sum_{\tau=0}^{t-1} \eta_\tau\, \sigma_2^{\,t-\tau-1}(W).$$

Corollary 4. Under the same conditions stated in Theorem 3, using the fixed step-size $\eta = \sqrt{(1 - \sigma_2(W))\, C_T / T}$ yields a regret bound of order
$$\mathrm{Reg}^d_T \le O\left(\sqrt{\frac{C_T\, T}{1 - \sigma_2(W)}}\right),$$
where $C_T = \sum_{t=1}^T \|x^\star_{t+1} - A x^\star_t\|$.

Proof: The proof of the corollary follows directly from substituting the step-size into the bound in Theorem 3. In more detail, with a fixed step-size, $\sum_{\tau=0}^{t-1} \eta\, \sigma_2^{\,t-\tau-1}(W) \le \eta / (1 - \sigma_2(W))$, so $E_{\mathrm{Net}} \le 4L^2\sqrt{n}\, T\eta / (1 - \sigma_2(W))$, while $E_{\mathrm{Track}} = (2R^2 + K C_T)/\eta + L^2 T \eta / 2$. With the chosen $\eta$, the terms $K C_T / \eta$ and $T\eta / (1 - \sigma_2(W))$ are both of order $\sqrt{C_T T / (1 - \sigma_2(W))}$, and the remaining terms are of no larger order when $L$, $K$, $R$, and $n$ are treated as constants and $C_T$ is bounded away from zero.

Theorem 3 decomposes the upper bound into two terms for a general step-size sequence $\{\eta_t\}_{t=1}^T$. In Corollary 4, we fix the step-size and observe the role of $C_T$ in controlling the regret bound. As we recall from (5), this quantity collects the mismatch errors $\{v_t\}_{t=1}^T$, which are not necessarily Gaussian or of any particular statistical distribution.

In Section II, we discussed that our setup generalizes some previous works, and it is important to notice that our result recovers the corresponding rates when restricted to those special cases:
1) When the global function $f_t(x) = f(x)$ is time-invariant, the minimizer sequence $\{x^\star_t\}_{t=1}^T$ is fixed, i.e., the mapping $A = I_d$ and $v_t = 0$ in (5). In this case, the term involving $\|x^\star_{t+1} - A x^\star_t\|$ in $E_{\mathrm{Track}}$ of Theorem 3 is equal to zero, and we can use the step-size $\eta = \sqrt{(1 - \sigma_2(W))/T}$ to recover the result of comparable algorithms, such as [14], in which distributed dual averaging is proposed.
2) The same argument holds when the global function is time-variant but the comparator variables are fixed. In this case, the problem reduces to minimizing the static regret (3). Since $\|x^\star_{t+1} - A x^\star_t\| = 0$ again, our result recovers that of [32] on distributed online dual averaging.
3) When the graph is complete, $\sigma_2(W) = 0$ and the $\mathbf{E}_{\mathrm{Net}}$ term in Theorem 3 vanishes. We then recover the results of [20] on centralized online learning in dynamic environments.

As we mentioned earlier, when the mismatch errors $\{v_t\}_{t=1}^{T}$ are large, the minimizer sequence $\{x^\star_t\}_{t=1}^{T}$ fluctuates drastically, and $C_T$ could become linear in time. The bound in the corollary is then not useful for keeping the dynamic regret sub-linear. Such behavior is natural since, even in centralized online optimization, the algorithm receives only a single gradient to predict the next step⁶. As discussed in Section II, in this worst case the problem is generally intractable. However, our goal was to consider $C_T$ as a complexity measure of the problem environment and to express the regret bound with respect to this parameter. In practice, if the algorithm is allowed to query multiple gradients per time step, the error would be reduced, but this direction is beyond the scope of this paper.

C. Optimization with Stochastic Gradients

In many engineering applications such as decentralized tracking, learning, and estimation, agents' observations are usually noisy. In this section, we demonstrate that the result of Theorem 3 does not rely on exact gradients, and that it holds in expectation when agents follow stochastic gradients. Mathematically speaking, let $\mathcal{F}_t$ be the σ-field containing all information prior to the outset of round $t+1$. Let also $\tilde{\nabla}_{i,t}$ represent the stochastic gradient observed by agent $i$ after calculating the estimate $x_{i,t}$. Then, we define a stochastic oracle that provides noisy gradients satisfying the following conditions:
$$\mathbb{E}\big[\tilde{\nabla}_{i,t} \,\big|\, \mathcal{F}_{t-1}\big] = \nabla_{i,t}, \qquad \mathbb{E}\big[\|\tilde{\nabla}_{i,t}\|_*^2 \,\big|\, \mathcal{F}_{t-1}\big] \le G^2. \tag{9}$$

The new updates take the following form:
$$\hat{x}_{i,t+1} = \operatorname*{argmin}_{x\in\mathcal{X}}\left\{\eta_t\langle x, \tilde{\nabla}_{i,t}\rangle + D_{\mathcal{R}}(x, y_{i,t})\right\}, \tag{10a}$$
$$x_{i,t} = A\hat{x}_{i,t}, \quad \text{and} \quad y_{i,t} = \sum_{j=1}^{n}[W]_{ij}\,x_{j,t}, \tag{10b}$$
where the only distinction between (10a) and (8a) is the use of the stochastic gradient in the former. A commonly used model to generate stochastic gradients satisfying (9) is additive zero-mean noise with bounded variance. We now discuss the impact of stochastic gradients in the following theorem.

Theorem 5. Let $\mathcal{X}$ be a convex set in a Banach space $\mathcal{B}$, let $\mathcal{R}: \mathcal{B} \to \mathbb{R}$ denote a 1-strongly convex function on $\mathcal{X}$ with respect to a norm $\|\cdot\|$, and let $D_{\mathcal{R}}(\cdot,\cdot)$ represent the Bregman divergence with respect to $\mathcal{R}$. Furthermore, assume that the local functions are Lipschitz continuous (Assumption 1), the matrix $W$ is doubly stochastic (Assumption 2), the Bregman divergence satisfies the separate convexity and the Lipschitz condition (Assumptions 3 and 4), and the mapping $A$ is non-expansive (Assumption 5). Let the local estimates $\{x_{i,t}\}_{t=1}^{T}$ be generated by updates (10a)-(10b), where the stochastic gradients satisfy the condition (9). Then,
$$\mathbb{E}\big[\mathrm{Reg}^d_T\big] \le \frac{2R^2}{\eta_{T+1}} + \sum_{t=1}^{T}\frac{K}{\eta_{t+1}}\left\|x^\star_{t+1} - Ax^\star_t\right\| + \frac{G^2}{2}\sum_{t=1}^{T}\eta_t + 4G^2\sqrt{n}\sum_{t=1}^{T}\sum_{\tau=0}^{t-1}\eta_\tau\,\sigma_2^{t-\tau-1}(W).$$

The theorem indicates that, when using stochastic gradients, the result of Theorem 3 holds in expectation. Thus, the algorithm can be used in dynamic environments where agents' observations are noisy.

⁶ Even in a more structured problem setting such as Kalman filtering, when we know the exact value of a state at a time step, we cannot exactly predict the next state, and we incur a minimum mean-squared error of the size of the noise variance.

IV. NUMERICAL EXPERIMENT: STATE ESTIMATION AND TRACKING DYNAMIC PARAMETERS

The generality of Mirror Descent stems from the freedom over the selection of the Bregman divergence.
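As an illustration of this freedom, the following minimal Python sketch (not the authors' implementation) writes the local step (8a) for two choices of $\mathcal{R}$: $\mathcal{R}(x) = \frac{1}{2}\|x\|_2^2$ on $\mathbb{R}^d$, for which (8a) is a plain gradient step, and the negative entropy on the probability simplex (1-strongly convex with respect to the $\ell_1$ norm by Pinsker's inequality), for which (8a) becomes the exponentiated-gradient (multiplicative) update. It also evaluates the three-point inequality of Lemma 6 in the Appendix numerically; for these two exact minimizers the inequality holds with equality, so the reported gap should be zero up to rounding. All function names are hypothetical.

```python
import math


def bregman_euclidean(x, y):
    """D_R(x, y) for R(x) = 0.5 * ||x||_2^2, which equals 0.5 * ||x - y||_2^2."""
    return 0.5 * sum((a - b) ** 2 for a, b in zip(x, y))


def bregman_entropy(x, y):
    """D_R(x, y) for R(x) = sum_k x_k log x_k on the simplex, i.e. the KL divergence."""
    return sum(a * math.log(a / b) for a, b in zip(x, y) if a > 0)


def mirror_step_euclidean(y, grad, eta):
    """argmin_x eta*<x, grad> + 0.5*||x - y||^2 over R^d: a plain gradient step."""
    return [yk - eta * gk for yk, gk in zip(y, grad)]


def mirror_step_entropy(y, grad, eta):
    """argmin_x eta*<x, grad> + KL(x, y) over the simplex: exponentiated gradient."""
    w = [yk * math.exp(-eta * gk) for yk, gk in zip(y, grad)]
    total = sum(w)
    return [wk / total for wk in w]


def lemma6_gap(step, bregman, y, grad, eta, d):
    """RHS minus LHS of the three-point inequality of Lemma 6 with a = eta*grad, c = y.

    A non-negative value (up to rounding) means the inequality holds for this d.
    """
    x_hat = step(y, grad, eta)
    lhs = sum((xh - dk) * eta * gk for xh, dk, gk in zip(x_hat, d, grad))
    rhs = bregman(d, y) - bregman(d, x_hat) - bregman(x_hat, y)
    return rhs - lhs


if __name__ == "__main__":
    y = [0.2, 0.3, 0.5]   # plays the role of y_{i,t} after the consensus step
    g = [1.0, -2.0, 0.5]  # plays the role of the local gradient
    d = [0.6, 0.1, 0.3]   # an arbitrary comparator in the feasible set
    print(mirror_step_entropy(y, g, 0.1))
    print(lemma6_gap(mirror_step_entropy, bregman_entropy, y, g, 0.1, d))
    print(lemma6_gap(mirror_step_euclidean, bregman_euclidean, y, g, 0.1, d))
```

Only the mirror step changes between the two choices; the consensus step (8b) and the prediction by $A$ are unaffected by the choice of $\mathcal{R}$.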
A particularly well-known Bregman divergence is the Euclidean distance, which turns our framework into state estimation and tracking. In this section, we focus on this scenario as an application of our method. Distributed state estimation and tracking of dynamic parameters have a long history in the control and signal processing literature. However, there are key distinctions in our approach to the dynamical model of the parameter and to agents' observations. We elaborate on these differences as we describe our numerical experiment.

Let us consider a slowly maneuvering target in the 2D plane and assume that each position component of the target evolves independently according to a near constant velocity model [41]. The state of the target at each time consists of four components: horizontal position, horizontal velocity, vertical position, and vertical velocity. Therefore, representing such a state at time $t$ by $x^\star_t \in \mathbb{R}^4$, the state space model takes the form $x^\star_{t+1} = Ax^\star_t + v_t$, where $v_t \in \mathbb{R}^4$ is the system noise and, using $\otimes$ for the Kronecker product, $A$ is described as
$$A = I_2 \otimes \begin{bmatrix} 1 & \Delta \\ 0 & 1 \end{bmatrix},$$
with $\Delta$ being the sampling interval⁷.

⁷ The sampling interval of $\Delta$ (seconds) is equivalent to the sampling rate of $1/\Delta$ (Hz).

The goal is to track $x^\star_t$ using a network of agents. This problem has been studied in the context of distributed Kalman filtering [23], [42], state estimation [43]–[45], and particle filtering [24], [46], [47]. However, as opposed to Kalman filtering, we need not assume that the system noise $v_t$ is Gaussian. Also, unlike particle filtering, we do not assume receiving a large number of samples (particles) per iteration since our setup is online, i.e., agents only observe one sample per iteration. Moreover, we do not assume a statistical distribution on $v_t$ in our analysis, which makes our framework different from state estimation.
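As a small illustration, the Python sketch below builds $A$ from its Kronecker form and applies the noiseless part of the model once. The helper names are hypothetical, and matrices are plain lists of rows to keep the example dependency-free; the state ordering (horizontal position, horizontal velocity, vertical position, vertical velocity) is the one implied by the block structure $I_2 \otimes [\,1\ \ \Delta;\ 0\ \ 1\,]$.

```python
def kron(P, Q):
    """Kronecker product of two matrices stored as lists of rows."""
    return [[P[i][j] * Q[k][l] for j in range(len(P[0])) for l in range(len(Q[0]))]
            for i in range(len(P)) for k in range(len(Q))]


def constant_velocity_matrix(delta):
    """A = I_2 kron [[1, delta], [0, 1]]; state = (p_h, v_h, p_v, v_v)."""
    identity2 = [[1.0, 0.0], [0.0, 1.0]]
    block = [[1.0, delta], [0.0, 1.0]]
    return kron(identity2, block)


def matvec(M, x):
    """Matrix-vector product for list-of-rows matrices."""
    return [sum(m * xk for m, xk in zip(row, x)) for row in M]


if __name__ == "__main__":
    A = constant_velocity_matrix(0.1)  # Delta = 0.1 s, as in the experiment
    for row in A:
        print(row)
    # Noiseless prediction A x*_t: each position advances by Delta times its velocity.
    print(matvec(A, [0.0, 1.0, 0.0, 2.0]))  # approximately [0.1, 1.0, 0.2, 2.0]
```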
We have a model-free approach in which the noise can be deterministic with unknown structure, or even stochastic with dependence over time. For our experiment, we generate this noise according to a zero-mean Gaussian distribution with covariance matrix $\Sigma$ as follows:
$$\Sigma = \sigma_v^2\, I_2 \otimes \begin{bmatrix} \Delta^3/3 & \Delta^2/2 \\ \Delta^2/2 & \Delta \end{bmatrix}.$$
We let the sampling interval be $\Delta = 0.1$ seconds, which is equivalent to a frequency of 10 Hz. The constant $\sigma_v^2$ is changed in different scenarios, so we describe the choice of this parameter later. Importantly, we remark that although this noise is generated randomly, it is fixed within each run of our experiment. That is, the noise is generated once and remains fixed throughout the run, so it can be considered deterministic.

We consider a sensor network of $n = 25$ agents located on a $5 \times 5$ grid. Agents aim to track the moving target $x^\star_t$ collaboratively. At time $t$, agent $i$ observes $z_{i,t}$, a noisy version of one coordinate of $x^\star_t$, as follows:
$$z_{i,t} = e_{k_i}^\top x^\star_t + w_{i,t},$$
where $w_{i,t} \in \mathbb{R}$ denotes the observation noise, and $e_k$ is the $k$-th unit vector in the standard basis of $\mathbb{R}^4$ for $k \in \{1, 2, 3, 4\}$. We divide agents into four groups, and for each group we choose one specific $k_i$ from the set $\{1, 2, 3, 4\}$. Furthermore, the observation noise must satisfy the standard assumption of being zero-mean and finite-variance. Our results do not depend on Gaussian noise, so we generate $w_{i,t}$ independently from a uniform distribution on $[-1, 1]$.

Though not locally observable to each agent, it is straightforward to see that the target $x^\star_t$ is globally identifiable from the standpoint of the whole network (see, e.g., [43] for the exact definition of global identifiability in a general tracking problem). At time $t$, each agent $i$ forms an estimate $x_{i,t}$ of $x^\star_t$ based on observations $\{z_{i,\tau}\}_{\tau=1}^{t-1}$. After that, the new signal $z_{i,t}$ becomes available to the agent.
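The noise and observation models can be sketched in Python as follows. This is an illustration under assumptions, not the authors' code: each $2\times 2$ block of $\Sigma$ is sampled through its closed-form Cholesky factor (whose second diagonal entry works out to $\sqrt{\Delta}/2$), the noise sequence is drawn once from a fixed seed so that it stays fixed within a run, and the grouping of agents (agent $i$ observes coordinate $i \bmod 4$, 0-based) is a hypothetical choice, since the text does not specify the groups.

```python
import math
import random

DELTA = 0.1  # sampling interval in seconds (10 Hz), as in the experiment


def noise_block_cholesky(delta):
    """Lower-triangular L with L L^T = [[delta^3/3, delta^2/2], [delta^2/2, delta]]."""
    l11 = math.sqrt(delta ** 3 / 3.0)
    l21 = (delta ** 2 / 2.0) / l11
    l22 = math.sqrt(delta - l21 ** 2)  # equals sqrt(delta) / 2
    return [[l11, 0.0], [l21, l22]]


def system_noise_sequence(T, sigma_v2, delta, seed):
    """T draws of v_t ~ N(0, sigma_v2 * I_2 kron Q(delta)), generated once per run.

    The horizontal and vertical 2x2 blocks are sampled independently.
    """
    rng = random.Random(seed)
    (l11, _), (l21, l22) = noise_block_cholesky(delta)
    s = math.sqrt(sigma_v2)
    seq = []
    for _ in range(T):
        v = []
        for _block in range(2):
            g1, g2 = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
            v += [s * l11 * g1, s * (l21 * g1 + l22 * g2)]
        seq.append(v)
    return seq


def observed_coordinate(i):
    """Hypothetical group assignment: agent i (0-based) observes coordinate i mod 4."""
    return i % 4


def observe(x_star, i, rng):
    """z_{i,t} = e_{k_i}^T x*_t + w_{i,t}, with w_{i,t} ~ Uniform[-1, 1]."""
    return x_star[observed_coordinate(i)] + rng.uniform(-1.0, 1.0)


if __name__ == "__main__":
    noise = system_noise_sequence(T=5, sigma_v2=0.5, delta=DELTA, seed=0)
    rng = random.Random(1)
    x_star = [0.0, 1.0, 0.0, 2.0]
    print(noise[0])
    print([observe(x_star, i, rng) for i in range(25)])
```

Fixing the seed is one simple way to realize "generated once and fixed throughout"; storing the drawn sequence and replaying it would serve equally well.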
The online nature of the problem allows us to pose it as an instance of online optimization formulated in (4). To derive an explicit update for $x_{i,t}$, we need to introduce the loss functions. We use the local square loss
$$f_{i,t}(x) := \mathbb{E}\Big[\big(z_{i,t} - e_{k_i}^\top x\big)^2 \,\Big|\, x^\star_t\Big]$$
for each agent $i$, resulting in the network loss
$$f_t(x) := \frac{1}{n}\sum_{i=1}^{n}\mathbb{E}\Big[\big(z_{i,t} - e_{k_i}^\top x\big)^2 \,\Big|\, x^\star_t\Big].$$
In our experiment $v_t$ is a deterministic noise, but $x^\star_t$ could be random when $v_t$ is random, so we use the conditional expectation in both definitions to be precise. Now, using the Euclidean distance as the Bregman divergence in updates (10a)-(10b), we can derive the following update:
$$x_{i,t} = \sum_{j=1}^{n}[W]_{ij}\,Ax_{j,t-1} + \eta_t\, Ae_{k_i}\big(z_{i,t-1} - e_{k_i}^\top x_{i,t-1}\big).$$

[Figure 1 plot: normalized expected dynamic regret versus iteration (0 to 1000) for $\sigma_v^2 \in \{0.25, 0.5, 0.75, 1\}$.]

Fig. 1. The plot of dynamic regret versus iterations. Naturally, when $\sigma_v^2$ is smaller, the innovation noise added to the dynamics is smaller with high probability, and the network incurs a lower dynamic regret. In this plot, the dynamic regret is normalized by iterations, so the $y$-axis is $\mathbb{E}[\mathrm{Reg}^d_T]/T$.

We fix the step size to $\eta_t = \eta = 0.5$, since using a diminishing step size is not useful in tracking unless the system noise is also diminishing [48]. The update is akin to consensus+innovation updates in the literature (see, e.g., [48]–[50]), though we recall that we did not analyze this update for a system noise $v_t$ with a statistical distribution. It is proved in [51] that in decentralized tracking, the dynamic regret can be expressed in terms of the tracking error $\|x_{i,t} - x^\star_t\|$ of all agents. More specifically, the dynamic regret averages the tracking error over space and time (when normalized by $T$).
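An end-to-end Python sketch of this consensus+innovation update is given below. Several details are not specified in the text and are assumptions made only for this sketch: the weight matrix $W$ (lazy Metropolis-Hastings weights on the $5\times 5$ grid, which are symmetric and doubly stochastic), the observation groups (agent $i$ observes coordinate $i \bmod 4$), zero initial target state and estimates, and the regret (4) read as the agent-averaged sum of $f_t(x_{i,t}) - f_t(x^\star_t)$. Under the square loss above, that difference equals $\frac{1}{n}\sum_{j}\big(e_{k_j}^\top(x_{i,t} - x^\star_t)\big)^2$ because the observation-noise variance cancels, and the constant factor 2 of the square-loss gradient is taken as absorbed into $\eta$, matching the displayed update. The sketch is not expected to reproduce Fig. 1 numerically.

```python
import math
import random


def lazy_metropolis_grid(side):
    """Hypothetical W: lazy Metropolis-Hastings weights on a side x side grid (4-neighbour)."""
    n = side * side
    nbrs = [[] for _ in range(n)]
    for r in range(side):
        for c in range(side):
            i = r * side + c
            if r + 1 < side:
                nbrs[i].append(i + side)
                nbrs[i + side].append(i)
            if c + 1 < side:
                nbrs[i].append(i + 1)
                nbrs[i + 1].append(i)
    W = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in nbrs[i]:
            W[i][j] = 0.5 / (1 + max(len(nbrs[i]), len(nbrs[j])))
        W[i][i] = 1.0 - sum(W[i])  # diagonal makes every row (and column) sum to one
    return W


def simulate(T=1000, sigma_v2=0.5, eta=0.5, delta=0.1, side=5, seed=0):
    """Normalized regret (1/T) * sum_t (1/n) sum_i [f_t(x_{i,t}) - f_t(x*_t)]."""
    rng = random.Random(seed)
    n = side * side
    W = lazy_metropolis_grid(side)
    k = [i % 4 for i in range(n)]  # hypothetical observation groups
    l11 = math.sqrt(delta ** 3 / 3.0)  # Cholesky factor of the 2x2 noise block
    l21 = (delta ** 2 / 2.0) / l11
    l22 = math.sqrt(delta - l21 ** 2)
    s = math.sqrt(sigma_v2)

    def A(x):
        # I_2 kron [[1, delta], [0, 1]] with state ordered (p_h, v_h, p_v, v_v)
        return [x[0] + delta * x[1], x[1], x[2] + delta * x[3], x[3]]

    x_star = [0.0] * 4  # assumed initial target state
    x = [[0.0] * 4 for _ in range(n)]  # assumed initial estimates
    total = 0.0
    for _ in range(T):
        # f_t(x_i) - f_t(x*_t) = (1/n) sum_j (x_i[k_j] - x*_t[k_j])^2, averaged over i
        for i in range(n):
            total += sum((x[i][k[j]] - x_star[k[j]]) ** 2 for j in range(n)) / n ** 2
        z = [x_star[k[i]] + rng.uniform(-1.0, 1.0) for i in range(n)]
        Ax = [A(xj) for xj in x]
        new_x = []
        for i in range(n):
            # consensus on predicted states: sum_j W_ij A x_{j,t}
            mixed = [sum(W[i][j] * Ax[j][m] for j in range(n)) for m in range(4)]
            # innovation: eta * A e_{k_i} (z_{i,t} - e_{k_i}^T x_{i,t})
            innov = [0.0] * 4
            innov[k[i]] = eta * (z[i] - x[i][k[i]])
            new_x.append([a + b for a, b in zip(mixed, A(innov))])
        x = new_x
        # target dynamics x*_{t+1} = A x*_t + v_t with v_t ~ N(0, sigma_v2 * I_2 kron Q)
        g = [rng.gauss(0.0, 1.0) for _ in range(4)]
        v = [s * l11 * g[0], s * (l21 * g[0] + l22 * g[1]),
             s * l11 * g[2], s * (l21 * g[2] + l22 * g[3])]
        x_star = [a + b for a, b in zip(A(x_star), v)]
    return total / T


if __name__ == "__main__":
    for sv2 in (0.25, 0.5, 0.75, 1.0):
        print(sv2, simulate(T=300, sigma_v2=sv2, seed=0))
```

The lazy weights are chosen here because their eigenvalues lie in $[0, 1]$, which keeps this particular sketch numerically well behaved with $\eta = 0.5$; any other doubly stochastic $W$ satisfying Assumption 2 could be substituted.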
Exploiting this connection and combining it with the result of Theorem 5, we observe that when the parameter does not deviate too much from the dynamics, i.e., when $\sum_{t=1}^{T}\|v_t\|$ is small, the bound on the dynamic regret (or equivalently the collective tracking error) becomes small, and vice versa. We demonstrate this intuitive idea by tuning $\sigma_v^2$. Larger values of $\sigma_v^2$ are more likely to cause deviations from the dynamics $A$; therefore, we expect a larger dynamic regret (worse performance) when $\sigma_v^2$ is large. In Fig. 1, we plot the dynamic regret for $\sigma_v^2 \in \{0.25, 0.5, 0.75, 1\}$. For each value of $\sigma_v^2$, we run the experiment 50 times and average the dynamic regret over all runs. As we conjectured, the performance improves as $\sigma_v^2$ decreases.

Let us now focus on the case $\sigma_v^2 = 0.5$. For one run of this case, we provide a snapshot of the target trajectory (in red) in Fig. 2 and plot the estimator trajectory (in blue) for agents $i \in \{1, 6, 12, 23\}$. While the dynamic regret is controlled in expectation (Theorem 5), Fig. 2 suggests that agents' estimators closely follow the trajectory of the moving target with high probability.

[Figure 2 plot: four panels (Agent 1, Agent 6, Agent 12, Agent 23), each showing the target and estimator trajectories in the plane; legend: Target, Estimator.]

Fig. 2. The trajectory of $x^\star_t$ over $T = 1000$ iterations is shown in red. We also depict the trajectory of the estimator $x_{i,t}$ (shown in blue) for $i \in \{1, 6, 12, 23\}$ and observe that it closely follows $x^\star_t$ in every case.

V. CONCLUSION

This work unifies a number of frameworks in the literature by addressing decentralized online optimization in dynamic environments. We considered tracking the minimizer of a global time-varying convex function via a network of agents.
The minimizer of the global function has dynamics known to the agents, but an unknown, unstructured noise causes deviation from these dynamics. The global function can be written as a sum of local functions at each time step, and each agent can only observe its associated local function. However, these local functions appear sequentially, and agents do not have prior knowledge of the future cost functions. Our proposed algorithm for this setup can be cast as a decentralized version of Mirror Descent. However, the algorithm possesses two additional steps to incorporate agents' interactions and the dynamics of the minimizer. We used a notion of network dynamic regret to measure the performance of our algorithm versus its offline counterpart. We established that the regret bound scales inversely in the spectral gap of the network and captures the deviation of the minimizer sequence with respect to the given dynamics. We next considered stochastic optimization, where agents observe only noisy versions of their local gradients, and we proved that in this case our regret bound holds in expectation. We showed that our generalization is valid and convincing in the sense that the results recover those of distributed optimization in online and offline settings. We also applied our method to decentralized tracking of dynamic parameters in the numerical experiments.

Our work opens a few directions for future research. We conjecture that our theoretical results can be strengthened in a setup where agents receive multiple gradients per time step. However, as mentioned in Section III, this is still an open question. Also, the result of Corollary 4 assumes the step-size is tuned in advance. This requires knowledge of $C_T$ or an upper bound on this quantity. For the centralized setting, one can potentially avoid the issue using the doubling trick, which requires online accumulation of the mismatch noise $v_t$.
However, it is more natural to consider that this noise is not fully observable in the decentralized setting. Therefore, an adaptive solution to step-size tuning remains open for future investigation.

VI. APPENDIX

The following lemma is standard in the analysis of mirror descent. We state the lemma here and invoke it in our analysis later.

Lemma 6 (Beck and Teboulle [26]). Let $\mathcal{X}$ be a convex set in a Banach space $\mathcal{B}$, let $\mathcal{R}: \mathcal{B} \to \mathbb{R}$ denote a 1-strongly convex function on $\mathcal{X}$ with respect to a norm $\|\cdot\|$, and let $D_{\mathcal{R}}(\cdot,\cdot)$ represent the Bregman divergence with respect to $\mathcal{R}$. Then, any update of the form
$$x^\star = \operatorname*{argmin}_{x\in\mathcal{X}}\left\{\langle a, x\rangle + D_{\mathcal{R}}(x, c)\right\}$$
satisfies the following inequality
$$\langle x^\star - d, a\rangle \le D_{\mathcal{R}}(d, c) - D_{\mathcal{R}}(d, x^\star) - D_{\mathcal{R}}(x^\star, c)$$
for any $d \in \mathcal{X}$.

A. Proof of Lemma 1

Applying Lemma 6 to the update (8a) with $d = y_{i,t}$, we get
$$\eta_t\langle \hat{x}_{i,t+1} - y_{i,t}, \nabla_{i,t}\rangle \le -D_{\mathcal{R}}(y_{i,t}, \hat{x}_{i,t+1}) - D_{\mathcal{R}}(\hat{x}_{i,t+1}, y_{i,t}).$$
In view of the strong convexity of $\mathcal{R}$, the Bregman divergence satisfies $D_{\mathcal{R}}(x, y) \ge \frac{1}{2}\|x - y\|^2$ for any $x, y \in \mathcal{X}$ (see (7)). Therefore, we can simplify the inequality above as follows:
$$\eta_t\langle y_{i,t} - \hat{x}_{i,t+1}, \nabla_{i,t}\rangle \ge D_{\mathcal{R}}(y_{i,t}, \hat{x}_{i,t+1}) + D_{\mathcal{R}}(\hat{x}_{i,t+1}, y_{i,t}) \ge \|y_{i,t} - \hat{x}_{i,t+1}\|^2. \tag{11}$$
On the other hand, for any primal-dual norm pair it holds that
$$\langle y_{i,t} - \hat{x}_{i,t+1}, \nabla_{i,t}\rangle \le \|y_{i,t} - \hat{x}_{i,t+1}\|\,\|\nabla_{i,t}\|_* \le L\|y_{i,t} - \hat{x}_{i,t+1}\|,$$
using Assumption 1 in the last step. Combining the above with (11), we obtain
$$\|y_{i,t} - \hat{x}_{i,t+1}\| \le L\eta_t. \tag{12}$$
Letting $e_{i,t} := \hat{x}_{i,t+1} - y_{i,t}$ and using (8b), we can now write
$$\hat{x}_{i,t+1} = \sum_{j=1}^{n}[W]_{ij}\,x_{j,t} + e_{i,t},$$
which implies
$$x_{i,t+1} = A\hat{x}_{i,t+1} = \sum_{j=1}^{n}[W]_{ij}\,Ax_{j,t} + Ae_{i,t}. \tag{13}$$
Using Assumption 2 (double stochasticity of $W$), the above immediately yields
$$\bar{x}_{t+1} := \frac{1}{n}\sum_{i=1}^{n}x_{i,t+1} = \frac{1}{n}\sum_{i=1}^{n}\sum_{j=1}^{n}[W]_{ij}\,Ax_{j,t} + \frac{1}{n}\sum_{i=1}^{n}Ae_{i,t} = \frac{1}{n}\sum_{j=1}^{n}\Big(\sum_{i=1}^{n}[W]_{ij}\Big)Ax_{j,t} + \frac{1}{n}\sum_{i=1}^{n}Ae_{i,t} = A\bar{x}_t + A\bar{e}_t,$$
where $\bar{e}_t := \frac{1}{n}\sum_{i=1}^{n}e_{i,t}$, and $\bar{x}_t = \frac{1}{n}\sum_{i=1}^{n}x_{i,t}$ as defined in the statement of the lemma. As a result,
$$\bar{x}_{t+1} = \sum_{\tau=0}^{t}A^{t+1-\tau}\,\bar{e}_\tau. \tag{14}$$
On the other hand, stacking the local vectors $x_{i,t}$ and $e_{i,t}$ in (13) in the following form
$$x_t := [x_{1,t}^\top, x_{2,t}^\top, \ldots, x_{n,t}^\top]^\top, \qquad e_t := [e_{1,t}^\top, e_{2,t}^\top, \ldots, e_{n,t}^\top]^\top,$$
and using $\otimes$ to denote the Kronecker product, we can write (13) in matrix form as
$$x_{t+1} = (W\otimes A)\,x_t + (I_n\otimes A)\,e_t = \sum_{\tau=0}^{t}(W\otimes A)^{t-\tau}(I_n\otimes A)\,e_\tau = \sum_{\tau=0}^{t}(W^{t-\tau}\otimes A^{t-\tau})(I_n\otimes A)\,e_\tau.$$
Therefore, using the above, we have
$$x_{i,t+1} = \sum_{\tau=0}^{t}\sum_{j=1}^{n}[W^{t-\tau}]_{ij}\,A^{t+1-\tau}e_{j,\tau}.$$
Combining the above with (14), we derive
$$x_{i,t+1} - \bar{x}_{t+1} = \sum_{\tau=0}^{t}\sum_{j=1}^{n}\left([W^{t-\tau}]_{ij} - \frac{1}{n}\right)A^{t+1-\tau}e_{j,\tau},$$
which entails
$$\|x_{i,t+1} - \bar{x}_{t+1}\| \le \sum_{\tau=0}^{t}\sum_{j=1}^{n}\left|[W^{t-\tau}]_{ij} - \frac{1}{n}\right|L\eta_\tau, \tag{15}$$
where we used $\|e_{j,\tau}\| \le L\eta_\tau$ obtained in (12) as well as the assumption $\|A\| \le 1$ (Assumption 5). By standard properties of doubly stochastic matrices (see, e.g., [40]), the matrix $W$ satisfies
$$\sum_{j=1}^{n}\left|[W^t]_{ij} - \frac{1}{n}\right| \le \sqrt{n}\,\sigma_2^t(W).$$
Substituting the above into (15) finishes the proof.

B. Proof of Lemma 2

We start by adding, subtracting, and regrouping several terms as follows:
$$\begin{aligned}
\frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, y_{i,t}) - \frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1}) ={}& \frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, y_{i,t}) - \frac{1}{\eta_{t+1}}D_{\mathcal{R}}(x^\star_{t+1}, y_{i,t+1}) \\
&+ \frac{1}{\eta_{t+1}}D_{\mathcal{R}}(x^\star_{t+1}, y_{i,t+1}) - \frac{1}{\eta_{t+1}}D_{\mathcal{R}}(Ax^\star_t, y_{i,t+1}) \\
&+ \frac{1}{\eta_{t+1}}D_{\mathcal{R}}(Ax^\star_t, y_{i,t+1}) - \frac{1}{\eta_{t+1}}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1}) \\
&+ \frac{1}{\eta_{t+1}}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1}) - \frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1}).
\end{aligned}\tag{16}$$
We now need to bound each of the four terms above. For the second term, we note that
$$D_{\mathcal{R}}(x^\star_{t+1}, y_{i,t+1}) - D_{\mathcal{R}}(Ax^\star_t, y_{i,t+1}) \le K\left\|x^\star_{t+1} - Ax^\star_t\right\|, \tag{17}$$
by the Lipschitz condition on the Bregman divergence (Assumption 4).
Also, by the separate convexity of the Bregman divergence (Assumption 3) as well as the double stochasticity of $W$ (Assumption 2), we have
$$\begin{aligned}
\sum_{i=1}^{n}D_{\mathcal{R}}(Ax^\star_t, y_{i,t+1}) - \sum_{i=1}^{n}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1}) &= \sum_{i=1}^{n}D_{\mathcal{R}}\Big(Ax^\star_t, \sum_{j=1}^{n}[W]_{ij}\,x_{j,t+1}\Big) - \sum_{i=1}^{n}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1}) \\
&\le \sum_{i=1}^{n}\sum_{j=1}^{n}[W]_{ij}\,D_{\mathcal{R}}(Ax^\star_t, x_{j,t+1}) - \sum_{i=1}^{n}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1}) \\
&= \sum_{j=1}^{n}D_{\mathcal{R}}(Ax^\star_t, x_{j,t+1})\sum_{i=1}^{n}[W]_{ij} - \sum_{i=1}^{n}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1}) \\
&= \sum_{j=1}^{n}D_{\mathcal{R}}(Ax^\star_t, x_{j,t+1}) - \sum_{i=1}^{n}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1}) \\
&= \sum_{i=1}^{n}D_{\mathcal{R}}(Ax^\star_t, A\hat{x}_{i,t+1}) - \sum_{i=1}^{n}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1}) \le 0,
\end{aligned}\tag{18}$$
where the last inequality follows from the fact that $A$ is non-expansive (Assumption 5). When summing (16) over $t \in [T]$, the first term telescopes, while the second and third terms are handled with the bounds in (17) and (18), respectively. Recalling from the statement of the lemma that $R^2 = \sup_{x,y\in\mathcal{X}} D_{\mathcal{R}}(x, y)$, we obtain
$$\begin{aligned}
\frac{1}{n}\sum_{i=1}^{n}\sum_{t=1}^{T}\left(\frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, y_{i,t}) - \frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1})\right) &\le \frac{R^2}{\eta_1} + \sum_{t=1}^{T}\frac{K}{\eta_{t+1}}\left\|x^\star_{t+1} - Ax^\star_t\right\| + R^2\sum_{t=1}^{T}\left(\frac{1}{\eta_{t+1}} - \frac{1}{\eta_t}\right) \\
&\le \frac{2R^2}{\eta_{T+1}} + \sum_{t=1}^{T}\frac{K}{\eta_{t+1}}\left\|x^\star_{t+1} - Ax^\star_t\right\|,
\end{aligned}$$
where in the last line we used the fact that the step-size is positive and non-increasing.

C. An Auxiliary Lemma

In the proof of Theorem 3, we make use of another technical lemma provided below.

Lemma 7. Let $\mathcal{X}$ be a convex set in a Banach space $\mathcal{B}$, let $\mathcal{R}: \mathcal{B} \to \mathbb{R}$ denote a 1-strongly convex function on $\mathcal{X}$ with respect to a norm $\|\cdot\|$, and let $D_{\mathcal{R}}(\cdot,\cdot)$ represent the Bregman divergence with respect to $\mathcal{R}$. Furthermore, assume that the local functions are Lipschitz continuous (Assumption 1), the matrix $W$ is doubly stochastic (Assumption 2), the Bregman divergence satisfies the separate convexity and the Lipschitz condition (Assumptions 3 and 4), and the mapping $A$ is non-expansive (Assumption 5).
Then, for the local estimates $\{x_{i,t}\}_{t=1}^{T}$ generated by the updates (8a)-(8b), it holds that
$$\frac{1}{n}\sum_{i=1}^{n}\sum_{t=1}^{T}\big(f_{i,t}(x_{i,t}) - f_{i,t}(x^\star_t)\big) \le \frac{2R^2}{\eta_{T+1}} + \sum_{t=1}^{T}\frac{K}{\eta_{t+1}}\left\|x^\star_{t+1} - Ax^\star_t\right\| + \frac{L^2}{2}\sum_{t=1}^{T}\eta_t + 2L^2\sqrt{n}\sum_{t=1}^{T}\sum_{\tau=0}^{t-1}\eta_\tau\,\sigma_2^{t-\tau-1}(W),$$
where $R^2 := \sup_{x,y\in\mathcal{X}} D_{\mathcal{R}}(x, y)$.

Proof: In view of the convexity of $f_{i,t}(\cdot)$, we have
$$f_{i,t}(x_{i,t}) - f_{i,t}(x^\star_t) \le \langle\nabla_{i,t}, x_{i,t} - x^\star_t\rangle = \langle\nabla_{i,t}, \hat{x}_{i,t+1} - x^\star_t\rangle + \langle\nabla_{i,t}, x_{i,t} - y_{i,t}\rangle + \langle\nabla_{i,t}, y_{i,t} - \hat{x}_{i,t+1}\rangle \tag{19}$$
for any $i \in [n]$. We now need to bound each of the three terms on the right-hand side of (19). Starting with the last term and using the boundedness of gradients (Assumption 1), we have
$$\langle\nabla_{i,t}, y_{i,t} - \hat{x}_{i,t+1}\rangle \le \|y_{i,t} - \hat{x}_{i,t+1}\|\,\|\nabla_{i,t}\|_* \le L\|y_{i,t} - \hat{x}_{i,t+1}\| \le \frac{1}{2\eta_t}\|y_{i,t} - \hat{x}_{i,t+1}\|^2 + \frac{\eta_t}{2}L^2, \tag{20}$$
where the last step is due to the AM-GM inequality. Next, we recall update (8b) to bound the second term in (19) using Assumptions 1 and 2 as
$$\begin{aligned}
\langle\nabla_{i,t}, x_{i,t} - y_{i,t}\rangle &= \langle\nabla_{i,t}, x_{i,t} - \bar{x}_t + \bar{x}_t - y_{i,t}\rangle = \langle\nabla_{i,t}, x_{i,t} - \bar{x}_t\rangle + \sum_{j=1}^{n}[W]_{ij}\langle\nabla_{i,t}, \bar{x}_t - x_{j,t}\rangle \\
&\le L\|x_{i,t} - \bar{x}_t\| + L\sum_{j=1}^{n}[W]_{ij}\|x_{j,t} - \bar{x}_t\| \le 2L^2\sqrt{n}\sum_{\tau=0}^{t-1}\eta_\tau\,\sigma_2^{t-\tau-1}(W),
\end{aligned}\tag{21}$$
where in the last step we appealed to Lemma 1. Finally, we apply Lemma 6 to update (8a) with $d = x^\star_t$ to bound the first term in (19):
$$\begin{aligned}
\langle\nabla_{i,t}, \hat{x}_{i,t+1} - x^\star_t\rangle &\le \frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, y_{i,t}) - \frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1}) - \frac{1}{\eta_t}D_{\mathcal{R}}(\hat{x}_{i,t+1}, y_{i,t}) \\
&\le \frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, y_{i,t}) - \frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1}) - \frac{1}{2\eta_t}\|\hat{x}_{i,t+1} - y_{i,t}\|^2,
\end{aligned}\tag{22}$$
since the Bregman divergence satisfies $D_{\mathcal{R}}(x, y) \ge \frac{1}{2}\|x - y\|^2$ for any $x, y \in \mathcal{X}$. Substituting (20), (21), and (22) into the bound (19), we derive
$$f_{i,t}(x_{i,t}) - f_{i,t}(x^\star_t) \le \frac{\eta_t}{2}L^2 + 2L^2\sqrt{n}\sum_{\tau=0}^{t-1}\eta_\tau\,\sigma_2^{t-\tau-1}(W) + \frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, y_{i,t}) - \frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1}). \tag{23}$$
Summing over $t \in [T]$ and $i \in [n]$, dividing by $n$, and applying Lemma 2 completes the proof.

D. Proof of Theorem 3

To bound the regret defined in (4), we start with
$$\begin{aligned}
f_t(x_{i,t}) - f_t(x^\star_t) &= f_t(x_{i,t}) - f_t(\bar{x}_t) + f_t(\bar{x}_t) - f_t(x^\star_t) \\
&\le L\|x_{i,t} - \bar{x}_t\| + f_t(\bar{x}_t) - f_t(x^\star_t) \\
&= \frac{1}{n}\sum_{j=1}^{n}f_{j,t}(\bar{x}_t) - \frac{1}{n}\sum_{j=1}^{n}f_{j,t}(x^\star_t) + L\|x_{i,t} - \bar{x}_t\|,
\end{aligned}$$
where we used the Lipschitz continuity of $f_t(\cdot)$ (Assumption 1) in the second line. Using the Lipschitz continuity of $f_{j,t}(\cdot)$ for $j \in [n]$, we simplify the above as follows:
$$f_t(x_{i,t}) - f_t(x^\star_t) \le \frac{1}{n}\sum_{j=1}^{n}f_{j,t}(x_{j,t}) - \frac{1}{n}\sum_{j=1}^{n}f_{j,t}(x^\star_t) + L\|x_{i,t} - \bar{x}_t\| + \frac{L}{n}\sum_{j=1}^{n}\|x_{j,t} - \bar{x}_t\|. \tag{24}$$
Summing over $t \in [T]$ and $i \in [n]$, and applying Lemmata 1 and 7, completes the proof.

E. Proof of Theorem 5

We need to rework the proof of Theorem 3 with stochastic gradients by tracking the changes. Following the lines of the proof of Lemma 1, inequality (12) changes to $\|y_{i,t} - \hat{x}_{i,t+1}\| \le \eta_t\|\tilde{\nabla}_{i,t}\|_*$, yielding
$$\|x_{i,t+1} - \bar{x}_{t+1}\| \le \sqrt{n}\sum_{\tau=0}^{t}\eta_\tau\,\|\tilde{\nabla}_{i,\tau}\|_*\,\sigma_2^{t-\tau}(W). \tag{25}$$
On the other hand, at the beginning of the proof of Lemma 7, we should use the stochastic gradient as
$$\begin{aligned}
f_{i,t}(x_{i,t}) - f_{i,t}(x^\star_t) &\le \langle\nabla_{i,t}, x_{i,t} - x^\star_t\rangle = \langle\tilde{\nabla}_{i,t}, x_{i,t} - x^\star_t\rangle + \langle\nabla_{i,t} - \tilde{\nabla}_{i,t}, x_{i,t} - x^\star_t\rangle \\
&= \langle\tilde{\nabla}_{i,t}, \hat{x}_{i,t+1} - x^\star_t\rangle + \langle\tilde{\nabla}_{i,t}, x_{i,t} - y_{i,t}\rangle + \langle\tilde{\nabla}_{i,t}, y_{i,t} - \hat{x}_{i,t+1}\rangle + \langle\nabla_{i,t} - \tilde{\nabla}_{i,t}, x_{i,t} - x^\star_t\rangle.
\end{aligned}$$
Moreover, as in Lemma 1, any bound involving $L$, which was originally an upper bound on the exact gradient, must be replaced by the norm of the stochastic gradient, which changes inequality (23) to
$$f_{i,t}(x_{i,t}) - f_{i,t}(x^\star_t) \le \frac{\eta_t}{2}\|\tilde{\nabla}_{i,t}\|_*^2 + 2\sqrt{n}\sum_{\tau=0}^{t-1}\eta_\tau\,\|\tilde{\nabla}_{i,\tau}\|_*^2\,\sigma_2^{t-\tau-1}(W) + \frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, y_{i,t}) - \frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1}) + \langle\nabla_{i,t} - \tilde{\nabla}_{i,t}, x_{i,t} - x^\star_t\rangle.$$
Then, taking expectations of the above, since
$$\mathbb{E}\big[\langle\nabla_{i,t} - \tilde{\nabla}_{i,t}, x_{i,t} - x^\star_t\rangle\big] = \mathbb{E}\Big[\mathbb{E}\big[\langle\nabla_{i,t} - \tilde{\nabla}_{i,t}, x_{i,t} - x^\star_t\rangle \,\big|\, \mathcal{F}_{t-1}\big]\Big] = \mathbb{E}\Big[\big\langle\mathbb{E}\big[\nabla_{i,t} - \tilde{\nabla}_{i,t} \,\big|\, \mathcal{F}_{t-1}\big], x_{i,t} - x^\star_t\big\rangle\Big] = 0$$
by condition (9), we get
$$\mathbb{E}[f_{i,t}(x_{i,t})] - f_{i,t}(x^\star_t) \le \frac{\eta_t}{2}\mathbb{E}\big[\|\tilde{\nabla}_{i,t}\|_*^2\big] + 2\sqrt{n}\sum_{\tau=0}^{t-1}\eta_\tau\,\mathbb{E}\big[\|\tilde{\nabla}_{i,\tau}\|_*^2\big]\,\sigma_2^{t-\tau-1}(W) + \mathbb{E}\left[\frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, y_{i,t}) - \frac{1}{\eta_t}D_{\mathcal{R}}(x^\star_t, \hat{x}_{i,t+1})\right].$$
Summing over $i \in [n]$ and $t \in [T]$, we apply the bounded second moment condition (9) and Lemma 2 to get the same bound as in Lemma 7, with $L$ replaced by $G$. The proof is then finished once we return to (24).

REFERENCES

[1] D. Li, K. D. Wong, Y. H. Hu, and A. M. Sayeed, "Detection, classification, and tracking of targets," IEEE Signal Processing Magazine, vol. 19, no. 2, pp. 17–29, 2002.
[2] M. Rabbat and R. Nowak, "Distributed optimization in sensor networks," in Proceedings of the 3rd International Symposium on Information Processing in Sensor Networks. ACM, 2004, pp. 20–27.
[3] L. Xiao, S. Boyd, and S.-J. Kim, "Distributed average consensus with least-mean-square deviation," Journal of Parallel and Distributed Computing, vol. 67, no. 1, pp. 33–46, 2007.
[4] V. Lesser, C. L. Ortiz Jr, and M. Tambe, Distributed Sensor Networks: A Multiagent Perspective. Springer Science & Business Media, 2012, vol. 9.
[5] S. Shahrampour, A. Rakhlin, and A. Jadbabaie, "Distributed detection: Finite-time analysis and impact of network topology," IEEE Transactions on Automatic Control, vol. 61, 2016.
[6] A. Nedić, A. Olshevsky, and C. A. Uribe, "Fast convergence rates for distributed non-Bayesian learning," arXiv preprint, 2015.
[7] L. Qipeng, Z. Jiuhua, and W. Xiaofan, "Distributed detection via Bayesian updates and consensus," in 34th Chinese Control Conference (CCC). IEEE, 2015, pp. 6992–6997.
[8] J. N. Tsitsiklis, "Problems in decentralized decision making and computation," Ph.D. dissertation, Massachusetts Institute of Technology, 1984.
[9] J. N. Tsitsiklis, D. P. Bertsekas, and M. Athans, "Distributed asynchronous deterministic and stochastic gradient optimization algorithms," in American Control Conference (ACC), 1984, pp. 484–489.
[10] D. P. Bertsekas and J. N. Tsitsiklis, Parallel and Distributed Computation: Numerical Methods. Prentice Hall, Englewood Cliffs, NJ, 1989, vol. 23.
[11] A. Nedic and A. Ozdaglar, "Distributed subgradient methods for multi-agent optimization," IEEE Transactions on Automatic Control, vol. 54, no. 1, pp. 48–61, 2009.
[12] B. Johansson, M. Rabi, and M. Johansson, "A randomized incremental subgradient method for distributed optimization in networked systems," SIAM Journal on Optimization, vol. 20, no. 3, pp. 1157–1170, 2009.
[13] S. S. Ram, A. Nedić, and V. V. Veeravalli, "Distributed stochastic subgradient projection algorithms for convex optimization," Journal of Optimization Theory and Applications, vol. 147, no. 3, pp. 516–545, 2010.
[14] J. C. Duchi, A. Agarwal, and M. J. Wainwright, "Dual averaging for distributed optimization: Convergence analysis and network scaling," IEEE Transactions on Automatic Control, vol. 57, no. 3, pp. 592–606, 2012.
[15] M. Zhu and S. Martínez, "On distributed convex optimization under inequality and equality constraints," IEEE Transactions on Automatic Control, vol. 57, no. 1, pp. 151–164, 2012.
[16] D. Jakovetić, J. Xavier, and J. M. Moura, "Fast distributed gradient methods," IEEE Transactions on Automatic Control, vol. 59, no. 5, pp. 1131–1146, 2014.
[17] W. Shi, Q. Ling, G. Wu, and W. Yin, "EXTRA: An exact first-order algorithm for decentralized consensus optimization," SIAM Journal on Optimization, vol. 25, no. 2, pp. 944–966, 2015.
[18] S. Shalev-Shwartz, "Online learning and online convex optimization," Foundations and Trends in Machine Learning, vol. 4, no. 2, pp. 107–194, 2011.
[19] M. Zinkevich, "Online convex programming and generalized infinitesimal gradient ascent," International Conference on Machine Learning (ICML), 2003.
[20] E. C. Hall and R. M. Willett, "Online convex optimization in dynamic environments," IEEE Journal of Selected Topics in Signal Processing, vol. 9, no. 4, pp. 647–662, 2015.
[21] O. Besbes, Y. Gur, and A. Zeevi, "Non-stationary stochastic optimization," Operations Research, vol. 63, no. 5, pp. 1227–1244, 2015.
[22] A. Jadbabaie, A. Rakhlin, S. Shahrampour, and K. Sridharan, "Online optimization: Competing with dynamic comparators," in Proceedings of the Eighteenth International Conference on Artificial Intelligence and Statistics, 2015, pp. 398–406.
[23] R. Olfati-Saber, "Distributed Kalman filtering for sensor networks," in IEEE Conference on Decision and Control, 2007, pp. 5492–5498.
[24] D. Gu, "Distributed particle filter for target tracking," in IEEE International Conference on Robotics and Automation, 2007, pp. 3856–3861.
[25] D. Yudin and A. Nemirovskii, "Problem complexity and method efficiency in optimization," 1983.
[26] A. Beck and M. Teboulle, "Mirror descent and nonlinear projected subgradient methods for convex optimization," Operations Research Letters, vol. 31, no. 3, pp. 167–175, 2003.
[27] H. H. Bauschke and J. M. Borwein, "Joint and separate convexity of the Bregman distance," Studies in Computational Mathematics, vol. 8, pp. 23–36, 2001.
[28] J. Li, G. Chen, Z. Dong, and Z. Wu, "Distributed mirror descent method for multi-agent optimization with delay," Neurocomputing, vol. 177, pp. 643–650, 2016.
[29] J. Li, G. Chen, Z. Dong, Z. Wu, and M. Yao, "Distributed mirror descent method for saddle point problems over directed graphs," Complexity, 2016.
[30] M. Rabbat, "Multi-agent mirror descent for decentralized stochastic optimization," in Computational Advances in Multi-Sensor Adaptive Processing (CAMSAP), 2015 IEEE 6th International Workshop on. IEEE, 2015, pp. 517–520.
[31] M. Raginsky and J. Bouvrie, "Continuous-time stochastic mirror descent on a network: Variance reduction, consensus, convergence," in IEEE Conference on Decision and Control (CDC), 2012, pp. 6793–6800.
[32] S. Hosseini, A. Chapman, and M. Mesbahi, "Online distributed optimization via dual averaging," in IEEE Conference on Decision and Control (CDC), 2013, pp. 1484–1489.
[33] D. Mateos-Núñez and J. Cortés, "Distributed online convex optimization over jointly connected digraphs," IEEE Transactions on Network Science and Engineering, vol. 1, no. 1, pp. 23–37, 2014.
[34] A. Nedić, S. Lee, and M. Raginsky, "Decentralized online optimization with global objectives and local communication," in IEEE American Control Conference (ACC), 2015, pp. 4497–4503.
[35] M. Akbari, B. Gharesifard, and T. Linder, "Distributed online convex optimization on time-varying directed graphs," IEEE Transactions on Control of Network Systems, 2015.
[36] A. Mokhtari, S. Shahrampour, A. Jadbabaie, and A. Ribeiro, "Online optimization in dynamic environments: Improved regret rates for strongly convex problems," arXiv preprint arXiv:1603.04954, 2016.
[37] C.-J. Lee, C.-K. Chiang, and M.-E. Wu, "Resisting dynamic strategies in gradually evolving worlds," in Third International Conference on Robot, Vision and Signal Processing (RVSP). IEEE, 2015, pp. 191–194.
[38] M. Fazlyab, S. Paternain, V. M. Preciado, and A. Ribeiro, "Interior point method for dynamic constrained optimization in continuous time," in IEEE American Control Conference (ACC), July 2016, pp. 5612–5618.
[39] T. Yang, L. Zhang, R. Jin, and J. Yi, "Tracking slowly moving clairvoyant: Optimal dynamic regret of online learning with true and noisy gradient," International Conference on Machine Learning (ICML), 2016.
[40] R. A. Horn and C. R. Johnson, Matrix Analysis. Cambridge University Press, 2012.
[41] Y. Bar-Shalom, Tracking and Data Association. Academic Press Professional, Inc., 1987.
[42] F. S. Cattivelli and A. H. Sayed, "Diffusion strategies for distributed Kalman filtering and smoothing," IEEE Transactions on Automatic Control, vol. 55, no. 9, pp. 2069–2084, 2010.
[43] U. Khan, S. Kar, A. Jadbabaie, J. M. Moura et al., "On connectivity, observability, and stability in distributed estimation," in IEEE Conference on Decision and Control (CDC), 2010, pp. 6639–6644.
[44] S. Das and J. M. Moura, "Distributed state estimation in multi-agent networks," in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2013, pp. 4246–4250.
[45] D. Han, Y. Mo, J. Wu, S. Weerakkody, B. Sinopoli, and L. Shi, "Stochastic event-triggered sensor schedule for remote state estimation," IEEE Transactions on Automatic Control, vol. 60, no. 10, pp. 2661–2675, 2015.
[46] O. Hlinka, O. Sluciak, F. Hlawatsch, P. M. Djuric, and M. Rupp, "Likelihood consensus and its application to distributed particle filtering," IEEE Transactions on Signal Processing, vol. 60, no. 8, pp. 4334–4349, 2012.
[47] J. Li and A. Nehorai, "Distributed particle filtering via optimal fusion of Gaussian mixtures," in Information Fusion (Fusion), 2015 18th International Conference on. IEEE, 2015, pp. 1182–1189.
[48] D. Acemoglu, A. Nedić, and A. Ozdaglar, "Convergence of rule-of-thumb learning rules in social networks," in IEEE Conference on Decision and Control (CDC), 2008, pp. 1714–1720.
[49] S. Shahrampour, S. Rakhlin, and A. Jadbabaie, "Online learning of dynamic parameters in social networks," in Advances in Neural Information Processing Systems, 2013.
[50] S. Kar, J. M. Moura, and K. Ramanan, "Distributed parameter estimation in sensor networks: Nonlinear observation models and imperfect communication," IEEE Transactions on Information Theory, vol. 58, no. 6, pp. 3575–3605, 2012.
[51] S. Shahrampour, A. Rakhlin, and A. Jadbabaie, "Distributed estimation of dynamic parameters: Regret analysis," in American Control Conference (ACC), July 2016, pp. 1066–1071.
