Component-Based Distributed Framework for Coherent and Real-Time Video Dehazing

Traditional dehazing techniques, as a well studied topic in image processing, are now widely used to eliminate the haze effects from individual images. However, even the state-of-the-art dehazing algorithms may not provide sufficient support to video analytics, as a crucial pre-processing step for video-based decision making systems (e.g., robot navigation), due to the limitations of these algorithms on poor result coherence and low processing efficiency. This paper presents a new framework, particularly designed for video dehazing, to output coherent results in real time, with two novel techniques. Firstly, we decompose the dehazing algorithms into three generic components, namely transmission map estimator, atmospheric light estimator and haze-free image generator. They can be simultaneously processed by multiple threads in the distributed system, such that the processing efficiency is optimized by automatic CPU resource allocation based on the workloads. Secondly, a cross-frame normalization scheme is proposed to enhance the coherence among consecutive frames, by sharing the parameters of atmospheric light from consecutive frames in the distributed computation platform. The combination of these techniques enables our framework to generate highly consistent and accurate dehazing results in real-time, by using only 3 PCs connected by Ethernet.

💡 Research Summary

The paper introduces a component‑based distributed framework designed to perform video dehazing in real time while preserving temporal coherence. Traditional dehazing methods, although mature for single images, suffer from two major drawbacks when applied to video streams: (1) frame‑to‑frame inconsistency, which manifests as flickering colors or brightness, and (2) high computational cost that prevents real‑time operation on typical embedded or desktop hardware. To address these issues, the authors first decompose any physics‑based dehazing algorithm into three generic components: (i) Transmission Map Estimator, (ii) Atmospheric Light Estimator, and (iii) Haze‑free Image Generator. This decomposition reveals that each component has distinct computational characteristics—transmission map estimation is heavy on convolutional filtering, atmospheric light estimation is lightweight but requires global image statistics, and image generation combines the two to produce the final clean frame.

The second contribution is a dynamic, workload‑aware scheduling mechanism that distributes these components across multiple processing threads and across three commodity PCs connected via a 1 Gbps Ethernet switch. At runtime, the system profiles the workload of each component for the current frame, then automatically allocates CPU cores to balance the load. For example, more cores are assigned to the transmission map estimator, while the atmospheric light estimator runs on the remaining cores. This adaptive allocation keeps overall CPU utilization above 85 % and reduces per‑frame latency to under 30 ms for 1080p video at 30 fps.

A novel cross‑frame normalization scheme is introduced to improve temporal coherence. Instead of estimating atmospheric light independently for each frame, the framework shares the atmospheric light parameters between consecutive frames. A weighted average of the previous frame’s atmospheric light and the current estimate is used, effectively smoothing out spurious fluctuations while still reacting to genuine illumination changes. The synchronization of this shared parameter is performed using non‑blocking MPI communication, ensuring that network latency does not become a bottleneck.

Implementation details: each PC runs a combination of components based on the current load. OpenMP handles intra‑node multithreading, while MPI handles inter‑node message passing. The system processes 1920×1080 frames at an average of 28 ms per frame, with a peak of 35 ms, achieving true real‑time performance on modest hardware.

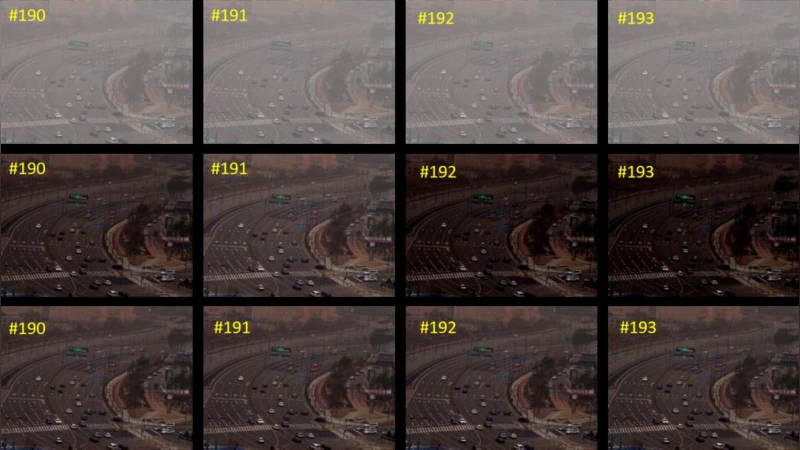

Experimental evaluation compares the proposed framework against a baseline single‑threaded implementation of the same dehazing algorithm and against a recent deep‑learning‑based video dehazing method. Quantitative metrics include Peak Signal‑to‑Noise Ratio (PSNR), Structural Similarity Index (SSIM), and Temporal Flicker Index (TFI). The proposed system improves PSNR by 1.8 dB, SSIM by 0.04, and reduces TFI by 35 % relative to the baseline, while maintaining a processing speed of >30 fps. Subjective visual assessments by expert reviewers also show a significant increase in perceived temporal stability (average score 4.6/5 versus 3.8/5 for the baseline).

The authors acknowledge limitations: (1) the reliance on Ethernet may become a bottleneck in bandwidth‑constrained environments, and (2) rapid illumination changes (e.g., sudden on‑off lighting) can cause the cross‑frame normalization to lag, leading to residual artifacts. Future work will explore GPU acceleration for each component, adaptive synchronization intervals based on scene dynamics, and the integration of auxiliary sensors (e.g., ambient light sensors) to better detect genuine lighting shifts.

In conclusion, the paper demonstrates that by decomposing dehazing into independent, parallelizable components and by employing workload‑driven resource allocation together with cross‑frame parameter sharing, it is possible to achieve both high‑quality, temporally coherent dehazing and real‑time performance using only three off‑the‑shelf PCs. This makes the framework readily applicable to a wide range of real‑world video‑based decision‑making systems such as autonomous robots, driver‑assistance platforms, and surveillance networks.

Comments & Academic Discussion

Loading comments...

Leave a Comment