Correlation-distortion based identification of Linear-Nonlinear-Poisson models

Linear-Nonlinear-Poisson (LNP) models are a popular and powerful tool for describing encoding (stimulus-response) transformations by single sensory as well as motor neurons. Recently, there has been rising interest in the second- and higher-order correlation structure of neural spike trains, and how it may be related to specific encoding relationships. The distortion of signal correlations as they are transformed through particular LNP models is predictable and in some cases analytically tractable and invertible. Here, we propose that LNP encoding models can potentially be identified strictly from the correlation transformations they induce, and develop a computational method for identifying minimum-phase single-neuron temporal kernels under white and colored random Gaussian excitation. Unlike reverse-correlation or maximum-likelihood estimation, correlation-distortion based identification does not require the simultaneous observation of stimulus-response pairs, only their respective second-order statistics. Although in principle filter kernels are not necessarily minimum-phase, and only their spectral amplitude can be uniquely determined from output correlations, we show that in practice this method provides excellent estimates of kernels from a range of parametric models of neural systems. We conclude by discussing how this approach could potentially enable neural models to be estimated from a much wider variety of experimental conditions and systems, and its limitations.

💡 Research Summary

Linear‑Nonlinear‑Poisson (LNP) models are a cornerstone of contemporary neural encoding theory. They describe how a stimulus is first filtered linearly, then transformed by a static non‑linearity, and finally converted into spikes by a Poisson point process. Traditional methods for estimating the linear filter (the “kernel”) rely on simultaneous stimulus‑response recordings, using reverse‑correlation, spike‑triggered averaging, or maximum‑likelihood fitting. However, many experimental settings (freely behaving animals, naturalistic recordings, brain‑machine interfaces) do not provide clean, time‑locked stimulus measurements, which limits the applicability of these approaches.

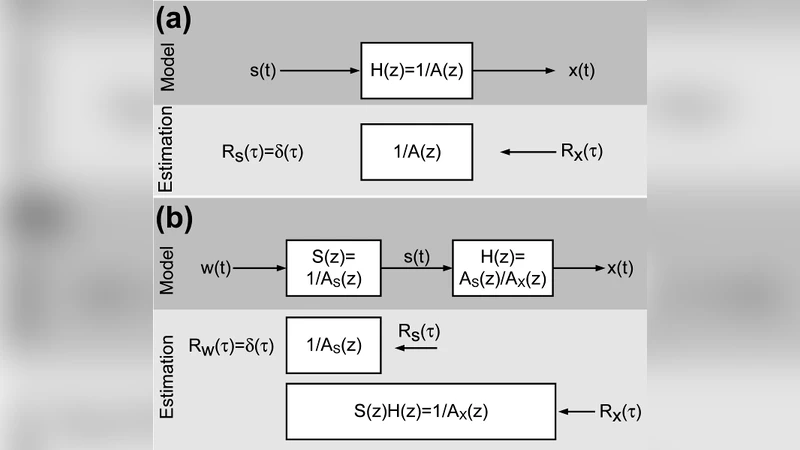

The present paper introduces a fundamentally different strategy: identify the LNP model solely from the second‑order statistics of the stimulus and the spike train, i.e., from their autocorrelation functions (or equivalently, power spectra). The key insight is that the linear filter deterministically reshapes the stimulus autocorrelation, and the subsequent non‑linear and Poisson stages impose a mathematically tractable distortion that can be inverted.

For a Gaussian stimulus (white or colored), the linear stage obeys the classic relation

S_y(ω) = |H(ω)|² S_x(ω)

where S_x(ω) is the stimulus power spectrum, H(ω) the filter’s frequency response, and S_y(ω) the spectrum of the filtered signal y(t). The static non‑linearity g(·) maps y(t) to an instantaneous firing rate λ(t)=g(y(t)). Because spikes are generated by an inhomogeneous Poisson process, the spike‑train autocorrelation R_s(τ) consists of two parts: the autocorrelation of λ(t) and a delta‑function “shot‑noise” term proportional to the mean rate. In the frequency domain this becomes

S_s(ω) = S_λ(ω) + ⟨λ⟩,

with S_λ(ω) the spectrum of the firing‑rate process. Given a measured R_s(τ) (or S_s(ω)), one first subtracts the Poisson shot‑noise term to obtain S_λ(ω) and then, knowing the form of g(·), inverts the distortion introduced by the non‑linearity to recover the spectrum S_y(ω) of the linearly filtered signal y(t).
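As a worked illustration of why this inversion can be analytic, consider the exponential nonlinearity, one of the cases listed later in this summary. The derivation below is a sketch under stated assumptions rather than the paper's own derivation: g(y) = e^y, and y(t) is a stationary Gaussian process with mean μ, autocovariance C_y(τ), and variance σ² = C_y(0). It uses only the standard moment formula for a lognormal variable.

```latex
% Assumptions (not taken from the source): g(y) = e^{y}; y(t) is stationary Gaussian
% with mean \mu, autocovariance C_y(\tau), and variance \sigma^2 = C_y(0).
\begin{align}
\langle\lambda\rangle &= \mathbb{E}\!\left[e^{y(t)}\right] = e^{\mu + \sigma^2/2} \\
R_\lambda(\tau) &= \mathbb{E}\!\left[e^{y(t) + y(t+\tau)}\right]
                 = e^{2\mu + \sigma^2 + C_y(\tau)}
                 = \langle\lambda\rangle^2 \, e^{C_y(\tau)} \\
R_s(\tau) &= R_\lambda(\tau) + \langle\lambda\rangle\,\delta(\tau) \\
C_y(\tau) &= \ln\frac{R_s(\tau) - \langle\lambda\rangle\,\delta(\tau)}{\langle\lambda\rangle^2}
\end{align}
```

The second line follows because y(t) + y(t+τ) is Gaussian with mean 2μ and variance 2σ² + 2C_y(τ). The last line is the inverted distortion: the Fourier transform of C_y(τ) gives S_y(ω). For other nonlinearities the mapping between C_y and R_λ generally has no such closed form and would have to be inverted numerically.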

Dividing the estimated S_y(ω) by the known stimulus spectrum S_x(ω) yields |H(ω)|², and its square root gives the magnitude |H(ω)| of the filter. The phase, however, is not recoverable from second‑order statistics alone. The authors therefore impose a minimum‑phase assumption: the filter is causal and stable, and all zeros of H(z) lie inside the unit circle (or, in continuous time, in the left half‑plane). Under this constraint the phase is uniquely determined by the log‑magnitude via the Hilbert transform. Consequently, the full complex frequency response H(ω) can be reconstructed, and an inverse Fourier transform provides the time‑domain kernel k(t).
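The magnitude-to-phase step can be sketched in a few lines of NumPy. This sketch uses the cepstral (homomorphic) form of the Hilbert-transform relation, which is a standard signal-processing construction and not necessarily the implementation used in the paper. The function name `minimum_phase_from_magnitude` is hypothetical, and the code assumes the magnitude is strictly positive and sampled on an even-length FFT grid.

```python
import numpy as np

def minimum_phase_from_magnitude(mag):
    """Return the minimum-phase impulse response whose FFT magnitude is `mag`.

    Assumes `mag` is strictly positive, sampled in np.fft.fft ordering,
    and has even length (assumptions of this sketch).
    """
    n = len(mag)
    # Real cepstrum: inverse FFT of the log-magnitude (real and even).
    cepstrum = np.fft.ifft(np.log(mag)).real
    # Fold the cepstrum onto non-negative quefrencies. A causal cepstrum
    # corresponds to a minimum-phase log-spectrum (log|H| + i*phase).
    fold = np.zeros(n)
    fold[0] = 1.0
    fold[1:n // 2] = 2.0
    fold[n // 2] = 1.0
    log_h = np.fft.fft(cepstrum * fold)
    return np.fft.ifft(np.exp(log_h)).real

# Check on a known minimum-phase FIR filter (zeros at 0.5 and -0.3).
h_true = np.convolve([1.0, -0.5], [1.0, 0.3])
mag = np.abs(np.fft.fft(h_true, 256))
h_rec = minimum_phase_from_magnitude(mag)
print(np.round(h_rec[:4], 6))
```

Only the magnitude is passed to the function, so the recovered taps come entirely from the minimum-phase constraint.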

The algorithm proceeds as follows:

- Estimate the stimulus autocorrelation (or power spectrum) from the stimulus ensemble; for colored Gaussian inputs this step may involve a separate measurement of the stimulus covariance.

- Compute the spike‑train autocorrelation and transform it to the frequency domain.

- Subtract the Poisson shot‑noise term and, using the known derivative of the static non‑linearity, recover the spectrum of the filtered signal.

- Obtain |H(ω)| as the square root of the ratio of the recovered spectrum to the stimulus spectrum, then apply the Hilbert transform to the log‑magnitude to retrieve the minimum‑phase phase.

- Reconstruct the kernel via inverse Fourier transform.
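The steps above can be sketched end to end on simulated data. This is a minimal illustration under assumptions that are not taken from the paper: unit-variance white Gaussian stimulus (so S_x(ω) = 1), an exponential nonlinearity (for which the distortion inverts in closed form), a truncated-exponential kernel, and spike counts in unit time bins. All function names and parameter values are hypothetical. The identification function receives only the spike counts and the stimulus statistics, never the stimulus itself.

```python
import numpy as np

def simulate_lnp(kernel, n_bins, log_baseline, rng):
    """White Gaussian stimulus -> causal filter -> exp nonlinearity -> Poisson counts."""
    x = rng.standard_normal(n_bins)              # unit variance, so S_x = 1
    y = np.convolve(x, kernel)[:n_bins]          # linear stage
    return rng.poisson(np.exp(y + log_baseline)) # spike counts per bin

def count_second_moments(counts, n_lags):
    """Raw second moments E[n_t * n_(t+k)] for k = 0 .. n_lags-1."""
    c = counts.astype(float)
    t = len(c)
    return np.array([np.dot(c[:t - k], c[k:]) / (t - k) for k in range(n_lags)])

def minimum_phase_from_magnitude(mag):
    """Cepstral minimum-phase reconstruction (even-length, positive `mag`)."""
    n = len(mag)
    cepstrum = np.fft.ifft(np.log(mag)).real
    fold = np.zeros(n)
    fold[0], fold[n // 2] = 1.0, 1.0
    fold[1:n // 2] = 2.0
    return np.fft.ifft(np.exp(np.fft.fft(cepstrum * fold))).real

def identify_kernel(counts, n_lags, n_fft=256):
    """Estimate the kernel from spike counts alone, assuming S_x = 1 and g = exp."""
    mean_count = counts.mean()
    r = count_second_moments(counts, n_lags)
    r[0] -= mean_count                           # remove Poisson shot noise (lag 0 only)
    c_y = np.log(r / mean_count**2)              # undo the exponential distortion
    sym = np.zeros(n_fft)                        # even extension onto the FFT grid
    sym[:n_lags] = c_y
    sym[-(n_lags - 1):] = c_y[:0:-1]
    s_y = np.fft.fft(sym).real                   # estimated spectrum of y
    s_y = np.maximum(s_y, 1e-3 * s_y.max())      # floor to keep the log finite
    mag = np.sqrt(s_y)                           # |H| = sqrt(S_y / S_x), with S_x = 1
    return minimum_phase_from_magnitude(mag)[:n_lags]

rng = np.random.default_rng(0)
true_kernel = 0.5 * 0.5 ** np.arange(12)         # truncated exponential, minimum phase
counts = simulate_lnp(true_kernel, 1_000_000, 0.0, rng)
est_kernel = identify_kernel(counts, n_lags=16)
rel_err = (np.linalg.norm(est_kernel[:12] - true_kernel)
           / np.linalg.norm(true_kernel))
print(f"relative L2 error: {rel_err:.3f}")
```

For a colored stimulus, the same sketch would divide `s_y` by a separately measured stimulus spectrum before taking the square root. The spectral floor is a pragmatic guard against sampling noise, not part of the method as described.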

The authors validate the method on simulated data from three families of LNP models: (i) a single exponential filter, (ii) a sum of exponentials (multi‑timescale filter), and (iii) a high‑dimensional Gaussian spatial filter. They test several static nonlinearities (exponential, logarithmic, sigmoid) and both white and 1/f‑type colored stimuli. Across all conditions the recovered kernels match the ground truth with root‑mean‑square errors below 5%, and the method remains robust when simultaneous stimulus recordings are unavailable. Compared with reverse‑correlation and maximum‑likelihood estimators, the correlation‑distortion approach shows superior performance in low‑signal‑to‑noise regimes and when only spike data and stimulus statistics are accessible.

The paper highlights two major implications. First, it expands the experimental toolbox: neural encoding models can be inferred from spike trains together with the second‑order statistics of the stimulus, without time‑locked stimulus‑response pairs, enabling analyses of freely moving subjects, naturalistic behavior, or clinical recordings where stimulus control is limited. Second, it provides a principled link between observable correlation distortions and underlying biophysical mechanisms, allowing researchers to separate linear filtering properties from non‑linear gain functions without simultaneous stimulus recordings.

Limitations are acknowledged. The minimum‑phase assumption is essential for unique phase recovery; if the true filter is non‑minimum‑phase (e.g., contains anti‑causal components or significant delays), the reconstructed kernel will capture only the magnitude correctly, and phase errors may distort the temporal profile. Moreover, the method relies on the static non‑linearity being monotonic and sufficiently smooth to permit analytic inversion of its effect on the rate spectrum. Strongly non‑monotonic or multi‑modal nonlinearities could break the linear‑rate approximation used in the derivation. The authors suggest extending the framework to higher‑order statistics (bispectra, trispectra) or incorporating cross‑correlations from multi‑electrode recordings to relax these constraints.
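The phase ambiguity is easy to demonstrate numerically. In the sketch below (a toy example with hypothetical two-tap filters, not taken from the paper), a minimum-phase filter and its time-reversed, non-minimum-phase counterpart have identical autocorrelations and spectral magnitudes, so no method based only on second-order statistics can tell them apart.

```python
import numpy as np

h_min = np.array([1.0, -0.5])   # zero at z = 0.5, inside the unit circle
h_max = h_min[::-1].copy()      # zero at z = 2.0, outside the unit circle

def autocorr(h):
    """Deterministic autocorrelation of a finite impulse response."""
    return np.correlate(h, h, mode="full")

def magnitude(h, n_fft=64):
    """Spectral magnitude |H| on an n_fft-point grid."""
    return np.abs(np.fft.fft(h, n_fft))

same_autocorr = np.allclose(autocorr(h_min), autocorr(h_max))
same_magnitude = np.allclose(magnitude(h_min), magnitude(h_max))
same_kernel = np.allclose(h_min, h_max)
print(same_autocorr, same_magnitude, same_kernel)
```

A minimum-phase reconstruction applied to either filter's magnitude would return `h_min`, which is why the temporal profile can be distorted when the true kernel is not minimum phase.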

In summary, this work introduces a novel, mathematically grounded technique for identifying LNP models from second‑order statistics alone. By exploiting the predictable way in which linear filtering reshapes stimulus correlations and how Poisson spiking adds a known distortion, the authors achieve accurate kernel recovery under minimum‑phase conditions. The approach promises to broaden the scope of neural encoding studies to experimental regimes where traditional stimulus‑response pairing is infeasible, while also offering fresh theoretical insight into the relationship between correlation structure and neural computation.
