A General Strategy for Physics-Based Model Validation Illustrated with Earthquake Phenomenology, Atmospheric Radiative Transfer, and Computational Fluid Dynamics

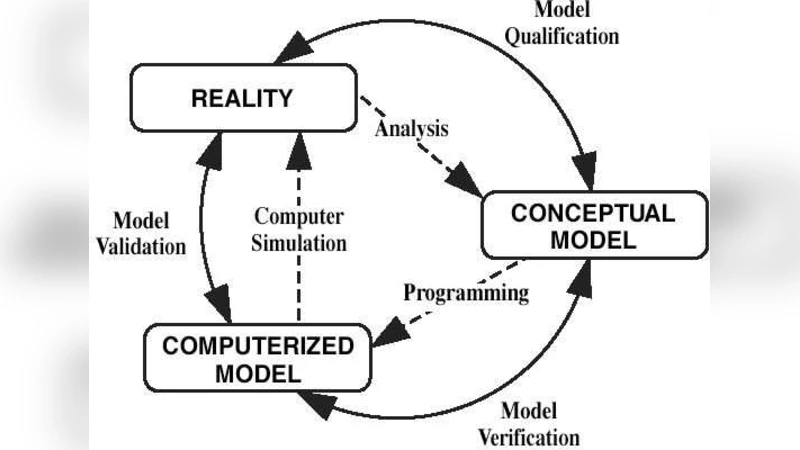

Validation is often defined as the process of determining the degree to which a model is an accurate representation of the real world from the perspective of its intended uses. Validation is crucial as industries and governments depend increasingly on predictions by computer models to justify their decisions. In this article, we survey the model validation literature and propose to formulate validation as an iterative construction process that mimics the process occurring implicitly in the minds of scientists. We thus offer a formal representation of the progressive build-up of trust in the model, and thereby replace incapacitating claims about the impossibility of validating a given model with an adaptive process of constructive approximation. This approach is better adapted to the fuzzy, coarse-grained nature of validation. Our procedure factors in the degree of redundancy versus novelty of the experiments used for validation as well as the degree to which the model predicts the observations. We illustrate the new methodology first with the maturation of Quantum Mechanics, arguably the best-established theory in physics, and then with several concrete examples drawn from some of our primary scientific interests: a cellular automaton model for earthquakes, an anomalous diffusion model for solar radiation transport in the cloudy atmosphere, and a computational fluid dynamics code for the Richtmyer-Meshkov instability. This article is an augmented version of Sornette et al. [2007], which appeared in Proceedings of the National Academy of Sciences in 2007 (doi: 10.1073/pnas.0611677104), with an electronic supplement at http://www.pnas.org/cgi/content/full/0611677104/DC1. Sornette et al. [2007] is also available in preprint form at physics/0511219.

💡 Research Summary

The paper tackles a fundamental problem in modern science and engineering: how to assess whether a computational model faithfully represents reality for its intended use. Traditional discussions of validation often end with the claim that “models cannot be fully validated,” which the authors argue is a dead‑end. Instead, they propose to view validation as an iterative construction of trust, mirroring the way scientists actually work—hypothesize, test, revise, and repeat.

The core of the methodology is a quantitative, cumulative trust score $C$. For each new experiment or observation $E_k$, the authors decompose its contribution into two orthogonal dimensions:

- Redundancy vs. Novelty – The degree to which the experiment repeats previously performed tests is measured by a redundancy factor (R_k\in
