Q-Learning with Basic Emotions

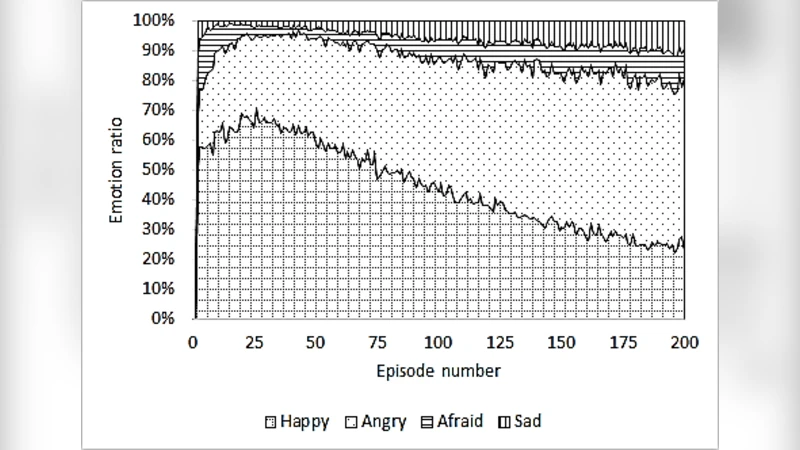

Q-learning is a simple and powerful tool for solving dynamic problems where the environment is unknown. It balances exploration and exploitation to find an optimal solution to the problem. In this paper, we propose using four basic emotions (joy, sadness, fear, and anger) to influence a Q-learning agent. Simulations show that the proposed affective agent requires fewer steps to find the optimal path. We found that once the affective agent finds the optimal path, the ratio of exploration to exploitation gradually decreases, indicating a lower total step count in the long run.

💡 Research Summary

The paper introduces a novel affective reinforcement‑learning framework that embeds four basic emotions—joy, sadness, fear, and anger—into the classic Q‑learning algorithm. The authors begin by highlighting a fundamental limitation of conventional exploration strategies such as ε‑greedy or Boltzmann: they rely on a fixed or pre‑scheduled exploration rate that does not adapt to the agent’s ongoing experience. Drawing inspiration from psychological theories that humans modulate risk‑taking and reward‑seeking behavior through emotions, the authors hypothesize that an artificial agent equipped with a simple emotional model could dynamically balance exploration and exploitation more efficiently.

To operationalize this idea, each emotion is mapped to a specific modulation of the learning parameters. Joy is triggered when the received reward exceeds the agent’s expectation, temporarily raising the exploration probability ε to encourage the discovery of new routes. Sadness occurs after a series of sub‑par rewards, lowering ε to consolidate the current policy. Fear is associated with high‑cost or hazardous states; it sharply reduces ε, steering the agent toward safer actions. Anger emerges when repeated negative feedback is observed; it re‑elevates ε to break out of potential local minima. These emotional states are represented by a discrete variable E_t that evolves over time according to empirically defined transition probabilities, effectively forming a simple Markov chain of affective dynamics.
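The emotion-to-ε mapping described above can be illustrated with a minimal Python sketch. The summary gives only the direction of each adjustment, so the base rate, the scale factors, the `neutral` state, and the names `EPSILON_SCALE`, `effective_epsilon`, and `choose_action` are all assumptions made for illustration, not values or identifiers from the paper. The trigger conditions and the Markov transition probabilities for E_t are not modeled here.

```python
import random

# Hypothetical numbers: the summary states only whether each emotion
# raises or lowers the exploration probability, not by how much.
BASE_EPSILON = 0.2
EPSILON_SCALE = {
    "neutral": 1.0,  # assumed resting state, not named in the summary
    "joy": 1.5,      # reward above expectation: explore more
    "sadness": 0.5,  # run of sub-par rewards: consolidate the policy
    "fear": 0.1,     # hazardous state: sharply reduce exploration
    "anger": 2.0,    # repeated negative feedback: explore again
}


def effective_epsilon(emotion, base=BASE_EPSILON):
    """Scale the base exploration rate by the current emotion, clamped to [0, 1]."""
    return min(1.0, max(0.0, base * EPSILON_SCALE[emotion]))


def choose_action(q_row, emotion, rng=random):
    """Epsilon-greedy choice over one state's Q-values, with emotion-scaled epsilon."""
    if rng.random() < effective_epsilon(emotion):
        return rng.randrange(len(q_row))  # explore: uniform random action
    return max(range(len(q_row)), key=lambda a: q_row[a])  # exploit: greedy action
```

Multiplicative scaling is just one way to express "raise" and "lower"; an additive offset or a per-emotion fixed ε would fit the description equally well.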

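Mathematically, the standard Q‑learning update is

$$Q(s,a) \leftarrow Q(s,a) + \alpha \left[ r + \gamma \max_{a'} Q(s',a') - Q(s,a) \right]$$

where α is the learning rate, γ is the discount factor, r is the received reward, and s' is the next state.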
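For reference, the standard tabular Q-learning update mentioned above moves Q(s,a) toward the received reward plus the discounted best value of the next state, with step size α. The sketch below shows only this textbook rule, not the emotion-modified variant from the paper. The function name `q_update`, the dictionary-based table, and the default values of `alpha` and `gamma` are illustrative assumptions.

```python
from collections import defaultdict


def q_update(q, state, action, reward, next_state, n_actions, alpha=0.1, gamma=0.9):
    """Apply one standard Q-learning update in place and return the new Q(s, a).

    q maps (state, action) pairs to values; missing entries default to 0.0.
    alpha and gamma defaults are placeholders, not values from the paper.
    """
    # Best value achievable from the next state under the current estimates.
    best_next = max(q[(next_state, a)] for a in range(n_actions))
    # Temporal-difference error: target minus current estimate.
    td_error = reward + gamma * best_next - q[(state, action)]
    q[(state, action)] += alpha * td_error
    return q[(state, action)]


q_table = defaultdict(float)  # empty table: every Q-value starts at 0.0
```

Calling `q_update(q_table, 0, 1, 1.0, 1, n_actions=2)` on the empty table moves Q(0, 1) from 0 a fraction α of the way toward the target reward of 1.0.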
