High Dimensional Human Guided Machine Learning

Authors: Eric Holloway, Robert Marks II

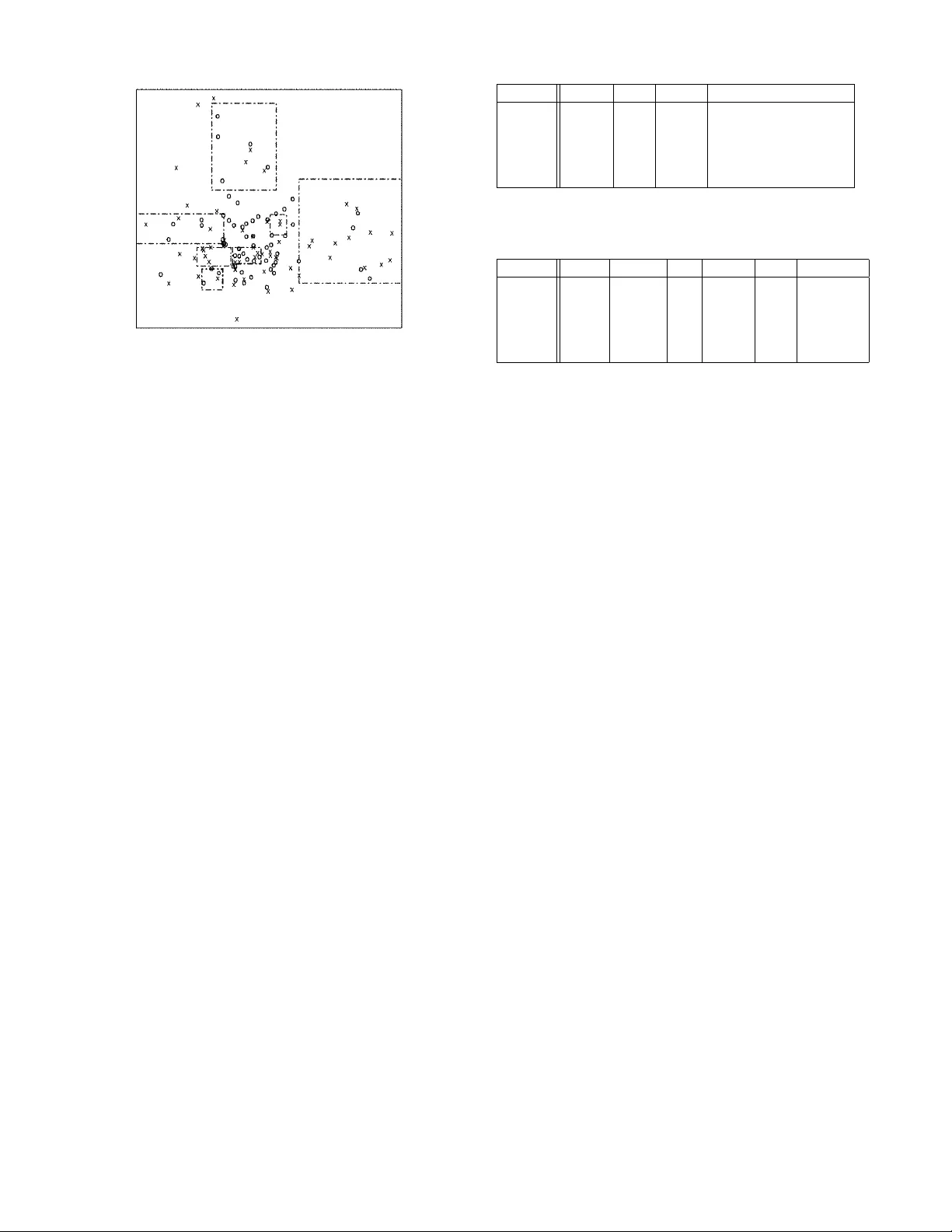

Dept. of Electrical & Computer Engineering, Baylor University, Waco, Texas
email: first last@baylor.edu

Abstract

Have you ever looked at a machine learning classification model and thought, "I could have made that"? Well, that is what we test in this project, comparing XGBoost trained on human engineered features to XGBoost trained directly on the data. The human engineered features do not outperform XGBoost trained directly on the data, but they are comparable. This project contributes a novel method for utilizing human created classification models on high dimensional datasets.

Why Human Guided?

In the artificial intelligence, machine learning, and human computation fields there is little research into the effectiveness of human generated models. Two examples are human guided simple search (Anderson et al. 2000) and human guided tabu search (Klau et al. 2002). Humans outperform state of the art algorithms when solving complex visual problems, such as the travelling salesman problem (Krolak, Felts, and Marble 1971; Dry et al. 2006; Acuña and Parada 2010). Numerous machine learning problems are NP-complete or harder, such as the set covering machine (SCM) (Marchand and Taylor 2003). Breakthroughs have been achieved by including humans in the loop for hard optimization and combinatorial problems (Le Bras et al. 2014; Khatib et al. 2011). With these promising results, there is a need for further investigation into human guided machine learning.

Machine learning algorithms typically work with high dimensional datasets, which a human cannot visualize in their entirety. But the high dimensionality of a dataset is not an insurmountable obstacle to effectively using a human in the loop.

Approach and Implementation

In this project we use a dimension subset approach to test human effectiveness in creating classification models.
Instead of having a human attempt high dimensional visualization, we have humans design models on pairs of dimensions. These models are then used to transform the dataset into a feature space.

XGBoost (Chen and Guestrin 2016), short for eXtreme Gradient Boosting, is a popular machine learning library that has been used to win multiple Kaggle competitions. An XGBoost model is trained on the transformed data, and the results are compared to training XGBoost on the untransformed data. We are not restricted to XGBoost; other machine learning approaches also work, and we have tested linear perceptrons, linear regression, and support vector machines.

Copyright © 2016, Association for the Advancement of Artificial Intelligence (www.aaai.org). All rights reserved.

The following process is used to create each model.

1. A pair of dimensions is selected, and the training dataset ($X_{\mathrm{train}}$) is centered and normalized for those dimensions. The training dataset contains about 100 samples. Pairs are selected for low correlation between the two dimensions, because low correlation makes clusters of data points easier to identify. Not all dimensions in a dataset are used by the workers.
2. The worker is given a scatterplot of the two dimensions and draws polygons ($\rho$) to separate the data into classification regions. Each polygon assigns a sample to one class ($\rho_{\mathrm{class}}$). For simplicity, the polygon is a rectangle, making the models similar to those produced by SCM (Marchand and Taylor 2003).
3. The collection of polygons drawn by the worker on a pair of dimensions is a single model ($\Phi$). An example of a model is shown in Figure 1.
4. The model is evaluated on a test dataset ($X_{\mathrm{test}}$), producing an accuracy score for the classification regions ($\Phi_{\mathrm{acc}}$); see Equation 1. Only samples contained by a polygon ($X^{\rho}_{\mathrm{valid}}$) in the model ($X^{\Phi}_{\mathrm{valid}}$) contribute to the accuracy score. The test dataset contains about 200 samples.
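Steps 2 to 4 above can be illustrated with a small sketch. It is not the authors' implementation: the `Rect` class, the `Sample` tuple layout, and the `model_accuracy` function are hypothetical names introduced here, and the handling of overlapping rectangles (each containing rectangle is scored separately) is an assumption, since the text does not say how overlaps are treated.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple

# Assumed layout: (value on first dimension, value on second dimension, class label).
Sample = Tuple[float, float, int]


@dataclass(frozen=True)
class Rect:
    """One axis-aligned rectangle drawn by a worker, labelled with a class."""
    x_min: float
    x_max: float
    y_min: float
    y_max: float
    label: int

    def contains(self, x: float, y: float) -> bool:
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


def model_accuracy(model: Sequence[Rect], samples: Sequence[Sample]) -> float:
    """Accuracy of a model (a collection of rectangles) as described in step 4.

    Only samples that fall inside at least one rectangle count towards the
    denominator. A sample adds one to the numerator for every containing
    rectangle whose label matches the sample's class.
    """
    correct = 0
    valid = 0
    for x, y, label in samples:
        hits = [rect for rect in model if rect.contains(x, y)]
        if not hits:
            continue  # outside every rectangle: does not contribute
        valid += 1
        correct += sum(1 for rect in hits if rect.label == label)
    # Assumption: a model that contains no samples scores 0.0.
    return correct / valid if valid else 0.0
```

With two non-overlapping rectangles and four samples, of which three fall inside a rectangle and two of those are labelled correctly, `model_accuracy` returns 2/3; the sample outside both rectangles is ignored.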
$$\Phi_{\mathrm{acc}} = \frac{\sum_{\rho \in \Phi} \sum_{x \in X^{\rho}_{\mathrm{valid}}} [x_{\mathrm{class}} = \rho_{\mathrm{class}}]}{|X^{\Phi}_{\mathrm{valid}}|} \tag{1}$$

The sample transformation function is shown in Equation 2, which is a weighted sum of model polygons containing the sample.

$$f(x, \Phi) = [x \in \Phi] \cdot \Phi_{\mathrm{acc}} \tag{2}$$

Then, for $M$ samples and $N$ models we have the following $M \times N$ feature matrix.

$$\begin{bmatrix}
f(x_1, \Phi_1) & f(x_1, \Phi_2) & \cdots & f(x_1, \Phi_N) \\
f(x_2, \Phi_1) & f(x_2, \Phi_2) & \cdots & f(x_2, \Phi_N) \\
\vdots & \vdots & \ddots & \vdots \\
f(x_M, \Phi_1) & f(x_M, \Phi_2) & \cdots & f(x_M, \Phi_N)
\end{bmatrix}$$

Figure 1: Example of polygons drawn by worker.

XGBoost is trained on a subset $M'$ of the $M$ samples, and then used to classify the remaining samples. To perform a fair comparison, only the dimensions used by the workers are included in the untransformed samples. For example, if the dataset has $D$ dimensions, but only $D'$ dimensions are used, the XGBoost model is trained on an $M' \times D'$ matrix. Thus, one XGBoost model is trained on the untransformed samples ($M' \times D'$ data matrix), and another on the transformed samples ($M' \times N$ feature matrix).

The Amazon online service Mechanical Turk (AMT) is used to gather human produced models.

1. The AMT job directs the worker to a website where they can perform the classification task.
2. A scatterplot shows $X_{\mathrm{train}}$ plotted according to the randomly chosen dimension pair, and the worker draws boxes on the scatterplot.
3. A progress bar gives feedback on the accuracy of the model. Accuracy is calculated on a validation dataset $X_{\mathrm{valid}}$. The validation dataset contains about 100 samples. Only models that achieve an accuracy above 50% are accepted, to provide quality control.
4. Once the model has been accepted, the website gives the worker a job completion code.
5. Back at the AMT job posting, the worker submits the code for payment.

We use five datasets with binary classification tasks. Datasets consist of one synthetic clustering task, and the rest are real world datasets from Kaggle.
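To make the transformation in Equation 2 and the $M \times N$ feature matrix concrete, the following is a minimal sketch under stated assumptions. The `PairModel` class, the `Box` tuple layout, and both function names are hypothetical and are not taken from the project's code. Each model is assumed to carry a precomputed accuracy $\Phi_{\mathrm{acc}}$, and samples are assumed to be centered and normalized in the same way as the data the worker saw.

```python
from typing import List, Sequence, Tuple

# Assumed layout for one rectangle: (x_min, x_max, y_min, y_max).
Box = Tuple[float, float, float, float]


class PairModel:
    """A worker model: rectangles on one dimension pair plus its accuracy."""

    def __init__(self, dims: Tuple[int, int], boxes: Sequence[Box], acc: float):
        self.dims = dims          # indices of the two dimensions shown to the worker
        self.boxes = list(boxes)  # rectangles drawn by the worker
        self.acc = acc            # accuracy score of the model (Equation 1)

    def contains(self, sample: Sequence[float]) -> bool:
        """True if the sample falls inside any rectangle of this model."""
        x, y = sample[self.dims[0]], sample[self.dims[1]]
        return any(x0 <= x <= x1 and y0 <= y <= y1
                   for x0, x1, y0, y1 in self.boxes)


def feature(sample: Sequence[float], model: PairModel) -> float:
    """Equation 2: the model accuracy if the model contains the sample, else 0."""
    return model.acc if model.contains(sample) else 0.0


def feature_matrix(samples: Sequence[Sequence[float]],
                   models: Sequence[PairModel]) -> List[List[float]]:
    """Rows are samples, columns are models (M x N)."""
    return [[feature(s, m) for m in models] for s in samples]


if __name__ == "__main__":
    models = [
        PairModel((0, 1), [(0.0, 1.0, 0.0, 1.0)], acc=0.8),
        PairModel((1, 2), [(-1.0, 0.0, -1.0, 0.0)], acc=0.6),
    ]
    samples = [[0.5, 0.5, 0.5], [2.0, -0.5, -0.5]]
    for row in feature_matrix(samples, models):
        print(row)
```

Each row of the resulting matrix can then be passed to any learner in place of the original $D'$ columns, which is how the two XGBoost models described above differ.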
Most of the datasets are highly unbalanced, so we balance the datasets to have an equal number of both classes. Additionally, with the exception of the synthetic dataset, the dimensions consist of both nominal and continuous variables. A summary of the datasets is in Table 1, and the dataset sources are the following.

• Madelon (Mad.) (Guyon et al. 2004)
• Carvana (Car.) (Carvana 2011)
• Homesite (Home.) (Homesite 2015)
• Melbourne (Mel.) (University of Melbourne 2010)
• Credit (Kaggle 2011)

| Name | Nom. | Int. | Cont. | Note |
|---|---|---|---|---|
| Mad. | 0 | 500 | 0 | hyper-XOR problem |
| Car. | 18 | 0 | 14 | car auction |
| Home. | 295 | 0 | 1 | real estate |
| Mel. | 178 | 61 | 11 | grant applications |
| Credit | 0 | 6 | 4 | credit risks |

Table 1: Datasets and their characteristics. Nom. = nominal. Int. = integer. Cont. = continuous.

| Name | M' | M-M' | D' | Data | N | Features |
|---|---|---|---|---|---|---|
| Mad. | 2000 | 600 | 73 | 0.650 | 320 | 0.655 |
| Car. | 2000 | 2230 | 7 | 0.521 | 194 | 0.481 |
| Home. | 2000 | 1806 | 43 | 0.795 | 194 | 0.723 |
| Mel. | 2000 | 2500 | 9 | 0.542 | 64 | 0.512 |
| Credit | 2000 | 18052 | 8 | 0.762 | 156 | 0.717 |

Table 2: Accuracy results of training XGBoost directly on data (Data), and on features produced by AMT workers (Features). M is the total number of samples. M' is the number of samples in the training dataset. M-M' is the number of samples in the test dataset. D' is the number of dimensions used by the workers. N is the number of models the workers created and the number of features generated.

Results and Conclusion

Table 2 demonstrates the results from training XGBoost directly on the data, as well as on the features generated by the AMT workers. XGBoost's model is parameterized by cross validation. The parameters are learning rate (0.01, 0.05, 0.1, 0.3), max tree depth (2, 5, 10, 15), and number of rounds (50, 100, 200, 400, 800).

We've shown that human guided machine learning can be crowd sourced through workers drawing polygons on scatterplots. These models do not outperform standard algorithmic approaches, but are comparable. The contribution of this project is human model creation on high dimensional datasets.
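The cross-validated parameter search over the grid listed in this section could be organised roughly as follows. This is a sketch, not the authors' procedure: the number of folds (`k=5`), the dictionary keys, and the `score_fn` callback are assumptions introduced for illustration, and `score_fn` stands in for whatever routine trains XGBoost on the training indices and returns accuracy on the held-out indices.

```python
from itertools import product
from typing import Callable, Dict, Iterator, List, Tuple

# Grid values as listed in the text.
LEARNING_RATES = (0.01, 0.05, 0.1, 0.3)
MAX_DEPTHS = (2, 5, 10, 15)
NUM_ROUNDS = (50, 100, 200, 400, 800)

Params = Dict[str, float]
ScoreFn = Callable[[Params, List[int], List[int]], float]


def parameter_grid() -> Iterator[Params]:
    """Yield every combination of the three parameters (4 * 4 * 5 = 80)."""
    for lr, depth, rounds in product(LEARNING_RATES, MAX_DEPTHS, NUM_ROUNDS):
        # Key names are illustrative, not a specific library's argument names.
        yield {"learning_rate": lr, "max_depth": depth, "num_rounds": rounds}


def k_fold_indices(n: int, k: int) -> Iterator[Tuple[List[int], List[int]]]:
    """Split range(n) into k interleaved folds; yield (train, held_out) pairs."""
    folds = [list(range(i, n, k)) for i in range(k)]
    for i, held_out in enumerate(folds):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, held_out


def select_parameters(score_fn: ScoreFn, n_samples: int,
                      k: int = 5) -> Tuple[Params, float]:
    """Return the grid point with the highest mean held-out score."""
    best_params: Params = {}
    best_score = float("-inf")
    for params in parameter_grid():
        scores = [score_fn(params, train, held_out)
                  for train, held_out in k_fold_indices(n_samples, k)]
        mean_score = sum(scores) / len(scores)
        if mean_score > best_score:
            best_params, best_score = params, mean_score
    return best_params, best_score
```

The same search would be run twice per dataset, once with the $M' \times D'$ data matrix and once with the $M' \times N$ feature matrix, so that both XGBoost models are tuned under the same procedure.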
Future research will discover if and when human produced models outperform purely algorithmic approaches. In this research, human produced models did not outperform algorithmic approaches, likely due to loss of information. Transforming the data using the models reduces the data granularity. A way ahead is to find a way to preserve granularity while using the human produced models.

Acknowledgements

The researchers thank the AMT workers who contributed their valuable insight.

References

Acuña, D. E., and Parada, V. 2010. People efficiently explore the solution space of the computationally intractable traveling salesman problem to find near-optimal tours. PloS one 5(7):e11685.

Anderson, D.; Anderson, E.; Lesh, N.; Marks, J.; Mirtich, B.; Ratajczak, D.; and Ryall, K. 2000. Human-guided simple search. In AAAI/IAAI, 209–216.

Carvana. 2011. Don't get kicked! https://www.kaggle.com/c/DontGetKicked/data.

Chen, T., and Guestrin, C. 2016. Xgboost: A scalable tree boosting system. arXiv preprint arXiv:1603.02754.

Dry, M.; Lee, M. D.; Vickers, D.; and Hughes, P. 2006. Human performance on visually presented traveling salesperson problems with varying numbers of nodes. The Journal of Problem Solving 1(1):4.

Guyon, I.; Gunn, S.; Ben-Hur, A.; and Dror, G. 2004. Result analysis of the NIPS 2003 feature selection challenge. In Advances in neural information processing systems, 545–552.

Homesite. 2015. Homesite quote conversion. https://www.kaggle.com/c/homesite-quote-conversion/data.

Kaggle. 2011. Give me some credit. https://www.kaggle.com/c/GiveMeSomeCredit/data.

Khatib, F.; DiMaio, F.; Cooper, S.; Kazmierczyk, M.; Gilski, M.; Krzywda, S.; Zabranska, H.; Pichova, I.; Thompson, J.; Popović, Z.; et al. 2011. Crystal structure of a monomeric retroviral protease solved by protein folding game players. Nature structural & molecular biology 18(10):1175–1177.

Klau, G. W.; Lesh, N.; Marks, J.; and Mitzenmacher, M. 2002. Human-guided tabu search. In AAAI/IAAI, 41–47.

Krolak, P.; Felts, W.; and Marble, G. 1971. A man-machine approach toward solving the traveling salesman problem. Communications of the ACM 14(5):327–334.

Le Bras, R.; Xue, Y.; Bernstein, R.; Gomes, C. P.; and Selman, B. 2014. A human computation framework for boosting combinatorial solvers. In Second AAAI Conference on Human Computation and Crowdsourcing.

Marchand, M., and Taylor, J. S. 2003. The set covering machine. The Journal of Machine Learning Research 3:723–746.

University of Melbourne. 2010. Predict grant applications. https://www.kaggle.com/c/unimelb/data.
