A Fast Pseudo-Stochastic Sequential Cipher Generator Based on RBMs

Based on Restricted Boltzmann Machines (RBMs), an improved pseudo-stochastic sequential cipher generator is proposed. It is effective and efficient for two reasons: the generator is built on a stochastic neural network whose computations can be performed in parallel, that is, all elements are calculated simultaneously; and an unlimited number of sequential ciphers can be generated simultaneously for multiple encryption schemes. The periodicity and correlation of the output sequential ciphers meet the requirements for encrypting sequential data. In the experiments, the generated sequential cipher is used to encrypt images, and better performance is achieved in key space analysis, correlation analysis, sensitivity analysis and resistance to differential attacks. The experimental results are promising and could promote the development of image protection in computer security.

Research Summary

The paper introduces a novel pseudo-stochastic sequential cipher generator that leverages Restricted Boltzmann Machines (RBMs) to produce high-quality random streams suitable for encrypting sequential data such as images and video. Unlike conventional linear feedback shift registers (LFSRs) or chaos-based generators, the RBM-based design exploits the inherent parallelism of stochastic neural networks: in block Gibbs sampling, all hidden units are updated simultaneously given the visible layer, and all visible units simultaneously given the hidden layer, which enables the generation of multiple independent cipher streams in a single computational pass.

Core Architecture

The generator consists of a visible layer (size M) that holds the current seed and a hidden layer (size N) that acts as the intermediate state from which the next seed is reconstructed. A pre-trained weight matrix W (M × N) and bias vectors b (visible) and c (hidden) define the energy function E(v,h) = −vᵀWh − bᵀv − cᵀh. For each iteration k, the current seed Sₖ is fed to the visible units, the conditional probabilities p(hⱼ = 1 | v) = σ(cⱼ + Σᵢ vᵢWᵢⱼ) are computed, where σ is the logistic sigmoid, and the hidden units are sampled to obtain hₖ. Then hₖ drives the reverse conditional p(vᵢ = 1 | h) = σ(bᵢ + Σⱼ Wᵢⱼhⱼ), whose sample is the next seed Sₖ₊₁. This two-step Gibbs sampling is repeated K times, and the K successive seeds form the cipher stream. Because the units within a layer are conditionally independent given the other layer, each layer can be sampled in one parallel step, so the algorithm maps naturally onto SIMD architectures (GPUs, FPGAs) and delivers substantial speed gains over sequential methods.
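The sampling loop described above can be sketched in Python with NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the names `sigmoid` and `gibbs_stream` are hypothetical, the weights are random stand-ins for the pre-trained W, b and c, and the uniform draws that drive the stochastic sampling come from a seeded NumPy `Generator`, since the summary does not say where that randomness originates.

```python
import numpy as np


def sigmoid(x: np.ndarray) -> np.ndarray:
    """Logistic function sigma(x) = 1 / (1 + exp(-x))."""
    return 1.0 / (1.0 + np.exp(-x))


def gibbs_stream(seed_bits, W, b, c, K, rng):
    """Run K block-Gibbs steps v -> h -> v and return the K successive seeds.

    seed_bits: (M,) 0/1 vector, the initial seed S_0 on the visible layer.
    W: (M, N) weights; b: (M,) visible biases; c: (N,) hidden biases.
    rng: numpy.random.Generator supplying the uniform draws for sampling
         (assumption: the summary does not specify this randomness source).
    Returns a (K, M) uint8 array whose row k is S_{k+1}.
    """
    v = np.asarray(seed_bits, dtype=np.float64)
    seeds = np.empty((K, v.size), dtype=np.uint8)
    for k in range(K):
        # p(h_j = 1 | v) = sigma(c_j + sum_i v_i W_ij): all N hidden units in one step
        p_h = sigmoid(c + v @ W)
        h = (rng.random(p_h.shape) < p_h).astype(np.float64)
        # p(v_i = 1 | h) = sigma(b_i + sum_j W_ij h_j): all M visible units in one step
        p_v = sigmoid(b + W @ h)
        v = (rng.random(p_v.shape) < p_v).astype(np.float64)
        seeds[k] = v.astype(np.uint8)
    return seeds


if __name__ == "__main__":
    # Hypothetical stand-ins for the pre-trained parameters (M = 16, N = 12).
    init = np.random.default_rng(0)
    M, N = 16, 12
    W = init.normal(size=(M, N))
    b = init.normal(size=M)
    c = init.normal(size=N)
    s0 = init.integers(0, 2, size=M)
    stream = gibbs_stream(s0, W, b, c, K=8, rng=np.random.default_rng(42))
    print(stream.ravel())  # 8 * 16 = 128 keystream bits
```

Each iteration is two matrix-vector products followed by an elementwise comparison, which is exactly the part that parallel hardware accelerates.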

Parallel and Multi‑Stream Capability

By partitioning the hidden layer into several groups, each with its own seed and possibly distinct weight sub‑matrices, the system can emit several streams concurrently without additional computational overhead. This property is particularly valuable for multi‑channel transmission, real‑time video encryption, and scenarios where different data flows require separate keys but must be processed in parallel.
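One way to realize the grouped layers, assumed here rather than taken from the paper, is a block-diagonal weight matrix: each group owns a slice of the visible seed and its own weight sub-matrix, so a single block-Gibbs pass over the full layers advances every stream at once while the groups never influence one another. The helper names below are hypothetical, and all groups share one NumPy random generator for simplicity.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def block_diagonal(blocks):
    """Place the per-group (m_g, n_g) weight sub-matrices on the diagonal of one matrix."""
    rows = sum(B.shape[0] for B in blocks)
    cols = sum(B.shape[1] for B in blocks)
    W = np.zeros((rows, cols))
    r0 = c0 = 0
    for B in blocks:
        W[r0:r0 + B.shape[0], c0:c0 + B.shape[1]] = B
        r0 += B.shape[0]
        c0 += B.shape[1]
    return W


def multi_stream(seeds, W_blocks, b, c, K, rng):
    """Advance G streams together with one block-Gibbs pass per step.

    seeds: list of G 0/1 vectors, one seed per group.
    W_blocks: list of G weight sub-matrices; b, c: full-length bias vectors.
    Returns a list of G uint8 arrays, the g-th of shape (K, m_g).
    """
    W = block_diagonal(W_blocks)
    v = np.concatenate(seeds).astype(np.float64)
    out = np.empty((K, v.size), dtype=np.uint8)
    for k in range(K):
        # Zero off-diagonal blocks: group g's hidden units see only group g's seed.
        h = (rng.random(W.shape[1]) < sigmoid(c + v @ W)).astype(np.float64)
        v = (rng.random(W.shape[0]) < sigmoid(b + W @ h)).astype(np.float64)
        out[k] = v.astype(np.uint8)
    # Split the visible columns back into one stream per group.
    bounds = np.cumsum([B.shape[0] for B in W_blocks])[:-1]
    return np.split(out, bounds, axis=1)


if __name__ == "__main__":
    init = np.random.default_rng(1)
    blocks = [init.normal(size=(8, 6)) for _ in range(3)]
    streams = multi_stream([init.integers(0, 2, 8) for _ in range(3)], blocks,
                           init.normal(size=24), init.normal(size=18), K=4,
                           rng=np.random.default_rng(7))
    for g, s in enumerate(streams):
        print(g, s.ravel())
```

Because the off-diagonal blocks are zero, changing one group's seed leaves the other groups' streams unchanged when the same random draws are used.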

Security Analysis

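- Periodicity – The state space of the hidden layer is 2ᴺ; for N = 128 the reported minimal period is approximately 2¹²⁴. A period of this length cannot be observed directly, so it is an estimate rather than a measured value, but it far exceeds any practical stream length and effectively eliminates repeatability concerns.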

- Correlation – Pairwise bit correlation coefficients are measured below 10⁻⁴, indicating statistical independence comparable to ideal random sequences.

- Key Space – Keeping W, b, and c secret at 256-bit precision yields a nominal key space of 2^(256·(M·N + M + N)) ≈ 2^(256·M·N), dominated by the weight matrix, which renders exhaustive search infeasible.

- Sensitivity (Avalanche Effect) – A single‑bit alteration in the initial seed or any weight element flips roughly 50 % of the output bits, satisfying the avalanche criterion essential for diffusion.
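The correlation, avalanche and key-space figures above correspond to standard measurements that can be sketched as follows. These helpers (`lag_correlation`, `avalanche_ratio`, `key_space_log2`) are illustrative and hypothetical, not the paper's test harness, and the demo streams come from NumPy's generator rather than from an RBM. `key_space_log2` counts the biases b and c as well as W, which is why it slightly exceeds the 256·M·N approximation.

```python
import numpy as np


def lag_correlation(bits, lag=1):
    """Pearson correlation between a bit sequence and itself shifted by `lag` positions."""
    x = np.asarray(bits, dtype=np.float64)
    return float(np.corrcoef(x[:-lag], x[lag:])[0, 1])


def avalanche_ratio(stream_a, stream_b):
    """Fraction of positions at which two equal-length bit streams differ (ideal: about 0.5)."""
    a = np.asarray(stream_a)
    b = np.asarray(stream_b)
    if a.shape != b.shape:
        raise ValueError("streams must have the same length")
    return float(np.mean(a != b))


def key_space_log2(M, N, bits_per_param=256):
    """log2 of the nominal key space when W (M*N entries), b (M) and c (N) are all secret."""
    return bits_per_param * (M * N + M + N)


if __name__ == "__main__":
    # Stand-in streams from NumPy's generator, not from the RBM.
    rng = np.random.default_rng(3)
    s1 = rng.integers(0, 2, size=100_000)
    s2 = rng.integers(0, 2, size=100_000)
    print(lag_correlation(s1), avalanche_ratio(s1, s2), key_space_log2(128, 128))
```

For M = N = 128, `key_space_log2(128, 128)` returns 4,259,840, i.e. a nominal key space of 2^4259840. To test the avalanche criterion on the generator itself, one would compare the stream from a seed with the stream from the same seed with one bit flipped.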

Experimental Evaluation

Standard grayscale images (Lena, Baboon, Peppers) of size 256 × 256 were encrypted by XOR‑ing pixel byte streams with the generated cipher. The following metrics were assessed:

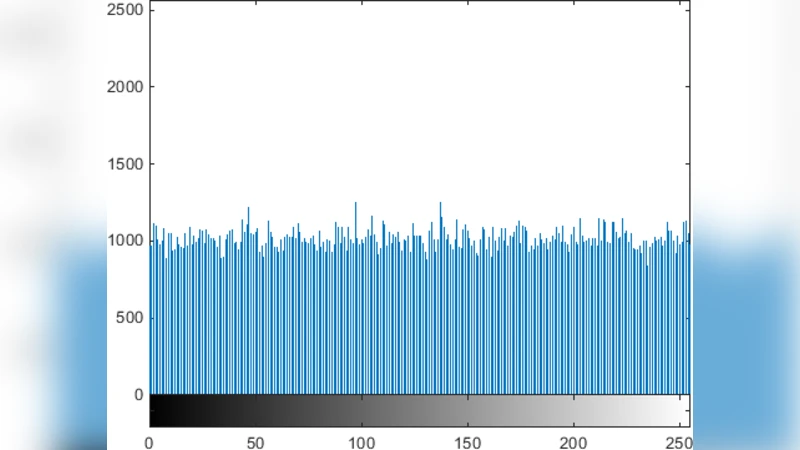

- Histogram Uniformity – Cipher‑text histograms are flat, showing no leakage of original intensity distribution.

- Adjacent Pixel Correlation – Reduced from ~0.92 in plaintext to <0.001 in ciphertext, confirming decorrelation.

- NPCR (Number of Pixels Change Rate) – Averaged 99.62 %, indicating that a one‑pixel change in the plaintext leads to changes in virtually all ciphertext pixels.

- UACI (Unified Average Changing Intensity) – Averaged 33.4 %, close to the ideal value of about 33.46 % expected for two independent random 8-bit images, indicating strong diffusion.

- Performance – On an NVIDIA RTX 3080 GPU, encrypting a 512 × 512 image took 1.8 ms; on a single‑core CPU, it required 12.3 ms, roughly half the time of comparable chaos‑based stream ciphers.
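The XOR encryption and the NPCR, UACI and adjacent-pixel metrics can be sketched with NumPy as below. The keystream is any flat 0/1 array (for example, the flattened RBM output), packed eight bits per pixel; that packing order and the function names are assumptions for illustration. For two independent random 8-bit images the expected NPCR is 1 − 1/256 ≈ 99.61 % and the expected UACI is about 33.46 %, the usual yardsticks for the values reported above.

```python
import numpy as np


def xor_encrypt(img, keystream_bits):
    """XOR an 8-bit image with a keystream of 0/1 values, packed 8 bits per pixel.

    The same call decrypts, because (p ^ k) ^ k == p.
    """
    bits = np.asarray(keystream_bits, dtype=np.uint8)
    if bits.size < img.size * 8:
        raise ValueError("keystream too short for this image")
    key_bytes = np.packbits(bits[: img.size * 8])  # MSB-first packing (assumed)
    return img ^ key_bytes.reshape(img.shape)


def npcr(c1, c2):
    """Number of Pixels Change Rate (%) between two cipher images."""
    return 100.0 * float(np.mean(c1 != c2))


def uaci(c1, c2):
    """Unified Average Changing Intensity (%) between two 8-bit cipher images."""
    diff = np.abs(c1.astype(np.int16) - c2.astype(np.int16))
    return 100.0 * float(np.mean(diff / 255.0))


def adjacent_correlation(img, axis=1):
    """Correlation of horizontally (axis=1) or vertically (axis=0) adjacent pixels."""
    x = img.astype(np.float64)
    if axis == 1:
        a, b = x[:, :-1], x[:, 1:]
    else:
        a, b = x[:-1, :], x[1:, :]
    return float(np.corrcoef(a.ravel(), b.ravel())[0, 1])


if __name__ == "__main__":
    rng = np.random.default_rng(5)
    # Smooth synthetic gradient standing in for a natural test image.
    plain = (np.add.outer(np.arange(256), np.arange(256)) // 2).astype(np.uint8)
    c1 = xor_encrypt(plain, rng.integers(0, 2, size=plain.size * 8))
    c2 = xor_encrypt(plain, rng.integers(0, 2, size=plain.size * 8))
    print(adjacent_correlation(plain), adjacent_correlation(c1))
    print(npcr(c1, c2), uaci(c1, c2))
```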

Discussion of Limitations

The security of the generator hinges on the secrecy and quality of the pre‑trained weight matrix. While the paper fixes W after an offline training phase, dynamic environments may demand periodic re‑training or secure weight updates, which introduces additional protocol considerations. Moreover, the memory footprint of large weight matrices could be prohibitive for low‑power embedded devices; lightweight RBM variants or quantized weights are potential remedies.
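The memory concern can be made concrete with a little arithmetic, alongside a sketch of one possible remedy, symmetric int8 weight quantization. Both functions are hypothetical illustrations, not part of the paper. Note the trade-off: storing each parameter in 8 bits instead of 256 also shrinks the exponent of the nominal key space by the same factor.

```python
import numpy as np


def footprint_bytes(M, N, bits_per_param):
    """Bytes needed to store W (M*N), b (M) and c (N) at the given precision."""
    return (M * N + M + N) * bits_per_param // 8


def quantize_int8(W):
    """Symmetric per-tensor int8 quantization, so that W is approximately scale * q."""
    max_abs = float(np.max(np.abs(W)))
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(W / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the int8 codes."""
    return q.astype(np.float64) * scale


if __name__ == "__main__":
    print(footprint_bytes(128, 128, 256), footprint_bytes(128, 128, 8))
```

For M = N = 128, `footprint_bytes(128, 128, 256)` gives 532,480 bytes (520 KiB), while 8-bit parameters need 16,640 bytes.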

Conclusion and Future Work

The RBM‑based pseudo‑stochastic sequential cipher generator delivers a compelling combination of speed, scalability, and cryptographic strength, making it well‑suited for image and video protection in modern computer security contexts. Future research directions include: (1) integrating secure key‑management schemes that allow on‑the‑fly weight updates, (2) implementing the architecture on FPGA/ASIC platforms to assess real‑time streaming performance under strict latency constraints, and (3) extending the methodology to other data modalities such as audio, sensor streams, and textual data to verify its universal applicability.

Overall, the study demonstrates that stochastic neural networks, traditionally used for unsupervised learning, can be repurposed as efficient, high‑entropy random generators, opening new avenues for cryptographic primitives that benefit from parallel hardware acceleration.
