Content-based image retrieval tutorial

This paper functions as a tutorial for individuals interested to enter the field of information retrieval but wouldn’t know where to begin from. It describes two fundamental yet efficient image retrieval techniques, the first being k - nearest neighbors (knn) and the second support vector machines(svm). The goal is to provide the reader with both the theoretical and practical aspects in order to acquire a better understanding. Along with this tutorial we have also developed the equivalent software1 using the MATLAB environment in order to illustrate the techniques, so that the reader can have a hands-on experience.

💡 Research Summary

**

The paper “Content‑Based Image Retrieval Tutorial” is written as a practical guide for newcomers to the field of image retrieval, focusing on two classic techniques: k‑Nearest Neighbour (k‑NN) and Support Vector Machines (SVM). It begins by motivating the need for efficient image search in today’s multimedia‑rich environment, where billions of pictures are uploaded daily from smartphones and cameras. The authors then describe a complete preprocessing pipeline that transforms raw pixel data into a compact, discriminative feature vector.

The pipeline consists of five distinct descriptors: (1) a colour histogram in HSV space (8 × 2 × 2 = 32 bins), (2) a colour auto‑correlogram in RGB space (4 × 4 × 4 = 64 bins), (3) first‑order colour statistics (mean and standard deviation for each of R, G, B, yielding 6 values), (4) Gabor filter responses computed over four scales (0.05, 0.1, 0.2, 0.4) and six orientations, with mean and standard deviation per filter (24 × 2 = 48 values), and (5) a three‑level wavelet decomposition, again using mean and standard deviation (40 values). These five vectors are concatenated into a single 150‑dimensional vector ρ for each image. By combining colour, texture, and frequency information, the authors aim to provide a richer representation than any single descriptor alone.

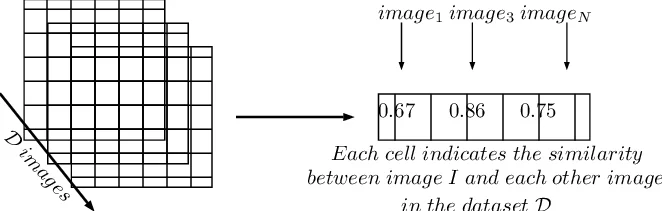

The k‑NN section first presents a naïve implementation that iterates over every pixel of every image, computes absolute differences, and sums them, resulting in a time complexity of O(D · m · n) where D is the number of images and m × n is the image size. Recognising the inefficiency, the authors then “vectorise” the process: each image is reshaped into a 1‑D vector, and the distance between two images reduces to a simple norm computation, cutting the complexity to O(D). They discuss the choice of distance metric (p‑norm, Manhattan, etc.) and illustrate the geometric intuition of k‑NN using a Voronoi diagram, showing how the algorithm implicitly tessellates the feature space. While the exposition is clear, the paper does not address common scalability tricks such as KD‑trees, ball‑trees, or approximate nearest‑neighbour methods, which are essential for large‑scale deployments.

The SVM portion provides a concise yet thorough derivation of the maximum‑margin classifier. It distinguishes functional margin (γ̂_i = y_i(wᵀx_i + b)) from geometric margin (γ_i = γ̂_i/‖w‖) and explains why maximising the latter leads to better generalisation. The authors formulate the primal optimisation problem: maximise γ subject to y_i(wᵀx_i + b) ≥ γ and ‖w‖ = 1. Because the ‖w‖ = 1 constraint is non‑convex, they replace it with a scaling that fixes the functional margin to 1, yielding the classic soft‑margin SVM optimisation: minimise (1/2)‖w‖² subject to y_i(wᵀx_i + b) ≥ 1. They then introduce the Lagrangian, derive the dual, and show that only training points with non‑zero Lagrange multipliers (the support vectors) influence the solution. The discussion of kernels is brief but mentions that the kernel trick allows the same optimisation to be performed in very high‑dimensional (even infinite‑dimensional) feature spaces, enabling non‑linear decision boundaries. However, the paper does not provide concrete guidance on selecting kernel types (linear, RBF, polynomial) or tuning hyper‑parameters (C, γ), which are critical for practical performance.

A notable strength of the tutorial is the provision of a complete MATLAB implementation hosted on GitHub (https://github.com/kirk86/ImageRetrieval). The code follows the described pipeline: it reads a dataset, extracts the five descriptors, concatenates them, and then offers two retrieval modes—k‑NN similarity search and SVM‑based binary classification. This hands‑on component allows readers to experiment directly with the algorithms, observe runtime behaviour, and visualise retrieval results.

Nevertheless, the paper lacks an empirical evaluation. No quantitative metrics such as precision, recall, mean average precision (MAP), or runtime benchmarks are reported, nor is there a comparison with more recent deep‑learning based retrieval methods (e.g., CNN‑derived embeddings). Consequently, while the tutorial succeeds in teaching the fundamentals, readers are left without evidence of how well the described system performs on real‑world datasets.

In conclusion, the manuscript serves as a solid introductory resource that blends theoretical exposition with practical code. Its clear explanations of feature extraction, k‑NN vectorisation, and SVM margin optimisation make it suitable for students and practitioners beginning in content‑based image retrieval. To evolve into a comprehensive reference, future revisions should incorporate performance experiments, scalability analyses, and discussions of modern alternatives such as deep feature learning.

Comments & Academic Discussion

Loading comments...

Leave a Comment