Toward large-scale Hybrid Monte Carlo simulations of the Hubbard model on graphics processing units

The performance of the Hybrid Monte Carlo algorithm is determined by the speed of sparse matrix-vector multiplication within the context of preconditioned conjugate gradient iteration. We study these operations as implemented for the fermion matrix of the Hubbard model in d+1 space-time dimensions, and report a performance comparison between a 2.66 GHz Intel Xeon E5430 CPU and an NVIDIA Tesla C1060 GPU using double-precision arithmetic. We find speedup factors ranging from 30 to 350 for d = 1, and in excess of 40 for d = 3. We argue that such speedups have considerable impact on large-scale simulation studies of quantum many-body systems.

💡 Research Summary

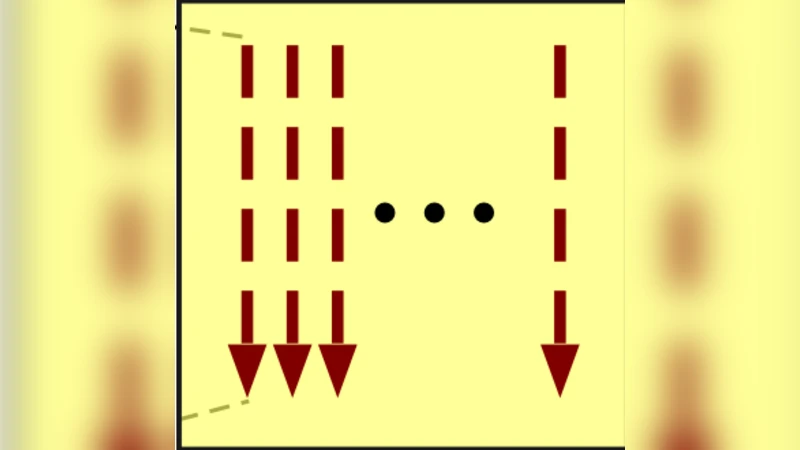

The paper investigates how to accelerate Hybrid Monte Carlo (HMC) simulations of the Hubbard model by off‑loading the most time‑consuming operation, sparse matrix‑vector (MV) multiplication within a preconditioned Conjugate Gradient (CG) solver, to a graphics processing unit (GPU). The fermion matrix of the Hubbard model, defined on a (d + 1)‑dimensional space‑time lattice, is stored in Compressed Sparse Row (CSR) format. In the CUDA implementation each thread processes a single row, iterating over that row's non‑zero elements and accumulating their products with the corresponding entries of the input vector. To make the best use of memory bandwidth, column indices and values are kept in separate contiguous arrays, and shared memory usage is minimized.
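To make the row-per-thread CSR product and its role inside the CG iteration concrete, here is a minimal serial sketch in plain Python. It is an illustration under stated assumptions, not the authors' CUDA code. The names `CSRMatrix`, `csr_row_dot`, `csr_matvec`, `jacobi_pcg`, and `periodic_chain` are hypothetical. The test matrix is a simple symmetric positive-definite ring matrix rather than the Hubbard fermion matrix, and the diagonal (Jacobi) preconditioner is a stand-in, since the summary does not say which preconditioner the paper uses.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CSRMatrix:
    """CSR storage with values and column indices in separate contiguous arrays."""
    values: List[float]
    col_idx: List[int]
    row_ptr: List[int]  # row i owns entries row_ptr[i] .. row_ptr[i + 1] - 1

    @property
    def n_rows(self) -> int:
        return len(self.row_ptr) - 1


def csr_row_dot(A: CSRMatrix, x: List[float], row: int) -> float:
    """The work one GPU thread would do in a row-per-thread kernel."""
    acc = 0.0
    for k in range(A.row_ptr[row], A.row_ptr[row + 1]):
        acc += A.values[k] * x[A.col_idx[k]]
    return acc


def csr_matvec(A: CSRMatrix, x: List[float]) -> List[float]:
    # Rows are independent, so a GPU can assign one thread per row;
    # this sketch simply loops over them serially.
    return [csr_row_dot(A, x, i) for i in range(A.n_rows)]


def dot(u: List[float], v: List[float]) -> float:
    return sum(a * b for a, b in zip(u, v))


def jacobi_pcg(A: CSRMatrix, b: List[float], diag: List[float],
               tol: float = 1e-12, max_iter: int = 1000) -> List[float]:
    """Preconditioned CG for a symmetric positive-definite A.

    The preconditioner is division by the diagonal (an assumption made
    for illustration only).
    """
    x = [0.0] * len(b)
    r = list(b)                                   # residual b - A x with x = 0
    z = [ri / di for ri, di in zip(r, diag)]      # preconditioned residual
    p = list(z)
    rz = dot(r, z)
    b_norm2 = dot(b, b)
    for _ in range(max_iter):
        if dot(r, r) <= tol * tol * b_norm2:
            break
        Ap = csr_matvec(A, p)                     # the dominant cost per iteration
        alpha = rz / dot(p, Ap)
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, Ap)]
        z = [ri / di for ri, di in zip(r, diag)]
        rz_new = dot(r, z)
        p = [zi + (rz_new / rz) * pi for zi, pi in zip(z, p)]
        rz = rz_new
    return x


def periodic_chain(n: int, diag_value: float = 4.0) -> CSRMatrix:
    """Hypothetical test matrix on a ring of n >= 3 sites.

    diag_value on the diagonal and -1 to each neighbour; strictly
    diagonally dominant (hence SPD) for diag_value > 2.
    """
    values, col_idx, row_ptr = [], [], [0]
    for i in range(n):
        row = {(i - 1) % n: -1.0, i: diag_value, (i + 1) % n: -1.0}
        for col in sorted(row):
            col_idx.append(col)
            values.append(row[col])
        row_ptr.append(len(values))
    return CSRMatrix(values, col_idx, row_ptr)


if __name__ == "__main__":
    A = periodic_chain(8)
    solution = jacobi_pcg(A, b=[2.0] * 8, diag=[4.0] * 8)
    print(solution)
```

Each CG iteration calls `csr_matvec` once, which is why the speed of the sparse product dominates the solver and why it is the natural operation to move to the GPU.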

Performance is benchmarked on a 2.66 GHz Intel Xeon E5430 CPU versus an NVIDIA Tesla C1060 GPU, both using double‑precision arithmetic. Tests cover one‑, two‑ and three‑dimensional lattices of varying size and physical parameters. Relative to the CPU, the GPU achieves speed‑ups of 30–350× in one dimension, 50–200× in two dimensions, and more than 40× in three dimensions. The authors attribute these gains to the GPU’s massive parallelism and its ability to keep memory accesses coalesced even as the matrix becomes less sparse in higher dimensions.

Additional engineering refinements, such as asynchronous CUDA streams for overlapping data transfers and a preprocessing step that reorders rows to improve alignment, reduce the overhead of moving data between host and device and cut the total HMC runtime by a further 10–15%. The study demonstrates that double‑precision GPUs can deliver both the numerical accuracy required for quantum many‑body calculations and the raw throughput needed for large‑scale simulations.

In the discussion the authors outline future directions: extending the approach to multi‑GPU clusters for true scale‑out, incorporating sparse matrix‑matrix products for more sophisticated preconditioners, and combining the GPU‑accelerated CG solver with multigrid or domain‑decomposition techniques. Such developments would enable simulations of substantially larger Hubbard lattices and lower temperature regimes, opening new possibilities for investigating strongly correlated electron systems, high‑temperature superconductivity, and related condensed‑matter phenomena.