Improving Semantic Embedding Consistency by Metric Learning for Zero-Shot Classification

This paper addresses the task of zero-shot image classification. The key contribution of the proposed approach is to control the semantic embedding of images -- one of the main ingredients of zero-shot learning -- by formulating it as a metric learni…

Authors: Maxime Bucher (Palaiseau), Stephane Herbin (Palaiseau), Frederic Jurie

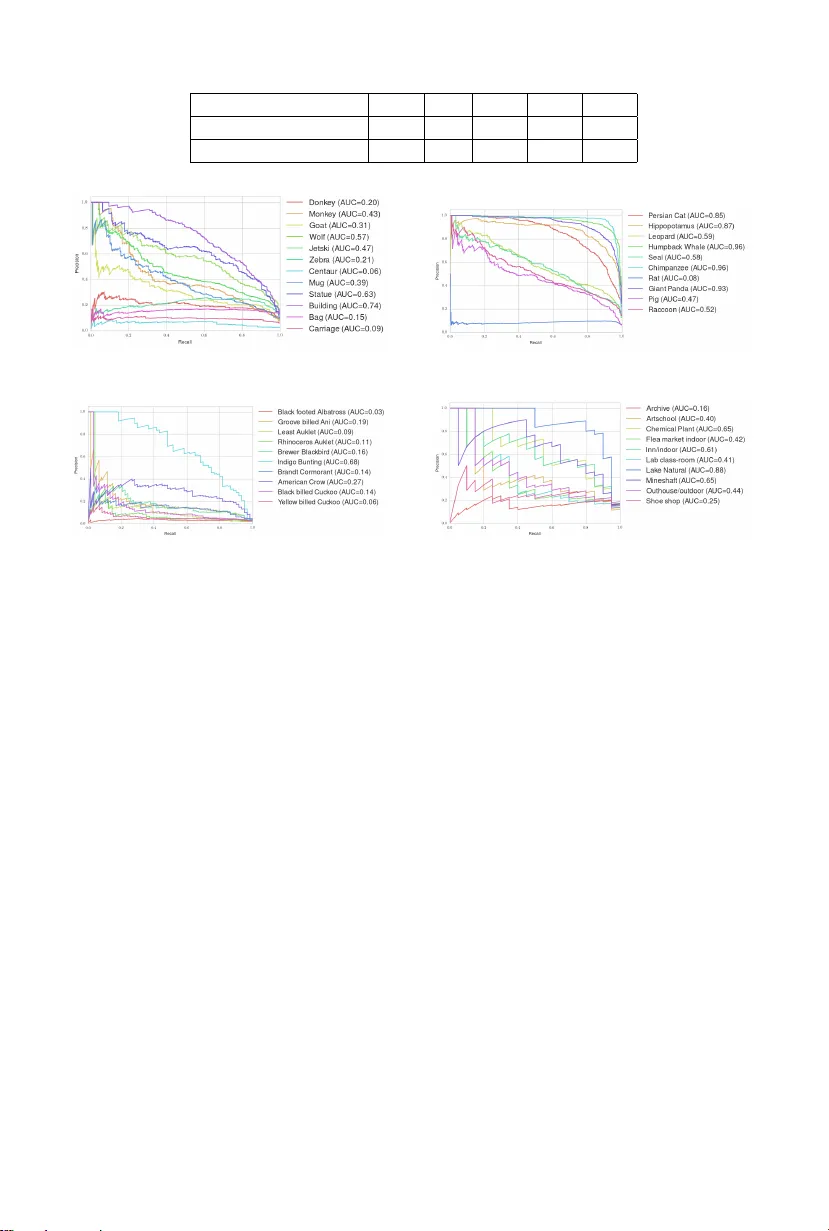

Maxime Bucher (1,2), Stéphane Herbin (1), Frédéric Jurie (2)
(1) ONERA - The French Aerospace Lab, Palaiseau, France
(2) Normandie Univ, UNICAEN, ENSICAEN, CNRS

Abstract. This paper addresses the task of zero-shot image classification. The key contribution of the proposed approach is to control the semantic embedding of images -- one of the main ingredients of zero-shot learning -- by formulating it as a metric learning problem. The optimized empirical criterion associates two types of sub-task constraints: metric discriminating capacity and accurate attribute prediction. This results in a novel expression of zero-shot learning not requiring the notion of class in the training phase: only pairs of image/attributes, augmented with a consistency indicator, are given as ground truth. At test time, the learned model can predict the consistency of a test image with a given set of attributes, allowing flexible ways to produce recognition inferences. Despite its simplicity, the proposed approach gives state-of-the-art results on four challenging datasets used for zero-shot recognition evaluation.

Keywords: zero-shot learning, attributes, semantic embedding

1 Introduction

This paper addresses the question of zero-shot learning (ZSL) image classification, i.e., the classification of images belonging to classes not represented by the training examples.
This problem has attracted much interest in the last decade because of its clear practical impact: in many applications, having access to annotated data for the categories considered is often difficult, and requires new ways to increase the interpretation capacity of automated recognition systems. The efficiency of ZSL relies on the existence of an intermediate representation level, effortlessly understandable by human designers and sufficiently formal to be the support of algorithmic inferences. Most of the studies have so far considered this representation in the form of semantic attributes, mainly because it provides an easy way to give compact yet discriminative descriptions of new classes.

It has also been observed [1,2] that attribute representations as provided by humans may not be the ideal embedding space because they can lack the informational quality necessary to conduct reliable inferences: the structure of the attribute manifold for a given data distribution may be rather complex, redundant, noisy and unevenly organized. Attribute descriptions, although semantically meaningful and useful to introduce a new category, are not necessarily isomorphic to image data or to image processing outputs.

To compensate for the shortcomings induced by attribute representations, the recent trends in ZSL studies aim at better controlling the classification inference as well as the attribute prediction in the learning criteria. Indeed, if attribute classifiers are learned independently of the final classification task, as in the Direct Attribute Prediction model [3], they might be optimal at predicting attributes but not necessarily at predicting novel classes.

In the work proposed in this paper, we instead suggest that better controlling the structure of the embedding attribute space is at least as important as constraining the classification inference step.
The fundamental idea is to empirically disentangle the attribute distribution by learning a metric able to both select and transform the original data distribution according to informational criteria. This metric is obtained by optimizing an objective function based on pairs of attributes/images without assuming that the training images are assigned to categories; only the semantic annotations are used during training. More specifically, we empirically validated the idea that jointly optimizing the attribute embedding and the classification metric, in a multi-objective framework, is what improves performance, even with a simple linear embedding and distance-to-mean attribute classification.

The approach is experimentally validated on 4 recent datasets for zero-shot recognition, i.e. the 'aPascal&aYahoo', 'Animals with Attributes', 'CUB-200-2011' and 'SUN attribute' datasets, for which excellent results are obtained despite the simplicity of the approach.

The rest of the paper is organized as follows: Section 2 presents the related works, Section 3 describes the proposed approach, while the experimental validation is given in Section 4.

2 Related work

2.1 Visual features and semantic attributes

Image representation -- i.e. the set of mechanisms that transform raw image pixel intensities into representations suitable for recognition tasks -- plays an important role in image classification. A couple of years ago, state-of-the-art image representations were mainly based on the pooling of hard/soft quantized local descriptors (e.g. SIFT [4]) through the bag-of-words [5] or Fisher vector [6] models. However, the work of Krizhevsky et al. [7] has opened a new area, and most state-of-the-art image descriptors nowadays rely on Deep Convolutional Neural Networks (CNN). We follow this trend in our experiments and use the so-called 'VGG-VeryDeep-19' (4096-dim) descriptors of [8].
Two recent papers have exhibited existing links between CNN features and semantic attributes. Ozeki et al. [9] showed that some CNN units can predict some semantic attributes of the 'Animals with Attributes' dataset fairly accurately. One interesting conclusion of their paper is that the visual semantic attributes can be predicted much more accurately than the non-visual ones by the nodes of the CNN. More recently, [10] showed the existence of Attribute Centric Nodes (ACNs) within CNNs trained to recognize objects, collectively encoding information pertinent to visual attributes, unevenly and sparsely distributed across all the layers of the network.

Although these recent findings could certainly improve the performance of our method, we do not use them in our experiments and stick to standard CNN features, with the intention of making our results directly comparable to the recent zero-shot learning papers (e.g. [11]).

2.2 Describing images by semantic and non-semantic attributes

Zero-shot learning methods rely on the use of intermediate representations, usually given as attributes. This term can, however, encompass different concepts. For Lampert et al. [12] it denotes the presence/absence of a given object property, assuming that attributes are nameable properties (color, presence or absence of a certain part, etc.). The advantage of so-defined attributes is that they can be used easily to define new classes expressed by a shared semantic vocabulary.

However, finding a discriminative and meaningful set of attributes can sometimes be difficult. [13,14] addressed this issue by proposing an interactive approach that discovers local attributes that are both discriminative and semantically meaningful, employing a recommender system that selects attributes through human interactions.
An alternative for identifying an attribute vocabulary without human labeling is to mine existing textual descriptions of images sampled from the Internet, as proposed by [15]. In the same line of thought, [16] presented a model for classifying unseen categories from their (already existing) textual description. [17] proposed an approach for zero-shot learning where the description of unseen categories comes in the form of typical text such as encyclopedia entries, without the need to explicitly define attributes.

Another drawback of human-generated attributes is that they can be redundant or not adapted to image classification. These issues have been addressed by automatically designing discriminative category-level attributes and using them for tasks of cross-category knowledge transfer, such as in the work of Yu et al. [18]. Finally, the attributes can also be structured into hierarchies [19,20,21] or obtained by text mining of textual descriptions [21,16,17].

Besides these papers, which all consider attributes as meaningful for humans, some authors denote by attributes any latent space providing an intermediate representation between image and hidden descriptions that can be used to transfer information to images from unseen classes. This is typically the case of [22], which jointly learns the attribute classifiers and the attribute vectors, with the intention of obtaining a better attribute-level representation, converting undetectable and redundant attributes into discriminative ones while retaining the useful semantic attributes. [23] has also introduced the concept of discriminative attributes, taking the form of random comparisons. The space of class names can also constitute an interesting embedding, such as in the works of [24,25,11,26], which represent images as mixtures of known class distributions.
Finally, it is worth pointing out that the aforementioned techniques restrict attributes to categorical labels and do not allow the representation of more general semantic relationships. To counter this limitation, [27] proposed to model relative attributes by learning a ranking function.

2.3 Zero shot learning from semantic and attribute embedding

As defined by [28], the problem of zero-shot learning can be seen as the problem of learning a classifier $f : x \to y$ that can predict novel values of $y$ not available in the training set. Most existing methods rely on the computation of a similarity or consistency function linking image descriptors and the semantic description of the classes. These links are given by learning two embeddings -- the first from the image representation to the semantic space and the second from the class space to the semantic space -- and defining a way to describe the constraints between the class space and the image space, the two being strongly interdependent.

DAP (Direct Attribute Prediction) and IAP (Indirect Attribute Prediction), first proposed by [3], use an intermediate layer of attributes as variables decoupling the images from the layer of labels. In DAP, independent attribute predictors are used to build the embedding of the image, the similarity between two semantic representations (one predicted from the image representation, one given by the class) being given as the probability of the class attribute knowing the image. In IAP, attributes form a connecting layer between two layers of labels, one for classes that are known at training time and one for classes that are not known. In this case, attributes are predicted from (known) class predictions. Lampert et al. [3] concluded that DAP gives much better performance than IAP.
However, as mentioned in the introduction, DAP has two problems: first, it does not model any correlation between attributes, each being predicted independently. Second, the mapping between classes and the attribute space does not weight the relative importance of the attributes nor the correlations between them. Inspired by [29], which learns a linear embedding between image features and annotations, [30] and [2] tried to overcome this limitation. The work of Akata et al. [30] introduced a function measuring the consistency between an image and a label embedding; the parameters of this function are learned to ensure that, given an image, the correct classes rank higher than the incorrect ones. This consistency function has the form of a bilinear relation $W$ associating the image embedding $\theta(x)$ and the label representation $\varphi(y)$ as $S(x, y; W) = \theta(x)^T W \varphi(y)$. Romera-Paredes et al. [2] proposed a simple closed-form solution for $W$, assuming a specific form of regularization is chosen. In comparison to our work, neither of the two papers ([30], [2]) uses a metric learning framework to control the statistical structure of the attribute embedding space.

The coefficients of the consistency constraint $W$ can also be predicted from a semantic textual description of the image. As an example, the goal of [17] is to predict a classifier for a new category based only on the learned classes and a textual description of this category. They cast this problem as a regression function, learnt from the textual feature domain to the visual classifier domain. [16] builds on these ideas, extending them by using a more expressive regression function based on a deep neural network. They take advantage of the architecture of CNNs and learn features at different layers, rather than just learning an embedding space for both modalities.
The proposed model provides means to automatically generate a list of pseudo-attributes for each visual category consisting of words from Wikipedia articles.

In contrast with the aforementioned methods, Hamm et al. [31] introduced the idea of ordinal similarity between classes (e.g. d('cat','dog') < d('cat','automobile')), claiming not only that this type of similarity may be sufficient for distinguishing cat and truck, but also that it seems a more natural representation, since the ordinal similarity is invariant under scaling and monotonic transformation of numerical values. It is also worth mentioning the work of Jayaraman et al. [32], which proposed to leverage the statistics about each attribute's error tendencies within a random forest approach, making it possible to train zero-shot models that explicitly account for the unreliability of attribute predictions.

Wu et al. [33] exploit natural language processing technologies to generate event descriptions. They measure their similarity to images by projecting them into a common high-dimensional space using text expansion. The similarity is expressed as the concatenation of $L_2$ distances of the different modalities considered. Strictly speaking, there is no metric learning involved but a concatenation of $L_2$ distances. Finally, Frome et al. [34] aim at leveraging semantic knowledge learned in the text domain, and transfer it to a model trained for visual object recognition by learning a metric aligning the two modalities. However, in contrast to our work, [34,33] do not explicitly control the quality of the embedding.

2.4 Zero-shot learning as transductive and semi-supervised learning

All the previously mentioned approaches consider that the embedding and the consistency function have to be learned from a set of training data of known classes, and are then used to infer predictions about the images of new classes not available during training.
However, a different problem can be addressed when images from the unknown classes are already available at training time and can hence be used to produce a better embedding. In this case, the problem can be cast as a transductive learning problem, i.e. the inference of the correct labels for the given unlabeled data only, or a semi-supervised learning problem, i.e. the inference of the best embedding using both labeled and unlabeled data.

Wang and Forsyth [35] proposed a MIL framework for jointly learning attributes and object classifiers from weakly annotated data. Wang and Mori [22] treated attributes of an object as latent variables and captured the correlations among attributes using an undirected graphical model, making it possible to infer object class labels using the information of both the test image and its (latent) attributes. In [1], the class information is incorporated into the attribute classifier to get an attribute-level representation that generalizes well to unseen examples of known classes as well as those of the unseen classes, assuming unlabeled images are available for learning. [36] considered the introduction of unseen classes as a novelty detection problem in a multi-class classification problem. If the image is of a known category, a standard classifier can be used. Otherwise, images are assigned to a class based on the likelihood of being an unseen category.

Fu et al. [37] rectified the projection domain shift between auxiliary and target datasets by introducing a multi-view semantic space alignment process to correlate different semantic views and the low-level feature view, by projecting them onto a latent embedding space learnt using multi-view Canonical Correlation Analysis. More recently, Li et al.
[38] learned the embedding from the input data in a semi-supervised large-margin learning framework, jointly considering multi-class classification over observed and unseen classes. Finally, [39] formulated a regularized sparse coding framework which used the target domain class label projections in the semantic space to regularize the learnt target domain projection, with the aim of overcoming the projection domain shift problem.

2.5 Zero-shot learning as a metric learning problem

Two contributions, [40] and [41], apply metric learning to zero-shot class description. Mensink et al. [40] learn a metric adapted to measure the similarity of images, in the context of k-nearest neighbor image classification, and in fact apply it to one-shot learning to show that it can generalize well to new classes. They do not use any attribute embedding space nor consider ZSL in their work. Kuznetsova et al. [41] learn a metric to infer pose and object class from a single image. They use the expression zero-shot to actually denote a (new) transfer learning problem when data are unevenly sampled in the joint pose and class space, and not a zero-shot classification problem where new classes are only known from attribute descriptions.

As far as we know, zero-shot learning has never been addressed explicitly as a metric learning problem in the attribute embedding space, which is one of the key contributions of this paper.

3 Method

3.1 Embedding consistency score

Most inference problems can be cast into an optimization framework of the form:

$$Y^* = \arg\min_{Y \in \mathcal{Y}} S(X, Y)$$

where $X \in \mathcal{X}$ is a given sample from some modality, e.g. an image or some features extracted from it, $Y^*$ is the most consistent association from another modality $\mathcal{Y}$, e.g. a vector of attribute indicators or a textual description, and $S$ is a measure able to quantify the joint consistency of two observations from the two modalities.
In this formulation, the smaller the score, the more consistent the samples. One can think of this score as a negative likelihood.

When trying to design such a consistency score, one of the difficult aspects is to relate the two modalities meaningfully. One usual approach consists in embedding them into a common representational space $\mathcal{A}$ (see footnote 1) where their heterogeneous nature can be compared. This space can be abstract, i.e. its structure can be obtained from some optimization process, or semantically interpretable, e.g. a fixed list of attributes or properties each indexed by a tag referring to some shared knowledge or ontology, leading to a $p$-dimensional vector space.

Let $\hat{A}_X(X)$ and $\hat{A}_Y(Y)$ be the two embeddings for each modality $X$ and $Y$, taking values in $\mathcal{X}$ and $\mathcal{Y}$ and producing outputs in $\mathcal{A}$. In this work, it is proposed to define the consistency score as a metric on the common embedding space $\mathcal{A}$. More precisely, we use the Mahalanobis-like description of a metric parametrized by a linear mapping $W_A$:

$$d_A(A_1, A_2) = \left\| (A_1 - A_2)^T W_A \right\|_2,$$

assuming that the embedding space is a vector space, and define the consistency score as:

$$S(X, Y) = d_A(\hat{A}_X(X), \hat{A}_Y(Y)) = \left\| (\hat{A}_X(X) - \hat{A}_Y(Y))^T W_A \right\|_2.$$

The Mahalanobis mapping $W_A$ can itself be interpreted as a linear embedding in an abstract $m$-dimensional vector space where the natural metric is the Euclidean distance, and acts as a multivariate whitening filter. It is expected that this property will empirically improve the reliability of the consistency score (1) by choosing the appropriate linear mapping.

We are now left with two questions: how to define the embedding? How to build the Mahalanobis mapping? We see in the following that these two questions can be solved jointly by optimizing a unique criterion.
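To make the Mahalanobis-like distance of Section 3.1 concrete, the following is a minimal sketch in plain Python. The function names (`project`, `metric_distance`) and the toy values are illustrative assumptions, not taken from the paper. It checks numerically that $d_A$ equals the Euclidean distance between the two points after projection by $W_A$.

```python
import math

def project(vec, W):
    """Row vector times matrix: vec (length p) times W (p x m) gives length m."""
    return [sum(v * row[j] for v, row in zip(vec, W)) for j in range(len(W[0]))]

def metric_distance(a1, a2, W_A):
    """d_A(a1, a2) = || (a1 - a2)^T W_A ||_2."""
    diff = [u - v for u, v in zip(a1, a2)]
    return math.sqrt(sum(c * c for c in project(diff, W_A)))

# Toy values (hypothetical): p = 3 attributes mapped to m = 2 dimensions.
# The zero third row means the third attribute is ignored by the metric.
W_A = [[1.0, 0.0],
       [0.0, 2.0],
       [0.0, 0.0]]
a1 = [1.0, 1.0, 5.0]
a2 = [0.0, 0.0, 0.0]

d = metric_distance(a1, a2, W_A)
# Euclidean distance between the projected points gives the same value.
d_proj = math.dist(project(a1, W_A), project(a2, W_A))
```

In this toy setting the mapping both rescales the second attribute and discards the third, which illustrates how a learned $W_A$ can select and transform the attribute dimensions.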
3.2 Embedding in the attribute space

The main problem addressed in this work is to be able to discriminate a series of new hypotheses that can only be specified using a single modality, the $Y$ one with our notations. In many zero-shot learning studies, this modality is often expressed as the existence or presence of several attributes or properties from a fixed given set. The simplest embedding space one can think of is precisely this attribute space, implying that the $Y$ modality embedding is the identity: $\hat{A}_Y(Y) = Y$ with $\mathcal{A} = \mathcal{Y}$. In this case, the consistency score simplifies as:

$$S(X, Y) = \left\| (\hat{A}_X(X) - Y)^T W_A \right\|_2 \tag{1}$$

The next step is to embed the $X$ modality into $\mathcal{Y}$ directly. We suggest using a simple linear embedding with matrix $W_X$ and bias $b_X$, assuming that $X$ is in a $d$-dimensional vector space. This can be expressed as:

$$\hat{A}_X(X) = \max(0, X^T W_X + b_X). \tag{2}$$

We use a ReLU-type output normalization to keep the significance of the attribute space as property detectors, negative numbers being difficult to interpret in this context.

In the simple formulation proposed here, we do not question the way new hypotheses are specified in the target modality, nor use any external source of information (e.g. word vectors) to map the attributes into a more semantically organized space such as in [36]. We leave the problem of correcting the original attribute description to the construction of the metric in the common embedding space.

Footnote 1: We use the letters $\mathcal{A}$ and $A$ in our notations since we will focus on the space of attribute descriptions as the embedding space.

3.3 Metric learning

The design problem is now reduced to the estimation of three mathematical objects: the linear embedding to the attribute space $W_X$ of dimensions $d \times p$, a bias $b_X$ of dimension $p$, and the Mahalanobis linear mapping $W_A$ of dimensions $p \times m$, $m$ being a free parameter to choose.
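The three objects listed above, with their shapes, can be sketched as follows. This is an illustrative plain-Python sketch of equations (1) and (2) under toy dimensions ($d = 2$, $p = 2$, $m = 2$). The function names and numerical values are assumptions chosen for the example, not the authors' implementation.

```python
import math

def embed_image(x, W_X, b_X):
    """Eq. (2): max(0, x^T W_X + b_X); x has length d, W_X is d x p, b_X has length p."""
    return [max(0.0, sum(x[i] * W_X[i][j] for i in range(len(x))) + b_X[j])
            for j in range(len(b_X))]

def consistency_score(x, y, W_X, b_X, W_A):
    """Eq. (1): || (A_X(x) - y)^T W_A ||_2; W_A is p x m."""
    diff = [a - t for a, t in zip(embed_image(x, W_X, b_X), y)]
    proj = [sum(diff[i] * W_A[i][j] for i in range(len(diff)))
            for j in range(len(W_A[0]))]
    return math.sqrt(sum(c * c for c in proj))

# Hypothetical toy parameters.
W_X = [[1.0, -1.0],
       [0.0, 1.0]]
b_X = [0.0, -0.5]
W_A = [[1.0, 0.0],
       [0.0, 1.0]]   # identity mapping: the score reduces to a Euclidean distance

x = [1.0, 0.0]
a_hat = embed_image(x, W_X, b_X)                              # the ReLU clips the negative output
s_good = consistency_score(x, [1.0, 0.0], W_X, b_X, W_A)      # matching description
s_bad = consistency_score(x, [0.0, 1.0], W_X, b_X, W_A)       # mismatching description
```

A smaller score means a more consistent image/attribute pair, so `s_good` is expected to be lower than `s_bad`.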
The proposed approach consists in building those objects empirically from a set of examples by applying metric learning techniques. The training set is supposed to contain pairs of data $(X_i, Y_i)$ sampling the joint distribution of the two modalities: $X_i$ is a vector representing an image or some features extracted from it, while $Y_i$ denotes an attribute-based description. Notice that we do not introduce any class information in this formulation: the link between class and attribute representations is assumed to be specified by the use case considered.

The rationale behind the use of metric learning is to transform the original representational space so that the resulting metric takes into account the statistical structure of the data using pairwise constraints. One usual way to do so is to express the problem as a binary classification on pairs of samples, where the role of the metric is to separate similar and dissimilar samples by thresholding (see [42] for a survey on metric learning). It is easy to build pairs of similar and dissimilar examples from the annotated examples by sampling randomly (uniformly or according to some law) the two modalities $X$ and $Y$ and assigning an indicator $Z \in \{-1, 1\}$ stating whether $Y_i$ is a good attribute description of $X_i$ ($Z_i = 1$) or not ($Z_i = -1$). Metric learning approaches try to capture a data-dependent way to encode similarity. In general, the data manifold has a smaller intrinsic dimension than the feature space, and is not isotropically distributed.

We are now given a dataset of triplets $\{(X_i, Y_i, Z_i)\}_{i=1}^{N}$, the $Z$ indicator stating that the two modalities are similar, i.e. consistent, or not (see footnote 2). The next step is to describe an empirical criterion that will be able to learn $W_X$, $b_X$ and $W_A$. The idea is to decompose the problem into three objectives: metric learning, good embedding and regularization.
The metric learning part follows a now standard hinge loss approach [43], taking the following form for each sample:

$$l_H(X_i, Y_i, Z_i, \tau) = \max\left(0,\; 1 - Z_i\left(\tau - S(X_i, Y_i)^2\right)\right). \tag{3}$$

The extra parameter $\tau$ is free and can also be learned from data. Its role is to define the threshold separating similar from dissimilar examples, and it should depend on the data distribution.

Footnote 2: To make notations simpler, we do not rename or re-index from the original dataset the pairs of data for the similar and dissimilar cases.

The embedding criterion is a simple quadratic loss, but only applied to similar data:

$$l_A(X_i, Y_i, Z_i) = \max(0, Z_i) \cdot \left\| Y_i - \hat{A}_X(X_i) \right\|_2^2. \tag{4}$$

Its role is to ensure that the attribute prediction is of good quality, so that the difference $Y - \hat{A}_X(X)$ reflects dissimilarity due to modality inconsistencies rather than bad representational issues.

The size of the learning problem ($d \times p + p + p \times m$) can be large and requires regularization to prevent overfitting. We use a quadratic penalization:

$$R(W_A, W_X, b_X) = \|W_X\|_F^2 + \|b_X\|_2^2 + \|W_A\|_F^2 \tag{5}$$

where $\|\cdot\|_F$ is the Frobenius norm.

The overall optimization criterion can now be written as the sum of the previously defined terms:

$$L(W_A, W_X, b_X, \tau) = \sum_i l_H(X_i, Y_i, Z_i, \tau) + \lambda \sum_i l_A(X_i, Y_i, Z_i) + \mu R(W_A, W_X, b_X) \tag{6}$$

where $\lambda$ and $\mu$ are hyper-parameters that are chosen using cross-validation. Note that the criterion (6) can also be interpreted as a multi-objective learning approach since it mixes two dependent optimization issues: attribute embedding and metric on the embedding space.

To solve the optimization problem, we do not follow the approach proposed in [43] since we also learn the attribute embedding part $W_X$ jointly with the metric embedding $W_A$. We use instead a global stochastic gradient descent (see Section 4 for details).
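The criterion (6) can be evaluated with a few lines of code. The sketch below is a plain-Python illustration under simplifying assumptions: the score $S(X_i, Y_i)$ and the embedding $\hat{A}_X(X_i)$ are taken as precomputed inputs, and the function names and toy values are hypothetical. It only evaluates the criterion and does not perform the stochastic gradient descent used in the paper.

```python
def hinge_loss(score, z, tau):
    """Eq. (3): max(0, 1 - z * (tau - score**2)), with z in {-1, +1}."""
    return max(0.0, 1.0 - z * (tau - score ** 2))

def attribute_loss(y, a_hat, z):
    """Eq. (4): squared error, counted only for consistent pairs (z = +1)."""
    return max(0, z) * sum((t - a) ** 2 for t, a in zip(y, a_hat))

def squared_norm(M):
    """Squared Frobenius norm of a matrix given as a list of rows."""
    return sum(v * v for row in M for v in row)

def criterion(samples, tau, lam, mu, W_A, W_X, b_X):
    """Eq. (6). samples is a list of (score, a_hat, y, z) tuples."""
    hinge = sum(hinge_loss(s, z, tau) for s, _, _, z in samples)
    attr = sum(attribute_loss(y, a_hat, z) for _, a_hat, y, z in samples)
    reg = squared_norm(W_X) + sum(b * b for b in b_X) + squared_norm(W_A)   # Eq. (5)
    return hinge + lam * attr + mu * reg

# Hypothetical toy data: one consistent pair and one inconsistent pair.
samples = [
    (0.5, [0.5, 0.0], [1.0, 0.0], 1),    # low score, z = +1: no hinge penalty
    (1.0, [0.5, 0.0], [0.0, 1.0], -1),   # score too low for z = -1: penalized
]
total = criterion(samples, tau=2.0, lam=0.5, mu=0.1,
                  W_A=[[1.0]], W_X=[[1.0]], b_X=[0.0])
```

Reading equation (3) directly, the hinge term vanishes for a consistent pair when $S^2 \le \tau - 1$ and for an inconsistent pair when $S^2 \ge \tau + 1$, so $\tau$ sits in the middle of a margin of width 2 on the squared score.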
3.4 Application to image recognition and retrieval

The consistency score (1) is a versatile tool that can be used for several image interpretation problems. Section 4 will evaluate the potential of our approach on three of them.

Zero-shot learning. The problem can be defined as finding the most consistent attribute description given the image to classify and a set of exclusive attribute class descriptors $\{Y^*_k\}_{k=1}^{C}$, where $k$ is the index of a class:

$$k^* = \arg\min_{k \in \{1 \ldots C\}} S(X, Y^*_k) \tag{7}$$

In this formulation, classifying is made equivalent to identifying, among the $C$ classes, the best attribute description. A variant of this scheme can exploit a voting process to identify the best attribute among a set of $k$ candidates, inspired by a $k$-nearest neighbor approach.

Few-shot learning. Learning a metric in the embedding space can conveniently be used to specialize the consistency score to new data when they are available. We study a simple fine-tuning approach using stochastic gradient descent on criterion (6) applied to novel triplets $(X, Y, Z)$ from unseen classes only, starting with the model learned with seen classes. This makes "few-shot learning" possible. The decision framework is identical to the ZSL one.

Zero-shot retrieval. The score (1) can also be used to retrieve the data from a given database that are consistent, at a level $\lambda$, with a given query defined in the $Y$ (or $\mathcal{A}$) modality:

$$\mathrm{Retrieve}(A, \lambda) = \{ X \in \mathcal{X} \;/\; S(X, A) < \lambda \}$$

The performance is usually characterized by precision-recall curves.

4 Experiments

This section presents the experimental validation of the proposed method. The section first introduces the 4 datasets evaluated as well as the details of the experimental settings. The method is empirically evaluated on three different tasks as described in Section 3: Zero-Shot Learning (ZSL), Few-Shot Learning (FSL) and Zero-Shot Retrieval (ZSR).
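Once consistency scores are available, the ZSL decision rule (7) and the retrieval set of Section 3.4 reduce to an arg-min and a threshold. The following plain-Python sketch assumes the scores have already been computed. The function names are hypothetical, and the retrieval threshold is called `level` to avoid confusion with the weight $\lambda$ of criterion (6).

```python
def classify(scores_per_class):
    """Eq. (7): index of the class description with the smallest score."""
    return min(range(len(scores_per_class)), key=scores_per_class.__getitem__)

def retrieve(scores_per_image, level):
    """Indices of database images whose score with the query is below `level`."""
    return [i for i, s in enumerate(scores_per_image) if s < level]

# Hypothetical scores S(X, Y*_k) of one test image against C = 3 class descriptions.
predicted = classify([0.9, 0.2, 0.5])

# Hypothetical scores S(X_i, A) of 3 database images against one attribute query.
hits = retrieve([0.1, 0.8, 0.3], level=0.5)
```

Sweeping `level` over a range of values would trace the precision-recall curve mentioned for the retrieval task.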
The ZSL experiments aim at evaluating the capability of the proposed model to predict unseen classes. This section also evaluates the contribution of the different components of the model to the performance, and makes comparisons with state-of-the-art results. In the FSL experiments, we show how the ZSL model can serve as a good prior for learning a classifier when only a few samples of the unknown classes are available. Finally, we evaluate our model on a ZSR task, illustrating the capability of the algorithm to retrieve images using attribute-based queries.

4.1 Datasets and Experimental Settings

The experimental evaluation is done on 4 public datasets widely used in the community, making it possible to compare our results with those recently proposed in the literature: the aPascal&aYahoo (aP&Y) [23], Animals with Attributes (AwA) [3], CUB-200-2011 (CUB) [44] and SUN attribute (SUN) [45] datasets (see Table 1 for a few statistics on their content). These datasets exhibit a large number of categories (indoor and outdoor scenes, objects, person, animals, etc.) and attributes (shapes, materials, color, parts, etc.).

These datasets have been introduced for training and evaluating ZSL methods and contain images annotated with semantic attributes. More specifically, each image of the aP&Y, CUB and SUN datasets has its own attribute description, meaning that two images of the same class can have different attributes. This is not the case for AwA, where all the images of a given class share the same attributes.
As a consequence, in the ZSL experiments on aP&Y, CUB and SUN, the attribute representation of unknown classes, required for class prediction, is taken as their mean attribute frequencies.

Table 1: Dataset statistics

| Dataset | #Training classes | #Test classes | #Instances | #Attributes |
|---|---|---|---|---|
| aPascal & aYahoo [23] | 20 | 12 | 15,339 | 64 |
| Animals with Attributes [3] | 40 | 10 | 30,475 | 85 |
| CUB 200-2011 [44] | 150 | 50 | 11,788 | 312 |
| SUN Attributes [45] | 707 | 10 | 14,340 | 102 |

In order to make comparisons with previous works possible, we use the same training/testing splits as [23] (aP&Y), [3] (AwA), [21] (CUB) and [32] (SUN).

Regarding the representation of images, we used both the VGG-VeryDeep-19 [8] and AlexNet [7] CNN models, both pre-trained on ImageNet, without fine-tuning to the attribute datasets, and use the penultimate fully connected layer (e.g., the FC7 4096-d layer for VGG-VeryDeep-19) for representing the images. Very deep CNN models act as generic feature extractors and have been demonstrated to work well for object recognition. They have also been used in many recent ZSL experiments, and we use exactly the same descriptors as [21,11].

One of the key characteristics of our model is that it requires a set of image/attributes pairs for training. Positive (resp. negative) pairs are obtained by taking the training images associated with their own provided attribute vector (resp. by randomly assigning attributes not present in the image) and are assigned to the class label '1' (resp. '-1'). In order to bound the size of the training set, we generate only 2 pairs per training image, one positive and one negative.

Our model has three hyper-parameters: the weight $\lambda$, the dimensionality of the space in which the distance is computed ($m$) and the regularization parameter $\mu$.
These hyper-parameters are estimated through a grid-search validation procedure, by randomly keeping 20% of the training classes for cross-validating the hyper-parameters and choosing the parameters giving the best accuracy on the validation classes so obtained. The parameters are searched in the following ranges: $m \in [20\%, 120\%]$ of the initial attribute dimension, $\lambda \in [0.05, 1.0]$ and $\mu \in [0.01, 10.0]$. $\tau$ is a parameter learned during training. Once the hyper-parameters are tuned, we take the whole training set to learn the final model and evaluate it on the test set (unseen classes in the case of ZSL).

The optimization of $W_A$ and $W_X$ is done with stochastic gradient descent, the parameters being initialized randomly with a normal distribution. The mini-batch size is 100. As the objective function is non-convex, different initializations can give different parameters. We addressed this issue by doing 5 estimations of the parameters starting from 5 different initializations and selecting the best one on a validation set (we keep a part of the training set for doing this and fine-tune the parameters on the whole training set once the best initialization is known). We use the optimizer provided in the TensorFlow framework [46]. Using the GPU mode with an Nvidia 750 GTX GPU, learning a model ($W_A$ and $W_X$) takes 5-10 minutes for a given set of hyper-parameters. Computing image/attribute consistency takes around 4 ms per pair.

4.2 Zero-Shot Learning Experiments

The experiments follow the standard ZSL protocol: during training, a set of images from known classes is available for learning the model parameters. At test time, images from unseen classes are processed and the goal is to find the class described by an attribute representation most consistent with the images.

Table 2 gives the performance of our approach on the 4 datasets considered, expressed as multi-class accuracy, and makes comparisons with state-of-the-art approaches. The performances of previous methods are taken from [11,21,47]. Performance is reported with 2 different features, i.e. VGG-VeryDeep-19 [8] and AlexNet [7], for fair comparisons. As the images of AwA are no longer public, it is only possible to use the features available for download. On the four datasets our model achieves above state-of-the-art performance (note: [47] was published after our submission), with a noticeable improvement of more than 8% on aP&Y.

Table 2: Zero-shot classification accuracy (mean ± std). We report results both with VGG-VeryDeep-19 [8] and AlexNet [7] features for fair comparisons, whenever possible (AwA images are no longer public, preventing the computation of their AlexNet representations).

| Feat. | Method | aP&Y | AwA | CUB | SUN |
|---|---|---|---|---|---|
| AlexNet [7] | Akata et al. [21] | - | 61.9 | 40.3 | - |
| AlexNet [7] | Ours | 46.14 ± 0.91 | - | 41.98 ± 0.67 | 75.48 ± 0.43 |
| VGG-VeryDeep [8] | Lampert et al. [12] | 38.16 | 57.23 | - | 72.00 |
| VGG-VeryDeep [8] | Romera-Paredes et al. [2] | 24.22 ± 2.89 | 75.32 ± 2.28 | - | 82.10 ± 0.32 |
| VGG-VeryDeep [8] | Zhang et al. [11] | 46.23 ± 0.53 | 76.33 ± 0.83 | 30.41 ± 0.20 | 82.50 ± 1.32 |
| VGG-VeryDeep [8] | Zhang et al. [47] | 50.35 ± 2.97 | 80.46 ± 0.53 | 42.11 ± 0.55 | 83.83 ± 0.29 |
| VGG-VeryDeep [8] | Ours w/o ML | 47.25 ± 0.48 | 73.81 ± 0.13 | 33.87 ± 0.98 | 74.91 ± 0.12 |
| VGG-VeryDeep [8] | Ours w/o constraint | 48.47 ± 1.24 | 75.69 ± 0.56 | 38.35 ± 0.49 | 79.21 ± 0.87 |
| VGG-VeryDeep [8] | Ours | 53.15 ± 0.88 | 77.32 ± 1.03 | 43.29 ± 0.38 | 84.41 ± 0.71 |

As explained in the previous section, our model is based on a multi-objective function trying to maximize metric discriminating capacity as well as attribute prediction. It is interesting to observe how the performance degrades when one of the two terms is missing. In Table 2, the 'Ours w/o ML' setting makes use of the Euclidean distance, i.e. $W_A = I$. The 'Ours w/o constraint' setting is when the attribute prediction term (Eq. 4) is missing in the criterion. This term gives a 4% improvement, on average.
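The decision rule of the protocol above, together with the role of $W_A$ in the 'Ours w/o ML' ablation, can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the implementation used in the experiments: the distance is assumed to take the form $\|(\hat{a} - y)\,W_A\|_2$, where $\hat{a}$ is the attribute embedding predicted for the image, so that $W_A = I$ falls back to the Euclidean distance. The function names, the toy attribute vectors and the toy matrix are all hypothetical.

```python
import math


def project(vec, W):
    """Right-multiply a row vector by a matrix W given as a list of rows."""
    return [sum(v * W[i][j] for i, v in enumerate(vec)) for j in range(len(W[0]))]


def metric_distance(a_hat, y, W_A=None):
    """Assumed distance ||(a_hat - y) W_A||_2; W_A=None stands for W_A = I."""
    diff = [p - q for p, q in zip(a_hat, y)]
    if W_A is not None:
        diff = project(diff, W_A)
    return math.sqrt(sum(d * d for d in diff))


def predict_class(a_hat, class_attributes, W_A=None):
    """Return the unseen class whose attribute vector is closest to a_hat."""
    return min(
        class_attributes,
        key=lambda name: metric_distance(a_hat, class_attributes[name], W_A),
    )


# Toy data (hypothetical): 3 attributes, 2 unseen classes.
class_attributes = {"zebra": [1.0, 0.0, 1.0], "donkey": [1.0, 0.0, 0.0]}
a_hat = [0.9, 0.6, 0.6]  # attribute embedding predicted for one test image

# 'Ours w/o ML' setting: plain Euclidean distance in attribute space.
euclid_pred = predict_class(a_hat, class_attributes)

# Toy 3x2 metric matrix (hypothetical): maps attributes to a 2-d metric space
# and ignores the second attribute entirely.
W_A = [[1.0, 0.0], [0.0, 0.0], [0.0, 1.0]]
metric_pred = predict_class(a_hat, class_attributes, W_A)
```

In this toy case both settings select the same class; the point is only that the learned matrix changes which attribute differences count towards the distance, and that the embedding dimension (here 2) need not equal the number of attributes.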
Figure 1a shows the accuracy as a function of the embedding dimension. This projection maps the original data into a space in which the Euclidean distance is good for the task considered. It can be seen as a way to exploit and select the correlation structure between attributes. We observed experimentally that the best performance is generally obtained when the dimension of this space is less than 40% smaller than the size of the initial attribute space.

[Figure 1 panels: (a) Classification accuracy; (b) Image retrieval]

Fig. 1: (a) ZSL accuracy as a function of the dimensionality of the metric space; best results are obtained when the dimension of the metric embedding is less than 40% of the image space dimension. It also shows the improvement due to the attribute prediction term in the objective function. (b) Few-shot learning: classification accuracy (%) as a function of the number of training examples per unseen class.

4.3 Few-Shot Learning

Few-shot learning corresponds to the situation where 1 (or more) annotated example(s) from unseen classes are available at test time. In this case our model is first trained using only the seen classes (as with ZSL); the examples from the unseen classes are then introduced one by one, and the model parameters are fine-tuned by doing a few more learning iterations using these new data only.

Figure 1b shows the evolution of the accuracy as a function of the number of additional images from the unseen classes. Please note that for the SUN dataset we have used a maximum of 10 additional examples, as unseen classes contain only 20 images. We observed that knowing even a very small number of annotated examples significantly improves the performance. This is a very encouraging behavior for large-scale applications where annotations for a large number of categories are hard and expensive to get.
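The fine-tuning loop described above can be pictured with a deliberately simplified stand-in. The following sketch is an illustration under loud assumptions rather than the procedure used in the experiments: it replaces the full objective by a squared attribute-prediction error on a single linear map `W`, and the learning rate, step count, function names and toy data are all hypothetical. What it keeps from the text is the key point that the extra iterations use only the newly annotated examples from unseen classes.

```python
def predict(W, x):
    """Linear attribute prediction: one output per row of W."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]


def squared_error(W, pairs):
    """Sum of squared attribute-prediction errors over (features, attributes) pairs."""
    return sum(
        sum((p - t) ** 2 for p, t in zip(predict(W, x), y)) for x, y in pairs
    )


def fine_tune(W, new_pairs, steps=20, lr=0.05):
    """Run a few SGD steps using only the newly annotated pairs (stand-in loss)."""
    W = [row[:] for row in W]  # copy, so the pre-trained weights stay untouched
    for _ in range(steps):
        for x, y in new_pairs:
            residual = [p - t for p, t in zip(predict(W, x), y)]
            for i, r in enumerate(residual):
                for j, v in enumerate(x):
                    W[i][j] -= lr * 2.0 * r * v  # gradient of the squared error
    return W


# Hypothetical weights obtained from the seen classes (2 attributes, 3 features).
W_seen = [[0.5, 0.0, 0.0], [0.0, 0.5, 0.0]]
# One annotated example from an unseen class: (image features, attribute vector).
new_pairs = [([1.0, 1.0, 0.0], [1.0, 0.0])]

W_few = fine_tune(W_seen, new_pairs)
error_before = squared_error(W_seen, new_pairs)
error_after = squared_error(W_few, new_pairs)
```

Starting from the weights learned on seen classes, rather than from a random initialization, is what lets the zero-shot model act as a prior when only a handful of examples is available.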
4.4 Zero-Shot Retrieval

The task of zero-shot image retrieval consists in searching an image database with attribute-based queries. To do this, we first train our model as for standard ZSL. We then take the attribute descriptions of unseen classes as queries, and rank the images from the unseen classes based on their similarity with the query. Table 3 reports the mean average precision on the 4 datasets. Our model outperforms the state-of-the-art SSE method [11] by more than 10% on average.

Table 3: Zero-Shot Retrieval task: Mean Average Precision (%) on the 4 datasets

| Method | aP&Y | AwA | CUB | SUN | Av. |
|---|---|---|---|---|---|
| Zhang et al. [11] | 15.43 | 46.25 | 4.69 | 58.94 | 31.33 |
| Ours (VGG features) | 36.92 | 68.1 | 25.33 | 52.68 | 45.76 |

Figure 2 shows the average precision for each class of the 4 datasets. In the aP&Y dataset, the 'donkey', 'centaur' and 'zebra' classes have a very low average precision. This can be explained by the strong visual similarity between these classes, which only differ by a few attributes.

[Figure 2 panels: (a) aP&Y; (b) AwA; (c) CUB-200-2011; (d) SUN]

Fig. 2: Precision-recall curve for each unseen class, by dataset. For the CUB dataset we randomly choose 10 classes (best viewed on a computer screen).

5 Conclusion

This paper has presented a novel approach for zero-shot classification exploiting multi-objective metric learning techniques. The proposed formulation has the nice property of not requiring any ground truth at the category level for learning a consistency score between the image and the semantic modalities, but only requiring weak consistency information. The resulting score can be used with versatility on various image interpretation tasks, and shows close to or above state-of-the-art performance on four standard benchmarks. The formal simplicity of the approach allows several avenues for future improvement.
A first one would be to provide a better embedding on the semantic side of the consistency score $\hat{A}_Y(Y)$. A second one would be to explore more complex functions than the linear mappings tested in this work, and introduce, for instance, deep network architectures.

References

1. Mahajan, D.K., Sellamanickam, S., Nair, V.: A joint learning framework for attribute models and object descriptions. In: IEEE International Conference on Computer Vision (ICCV). (2011)
2. Romera-Paredes, B., Torr, P.H.: An embarrassingly simple approach to zero-shot learning. In: Proceedings of the International Conference on Machine learning. (2015) 2152–2161
3. Lampert, C.H., Nickisch, H., Harmeling, S.: Learning to detect unseen object classes by between-class attribute transfer. In: IEEE International Conference on Computer Vision and Pattern Recognition (CVPR). (2009)
4. Lowe, D.G.: Distinctive image features from scale-invariant keypoints. International Journal of Computer Vision (IJCV) 60(2) (2004) 91–110
5. Csurka, G., Dance, C., Fan, L., Willamowski, J., Bray, C.: Visual categorization with bags of keypoints. In: Workshop on statistical learning in computer vision, ECCV. (2004) 1–2
6. Sánchez, J., Perronnin, F., Mensink, T., Verbeek, J.: Image classification with the Fisher vector: Theory and practice. International Journal of Computer Vision (IJCV) 105(3) (2013) 222–245
7. Krizhevsky, A., Sutskever, I., Hinton, G.E.: ImageNet Classification with Deep Convolutional Neural Networks. In: Conference on Neural Information Processing Systems (NIPS). (2012) 1106–1114
8. Simonyan, K., Zisserman, A.: Very Deep Convolutional Networks for Large-Scale Image Recognition. In: ICLR. (2014)
9. Ozeki, M., Okatani, T.: Understanding Convolutional Neural Networks in Terms of Category-Level Attributes. In: Asian Conference on Computer Vision (ACCV). (2014)
10. Escorcia, V., Niebles, J.C., Ghanem, B.: On the Relationship between Visual Attributes and Convolutional Networks. In: IEEE International Conference on Computer Vision and Pattern Recognition (CVPR). (2015)
11. Zhang, Z., Saligrama, V.: Zero-Shot Learning via Semantic Similarity Embedding. In: IEEE International Conference on Computer Vision (ICCV). (2015)
12. Lampert, C.H., Nickisch, H., Harmeling, S.: Attribute-Based Classification for Zero-Shot Visual Object Categorization. IEEE Trans. on Pattern Analysis and Machine Intelligence 36(3) (2014) 453–465
13. Parikh, D., Grauman, K.: Interactively building a discriminative vocabulary of nameable attributes. In: IEEE International Conference on Computer Vision and Pattern Recognition (CVPR). (2011)
14. Duan, K., Parikh, D., Crandall, D., Grauman, K.: Discovering localized attributes for fine-grained recognition. In: IEEE International Conference on Computer Vision and Pattern Recognition (CVPR). (2012)
15. Berg, T.L., Berg, A.C., Shih, J.: Automatic attribute discovery and characterization from noisy web data. In: European Conference on Computer Vision (ECCV). (2010)
16. Ba, L.J., Swersky, K., Fidler, S., Salakhutdinov, R.: Predicting deep zero-shot convolutional neural networks using textual descriptions. In: 2015 IEEE International Conference on Computer Vision, ICCV 2015, Santiago, Chile, December 7-13, 2015. (2015) 4247–4255
17. Elhoseiny, M., Saleh, B., Elgammal, A.: Write a Classifier: Zero-Shot Learning Using Purely Textual Descriptions. In: IEEE International Conference on Computer Vision (ICCV). (2013)
18. Yu, F.X., Cao, L., Feris, R.S., Smith, J.R., Chang, S.F.F.: Designing category-level attributes for discriminative visual recognition. In: IEEE International Conference on Computer Vision (ICCV), IEEE (2013)
19. Verma, N., Mahajan, D., Sellamanickam, S., Nair, V.: Learning hierarchical similarity metrics. In: IEEE International Conference on Computer Vision and Pattern Recognition (CVPR). (2012)
20. Rohrbach, M., Stark, M., Schiele, B.: Evaluating knowledge transfer and zero-shot learning in a large-scale setting. In: IEEE International Conference on Computer Vision and Pattern Recognition (CVPR). (2011)
21. Akata, Z., Reed, S., Walter, D., Lee, H., Schiele, B.: Evaluation of Output Embeddings for Fine-Grained Image Classification. In: IEEE International Conference on Computer Vision and Pattern Recognition (CVPR). (2015)
22. Wang, Y., Mori, G.: A Discriminative Latent Model of Object Classes and Attributes. In: European Conference on Computer Vision (ECCV). (2010)
23. Farhadi, A., Endres, I., Hoiem, D., Forsyth, D.: Describing objects by their attributes. In: IEEE International Conference on Computer Vision and Pattern Recognition (CVPR). (2009)
24. Mensink, T., Gavves, E., Snoek, C.G.M.: COSTA: Co-Occurrence Statistics for Zero-Shot Classification. In: IEEE International Conference on Computer Vision and Pattern Recognition (CVPR). (2014)
25. Norouzi, M., Mikolov, T., Bengio, S., Singer, Y., Shlens, J., Frome, A., Corrado, G.S., Dean, J.: Zero-Shot Learning by Convex Combination of Semantic Embeddings. In: International Conference on Learning Representations (ICLR). (December 2013)
26. Fu, Z., Xiang, T.A., Kodirov, E., Gong, S.: Zero-shot object recognition by semantic manifold distance. In: IEEE International Conference on Computer Vision and Pattern Recognition (CVPR). (2015)
27. Parikh, D., Grauman, K.: Relative attributes. In: IEEE International Conference on Computer Vision (ICCV). (2011)
28. Palatucci, M., Pomerleau, D., Hinton, G.E., Mitchell, T.M.: Zero-shot learning with semantic output codes. In: Conference on Neural Information Processing Systems (NIPS). (2009)
29. Weston, J., Bengio, S., Usunier, N.: WSABIE: scaling up to large vocabulary image annotation. In: IJCAI. (2011) 2764–2770
30. Akata, Z., Perronnin, F., Harchaoui, Z., Schmid, C.: Label-Embedding for Image Classification. IEEE Trans. on Pattern Analysis and Machine Intelligence (2015)
31. Hamm, J., Belkin, M.: Probabilistic Zero-shot Classification with Semantic Rankings. arXiv.org (February 2015)
32. Jayaraman, D., Grauman, K.: Zero-shot recognition with unreliable attributes. In: Conference on Neural Information Processing Systems (NIPS). (2014)
33. Wu, S., Bondugula, S., Luisier, F., Zhuang, X., Natarajan, P.: Zero-Shot Event Detection Using Multi-modal Fusion of Weakly Supervised Concepts. In: IEEE International Conference on Computer Vision and Pattern Recognition (CVPR). (2014)
34. Frome, A., Corrado, G.S., Shlens, J., Bengio, S., Dean, J., Ranzato, M., Mikolov, T.: DeViSE: A Deep Visual-Semantic Embedding Model. In: Conference on Neural Information Processing Systems (NIPS). (2013)
35. Wang, G., Forsyth, D.: Joint learning of visual attributes, object classes and visual saliency. In: IEEE International Conference on Computer Vision (ICCV). (2009)
36. Socher, R., Ganjoo, M., Manning, C.D., Ng, A.: Zero-Shot Learning Through Cross-Modal Transfer. In: Conference on Neural Information Processing Systems (NIPS). (2013)
37. Fu, Y., Hospedales, T.M., Xiang, T., Fu, Z., Gong, S.: Transductive multi-view embedding for zero-shot recognition and annotation. In: European Conference on Computer Vision (ECCV). (2014)
38. Li, X., Guo, Y., Schuurmans, D.: Semi-Supervised Zero-Shot Classification With Label Representation Learning. In: IEEE International Conference on Computer Vision (ICCV). (2015)
39. Kodirov, E., Xiang, T., Fu, Z., Gong, S.: Unsupervised Domain Adaptation for Zero-Shot Learning. In: IEEE International Conference on Computer Vision (ICCV). (2015)
40. Mensink, T., Verbeek, J., Perronnin, F., Csurka, G.: Metric learning for large scale image classification: Generalizing to new classes at near-zero cost. In: Computer Vision–ECCV 2012. Springer (2012) 488–501
41. Kuznetsova, A., Hwang, S.J., Rosenhahn, B., Sigal, L.: Exploiting view-specific appearance similarities across classes for zero-shot pose prediction: A metric learning approach. In: Proceedings of the Thirtieth AAAI Conference on Artificial Intelligence, February 12-17, 2016, Phoenix, Arizona, USA. (2016) 3523–3529
42. Bellet, A., Habrard, A., Sebban, M.: A Survey on Metric Learning for Feature Vectors and Structured Data. Technical Report arXiv:1306.6709v4, University of St Etienne (2013)
43. Shalev-Shwartz, S., Singer, Y., Ng, A.Y.: Online and batch learning of pseudo-metrics. In: Proceedings of the International Conference on Machine learning, ACM (2004) 94
44. Wah, C., Branson, S., Welinder, P., Perona, P., Belongie, S.: The Caltech-UCSD Birds-200-2011 Dataset. Technical report (July 2011)
45. Patterson, G., Xu, C., Su, H., Hays, J.: The SUN Attribute Database: Beyond Categories for Deeper Scene Understanding. International Journal of Computer Vision (IJCV) 108(1-2) (2014) 59–81
46. Abadi, M., Agarwal, A., Barham, P., Brevdo, E., Chen, Z., Citro, C., Corrado, G.S., Davis, A., Dean, J., Devin, M., Ghemawat, S., Goodfellow, I., Harp, A., Irving, G., Isard, M., Jia, Y., Jozefowicz, R., Kaiser, L., Kudlur, M., Levenberg, J., Mané, D., Monga, R., Moore, S., Murray, D., Olah, C., Schuster, M., Shlens, J., Steiner, B., Sutskever, I., Talwar, K., Tucker, P., Vanhoucke, V., Vasudevan, V., Viégas, F., Vinyals, O., Warden, P., Wattenberg, M., Wicke, M., Yu, Y., Zheng, X.: TensorFlow: Large-scale machine learning on heterogeneous systems (2015) Software available from tensorflow.org.
47. Zhang, Z., Saligrama, V.: Zero-shot learning via joint latent similarity embedding. In: IEEE International Conference on Computer Vision and Pattern Recognition (CVPR). (2016) 6034–6042
