Distributed Coding of Multiview Sparse Sources with Joint Recovery

In support of applications involving multiview sources in distributed object recognition using lightweight cameras, we propose a new method for the distributed coding of sparse sources, namely visual descriptor histograms extracted from multiview images. The problem is challenging due to the computational and energy constraints at each camera as well as the limitations regarding inter-camera communication. Our approach addresses these challenges by exploiting the sparsity of the visual descriptor histograms as well as their intra- and inter-camera correlations. Our method couples distributed source coding of the sparse sources with a new joint recovery algorithm that incorporates multiple side information signals, where low-quality prior knowledge of all the sparse sources is initially sent to exploit their correlations. Experimental evaluation using the histograms of scale-invariant feature transform (SIFT) descriptors extracted from multiview images shows that our method leads to bit-rate savings of up to 43% compared to the state-of-the-art distributed compressed sensing method with independent encoding of the sources.

💡 Research Summary

This paper addresses the problem of efficiently compressing and transmitting high‑dimensional sparse visual descriptor histograms (e.g., SIFT) generated by lightweight, resource‑constrained cameras in a multiview object‑recognition scenario. The authors propose a novel framework called DICOSS (Distributed Coding of Sparse Sources) that combines compressed sensing, multiple side‑information (SI) generation, and Slepian‑Wolf (SW) coding to achieve substantial bitrate savings while preserving reconstruction quality.

Problem Setting and Background

Each of the J cameras captures an n‑dimensional sparse histogram x_j. Directly sending the full high‑resolution measurements y_j = Φ_j x_j is infeasible due to limited computation, power, and bandwidth. Classical distributed compressed sensing (DCS) assumes that each camera independently projects its signal with a random matrix and that the decoder jointly recovers the signals using a Joint Sparsity Model (JSM). However, DCS ignores the cost of quantizing and entropy‑coding the measurements. Conversely, SW coding can reduce the bitrate of the measurements but traditionally handles only a single side‑information source and does not exploit multiple correlated views. Recent works address CS with side information and with multiple SI signals, yet they lack an integrated system that transmits low‑resolution priors, generates multiple SI signals at the decoder, and jointly decodes high‑resolution data.

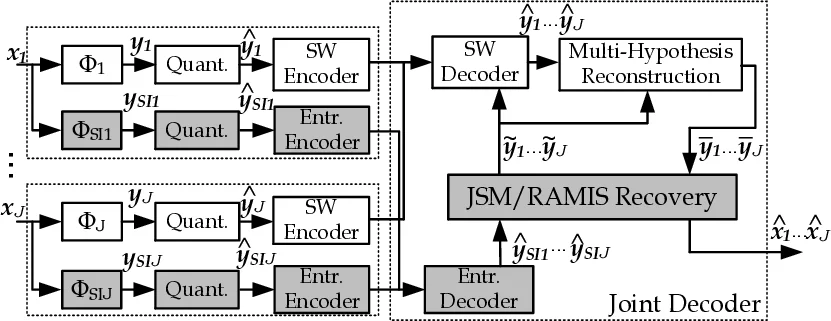

DICOSS Architecture

Each encoder produces two measurement vectors:

- Low‑resolution (coarse) measurements y_SI_j = Φ_SI_j x_j, with m_SI_j ≪ n. These are quantized and entropy‑coded (no SW coding).

- High‑resolution measurements y_j = Φ_j x_j, with m_j ≪ n. These are quantized and then encoded using a SW coder based on Low‑Density Parity‑Check Accumulate (LDPCA) codes.
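
The two encoder streams described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the Gaussian sensing matrices, the uniform scalar quantizer, the step sizes, the toy dimensions, and the choice m_SI < m are all assumed here, and the entropy coder and the LDPCA-based SW coder are omitted.

```python
import math
import random

def gaussian_matrix(m, n, rng):
    """Random m x n sensing matrix with i.i.d. N(0, 1/m) entries (assumed choice)."""
    scale = 1.0 / math.sqrt(m)
    return [[rng.gauss(0.0, scale) for _ in range(n)] for _ in range(m)]

def measure(phi, x):
    """Compressed measurements y = Phi x."""
    return [sum(a * b for a, b in zip(row, x)) for row in phi]

def quantize(y, step):
    """Uniform scalar quantizer: map each measurement to its bin index."""
    return [math.floor(v / step) for v in y]

def dequantize(indices, step):
    """Reconstruct each measurement at the midpoint of its bin."""
    return [(k + 0.5) * step for k in indices]

rng = random.Random(0)
n, m_si, m = 50, 5, 20            # toy sizes; assumes m_si < m < n
x = [0.0] * n
for idx in (3, 17, 42):           # a 3-sparse toy histogram
    x[idx] = rng.uniform(1.0, 5.0)

phi_si = gaussian_matrix(m_si, n, rng)
phi = gaussian_matrix(m, n, rng)

y_si = measure(phi_si, x)         # coarse stream: quantized, then entropy-coded
y = measure(phi, x)               # main stream: quantized, then SW-coded
q_si = quantize(y_si, step=0.5)
q = quantize(y, step=0.1)
y_deq = dequantize(q, step=0.1)
```

With midpoint reconstruction, each dequantized value differs from the original measurement by at most half a quantization step.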

At the decoder, the low‑resolution measurements are first decoded and jointly processed to generate high‑quality side‑information signals ỹ_j. These SI signals are then used in two ways: (i) to assist the SW decoder (providing soft inputs) and (ii) to improve the final reconstruction of the original histograms.

Joint Recovery Methods

Two alternative joint recovery strategies are explored:

Joint Sparsity Model (JSM) Recovery: Each source is modeled as x_j = x_c + z_j, and the decoder solves an ℓ₁‑minimization problem that jointly recovers the common component x_c and the innovation components z_j for all J sources, using the concatenated low‑resolution measurements. After obtaining an estimate x̃_j of x_j, the corresponding side‑information measurements ỹ_j = Φ_j x̃_j are computed and later used together with the high‑resolution SW‑decoded measurements to refine the final estimate via another joint ℓ₁ problem.
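
As a rough sketch of joint recovery under the JSM, the measurements of all sources can be stacked into one linear system in the unknown vector [x_c; z_1; ...; z_J] and solved with an ℓ₁-regularized least-squares solver. The solver below is plain iterative soft-thresholding (ISTA), swapped in for illustration; the summary does not say which solver the authors use. The matrix sizes, the regularization weight `lam`, and all function names are assumptions.

```python
import math
import random

def matvec(a, v):
    return [sum(r * s for r, s in zip(row, v)) for row in a]

def rmatvec(a, v):
    """Transposed product A^T v."""
    return [sum(a[i][k] * v[i] for i in range(len(a))) for k in range(len(a[0]))]

def stack_jsm(phis):
    """Build A with A [x_c; z_1; ...; z_J] = [Phi_1 (x_c + z_1); ...; Phi_J (x_c + z_J)]."""
    num, n = len(phis), len(phis[0][0])
    rows = []
    for j, phi in enumerate(phis):
        for row in phi:
            full = list(row)                        # block acting on x_c
            for k in range(num):                    # block k acts on z_k
                full += list(row) if k == j else [0.0] * n
            rows.append(full)
    return rows

def objective(a, y, v, lam):
    r = [p - q for p, q in zip(matvec(a, v), y)]
    return 0.5 * sum(t * t for t in r) + lam * sum(abs(t) for t in v)

def ista(a, y, lam, iters=300):
    """Iterative soft-thresholding for 0.5 * ||A v - y||^2 + lam * ||v||_1."""
    step = 1.0 / sum(t * t for row in a for t in row)   # 1 / ||A||_F^2, a safe step
    v = [0.0] * len(a[0])
    for _ in range(iters):
        r = [p - q for p, q in zip(matvec(a, v), y)]
        g = rmatvec(a, r)
        u = [vi - step * gi for vi, gi in zip(v, g)]
        v = [math.copysign(max(abs(t) - step * lam, 0.0), t) for t in u]
    return v

rng = random.Random(1)
n, m, num = 20, 8, 2                                # toy sizes (assumed)
x_c = [0.0] * n
x_c[2], x_c[11] = 3.0, 2.0                          # common component
zs = [[0.0] * n for _ in range(num)]
zs[0][5] = 1.0                                      # innovation of source 1
zs[1][14] = 1.5                                     # innovation of source 2
phis = [[[rng.gauss(0.0, 1.0) for _ in range(n)] for _ in range(m)]
        for _ in range(num)]
y = []
for phi, z in zip(phis, zs):
    y += matvec(phi, [c + d for c, d in zip(x_c, z)])

a = stack_jsm(phis)
v_hat = ista(a, y, lam=0.05)
# Per-source estimates: common part plus the matching innovation block.
x_hat = [[v_hat[i] + v_hat[(j + 1) * n + i] for i in range(n)] for j in range(num)]
```

The conservative step size guarantees that the objective never increases, at the price of slow convergence; a practical decoder would use a faster solver.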

RAMIS (Reconstruction Algorithm with Multiple Incremental Side Information): This is an extension of the RAMSIA algorithm. The decoder recovers the sources sequentially. When recovering source x_j, the already reconstructed sources {x_1,…,x_{j‑1}} serve as multiple SI signals. The objective function combines a data‑fidelity term (½‖Φ_j x_j – y_j‖₂²) with a weighted ℓ₁ regularizer that penalizes deviations from each SI signal, where the weights (both per‑coefficient W_p and per‑SI β_p) are updated iteratively based on the current reconstruction error. This adaptive weighting allows the algorithm to automatically emphasize the most reliable SI signals.
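
To make the adaptive weighting concrete, the sketch below evaluates an objective of the form described above and performs one weight update. The inverse-residual update rule, the normalizations (each W_p summing to n, the β_p summing to 1), the constant `eps`, and the trade-off parameter `lam` are assumptions chosen for illustration; the summary only states that the weights depend on the current reconstruction error.

```python
def matvec(a, v):
    return [sum(r * s for r, s in zip(row, v)) for row in a]

def objective(phi, y, x, sis, weights, betas, lam):
    """0.5 * ||Phi x - y||^2 + lam * sum_p beta_p * sum_i W_p[i] * |x[i] - z_p[i]|."""
    r = [p - q for p, q in zip(matvec(phi, x), y)]
    fidelity = 0.5 * sum(t * t for t in r)
    penalty = sum(
        beta * sum(w * abs(a - b) for w, a, b in zip(w_p, x, z))
        for beta, w_p, z in zip(betas, weights, sis)
    )
    return fidelity + lam * penalty

def update_weights(x, sis, eps=1e-3):
    """Assumed rule: weights inversely proportional to the current residuals."""
    n = len(x)
    weights = []
    for z in sis:
        inv = [1.0 / (abs(a - b) + eps) for a, b in zip(x, z)]
        total = sum(inv)
        weights.append([n * v / total for v in inv])      # each W_p sums to n
    costs = [sum(w * abs(a - b) for w, a, b in zip(w_p, x, z))
             for w_p, z in zip(weights, sis)]
    inv_costs = [1.0 / (c + eps) for c in costs]
    total = sum(inv_costs)
    betas = [v / total for v in inv_costs]                # the beta_p sum to 1
    return weights, betas

x_cur = [0.0, 1.0, 0.0, 2.0]          # current estimate of x_j (toy values)
z_good = [0.0, 1.0, 0.0, 1.9]         # SI close to the current estimate
z_poor = [1.0, 0.0, 1.0, 0.0]         # SI far from the current estimate
W, beta = update_weights(x_cur, [z_good, z_poor])

phi = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 1.0]]
y = matvec(phi, x_cur)
value = objective(phi, y, x_cur, [z_good, z_poor], W, beta, lam=0.1)
```

In this toy case almost all of the inter-SI weight goes to `z_good`, which is the behavior the adaptive scheme is meant to produce.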

Joint Decoding and Multi‑Hypothesis Reconstruction

The SW decoder employs LDPCA with multiple SI inputs. The residual between a measurement y_j and its SI ỹ_j is modeled as Laplacian rather than Gaussian, reflecting empirical observations that Laplacian tails better capture CS measurement errors. Each SI signal ỹ_p is assigned a weight u_p, and the combined conditional density f(y_j|ỹ_1,…,ỹ_J) = Σ_p u_p f(y_j|ỹ_p) is fed to the LDPCA decoder as soft input, so that the most informative SI signals dominate the decoding of the quantized high‑resolution measurements ŷ_j.
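
The soft input can be illustrated by integrating the Laplacian mixture over each quantization bin. The sketch below is an assumption-laden toy: it uses a single shared Laplacian scale `b`, a uniform quantizer with bins [k·step, (k+1)·step), and made-up SI values and weights; how these probabilities are mapped to bit-level inputs of the LDPCA decoder is not covered.

```python
import math

def laplace_cdf(v, mu, b):
    """CDF of a Laplacian with location mu and scale b."""
    if v < mu:
        return 0.5 * math.exp((v - mu) / b)
    return 1.0 - 0.5 * math.exp(-(v - mu) / b)

def bin_probabilities(bins, step, si_values, si_weights, b):
    """P(y in bin k | all SI) under the mixture sum_p u_p * Laplace(si_p, b)."""
    probs = {}
    for k in bins:
        lo, hi = k * step, (k + 1) * step
        probs[k] = sum(
            u * (laplace_cdf(hi, mu, b) - laplace_cdf(lo, mu, b))
            for mu, u in zip(si_values, si_weights)
        )
    return probs

si_values = [1.1, 1.3, 0.2]       # SI predictions of one measurement (toy values)
si_weights = [0.5, 0.3, 0.2]      # mixture weights u_p, summing to 1
probs = bin_probabilities(range(-20, 20), 0.5, si_values, si_weights, b=0.2)
best_bin = max(probs, key=probs.get)
```

Here two of the three SI values fall in the bin [1.0, 1.5), so that bin receives the largest probability even though the third SI value disagrees.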

After decoding, a multi‑hypothesis reconstruction step reconstructs each measurement y_j as a weighted average of the conditional expectations over the SI distributions, constrained to the quantization interval. The reconstructed measurements are then fed back into the joint recovery stage (either JSM or RAMIS) to obtain the final histogram estimates x̂_j.
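
A minimal sketch of this reconstruction step, assuming Laplacian SI distributions with a shared scale `b` and weights that sum to 1: for each SI signal, the expectation of the measurement restricted to the decoded quantization interval is computed numerically, and the results are averaged. The midpoint-rule integration and all parameter values are illustrative choices, not taken from the paper.

```python
import math

def laplace_pdf(v, mu, b):
    return math.exp(-abs(v - mu) / b) / (2.0 * b)

def conditional_mean(lo, hi, mu, b, steps=2000):
    """E[y | lo <= y < hi] for y ~ Laplace(mu, b), by midpoint-rule integration.

    Assumes the interval is not so far from mu that the density underflows.
    """
    h = (hi - lo) / steps
    pts = [lo + (i + 0.5) * h for i in range(steps)]
    wts = [laplace_pdf(p, mu, b) for p in pts]
    return sum(p * w for p, w in zip(pts, wts)) / sum(wts)

def multi_hypothesis(lo, hi, si_values, si_weights, b):
    """Weighted average of the per-SI conditional expectations."""
    return sum(u * conditional_mean(lo, hi, mu, b)
               for mu, u in zip(si_values, si_weights))

lo, hi = 1.0, 1.5                 # decoded quantization interval (toy values)
y_rec = multi_hypothesis(lo, hi, [1.1, 1.3, 0.2], [0.5, 0.3, 0.2], b=0.2)
```

Because every conditional expectation lies inside the interval and the weights form a convex combination, the reconstructed value stays inside the decoded quantization interval.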

Adaptive Rate Allocation

The authors recognize that transmitting low‑resolution priors incurs extra bits. They therefore define two operating modes:

- Intra‑mode: Directly encode and transmit only the high‑resolution measurements (no priors).

- Prior‑mode: Transmit both low‑resolution priors and high‑resolution SW‑coded data.

By estimating the conditional entropies H(Ŷ_j|Ỹ_1,…,Ỹ_J) and the entropy of the priors H(Ŷ_SI_j), the system can decide per source which mode yields the lower total bitrate. This adaptive decision makes DICOSS robust across varying inter‑camera correlation levels.
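
The mode decision can be sketched as a comparison of estimated bit budgets. Everything below is an assumed formulation for illustration: entropies are estimated empirically from symbol counts, the Intra-mode cost is taken as m·H(Ŷ_j), and the Prior-mode cost as m·H(Ŷ_j|Ỹ) + m_SI·H(Ŷ_SI_j); the paper's actual rate model may differ.

```python
import math
from collections import Counter

def entropy(symbols):
    """Empirical entropy in bits per symbol."""
    total = len(symbols)
    return -sum((c / total) * math.log2(c / total)
                for c in Counter(symbols).values())

def conditional_entropy(pairs):
    """Empirical H(Y | S) = H(Y, S) - H(S) from (y, s) symbol pairs."""
    return entropy(pairs) - entropy([s for _, s in pairs])

def choose_mode(h_intra, h_cond, h_prior, m, m_si):
    """Pick the mode with the lower estimated total number of bits."""
    intra_bits = m * h_intra
    prior_bits = m * h_cond + m_si * h_prior
    return "prior" if prior_bits < intra_bits else "intra"

# Toy example: quantized measurements paired with quantized SI symbols.
pairs = [(0, 0), (0, 0), (1, 1), (1, 1)]
h_y = entropy([y for y, _ in pairs])
h_y_given_s = conditional_entropy(pairs)

mode_high_corr = choose_mode(h_intra=3.0, h_cond=1.0, h_prior=2.0, m=100, m_si=20)
mode_low_corr = choose_mode(h_intra=3.0, h_cond=2.9, h_prior=2.0, m=100, m_si=20)
```

When the SI removes most of the uncertainty, the savings on the main stream outweigh the cost of the priors and Prior-mode wins; when the conditional entropy is close to the unconditional one, the priors are wasted bits and Intra-mode is selected.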

Experimental Evaluation

The framework is tested on the COIL‑100 dataset, where SIFT descriptors are quantized into 1000‑dimensional histograms for multiple views of the same object. Experiments with J = 2, 3, and 4 cameras demonstrate:

- Up to 43 % bitrate reduction compared with the state‑of‑the‑art DCS method that encodes each source independently.

- PSNR improvements of 2–4 dB in the reconstructed histograms.

- RAMIS outperforms the batch JSM approach when inter‑camera correlations are strong, because the sequential exploitation of already recovered SI leads to more accurate weighting and less error propagation.

- The adaptive mode selection correctly chooses Prior‑mode when correlation is high and Intra‑mode when it is low, confirming the practicality of the bitrate‑prediction model.

Conclusions and Impact

DICOSS successfully merges compressed sensing, distributed source coding, and multi‑SI joint reconstruction into a coherent system suitable for low‑power, bandwidth‑limited camera networks. By sending a lightweight coarse preview of each source, the decoder can generate multiple high‑quality side‑information signals that dramatically improve both SW decoding efficiency and final reconstruction fidelity. The RAMIS algorithm introduces a principled way to handle multiple, possibly heterogeneous SI signals through adaptive weighting, extending the applicability of CS‑with‑SI beyond the single‑SI case. The use of a Laplacian residual model and the adaptive bitrate decision further enhance robustness for real‑world deployments. Overall, the paper provides a compelling solution for distributed visual analytics in emerging applications such as mobile augmented reality, smart surveillance, and Internet‑of‑Things visual sensor networks.
