Tracking Dynamic Point Processes on Networks

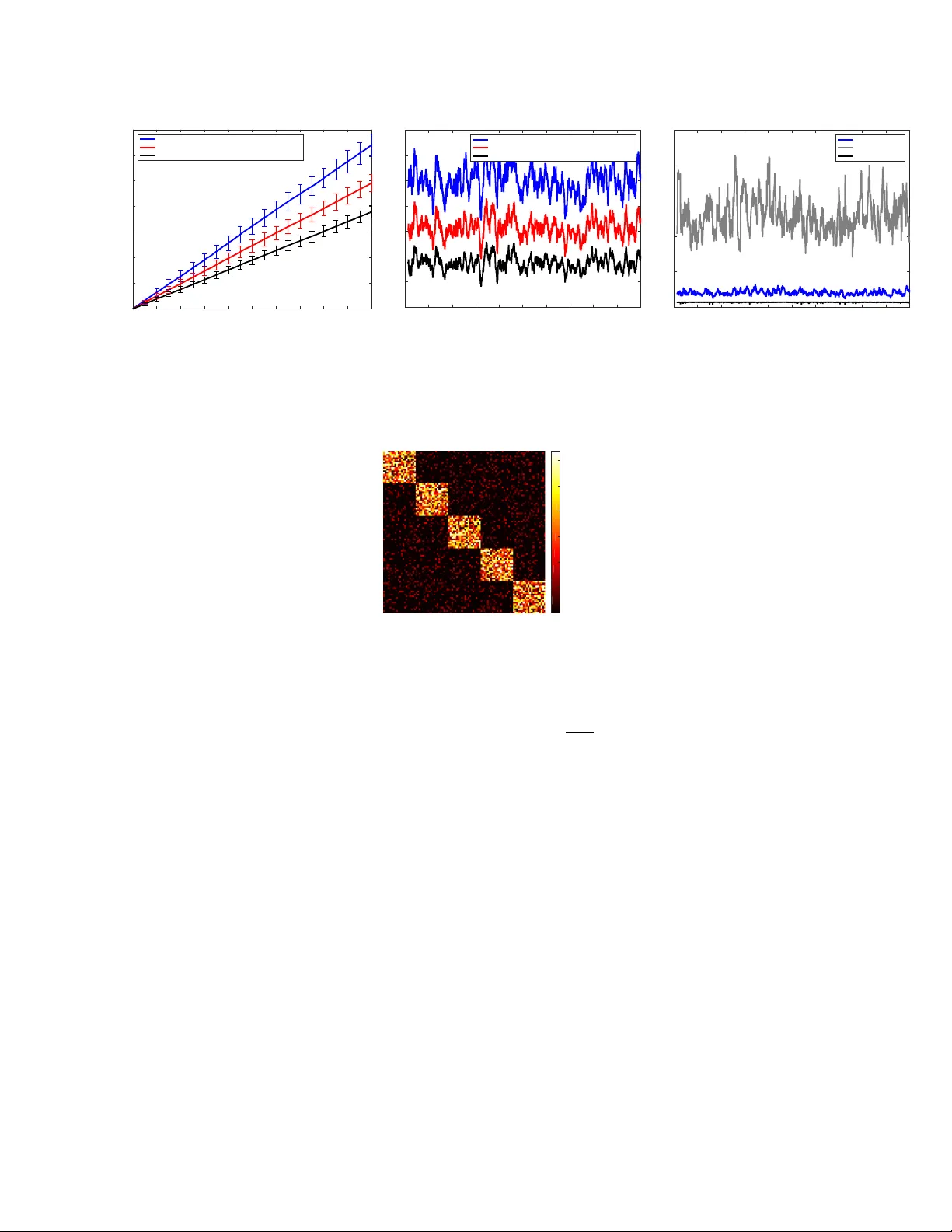

Cascading chains of events are a salient feature of many real-world social, biological, and financial networks. In social networks, social reciprocity accounts for retaliations in gang interactions, proxy wars in nation-state conflicts, or Internet m…

Authors: Eric C. Hall, Rebecca M. Willett