Meaningful Models: Utilizing Conceptual Structure to Improve Machine Learning Interpretability

The last decade has seen huge progress in the development of advanced machine learning models; however, those models are powerless unless human users can interpret them. Here we show how the mind’s construction of concepts and meaning can be used to create more interpretable machine learning models. By proposing a novel method of classifying concepts in terms of ‘form’ and ‘function’, we elucidate the nature of meaning and offer proposals to improve model understandability. As machine learning begins to permeate daily life, interpretable models may serve as a bridge between domain-expert authors and non-expert users.

💡 Research Summary

The paper “Meaningful Models: Utilizing Conceptual Structure to Improve Machine Learning Interpretability” argues that the ultimate utility of modern machine learning systems hinges on human users being able to understand what the models do and why they produce particular outputs. To bridge the gap between high‑performance algorithms and human comprehension, the author draws on cognitive psychology, particularly the processes by which humans form concepts, and proposes a novel framework for thinking about meaning in terms of “form” and “function.”

First, the manuscript reviews implicit learning – the unconscious, automatic extraction of statistical regularities from the environment. Implicit learning supplies low‑level features (continuous or categorical properties) that later become the building blocks of conscious concepts. A concept is modeled as a key‑value pair: a symbolic token (word, image, sound) serves as the key, while the associated set of features constitutes the value. This mirrors a dictionary structure and provides a natural analogy for feature engineering in machine learning.
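The key‑value analogy can be sketched as an ordinary Python dictionary. This is a minimal illustration under stated assumptions, not the author's implementation: the tokens and feature names in the hypothetical `build_lexicon` are invented for the example, and the one‑hot helper `to_feature_vector` is one simple way the dictionary could feed a feature‑engineering step.

```python
from typing import Dict, FrozenSet, List

# Key: a symbolic token. Value: the set of features that implicit
# learning is assumed to have supplied for that token.
Lexicon = Dict[str, FrozenSet[str]]


def build_lexicon() -> Lexicon:
    """Return a toy lexicon; tokens and features are hypothetical."""
    return {
        "rock": frozenset({"solid", "heavy", "inanimate", "natural"}),
        "paper": frozenset({"solid", "light", "inanimate", "flat", "manufactured"}),
        "dog": frozenset({"solid", "animate", "four_legged", "barks"}),
    }


def features_of(lexicon: Lexicon, token: str) -> FrozenSet[str]:
    """Look up a concept's value (its features) by its key (the token).

    Unknown tokens map to the empty set: in this analogy, a symbol with
    no linked features carries no meaning.
    """
    return lexicon.get(token, frozenset())


def to_feature_vector(lexicon: Lexicon, token: str) -> List[int]:
    """One-hot encode a token over the sorted union of all known features."""
    vocabulary = sorted(set().union(*lexicon.values()))
    feats = features_of(lexicon, token)
    return [1 if f in feats else 0 for f in vocabulary]


if __name__ == "__main__":
    lex = build_lexicon()
    print(sorted(features_of(lex, "rock")))
    print(to_feature_vector(lex, "rock"))
```

The sorted vocabulary keeps vector positions stable across calls, which is what a downstream model would need if these vectors were used as inputs.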

Second, the author redefines meaning as the mapping of a symbol onto its feature set within a specific context. Drawing on latent semantic analysis and connectionist models, the paper argues that symbols are intrinsically meaningless until they are linked to a network of features; meaning therefore emerges relationally, as a function of the surrounding semantic system.
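To make "meaning as a context‑dependent, relational mapping" concrete, the sketch below keys the lexicon on a (symbol, context) pair and scores relatedness purely by shared features. It is an assumption‑laden stand‑in, not latent semantic analysis itself (LSA derives its features from a singular value decomposition of a term‑document matrix); the Jaccard overlap, the contexts, and every feature name are hypothetical choices made for brevity.

```python
from typing import Dict, FrozenSet, Tuple

# Hypothetical context-sensitive lexicon: the same token maps to
# different feature sets depending on the context it appears in.
ContextLexicon = Dict[Tuple[str, str], FrozenSet[str]]

LEXICON: ContextLexicon = {
    ("head", "anatomy"): frozenset({"body_part", "top", "contains_brain"}),
    ("head", "organization"): frozenset({"person", "top", "leads_group"}),
    ("skull", "anatomy"): frozenset({"body_part", "top", "bone", "contains_brain"}),
    ("director", "organization"): frozenset({"person", "leads_group", "employee"}),
}


def meaning(token: str, context: str) -> FrozenSet[str]:
    """Map a symbol onto its feature set within a given context."""
    return LEXICON.get((token, context), frozenset())


def jaccard(a: FrozenSet[str], b: FrozenSet[str]) -> float:
    """Shared features over all features; 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def relatedness(t1: str, c1: str, t2: str, c2: str) -> float:
    """Relatedness depends only on feature overlap, never on the tokens'
    spelling, so meaning here is purely relational."""
    return jaccard(meaning(t1, c1), meaning(t2, c2))


if __name__ == "__main__":
    print(relatedness("head", "anatomy", "skull", "anatomy"))
    print(relatedness("head", "organization", "director", "organization"))
    print(relatedness("head", "anatomy", "head", "organization"))
```

The last comparison shows the same token scoring low against itself across contexts, which is the point the paragraph makes: the symbol alone fixes nothing until it is linked to features in a context.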

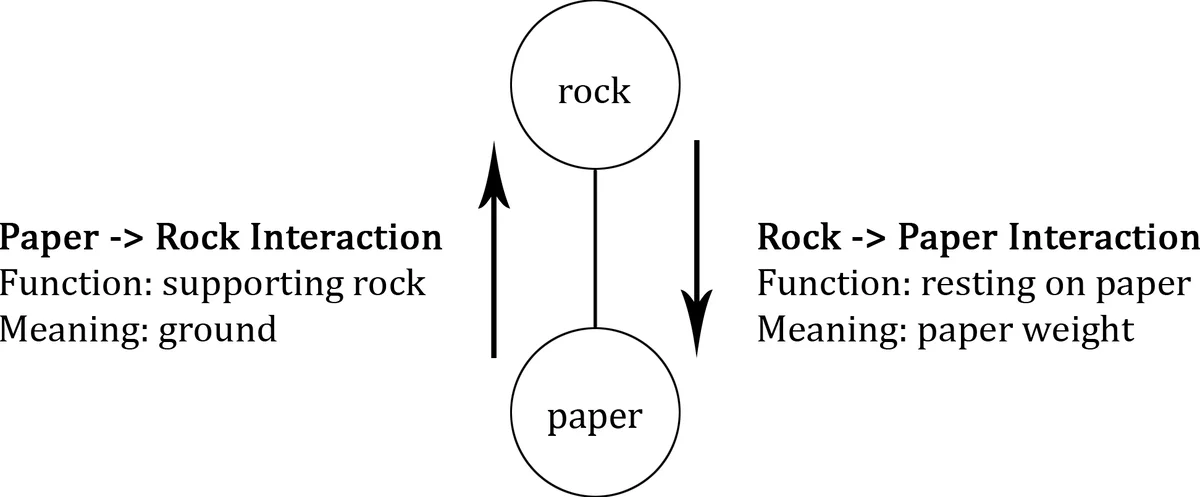

The core contribution is the introduction of two orthogonal dimensions – form and function – to categorize concepts. “Function” captures the core, context‑dependent meaning of a concept; it is the input‑output relationship that the concept enacts in a given situation. “Form” captures peripheral, instance‑specific attributes that differentiate one realization of the same function from another. The paper illustrates this with linguistic examples (“go” vs. “head” in different sentences) and with a simple physical scenario (a rock resting on paper). By treating function as the essential meaning and form as the surface manifestation, the author provides a systematic way to separate what a model should do (its functional goal) from how it presents its results (its form).
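One way to read the form/function split as a data structure is sketched below. It assumes, as an illustrative interpretation rather than the paper's stated one, that the rock in the rock‑on‑paper scenario functions as a paperweight; the `Concept` class, `hold_down`, and the form attributes are all hypothetical. Function is compared by input‑output behavior on probe inputs, and form is compared attribute by attribute.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Iterable, Optional, Tuple


@dataclass
class Concept:
    name: str
    # Function: the input-output relationship the concept enacts.
    function: Callable[[str], str]
    # Form: peripheral, instance-specific attributes of this realization.
    form: Dict[str, str] = field(default_factory=dict)


def hold_down(item: str) -> str:
    """Assumed function for the rock-on-paper scenario: keep an item still."""
    return f"{item} stays in place"


rock = Concept("rock", hold_down, {"material": "stone", "shape": "irregular"})
paperweight = Concept("paperweight", hold_down, {"material": "glass", "shape": "dome"})


def same_function(a: Concept, b: Concept, probes: Iterable[str]) -> bool:
    """Two concepts share a function if every probe input yields the same output."""
    return all(a.function(p) == b.function(p) for p in probes)


def differing_form(a: Concept, b: Concept) -> Dict[str, Tuple[Optional[str], Optional[str]]]:
    """Return the form attributes on which two realizations differ."""
    keys = a.form.keys() | b.form.keys()
    return {
        k: (a.form.get(k), b.form.get(k))
        for k in sorted(keys)
        if a.form.get(k) != b.form.get(k)
    }


if __name__ == "__main__":
    print(same_function(rock, paperweight, ["paper", "receipt"]))
    print(differing_form(rock, paperweight))
```

Comparing outputs on probes, rather than comparing the callables themselves, keeps the definition of function behavioral: any two realizations that act alike count as the same function, however different their forms.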

Building on this theoretical foundation, three practical recommendations are offered for improving model interpretability:

- Explicitly State the Model’s Function – Clearly define input requirements, output goals, and the intended application domain. Reduce the number of input attributes through variable ranking, weight elimination, or other feature‑selection techniques. This simplifies the model and can also reduce the risk of over‑fitting.

- Embed the Model Within an Existing Process – Position the model as a component of a larger workflow (e.g., a diagnostic pipeline or an anomaly‑detection stage). When the model’s role in the broader system is clear, users can more readily infer its purpose and limitations.

- Design for User Experience – Provide a front‑end that translates raw model outputs into familiar formats, includes clear prompts for required inputs, and visualizes results intuitively. Track usability metrics (recommendation likelihood, efficiency gains, identified pain points) and iterate on the interface. The paper notes that major cloud ML platforms (Google Cloud, AWS, Azure, H2O.ai) are already moving toward such integrated UX solutions.
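The first recommendation (state the function, then prune inputs by variable ranking) can be sketched as follows. This is a minimal sketch, not the paper's procedure: absolute Pearson correlation with the target is swapped in as one common ranking criterion, it only captures linear, single‑variable relationships, and the `ModelSpec` record and `specify` helper are hypothetical names used for illustration.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Sequence, Tuple


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation; returns 0.0 if either series is constant."""
    if len(x) != len(y) or not x:
        raise ValueError("series must be non-empty and of equal length")
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)


def rank_features(
    columns: Dict[str, Sequence[float]], target: Sequence[float]
) -> List[Tuple[str, float]]:
    """Rank features by |correlation| with the target, strongest first.

    Ties keep their original column order because Python's sort is stable.
    """
    scores = [(name, abs(pearson(vals, target))) for name, vals in columns.items()]
    return sorted(scores, key=lambda item: item[1], reverse=True)


@dataclass
class ModelSpec:
    """Hypothetical record that states a model's function explicitly."""
    inputs: List[str]
    output_goal: str
    domain: str


def specify(
    columns: Dict[str, Sequence[float]],
    target: Sequence[float],
    k: int,
    output_goal: str,
    domain: str,
) -> ModelSpec:
    """Keep the top-k ranked features as the model's declared inputs."""
    top = [name for name, _ in rank_features(columns, target)[:k]]
    return ModelSpec(inputs=top, output_goal=output_goal, domain=domain)


if __name__ == "__main__":
    y = [1, 2, 3, 4, 5]
    cols = {"a": [2, 4, 6, 8, 10], "b": [5, 4, 3, 2, 1],
            "c": [1, 1, 1, 1, 1], "d": [1, 3, 2, 5, 4]}
    print(rank_features(cols, y))
    print(specify(cols, y, k=3, output_goal="predict y", domain="toy data"))
```

Bundling the retained inputs with the output goal and domain in one record is a design choice aimed at the recommendation's point: the pruned feature list doubles as a plain statement of what the model needs and what it is for.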

The author emphasizes that interpretability should not be an afterthought; rather, the relationship between understandability and accuracy must be considered from the outset. By aligning model design with the human cognitive architecture of concept formation, specifically by ensuring that a model’s functional intent is transparent and its form is presented in a familiar, context‑appropriate way, developers can produce “meaningful models” that are both powerful and accessible to non‑expert stakeholders.

In conclusion, the paper synthesizes insights from psychology, linguistics, and machine learning to propose a unified view: concepts are functions operating within contexts, and their meaning can be captured by the dual lenses of form and function. Applying this lens to model development yields concrete design guidelines that promise to narrow the gap between sophisticated algorithms and the everyday users who must rely on them.
