Cyberbullying Identification Using Participant-Vocabulary Consistency

With the rise of social media, people can now form relationships and communities easily regardless of location, race, ethnicity, or gender. However, the power of social media simultaneously enables harmful online behavior such as harassment and bully…

Authors: Elaheh Raisi, Bert Huang

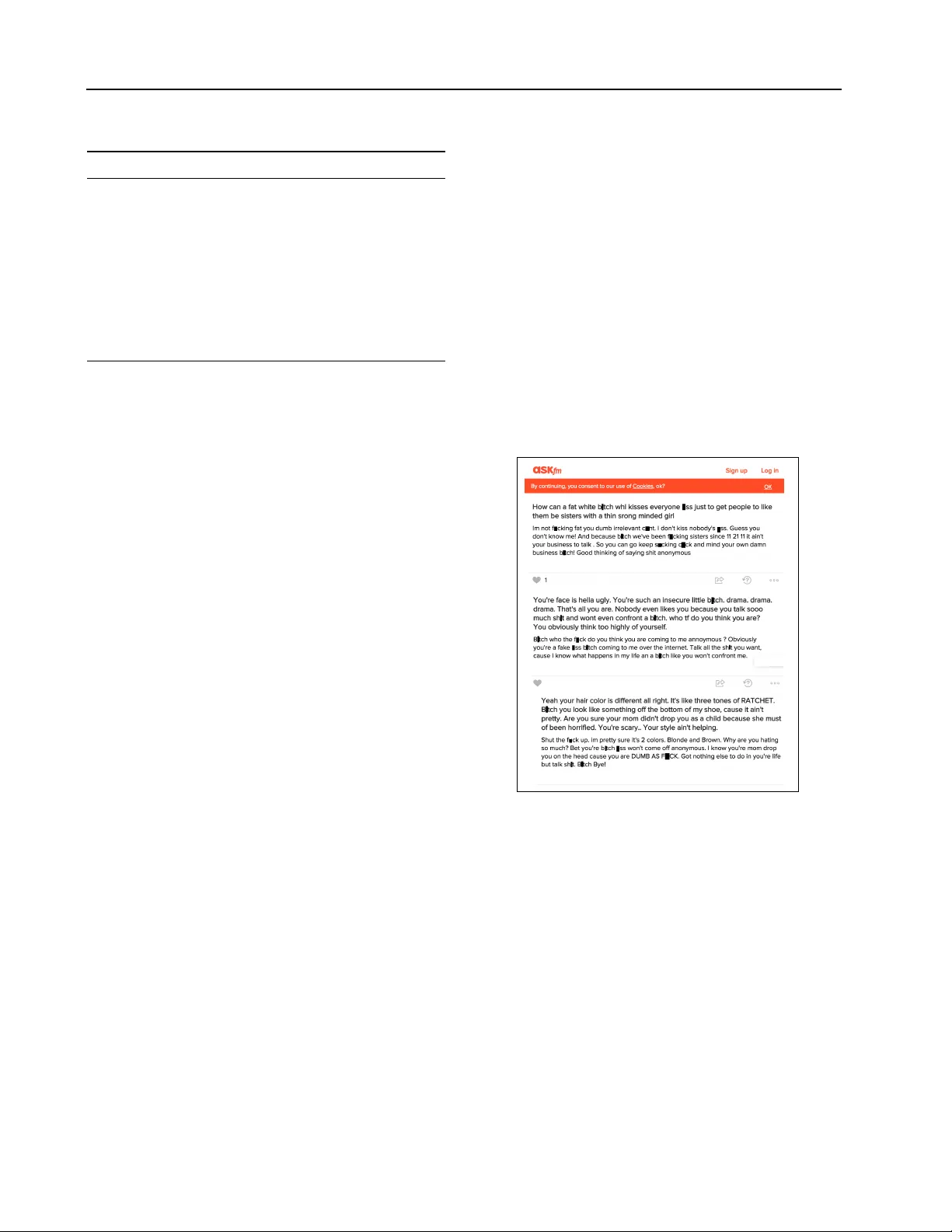

Cyberb ullying Identification Using Participant-V ocab ulary Consistency Elaheh Raisi E L A H E H @ V T . E D U V irginia T ech, Blacksbur g, V A Bert Huang B H UA N G @ V T . E D U V irginia T ech, Blacksbur g, V A Abstract W ith the rise of social media, people can now form relationships and communities easily re- gardless of location, race, ethnicity , or gender . Howe ver , the power of social media simulta- neously enables harmful online behavior such as harassment and b ullying. Cyberbullying is a serious social problem, making it an impor- tant topic in social network analysis. Machine learning methods can potentially help provide better understanding of this phenomenon, b ut they must address several ke y challenges: the rapidly changing v ocabulary in volved in cyber- bullying, the role of social network structure, and the scale of the data. In this study , we propose a model that simultaneously discov ers instigators and victims of bullying as well as ne w bullying vocab ulary by starting with a corpus of social interactions and a seed dictionary of bullying indicators. W e formulate an objective function based on participant-vocab ulary consistency . W e ev aluate this approach on T witter and Ask.fm data sets and show that the proposed method can detect ne w bullying v ocabulary as well as victims and bullies. 1. Introduction Social media has significantly changed the nature of so- ciety . Our ability to connect with others has been mas- siv ely enhanced, removing boundaries created by location, gender , age, and race. Howe ver , the benefits of this hyper-connecti vity also come with the enhancement of detrimental aspects of social behavior . Cyberbullying is an example of one such behavior that is hea vily affecting the younger generations ( Boyd , 2014 ). The Cyberbullying 2016 ICML W orkshop on #Data4Good: Mac hine Learning in Social Good Applications , New Y ork, NY , USA. Cop yright by the author(s). Figure 1. Surve y Statistics on Cyberb ullying Experiences. Data collected and visualized by the Cyberbullying Research Center (http://cyberb ullying.org/). Research Center defines cyberb ullying as “willful and repeated harm inflicted through the use of computers, cell phones, and other electronic devices. ” Like traditional bullying, c yberbullying occurs in v arious forms. Examples include name calling, rumor spreading, threats, and sharing of priv ate information or photographs. 1 , 2 Even seemingly innocuous actions such as supporting of fensi ve comments by “liking” them can be considered b ullying ( W ang et al. , 2009 ). As stated by the National Crime Pre vention Coun- cil, around 50% of American young people are victimized by cyberb ullying. According to the American Academy of Child and Adolescent Psychiatry , victims of cyberb ullying hav e strong tendencies tow ard mental and psychiatric dis- orders ( American Academy of Child Adolescent Psychia- try , 2016 ). In e xtreme cases, suicides have been linked to cyberb ullying ( Goldman , 2010 ; Smith-Spark , 2013 ). The phenomenon is widespread, as indicated in Fig. 1 , which plots surv ey responses collected from students. These facts make it clear that cyberb ullying is a serious health threat. Machine learning can be useful in addressing the cyber- bullying problem. Recently , various studies considered supervised, text-based cyberb ullying detection, classifying 1 http://www .endcyberb ullying.org 2 http://www .ncpc.org/c yberbullying 46 Cyberbullying Identification Using P articipant-V ocabulary Consistency social media posts as ‘bullying ’or ‘non-bullying’. T raining data is annotated by experts or crowdsourced workers. Since bullying often in volves offensi ve language, text- based cyberbullying detection studies often use curated swear words as features, augmenting other standard text features. Then, supervised machine learning approaches train classifiers from this annotated data ( Y in et al. , 2009 ; Ptaszynski et al. , 2010 ; Dinakar et al. , 2011 ). W e identify three significant challenges for supervised cyberb ullying detection. First, annotation is not an easy task. It requires expertise about culture, examination of the social structure of the individuals in volved in each interaction. Because of the difficulty of labeling these of- ten subtle distinctions between b ullying and non-bullying, there is likely to be disagreement among labelers, making costs add up quickly for a large-scale problem. Second, reasoning about which individuals are in volved in b ullying should do joint, or collectiv e, classification. E.g., if we believ e a message from A to B is a bullying interaction, we should also expect a message from A to C to have an increased likelihood of also being bullying. Third, language is rapidly changing, especially among young populations, making the use of static text indicators prone to becoming outdated. Some curse words have completely faded a way or are not as taboo as they once were, while new slang is frequently introduced into the culture. These three challenges suggest that we need a dynamic methodology to collectiv ely detect emerging and e volving slurs with only weak supervision. In this paper , we introduce an automated, data-dri ven method for cyberbullying identification. The ev entual goal of such work is to detect such harmful behaviors in social media and intervene, either by filtering or by providing advice to those in volv ed. Our proposed learnable model takes advantage of the fact that the data and concepts in volve relationships. W e train this relational model in a weakly supervised manner, where human experts provide a small seed set of phrases that are highly indicativ e of bullying. Then the algorithm finds other bullying terms by extrapolating from these expert annotations. In other words, our algorithm detects c yberb ullying from ke y- phrase indicators. W e refer to our proposed method as the participant-vocab ulary consistency (PVC) model; It seeks a consistent parameter setting for all users and key phrases in the data that characterizes the tendency of each user to harass or to be harassed and the tendency of a ke y phrase to be indicative of harassment. The learning algorithm optimizes the parameters to minimize their disagreement with the training data which are highly indicati ve bullying phrases in messages between specific users. A study by ditchthelabel.or g ( 2013 ) found that Facebook, Y ouT ube, T witter , and Ask.fm are the platforms that have the most frequent occurrences of cyberbullying. T o ev alu- ate the participant-vocabulary consistency method, we ran our experiments on T witter and Ask.fm data. From a list of highly indicati ve of b ullying key phrases, we subsample small seed sets to train the algorithm. W e then e xamine the participant-vocab ulary consistency method to see ho w well it recov ers the remaining, held-out set of indicativ e phrases. Additionally , we extract the detected most b ullying phrases and qualitati vely verify that they are in fact examples of bullying. 2. Related W ork There are two main branches of research related to our topic. One of them is online harassment and cyberb ullying detection; the other one is associated with automated vocab ulary discovery . V arious studies have used fully supervised learning to classify bully posts from non-b ully posts. Man y of them focus on the textual features of post to identify cyberbullying incidents ( Dinakar et al. , 2011 ; Ptaszynski et al. , 2010 ; Hosseinmardi et al. , 2015 ; Chen et al. , 2012 ; Margono et al. , 2014 ). Some of them use other features than only textual features, for example content, sentiment, and contextual features ( Y in et al. , 2009 ), the number , density and the value of offensi ve words ( Reynolds et al. , 2011 ), or the number of friends, network structure, and relationship centrality ( Huang & Singh , 2014 ). Nahar et al. ( 2013 ) used semantic and weighted features; they also identify predators and victims using a ranking algorithm. Many studies hav e been applied machine learning techniques to better understand social- psychological issues such as bullying. They used data sets such as T witter , Instagram and Ask.fm to study negati ve user behavior ( Bellmore et al. , 2015 ; Hosseinmardi et al. , 2014a ; b ). V arious works use query e xpansion to e xtend search queries to dynamically include additional terms. For example, Massoudi et al. ( 2011 ) use temporal information as well as co-occurrence to score the related terms to expand the query . Mahendiran et al. propose a method based on probabilistic soft logic to grow a vocab ulary using multiple indicators (e.g., social network, demographics, and time). 3. Proposed Method T o model the cyberbullying problem, for each user u i , we assign a bully score b i and a victim score v i . The bully score measures how much a user tends to bully others; like wise, victim score indicates how much a user tends to be bullied by other users. For each feature w k , we associate a feature-indicator score that represents how much the feature is an indicator of a bullying interaction. 47 Cyberbullying Identification Using P articipant-V ocabulary Consistency Each feature represents the e xistence of some descriptor in the message, such as n-grams in text data. The sum of senders bullying score and receiv ers victim score ( b i + v i ) specifies the message’ s social bullying scor e , which our model aims to make consistent with the vocabulary- based feature score. W e formulate a regularized objectiv e function that penalizes inconsistency between the social bullying score and each of the feature scores. J ( b , v , w ; λ ) = λ 2 || b || 2 + || v || 2 + || w || 2 + 1 2 X m ∈ M X k : w k ∈ f ( m ) b s ( m ) + v r ( m ) − w k 2 (1) Learning is then an optimization problem ov er parameter vectors b , v , and w . The consistency penalties are determined by the structure of the social data. W e include information from an e xpert-provided initial seed of highly indicativ e bully words. W e require these seed features to hav e a high score, adding the constraint: min b , v , w J ( b , v , w ; λ ) s . t . w k = 1 . 0 , ∀ k : x k ∈ S. (2) W e refer to this model as the participant-vocabulary consis- tency model because we optimize the consistenc y of scores computed based on the participants of each social interac- tion as well as the vocab ulary used in each interaction. The objectiv e function Eq. ( 1 ) is not jointly con ve x; Howe ver , if we optimize each parameter vector in isolation, we then solve con ve x optimizations with closed form solutions. The optimal value for each parameter vector given the others can be obtained by solving for their zero-gradient conditions. The update for b ully score vector b is: arg min b i J = X m ∈ M | s ( m )= i X k ∈ f ( m ) w k − | f ( m ) | v r ( m ) λ + X m ∈ M | s ( m )= i | f ( m ) | where { m ∈ M | s ( m ) = i } is the set of messages that are sent by user i , and | f ( m ) | is the number of n-grams in the message m . The closed-form solution for optimizing with respect to victim score vector v is arg min v j J = X m ∈ M | r ( m )= j X k ∈ f ( m ) ( w k − | f ( m ) | b i ) λ + X m ∈ M | r ( m )= j | f ( m ) | where { m ∈ M | r ( m ) = j } is the set of messages sent to user j . The word score v ector w can be updated with arg min w k J = X m ∈ M | k ∈ f ( m ) b r ( m ) + v s ( m ) λ + |{ m ∈ M | k ∈ f ( m ) }| . Figure 2. R OC curv e of recov ered target words for Ask.fm (top) and T witter (bottom). The set { m ∈ M | k ∈ f ( m ) } indicates the set of messages that contain the k th feature or n-gram. The strategy of fixing some parameters and solving the optimization problem for the rest of parameters known as alternating least-squares. Our algorithm iterativ ely updates each of parameter vectors b , v , and w until con vergence. The output of the algorithm is the bully and victim score of all the users and the bully score of all the w ords. 4. Experiments W e ran our experiments on T witter and Ask.fm data. T o collect our T witter data set, we first collected tweets using words from our curse word dictionary; then we use snowball sampling from these profane tweets to collect additional messages. Since we want to consider messages between users, we are interested in @-replies, in which one user directs a message to another or answers a post from another user . From our full data set, we remov e the tweets which are not part of any such con versation, all retweets and duplicate tweets. After the data collection and post- processing, our T witter data set contains 180,355 users and 296,308 tweets. Ask.fm is a social question-answering service, where users post questions on the profiles of other users. W e use part of the Ask.fm data set collected by Hosseinmardi et al. ( 2014b ). The y used snowball sampling, collecting user profiles and a complete list of answered questions. W e remov e all the question-answer pairs where the identity of the questioner is hidden. Our Ask.fm data set consists of 48 Cyberbullying Identification Using P articipant-V ocabulary Consistency T able 1. Identified b ullying bigrams detected by participant- vocab ulary consistency from T witter and Ask.fm data sets. Data Set Selected High-Scoring W ords T witter sh*tstain arisew , c*nt lying, w*gger , commi f*ggot, sp*nkb ucket lowlife, f*cking nutter , blacko wned whitetrash, monster hatchling, f*ggot dumb*ss, *ssface mcb*ober , ignorant *sshat Ask.fm total d*ck, blaky , ilysm n*gger, fat sl*t, pathetic waste, loose p*ssy , c*cky b*stard, wifi b*tch, que*n c*nt, stupid hoee, sleep p*ssy , worthless sh*t, ilysm n*gger 41,833 users and 286,767 question-answer pairs. W e compare our method with two baselines. The first is co-occurrence . All words or bigrams that occur in the same tweet as any seed word are given the score 1, and all other words have score 0. The second baseline is dynamic query expansion (DQE) ( Ramakrishnan et al. , 2014 ). DQE e xtracts messages containing seed w ords, then computes the document fr equency score of each word. It then iterates selection of the k highest-scoring keywords and re-extraction of relev ant messages until it reaches a stable state. W e ev aluate performance by considering held out words from our full curse word dictionary as the rele vant target words. W e measure the true positiv e rate and the false posi- tiv e rate, computing the receiv er order characteristic (ROC) curve for each compared method. Fig. 2 contains the R OC curves for both T witter and Ask.fm. Co-occurrence only indicates whether words co-occur or not, so it forms a single point in the R OC space. Our PVC model and DQE compute real-valued scores for words and generate curves. DQE produces very high precision, but does not recover many of the target words. Howe ver , co-occurrence detects a high proportion of the target words, but at the cost of also recovering a lar ge fraction of non-target words. PVC is able to recover a much higher proportion of the target words comparing DQE. PVC enables a good compromise between recall and precision. W e also compute the average score of target words, non- target words, and all of the words. If the algorithm succeeds, the average target-word score should be higher than the ov erall av erage. For both T witter and Ask.fm, our proposed PVC model can capture target words much better than baselines. W e measured ho w many standard deviations the a verage tar get-word score is above the ov er- all a verage. For T witter , PVC provides a lift of around 1.5 standard deviations over the overall av erage, while DQE only produces a lift of 0.242. W e also observe the same beha vior for Ask.fm: PVC learns scores that ha ve a defined lift between the overall a verage word score and the av erage target word score (0.825). DQE produces a small lift (0.0099). Co-occurrence has no apparent lift. By manually examining the 1,000 highest scoring words, we find many seemingly v alid bullying words. These de- tected curse words include sexual, sexist, racist, and LGBT (lesbian, gay , bisexual, and transgender) slurs. T able 1 lists some of these high-scoring words from our experiments. The PVC algorithm also computes bully and victim scores for users. By studying the profiles of highly scored victims in Ask.fm, we noticed that some of these users do appear to be bullied. This happens in T witter as well, in which some detected high scoring users are often using offensi ve language in their tweets. Fig. 3 shows some bullying comments to an Ask.fm user and her responses, all of which contain offensi ve language and seem highly inflammatory . Figure 3. Example of an Ask.fm con versation containing possible bullying and hea vy usage of offensiv e language. 5. Conclusion In this paper, we proposed the participant-vocab ulary con- sistency method to simultaneously discov er victims, insti- gators, and vocabulary of words indicates bullying. Start- ing with seed dictionary of high-precision bullying indica- tors, we optimize an objectiv e function that seeks consis- tency between the scores of the participants in each interac- tion and the scores of the language use. For e valuation, we perform our e xperiments on data from T witter and Ask.fm, services known to contain high frequencies of bullying. Our experiments indicate that our method can successfully detect new bullying vocabulary . W e are currently working on creating a more formal probabilistic model for bullying to robustly incorporate noise and uncertainty . 49 Cyberbullying Identification Using P articipant-V ocabulary Consistency References American Academy of Child Adolescent Psychiatry . Facts for families guide. the american academy of child adolescent psychiatry . 2016. URL http: //www.aacap.org/AACAP/Families_and_ Youth/Facts_for_Families/FFF- Guide/ FFF- Guide- Home.aspx . Bellmore, Amy , Calvin, Angela J., Xu, Jun-Ming, and Zhu, Xiaojin. The fiv e w’ s of b ullying on twitter: Who, what, why , where, and when. Computers in Human Behavior , 44:305–314, 2015. Boyd, Danah. It’s Complicated . Y ale Univ ersity Press, 2014. Chen, Y ing, Zhou, Y ilu, Zhu, Sencun, and Xu, Heng. Detecting of fensi ve language in social media to protect adolescent online safety . International Confer ence on Privacy , Security , Risk and T rust (P ASSA T), and International Conference on Social Computing (SocialCom) , pp. 71–80, 2012. Dinakar , Karthik, Reichart, Roi, and Lieberman, Henry . Modeling the detection of te xtual cyberb ullying. International Confer ence on W eblog and Social Media - Social Mobile W eb W orkshop , 2011. ditchthelabel.org. Ditch the label-anti-bullying charity: The annual cyberb ullying survey . 2013. URL http: //www.ditchthelabel.org/ . Goldman, Russell. T eens indicted after alle gedly taunting girl who hanged herself. 2010. URL http:// abcnews.go.com/Technology/TheLaw/ . Hosseinmardi, Homa, Han, Richard, Lv , Qin, Mishra, Shiv akant, and Ghasemianlangroodi, Amir . T ow ards understanding cyberb ullying behavior in a semi- anonymous social network. Advances in Social Networks Analysis and Mining (ASON AM), 2014 IEEE/A CM International Confer ence on , pp. 244–252, August 2014a. Hosseinmardi, Homa, Li, Shaosong, Y ang, Zhili, Lv , Qin, Rafiq, Rahat Ibn, Han, Richard, and Mishra, Shiv akant. A comparison of common users across instagram and ask.fm to better understand cyberb ullying. Big Data and Cloud Computing (BdCloud), 2014 IEEE F ourth International Confer ence on , pp. 355–362, 2014b. Hosseinmardi, Homa, Mattson, Sabrina Arredondo, Rafiq, Rahat Ibn, Han, Richard, Lv , Qin, and Mishra, Shiv akant. Detection of cyberb ullying incidents on the instagram social network. Association for the Advancement of Artificial Intelligence , 2015. Huang, Qianjia and Singh, V iv ek Kumar . Cyber bullying detection using social and textual analysis. SAM ’14 Proceedings of the 3r d International W orkshop on Socially-A war e Multimedia , pp. 3–6, 2014. Mahendiran, Aravindan, W ang, W ei, Arredondo, Jaime, Lira, Sanchez, Huang, Bert, Getoor , Lise, Mares, David, and Ramakrishnan, Naren. Discovering e volving political vocab ulary in social media. Margono, Hendro, Y i, Xun, and Raikundalia, Gitesh K. Mining indonesian cyber bullying patterns in social networks. Pr oceedings of the Thirty-Seventh Aus- tralasian Computer Science Confer ence (ACSC 2014) , 147, January 2014. Massoudi, Kamran, Tsagkias, Manos, de Rijke, Maarten, and W eerkamp, W outer . Incorporating query e xpansion and quality indicators in searching microblog posts. AIR11. Springer , 15(5):362–367, Nov ember 2011. Nahar , V inita, Li, Xue, and Pang, Chaoyi. An effecti ve approach for cyberb ullying detection. Communications in Information Science and Management Engineering. , 3(5):238–247, May 2013. Ptaszynski, Michal, Dybala, Pawel, Matsuba, T atsuaki, Masui, Fumito, Rzepka, Rafal, and Araki, Kenji. Machine learning and affect analysis against cyber- bullying. In the 36th AISB , pp. 7–16), 2010. Ramakrishnan, Naren, Butler, Patrick, Muthiah, Sathap- pan, Self, Nathan, Khandpur, Rupinder, and et al. ‘Beating the news’ with EMBERS: Forecasting civil unrest using open source indicators. KDD , 2014. Reynolds, K elly , Kontostathis, April, and Edwards, L ynne. Using machine learning to detect cyberbullying. 10th International Conference on Mac hine Learning and Applications and W orkshops (ICMLA) , 2:241–4, 2011. Smith-Spark, Laura. Hanna Smith suicide fuels calls for action on ask.fm cyberbullying. 2013. URL http://www.cnn.com/2013/08/07/world/ europe/uk- social- media- bullying/ . W ang, Jing, Iannotti, Ronald J., and Nansel, T onja R. School b ullying among us adolescents: Physical, verbal, relational and cyber . Journal of Adolescent Health , 45: 368–375, 2009. Y in, Dawei, Xue, Zhenzhen, Hong, Liangjie, Davison, Brian D., Kontostathis, April, and Edwards, L ynne. Detection of harassment on web 2.0. Pr oceedings of the Content Analysis in the WEB 2.0 , 2009. 50

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment