Towards stationary time-vertex signal processing

Graph-based methods for signal processing have shown promise for the analysis of data exhibiting irregular structure, such as those found in social, transportation, and sensor networks. Yet, though these systems are often dynamic, state-of-the-art me…

Authors: Nathanael Perraudin, Andreas Loukas, Francesco Grassi

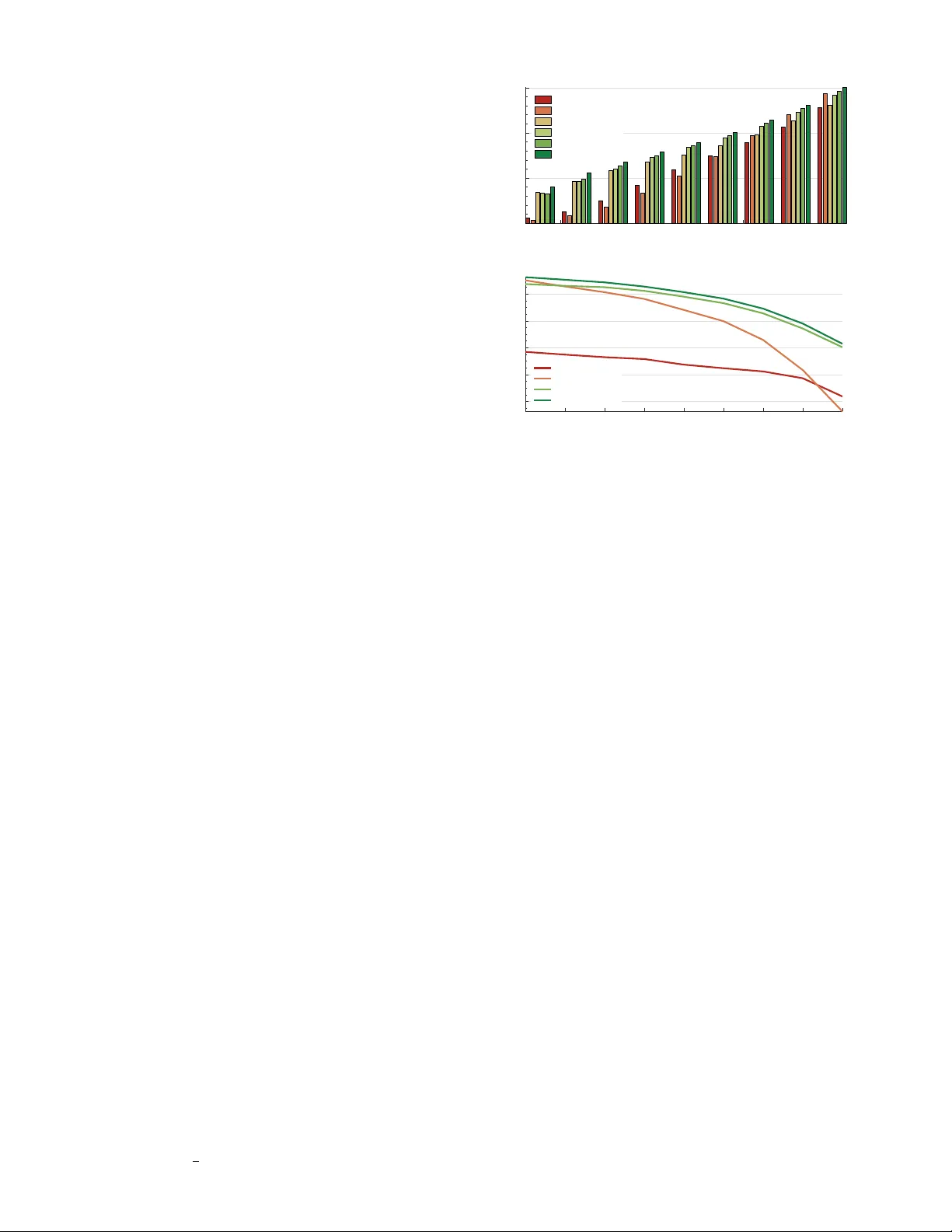

1 T o wards stationary time-verte x signal processing Nathana ¨ el Perraudin ∗ and Andreas Loukas ∗ and Francesco Grassi and Pierre V anderghe ynst ´ Ecole Polytechnique F ´ ed ´ erale Lausanne, Switzerland Abstract —Graph-based methods f or signal processing hav e shown promise for the analysis of data exhibiting irregular structure, such as those found in social, transportation, and sensor networks. Y et, though these systems are often dynamic, state-of-the-art methods for signal pr ocessing on graphs ignore the dimension of time, treating successi ve graph signals indepen- dently or taking a global av erage. T o address this shortcoming, this paper considers the statistical analysis of time-varying graph signals. W e introduce a novel definition of joint (time-vertex) stationarity , which generalizes the classical definition of time sta- tionarity and the more recent definition appropriate for graphs. Joint stationarity gi ves rise to a scalable Wiener optimization framework for joint denoising, semi-supervised learning, or more generally inv ersing a linear operator , that is provably optimal. Experimental results on real weather data demonstrate that taking into account graph and time dimensions jointly can yield significant accuracy improvements in the reconstruction effort. I . I N T RO D U C T I O N Whether examining opinion dichotomy in social net- works [1], how traffic ev olves in the roads of a city [2], or neuronal activ ation patterns present in the brain [3], much of the high-dimensional data one encounters exhibit comple x non-euclidean properties. This realization has been the driving force behind recent efforts to re-in vent the mathematical mod- els used for data analysis. W ithin the field of signal processing, one of the main research thrusts has been to extend harmonic analysis to graph signals, i.e., signals supported on the vertices of irregular graphs. The key breakthrough in the field has been the introduction of a notion of frequency appropriate for graph signals and of the associated graph Fourier transform (GFT). Because it enables us to process signals taking into account complex relations between variables, the GFT has lead to advances in problems such as denoising [4] and semi- supervised learning [5], [6]. Y et, state-of-the-art graph frequency based methods often fail to produce useful results when applied to real datasets. One of the main reasons underlying this shortcoming is that they ignore the time dimension, for example by treating successiv e signals independently or performing a global average [3], [7], [8]. On the contrary , many of the systems to which graph signal processing is applied to are dynamic. Consider for instance a sensor network, and suppose that we want to infer the weather conditions on a mountain gi ven temperature measurements from a small set of weather stations. Ap- proaches that do not take into account the temporal ev olution of weather will be biased by seasonal variations and unable to provide insights about transient phenomena. Moreover , when the weather dynamics are slow and predictable, taking into account the time dimension, e.g., by imposing a smoothness A. Loukas and N. Perraudin contributed equally to this work. prior , can yield accuracy improvements in the reconstruction effort. Motiv ated by this need, this paper considers the statis- tical analysis of time-evolving graph signals . Our results are inspired by the recent introduction of a joint temporal and graph Fourier transform (JFT), a generalization of GFT appropriate for time-varying graph signals [9], and the re- cent generalization of stationarity for graphs [7], [10], [11]. Our main contribution is a novel definition of time-vertex (wide-sense) stationarity , or joint stationarity for short. W e believ e that the proposed definition is natural, at it elegantly generalizes existing definitions of stationarity in the time and verte x domains. W e sho w that joint stationarity carries along important properties classically associated with stationarity . Moreov er , our definition leads to a W iener framew ork for solv- ing denoising and interpolating time-varying graph signals, that yields superior performance compared to state-of-the-art methods in time or verte x domains. The proposed frame work is composed out of two key components: a scalable joint power spectral density estimation method, and an optimization framew ork suitable for deconv olution under additive error . The latter is shown to be optimal in the mean-squared error sense. Experiments with a real weather dataset illustrate the superior performance of our method, ultimately demonstrating that joint stationarity is a useful assumption in practice. I I . P R E L I M I NA R I E S Our objective is to model and predict the e v olution of graph signals, i.e., signals supported on the vertices V = { v 1 , v 2 , . . . , v N } of a weighted undirected graph G = ( V , E , W G ) , with E the set of edges and W G the weighted adjacency matrix. A more con venient matrix representa- tion of G is the (combinatorial 1 ) Laplacian matrix L G = diag ( W G 1 N ) − W G , where 1 N is the all-ones v ector of size N , and diag ( W G 1 N ) is the diagonal de gree matrix. Harmonic vertex analysis. In the context of graph signal processing, the importance of the Laplacian matrix stems from it gi ving rise to a graph-specific notion of frequency . The Graph F ourier T ransform (GFT) of a graph signal x ∈ R N is defined as GFT { x } = U ∗ G x , where U G is the eigen vector matrix of L G and thus L G = U G Λ G U ∗ G . The GFT allows us to extend filtering to graphs [13], [14], [15]. Filtering a signal x with a graph filter h ( L G ) corresponds to element- wise multiplication in the spectral domain h ( L G ) x ∆ = GFT -1 { h ( Λ G ) ◦ GFT { x }} = U G h ( Λ G ) U ∗ G x , 1 Though we use the combinatorial Laplacian in our presentation, our results are applicable to any positive semi-definite matrix representation of a graph or to the recently introduced shift operator [12]. 2 where the scalar function h : R + 7→ R , referred to as the graph frequency response, has been applied to each diagonal entry of Λ G . It is often con venient to represent the diagonal of matrix h ( Λ G ) as a vector , in which case we write h = diag ( h ( Λ G )) . The notation U ∗ G denotes the transposed complex conjugate of U G , U | G the transpose of U G , and ¯ U G the complex conjugate of U G . W e will also use the notion of graph localization [15], [7], a generalization of the translation operator used in the classical setting appropriate for graphs 2 . Localizing a filter with frequency response h onto vertex v i reads T G i h ∆ = h ( L G ) δ i , (1) where δ i is a Kronecker delta centered at vertex v i . For a sufficiently regular function h , this operation localizes the filter around v i [15, Theorem 1 and Corollary 2]. Ev aluated at the i 2 -th verte x, the abo ve expression becomes T G i 1 h ( i 2 ) = N X n =1 h ( λ n ) ¯ u n ( i 1 ) u n ( i 2 ) , (2) where we use the notation u n ( i ) = [ U G ] i,n and λ n = [ Λ G ] n,n . Note that in the expression above, the localization operator takes precedence over indexing and T G i 1 h ( i 2 ) = [ T G i 1 h ]( i 2 ) ; this con vention is used throughout this paper . The concept of localization is intimately linked to that of translation in the time domain. If T T τ h is the localization operator taken on a cycle graph of T vertices (representing time), localization is equiv alent to translation T T τ h ( t ) = T T 0 h ( t − τ ) , for all t, τ = 1 , . . . , T . (3) In simple words, for a cyclic graph, the localization operator computes the in verse Fourier transform of the frequency response h , and translates it to vertex v i . W e can verify this using the fact that the complex exponential Fourier basis form the eigen vector set of all cyclic graphs [16], which together with (2) implies that T T t 1 h ( t 2 ) = 1 T T X τ =1 h ( ω τ ) e − 2 π j ( τ − 1) t 1 T e 2 π j ( τ − 1) t 2 T = 1 T T X τ =1 h ( ω τ ) e 2 π j ( τ − 1)( t 2 − t 1 ) T = T T 0 h ( t 2 − t 1 ) . (4) Abov e T T 0 h = U T h is the in verse Fourier transform of h and U T is the orthonormal Fourier basis. In the case of irregular graphs, localization differs further from translation because the shape of the localized filter adapts to the graph and v aries as a function of its topology . Additional insights about the localization operator can be found in [15], [13], [17], [7]. Harmonic time-vertex analysis. Suppose that a graph signal x t is sampled at T successiv e regular intervals of unit length. The time-varying graph signal X = [ x 1 , x 2 , . . . , x T ] ∈ R N × T is then the matrix having graph signal x t as its t - th column. Equiv alently , X = x 1 , x 2 , . . . , x N | holds N 2 Stationarity is classically defined as the in variance of statistical moments of a signal with respect to translation. This definition ho wev er cannot be directly generalized to graphs, which do not possess regular structure and thus lack of an isometric translation operator . temporal signals x i ∈ R T , one for each vertex v i . Throughout this paper , we denote as x = vec ( X ) (without subscript) the vectorized representation of the matrix X . The frequency representation of X is giv en by the joint (time-verte x) Fourier transform (or JFT for short) JFT { X } = U ∗ G X ¯ U T , (5) where, once more, U G is the graph Laplacian eigen vector ma- trix, whereas ¯ U T is the complex conjugate of the DFT matrix divided by 1 / √ T . In fact, matrix U T is the eigenv ector matrix of the lag operator (or Laplacian matrix) L T = U T Λ T U ∗ T . Denote by Ω the diagonal matrix of angular frequencies (i.e., Ω tt = ω t = 2 π t/T ). In case L T is the lag operator , we have Λ T = e − j Ω , where j = √ − 1 . When L T is the Laplacian, we hav e Λ T = real I − e − j Ω . Expressed in vector form, the joint Fourier transform becomes JFT { x } = U ∗ J x , where U J = U T ⊗ U G is unitary , and operator ( ⊗ ) denotes the kro- neker product. The in verse joint Fourier transforms in matrix and vector form are, respecti vely , JFT -1 { X } = U G X U | T and JFT -1 { x } = U J x . For an in-depth discussion of JFT and its properties, we refer the reader to [9]. Lev eraging the definition of the JFT , filtering and localiza- tion can also be extended to the joint (time-vertex) domain. A joint filter h ( L J ) is a function defined in the joint spectral domain h : R + × R 7→ R that is ev aluated at the graph eigen values λ G and the angular frequencies ω . The output of a joint filter is h ( L J ) x ∆ = U J h ( Λ G , Ω ) U ∗ J x , (6) where h ( Λ G , Ω ) is a N T × N T diagonal matrix with [ h ( Λ G , Ω )] k,k = h ( λ n , ω τ ) and k = N ( τ − 1) + n . Equiv- alently , if we define the matrix H of dimension N × T as H n,τ = h ( λ n , ω τ ) for ev ery graph frequency λ n and temporal frequency ω τ , we ha ve h ( L J ) x = vec JFT -1 { H ◦ JFT { X } ) } , (7) with ( ◦ ) being the element-wise multiplication (Hadamard product). In an analogy to (1), we define the joint localization operator as T J i,t h ∆ = mat ( h ( L J ) ( δ t ⊗ δ i )) (8) = JFT -1 { H ◦ JFT { δ i δ | t } ) } (9) where mat ( · ) is the matricization operator , such that mat ( vec ( X )) = X . In order to link (8) with graph localization (1) and the classical translation operator, we observe the following relations T J i 1 ,t 1 h ( i 2 , t 2 ) = 1 T N ,T X n =1 τ =1 h ( λ n , ω k ) ¯ u n ( i 1 ) u n ( i 2 ) e 2 π j ( τ − 1)( t 2 − t 1 ) T = T J i 1 , 0 h ( i 2 , t 2 − t 1 ) (10) = 1 T N X n =1 " T X τ =1 h ( λ n , ω τ ) e 2 π j ( τ − 1)( t 2 − t 1 ) T # ¯ u n ( i 1 ) u n ( i 2 ) = N X n =1 T T t 1 H n, · ( t 2 ) ¯ u n ( i 1 ) u n ( i 2 ) (11) 3 = 1 T T X τ =1 " N X n =1 h ( λ n , ω τ ) ¯ u n ( i 1 ) u n ( i 2 ) # e 2 π j ( τ − 1)( t 2 − t 1 ) T = 1 T T X τ =1 T G i 1 H · ,τ ( i 2 ) e 2 π j ( τ − 1)( t 2 − t 1 ) T . (12) The above equations provide three key insights about the joint localization operator: 1) From (10), we observe that the localization operator performs a translation along the time dimension. 2) From (11), it follows that joint localization consist of first localizing (translating) independently in time each line of the matrix H and then localizing independently on the graph each column of the resulting matrix. Joint localization is thus equiv alent to a successive application of a graph and and a time localization operator . 3) Furthermore, according to (12), the successive localiza- tion in time and graph can be performed in any order . When the filter is separable, i.e., when the joint frequency response can be written as the product of a frequency response defined solely in the verte x domain and one in the time domain h ( λ, ω ) = h 1 ( λ ) h 2 ( ω ) , the joint localization is simply h ( L J ) ( δ t ⊗ δ i ) = vec ( h ( L G )( δ t ⊗ δ | i ) h ( L T )) . (13) Nev ertheless, for this work, we assume that the filter is not separable as it is a too restrictive hypothesis. I I I . J O I N T T I M E - V E RT E X S TA T I O NA R I T Y Let X be a discrete multiv ariate stochastic process with finite number of time-steps T that is indexed by verte x v i and time t . W e refer to such processes as joint time-vertex pr ocesses , or joint processes for short. T o put our results in context, let us first revie w the estab- lished definitions of stationarity over time and vertex domains, respectiv ely . Our definition will emerge us a consequence of both. W e note that, although our exposition is self-contained, the reader will benefit from familiarizing with previous work on stationarity on graphs [7]. Definition 1 (Time stationarity) . A joint pr ocess X is T ime W ide-Sense Stationary (TWSS), if and only if the following two pr operties hold independently for each vertex v i : 1) The expected value is constant over the time domain E x i = c i 1 T . 2) There exists a function γ i , for which [ Σ x i ] t, · = h E x i x i ∗ − E x i E x i ∗ i t, · = T T t γ i Function γ i is the autocorr elation function of signal x i in the F ourier domain, and is also r eferred to as T ime P ower Spectral Density (TPSD). W e remind the reader that on a cyclic graph, localizing the TPSD is equiv alent to translating the autocorrelation. Thus, using (9) we recover the classical definition, where the autocorrelation function depends only on the time difference: [ Σ x i ] t,τ = T T 0 γ i ( t − τ ) . Simply put, assuming time stationar- ity is equiv alent to asserting that the statistics of the tw o first moments are independent of the time. W e also observe that the TPSD is the Fourier transform of the autocorrelation, agreeing with the Wiener -Khintchine Theorem [18]. T o summarize, TPSD encodes the statistics of the signal in the spectral domain. This consideration allows us to generalize the concept of stationarity to graph signals. Please refer to the work of Perraudin and V andergheynst [7] for a more detailled study . W e express a v ariation of their definition in the follo wing. Definition 2 (V ertex stationarity) . A joint process X = [ x 1 x 2 . . . x T ] is called V erte x W ide-Sense (or second or der) Stationary (VWSS), if and only if the following two properties hold independently for eac h time t : 1) The expected value is in the null space of the Laplacian L G E [ x t ] = 0 N . 2) There exists a gr aph filter s t ( L G ) , for which [ Σ x t ] i, · = [ E [ x t x ∗ t ] − E [ x t ] E [ x ∗ t ]] i, · = T G i s t . Function s t is the autocorr elation function of signal x t in the graph F ourier domain and is also referr ed to as V ertex P ower Spectral Density (VPSD). Considering that the null space of L T in both the normal- ized and the combinatorial case is the span of the constant eigen vector 1 T , the first condition of the above definition is analogous to the corresponding condition of the time stationar- ity definition. Moreov er , the condition for the second moment is a natural generalization of the second condition of time stationarity where, instead of imposing translation in v ariance, we suppose inv ariance under the localization operator . This second condition is in fact equivalent to a generalization of the Wiener -Khintchine theorem and implies that Σ x t is jointly diagonalizable with L G (in TWSS the covariance is T oeplitz and thus also diagonalizable with the DFT matrix U T ). W e now unify the TWSS and GWSS in order to lev erage both the time and v ertex domain statistics. Definition 3 (Joint stationarity) . A pr ocess X is called Jointly (or time-vertex) W ide-Sense Stationary (JWSS), if and only if its vector form x = vec ( X ) satisfies the following properties: 1) The expected value is in the null space of the Laplacian L J E [ x ] = 0 N T . 2) There exists a joint filter h ( L J ) , for which [ Σ x ] k, · = [ E [ xx ∗ ] − E [ x ] E [ x ∗ ]] k, · = vec T J i,t h , wher e k = N ( t − 1) + i . Function h is the autocorrelation function of signal x in the joint F ourier domain and is also r eferr ed to as time-vertex power spectral density or J oint P ower Spectral Density (JPSD) for short. The definition above is in fact equiv alent to stating that the mean is constant, and the co v ariance matrix Σ x is jointly di- agonalizable with the joint Laplacian L J . The latter statement is (also) a generalization the Wiener -Khintchine theorem and is prov en ne xt. 4 Theorem 1. A pr ocess X is JWSS if and only if 1) L J E [ x ] = 0 N T , and 2) its covariance matrix is jointly diagonalizable by the joint F ourier basis U J . Pr oof: T o prove an equiv alence relation between the two definitions (i.e., the JWSS definition and the one stated by the theorem) we will prov e a one-to-one equiv alence between their respective conditions. Clearly the first condi- tions of both definitions are identical. The second condition [ Σ x ] k, · = v ec T J i,t h of the joint stationarity definition together with (8) assert that the covariance being a joint filter Σ x = h ( L J ) for some function h . W e therefore have that Σ x = U J h ( Λ G , Ω ) U ∗ J with h ( Λ G , Ω ) diagonal, which implies our claim. Interestingly , assuming joint stationarity is equiv alent to assuming stationarity in both domains at the same time. Theorem 2. If a joint pr ocess X is JWSS, then it is both TWSS and GWSS. Pr oof: It is straightforward to see that L J E [ x ] = 0 N T if and only if both L T E [ x t ] = 0 N and L G E x i = 0 T , hold for all t and i . W e still need to show that the second- order moment properties of TWSS and VWSS are equiv alent to that of JWSS. If a process is joint stationary , then from (12) we hav e that, for each vertex v i [ Σ x i ] t 1 ,t 2 = [ T J i,t 1 h ]( i, t 2 ) = 1 T T X τ =1 T G i H · ,τ ( i ) e 2 π j ( τ − 1)( t 2 − t 1 ) T which is equi v alent to asserting that x i is stationary in time with TPSD γ i ( ω τ ) = [ T G i H · ,τ ]( i ) . Similarly , using (11), we find that for each time t [ Σ x t ] i 1 ,i 2 = [ T J i 1 ,t h ]( i 2 , t ) = N X n =1 T T t H n, · ( t ) ¯ u n ( i 1 ) u n ( i 2 ) meaning that process x t is stationary with VPSD s t ( λ n ) = [ T G t H n, · ]( t ) . Example 1 (White i.i.d. noise) . White i.i.d. noise w ∈ R N T is JWSS for any graph. Indeed, the first moment E [ w ] is constant for any time and vertex. Mor eover , due to being an identity matrix, the covariance of w is diagonalized by the joint F ourier basis of any graph Σ w = I = U J I U ∗ J . This last equation tells us that the JPSD is constant, which implies that similar to the classical case, white noise contains all joint (time-vertex) frequencies. An interesting property of JWSS processes is that station- arity is preserved through a filtering operation. Theorem 3. When a joint filter f ( L J ) is applied to a JWSS process X , the result Y r emains JWSS with mean f (0 , 0) E [ X ] and JPSD that satisfies h Y ( λ, ω ) = f 2 ( λ, ω ) · h X ( λ, ω ) . (14) Pr oof: The output of a filter f ( L J ) can be written in vector form as y = f ( L J ) . If the input signal x is JWSS, we can confirm that the first moment of the filter output is zero, E [ f ( L J ) x ] = f ( L J ) E [ x ] = f (0 , 0) E [ x ] . The last equality follows from the fact that by definition E [ x ] is in the null space of L J . The computation of the second moment gives Σ y = E f ( L J ) x ( f ( L J ) x ) ∗ − E [ h ( L J ) x ] E [( f ( L J ) x ) ∗ ] = f ( L J ) E [ xx ∗ ] f ( L J ) − f ( L J ) E [ x ] E [ x ∗ ] f ( L J ) ∗ = f ( L J ) Σ x f ( L J ) ∗ = U J f 2 ( Λ G , Ω ) h X ( Λ G , Ω ) U ∗ J , which is, from Theorem 1, JWSS as it is diagonalizable by U J . As the following diagram illustrates, Theorem 3 provides a simple way to artificially produce JWSS signals with a prescribed PSD f 2 by simply filtering white noise with the joint filter f ( L J ) . The resulting signal will be stationary with PSD f 2 and this holds for white noise abiding to any distribution (not only Gaussian). In the sequel, we assume for simplicity that the signal is centered at 0 , i.e., E [ x ] = 0 · 1 . Whenev er it is clear from the context, in the following we simply refer to the TPSD, VPSD, and JPSD as PSD. I V . J O I N T P OW E R S P E C T R A L D E N S I T Y E S T I M A T I O N As the JPSD is central in our method, we need a reliable way to compute it. Since we take into account the correlation both in the time and in the vertex domain, the actual size of the covariance matrix Σ x is N T × N T . In many cases, this matrix is not computable nor can be even stored. Additionally , if attempt to estimate it using classical cov ariance estimation methods, the number of samples necessary for obtaining a reasonable estimation accurac y can be prohibiti ve. The number of samples needed for obtaining a good sample covariance matrix of an n -dimensional process is generally not known, but for distributions with finite second moment it has been shown to be O ( n log n ) by Rudelson [19], [20]. In our case, this theorem implies that we need O ( N T log ( N T )) signals, of N T variables each, to obtain a good estimate of the statistics of a joint process. T o circumvent this issue, we leverage the time-vertex struc- ture of the data. The basic idea behind our approach stems from two established methods used to estimate the TPSD of a temporal signal, namely Bartlett’ s and W elch’ s methods [21], which are summarized belo w . TPSD estimation methods. In Bartlett’ s method, the signal (timeseries) is first cut into equally sized segments without ov erlap. Then, the Fourier transform of each segment is computed. Finally , the PSD is obtained by averaging over segments the squared amplitude of the Fourier coefficients. W elch’ s method [22] is a generalization that works with ov erlapping se gments. W e can see the TPSD estimation of both methods as the averaging over time of the squared coefficients of a Short Time Fourier T ransform (STFT). W e remind the reader that STFT is used to extract the frequency content of a 5 temporal signal at a given time, by first selecting a part of the signal using a windo w and then compute the discrete F ourier transform. More concretely , for a discrete signal s of length T , the circular discrete sampled STFT of s at the m -th (out of M ) frequency band, and under windo w g is STFT { s } ( k , m ) ∆ = T X t =1 s ( t ) g ( t k ) e − 2 πj ( t − 1)( m − 1) M , where t k = mod ( t − a ( k − 1) , T ) + 1 , scalar a is the shift in time between two successive windows [23, equation 1], and mod ( t, T ) finds the remainder after division by T i.e., mod ( t, T ) = t − T b t T c . Note that k = 0 , 1 , . . . , b T a c − 1 is the time band centered at k a and that m = 1 , . . . , M is the frequency band index. For additional insights about this transform, we refer the reader to [24], [25]. Joint PSD estimation. Based on the idea that the Bartlett method is an average of STFT coefficients, we propose to use the GFT of the STFT as a tool to estimate the joint PSD. Consider a time window g and a time-verte x signal X . W e first define the coef ficients’ tensor as C n,k,m ∆ = N X i =1 [ U G ] i,n STFT { x i } ( k , m ) = N X i =1 [ U G ] i,n T X t =1 X i,t g ( t k ) e − 2 π j ( t − 1)( m − 1) M . A usual parameter for M is the support size of g . Then, for half-ov erlapping windows, we set a to M / 2 . For any discrete verte x frequency λ n and time frequency ω m = 2 π m/ M , our JPSD estimator is ˜ h ( λ n , ω m ) ∆ = a T k g k 2 2 b T /a c− 1 X k =0 C 2 n,k,m (15) In order to get an estimate of h at ω 6 = ω m , we interpo- late between the known points. Alternatively , with sufficient computation power , one may set M = T . Though alternative choices are possible, we suggest using the iterated sine windo w g ( t ) = sin 0 . 5 π cos ( π t/ M ) 2 χ [ − M / 2 ,M/ 2] ( t ) , where χ [ − M / 2 ,M/ 2] ( t ) = 1 if t ∈ [ − M / 2 , M / 2] and 0 otherwise, as it turns the STFT into a tight operator for M = 2 a . W e defer an error analysis of the estimator for the longer version of this paper . Other PSD estimation methods. In case T N , two problems arise with the aforementioned method. First, we cannot compute the graph Fourier basis U G , and second the number of sample in time might not be sufficient to av erage ov er time. T o circumvent this problem, one can do the av erage ov er the graph vertex using a technique similar to the PSD estimator of [7]. This technique will be studied in future work. V . O P T I M I Z A T I O N F R A M E W O R K W e can le verage our definition of stationarity to generalize the optimization framework of [7], useful for denoising, inter- polating, and more generally deconv oling stationary processes. Concretely , suppose that our measurements y are generated by a linear model y = Ax + w , (16) where, as in the rest of this document, x and y are the vectorized version of X , Y . Further , suppose that the JPSD of x is h X , whereas the noise w is zero mean has JPSD h W and may follo w any distribution. Matrix A is a general linear operator , not assumed to be jointly diagonalizable with L J . Tikhono v-regularization. Whet the signal x v aries smoothly on the graph, i.e is low frequency based, the classical ap- proach of finding x from y , consists of solving the following optimization scheme, commonly referred to as Tikhono v- regularization arg min x k Ax − y k 2 2 + α x ∗ L J x (17) Notice that the prior abo ve is separable into two terms x ∗ L J x = tr ( X ∗ L G X ) + tr ( X L T X ∗ ) . (18) As a result, optimization problem (17) can only encode a particular joint time-verte x structure. Additionally this scheme requires the parameter α to be tuned and does not take into account the statistical structure of the signals. Wiener optimization framework. W e instead propose to recov er x as the solution of the Wiener optimization problem ˙ x = arg min x k Ax − y k 2 2 + k f ( L J )( x − E [ x ]) k 2 2 , (19) where f ( λ, ω ) are the joint Fourier penalization weights, defined as f ( λ, ω ) ∆ = s h W ( λ, ω ) h X ( λ, ω ) = 1 p SNR( λ, ω ) . (20) In the noise-less case, one alternatively solves the problem ˙ x = arg min x k h − 1 2 X ( L J ) x k 2 2 , subject to Ax = y . (21) Intuitiv ely , the weight f ( λ, ω ) heavily penalizes frequencies associated with low SNR and vice-versa. Formally , we can show that: • If X is a Gaussian process, then the solution of Prob- lem (19) coincides with a MAP estimator . • If A is a masking operator , then the solution of Prob- lem (19) coincides with the minimum mean square error linear estimator . • If A = a ( L J ) is a joint filter, then the solution of Problem (19) is a joint Wiener filter [9]. The proofs are generalizations of Theorems 3,4 and 5 of [7]. Comparison to the MAP estimator . There are three main advantages of the Wiener optimization framework ov er a Gaussian MAP estimator based on an empirical covariance matrix estimate. Firstly , assuming stationarity allows for a more robust estimate of the covariance matrix. This is crucial in this problem since we typically expect the number of variable N × T to be large and an empirical estimate of the covariance matrix to be expensi ve. Secondly , storing the 6 cov ariance might not be possible as it consists of O (( N T ) 2 ) elements. On the contrary , the JPSD h X has only N T ele- ments. Finally , thanks to proximal splitting methods, we can deriv e an algorithm for solving Problem (19) that requires only the application of A and spectral graph filtering. On the contrary the classical Gaussian MAP estimator requires the in verse of a large part of the cov ariance matrix. V I . E X P E R I M E N T S W e apply our methods to a weather dataset depicting the temperature of 32 weather stations, o ver a span of 31 days. Our experiment aims to show that 1) joint stationarity is a useful model, even in datasets which may violate the strict conditions of our definition, and 2) that time-vertex stationarity can yield a significant increase in denoising and recovery accuracy , as compared to time- or vertex-based methods, on a real dataset. Experimental setup. The French national meteorological service has published in open access a dataset 3 with hourly weather observations collected during the Month of January 2014 in the region of Brest (France). The graph is built from the coordinates of the weather stations by connecting all the neighbors in a gi ven radius with a weight function [ W G ] i 1 ,i 2 = exp( − k d ( i 1 , i 2 ) 2 ) , where d ( i 1 , i 2 ) is the euclidean distance between the stations i 1 and i 2 . Parameter k is adjusted to as obtain an average degree around 3 ( k , howe ver , is not a sensitiv e parameter). As sole pre-processing, we remove the mean (over time and stations) of the temperature. This is equiv alent to removing the first moment. The dataset, which consisted of a total of T = 744 timesteps, was split into two parts of size ρT and (1 − ρ ) T , respectiv ely . W e use the first part of the dataset to estimate the PSD and the second to quantify the joint filter performance. W e compare our joint method to the state-of-the-art wiener filters for the disjoint time/verte x domains, which are known to outperform non-statistics based methods, such as graph/time T ikhonov and graph/time TV . T o highlight the benefit of the joint approach, in the disjoint cases we use the entire dataset to estimate the PSD (for ρ = 1 the same data are used for both training and testing). Denoising. For this experiment, we add Gaussian random noise to the data and remove the noise thanks to Wiener filter ( A = I in problem (19)). The result is displayed in Figure 1. Joint stationarity outperforms time or v ertex stationarity especially when the noise level is high. Indeed, joint stationarity allows the estimator to average over more samples. In order to obtain a good denoising, we need a good JPSD estimation. The effect of the dataset size can be observed through the parameter ρ , with larger ρ resulting in higher accuracy . Especially for large input SNR, the joint approach becomes particularly meaningful as it outperforms other approaches, even when a very small portion of the data is used for JPSD estimation (whereas the time and v ertex based methods ρ = 1 , meaning that they use the entire dataset for PSD estimation). 3 Access to the raw data is possible directly from https://donneespubliques. meteofrance.fr/donnees libres/Hackathon/RADOMEH.tar .gz -20 -15 -10 -5 0 5 10 Input SNR (dB) 0 5 10 15 Output SNR (dB) V ertex, ρ = 100% Time, ρ = 100% Joint, ρ = 25% Joint, ρ = 50% Joint, ρ = 75% Joint, ρ = 100% (a) Denoising 10 20 30 40 50 60 70 80 90 Pe rcen t of mis sing v alues 6 8 10 12 14 Output - SNR (dB ) V ertex, ρ = 100% Time, ρ = 100% Joint, ρ = 75% Joint, ρ = 100% (b) Recovery Fig. 1: Experiments on Molene temperatures. The joint ap- proach becomes especially meaningful when the av ailable data are very noisy or are few . The recovery performance is slightly improv ed when a larger percentage ρ of data are av ailable for training. Recovery . W e also consider a recovery problem, where a gi ven percentage of entries of matrix X is missing. Figure 1 depicts the recovery error obtained using problem (21). Again, we observe a significant improvement ov er competing methods. This improv ement is achiev ed because the joint approach lev erages the correlation both in the time and in the vertex domain: each random variable in a TWSS or VWSS process is dependent on only T − 1 or N − 1 other random variables, respectiv ely (rather than N T − 1 as in the joint case), implying a higher recov ery variance. V I I . C O N C L U S I O N This paper proposed a novel definition of (wide-sense) stationarity appropriate for time-varying graph signals. W e showed that joint stationarity possess a number of useful properties, that are familiar from the classical setting. Based on our definition, we proposed a W iener optimization framew ork and the accompanying PSD estimation method, which together can be used to for solving the problem of in verting a rank- deficient linear system under a jointly stationary input and disturbance. The proposed optimization frame work is optimal in the mean-squared error sense and scales well with the number of time samples. In our experiment with a weather dataset, the joint approach was shown to yield a significant benefit over disjoint statistical methods for signal denoising and recov ery . The longer version of this paper will expand our analysis and ev aluate our approach in a larger set of experiments. W e will additionally make a detailed complexity analysis and propose solutions to a void the computationaly expensiv e 7 diagonalization of the graph Laplacian L G . W e remark that our simulations were done using the GSPBOX [26], the UNLocBoX [27], and the L TF A T [23]. The code reproducing all figures will be made available soon. R E F E R E N C E S [1] L. A. Adamic and N. Glance, “The political blogosphere and the 2004 us election: divided they blog, ” in Proceedings of the 3r d international workshop on Link discovery . A CM, 2005, pp. 36–43. [2] P . Mohan, V . N. Padmanabhan, and R. Ramjee, “Nericell: rich mon- itoring of road and traffic conditions using mobile smartphones, ” in Pr oceedings of the 6th ACM conference on Embedded network sensor systems . A CM, 2008, pp. 323–336. [3] W . Huang, L. Goldsberry , N. F . W ymbs, S. T . Grafton, D. S. Bassett, and A. Ribeiro, “Graph frequency analysis of brain signals, ” arXiv preprint arXiv:1512.00037 , 2015. [4] F . Zhang and E. R. Hancock, “Graph spectral image smoothing using the heat kernel, ” P attern Recognition , vol. 41, no. 11, pp. 3328–3342, 2008. [5] A. J. Smola and R. Kondor , “Kernels and regularization on graphs, ” in Learning theory and kernel machines . Springer , 2003, pp. 144–158. [6] M. Belkin and P . Niyogi, “Semi-supervised learning on riemannian manifolds, ” Machine learning , vol. 56, no. 1-3, pp. 209–239, 2004. [7] N. Perraudin and P . V andergheynst, “Stationary signal processing on graphs, ” arXiv preprint , 2016. [8] V . Kalofolias, “How to learn a graph from smooth signals, ” arXiv pr eprint arXiv:1601.02513 , 2016. [9] A. Loukas and D. Foucard, “Frequency analysis of temporal graph signals, ” arXiv preprint , 2016. [10] B. Girault, “Stationary graph signals using an isometric graph transla- tion, ” in Signal Pr ocessing Conference (EUSIPCO), 2015 23rd Euro- pean . IEEE, 2015, pp. 1516–1520. [11] A. G. Marques, S. Segarra, G. Leus, and A. Ribeiro, “Stationary graph processes and spectral estimation, ” arXiv pr eprint arXiv:1603.04667 , 2016. [12] A. Sandryhaila and J. M. Moura, “Discrete signal processing on graphs, ” IEEE transactions on signal processing , vol. 61, pp. 1644–1656, 2013. [13] D. K. Hammond, P . V anderghe ynst, and R. Gribonv al, “W avelets on graphs via spectral graph theory, ” Applied and Computational Harmonic Analysis , vol. 30, no. 2, pp. 129–150, 2011. [14] D. I. Shuman, S. K. Narang, P . Frossard, A. Ortega, and P . V an- derghe ynst, “The emerging field of signal processing on graphs: Ex- tending high-dimensional data analysis to networks and other irregular domains, ” Signal Pr ocessing Magazine, IEEE , v ol. 30, no. 3, pp. 83–98, 2013. [15] D. I. Shuman, B. Ricaud, and P . V andergheynst, “V ertex-frequency analysis on graphs, ” arXiv preprint , 2013. [16] G. Strang, “The discrete cosine transform, ” SIAM r eview , v ol. 41, no. 1, pp. 135–147, 1999. [17] N. Perraudin, B. Ricaud, D. Shuman, and P . V anderghe ynst, “Global and local uncertainty principles for signals on graphs, ” arXiv preprint arXiv:1603.03030 , 2016. [18] N. Wiener , “Generalized harmonic analysis, ” Acta mathematica , vol. 55, no. 1, pp. 117–258, 1930. [19] M. Rudelson, “Random vectors in the isotropic position, ” Journal of Functional Analysis , vol. 164, no. 1, pp. 60–72, 1999. [20] R. V ershynin, “How close is the sample cov ariance matrix to the actual cov ariance matrix?” Journal of Theoretical Probability , vol. 25, no. 3, pp. 655–686, 2012. [21] M. S. Bartlett, “Periodogram analysis and continuous spectra, ” Biometrika , pp. 1–16, 1950. [22] P . W elch, “The use of fast fourier transform for the estimation of power spectra: a method based on time averaging over short, modified periodograms, ” IEEE T ransactions on audio and electr oacoustics , pp. 70–73, 1967. [23] Z. Prusa, P . L. Sonderg aard, N. Holighaus, C. Wiesmeyr , and P . Balazs, “The Lar ge Time-Frequency Analysis T oolbox 2.0, ” in Sound, Music, and Motion , ser . Lecture Notes in Computer Science, M. Aramaki, O. Derrien, R. Kronland-Martinet, and S. Ystad, Eds. Springer International Publishing, 2014, pp. 419–442. [Online]. A vailable: http://dx.doi.org/10.1007/978- 3- 319- 12976- 1 25 [24] K. Gr ¨ ochenig, F oundations of time-fr equency analysis . Springer Science & Business Media, 2013. [25] H. G. Feichtinger and T . Strohmer, Gabor analysis and algorithms: Theory and applications . Springer Science & Business Media, 2012. [26] N. Perraudin, J. Paratte, D. Shuman, V . Kalofolias, P . V andergheynst, and D. K. Hammond, “GSPBO X: A toolbox for signal processing on graphs, ” ArXiv e-prints , Aug. 2014. [27] N. Perraudin, D. Shuman, G. Puy, and P . V andergheynst, “UNLocBoX A matlab con ve x optimization toolbox using proximal splitting methods, ” ArXiv e-prints , Feb. 2014.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment