Measuring photometric redshifts using galaxy images and Deep Neural Networks

We propose a new method to estimate the photometric redshift of galaxies by using the full galaxy image in each measured band. This method draws from the latest techniques and advances in machine learning, in particular Deep Neural Networks. We pass the entire multi-band galaxy image into the machine learning architecture to obtain a redshift estimate that is competitive with the best existing standard machine learning techniques. The standard techniques estimate redshifts using post-processed features, such as magnitudes and colours, which are extracted from the galaxy images and are deemed to be salient by the user. This new method removes the user from the photometric redshift estimation pipeline. However, we do note that Deep Neural Networks require many orders of magnitude more computing resources than standard machine learning architectures.

💡 Research Summary

This paper presents a novel method for estimating photometric redshifts of galaxies by directly utilizing their full multi-band images as input to a Deep Neural Network (DNN). The core innovation lies in bypassing the traditional, human-dependent step of feature engineering, where astronomers manually select salient post-processed features like magnitudes and colors derived from galaxy images. Instead, the proposed method feeds pre-processed pixel data from g, r, i, and z-band images directly into a machine learning model, effectively removing user bias from the feature selection pipeline.

The study uses data from the Sloan Digital Sky Survey (SDSS) Data Release 10, selecting approximately 65,000 galaxies with reliable spectroscopic redshifts. The preprocessing builds a 4-channel input for the DNN: pixel fluxes are converted into pixel-based colors (i-z, r-i, g-r), and the r-band pixel magnitude is added as a fourth layer that acts as a normalization. The result is an RGBA-style image of size 4x60x60 pixels for each galaxy.
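The channel construction described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' pipeline: the function name `fluxes_to_rgba`, the flux floor, and the use of plain logarithmic magnitudes with a zero point of 0 are all assumptions made for the example (SDSS itself uses a different magnitude convention that is not covered here).

```python
import numpy as np

def fluxes_to_rgba(g, r, i, z, flux_floor=1e-3):
    """Stack pixel colours (i-z, r-i, g-r) and the r-band pixel magnitude.

    Inputs are 2-D flux arrays of identical shape (H, W), one per band.
    Returns an array of shape (4, H, W).
    """
    def to_mag(flux):
        # Assumption: clip to a small positive floor so log10 is defined,
        # then use a plain logarithmic magnitude with zero point 0.
        flux = np.clip(np.asarray(flux, dtype=np.float64), flux_floor, None)
        return -2.5 * np.log10(flux)

    mag_g, mag_r, mag_i, mag_z = to_mag(g), to_mag(r), to_mag(i), to_mag(z)
    # Three colour layers plus the r-band magnitude as the fourth layer.
    return np.stack([mag_i - mag_z, mag_r - mag_i, mag_g - mag_r, mag_r], axis=0)
```

Calling `fluxes_to_rgba` on four 60x60 cutouts returns an array of shape `(4, 60, 60)`, matching the input size quoted in the summary.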

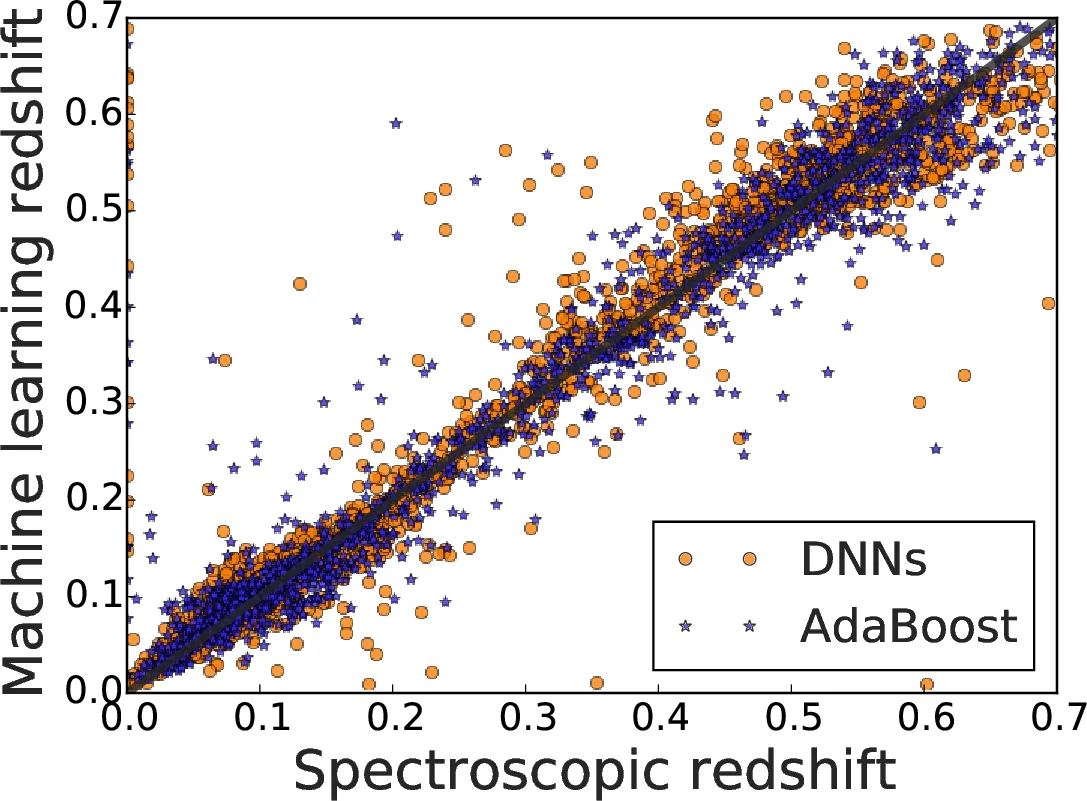

The machine learning architecture at the heart of the proposal is a Convolutional Neural Network (CNN)-based DNN, inspired by models successful in the ImageNet challenge. This network is configured for a classification task, predicting a redshift value by assigning galaxies to one of 94 redshift bins, each with a width of 0.01. To combat overfitting, the authors employ techniques like Dropout and extensive data augmentation through random 90-degree rotations and cropping. For comparison, the paper also implements a state-of-the-art feature-based method using the AdaBoost algorithm with regression trees, taking standard SDSS model magnitudes and size as input features.
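To make the classification framing and the augmentation step concrete, here is a hedged NumPy sketch. The 94 bins of width 0.01 and the rotation-plus-crop augmentation come from the summary; the lower bin edge `Z_MIN = 0.0`, the helper names, and the size of the uncropped stamp are assumptions introduced for illustration.

```python
import numpy as np

N_BINS = 94       # number of redshift classes (from the summary)
BIN_WIDTH = 0.01  # width of each redshift bin (from the summary)
Z_MIN = 0.0       # assumed lower edge of the first bin; not stated in the summary

def redshift_to_class(z):
    """Map spectroscopic redshifts to integer class labels in [0, N_BINS - 1]."""
    idx = np.floor((np.asarray(z, dtype=np.float64) - Z_MIN) / BIN_WIDTH).astype(int)
    return np.clip(idx, 0, N_BINS - 1)

def class_to_redshift(idx):
    """Map class labels back to a point estimate: the centre of the bin."""
    return Z_MIN + (np.asarray(idx) + 0.5) * BIN_WIDTH

def augment(image, crop_size, rng):
    """Rotate a (C, H, W) image by a random multiple of 90 degrees, then crop.

    The rotation acts on the two spatial axes only, so the channels stay aligned.
    """
    k = int(rng.integers(0, 4))  # 0, 1, 2 or 3 quarter turns
    rotated = np.rot90(image, k=k, axes=(1, 2))
    _, height, width = rotated.shape
    top = int(rng.integers(0, height - crop_size + 1))
    left = int(rng.integers(0, width - crop_size + 1))
    return rotated[:, top:top + crop_size, left:left + crop_size]
```

For example, with `rng = np.random.default_rng(0)` and a hypothetical 4x72x72 stamp, `augment(stamp, 60, rng)` yields a 4x60x60 training image. Treating redshift as a class label means the network's point estimate is limited to the bin resolution, which is why the bin centre is used when converting a predicted class back to a redshift.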

The key finding is that the DNN-based image analysis achieves point-prediction accuracy competitive with the highly tuned, feature-based AdaBoost method. This indicates that pixel-level data, which retain morphological and structural information, can be as predictive of redshift as carefully chosen aggregate features, and it supports the viability of an end-to-end deep learning approach for astronomical data analysis.

However, the paper candidly addresses a significant drawback: the immense computational cost associated with training and running such DNNs. It notes that these networks require orders of magnitude more computing resources than standard machine learning techniques, making them currently intractable for very large datasets (e.g., >50,000 samples) without parallelization. In conclusion, while this work pioneers a user-agnostic, fully data-driven pipeline for photometric redshift estimation and proves its conceptual viability, it also highlights the practical scalability challenges that must be overcome for widespread application in upcoming large-scale sky surveys.
