Semantic Image Segmentation with Deep Convolutional Nets and Fully Connected CRFs

Deep Convolutional Neural Networks (DCNNs) have recently shown state of the art performance in high level vision tasks, such as image classification and object detection. This work brings together methods from DCNNs and probabilistic graphical models for addressing the task of pixel-level classification (also called “semantic image segmentation”). We show that responses at the final layer of DCNNs are not sufficiently localized for accurate object segmentation. This is due to the very invariance properties that make DCNNs good for high level tasks. We overcome this poor localization property of deep networks by combining the responses at the final DCNN layer with a fully connected Conditional Random Field (CRF). Qualitatively, our “DeepLab” system is able to localize segment boundaries at a level of accuracy which is beyond previous methods. Quantitatively, our method sets the new state-of-art at the PASCAL VOC-2012 semantic image segmentation task, reaching 71.6% IOU accuracy in the test set. We show how these results can be obtained efficiently: Careful network re-purposing and a novel application of the ‘hole’ algorithm from the wavelet community allow dense computation of neural net responses at 8 frames per second on a modern GPU.

💡 Research Summary

The paper “Semantic Image Segmentation with Deep Convolutional Nets and Fully Connected CRFs” introduces DeepLab, a system that combines a deep convolutional neural network (DCNN) with a fully‑connected Conditional Random Field (CRF) to achieve state‑of‑the‑art semantic segmentation. The authors first identify two fundamental problems when applying standard DCNNs to pixel‑level labeling: (1) repeated max‑pooling and downsampling (“striding”) sharply reduce the resolution of the feature maps, and (2) the built‑in spatial invariance that makes DCNNs powerful for classification works against precise localization.

To address the down‑sampling issue, they convert the VGG‑16 classification network into a fully‑convolutional architecture and apply the “hole” algorithm, also known as “atrous” or dilated convolution. They skip subsampling after the last two pooling layers and, in the layers that follow, insert zeros between the filter taps (enlarging the tap spacing by factors of 2 and 4). This yields dense output at an 8‑pixel stride while preserving the original receptive field size. They also spatially subsample the first fully‑connected layer, now a convolution, from 7×7 to 4×4 (or 3×3), cutting computation time for that bottleneck by 2–3×. The resulting network produces a 39×39 dense score map from a 306×306 image at roughly 8 frames per second on a modern GPU at test time; training runs at about 3 frames per second, and fine‑tuning on PASCAL VOC takes ~10 hours.
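The zero‑insertion idea can be illustrated in one dimension. The following is a minimal NumPy sketch, not the authors' implementation: the helper names `insert_holes` and `atrous_conv1d` and the toy signal are assumptions made for illustration. It shows that sampling the input at a spacing of `rate` gives the same result as filtering with a kernel that has `rate - 1` zeros inserted between its taps, without ever multiplying by those zeros.

```python
import numpy as np

def insert_holes(kernel, rate):
    """Return the kernel with (rate - 1) zeros inserted between its taps."""
    dilated = np.zeros((len(kernel) - 1) * rate + 1, dtype=float)
    dilated[::rate] = kernel
    return dilated

def atrous_conv1d(signal, kernel, rate):
    """'Valid' cross-correlation that reads the input at a spacing of `rate`."""
    span = (len(kernel) - 1) * rate + 1      # extent covered by the dilated kernel
    n_out = len(signal) - span + 1
    out = np.zeros(n_out)
    for i in range(n_out):
        taps = signal[i : i + span : rate]   # only the non-zero tap positions
        out[i] = np.dot(taps, kernel)
    return out

# Hypothetical toy data: a quadratic signal and a second-difference filter.
signal = np.arange(12, dtype=float) ** 2
kernel = np.array([1.0, -2.0, 1.0])

dense = atrous_conv1d(signal, kernel, rate=2)
reference = np.correlate(signal, insert_holes(kernel, 2), mode="valid")
print(np.allclose(dense, reference))
```

The output keeps one response per input position (stride 1), which is the property that lets the network stay dense after the pooling subsampling is removed.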

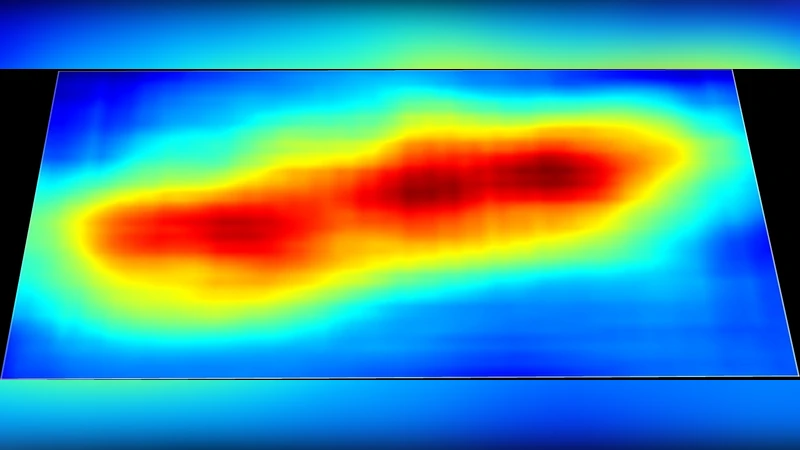

The second component is a fully‑connected CRF that refines the coarse DCNN “unary” scores into sharp, edge‑aware label maps. They adopt the efficient mean‑field inference of Krähenbühl & Koltun (2011), which treats every pixel as a node connected to every other pixel and defines the pairwise potentials with two Gaussian kernels: an appearance kernel over pixel position and color, and a smoothness kernel over position alone. Because the DCNN score maps are already smooth, a short‑range CRF would be detrimental; instead the fully‑connected model captures long‑range dependencies and aligns predictions with object boundaries. Ten mean‑field iterations (≈0.5 s per image) are sufficient to produce high‑quality belief maps.
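As a rough illustration of the mean‑field update, here is a naive NumPy sketch on a six‑pixel toy "image". It is a sketch under stated assumptions, not the method used in the paper: it builds the full N×N kernel matrix directly instead of using the fast high‑dimensional filtering of Krähenbühl & Koltun, it assumes a Potts label compatibility, and the function name, weights, and bandwidths (`w_app`, `w_smooth`, `sigma_alpha`, `sigma_beta`, `sigma_gamma`) are hypothetical values chosen for the toy example.

```python
import numpy as np

def softmax(x):
    x = x - x.max(axis=1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=1, keepdims=True)

def dense_crf_mean_field(unary, positions, colors, w_app=4.0, w_smooth=3.0,
                         sigma_alpha=3.0, sigma_beta=0.2, sigma_gamma=1.0,
                         n_iters=10):
    """Naive O(N^2) mean-field inference. unary: (N, L) negative log-probs."""
    d_pos = ((positions[:, None, :] - positions[None, :, :]) ** 2).sum(-1)
    d_col = ((colors[:, None, :] - colors[None, :, :]) ** 2).sum(-1)
    # Appearance kernel (position + color) plus smoothness kernel (position).
    kernel = (w_app * np.exp(-d_pos / (2 * sigma_alpha ** 2)
                             - d_col / (2 * sigma_beta ** 2))
              + w_smooth * np.exp(-d_pos / (2 * sigma_gamma ** 2)))
    np.fill_diagonal(kernel, 0.0)            # a pixel sends no message to itself

    q = softmax(-unary)                      # initialise from the unary scores
    for _ in range(n_iters):
        msg = kernel @ q                     # (N, L): sum_j k(i, j) * Q_j(l)
        # Potts model: label l is penalised by the mass on all other labels.
        pairwise = msg.sum(axis=1, keepdims=True) - msg
        q = softmax(-unary - pairwise)
    return q

# Toy data (assumed): dark pixels on the left, bright on the right,
# with the unary scores at pixel 1 mildly preferring the wrong label.
positions = np.array([[i, 0.0] for i in range(6)])
colors = np.array([[0.0], [0.0], [0.0], [1.0], [1.0], [1.0]])
probs = np.array([[0.9, 0.1], [0.4, 0.6], [0.9, 0.1],
                  [0.1, 0.9], [0.1, 0.9], [0.1, 0.9]])
unary = -np.log(probs)

q = dense_crf_mean_field(unary, positions, colors)
labels = q.argmax(axis=1)
print(labels)
```

In this toy case the messages from the similarly colored neighbors outweigh the weak unary preference at pixel 1, so its label is pulled into agreement with the dark region while the boundary between dark and bright pixels is kept.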

Training is performed by replacing the original 1000‑way ImageNet classifier with a 21‑way classifier for the PASCAL VOC categories and optimizing a per‑pixel cross‑entropy loss via stochastic gradient descent. No additional weighting or sampling tricks are required; all pixels contribute equally.
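The loss described above can be sketched in a few lines of NumPy. This is an illustrative sketch only: the function name `pixelwise_cross_entropy`, the array shapes, and the choice to average rather than sum over positions are assumptions, and the actual training used a deep learning framework rather than NumPy.

```python
import numpy as np

def pixelwise_cross_entropy(scores, labels):
    """Mean cross-entropy over all positions, each weighted equally.

    scores: (H, W, C) raw class scores from the network.
    labels: (H, W) integer class indices in [0, C).
    """
    shifted = scores - scores.max(axis=-1, keepdims=True)   # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))
    picked = np.take_along_axis(log_probs, labels[..., None], axis=-1)
    return -picked.mean()

# Hypothetical example: a 39x39 score map with 21 classes and uniform scores.
scores = np.zeros((39, 39, 21))
labels = np.zeros((39, 39), dtype=int)
loss = pixelwise_cross_entropy(scores, labels)
print(loss)
```

With uniform scores every class has probability 1/21, so the loss equals log(21), a useful sanity check for an untrained 21‑way classifier.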

Evaluation on the PASCAL VOC‑2012 segmentation benchmark shows a mean Intersection‑over‑Union (IoU) of 71.6 % on the test set, surpassing the previous best by more than 7 percentage points. Qualitative results demonstrate that DeepLab recovers fine object contours that earlier methods miss. The dense DCNN computation runs at roughly 8 fps on a modern GPU, and the CRF refinement adds about 0.5 s per image.
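For reference, mean IoU can be computed from a confusion matrix as in the sketch below. It is a simplified illustration, not the official benchmark code: the function name `mean_iou` is hypothetical, classes absent from both prediction and ground truth are skipped by assumption, and the handling of "void" pixels and dataset‑wide accumulation used by the PASCAL VOC evaluation is omitted.

```python
import numpy as np

def mean_iou(pred, target, num_classes):
    """Mean over classes of TP / (TP + FP + FN), from a confusion matrix."""
    idx = num_classes * target.ravel() + pred.ravel()
    conf = np.bincount(idx, minlength=num_classes ** 2)
    conf = conf.reshape(num_classes, num_classes)    # rows: target, cols: pred
    tp = np.diag(conf)
    union = conf.sum(axis=0) + conf.sum(axis=1) - tp
    present = union > 0                               # skip classes never seen
    return (tp[present] / union[present]).mean()

# Hypothetical 2x2 label maps with two classes.
target = np.array([[0, 0], [1, 1]])
pred = np.array([[0, 1], [1, 1]])
miou = mean_iou(pred, target, num_classes=2)
print(miou)
```

Here class 0 has IoU 1/2 and class 1 has IoU 2/3, so the mean is 7/12.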

The paper’s three core contributions are: (i) an efficient dense‑output DCNN achieved through atrous convolution, (ii) the integration of a fully‑connected CRF with fast mean‑field inference to sharpen DCNN predictions, and (iii) a simple two‑stage pipeline that delivers both high accuracy and speed without complex region‑proposal or super‑pixel preprocessing. The work also foreshadows later research that unrolls CRF inference into a differentiable layer for end‑to‑end training (e.g., Zheng et al., 2015). Overall, DeepLab demonstrates that coupling the powerful representation learning of deep nets with the fine‑grained spatial modeling of fully‑connected CRFs yields a practical, high‑performance solution for semantic image segmentation.
