The Power of Fair Information Practices - A Control Agency Approach

Most companies’ new business practices are based on customer data. These practices have raised privacy concerns because of the risks associated with them. Privacy laws require companies to obtain customer consent before using their information, which stands as the biggest roadblock to monetising this asset. The privacy literature suggests that reducing privacy concerns and building trust may increase individuals’ intention to authorise the use of their personal information. Fair information practices (FIPs) are a potential means of achieving this goal. However, there is a lack of empirical evidence on the mechanisms through which FIPs affect privacy concerns and trust. This research argues that FIPs give individuals control, which has been found to influence privacy concerns and trust. We will use an experimental design to conduct the study. The results are expected to have both theoretical and managerial implications.

💡 Research Summary

The paper tackles a central paradox of the modern data‑driven economy: companies need personal data to create value, yet privacy regulations force them to obtain explicit consent, which often hampers monetisation. While prior literature suggests that reducing privacy concerns and building trust can increase individuals’ willingness to authorise data use, the mechanisms through which “fair information practices” (FIPs) achieve these outcomes remain under‑explored.

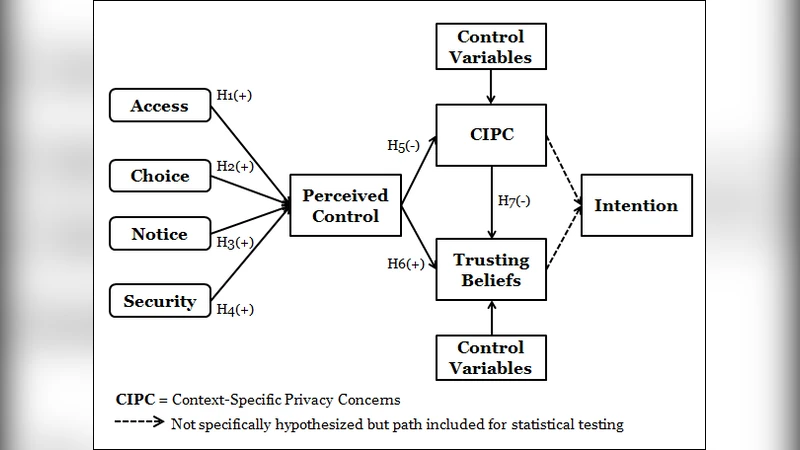

The authors adopt a “control‑agency” perspective, arguing that FIPs work by endowing individuals with a sense of control over their personal information. Control, in turn, is hypothesised to (a) lower privacy concerns, (b) raise trust in the data‑collecting organisation, and (c) ultimately increase the intention to grant consent. To test these propositions, a 2 × 2 between‑subjects experimental design is employed. The two independent variables are (1) presence of FIP information (provided vs. not provided) and (2) level of user‑control (high vs. low). Participants are placed in a simulated online‑service scenario where they encounter different privacy notices and control mechanisms.
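The 2 × 2 between-subjects layout described above can be sketched as a balanced random assignment, in which each participant sees exactly one of the four cells. This is only an illustration: the function name, the seed, and the sample of 40 participants are assumptions, and the summary does not say how the authors actually randomised.

```python
import random
from itertools import product

# Factor levels taken from the design described above.
FIP_LEVELS = ("provided", "not_provided")
CONTROL_LEVELS = ("high", "low")
CONDITIONS = list(product(FIP_LEVELS, CONTROL_LEVELS))  # the four cells


def assign_conditions(participant_ids, seed=0):
    """Assign each participant to exactly one cell (between-subjects).

    Participants are shuffled and then dealt out round-robin, so cell
    sizes differ by at most one. The seed is a hypothetical choice made
    only to keep the example reproducible.
    """
    rng = random.Random(seed)
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    return {pid: CONDITIONS[i % len(CONDITIONS)] for i, pid in enumerate(shuffled)}


# Hypothetical sample of 40 participants, giving 10 per cell.
assignment = assign_conditions(range(1, 41))
cell_sizes = {cell: 0 for cell in CONDITIONS}
for cell in assignment.values():
    cell_sizes[cell] += 1
```

Dealing participants out after a shuffle keeps the cells equal in size, which simplifies a later two-way ANOVA; simple independent randomisation would also be valid but can leave cells unbalanced.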

Key measurement instruments include validated scales for perceived control (7‑point Likert), privacy concern (Smith et al., 1996), trust (McKnight et al., 2002), and consent intention (behavioural intention items). Manipulation checks confirm that the high‑control condition raises perceived control, and that FIP provision further amplifies this perception.

Statistical analysis proceeds in two stages. First, ANOVA demonstrates that both FIP provision and high control independently reduce privacy concerns and increase trust (p < .01). Second, structural equation modelling (SEM) reveals that perceived control partially mediates the relationship between FIPs and the two outcome variables. The indirect path from FIPs to privacy concern via control carries a standardised coefficient of –0.32, while the corresponding path to trust is +0.45. A moderation test shows that the positive effects of FIPs are strongest when control is high, confirming the hypothesised interaction.
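To make the mediation logic concrete, the sketch below estimates an indirect effect as the product of two regression slopes (FIP to control, then control to concern). It is a simplified regression-based stand-in for the SEM mentioned above, not the authors' model. The data are synthetic, the coefficients are unstandardised, and all variable names and generating values are assumptions, so the output is unrelated to the –0.32 and +0.45 reported in the summary.

```python
import random


def cov(u, v):
    """Sample covariance of two equal-length sequences."""
    n = len(u)
    mean_u, mean_v = sum(u) / n, sum(v) / n
    return sum((p - mean_u) * (q - mean_v) for p, q in zip(u, v)) / (n - 1)


def mediation_paths(x, m, y):
    """OLS estimates for X -> M -> Y with a direct X -> Y path.

    a        : slope of M on X
    b        : slope of Y on M, holding X constant
    c_prime  : direct slope of Y on X, holding M constant
    c        : total slope of Y on X
    indirect : a * b (product-of-coefficients estimate)
    """
    sxx, smm = cov(x, x), cov(m, m)
    sxm, sxy, smy = cov(x, m), cov(x, y), cov(m, y)
    det = sxx * smm - sxm ** 2
    a = sxm / sxx
    b = (smy * sxx - sxy * sxm) / det
    c_prime = (sxy * smm - smy * sxm) / det
    c = sxy / sxx
    return {"a": a, "b": b, "c_prime": c_prime, "c": c, "indirect": a * b}


# Synthetic data only; these values are NOT taken from the paper.
rng = random.Random(42)
n = 400
fip = [i % 2 for i in range(n)]                               # 1 = FIP provided
control = [4.0 + 1.0 * x + rng.gauss(0, 1) for x in fip]      # perceived control
concern = [5.0 - 0.6 * m + rng.gauss(0, 1) for m in control]  # privacy concern

paths = mediation_paths(fip, control, concern)
```

In ordinary least squares the total effect decomposes exactly as `c = c_prime + a * b`. Partial mediation corresponds to both `c_prime` and the indirect term being non-zero; full mediation would leave `c_prime` near zero.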

The findings have several theoretical and managerial implications. Theoretically, the study extends privacy research by positioning control as the psychological conduit linking FIPs to both concern reduction and trust formation, thereby enriching the “privacy‑trust‑consent” triad with a concrete mediating construct. Practically, the results suggest that companies should move beyond merely ticking regulatory boxes. Instead, they need to embed tangible control mechanisms (such as user‑friendly data dashboards, granular consent toggles, real‑time revocation options, and transparent purpose‑selection tools) into their product design. By doing so, firms can transform the consent process from a barrier into a value‑adding interaction that fosters user confidence and willingness to share data.
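As one possible reading of "granular consent toggles" and "real-time revocation", the sketch below models a per-user consent record with one switch per processing purpose. The class, its methods, and the purpose names are hypothetical illustrations; the paper does not prescribe any particular implementation.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentRecord:
    """Hypothetical per-user consent state, one toggle per purpose."""

    user_id: str
    granted: dict = field(default_factory=dict)  # purpose -> bool
    history: list = field(default_factory=list)  # audit trail of changes

    def _log(self, purpose, allowed):
        # Record every change with a UTC timestamp for later review.
        self.history.append((datetime.now(timezone.utc), purpose, allowed))

    def grant(self, purpose):
        self.granted[purpose] = True
        self._log(purpose, True)

    def revoke(self, purpose):
        # Takes effect immediately: the next allows() call returns False.
        self.granted[purpose] = False
        self._log(purpose, False)

    def allows(self, purpose):
        # Default deny: a purpose never granted is treated as refused.
        return self.granted.get(purpose, False)


# Usage with made-up purpose names.
record = ConsentRecord(user_id="u-001")
record.grant("analytics")
record.grant("marketing")
record.revoke("marketing")
```

Defaulting to deny and keeping a change history are design choices made for this sketch; they reflect the idea that the user, not the firm, holds the switch.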

The authors acknowledge limitations. The experimental setting is artificial; real‑world longitudinal effects of control‑enhancing FIPs remain unknown. Cultural variations in control perception are not addressed, and the sample is limited to a single demographic region. Future research directions include field experiments with actual service providers, cross‑cultural replications, and longitudinal analyses linking control‑induced trust to concrete business outcomes such as customer retention and revenue growth.

In sum, the paper provides robust empirical evidence that fair information practices, when coupled with genuine user control, can mitigate privacy anxieties, build trust, and encourage data sharing, offering a viable pathway for organisations to reconcile regulatory compliance with data‑driven business objectives.
