Breaching the Human Firewall: Social engineering in Phishing and Spear-Phishing Emails

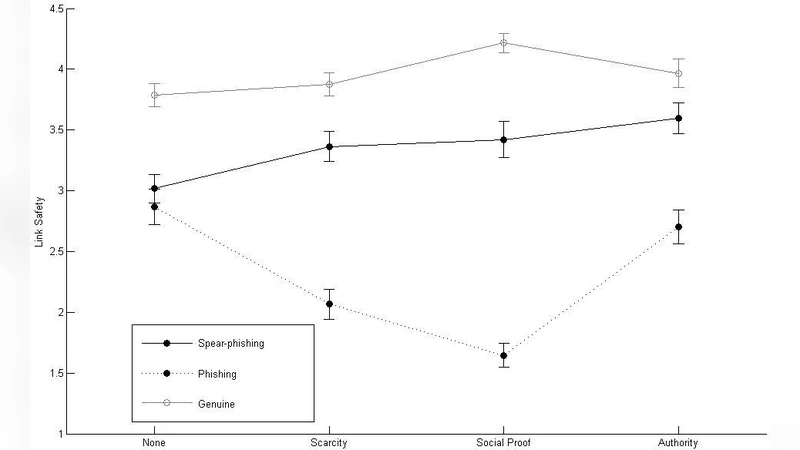

We examined the influence of three social engineering strategies on users’ judgments of how safe it is to click on a link in an email. The three strategies examined were authority, scarcity and social proof, and the emails were either genuine, phishing or spear-phishing. Of the three strategies, the use of authority was the most effective strategy in convincing users that a link in an email was safe. When detecting phishing and spear-phishing emails, users performed the worst when the emails used the authority principle and performed best when social proof was present. Overall, users struggled to distinguish between genuine and spear-phishing emails. Finally, users who were less impulsive in making decisions generally were less likely to judge a link as safe in the fraudulent emails. Implications for education and training are discussed.

💡 Research Summary

The paper investigates how three classic social‑engineering principles—authority, scarcity, and social proof—affect users’ judgments about the safety of clicking links embedded in email messages. The authors constructed nine experimental email conditions by crossing the three persuasion tactics with three email types: genuine, generic phishing, and spear‑phishing. A sample of over three hundred participants, drawn from university students and working professionals, first completed an impulsivity questionnaire and then evaluated each email on a five‑point Likert scale indicating how safe they believed the link to be.
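The crossing described above can be sketched in a few lines of Python. This is only an illustration of the 3 × 3 design: the labels come from the summary, while the variable and function names are assumptions made for the example and are not taken from the paper.

```python
from itertools import product

# Factor levels as named in the summary; identifiers are illustrative only.
STRATEGIES = ("authority", "scarcity", "social proof")
EMAIL_TYPES = ("genuine", "phishing", "spear-phishing")


def build_conditions():
    """Return every (strategy, email_type) pair in the 3 x 3 design."""
    return list(product(STRATEGIES, EMAIL_TYPES))


conditions = build_conditions()
for strategy, email_type in conditions:
    # Left-align the strategy label so the pairs line up when printed.
    print(f"{strategy:<12} x {email_type}")
```

Crossing the two factors this way yields nine cells, one per combination of persuasion tactic and email type.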

Statistical analysis showed that authority was the most potent of the three principles. When authority cues (e.g., a message purportedly from a senior executive or a recognized institution) were present, participants rated the link as significantly safer than in the scarcity or social‑proof conditions (p < 0.001). The effect was most pronounced for spear‑phishing emails, where the authority cue all but erased the difference in ratings between malicious and legitimate messages, raising the average safety rating from 3.8 to 4.5 on the 5‑point scale. In contrast, emails that employed social proof, such as references to colleagues’ actions or endorsements, were detected most reliably: participants consistently gave lower safety scores (average 2.3) to phishing and spear‑phishing messages containing social‑proof cues, which suggests that peer‑based appeals make users more skeptical. The scarcity tactic yielded intermediate results: the sense of urgency it created modestly increased participants’ inclination to trust the link, but it matched neither the persuasive power of authority nor the protective effect of social proof.

A secondary focus of the study was the role of individual impulsivity. Participants scoring low on impulsivity (i.e., more deliberative decision‑makers) were about 15 % less likely to deem a fraudulent link safe, regardless of the persuasion tactic employed. Moreover, impulsivity moderated the authority effect: highly impulsive individuals were especially vulnerable to authority cues, showing a marked increase in safety judgments when authority was present.
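One conventional way to express a moderation effect of this kind is a regression with an interaction term. The form below is a generic sketch, not the authors' stated model: the symbols $A_i$ (1 if an authority cue is present, 0 otherwise), $I_i$ (impulsivity score) and $S_i$ (safety rating of participant $i$) are assumptions introduced here for illustration.

```latex
S_i = \beta_0 + \beta_1 A_i + \beta_2 I_i + \beta_3 \,(A_i \times I_i) + \varepsilon_i
```

Under this reading, moderation corresponds to $\beta_3 \neq 0$, and the pattern described above (more impulsive individuals responding more strongly to authority cues) corresponds to $\beta_3 > 0$.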

From these findings, the authors derive several practical recommendations for cybersecurity education and organizational policy. First, training curricula should incorporate authority‑based phishing simulations so that users experience firsthand how legitimate‑looking authority can override their skepticism. Second, leveraging social proof as a defensive mechanism—such as encouraging peer verification of suspicious emails—can boost detection rates. Third, tailoring interventions to personality traits, particularly by offering decision‑delay tools (e.g., a mandatory 10‑second pause before clicking) to high‑impulsivity staff, may reduce susceptibility. Finally, technical controls that automatically verify the authenticity of messages claiming high authority (e.g., CEO or IT department emails) can serve as an additional barrier against authority‑driven attacks.
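The last recommendation can be illustrated with a minimal sketch of a filter that flags messages whose sender claims an authoritative role but writes from an untrusted domain. Everything specific here is an assumption for the example: the keyword pattern, the trusted-domain set and the function name `needs_extra_verification` are hypothetical and do not come from the paper.

```python
import re
from email.utils import parseaddr

# Hypothetical configuration; neither the keywords nor the domain are from the paper.
AUTHORITY_PATTERN = re.compile(
    r"\b(ceo|chief executive|it department|it support|help ?desk)\b",
    re.IGNORECASE,
)
TRUSTED_DOMAINS = frozenset({"example.com"})


def needs_extra_verification(from_header: str, subject: str) -> bool:
    """Flag mail that claims authority but comes from an untrusted domain."""
    display_name, address = parseaddr(from_header)
    # Everything after the last "@" is treated as the sender's domain.
    domain = address.rpartition("@")[2].lower()
    claims_authority = bool(AUTHORITY_PATTERN.search(f"{display_name} {subject}"))
    return claims_authority and domain not in TRUSTED_DOMAINS


# A look-alike domain ("examp1e.com") combined with an authority claim is flagged.
print(needs_extra_verification('"Jane Doe, CEO" <jane@examp1e.com>', "Urgent request"))
```

Because the `From` header itself can be forged, a check like this would only complement sender authentication such as SPF, DKIM and DMARC rather than replace it.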

In sum, the study provides empirical evidence that authority is the most effective social‑engineering lever for convincing users that a malicious link is safe, while social proof can act as a protective cue. It also highlights the moderating influence of impulsivity on phishing susceptibility. These insights inform both the design of more realistic phishing awareness training and the development of layered technical defenses aimed at strengthening the human element of the security perimeter.