Semidefinite Programs for Exact Recovery of a Hidden Community

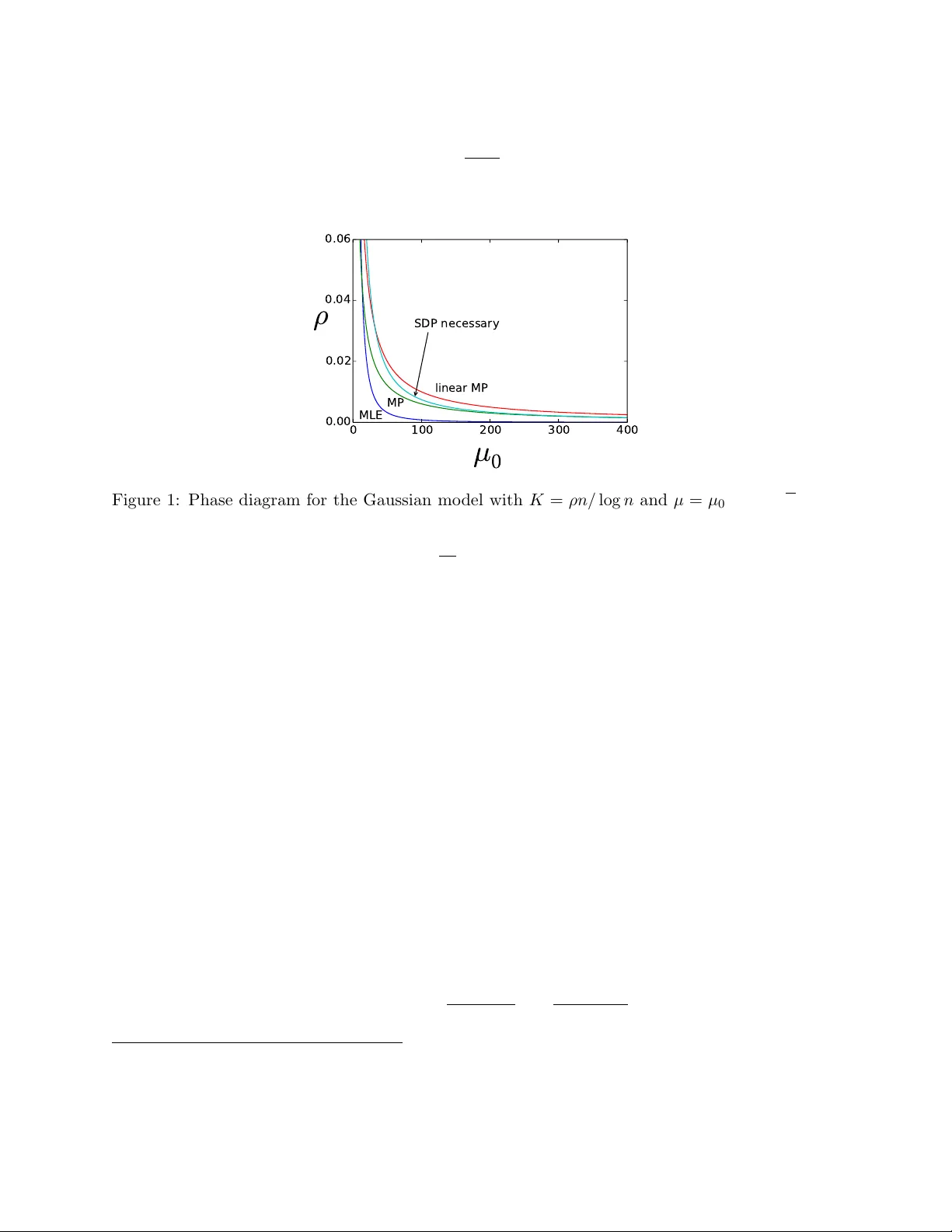

We study a semidefinite programming (SDP) relaxation of the maximum likelihood estimation for exactly recovering a hidden community of cardinality $K$ from an $n \times n$ symmetric data matrix $A$, where for distinct indices $i,j$, $A_{ij} \sim P$ i…

Authors: Bruce Hajek, Yihong Wu, Jiaming Xu
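The SDP in the abstract relaxes a combinatorial maximum likelihood problem. The sketch below solves that underlying problem exactly, by exhaustive search on a tiny instance. The abstract is cut off before it fully specifies the distributions, so the sketch makes an illustrative assumption that $P = N(\mu, 1)$ and $Q = N(0, 1)$ with $\mu > 0$. Under that assumption the log-likelihood ratio of an entry is $\mu A_{ij} - \mu^2/2$. Every candidate set of size $K$ contains the same number of pairs, so the estimator just picks the set whose internal entries have the largest sum. The helper names `sample_instance` and `mle_community` are hypothetical, and this is not the authors' SDP. It is the brute-force estimator the SDP approximates in polynomial time.

```python
import itertools
import random


def sample_instance(n, K, mu, seed=0):
    """Sample a symmetric n x n matrix with a planted community of size K.

    Hypothetical Gaussian model (an assumption, not taken from the abstract):
    A_ij ~ N(mu, 1) if i and j are both in the community, else N(0, 1),
    for i != j. Diagonal entries are set to 0.
    """
    rng = random.Random(seed)
    community = set(rng.sample(range(n), K))
    A = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            mean = mu if (i in community and j in community) else 0.0
            A[i][j] = A[j][i] = rng.gauss(mean, 1.0)
    return A, community


def mle_community(A, K):
    """Exhaustive maximum likelihood estimate of a size-K community.

    Assumes the Gaussian model above with mu > 0, so maximizing the
    likelihood is equivalent to maximizing the sum of A_ij over pairs
    inside the candidate set. Enumerates all C(n, K) subsets, so it is
    only feasible for very small n.
    """
    n = len(A)
    best_set, best_score = None, float("-inf")
    for cand in itertools.combinations(range(n), K):
        score = sum(A[i][j] for i, j in itertools.combinations(cand, 2))
        if score > best_score:
            best_set, best_score = set(cand), score
    return best_set


if __name__ == "__main__":
    A, planted = sample_instance(n=12, K=4, mu=6.0, seed=0)
    print("recovered exactly:", mle_community(A, 4) == planted)
```

With a strong signal such as $\mu = 6$, the planted set should be recovered exactly. The $\binom{n}{K}$ enumeration is what makes a convex relaxation such as an SDP attractive for realistic $n$.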