Combinatorial Topic Models using Small-Variance Asymptotics

Topic models have emerged as fundamental tools in unsupervised machine learning. Most modern topic modeling algorithms take a probabilistic view and derive inference algorithms based on Latent Dirichlet Allocation (LDA) or its variants. In contrast, …

Authors: Ke Jiang, Suvrit Sra, Brian Kulis

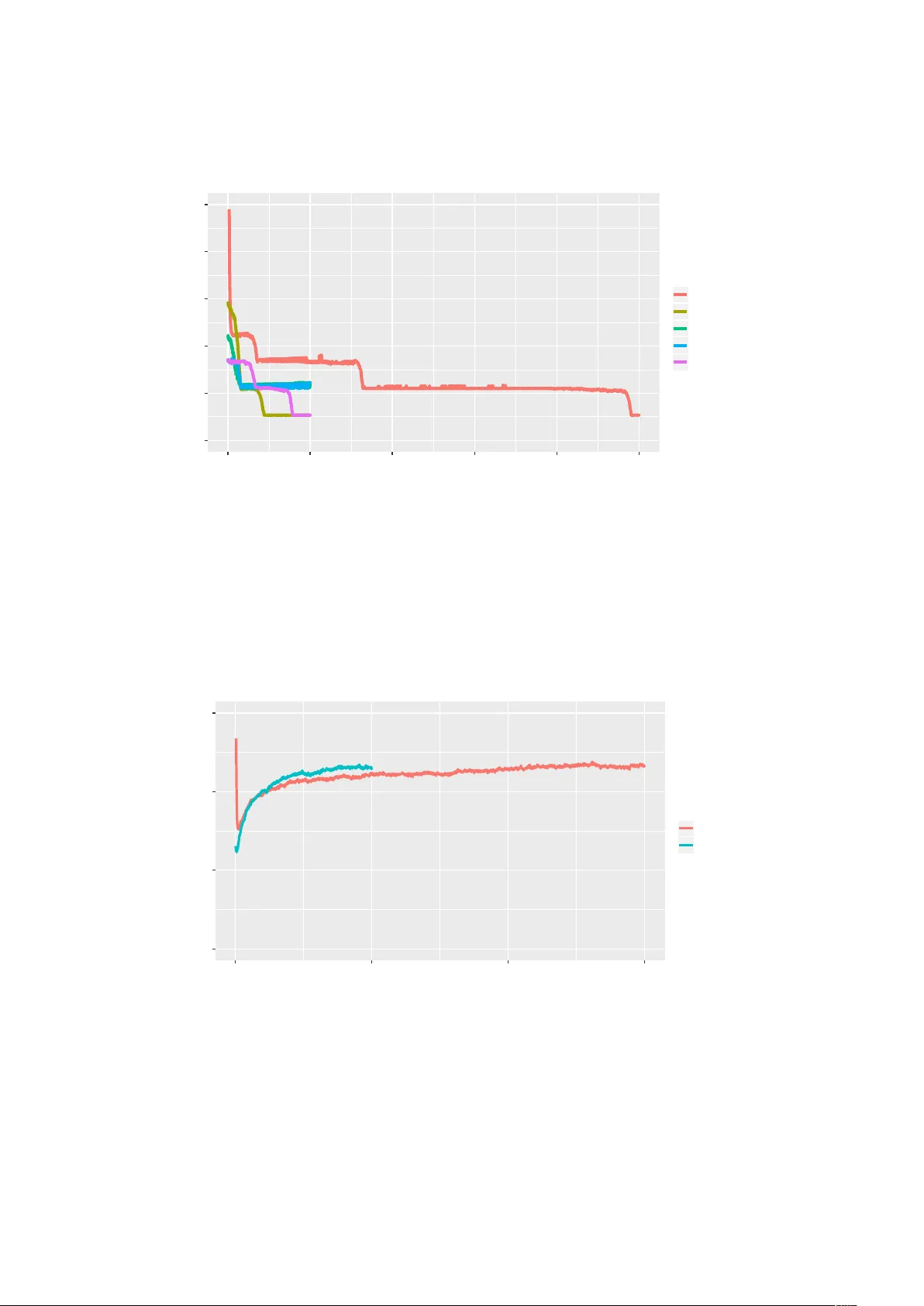

Ke Jiang, Dept. of Computer Science and Engineering, Ohio State University, jiang.454@osu.edu

Suvrit Sra, Lab. for Information and Decision Systems, Massachusetts Institute of Technology, suvrit@mit.edu

Brian Kulis, Dept. of Electrical & Computer Engineering and Dept. of Computer Science, Boston University, bkulis@bu.edu

Abstract

Topic models have emerged as fundamental tools in unsupervised machine learning. Most modern topic modeling algorithms take a probabilistic view and derive inference algorithms based on Latent Dirichlet Allocation (LDA) or its variants. In contrast, we study topic modeling as a combinatorial optimization problem, and propose a new objective function derived from LDA by passing to the small-variance limit. We minimize the derived objective by using ideas from combinatorial optimization, which results in a new, fast, and high-quality topic modeling algorithm. In particular, we show that our results are competitive with popular LDA-based topic modeling approaches, and also discuss the (dis)similarities between our approach and its probabilistic counterparts.

1 Introduction

Topic modeling has long been fundamental to unsupervised learning on large document collections. Though the roots of topic modeling date back to latent semantic indexing [12] and probabilistic latent semantic indexing [16], the arrival of Latent Dirichlet Allocation (LDA) [9] was a turning point that transformed the community's thinking about topic modeling. LDA led to several followups that address some limitations of the original model [8, 31], and also helped pave the way for subsequent advances in Bayesian learning methods, including variational inference methods [29], nonparametric Bayesian models [7, 28], among others.

The LDA family of topic models are almost exclusively cast as probabilistic models.
Consequently, the vast majority of techniques developed for topic modeling (collapsed Gibbs sampling [15], variational methods [9, 29], and “factorization” approaches with theoretical guarantees [1, 3, 6]) are centered around performing inference for underlying probabilistic models. By limiting ourselves to a purely probabilistic viewpoint, we may be missing important opportunities grounded in combinatorial thinking. This realization leads us to the central question of this paper: Can we obtain a combinatorial topic model that competes with LDA?

We answer this question in the affirmative. In particular, we propose a combinatorial optimization formulation for topic modeling, derived using small-variance asymptotics (SVA) on the LDA model. SVA produces limiting versions of various probabilistic learning models, which can then be solved as combinatorial optimization problems. An analogy worth keeping in mind here is how k-means solves the combinatorial problem that arises upon letting variances go to zero in Gaussian mixtures.

SVA techniques have proved quite fruitful recently, e.g., for cluster evolution [11], hidden Markov models [26], feature learning [10], supervised learning [32], hierarchical clustering [22], and others [17, 33]. A common theme in these examples is that computational advantages and good empirical performance of k-means carry over to richer SVA-based models. Indeed, in a compelling example, [11] demonstrate how a hard cluster evolution algorithm obtained via SVA is orders of magnitude faster than competing sampling-based methods, while still being significantly more accurate than competing probabilistic inference algorithms on benchmark data.

But merely using SVA to obtain a combinatorial topic model does not suffice. We need effective algorithms to optimize the resulting model.
Unfortunately, a direct application of greedy combinatorial procedures on the LDA-based SVA model fails to compete with the usual probabilistic LDA methods. This setback necessitates a new idea. Surprisingly, as we will see, a local refinement procedure combined with an improved word assignment technique transforms the SVA approach into a competitive topic modeling algorithm.

Contributions. In summary, the main contributions of our paper are the following:

• We perform SVA on the standard LDA model and obtain through it a combinatorial topic model.

• We develop an optimization procedure for optimizing the derived combinatorial model by utilizing local refinement and ideas from the facility location problem.

• We show how our procedure can be implemented to take $O(NK)$ time per iteration to assign each word token to a topic, where $N$ is the total number of word tokens and $K$ the number of topics.

• We demonstrate that our approach competes favorably with existing state-of-the-art topic modeling algorithms; in particular, our approach is orders of magnitude faster than sampling-based approaches, with comparable or better accuracy.

Before proceeding to outline the technical details, we make an important comment regarding evaluation of topic models. The connection between our approach and standard LDA may be viewed analogously to the connection between k-means and a Gaussian mixture model. As such, evaluation is nontrivial; most topic models are evaluated using predictive log-likelihood or related measures. In light of the “hard-vs-soft” analogy, a predictive log-likelihood score can be a misleading way to evaluate performance of the k-means algorithm, so clustering comparisons typically focus on ground-truth accuracy (when possible).
Due to the lack of available ground truth data, to assess our combinatorial model we must resort to synthetic data sampled from the LDA model to enable meaningful quantitative comparisons; but in line with common practice we also present results on real-world data, for which we use both hard and soft predictive log-likelihoods.

1.1 Related Work

LDA Algorithms. Many techniques have been developed for efficient inference for LDA. The most popular are perhaps MCMC-based methods, notably the collapsed Gibbs sampler (CGS) [15], and variational inference methods [9, 29]. Among MCMC and variational techniques, CGS typically yields excellent results and is guaranteed to sample from the desired posterior with sufficiently many samples. However, its running time can be slow, and many samples may be required before convergence.

Since topic models are often used on large (document) collections, significant effort has been made in scaling up LDA algorithms. One recent example is [23], which presents a massively distributed implementation. Such methods are outside the scope of this paper, which focuses on our new combinatorial model that can quantitatively compete with the probabilistic LDA model. Ultimately, our model should be amenable to fast distributed solvers, and obtaining such solvers for our model is an important part of future work.

A complementary line of algorithms starts with [3, 2], who consider certain separability assumptions on the input data to circumvent NP-hardness of the basic LDA model. These works have shown performance competitive with Gibbs sampling in some scenarios while also featuring theoretical guarantees. Other recent viewpoints on LDA are offered by [1, 24, 6].

Small-Variance Asymptotics (SVA). As noted above, SVA has recently emerged as a powerful tool for obtaining scalable algorithms and objective functions by “hardening” probabilistic models.
Similar connections are known for instance in dimensionality reduction [25], multi-view learning, classification [30], and structured prediction [27]. Starting with Dirichlet process mixtures [21], one thread of research has considered applying SVA to richer Bayesian nonparametric models. Applications include clustering [21], feature learning [10], evolutionary clustering [11], infinite hidden Markov models [26], Markov jump processes [17], infinite SVMs [32], and hierarchical clustering methods [22].

A related thread of research considers how to apply SVA methods when the data likelihood is not Gaussian, which is precisely the scenario under which LDA falls. In [19], it is shown how SVA may be applied as long as the likelihood is a member of the exponential family of distributions. Their work considers topic modeling as a potential application, but does not develop any algorithmic tools, and without these SVA fails to succeed on topic models; the present paper fixes this by using a stronger word assignment algorithm and introducing local refinement.

Combinatorial Optimization. In developing effective algorithms for topic modeling, we will borrow some ideas from the large literature on combinatorial optimization algorithms. In particular, in the k-means community, significant effort has been made on how to improve upon the basic k-means algorithm, which is known to be prone to local optima; these techniques include local search methods [14] and good initialization strategies [4]. We also borrow ideas from approximation algorithms, most notably algorithms based on the facility location problem [18].

2 SVA for Latent Dirichlet Allocation

We now detail our combinatorial approach to topic modeling. We start with the derivation of the underlying objective function that is the basis of our work. This objective is derived from the LDA model by applying SVA, and contains two terms.
The first is similar to the k-means clustering objective in that it seeks to assign words to topics that are, in a particular sense, “close.” The second term, arising from the Dirichlet prior on the per-document topic distributions, places a penalty on the number of topics per document.

Recall the standard LDA model. We choose topic weights for each document as $\theta_j \sim \mathrm{Dir}(\alpha)$, where $j \in \{1, \ldots, M\}$. Then we choose word weights for each topic as $\psi_i \sim \mathrm{Dir}(\beta)$, where $i \in \{1, \ldots, K\}$. Then, for each word token $t$ in document $j$, we choose a topic $z_{jt} \sim \mathrm{Cat}(\theta_j)$ and a word $w_{jt} \sim \mathrm{Cat}(\psi_{z_{jt}})$. Here $\alpha$ and $\beta$ are scalars (i.e., we are using a symmetric Dirichlet distribution). Let $W$ denote the vector of all words in all documents, $Z$ the topic indicators of all words in all documents, $\theta$ the concatenation of all the $\theta_j$ variables, and $\psi$ the concatenation of all the $\psi_i$ variables. Also let $N_j$ be the total number of word tokens in document $j$. The $\theta_j$ vectors are each of length $K$, the number of topics. The $\psi_i$ vectors are each of length $D$, the size of the vocabulary.

We can write down the full joint likelihood $p(W, Z, \theta, \psi \mid \alpha, \beta)$ of the model in the factored form

$$\prod_{i=1}^{K} p(\psi_i \mid \beta) \prod_{j=1}^{M} p(\theta_j \mid \alpha) \prod_{t=1}^{N_j} p(z_{jt} \mid \theta_j)\, p(w_{jt} \mid \psi_{z_{jt}}),$$

where each of the probabilities is as specified by the LDA model. Following standard LDA manipulations, we can eliminate variables to simplify inference by integrating out $\theta$ to obtain

$$p(Z, W, \psi \mid \alpha, \beta) = \int_{\theta} p(W, Z, \theta, \psi \mid \alpha, \beta)\, d\theta. \tag{1}$$

After integration and some simplification, (1) becomes

$$\left[ \prod_{i=1}^{K} p(\psi_i \mid \beta) \prod_{j=1}^{M} \prod_{t=1}^{N_j} p(w_{jt} \mid \psi_{z_{jt}}) \right] \times \left[ \prod_{j=1}^{M} \frac{\Gamma(\alpha K)}{\Gamma\!\left(\sum_{i=1}^{K} n^{i}_{j\cdot} + \alpha K\right)} \prod_{i=1}^{K} \frac{\Gamma(n^{i}_{j\cdot} + \alpha)}{\Gamma(\alpha)} \right]. \tag{2}$$

Here $n^{i}_{j\cdot}$ is the number of word tokens in document $j$ assigned to topic $i$.
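To make the notation concrete, the generative process recalled above can be sketched in a few lines of standard-library Python. This is only an illustrative sketch: the function names (`sample_dirichlet`, `sample_lda_corpus`) and the fixed per-document length `doc_len` are assumptions made for the example, not part of the paper.

```python
import random


def sample_dirichlet(concentration, dim, rng):
    """Draw from a symmetric Dirichlet via normalized Gamma variates."""
    draws = [rng.gammavariate(concentration, 1.0) for _ in range(dim)]
    total = sum(draws)
    if total == 0.0:
        # Guard against numerical underflow for very small concentrations:
        # fall back to a point mass on a random coordinate.
        draws = [0.0] * dim
        draws[rng.randrange(dim)] = 1.0
        return draws
    return [d / total for d in draws]


def sample_categorical(probs, rng):
    """Draw one index with probability proportional to probs."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


def sample_lda_corpus(M, K, D, doc_len, alpha, beta, seed=0):
    """Sample (W, Z, theta, psi) from symmetric-Dirichlet LDA.

    M documents, K topics, vocabulary size D; doc_len tokens per document
    (a simplifying assumption: every N_j is the same).
    """
    rng = random.Random(seed)
    psi = [sample_dirichlet(beta, D, rng) for _ in range(K)]   # psi_i ~ Dir(beta)
    theta, W, Z = [], [], []
    for _ in range(M):
        theta_j = sample_dirichlet(alpha, K, rng)              # theta_j ~ Dir(alpha)
        z_j = [sample_categorical(theta_j, rng) for _ in range(doc_len)]
        w_j = [sample_categorical(psi[z], rng) for z in z_j]   # w_jt ~ Cat(psi_{z_jt})
        theta.append(theta_j)
        Z.append(z_j)
        W.append(w_j)
    return W, Z, theta, psi


if __name__ == "__main__":
    W, Z, theta, psi = sample_lda_corpus(M=3, K=4, D=10, doc_len=5, alpha=0.5, beta=0.5)
    print(W[0], Z[0])
```

Here `W[j][t]` and `Z[j][t]` play the roles of $w_{jt}$ and $z_{jt}$, and the count $n^{i}_{j\cdot}$ is simply `Z[j].count(i)`.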
Now, following [10], we can obtain the SVA objective by taking the (negative) logarithm of this likelihood and letting the variance go to zero. Given space considerations, we will summarize this derivation; full details are available in Appendix A.

Consider the first bracketed term of (2). Taking logs yields a sum over terms of the form $\log p(\psi_i \mid \beta)$ and terms of the form $\log p(w_{jt} \mid \psi_{z_{jt}})$. Noting that the latter of these is a multinomial distribution, and thus a member of the exponential family, we can appeal to the results in [5, 19] to introduce a new parameter for scaling the variance. In particular, we can write $p(w_{jt} \mid \psi_{z_{jt}})$ in its Bregman divergence form $\exp(-\mathrm{KL}(\tilde{w}_{jt}, \psi_{z_{jt}}))$, where KL refers to the discrete KL-divergence, and $\tilde{w}_{jt}$ is an indicator vector for the word at token $w_{jt}$. It is straightforward to verify that $\mathrm{KL}(\tilde{w}_{jt}, \psi_{z_{jt}}) = -\log \psi_{z_{jt}, w_{jt}}$. Next, introduce a new parameter $\eta$ that scales the variance appropriately, and write the resulting distribution as proportional to $\exp(-\eta \cdot \mathrm{KL}(\tilde{w}_{jt}, \psi_{z_{jt}}))$. As $\eta \to \infty$, the expected value of the distribution remains fixed while the variance goes to zero, exactly what we require.

After this, consider the second bracketed term of (2). We scale $\alpha$ appropriately as well; this ensures that the hierarchical form of the model is retained asymptotically. In particular, we write $\alpha = \exp(-\lambda \cdot \eta)$. After some manipulation of this distribution, we can conclude that the negative log of the Dirichlet-multinomial term becomes asymptotically $\eta \lambda (K_j^{+} - 1)$, where $K_j^{+}$ is the number of topics $i$ in document $j$ where $n^{i}_{j\cdot} > 0$, i.e., the number of topics currently used by document $j$. (The maximum value for $K_j^{+}$ is $K$, the total number of topics.) To formalize, let $f(x) \sim g(x)$ denote that $f(x)/g(x) \to 1$ as $x \to \infty$. Then we have the following (see Appendix A for a proof):

Lemma 1.
Consider the likelihood

$$p(Z \mid \alpha) = \prod_{j=1}^{M} \frac{\Gamma(\alpha K)}{\Gamma\!\left(\sum_{i=1}^{K} n^{i}_{j\cdot} + \alpha K\right)} \prod_{i=1}^{K} \frac{\Gamma(n^{i}_{j\cdot} + \alpha)}{\Gamma(\alpha)}.$$

If $\alpha = \exp(-\lambda \cdot \eta)$, then asymptotically as $\eta \to \infty$, the negative log-likelihood satisfies

$$-\log p(Z \mid \alpha) \sim \eta \lambda \sum_{j=1}^{M} (K_j^{+} - 1).$$

Now we put the terms of the negative log-likelihood together. The $-\log p(\psi_i \mid \beta)$ terms vanish asymptotically since we are not scaling $\beta$ (see the note below on scaling $\beta$). Thus, the remaining terms in the SVA objective are the ones arising from the word likelihoods and the Dirichlet-multinomial. Using the Bregman divergence representation with the additional $\eta$ parameter, we conclude that the negative log-likelihood asymptotically yields the following:

$$-\log p(Z, W, \psi \mid \alpha, \beta) \sim \eta \left[ \sum_{j=1}^{M} \sum_{t=1}^{N_j} \mathrm{KL}(\tilde{w}_{jt}, \psi_{z_{jt}}) + \lambda \sum_{j=1}^{M} (K_j^{+} - 1) \right],$$

which leads to our final objective function

$$\min_{Z, \psi} \; \sum_{j=1}^{M} \sum_{t=1}^{N_j} \mathrm{KL}(\tilde{w}_{jt}, \psi_{z_{jt}}) + \lambda \sum_{j=1}^{M} K_j^{+}. \tag{3}$$

We remind the reader that $\mathrm{KL}(\tilde{w}_{jt}, \psi_{z_{jt}}) = -\log \psi_{z_{jt}, w_{jt}}$. Thus, we obtain a k-means-like term that says that all words in all documents should be “close” to their assigned topic in terms of KL-divergence, but that we should also not have too many topics represented in each document.

Note. We did not scale $\beta$ to obtain a simple objective with only one parameter (other than the total number of topics), but let us say a few words about scaling $\beta$. A natural approach is to further integrate out $\psi$ of the joint likelihood, as is done in the collapsed Gibbs sampler. One would obtain additional Dirichlet-multinomial distributions, and properly scaling as discussed above would yield a simple objective that places penalties on the number of topics per document as well as the number of words in each topic. Optimization would then be performed with respect to the topic assignment matrix. Future work will consider effectiveness of such an objective function for topic modeling.
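As a concrete reading of objective (3), the following minimal sketch evaluates it for a given assignment. It assumes documents are lists of integer word ids, `Z` mirrors the shape of `W`, and `psi` is a list of per-topic probability lists; the name `sva_objective` is hypothetical and chosen only for this illustration.

```python
import math


def sva_objective(W, Z, psi, lam):
    """Evaluate objective (3): summed KL terms plus lam times topics used per document.

    W[j][t]   -- word id of token t in document j
    Z[j][t]   -- topic assigned to that token
    psi[i][u] -- probability of word u under topic i
    lam       -- the topic penalty (lambda)
    """
    total = 0.0
    for w_j, z_j in zip(W, Z):
        for w, z in zip(w_j, z_j):
            p = psi[z][w]
            if p <= 0.0:
                return math.inf          # KL(w~, psi_z) = -log 0 is infinite
            total += -math.log(p)        # KL(w~_jt, psi_{z_jt}) = -log psi_{z_jt, w_jt}
        total += lam * len(set(z_j))     # lam * K_j^+
    return total


if __name__ == "__main__":
    psi = [[0.5, 0.5, 0.0], [0.0, 0.5, 0.5]]
    W = [[0, 1], [2, 1, 1]]
    print(sva_objective(W, [[0, 0], [1, 1, 1]], psi, lam=2.0))  # one topic per document
    print(sva_objective(W, [[0, 0], [1, 0, 1]], psi, lam=2.0))  # second document uses two topics
```

In the toy example both assignments have the same KL cost (every token has probability 0.5 under its topic), so the second one is worse purely because of the extra $\lambda$ paid for using two topics in the second document.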
3 Algorithms

With our combinatorial objective in hand, we are ready to develop algorithms that optimize it. In particular, we discuss a locally-convergent algorithm similar to k-means and the hard topic modeling algorithm [19]. Then, we introduce two more powerful techniques: (i) a word-level assignment method that arises from connections between our proposed objective function and the facility location problem; and (ii) an incremental topic refinement method that is inspired by local-search methods developed for k-means. Despite the apparent complexity of our algorithms, we show that the per-iteration time matches that of the collapsed Gibbs sampler (while empirically converging in just a few iterations, as opposed to the thousands typically required for Gibbs sampling).

We first describe a basic iterative algorithm for optimizing the combinatorial hard LDA objective derived in the previous section (see Appendix A for pseudo-code). The basic algorithm follows the k-means style: we perform alternate optimization by first minimizing with respect to the topic indicators for each word (the $Z$ values) and then minimizing with respect to the topics (the $\psi$ vectors).

Consider first minimization with respect to $\psi$, with $Z$ fixed. In this case, the penalty term of the objective function for the number of topics per document is not relevant to the minimization. Therefore the minimization can be performed in closed form by computing means based on the assignments, due to known properties of the KL-divergence; see Proposition 1 of [5]. In our case, the topic vectors will be computed as follows: entry $\psi_{iu}$ corresponding to topic $i$ and word $u$ will simply be equal to the number of occurrences of word $u$ assigned to topic $i$ normalized by the total number of word tokens assigned to topic $i$.

Next consider minimization with respect to $Z$ with fixed $\psi$. We follow a strategy similar to DP-means [21].
In particular, we compute the KL-divergence between each word token $w_{jt}$ and every topic $i$ via $-\log(\psi_{i, w_{jt}})$. Then, for any topic $i$ that is not currently occupied by any word token in document $j$, i.e., $z_{jt} \neq i$ for all tokens $t$ in document $j$, we penalize the distance by $\lambda$. Next we obtain new assignments by reassigning each word token to the topic corresponding to its smallest divergence (including any penalties). We continue this alternating strategy until convergence. The running time of the batch algorithm can be shown to be $O(NK)$ per iteration, where $N$ is the total number of word tokens and $K$ is the number of topics. One can also show that this algorithm is guaranteed to converge to a local optimum, similar to k-means and DP-means.

3.1 Improved Word Assignments

The basic algorithm has the advantage that it achieves local convergence. However, it is quite sensitive to initialization, analogous to standard k-means. In this section, we discuss and analyze an alternative assignment technique for $Z$, which may be used as an initialization to the locally-convergent basic algorithm or to replace it completely.

Algorithm 1: Improved Word Assignments for $Z$

Input: Words: $W$, Number of topics: $K$, Topic penalty: $\lambda$, Topics: $\psi$

- for every document $j$ do
  - Let $f_i = \lambda$ for all topics $i$.
  - Initialize all word tokens to be unmarked.
  - while there are unmarked tokens do
    - Pick the topic $i$ and set of unmarked tokens $T$ that minimizes (4).
    - Let $f_i = 0$ and mark all tokens in $T$.
    - Assign $z_{jt} = i$ for all $t \in T$.
  - end while
- end for

Output: Assignments $Z$.

Algorithm 1 details the alternate assignment strategy for tokens. The inspiration for this greedy algorithm arises from the fact that we can view the assignment problem for $Z$, given $\psi$, as an instance of the uncapacitated facility location (UFL) problem [18]. Recall that the UFL problem aims to open a set of facilities from a set $F$ of potential locations.
Given a set of clients $D$, a distance function $d : D \times F \to \mathbb{R}_{+}$, and a cost function $f : F \to \mathbb{R}_{+}$ for the set $F$, the UFL problem aims to find a subset $S$ of $F$ that minimizes $\sum_{i \in S} f_i + \sum_{j \in D} \min_{i \in S} d_{ij}$.

To map UFL to the assignment problem in combinatorial topic modeling, consider the problem of assigning word tokens to topics for some fixed document $j$. The topics correspond to the facilities and the clients correspond to word tokens. Let $f_i = \lambda$ for each facility, and let the distances between clients and facilities be given by the corresponding KL-divergences as detailed earlier. Then the UFL objective corresponds exactly to the assignment problem for topic modeling. Algorithm 1 is a greedy algorithm for UFL that has been shown to achieve constant-factor approximation guarantees when the distances between clients and facilities form a metric [18] (this guarantee does not apply in our case, as KL-divergence is not a metric).

The algorithm must select, among all topics $i$ and all sets $T$ of unmarked tokens, the minimizer of

$$\frac{f_i + \sum_{t \in T} \mathrm{KL}(\tilde{w}_{jt}, \psi_i)}{|T|}. \tag{4}$$

This algorithm appears to be computationally expensive, requiring multiple rounds of marking where each round requires us to find a minimizer over exponentially-sized sets. Surprisingly, under mild assumptions we can use the structure of our problem to derive an efficient implementation of this algorithm that runs in total time $O(NK)$. The details of this efficient implementation are presented in Appendix B.

3.2 Incremental Topic Refinement

Unlike traditional clustering problems, topic modeling is hierarchical: we have both word-level assignments and “mini-topics” (formed by word tokens in the same document which are assigned to the same topic). Explicitly refining the mini-topics should help in achieving better word co-assignment within the same document. Inspired by local search techniques in the clustering literature [14], we take a similar approach here.
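Returning briefly to Section 3.1, the greedy rule of Algorithm 1 with criterion (4) can be sketched naively for a single document as follows. This is not the $O(NK)$ implementation of Appendix B, which is not reproduced here; it is a straightforward sketch that relies on one observation: for a fixed topic $i$ and a fixed set size, the best set $T$ consists of the unmarked tokens closest to $i$, so only prefixes of the distance-sorted token list need to be examined. The function names are hypothetical.

```python
import math


def token_distance(psi_i, word):
    """KL(w~, psi_i) = -log psi_i[word]; infinite if the topic gives the word zero mass."""
    p = psi_i[word]
    return -math.log(p) if p > 0 else math.inf


def greedy_assign_document(words, psi, lam):
    """Naive version of Algorithm 1 for one document.

    words -- list of word ids (one per token); psi -- list of topic distributions;
    lam   -- opening cost f_i of a topic not yet used in this document.
    Returns a list z with the topic assigned to each token.
    """
    K = len(psi)
    cost = [lam] * K                      # f_i = lam for all topics
    unmarked = set(range(len(words)))
    z = [None] * len(words)
    while unmarked:
        best = None                       # (ratio, topic, chosen tokens)
        for i in range(K):
            # Sort unmarked tokens by distance to topic i (ties broken by index).
            ranked = sorted(unmarked, key=lambda t: (token_distance(psi[i], words[t]), t))
            running = cost[i]
            for size, t in enumerate(ranked, start=1):
                running += token_distance(psi[i], words[t])
                ratio = running / size    # criterion (4) for this prefix
                if best is None or ratio < best[0]:
                    best = (ratio, i, ranked[:size])
        _, i, chosen = best
        cost[i] = 0.0                     # topic i is now open in this document
        for t in chosen:
            z[t] = i
            unmarked.discard(t)
    return z


if __name__ == "__main__":
    psi = [[0.6, 0.2, 0.2], [0.2, 0.2, 0.6]]
    print(greedy_assign_document([0, 0, 2], psi, lam=100.0))  # large penalty: a single topic
    print(greedy_assign_document([0, 0, 2], psi, lam=0.1))    # small penalty: two topics
```

The two calls illustrate the role of $\lambda$: with a large penalty all three tokens share one topic, whereas with a small penalty the token for word 2 opens a second, better-fitting topic.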
However, traditional approaches [13] do not directly apply in our setting; we therefore adapt local search techniques from clustering to the topic modeling problem. More specifically, we consider an incremental topic refinement scheme that works as follows. For a given document, we consider swapping all word tokens assigned to the same topic within that document to another topic. We compute the change in objective function that would occur if we both updated the topic assignments for those tokens and then updated the resulting topic vectors.

Algorithm 2: Incremental Topic Refinements for $Z$

Input: Words: $W$, Number of topics: $K$, Topic penalty: $\lambda$, Assignment: $Z$, Topics: $\psi$

- randomly permute the documents.
- for every document $j$ do
  - for each mini-topic $S$, where $z_{js} = i \;\forall s \in S$ for some topic $i$ do
    - for every other topic $i' \neq i$ do
      - Compute $\Delta(S, i, i')$, the change in the obj. function when re-assigning $z_{js} = i' \;\forall s \in S$.
    - end for
    - Let $i^{*} = \operatorname{argmin}_{i'} \Delta(S, i, i')$.
    - Reassign tokens in $S$ to $i^{*}$ if it yields a smaller obj.
    - Update topics $\psi$ and assignments $Z$.
  - end for
- end for

Output: Assignments $Z$ and Topics $\psi$.

Specifically, for document $j$ and its mini-topic $S$ formed by its word tokens assigned to topic $i$, the objective function change can be computed by

$$\Delta(S, i, i') = -(n^{i}_{\cdot\cdot} - n^{i}_{j\cdot})\,\phi(\psi_i^{-}) - (n^{i'}_{\cdot\cdot} + n^{i}_{j\cdot})\,\phi(\psi_{i'}^{+}) + n^{i}_{\cdot\cdot}\,\phi(\psi_i) + n^{i'}_{\cdot\cdot}\,\phi(\psi_{i'}) - \lambda\, \mathbb{I}[i' \in T_j],$$

where $n^{i}_{j\cdot}$ is the number of tokens in document $j$ assigned to topic $i$, $n^{i}_{\cdot\cdot}$ is the total number of tokens assigned to topic $i$, $\psi_i^{-}$ and $\psi_{i'}^{+}$ are the updated topics, $T_j$ is the set of all the topics used in document $j$, and $\phi(\psi_i) = \sum_{w} \psi_{iw} \log \psi_{iw}$. We accept the move if $\min_{i' \neq i} \Delta(S, i, i') < 0$ and update the topics $\psi$ and assignments $Z$ accordingly. Then we continue to the next mini-topic, hence the term “incremental”.
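The change $\Delta(S, i, i')$ can be evaluated from count matrices alone. The sketch below is one possible way to do so, under the assumption that each topic is stored as a dictionary of word counts together with its token total; it uses the identity $n\,\phi(\psi) = \sum_w c_w \log c_w - n \log n$ for $\psi_w = c_w / n$, so that only the words occurring in the mini-topic and the two totals need to be touched. The names `xlogx` and `refinement_delta` are hypothetical.

```python
import math


def xlogx(c):
    """c * log(c) with the convention 0 * log 0 = 0."""
    return c * math.log(c) if c > 0 else 0.0


def refinement_delta(mini_counts, counts_i, n_i, counts_ip, n_ip, lam, ip_used_in_doc):
    """Change in objective (3) when mini-topic S moves from topic i to topic i'.

    mini_counts    -- {word: count} for the tokens in S (all currently in topic i)
    counts_i, n_i  -- word counts and token total of the source topic i
    counts_ip, n_ip -- word counts and token total of the target topic i'
    lam            -- topic penalty
    ip_used_in_doc -- True if document j already uses topic i' (i' in T_j)
    """
    m = sum(mini_counts.values())                 # |S| = n^i_{j.}
    delta = 0.0
    for u, s in mini_counts.items():
        c_i = counts_i.get(u, 0)
        c_ip = counts_ip.get(u, 0)
        delta += xlogx(c_i) - xlogx(c_i - s)      # source topic loses s copies of u
        delta += xlogx(c_ip) - xlogx(c_ip + s)    # target topic gains s copies of u
    delta += xlogx(n_i - m) - xlogx(n_i)          # source total shrinks by m
    delta += xlogx(n_ip + m) - xlogx(n_ip)        # target total grows by m
    if ip_used_in_doc:
        delta -= lam                              # document j uses one topic fewer
    return delta


if __name__ == "__main__":
    d = refinement_delta({0: 1, 1: 1}, {0: 3, 1: 1}, 4, {1: 2, 2: 2}, 4,
                         lam=2.0, ip_used_in_doc=True)
    print(d)  # negative values indicate an objective-decreasing move
```

The loop runs over the distinct words of the mini-topic only, which is consistent with the $O(|S|)$ cost per candidate move discussed in the text.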
Note here we accept all moves that improve the objective function instead of just the single best move as in traditional approaches [13]. Since $\psi$ and $Z$ are updated in every objective-decreasing move, we randomly permute the processing order of the documents in each iteration. This usually helps in obtaining better results in practice. See Algorithm 2 for details.

At first glance, it appears that this incremental topic refinement strategy may be computationally expensive. However, computing the global change in objective function $\Delta(S, i, i')$ can be performed in $O(|S|)$ time, if the topics are maintained by count matrices. Only the counts involving the words in the mini-topic and the total counts are affected. Since we compute the change across all topics, and across all mini-topics $S$, the total running time of the incremental topic refinement can be seen to be $O(NK)$, as in the basic batch algorithm and the facility location assignment algorithm.

4 Experiments

In this section, we compare the algorithms proposed above with their probabilistic counterparts.

4.1 Synthetic Documents

Our first set of experiments is on simulated data.
We compare three versions of our algorithms, Basic Batch (Basic), Improved Word Assignment (Word), and Improved Word with Topic Refinement (Word+Refine), with the collapsed Gibbs sampler (CGS)[^1] [15], the standard variational inference algorithm (VB)[^2] [9], and the recent Anchor method[^3] [2].

[^1]: http://psiexp.ss.uci.edu/research/programs_data/toolbox.htm

[^2]: http://scikit-learn.org/stable/modules/generated/sklearn.decomposition.LatentDirichletAllocation.html

| Method (number of documents) | 5k | 10k | 50k | 100k | 500k | 1M |
|---|---|---|---|---|---|---|
| CGS (s) | .143 | .321 | 1.96 | 4.31 | 23.36 | 55.69 |
| Word (s) | .438 | .922 | 4.88 | 9.75 | 50.38 | 101.58 |
| Word / CGS | 3.07 | 2.87 | 2.48 | 2.26 | 2.16 | 1.82 |
| Refine (s) | .277 | .533 | 2.58 | 5.09 | 25.75 | 52.28 |
| Refine / CGS | 1.94 | 1.66 | 1.32 | 1.18 | 1.10 | 0.94 |

| Algorithm | 6K | 8K | 10K |
|---|---|---|---|
| CGS | 0.098 | 0.338 | 0.276 |
| VB | 0.448 | 0.443 | 0.392 |
| Anchor | 0.118 | 0.118 | 0.112 |
| Basic | 1.805 | 1.796 | 1.794 |
| Word | 0.582 | 0.537 | 0.504 |
| W+R | 0.155 | 0.110 | 0.105 |
| KMeans | 1.022 | 0.921 | 0.952 |

Figure 1: Left (first table): Running time comparison per iteration (in secs) of CGS to the facility location improved word algorithm (Word) and local refinement (Refine), on data sets of different sizes. Word / CGS and Refine / CGS refer to the ratio of Word and Refine to CGS. For larger datasets, Word takes roughly 2 Gibbs iterations and Refine takes roughly 1 Gibbs iteration. Right (second table): Comparison of topic reconstruction errors of different algorithms with different sizes of SynthB.

Methodology. Due to a lack of ground truth data for topic modeling, following [2], we benchmark on synthetic data. We train all algorithms on the following data sets. (A) Documents sampled from an LDA model with $\alpha = 0.04$, $\beta = 0.05$, with 20 topics and vocabulary size 2000. Each document has length 150. (B) Documents sampled from an LDA model with $\alpha = 0.02$, $\beta = 0.01$, 50 topics and vocabulary size 3000. Each document has length 200. For the collapsed Gibbs sampler, we collect 10 samples with 30 iterations of thinning after 3000 burn-in iterations.
The variational inference runs for 100 iterations. The Word algorithm replaces basic word assignment with the improved word assignment step within the batch algorithm, and Word+Refine further alternates between improved word and incremental topic refinement steps. Word and Word+Refine are run for 20 and 10 iterations respectively. For Basic, Word and Word+Refine, we run experiments with $\lambda \in \{6, 7, 8, 9, 10, 11, 12\}$, and the best results are presented if not stated otherwise. In contrast, the true $\alpha, \beta$ parameters are provided as input to the LDA algorithms, whenever applicable. We note that we have heavily handicapped our methods by this setup, since the LDA algorithms are designed specifically for data from the LDA model.

Assignment accuracy. Both the Gibbs sampler and our algorithms provide word-level topic assignments. Thus we can compare the training accuracy of these assignments, which is shown in Table 1. The result of the Gibbs sampler is given by the highest among all the samples selected. The accuracy is shown in terms of the normalized mutual information (NMI) score and the adjusted Rand index (ARand), which are both in the range of [0, 1] and are standard evaluation metrics for clustering problems. From Table 1, we can see that the performance of Word+Refine matches or slightly outperforms the Gibbs sampler for a wide range of $\lambda$ values.

Topic reconstruction error. Now we look at the reconstruction error between the true topic-word distributions and the learned distributions. In particular, given a learned topic matrix $\hat{\psi}$ and the true matrix $\psi$, we use the Hungarian algorithm [20] to align topics, and then evaluate the $\ell_1$ distance between each pair of topics. Figure 1 presents the mean reconstruction errors per topic of different learning algorithms for varying number of documents.
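The reconstruction-error metric just described can be illustrated with a small dependency-free sketch. Note the substitution: instead of the Hungarian algorithm, this sketch finds the optimal topic alignment by exhaustive search over permutations, which returns the same minimum-cost matching but is only feasible for a handful of topics; for realistic $K$ one would use a proper assignment solver such as `scipy.optimize.linear_sum_assignment`. The function names are hypothetical.

```python
from itertools import permutations


def l1_distance(p, q):
    """l1 distance between two topic-word distributions of equal length."""
    return sum(abs(a - b) for a, b in zip(p, q))


def mean_reconstruction_error(psi_true, psi_hat):
    """Mean per-topic l1 error after optimally aligning learned to true topics.

    Exhaustive search over all K! alignments (a stand-in for the Hungarian
    algorithm; only practical for small K).
    """
    K = len(psi_true)
    cost = [[l1_distance(psi_true[i], psi_hat[k]) for k in range(K)] for i in range(K)]
    best_total = min(
        sum(cost[i][perm[i]] for i in range(K)) for perm in permutations(range(K))
    )
    return best_total / K


if __name__ == "__main__":
    psi_true = [[1.0, 0.0], [0.0, 1.0]]
    psi_hat = [[0.1, 0.9], [0.8, 0.2]]   # learned topics, in swapped order
    print(mean_reconstruction_error(psi_true, psi_hat))
```

The alignment step matters because topic indices are arbitrary: in the example the learned topics are listed in the opposite order from the true ones, and comparing them index by index would greatly overstate the error.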
As a baseline, we also include the results from the k-means algorithm with KL-divergence [5] where each document is assigned to a single topic. We see that, on this data, the Anchor and Word+Refine methods perform the best; see Appendix C for further results and discussion.

[^3]: http://www.cs.nyu.edu/~halpern/code.html

| NMI / ARand (SynthA) | λ = 8 | λ = 9 | λ = 10 | λ = 11 | λ = 12 |
|---|---|---|---|---|---|
| Basic | 0.027 / 0.009 | 0.027 / 0.009 | 0.027 / 0.009 | 0.027 / 0.009 | 0.027 / 0.009 |
| Word | 0.724 / 0.669 | 0.730 / 0.660 | 0.786 / 0.750 | 0.786 / 0.745 | 0.784 / 0.737 |
| Word+Refine | 0.828 / 0.838 | 0.839 / 0.850 | 0.825 / 0.810 | 0.847 / 0.859 | 0.848 / 0.859 |
| CGS | 0.829 / 0.839 | | | | |

| NMI / ARand (SynthB) | λ = 6 | λ = 7 | λ = 8 | λ = 9 | λ = 10 |
|---|---|---|---|---|---|
| Basic | 0.043 / 0.007 | 0.043 / 0.007 | 0.043 / 0.007 | 0.043 / 0.007 | 0.043 / 0.007 |
| Word | 0.850 / 0.737 | 0.854 / 0.743 | 0.855 / 0.752 | 0.855 / 0.750 | 0.850 / 0.743 |
| Word+Refine | 0.922 / 0.886 | 0.926 / 0.901 | 0.913 / 0.860 | 0.923 / 0.899 | 0.914 / 0.876 |
| CGS | 0.917 / 0.873 | | | | |

Table 1: The NMI scores and Adjusted Rand Index (best results in bold) for word assignments of our algorithms for both synthetic datasets with 5000 documents (top: SynthA, bottom: SynthB).

| Enron | β = 0.1 hard | β = 0.1 original | β = 0.1 KL | β = 0.01 hard | β = 0.01 original | β = 0.01 KL | β = 0.001 hard | β = 0.001 original | β = 0.001 KL |
|---|---|---|---|---|---|---|---|---|---|
| CGS | -5.932 | -8.583 | 3.899 | -5.484 | -10.781 | 7.084 | -5.091 | -13.296 | 10.000 |
| W+R | -5.434 | -9.843 | 4.541 | -5.147 | -11.673 | 7.225 | -4.918 | -13.737 | 9.769 |

| NYT | β = 0.1 hard | β = 0.1 original | β = 0.1 KL | β = 0.01 hard | β = 0.01 original | β = 0.01 KL | β = 0.001 hard | β = 0.001 original | β = 0.001 KL |
|---|---|---|---|---|---|---|---|---|---|
| CGS | -6.594 | -9.361 | 4.374 | -6.205 | -11.381 | 7.379 | -5.891 | -13.716 | 10.135 |
| W+R | -6.105 | -10.612 | 5.059 | -5.941 | -12.225 | 7.315 | -5.633 | -14.524 | 9.939 |

Table 2: The predictive word log-likelihood on new documents for Enron ($K = 100$ topics) and NYTimes ($K = 100$ topics) datasets with fixed $\alpha$ value. “hard” is short for hard predictive word log-likelihood, which is computed using the word-topic assignments inferred by the Word algorithm; “original” is short for original predictive word log-likelihood, which is computed using the document-topic distributions inferred by the sampler; and “KL” is short for symmetric KL-divergence.

Running Time. See Figure 1 for comparisons of our approach to CGS. The two most expensive steps of the Word+Refine algorithm are the word assignments via facility location and the local refinement step (the other steps of the algorithm are lower-order). The relative running times improve as the data set sizes get larger and, on large data sets, an iteration of Refine is roughly equivalent to one Gibbs iteration while an iteration of Word is roughly equivalent to two Gibbs iterations. Since one typically runs thousands of Gibbs iterations (while ours runs in 10 iterations even on very large data sets), we can observe several orders of magnitude improvement in speed by our algorithm. Further, running times could be significantly enhanced by noting that the facility location algorithm trivially parallelizes. In addition to these results, we found our per-iteration running times to be consistently faster than VB. See Appendix C for further results on synthetic data, including on using our algorithm as initialization to the collapsed Gibbs sampler.

4.2 Real Documents

We consider two real-world data sets with different properties: a random subset of the Enron emails (8K documents, vocabulary size 5000), and a subset of the New York Times articles[^4] (15K documents, vocabulary size 7000). 1K documents are reserved for predictive performance assessment for both datasets.
We use the following metrics: a “hard” predictive word log-likelihood and the standard probabilistic predictive word log-likelihood on new documents. To get the topic assignments for new documents, we can either perform one iteration of the Word algorithm, which can be used to compute the “hard” predictive log-likelihood, or use MCMC to sample the assignments with the learned topic matrix. Our hard log-likelihood can be viewed as the natural analogue of the standard predictive log-likelihood to our setting. We also compute the symmetric KL-divergence between learned topics.

[^4]: http://archive.ics.uci.edu/ml/machine-learning-databases/bag-of-words/

| Method | Top words |
|---|---|
| CGS | art, artist, painting, museum, century, show, collection, history, french, exhibition |
| W+R | painting, exhibition, portrait, drawing, object, photograph, gallery, flag, artist |
| CGS | plane, flight, airport, passenger, pilot, aircraft, crew, planes, air, jet |
| W+R | flight, plane, passenger, airport, pilot, airline, aircraft, jet, planes, airlines |
| CGS | money, million, fund, donation, pay, dollar, contribution, donor, raising, financial |
| W+R | fund, raising, contribution, donation, raised, donor, soft, raise, finance, foundation |
| CGS | car, driver, truck, vehicles, vehicle, zzz_ford, seat, wheel, driving, drive |
| W+R | car, driver, vehicles, vehicle, truck, wheel, fuel, engine, drive, zzz_ford |

Table 3: Example topic pairs learned from the NYTimes dataset.

To make fair comparisons, we tune the $\lambda$ value such that the resulting number of topics per document is comparable to that of the sampler. We remind the reader of issues raised in the introduction, namely that our combinatorial approach is no longer probabilistic, and therefore would not necessarily be expected to perform well on a standard likelihood-based score. Table 5 shows the results on the Enron and NYTimes datasets.
We can see that our approach excels in the “hard” predictive word log-likelihood but lags in the standard mixture-view predictive word log-likelihood, which is in line with the objectives and reminiscent of the differences between k-means and GMMs. Table 3 further shows some sample topics generated by CGS and our method. See Appendix C for further results on predictive log-likelihood, including comparisons to approaches other than CGS.

5 Conclusions

Our goal has been to lay the groundwork for a combinatorial optimization view of topic modeling as an alternative to the standard probabilistic framework. Small-variance asymptotics provides a natural way to obtain an underlying objective function, using the k-means connection to Gaussian mixtures as an analogy. Potential future work includes distributed implementations for further scalability, adapting k-means-based semi-supervised clustering techniques to this setting, and extensions of k-means++ [4] to derive explicit performance bounds for this problem.

References

[1] A. Anandkumar, Y. Liu, D. J. Hsu, D. P. Foster, and S. M. Kakade. A spectral algorithm for latent Dirichlet allocation. In NIPS, pages 917–925, 2012.
[2] S. Arora, R. Ge, Y. Halpern, D. Mimno, A. Moitra, D. Sontag, Y. Wu, and M. Zhu. A practical algorithm for topic modeling with provable guarantees. In ICML, 2013.
[3] S. Arora, R. Ge, and A. Moitra. Learning topic models–going beyond SVD. In Foundations of Computer Science (FOCS), pages 1–10. IEEE, 2012.
[4] D. Arthur and S. Vassilvitskii. k-means++: The advantages of careful seeding. In ACM-SIAM Symposium on Discrete Algorithms (SODA), 2007.
[5] A. Banerjee, S. Merugu, I. S. Dhillon, and J. Ghosh. Clustering with Bregman divergences. Journal of Machine Learning Research, 6:1705–1749, 2005.
[6] T. Bansal, C. Bhattacharyya, and R. Kannan. A provable SVD-based algorithm for learning topics in dominant admixture corpus. In NIPS, pages 1997–2005, 2014.
[7] D. M. Blei, M. I. Jordan, T. L. Griffiths, and J. B. Tenenbaum. Hierarchical topic models and the nested Chinese restaurant process. In NIPS, 2004.
[8] D. M. Blei and J. D. Lafferty. Correlated topic models. In NIPS, 2006.
[9] D. M. Blei, A. Y. Ng, and M. I. Jordan. Latent Dirichlet allocation. Journal of Machine Learning Research, 3(4-5):993–1022, 2003.
[10] T. Broderick, B. Kulis, and M. I. Jordan. MAD-Bayes: MAP-based asymptotic derivations from Bayes. In ICML, 2013.
[11] T. Campbell, M. Liu, B. Kulis, J. How, and L. Carin. Dynamic clustering via asymptotics of the dependent Dirichlet process. In NIPS, 2013.
[12] S. Deerwester, S. Dumais, T. Landauer, G. Furnas, and R. Harshman. Indexing by latent semantic analysis. Journal of the American Society of Information Science, 41(6):391–407, 1990.
[13] I. S. Dhillon and Y. Guan. Information theoretic clustering of sparse co-occurrence data. In IEEE International Conference on Data Mining (ICDM), 2003.
[14] I. S. Dhillon, Y. Guan, and J. Kogan. Iterative clustering of high dimensional text data augmented by local search. In IEEE International Conference on Data Mining (ICDM), 2002.
[15] T. L. Griffiths and M. Steyvers. Finding scientific topics. Proceedings of the National Academy of Sciences, 101:5228–5235, 2004.
[16] T. Hofmann. Probabilistic latent semantic indexing. In Proc. SIGIR, 1999.
[17] J. H. Huggins, K. Narasimhan, A. Saeedi, and V. K. Mansinghka. JUMP-means: Small-variance asymptotics for Markov jump processes. In ICML, 2015.
[18] K. Jain, M. Mahdian, E. Markakis, A. Saberi, and V. V. Vazirani. Greedy facility location algorithms analyzed using dual fitting with factor-revealing LP. Journal of the ACM, 50(6):795–824, 2003.
[19] K. Jiang, B. Kulis, and M. I. Jordan. Small-variance asymptotics for exponential family Dirichlet process mixture models. In NIPS, 2012.
[20] H. W. Kuhn. The Hungarian method for the assignment problem. Naval Research Logistics Quarterly, 2(1-2):83–97, 1955.
[21] B. Kulis and M. I. Jordan. Revisiting k-means: New algorithms via Bayesian nonparametrics. In ICML, 2012.
[22] J. Lee and S. Choi. Bayesian hierarchical clustering with exponential family: Small-variance asymptotics and reducibility. In Artificial Intelligence and Statistics (AISTATS), 2015.
[23] A. Q. Li, A. Ahmed, S. Ravi, and A. J. Smola. Reducing the sampling complexity of topic models. In ACM SIGKDD, pages 891–900. ACM, 2014.
[24] A. Podosinnikova, F. Bach, and S. Lacoste-Julien. Rethinking LDA: Moment matching for discrete ICA. In NIPS, pages 514–522, 2015.
[25] S. Roweis. EM algorithms for PCA and SPCA. In NIPS, 1997.
[26] A. Roychowdhury, K. Jiang, and B. Kulis. Small-variance asymptotics for hidden Markov models. In NIPS, 2013.
[27] R. Samdani, K.-W. Chang, and D. Roth. A discriminative latent variable model for online clustering. In ICML, 2014.
[28] Y. W. Teh, M. I. Jordan, M. J. Beal, and D. M. Blei. Hierarchical Dirichlet processes. Journal of the American Statistical Association (JASA), 101(476):1566–1581, 2006.
[29] Y. W. Teh, D. Newman, and M. Welling. A collapsed variational Bayesian inference algorithm for latent Dirichlet allocation. In NIPS, 2006.
[30] S. Tong and D. Koller. Restricted Bayes optimal classifiers. In AAAI, 2000.
[31] X. Wang and E. Grimson. Spatial latent Dirichlet allocation. In NIPS, 2007.
[32] Y. Wang and J. Zhu. Small-variance asymptotics for Dirichlet process mixtures of SVMs. In Proc. Twenty-Eighth AAAI Conference on Artificial Intelligence, 2014.
[33] Y. Wang and J. Zhu. DP-space: Bayesian nonparametric subspace clustering with small-variance asymptotics. In ICML, 2015.
[34] I. E. H. Yen, X. Lin, K. Zhang, P. Ravikumar, and I. S. Dhillon. A convex exemplar-based approach to MAD-Bayes Dirichlet process mixture models. In ICML, 2015.
Appendix A Full Derivation of the SVA Objective

Recall the standard Latent Dirichlet Allocation (LDA) model:

• Choose $\theta_j \sim \mathrm{Dir}(\alpha)$, where $j \in \{1, \ldots, M\}$.
• Choose $\psi_i \sim \mathrm{Dir}(\beta)$, where $i \in \{1, \ldots, K\}$.
• For each word $t$ in document $j$:
  – Choose a topic $z_{jt} \sim \mathrm{Cat}(\theta_j)$.
  – Choose a word $w_{jt} \sim \mathrm{Cat}(\psi_{z_{jt}})$.

Here $\alpha$ and $\beta$ are scalar-valued (i.e., we are using a symmetric Dirichlet distribution). Denote by $W$ the vector of all words in all documents, by $Z$ the topic indicators of all words in all documents, by $\theta$ the concatenation of all the $\theta_j$ variables, and by $\psi$ the concatenation of all the $\psi_i$ variables. Also let $N_j$ be the total number of word tokens in document $j$. The $\theta_j$ vectors are each of length $K$, the number of topics. The $\psi_i$ vectors are each of length $D$, the number of words in the dictionary. We can write down the full joint likelihood of the model as

$$p(W, Z, \theta, \psi \mid \alpha, \beta) = \prod_{i=1}^{K} p(\psi_i \mid \beta) \prod_{j=1}^{M} p(\theta_j \mid \alpha) \prod_{t=1}^{N_j} p(z_{jt} \mid \theta_j)\, p(w_{jt} \mid \psi_{z_{jt}}),$$

where each of the probabilities is given as specified in the above model. Now, following standard LDA manipulations, we can eliminate variables to simplify inference by integrating out $\theta$ to obtain

$$p(Z, W, \psi \mid \alpha, \beta) = \int_{\theta} p(W, Z, \theta, \psi \mid \alpha, \beta)\, d\theta.$$

After simplification, we obtain

$$p(Z, W, \psi \mid \alpha, \beta) = \left[ \prod_{i=1}^{K} p(\psi_i \mid \beta) \prod_{j=1}^{M} \prod_{t=1}^{N_j} p(w_{jt} \mid \psi_{z_{jt}}) \right] \times \prod_{j=1}^{M} \left[ \frac{\Gamma(\alpha K)}{\Gamma\bigl(\sum_{i=1}^{K} n^{i}_{j\cdot} + \alpha K\bigr)} \prod_{i=1}^{K} \frac{\Gamma(n^{i}_{j\cdot} + \alpha)}{\Gamma(\alpha)} \right].$$

Here $n^{i}_{j\cdot}$ is the number of word tokens in document $j$ assigned to topic $i$. Now, following [10], we can obtain the SVA objective by taking the log of this likelihood and observing what happens when the variance goes to zero. In order to do this, we must be able to scale the variance of the categorical likelihood, and how to do so is not readily apparent. Here we use two facts about the categorical distribution.
First, as discussed in [5], we can equivalently express the distribution $p(w_{jt} \mid \psi_{z_{jt}})$ in its Bregman divergence form, which will prove amenable to SVA analysis. In particular, Example 10 from [5] details this derivation. In our case we have a categorical distribution, and thus we can write the probability of token $w_{jt}$ as

$$p(w_{jt} \mid \psi_{z_{jt}}) = \exp\bigl(-d_{\phi}(1, \psi_{z_{jt}, w_{jt}})\bigr). \tag{5}$$

Here $d_{\phi}$ is the unique Bregman divergence associated with the categorical distribution, which, as detailed in Example 10 from [5], is the discrete KL divergence, and $\psi_{z_{jt}, w_{jt}}$ is the entry of the topic vector indexed by $z_{jt}$ that corresponds to the word at token $w_{jt}$. This KL divergence will correspond to a single term of the form $x \log(x/y)$, where $x = 1$ since we are considering a single token of a word in a document. Thus, for a particular token, the KL divergence simply equals $-\log \psi_{z_{jt}, w_{jt}}$. Note that when plugging $-\log \psi_{z_{jt}, w_{jt}}$ into (5), we obtain exactly the original probability for word token $w_{jt}$ that we had in the original multinomial distribution. We will write the KL-divergence $d_{\phi}(1, \psi_{z_{jt}, w_{jt}})$ as $\mathrm{KL}(\tilde{w}_{jt}, \psi_{z_{jt}})$, where $\tilde{w}_{jt}$ is an indicator vector for the word at token $w_{jt}$.

Although it may appear that we have gained nothing by this notational manipulation, there is a key advantage to expressing the categorical probability in terms of Bregman divergences. In particular, the second step is to parameterize the Bregman divergence by an additional variance parameter. As discussed in Lemma 3.1 of [19], we can introduce another parameter, which we will call $\eta$, that scales the variance in an exponential family while fixing the mean. This new distribution may be represented, using the Bregman divergence view, as proportional to $\exp\bigl(-\eta \cdot \mathrm{KL}(\tilde{w}_{jt}, \psi_{z_{jt}})\bigr)$.
As $\eta \to \infty$, the mean remains fixed while the variance goes to zero, which is precisely what we require to perform small-variance analysis. We will choose to scale $\alpha$ appropriately as well; this will ensure that the hierarchical form of the model is retained asymptotically. In particular, we will write $\alpha = \exp(-\lambda \cdot \eta)$. Now we consider the full negative log-likelihood:

$$-\log p(Z, W, \psi \mid \alpha, \beta).$$

Let us first derive the asymptotic behavior arising from the Dirichlet-multinomial part of the likelihood, for a given document $j$:

$$\frac{\Gamma(\alpha K)}{\Gamma\bigl(\sum_{i=1}^{K} n^{i}_{j\cdot} + \alpha K\bigr)} \prod_{i=1}^{K} \frac{\Gamma(n^{i}_{j\cdot} + \alpha)}{\Gamma(\alpha)}.$$

In particular, we will show the following lemma.

Lemma 2. Consider the likelihood

$$p(Z \mid \alpha) = \prod_{j=1}^{M} \left[ \frac{\Gamma(\alpha K)}{\Gamma\bigl(\sum_{i=1}^{K} n^{i}_{j\cdot} + \alpha K\bigr)} \prod_{i=1}^{K} \frac{\Gamma(n^{i}_{j\cdot} + \alpha)}{\Gamma(\alpha)} \right].$$

If $\alpha = \exp(-\lambda \cdot \eta)$, then asymptotically as $\eta \to \infty$ we have

$$-\log p(Z \mid \alpha) \sim \eta \lambda \sum_{j=1}^{M} (K_{j+} - 1).$$

Proof. Note that $N_j = \sum_{i=1}^{K} n^{i}_{j\cdot}$. Using standard properties of the $\Gamma$ function, we have that the negative log of the above distribution, for a single document $j$, is equal to

$$\sum_{n=0}^{N_j - 1} \log(\alpha K + n) - \sum_{i=1}^{K} \sum_{n=0}^{n^{i}_{j\cdot} - 1} \log(\alpha + n).$$

All of the logarithmic summands converge to a finite constant whenever they have an additional term besides $\alpha$ or $\alpha K$ inside. The only terms that asymptotically diverge are those of the form $\log(\alpha K)$ or $\log(\alpha)$, that is, when $n = 0$. The first term always occurs. Terms of the type $\log(\alpha)$ occur only when, for the corresponding $i$, we have $n^{i}_{j\cdot} > 0$. Recalling that $\alpha = \exp(-\lambda \cdot \eta)$, we can conclude that the negative log of the Dirichlet-multinomial term becomes asymptotically $\eta \lambda (K_{j+} - 1)$, where $K_{j+}$ is the number of topics $i$ in document $j$ where $n^{i}_{j\cdot} > 0$, i.e., the number of topics currently utilized by document $j$. (The maximum value for $K_{j+}$ is $K$, the total number of topics.)

The rest of the negative log-likelihood is straightforward.
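Before moving on, Lemma 2 can be sanity-checked numerically for a single document. The sketch below is only an illustration and not part of the original derivation: the function names `neg_log_dirichlet_multinomial` and `lemma2_ratio` and the example counts are assumptions made here. It evaluates the exact negative log of the Dirichlet-multinomial term with `math.lgamma` and divides it by the claimed limit $\eta\lambda(K_{j+} - 1)$; the ratio should drift toward 1 as $\eta$ grows.

```python
import math


def neg_log_dirichlet_multinomial(counts, alpha):
    """Exact -log of Gamma(aK)/Gamma(N + aK) * prod_i Gamma(n_i + a)/Gamma(a)
    for one document, where counts[i] is the number of tokens in topic i."""
    num_topics = len(counts)
    total = sum(counts)
    log_p = math.lgamma(alpha * num_topics) - math.lgamma(total + alpha * num_topics)
    log_p += sum(math.lgamma(n + alpha) - math.lgamma(alpha) for n in counts)
    return -log_p


def lemma2_ratio(counts, lam, eta):
    """Ratio of the exact value to eta * lam * (K_plus - 1).

    Assumes the document uses at least two topics, so the denominator is nonzero.
    """
    alpha = math.exp(-lam * eta)  # the scaling alpha = exp(-lambda * eta)
    k_plus = sum(1 for n in counts if n > 0)  # topics actually used
    return neg_log_dirichlet_multinomial(counts, alpha) / (eta * lam * (k_plus - 1))


if __name__ == "__main__":
    example_counts = [5, 3, 0, 2]  # hypothetical document: K = 4, three topics used
    for eta in (10.0, 50.0, 100.0):
        print(eta, lemma2_ratio(example_counts, lam=2.0, eta=eta))
```

The convergence is slow (the gap shrinks like $1/\eta$) because the finite terms in the proof are constants rather than vanishing quantities. Very large $\eta$ makes $\alpha$ underflow in double precision, so the check is only meaningful for moderate values.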
The $-\log p(\psi_i \mid \beta)$ terms vanish asymptotically since we are not scaling $\beta$ (see the note below on scaling $\beta$). Thus, the remaining terms in the SVA objective are the ones arising from the word likelihoods which, after applying a negative logarithm, become

$$-\sum_{j=1}^{M} \sum_{t=1}^{N_j} \log p(w_{jt} \mid \psi_{z_{jt}}).$$

Using the Bregman divergence representation, we can conclude that the negative log-likelihood asymptotically yields the objective

$$-\log p(Z, W, \psi \mid \alpha, \beta) \sim \eta \left[ \sum_{j=1}^{M} \sum_{t=1}^{N_j} \mathrm{KL}(\tilde{w}_{jt}, \psi_{z_{jt}}) + \lambda \sum_{j=1}^{M} (K_{j+} - 1) \right],$$

where $f(x) \sim g(x)$ denotes that $f(x)/g(x) \to 1$ as $x \to \infty$. Dropping the common factor $\eta$ and the additive constant $-\lambda M$, neither of which affects the minimizer, leads to the objective function

$$\min_{Z, \psi} \; \sum_{j=1}^{M} \sum_{t=1}^{N_j} \mathrm{KL}(\tilde{w}_{jt}, \psi_{z_{jt}}) + \lambda \sum_{j=1}^{M} K_{j+}. \tag{6}$$

We remind the reader that $\mathrm{KL}(\tilde{w}_{jt}, \psi_{z_{jt}}) = -\log \psi_{z_{jt}, w_{jt}}$. Thus we obtain a k-means-like term that says that all words in all documents should be “close” to their assigned topic in terms of KL-divergence, but that we should also not have too many topics represented in each document.

Note that we did not scale $\beta$, in order to obtain a simple objective with only one parameter (other than the total number of topics), but let us say a few words about scaling $\beta$. A natural approach is to further integrate $\psi$ out of the joint likelihood, as is done with the collapsed Gibbs sampler. One would obtain additional Dirichlet-multinomial distributions, and properly scaling as discussed above would yield a simple objective that places penalties on the number of topics per document as well as the number of words in each topic. Optimization would be performed only with respect to the topic assignment matrix. Future work would consider the effectiveness of such an objective function for topic modeling.

Algorithm 3 Basic Batch Algorithm

Input: Words $W$; number of topics $K$; topic penalty $\lambda$.

1. Initialize $Z$ and topic vectors $\psi_1, \ldots, \psi_K$.
2. Compute the initial objective function (6) using $Z$ and $\psi$.
3. Repeat until there is no change in the objective function:
   - Update assignments. For every word token $t$ in every document $j$:
     - Compute the distance $d(j, t, i)$ to each topic $i$: $-\log(\psi_{i, w_{jt}})$.
     - If $z_{jt'} \neq i$ for all tokens $t'$ in document $j$ (i.e., topic $i$ is not currently used by document $j$), add $\lambda$ to $d(j, t, i)$.
     - Obtain the assignment via $z_{jt} = \operatorname{argmin}_i d(j, t, i)$.
   - Update topic vectors. For every element $\psi_{iu}$: set $\psi_{iu}$ = (number of occurrences of word $u$ in topic $i$) / (total number of word tokens in topic $i$).
   - Recompute the objective function (6) using the updated $Z$ and $\psi$.

Output: Assignments $Z$.

A.1 Further Details on the Basic Algorithm

Pseudo-code for the basic algorithm is given as Algorithm 3. We briefly elaborate on a few points raised in the main text. First, the running time of the batch algorithm can be shown to be $O(NK)$ per iteration, where $N$ is the total number of word tokens and $K$ is the number of topics. This is because each word token must be compared to every topic, but each comparison can be done in constant time. Updating topics is performed by maintaining a count of the number of occurrences of each word in each topic, which also runs in $O(NK)$ time. Note that the collapsed Gibbs sampler runs in $O(NK)$ time per iteration, and thus has a comparable running time per iteration.

Second, one can also show that this algorithm is guaranteed to converge to a local optimum, similar to k-means and DP-means. The argument follows along similar lines to k-means and DP-means, namely that each updating step cannot increase the objective function. In particular, the update on the topic vectors cannot increase the objective function, since the means are known to be the best representatives for topics based on the results of [5]. The assignment step cannot increase the objective either, since we only re-assign a token if its distance goes down.
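The steps of Algorithm 3 can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: documents are assumed to be lists of integer word ids, the initialization is uniformly random, the set of topics "used" by a document is taken from the assignments before each sweep, ties keep the current assignment, and all function names (`estimate_topics`, `distance`, `objective`, `basic_batch`) are hypothetical.

```python
import math
import random


def estimate_topics(docs, z, num_topics, vocab_size):
    """psi[i][u] = (# tokens of word u in topic i) / (# tokens in topic i)."""
    counts = [[0] * vocab_size for _ in range(num_topics)]
    for doc, z_doc in zip(docs, z):
        for word, topic in zip(doc, z_doc):
            counts[topic][word] += 1
    psi = []
    for row in counts:
        total = sum(row)
        psi.append([c / total if total else 0.0 for c in row])
    return psi


def distance(psi, topic, word):
    """KL term -log psi[topic][word]; infinite if the topic never emits the word."""
    p = psi[topic][word]
    return -math.log(p) if p > 0.0 else math.inf


def objective(docs, z, psi, lam):
    """Objective (6): token distances plus lam times the topics used per document."""
    total = 0.0
    for doc, z_doc in zip(docs, z):
        total += sum(distance(psi, k, w) for w, k in zip(doc, z_doc))
        total += lam * len(set(z_doc))
    return total


def basic_batch(docs, num_topics, vocab_size, lam, max_iters=100, seed=0):
    rng = random.Random(seed)
    z = [[rng.randrange(num_topics) for _ in doc] for doc in docs]
    psi = estimate_topics(docs, z, num_topics, vocab_size)
    trace = [objective(docs, z, psi, lam)]
    for _ in range(max_iters):
        new_z = []
        for doc, z_doc in zip(docs, z):
            used = set(z_doc)  # topics used by this document before the sweep
            new_doc = []
            for word, current in zip(doc, z_doc):
                best, best_cost = current, distance(psi, current, word)
                for topic in range(num_topics):
                    cost = distance(psi, topic, word)
                    if topic not in used:
                        cost += lam  # opening a new topic in this document costs lam
                    if cost < best_cost:  # strict: ties keep the current topic
                        best, best_cost = topic, cost
                new_doc.append(best)
            new_z.append(new_doc)
        z = new_z
        psi = estimate_topics(docs, z, num_topics, vocab_size)
        trace.append(objective(docs, z, psi, lam))
        if trace[-1] >= trace[-2] - 1e-12:  # no further decrease: stop
            break
    return z, psi, trace


if __name__ == "__main__":
    toy_docs = [[0, 0, 1, 1, 0], [2, 3, 3, 2, 2], [0, 1, 2, 3, 0, 1]]
    _, _, history = basic_batch(toy_docs, num_topics=3, vocab_size=4, lam=1.0, seed=1)
    print(history)
```

Returning the objective trace makes the monotonicity claim easy to inspect on toy data: each entry should be no larger than the one before it, up to floating-point rounding.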
Further, we only re-assign a token to a topic that is not currently used by the document if the distance to its current topic exceeds the distance to the new topic by more than $\lambda$, thus accounting for the additional $\lambda$ that must be paid in the objective function.

Appendix B An Efficient Facility Location Algorithm for Improved Word Assignments

In this section, we describe an efficient $O(NK)$ algorithm based on facility location for obtaining the word assignments. Recall the algorithm, given earlier as Algorithm 1. Our first observation is that, for a fixed size of $T$ and a given $i$, the best choice of $T$ is obtained by selecting the $|T|$ closest tokens to $\psi_i$ in terms of the KL-divergence. Thus, as a first pass, we can obtain the correct points to mark by appropriately sorting the KL-divergences of all tokens to all topics, and then searching over all sizes of $T$ and topics $i$.

Next we make three observations about the sorting procedure. First, the KL-divergence between a word and a topic depends purely on counts of words within topics; recall that it is of the form $-\log \psi_{iu}$, where $\psi_{iu}$ equals the number of occurrences of word $u$ in topic $i$ divided by the total number of word tokens assigned to $i$. Thus, for a given topic, the sorted words are obtained exactly by sorting word counts within a topic in decreasing order. Second, because the word counts are all integers, we can use a linear-time sorting algorithm such as counting sort or radix sort to efficiently sort the items. In the case of counting sort, for instance, if we have $n$ integers whose maximum value is $k$, the total running time is $O(n + k)$; the storage requirement is also $O(n + k)$. In our case, we perform many sorts. Each sort considers, for a fixed document $j$, sorting word counts to some topic $i$. Suppose there are $n^{i}_{j\cdot}$ tokens with non-zero counts to the topic, with maximum word count $m^{i}_{j}$. Then the running time of this sort is $O(n^{i}_{j\cdot} + m^{i}_{j})$.
Across the document, we do this for every topic, making the running time scale as $O\bigl(\sum_i (n^{i}_{j\cdot} + m^{i}_{j})\bigr) = O(n^{\cdot}_{j\cdot} K)$, where $n^{\cdot}_{j\cdot}$ is the number of word tokens in document $j$. Across all documents this sorting then takes $O(NK)$ time. Third, we note that we need only sort once per run of the algorithm. Once we have sorted lists for words to topics, if we mark some set $T$, we can efficiently remove these words from the sorted lists and keep the updated lists in sorted order. Removing an individual word from a single sorted list can be done in constant time by maintaining appropriate pointers. Since each word token is removed exactly once during the algorithm, and must be removed from each topic, the total time to update the sorted lists during the algorithm is $O(NK)$.

At this point, we still do not have a procedure that runs in $O(NK)$ time. In particular, we must find the minimum of

$$\frac{f_i + \sum_{t \in T} \mathrm{KL}(\tilde{w}_{jt}, \psi_i)}{|T|}$$

at each round of marking. Naively this is performed by traversing the sorted lists and accumulating the value of the above score via summation. In the worst case, each round would take a total of $O(NK)$ time across all documents, so if there are $R$ rounds on average across all the documents, the total running time would be $O(NKR)$. However, we can observe that we need not traverse entire sorted lists in general. Consider a fixed document, where we try to find the best set $T$ by traversing all possible sizes of $T$. We can show that, as we increase the size of $T$, the value of the score function monotonically decreases until hitting the minimum value, and then monotonically increases afterward. We can formalize the monotonicity of the scoring function as follows:

Proposition 1. Let $s_{ti}$ be the value of the scoring function (4) for the best candidate set $T$ of size $t$ for topic $i$. If $s_{t-1,i} \le s_{ti}$, then $s_{ti} \le s_{t+1,i}$.

Proof.
Recall that the KL-divergence is equal to the negative logarithm of the number of occurrences of the corresponding word token divided by the total number of occurrences of tokens in the topic. Write this as $\log n^{i}_{\cdot\cdot} - \log c_{i\ell}$, where $n^{i}_{\cdot\cdot}$ is the number of occurrences of tokens in topic $i$ and $c_{i\ell}$ is the count of the $\ell$-th highest-count word in topic $i$. Now, by assumption $s_{t-1,i} \le s_{ti}$. Plugging the score functions into this inequality and cancelling the $\log n^{i}_{\cdot\cdot}$ terms, we have

$$-\frac{1}{t-1} \sum_{\ell=1}^{t-1} \log c_{i\ell} + \frac{f_i}{t-1} \;\le\; -\frac{1}{t} \sum_{\ell=1}^{t} \log c_{i\ell} + \frac{f_i}{t}.$$

Multiplying by $t(t-1)$ and simplifying yields the inequality

$$f_i + t \log c_{it} \;\le\; \sum_{\ell=1}^{t} \log c_{i\ell}.$$

Now, assuming this holds for $s_{t-1,i}$ and $s_{t,i}$, we must show that this inequality also holds for $s_{t,i}$ and $s_{t+1,i}$, i.e., that

$$f_i + (t+1) \log c_{i,t+1} \;\le\; \sum_{\ell=1}^{t+1} \log c_{i\ell}.$$

Simple algebraic manipulation and the fact that the counts are sorted, i.e., $\log c_{i,t+1} \le \log c_{it}$, shows the inequality to hold.

| Objective value ($\times 10^{6}$) | SynthA | SynthB |
|---|---|---|
| Basic | 5.07 | 5.45 |
| Word | 4.06 | 3.79 |
| Word+Refine | 3.98 | 3.61 |

Table 4: Optimized combinatorial topic modeling objective function values for different algorithms with $\lambda = 10$.

In words, the above proof demonstrates that, once the scoring function stops decreasing, it will not decrease any further, i.e., the minimum score has been found. Thus, once the score function starts to increase as $T$ gets larger, we can stop and the best score (i.e., the best set $T$) for that topic $i$ has been found. We do this for all topics $i$ until we find the overall best set $T$ for marking. Under the mild assumption that the size of the chosen minimizer $T$ is similar (specifically, within a constant factor) to the average size of the best candidate sets $T$ across the other topics (an assumption which holds in practice), it follows that finding all the sets $T$ takes $O(NK)$ time in total.
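The early-stopping scan justified by Proposition 1 can be sketched for a single topic as follows. This is a hypothetical illustration rather than the authors' code: the function name `best_prefix`, its arguments, and the example numbers are assumptions, and the input is taken to be the per-token word counts for one topic, already sorted in decreasing order and all at least 1.

```python
import math


def best_prefix(sorted_counts, topic_total, facility_cost):
    """Scan candidate sets T = the first t tokens and stop once the score rises.

    sorted_counts: word counts c_1 >= c_2 >= ... for the candidate tokens.
    topic_total:   total number of tokens in the topic (n_i in the proof).
    facility_cost: the facility cost f_i for this topic.
    Returns (best_size, best_score), with score
    (f_i + sum_{l <= t} (log n_i - log c_l)) / t.
    """
    best_size, best_score = 0, math.inf
    running = facility_cost
    for size, count in enumerate(sorted_counts, start=1):
        running += math.log(topic_total) - math.log(count)
        score = running / size
        if score >= best_score:
            # By Proposition 1 the score cannot decrease again, so stop here.
            break
        best_size, best_score = size, score
    return best_size, best_score


if __name__ == "__main__":
    counts = [50, 40, 40, 10, 3, 1]  # hypothetical sorted counts
    print(best_prefix(counts, topic_total=200, facility_cost=2.0))
```

Because the scan stops at the first non-decreasing step, its cost is proportional to the size of the best set plus one, rather than to the length of the whole sorted list.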
Putting everything together, all the steps of this algorithm combine to cost $O(NK)$ time.

Appendix C Additional Experimental Results

Objective optimization. Table 4 shows the optimized objective function values for all three proposed algorithms. We can see that the Word algorithm significantly reduces the objective value when compared with the Basic algorithm, and the Word+Refine algorithm reduces it further. As pointed out in [34] in the context of other SVA models, the Basic algorithm is very sensitive to initialization. However, this is not the case for the Word and Word+Refine algorithms, which are quite robust to initialization. From the objective values, the improvement of Word+Refine over Word seems to be marginal. But we will show in the following that the incorporation of the topic refinement is crucial for learning good topic models.

Evolution of the Gibbs Sampler. The Gibbs sampler can easily become trapped in a local optimum and needs many iterations on large data sets, which can be seen from Figure 2. Since our algorithm outputs $Z$, we can use this assignment as initialization to the sampler. In Figure 2, we also show the evolution of the topic reconstruction $\ell_1$ error when the sampler is initialized with the assignment obtained by running Word+Refine for 3 iterations, with varying values of $\lambda$. With these semi-optimized initializations, we observe more than a 5-fold speed-up compared to random initializations.

Topic Reconstruction Error. In the main text, we observed that the Anchor method is the most competitive with Word+Refine on larger synthetic data sets, but that Word+Refine still outperforms Anchor for these larger data sets. We found this to be true as we scale up further; for instance, for 20,000 documents from the SynthB data, Anchor achieves a topic reconstruction score of 0.103 while Word+Refine achieves 0.095.

Log likelihood comparisons on real data.
Table 5 contains further predictive log-likelihood results on the Enron and NYTimes data sets. Here we also show results on VB, which also indicate (as expected) that our approach does well with respect to the hard log-likelihood score but less well on the original log-likelihood score. We omit the results of the Anchor method since it cannot adjust its result on different combinations of $\alpha$ and $\beta$ values (footnote 5). In Figure 3, we show the evolution of the predictive held-out log-likelihood of the Gibbs sampler on the Enron dataset when it is initialized with the assignment obtained by running Word+Refine for 3 iterations. With these semi-optimized initializations, we also observed significant speed-up compared to random initializations.

[Figure 2 plot: "L1 Error vs. Iterations"; x-axis: Iterations (0 to 5000); y-axis: L1 Error (0.0 to 0.5); one curve per initialization: Random, lambda=6, lambda=8, lambda=10, lambda=12.]

Figure 2: The evolution of topic reconstruction $\ell_1$ errors of the Gibbs sampler with different initializations: “Random” means random initialization, and “lambda=6” means initializing with the assignment learned using the Word+Refine algorithm with $\lambda = 6$ (best viewed in color).

[Figure 3 plot: "Test Log-Likelihood vs. Iterations"; x-axis: Iterations (0 to 3000); y-axis: Test log-likelihood (-12.0 to -10.5); one curve per initialization: Random, W+R Init.]

Figure 3: The evolution of the predictive word log-likelihood of the Enron dataset with different initializations: “Random” means random initialization, and “W+R Init” means initializing with the assignment learned using the Word+Refine algorithm.

| Dataset | Method | hard ($\beta = 0.1$) | original ($\beta = 0.1$) | KL ($\beta = 0.1$) | hard ($\beta = 0.01$) | original ($\beta = 0.01$) | KL ($\beta = 0.01$) | hard ($\beta = 0.001$) | original ($\beta = 0.001$) | KL ($\beta = 0.001$) |
|---|---|---|---|---|---|---|---|---|---|---|
| Enron | CGS | -5.932 | -8.583 | 3.899 | -5.484 | -10.781 | 7.084 | -5.091 | -13.296 | 10.000 |
| Enron | VB | -6.007 | -8.803 | 4.528 | -5.610 | -10.202 | 7.010 | -5.334 | -11.472 | 9.010 |
| Enron | W+R | -5.434 | -9.843 | 4.541 | -5.147 | -11.673 | 7.225 | -4.918 | -13.737 | 9.769 |
| NYT | CGS | -6.594 | -9.361 | 4.374 | -6.205 | -11.381 | 7.379 | -5.891 | -13.716 | 10.135 |
| NYT | VB | -6.470 | -10.077 | 5.666 | -6.269 | -11.509 | 7.803 | -6.023 | -12.832 | 9.691 |
| NYT | W+R | -6.105 | -10.612 | 5.059 | -5.941 | -12.225 | 7.315 | -5.633 | -14.524 | 9.939 |

Table 5: The predictive word log-likelihood on new documents for the Enron ($K = 100$ topics) and NYTimes ($K = 100$ topics) datasets with fixed $\alpha$ value. “hard” is short for the hard predictive word log-likelihood, which is computed using the word-topic assignments inferred by the Word algorithm; “original” is short for the original predictive word log-likelihood, which is computed using the document-topic distributions inferred by the sampler; and “KL” is short for the symmetric KL-divergence.

Footnote 5: We also observed that there are 0 entries in the learned topic matrix, which makes it difficult to compute the predictive log-likelihood.
