Evasion and Hardening of Tree Ensemble Classifiers

Classifier evasion consists in finding for a given instance $x$ the nearest instance $x’$ such that the classifier predictions of $x$ and $x’$ are different. We present two novel algorithms for systematically computing evasions for tree ensembles such as boosted trees and random forests. Our first algorithm uses a Mixed Integer Linear Program solver and finds the optimal evading instance under an expressive set of constraints. Our second algorithm trades off optimality for speed by using symbolic prediction, a novel algorithm for fast finite differences on tree ensembles. On a digit recognition task, we demonstrate that both gradient boosted trees and random forests are extremely susceptible to evasions. Finally, we harden a boosted tree model without loss of predictive accuracy by augmenting the training set of each boosting round with evading instances, a technique we call adversarial boosting.

💡 Research Summary

The paper addresses the problem of adversarial evasion for tree‑based ensemble classifiers such as Gradient Boosted Trees (GBT) and Random Forests (RF). Evasion is defined as finding, for a given input x, the smallest perturbation δ (according to a chosen distance metric) that changes the classifier’s prediction. The authors propose two complementary algorithms.

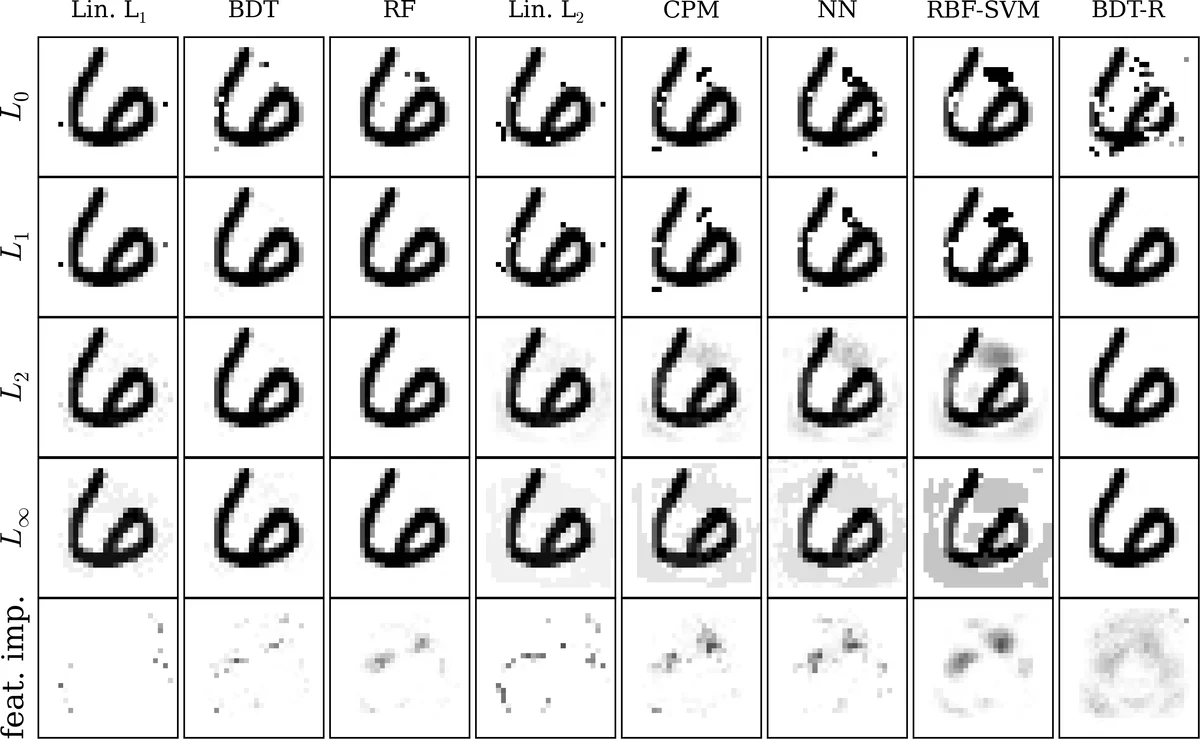

The first algorithm formulates the evasion problem as a Mixed‑Integer Linear Program (MILP). The model’s internal predicates (e.g., x_i < τ) are represented by binary variables p, and each leaf’s activation is represented by a continuous variable l that is forced to be binary by the constraints. Consistency constraints enforce the logical ordering of predicates on the same feature, leaf‑selection constraints ensure exactly one leaf per tree is active, and a mis‑classification constraint forces the sign of the ensemble output to flip. By avoiding “big‑M” constants and using tight formulations, the MILP can solve optimal evasion for L₀, L₁, L₂, and L∞ distances in reasonable time for typical GBT/RF models.

The second algorithm, called symbolic prediction, provides a fast approximate solution. It traverses each tree symbolically, keeping track of which predicates are satisfied for a given feature vector. By computing the change in the ensemble’s output for a unit change in each feature (finite differences), the method selects the feature that yields the largest increase in loss and updates it iteratively. This coordinate‑descent style approach runs in near‑linear time with respect to the number of trees and features, allowing the generation of millions of adversarial examples quickly, albeit without a guarantee of optimality.

Experiments are conducted on a handwritten digit recognition task (a MNIST‑like dataset). The authors compare seven models: L₁‑regularized logistic regression, L₂‑regularized logistic regression, a max‑ensemble of linear classifiers (shallow max‑out), a three‑layer deep neural network, an RBF‑SVM, a Gradient Boosted Tree model, and a Random Forest. All models are tuned to achieve comparable accuracy (~98%). For each model, the minimal L₂ perturbation required to flip the label is measured. Both GBT and RF require dramatically smaller perturbations (roughly 0.03–0.05) than the other models (≈0.12–0.15), indicating that tree ensembles are intrinsically more brittle despite high predictive performance.

To improve robustness, the authors introduce “adversarial boosting”. During each boosting round, they generate adversarial examples for the current model using the fast symbolic‑prediction method, label them with the opposite class, and add them to the training set for the next round. This forces subsequent trees to learn decision boundaries that are less sensitive to small feature changes. The hardened GBT model shows a 1.8‑fold increase in the average L₂ perturbation needed for successful evasion, while its test accuracy remains essentially unchanged (within 0.1%).

The paper also proves that deciding whether a tree‑ensemble can be made to output a positive value is NP‑complete via a reduction from 3‑SAT, establishing that the evasion feasibility problem is computationally hard in the worst case. Nonetheless, the practical MILP and symbolic‑prediction methods exploit the structure of real‑world ensembles to solve the problem efficiently.

In summary, the contributions are: (1) a formal definition of optimal evasion for tree ensembles; (2) an exact MILP formulation that handles multiple distance metrics; (3) a novel symbolic‑prediction algorithm for fast approximate evasion; (4) extensive empirical evidence that tree ensembles are more vulnerable than linear models, DNNs, or SVMs on a digit task; and (5) a practical adversarial‑boosting procedure that hardens tree ensembles without sacrificing accuracy. The work extends adversarial‑learning research beyond differentiable models, offering concrete tools for assessing and improving the security of widely deployed non‑linear, non‑differentiable classifiers.

Comments & Academic Discussion

Loading comments...

Leave a Comment