Practitioners' Perspectives on Change Impact Analysis for Safety-Critical Software - A Preliminary Analysis

Safety standards prescribe change impact analysis (CIA) during evolution of safety-critical software systems. Although CIA is a fundamental activity, there is a lack of empirical studies about how it is performed in practice. We present a case study on CIA in the context of an evolving automation system, based on 14 interviews in Sweden and India. Our analysis suggests that engineers on average spend 50-100 hours on CIA per year, but the effort varies considerably with the phases of projects. Also, the respondents presented different connotations to CIA and perceived the importance of CIA differently. We report the most pressing CIA challenges, and several ideas on how to support future CIA. However, we show that measuring the effect of such improvement solutions is non-trivial, as CIA is intertwined with other development activities. While this paper only reports preliminary results, our work contributes empirical insights into practical CIA.

💡 Research Summary

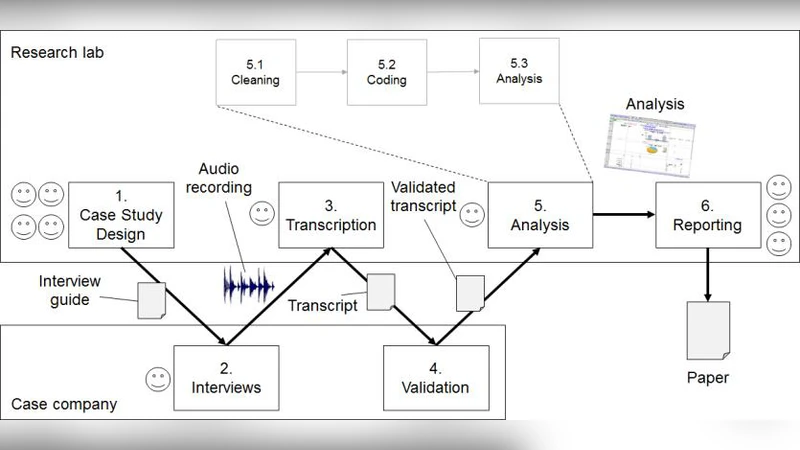

The paper investigates how Change Impact Analysis (CIA), a mandatory activity in safety‑critical software development, is actually performed in industry, and what practitioners think about its purpose, effort, and challenges. The authors conducted a case study based on semi‑structured interviews with fourteen engineers from two organizations that develop an evolving automation system, one located in Sweden and the other in India. All participants work under safety standards such as ISO 26262 or IEC 61508, which require systematic CIA whenever the software changes.

The interview data reveal that, on average, engineers devote between 50 and 100 person‑hours per year to CIA, but the distribution of effort is highly uneven across project phases. In early requirement‑definition stages, CIA activity is minimal because changes are not yet concrete. Conversely, during design, implementation, integration, and testing phases, the effort spikes dramatically, especially when modifications affect multiple modules or external interfaces. In some cases, a single large change can consume more than 150 hours of CIA work.

A striking finding is the lack of a shared definition of CIA among the respondents. Some view CIA narrowly as “identifying directly affected code files,” producing simple lists of changed files and their callers. Others adopt a broader perspective that includes “re‑assessment of system‑level safety arguments,” linking impact analysis to risk assessment and safety case updates. This divergence influences the depth of documentation, the traceability artifacts produced, and ultimately the ability to demonstrate compliance during certification audits.

Perceptions of CIA’s importance also vary by role. Developers, pressed by schedule constraints, tend to treat CIA as a “necessary but peripheral” activity and often rely on outdated traceability matrices. Quality‑assurance and safety engineers, however, stress that CIA provides critical evidence for safety certification and argue that it must be tightly coupled with risk re‑evaluation and regression testing. The mismatch in perception leads to inconsistent collaboration: CIA results are sometimes isolated from other development activities, causing duplicated effort and missed safety insights.

Four major challenges emerged from the interviews. First, pinpointing the scope of a change is difficult, especially in complex, highly modular systems where a single modification can ripple through many components. Second, existing documentation (design specifications, requirements traceability, test cases) is frequently out‑of‑date, making manual impact tracing labor‑intensive and error‑prone. Third, there is a scarcity of automation tools that fit the organizations’ processes; most CIA work remains manual. Fourth, integrating CIA outcomes with downstream activities such as regression testing, risk analysis, and change management lacks a systematic methodology, leading to fragmented workflows.
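The first challenge, a single modification rippling through many components, can be illustrated with a small sketch. This is not the authors' method or a tool used by the interviewed organizations; it is a minimal example that assumes a component-level dependency map is available, and the component names are hypothetical. It walks the reversed dependency graph breadth-first to collect everything that directly or transitively depends on a changed component.

```python
from collections import deque


def impacted_components(depends_on, changed):
    """Return the changed components plus everything that depends on them,
    directly or transitively."""
    # Invert the map: for each component, record who depends on it.
    dependents = {}
    for component, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(component)

    impacted = set(changed)
    queue = deque(changed)
    while queue:
        current = queue.popleft()
        for dependent in dependents.get(current, ()):
            if dependent not in impacted:
                impacted.add(dependent)
                queue.append(dependent)
    return impacted


# Hypothetical automation-system components (assumption, not from the paper).
depends_on = {
    "hmi": {"controller"},
    "controller": {"io_driver", "safety_monitor"},
    "safety_monitor": {"io_driver"},
    "logger": set(),
    "io_driver": set(),
}

print(sorted(impacted_components(depends_on, ["io_driver"])))
# → ['controller', 'hmi', 'io_driver', 'safety_monitor']
```

The result is only as good as the dependency map behind it, which is why the second challenge (out‑of‑date documentation) undermines even a simple automated approach like this one.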

To address these issues, participants suggested several improvement ideas: (a) adopting a model‑based traceability matrix that automatically maps change elements to affected architectural components; (b) implementing a formalized, tool‑supported safety‑risk re‑calculation engine that updates hazard analyses as soon as a change is logged; and (c) embedding CIA into continuous integration/continuous delivery pipelines so that impact analysis triggers targeted regression tests and updates safety arguments automatically. However, the authors caution that measuring the benefits of such interventions is non‑trivial. Because CIA is tightly intertwined with many other development activities, a single metric (e.g., reduced hours or fewer defects) cannot capture the full effect; a multi‑dimensional evaluation framework would be required.
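Ideas (a) and (c) can be sketched together in a few lines. The example below is a hedged illustration, not a description of any tool from the study: `TraceEntry`, `select_follow_up`, the test names, and the hazard IDs are all hypothetical. It assumes a traceability matrix that maps each component to its regression tests and related hazards, and it shows how a pipeline step could turn a set of impacted components into targeted tests, hazards to re‑assess, and a list of components with no trace data.

```python
from dataclasses import dataclass, field


@dataclass
class TraceEntry:
    """Trace links for one component (hypothetical structure)."""
    tests: set = field(default_factory=set)
    hazards: set = field(default_factory=set)


def select_follow_up(trace, impacted):
    """Map impacted components to regression tests and hazards to re-assess.

    Components missing from the matrix are reported separately so that
    gaps in traceability are visible instead of silently ignored.
    """
    tests, hazards, untraced = set(), set(), []
    for component in impacted:
        entry = trace.get(component)
        if entry is None:
            untraced.append(component)
            continue
        tests |= entry.tests
        hazards |= entry.hazards
    return sorted(tests), sorted(hazards), sorted(untraced)


# Hypothetical traceability matrix (assumption, not from the paper).
trace = {
    "controller": TraceEntry(
        tests={"test_control_loop", "test_safe_state"},
        hazards={"HAZ-02"},
    ),
    "io_driver": TraceEntry(
        tests={"test_io_timing"},
        hazards={"HAZ-01", "HAZ-02"},
    ),
}

tests, hazards, untraced = select_follow_up(trace, {"controller", "io_driver", "hmi"})
print(tests)     # → ['test_control_loop', 'test_io_timing', 'test_safe_state']
print(hazards)   # → ['HAZ-01', 'HAZ-02']
print(untraced)  # → ['hmi']
```

Reporting untraced components separately is a deliberate choice: in a safety‑critical setting, a component with no trace links is a finding to review manually, not evidence that nothing is affected.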

In summary, the study provides empirical evidence that CIA remains a resource‑intensive, cognitively demanding activity in safety‑critical software projects, and that a gap exists between the prescriptive standards and the practical realities faced by engineers. The diversity of definitions, the role‑dependent perception of importance, and the identified challenges highlight the need for better tooling, clearer process integration, and systematic evaluation methods. The authors view their preliminary findings as a foundation for future longitudinal studies that could quantify the impact of proposed improvements and guide the evolution of CIA practices in safety‑critical domains.
