Neural Machine Translation by Jointly Learning to Align and Translate


Authors: Dzmitry Bahdanau, Kyunghyun Cho, Yoshua Bengio

Published as a conference paper at ICLR 2015

Dzmitry Bahdanau, Jacobs University Bremen, Germany
KyungHyun Cho and Yoshua Bengio*, Université de Montréal

ABSTRACT

Neural machine translation is a recently proposed approach to machine translation. Unlike traditional statistical machine translation, neural machine translation aims at building a single neural network that can be jointly tuned to maximize translation performance. The models recently proposed for neural machine translation often belong to a family of encoder–decoders: they encode a source sentence into a fixed-length vector from which a decoder generates a translation. In this paper, we conjecture that the use of a fixed-length vector is a bottleneck in improving the performance of this basic encoder–decoder architecture. We propose to extend it by allowing a model to automatically (soft-)search for parts of a source sentence that are relevant to predicting a target word, without having to form these parts as a hard segment explicitly. With this new approach, we achieve a translation performance comparable to the existing state-of-the-art phrase-based system on the task of English-to-French translation. Furthermore, qualitative analysis reveals that the (soft-)alignments found by the model agree well with our intuition.

1 INTRODUCTION

Neural machine translation is a newly emerging approach to machine translation, recently proposed by Kalchbrenner and Blunsom (2013), Sutskever et al. (2014) and Cho et al. (2014b). The traditional phrase-based translation system (see, e.g., Koehn et al., 2003) consists of many small sub-components that are tuned separately. Neural machine translation instead attempts to build and train a single, large neural network that reads a sentence and outputs a correct translation.
Most of the proposed neural machine translation models belong to a family of encoder–decoders (Sutskever et al., 2014; Cho et al., 2014a), with an encoder and a decoder for each language. Others involve a language-specific encoder applied to each sentence, whose outputs are then compared (Hermann and Blunsom, 2014). An encoder neural network reads and encodes a source sentence into a fixed-length vector. A decoder then outputs a translation from the encoded vector. The whole encoder–decoder system, which consists of the encoder and the decoder for a language pair, is jointly trained to maximize the probability of a correct translation given a source sentence.

A potential issue with this encoder–decoder approach is that a neural network needs to be able to compress all the necessary information of a source sentence into a fixed-length vector. This may make it difficult for the neural network to cope with long sentences, especially those that are longer than the sentences in the training corpus. Cho et al. (2014b) showed that the performance of a basic encoder–decoder does indeed deteriorate rapidly as the length of an input sentence increases.

To address this issue, we introduce an extension to the encoder–decoder model that learns to align and translate jointly. Each time the proposed model generates a word in a translation, it (soft-)searches for a set of positions in a source sentence where the most relevant information is concentrated. The model then predicts a target word based on the context vectors associated with these source positions and all the previously generated target words.

* CIFAR Senior Fellow

The most important feature distinguishing this approach from the basic encoder–decoder is that it does not attempt to encode a whole input sentence into a single fixed-length vector.
Instead, it encodes the input sentence into a sequence of vectors and chooses a subset of these vectors adaptively while decoding the translation. This frees a neural translation model from having to squash all the information of a source sentence, regardless of its length, into a fixed-length vector. We show this allows a model to cope better with long sentences.

In this paper, we show that the proposed approach of jointly learning to align and translate achieves significantly improved translation performance over the basic encoder–decoder approach. The improvement is more apparent with longer sentences, but can be observed with sentences of any length. On the task of English-to-French translation, the proposed approach achieves, with a single model, a translation performance comparable, or close, to the conventional phrase-based system. Furthermore, qualitative analysis reveals that the proposed model finds a linguistically plausible (soft-)alignment between a source sentence and the corresponding target sentence.

2 BACKGROUND: NEURAL MACHINE TRANSLATION

From a probabilistic perspective, translation is equivalent to finding a target sentence $y$ that maximizes the conditional probability of $y$ given a source sentence $x$, i.e., $\arg\max_y p(y \mid x)$. In neural machine translation, we fit a parameterized model to maximize the conditional probability of sentence pairs using a parallel training corpus. Once a translation model has learned the conditional distribution, it can translate a given source sentence by searching for the target sentence that maximizes the conditional probability.

Recently, a number of papers have proposed the use of neural networks to directly learn this conditional distribution (see, e.g., Kalchbrenner and Blunsom, 2013; Cho et al., 2014a; Sutskever et al., 2014; Cho et al., 2014b; Forcada and Ñeco, 1997).
This neural machine translation approach typically consists of two components: the first encodes a source sentence $x$, and the second decodes it into a target sentence $y$. For instance, Cho et al. (2014a) and Sutskever et al. (2014) used two recurrent neural networks (RNN) to encode a variable-length source sentence into a fixed-length vector and to decode the vector into a variable-length target sentence.

Despite being a quite new approach, neural machine translation has already shown promising results. Sutskever et al. (2014) reported that neural machine translation based on RNNs with long short-term memory (LSTM) units comes close to the state-of-the-art performance of the conventional phrase-based machine translation system on an English-to-French translation task.¹ Adding neural components to existing translation systems has made it possible to surpass the previous state-of-the-art performance level. Examples include scoring the phrase pairs in the phrase table (Cho et al., 2014a) and re-ranking candidate translations (Sutskever et al., 2014).

2.1 RNN ENCODER–DECODER

Here, we briefly describe the underlying framework, called RNN Encoder–Decoder, proposed by Cho et al. (2014a) and Sutskever et al. (2014). We build on it a novel architecture that learns to align and translate simultaneously.

In the Encoder–Decoder framework, an encoder reads the input sentence, a sequence of vectors $x = (x_1, \ldots, x_{T_x})$, into a vector $c$.² The most common approach is to use an RNN such that

$$h_t = f(x_t, h_{t-1}) \tag{1}$$

and

$$c = q(\{h_1, \ldots, h_{T_x}\}),$$

where $h_t \in \mathbb{R}^n$ is a hidden state at time $t$, and $c$ is a vector generated from the sequence of the hidden states. $f$ and $q$ are some nonlinear functions. Sutskever et al. (2014), for instance, used an LSTM as $f$ and $q(\{h_1, \ldots, h_{T_x}\}) = h_{T_x}$.
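The plain-Python code below is a minimal sketch of Eq. (1), with $q$ taken to be the last hidden state. Several parts are assumptions for illustration and do not come from the cited models, which use LSTM or gated units:

- the tanh cell and the zero initial state;
- the names `encode_fixed`, `rnn_step` and `matvec`;
- all weight values.

The sketch shows that $c$ has the same size whether the sentence has 1 word or 50.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Dense matrix-vector product on plain Python lists."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def rnn_step(x_t: Vector, h_prev: Vector, w_in: Matrix, w_rec: Matrix) -> Vector:
    """One application of f in Eq. (1), assumed here to be tanh(W x_t + U h_{t-1})."""
    return [math.tanh(a + b) for a, b in zip(matvec(w_in, x_t), matvec(w_rec, h_prev))]


def encode_fixed(xs: List[Vector], w_in: Matrix, w_rec: Matrix) -> Vector:
    """Read x_1 .. x_{T_x} and return c = q({h_1, ..., h_{T_x}}) = h_{T_x}."""
    h = [0.0] * len(w_rec)  # h_0 = 0 (an assumed initial state)
    for x_t in xs:
        h = rnn_step(x_t, h, w_in, w_rec)
    return h  # only the last state survives: this is the fixed-length c


# Hypothetical 2-unit weights, for illustration only.
W_IN = [[0.5, -0.2], [0.1, 0.3]]
W_REC = [[0.2, 0.0], [0.0, 0.2]]

c_short = encode_fixed([[1.0, 0.0]], W_IN, W_REC)
c_long = encode_fixed([[1.0, 0.0]] * 50, W_IN, W_REC)
print(len(c_short), len(c_long))  # 2 2: same size whatever T_x is
```

However long the input, everything the decoder sees must fit in `len(w_rec)` numbers. This is the bottleneck conjectured in the introduction.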
¹ By state-of-the-art performance, we mean the performance of the conventional phrase-based system without any neural network-based component.

² Most previous works (see, e.g., Cho et al., 2014a; Sutskever et al., 2014; Kalchbrenner and Blunsom, 2013) encode a variable-length input sentence into a fixed-length vector. This is not necessary, however, and, as we will show later, it may even be beneficial to have a variable-length vector.

The decoder is often trained to predict the next word $y_{t'}$ given the context vector $c$ and all the previously predicted words $\{y_1, \ldots, y_{t'-1}\}$. In other words, the decoder defines a probability over the translation $y$ by decomposing the joint probability into the ordered conditionals:

$$p(y) = \prod_{t=1}^{T_y} p(y_t \mid \{y_1, \ldots, y_{t-1}\}, c), \tag{2}$$

where $y = (y_1, \ldots, y_{T_y})$. With an RNN, each conditional probability is modeled as

$$p(y_t \mid \{y_1, \ldots, y_{t-1}\}, c) = g(y_{t-1}, s_t, c), \tag{3}$$

where $g$ is a nonlinear, potentially multi-layered, function that outputs the probability of $y_t$, and $s_t$ is the hidden state of the RNN. Other architectures, such as a hybrid of an RNN and a de-convolutional neural network, can also be used (Kalchbrenner and Blunsom, 2013).

3 LEARNING TO ALIGN AND TRANSLATE

In this section, we propose a novel architecture for neural machine translation. The new architecture consists of a bidirectional RNN as an encoder (Sec. 3.2) and a decoder that emulates searching through a source sentence while decoding a translation (Sec. 3.1).

3.1 DECODER: GENERAL DESCRIPTION

[Figure 1 diagram: source words $x_1, \ldots, x_T$; forward and backward annotations $h_1, \ldots, h_T$; weights $\alpha_{t,1}, \ldots, \alpha_{t,T}$ summed (+) into the decoder step $s_{t-1} \to s_t$, which emits $y_t$ after $y_{t-1}$.]
Figure 1: The graphical illustration of the proposed model trying to generate the $t$-th target word $y_t$ given a source sentence $(x_1, x_2, \ldots, x_T)$.
In the new model architecture, we define each conditional probability in Eq. (2) as:

$$p(y_i \mid y_1, \ldots, y_{i-1}, x) = g(y_{i-1}, s_i, c_i), \tag{4}$$

where $s_i$ is an RNN hidden state for time $i$, computed by

$$s_i = f(s_{i-1}, y_{i-1}, c_i).$$

Unlike in the existing encoder–decoder approach (see Eq. (2)), here the probability is conditioned on a distinct context vector $c_i$ for each target word $y_i$.

The context vector $c_i$ depends on a sequence of annotations $(h_1, \ldots, h_{T_x})$ to which an encoder maps the input sentence. Each annotation $h_i$ contains information about the whole input sequence, with a strong focus on the parts surrounding the $i$-th word of the input sequence. We explain in detail how the annotations are computed in the next section.

The context vector $c_i$ is then computed as a weighted sum of these annotations $h_j$:

$$c_i = \sum_{j=1}^{T_x} \alpha_{ij} h_j. \tag{5}$$

The weight $\alpha_{ij}$ of each annotation $h_j$ is computed by

$$\alpha_{ij} = \frac{\exp(e_{ij})}{\sum_{k=1}^{T_x} \exp(e_{ik})}, \tag{6}$$

where

$$e_{ij} = a(s_{i-1}, h_j)$$

is an alignment model that scores how well the inputs around position $j$ and the output at position $i$ match. The score is based on the RNN hidden state $s_{i-1}$ (just before emitting $y_i$, Eq. (4)) and the $j$-th annotation $h_j$ of the input sentence.

We parametrize the alignment model $a$ as a feedforward neural network, which is jointly trained with all the other components of the proposed system. Unlike in traditional machine translation, the alignment is not considered to be a latent variable. Instead, the alignment model directly computes a soft alignment, which allows the gradient of the cost function to be backpropagated through it. This gradient can be used to train the alignment model as well as the whole translation model jointly.
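Eqs. (5)–(6) for one target position $i$ can be sketched in plain Python as below. The text only says that $a$ is a feedforward network. The following are assumptions made for this illustration:

- the one-hidden-layer form $v_a^\top \tanh(W_a s_{i-1} + U_a h_j)$;
- the names `attend`, `align_score`, `w_a`, `u_a` and `v_a`;
- the toy values.

The decoder update $s_i = f(s_{i-1}, y_{i-1}, c_i)$ is not shown.

```python
import math
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Dense matrix-vector product on plain Python lists."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def align_score(s_prev: Vector, h_j: Vector, w_a: Matrix, u_a: Matrix, v_a: Vector) -> float:
    """e_ij = a(s_{i-1}, h_j), assumed here to be v_a^T tanh(W_a s_{i-1} + U_a h_j)."""
    hidden = [math.tanh(p + q) for p, q in zip(matvec(w_a, s_prev), matvec(u_a, h_j))]
    return sum(v * z for v, z in zip(v_a, hidden))


def softmax(energies: Vector) -> Vector:
    """Eq. (6). Subtracting the max avoids overflow without changing the result."""
    top = max(energies)
    exps = [math.exp(e - top) for e in energies]
    total = sum(exps)
    return [e / total for e in exps]


def attend(s_prev: Vector, annotations: List[Vector],
           w_a: Matrix, u_a: Matrix, v_a: Vector) -> Tuple[Vector, Vector]:
    """Return (alpha_i, c_i) for one target position i."""
    energies = [align_score(s_prev, h_j, w_a, u_a, v_a) for h_j in annotations]
    alpha = softmax(energies)
    dim = len(annotations[0])
    # Eq. (5): c_i = sum_j alpha_ij h_j, the expected annotation under alpha_i.
    context = [sum(a * h[k] for a, h in zip(alpha, annotations)) for k in range(dim)]
    return alpha, context


# Hypothetical toy values: three 2-dimensional annotations, 2-unit hidden layer.
H = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
S_PREV = [0.5, -0.5]
W_A = [[1.0, 0.0], [0.0, 1.0]]
U_A = [[1.0, 0.0], [0.0, 1.0]]
alpha, context = attend(S_PREV, H, W_A, U_A, v_a=[1.0, 1.0])
print([round(a, 3) for a in alpha], [round(c, 3) for c in context])
```

Every operation here is differentiable. A framework with automatic differentiation could therefore backpropagate the translation cost through `attend` into the alignment parameters, which is why the alignment need not be treated as a latent variable.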
We can understand the approach of taking a weighted sum of all the annotations as computing an expected annotation, where the expectation is over possible alignments. Let $\alpha_{ij}$ be the probability that the target word $y_i$ is aligned to, or translated from, a source word $x_j$. Then the $i$-th context vector $c_i$ is the expected annotation over all the annotations with probabilities $\alpha_{ij}$.

The probability $\alpha_{ij}$, or its associated energy $e_{ij}$, reflects the importance of the annotation $h_j$ with respect to the previous hidden state $s_{i-1}$ in deciding the next state $s_i$ and generating $y_i$. Intuitively, this implements a mechanism of attention in the decoder: the decoder decides which parts of the source sentence to pay attention to. By giving the decoder an attention mechanism, we relieve the encoder of the burden of having to encode all information in the source sentence into a fixed-length vector. With this new approach, the information can be spread throughout the sequence of annotations, from which the decoder can selectively retrieve it as needed.

3.2 ENCODER: BIDIRECTIONAL RNN FOR ANNOTATING SEQUENCES

The usual RNN, described in Eq. (1), reads an input sequence $x$ in order, from the first symbol $x_1$ to the last one $x_{T_x}$. In the proposed scheme, however, we would like the annotation of each word to summarize not only the preceding words but also the following words. Hence, we propose to use a bidirectional RNN (BiRNN, Schuster and Paliwal, 1997), which has recently been used successfully in speech recognition (see, e.g., Graves et al., 2013).

A BiRNN consists of forward and backward RNNs. The forward RNN $\overrightarrow{f}$ reads the input sequence as it is ordered (from $x_1$ to $x_{T_x}$) and calculates a sequence of forward hidden states $(\overrightarrow{h}_1, \ldots, \overrightarrow{h}_{T_x})$.
The backward RNN $\overleftarrow{f}$ reads the sequence in reverse order (from $x_{T_x}$ to $x_1$), resulting in a sequence of backward hidden states $(\overleftarrow{h}_1, \ldots, \overleftarrow{h}_{T_x})$.

We obtain an annotation for each word $x_j$ by concatenating the forward hidden state $\overrightarrow{h}_j$ and the backward one $\overleftarrow{h}_j$, i.e., $h_j = \left[\overrightarrow{h}_j^\top; \overleftarrow{h}_j^\top\right]^\top$. In this way, the annotation $h_j$ contains the summaries of both the preceding words and the following words. Because RNNs tend to represent recent inputs better, the annotation $h_j$ will be focused on the words around $x_j$. The decoder and the alignment model later use this sequence of annotations to compute the context vector (Eqs. (5)–(6)).

See Fig. 1 for the graphical illustration of the proposed model.

4 EXPERIMENT SETTINGS

We evaluate the proposed approach on the task of English-to-French translation. We use the bilingual, parallel corpora provided by ACL WMT '14.³ As a comparison, we also report the performance of an RNN Encoder–Decoder, which was proposed recently by Cho et al. (2014a). We use the same training procedures and the same dataset for both models.⁴

4.1 DATASET

WMT '14 contains the following English–French parallel corpora, totaling 850M words:

- Europarl (61M words);
- news commentary (5.5M);
- UN (421M);
- two crawled corpora of 90M and 272.5M words, respectively.

Following the procedure described in Cho et al. (2014a), we reduce the size of the combined corpus to 348M words using the data selection method by Axelrod et al. (2011).⁵ We do not use any monolingual data other than the mentioned parallel corpora, although it may be possible to use a much larger monolingual corpus to pretrain an encoder. We concatenate news-test-2012 and news-test-2013 to make a development (validation) set. We evaluate the models on the test set (news-test-2014) from WMT '14, which consists of 3003 sentences not present in the training data.

After the usual tokenization⁶, we use a shortlist of the 30,000 most frequent words in each language to train our models. Any word not included in the shortlist is mapped to a special token ([UNK]). We do not apply any other special preprocessing, such as lowercasing or stemming, to the data.

³ http://www.statmt.org/wmt14/translation-task.html
⁴ Implementations are available at https://github.com/lisa-groundhog/GroundHog.
⁵ Available online at http://www-lium.univ-lemans.fr/~schwenk/cslm_joint_paper/.

[Figure 2 plot: BLEU score (y-axis, 0 to 30) against sentence length (x-axis, 0 to 60) for RNNsearch-50, RNNsearch-30, RNNenc-50 and RNNenc-30.]
Figure 2: The BLEU scores of the generated translations on the test set with respect to the lengths of the sentences. The results are on the full test set, which includes sentences containing words unknown to the models.

4.2 MODELS

We train two types of models. The first is an RNN Encoder–Decoder (RNNencdec, Cho et al., 2014a), and the other is the proposed model, which we refer to as RNNsearch. We train each model twice: first with sentences of up to 30 words (RNNencdec-30, RNNsearch-30) and then with sentences of up to 50 words (RNNencdec-50, RNNsearch-50).

The encoder and decoder of the RNNencdec have 1000 hidden units each.⁷ The encoder of the RNNsearch consists of forward and backward recurrent neural networks (RNN), each having 1000 hidden units. Its decoder has 1000 hidden units. In both cases, we use a multilayer network with a single maxout (Goodfellow et al., 2013) hidden layer to compute the conditional probability of each target word (Pascanu et al., 2014).

We use a minibatch stochastic gradient descent (SGD) algorithm together with Adadelta (Zeiler, 2012) to train each model. Each SGD update direction is computed using a minibatch of 80 sentences. We trained each model for approximately 5 days.
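The RNNsearch encoder described above can be sketched at toy scale as follows. It uses a forward and a backward RNN whose states are concatenated into annotations, as in Section 3.2. Three things differ from the paper and are assumptions for illustration:

- plain tanh cells stand in for the gated hidden units the paper uses;
- sizes are 1 instead of 1000;
- the names `annotate` and `run_rnn`, and all weights, are hypothetical.

```python
import math
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]
Params = Tuple[Matrix, Matrix]  # (input weights W, recurrent weights U)


def matvec(m: Matrix, v: Vector) -> Vector:
    """Dense matrix-vector product on plain Python lists."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def run_rnn(xs: List[Vector], params: Params) -> List[Vector]:
    """Hidden states h_1..h_T of a tanh RNN over xs, starting from h_0 = 0."""
    w_in, w_rec = params
    h = [0.0] * len(w_rec)
    states = []
    for x_t in xs:
        h = [math.tanh(a + b) for a, b in zip(matvec(w_in, x_t), matvec(w_rec, h))]
        states.append(h)
    return states


def annotate(xs: List[Vector], fwd: Params, bwd: Params) -> List[Vector]:
    """h_j = [forward h_j ; backward h_j] for every source position j."""
    forward = run_rnn(xs, fwd)                         # reads x_1 .. x_T
    backward = run_rnn(xs[::-1], bwd)[::-1]            # reads x_T .. x_1, re-indexed by j
    return [f + b for f, b in zip(forward, backward)]  # list + list = concatenation


# Hypothetical 1-unit RNNs: the forward one has no recurrence, the backward one does.
FWD = ([[1.0]], [[0.0]])
BWD = ([[1.0]], [[1.0]])
xs = [[0.0], [0.0], [1.0]]  # only the last word carries signal
ann = annotate(xs, FWD, BWD)
print(ann[0])  # the backward half of h_1 is non-zero: it has "seen" x_3
```

The forward and backward RNNs have separate parameters. Reversing the backward states before concatenation keeps both halves of `ann[j]` indexed by the same source position $j$.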
Once a model is trained, we use beam search to find a translation that approximately maximizes the conditional probability (see, e.g., Graves, 2012; Boulanger-Lewandowski et al., 2013). Sutskever et al. (2014) used this approach to generate translations from their neural machine translation model.

For more details on the architectures of the models and the training procedure used in the experiments, see Appendices A and B.

5 RESULTS

5.1 QUANTITATIVE RESULTS

In Table 1, we list the translation performances measured in BLEU score. The table shows that in all cases the proposed RNNsearch outperforms the conventional RNNencdec. More importantly, the performance of the RNNsearch is as high as that of the conventional phrase-based translation system (Moses) when only sentences consisting of known words are considered. This is a significant achievement, considering that Moses uses a separate monolingual corpus (418M words) in addition to the parallel corpora we used to train the RNNsearch and RNNencdec.

⁶ We used the tokenization script from the open-source machine translation package, Moses.
⁷ In this paper, by a 'hidden unit', we always mean the gated hidden unit (see Appendix A.1.1).

[Figure 3 panels; in each, the x-axis is the English source sentence and the y-axis the generated French translation:
(a) The agreement on the European Economic Area was signed in August 1992 . | L' accord sur la zone économique européenne a été signé en août 1992 .
(b) It should be noted that the marine environment is the least known of environments . | Il convient de noter que l' environnement marin est le moins connu de l' environnement .
(c) Destruction of the equipment means that Syria can no longer produce new chemical weapons . | La destruction de l' équipement signifie que la Syrie ne peut plus produire de nouvelles armes chimiques .
(d) " This will change my future with my family , " the man said . | " Cela va changer mon avenir avec ma famille " , a dit l' homme .]
Figure 3: Four sample alignments found by RNNsearch-50. The x-axis and y-axis of each plot correspond to the words in the source sentence (English) and the generated translation (French), respectively. Each pixel shows the weight $\alpha_{ij}$ of the annotation of the $j$-th source word for the $i$-th target word (see Eq. (6)), in grayscale (0: black, 1: white). (a) An arbitrary sentence. (b–d) Three randomly selected samples from the test set, chosen among sentences of 10 to 20 words without any unknown words.

One of the motivations behind the proposed approach was the use of a fixed-length context vector in the basic encoder–decoder approach. We conjectured that this limitation may cause the basic encoder–decoder approach to underperform on long sentences. In Fig. 2, we see that the performance of RNNencdec drops dramatically as sentence length increases. On the other hand, both RNNsearch-30 and RNNsearch-50 are more robust to sentence length. RNNsearch-50, in particular, shows no performance deterioration even with sentences of 50 words or more. The fact that RNNsearch-30 even outperforms RNNencdec-50 further confirms the superiority of the proposed model over the basic encoder–decoder (see Table 1).

Model            All     No UNK◦
RNNencdec-30     13.93   24.19
RNNsearch-30     21.50   31.44
RNNencdec-50     17.82   26.71
RNNsearch-50     26.75   34.16
RNNsearch-50⋆    28.45   36.15
Moses            33.30   35.63

Table 1: BLEU scores of the trained models computed on the test set. The second column shows the scores on all sentences. The third column shows the scores on the sentences without any unknown word, in either the sentence itself or its reference translation. Note that RNNsearch-50⋆ was trained much longer, until the performance on the development set stopped improving. (◦) When only the sentences having no unknown words were evaluated (last column), we did not allow the models to generate [UNK] tokens.
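Section 4.2 decodes with beam search, and the note under Table 1 says the models were not allowed to generate [UNK] for the last column. The sketch below illustrates both, using a hypothetical `next_log_probs(prefix)` callback in place of the trained decoder. Three choices are illustrative only and are not settings reported in this section:

- the beam width;
- the `toy_model` probabilities;
- banning a token by skipping it during expansion.

```python
import math
from typing import Callable, Dict, List, Optional, Tuple

EOS = "</s>"
Hypothesis = Tuple[List[str], float]  # (tokens so far, summed log-probability)


def beam_search(next_log_probs: Callable[[List[str]], Dict[str, float]],
                beam_width: int = 3, max_len: int = 20,
                banned: Optional[str] = None) -> Hypothesis:
    """Approximate argmax_y log p(y | x) by keeping the beam_width best prefixes.

    `banned` (e.g. "[UNK]") is skipped entirely, so it can never be generated.
    """
    beams: List[Hypothesis] = [([], 0.0)]
    finished: List[Hypothesis] = []
    for _ in range(max_len):
        candidates: List[Hypothesis] = []
        for prefix, score in beams:
            for token, lp in next_log_probs(prefix).items():
                if token != banned:
                    candidates.append((prefix + [token], score + lp))
        candidates.sort(key=lambda hyp: hyp[1], reverse=True)
        beams = []
        for prefix, score in candidates[:beam_width]:
            if prefix[-1] == EOS:
                finished.append((prefix, score))  # complete translation
            else:
                beams.append((prefix, score))     # keep extending
        if not beams:
            break
    return max(finished + beams, key=lambda hyp: hyp[1])


def toy_model(prefix: List[str]) -> Dict[str, float]:
    """Hypothetical stand-in for the decoder's p(y_i | y_<i, x)."""
    table = [
        {"[UNK]": math.log(0.6), "le": math.log(0.4)},
        {"chat": math.log(0.9), EOS: math.log(0.1)},
    ]
    return table[len(prefix)] if len(prefix) < len(table) else {EOS: 0.0}


print(beam_search(toy_model)[0])                  # ['[UNK]', 'chat', '</s>']
print(beam_search(toy_model, banned="[UNK]")[0])  # ['le', 'chat', '</s>']
```

Scores are summed log-probabilities, so each additional word can only lower a hypothesis's score. A practical decoder might add length normalization. That is not shown here.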
5.2 QUALITATIVE ANALYSIS

5.2.1 ALIGNMENT

The proposed approach provides an intuitive way to inspect the (soft-)alignment between the words in a generated translation and those in a source sentence. This is done by visualizing the annotation weights $\alpha_{ij}$ from Eq. (6), as in Fig. 3. Each row of the matrix in each plot shows the weights assigned to the annotations for one target word. From this we see which positions in the source sentence were considered more important when generating that target word.

The alignments in Fig. 3 show that the alignment of words between English and French is largely monotonic: the strongest weights lie along the diagonal of each matrix. However, we also observe a number of non-trivial, non-monotonic alignments. Adjectives and nouns are typically ordered differently in French and English, and Fig. 3 (a) shows an example. The model correctly translates the phrase [European Economic Area] into [zone économique européenne]. The RNNsearch correctly aligned [zone] with [Area], jumping over the two words [European] and [Economic]. It then looked back one word at a time to complete the whole phrase [zone économique européenne].

The strength of soft alignment, as opposed to hard alignment, is evident, for instance, from Fig. 3 (d). Consider the source phrase [the man], which was translated into [l' homme]. Any hard alignment will map [the] to [l'] and [man] to [homme]. This is not helpful for translation, as one must consider the word following [the] to determine whether it should be translated into [le], [la], [les] or [l']. Our soft alignment solves this issue naturally by letting the model look at both [the] and [man]. In this example, the model correctly translated [the] into [l']. We observe similar behaviors in all the cases presented in Fig. 3.
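An alignment plot such as Fig. 3 can be read row by row with the sketch below. For each target word, it reports the source word with the largest $\alpha_{ij}$. It also flags target positions where that peak moves leftwards, i.e. a non-monotonic alignment. The weight matrix is invented for illustration, loosely mimicking the [European Economic Area] example, and the function names are hypothetical.

```python
from typing import List, Tuple


def argmax(row: List[float]) -> int:
    """Index of the largest weight in a row of alpha."""
    return max(range(len(row)), key=row.__getitem__)


def strongest_sources(alpha: List[List[float]], src: List[str],
                      tgt: List[str]) -> List[Tuple[str, str]]:
    """Pair each target word y_i with the source word x_j maximizing alpha_ij."""
    return [(word, src[argmax(row)]) for word, row in zip(tgt, alpha)]


def backward_jumps(alpha: List[List[float]]) -> List[int]:
    """Target positions whose strongest source position is left of the previous one."""
    peaks = [argmax(row) for row in alpha]
    return [i for i in range(1, len(peaks)) if peaks[i] < peaks[i - 1]]


# Invented weights (rows: target words, columns: source words), loosely
# mimicking the reordering discussed for Fig. 3 (a); not the model's values.
SRC = ["European", "Economic", "Area"]
TGT = ["zone", "économique", "européenne"]
ALPHA = [
    [0.1, 0.1, 0.8],
    [0.2, 0.7, 0.1],
    [0.8, 0.1, 0.1],
]
print(strongest_sources(ALPHA, SRC, TGT))
print(backward_jumps(ALPHA))  # [1, 2]: the peak steps back one word at a time
```

Taking the argmax turns the soft alignment back into a hard one. That discards exactly the information that lets the model translate [the] into [l'] above, so this is only a way of reading the plots, not part of the model.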
An additional benefit of soft alignment is that it naturally deals with source and target phrases of different lengths. It does not require a counter-intuitive way of mapping some words to or from nowhere ([NULL]) (see, e.g., Chapters 4 and 5 of Koehn, 2010).

5.2.2 LONG SENTENCES

As Fig. 2 clearly shows, the proposed model (RNNsearch) is much better than the conventional model (RNNencdec) at translating long sentences. This is likely because the RNNsearch does not need to encode a long sentence into a fixed-length vector perfectly. It only needs to encode accurately the parts of the input sentence that surround a particular word.

As an example, consider this source sentence from the test set:

An admitting privilege is the right of a doctor to admit a patient to a hospital or a medical centre to carry out a diagnosis or a procedure, based on his status as a health care worker at a hospital.

The RNNencdec-50 translated this sentence into:

Un privilège d'admission est le droit d'un médecin de reconnaître un patient à l'hôpital ou un centre médical d'un diagnostic ou de prendre un diagnostic en fonction de son état de santé.

The RNNencdec-50 correctly translated the source sentence until [a medical center]. From there on, however (i.e., after [un centre médical]), it deviated from the original meaning of the source sentence. For instance, it replaced [based on his status as a health care worker at a hospital] in the source sentence with [en fonction de son état de santé] ("based on his state of health").
On the other hand, the RNNsearch-50 generated the following correct translation, preserving the whole meaning of the input sentence without omitting any details:

Un privilège d'admission est le droit d'un médecin d'admettre un patient à un hôpital ou un centre médical pour effectuer un diagnostic ou une procédure, selon son statut de travailleur des soins de santé à l'hôpital.

Let us consider another sentence from the test set:

This kind of experience is part of Disney's efforts to "extend the lifetime of its series and build new relationships with audiences via digital platforms that are becoming ever more important," he added.

The translation by the RNNencdec-50 is

Ce type d'expérience fait partie des initiatives du Disney pour "prolonger la durée de vie de ses nouvelles et de développer des liens avec les lecteurs numériques qui deviennent plus complexes.

As with the previous example, the RNNencdec began deviating from the actual meaning of the source sentence after generating approximately 30 words. After that point, the quality of the translation deteriorates, with basic mistakes such as the missing closing quotation mark.

Again, the RNNsearch-50 was able to translate this long sentence correctly:

Ce genre d'expérience fait partie des efforts de Disney pour "prolonger la durée de vie de ses séries et créer de nouvelles relations avec des publics via des plateformes numériques de plus en plus importantes", a-t-il ajouté.

Together with the quantitative results presented earlier, these qualitative observations confirm our hypothesis that the RNNsearch architecture enables far more reliable translation of long sentences than the standard RNNencdec model.

In Appendix C, we provide a few more sample translations of long source sentences generated by the RNNencdec-50, RNNsearch-50 and Google Translate, along with the reference translations.
6 RELATED WORK

6.1 LEARNING TO ALIGN

Graves (2013) recently proposed a similar approach of aligning an output symbol with an input symbol, in the context of handwriting synthesis. Handwriting synthesis is a task where the model is asked to generate handwriting for a given sequence of characters. In his work, he used a mixture of Gaussian kernels to compute the weights of the annotations, where the location, width and mixture coefficient of each kernel were predicted from an alignment model. More specifically, his alignment was restricted so that the predicted location increases monotonically.

The main difference from our approach is that, in Graves (2013), the modes of the weights of the annotations only move in one direction. In machine translation, this is a severe limitation, as (long-distance) reordering is often needed to generate a grammatically correct translation (for instance, English-to-German).

Our approach, on the other hand, requires computing the annotation weight of every word in the source sentence for each word in the translation, i.e., $T_x \times T_y$ alignment scores per sentence pair. This drawback is not severe for translation, where most input and output sentences are only 15–40 words long. However, it may limit the applicability of the proposed scheme to other tasks.

6.2 NEURAL NETWORKS FOR MACHINE TRANSLATION

Bengio et al. (2003) introduced a neural probabilistic language model, which uses a neural network to model the conditional probability of a word given a fixed number of preceding words. Since then, neural networks have been widely used in machine translation. However, their role has been largely limited to providing a single feature to an existing statistical machine translation system, or to re-ranking a list of candidate translations produced by an existing system.
For instance, Schwenk (2012) proposed using a feedforward neural network to compute the score of a pair of source and target phrases, and using that score as an additional feature in a phrase-based statistical machine translation system. More recently, Kalchbrenner and Blunsom (2013) and Devlin et al. (2014) reported the successful use of neural networks as a sub-component of an existing translation system. Traditionally, a neural network trained as a target-side language model has been used to rescore or rerank a list of candidate translations (see, e.g., Schwenk et al., 2006).

Although the above approaches were shown to improve translation performance over state-of-the-art machine translation systems, we are interested in the more ambitious objective of designing a completely new translation system based on neural networks. The neural machine translation approach we consider in this paper is therefore a radical departure from these earlier works. Rather than using a neural network as part of an existing system, our model works on its own and generates a translation directly from a source sentence.

7 CONCLUSION

The conventional approach to neural machine translation, called the encoder–decoder approach, encodes a whole input sentence into a fixed-length vector from which a translation is decoded. Based on recent empirical studies by Cho et al. (2014b) and Pouget-Abadie et al. (2014), we conjectured that the use of a fixed-length context vector is problematic for translating long sentences.

In this paper, we proposed a novel architecture that addresses this issue. We extended the basic encoder–decoder by letting a model (soft-)search for a set of input words, or their annotations computed by an encoder, when generating each target word.
This frees the model from having to encode a whole source sentence into a fixed-length vector. It also lets the model focus only on information relevant to generating the next target word. This has a major positive impact on the ability of the neural machine translation system to yield good results on longer sentences. Unlike in traditional machine translation systems, all of the pieces of the translation system, including the alignment mechanism, are jointly trained toward a better log-probability of producing correct translations.

We tested the proposed model, called RNNsearch, on the task of English-to-French translation. The experiment revealed that the proposed RNNsearch significantly outperforms the conventional encoder–decoder model (RNNencdec) regardless of sentence length, and that it is much more robust to the length of a source sentence. We also investigated the (soft-)alignment generated by the RNNsearch qualitatively. From this analysis, we concluded that the model can correctly align each target word with the relevant words, or their annotations, in the source sentence as it generates a correct translation.

Perhaps more importantly, the proposed approach achieved a translation performance comparable to the existing phrase-based statistical machine translation. This is a striking result, considering that the proposed architecture, and the whole family of neural machine translation, was only proposed this year. We believe the architecture proposed here is a promising step toward better machine translation and a better understanding of natural languages in general.

One of the challenges left for the future is to better handle unknown, or rare, words. This will be required for the model to be used more widely and to match the performance of current state-of-the-art machine translation systems in all contexts.
ACKNOWLEDGMENTS

The authors would like to thank the developers of Theano (Bergstra et al., 2010; Bastien et al., 2012). We acknowledge the support of the following agencies for research funding and computing support: NSERC, Calcul Québec, Compute Canada, the Canada Research Chairs and CIFAR. Bahdanau thanks Planet Intelligent Systems GmbH for its support. We also thank Felix Hill, Bart van Merriënboer, Jean Pouget-Abadie, Coline Devin and Tae-Ho Kim.

REFERENCES

Axelrod, A., He, X., and Gao, J. (2011). Domain adaptation via pseudo in-domain data selection. In Proceedings of the ACL Conference on Empirical Methods in Natural Language Processing (EMNLP), pages 355–362. Association for Computational Linguistics.
Bastien, F., Lamblin, P., Pascanu, R., Bergstra, J., Goodfellow, I. J., Bergeron, A., Bouchard, N., and Bengio, Y. (2012). Theano: new features and speed improvements. Deep Learning and Unsupervised Feature Learning NIPS 2012 Workshop.
Bengio, Y., Simard, P., and Frasconi, P. (1994). Learning long-term dependencies with gradient descent is difficult. IEEE Transactions on Neural Networks, 5(2), 157–166.
Bengio, Y., Ducharme, R., Vincent, P., and Janvin, C. (2003). A neural probabilistic language model. J. Mach. Learn. Res., 3, 1137–1155.
Bergstra, J., Breuleux, O., Bastien, F., Lamblin, P., Pascanu, R., Desjardins, G., Turian, J., Warde-Farley, D., and Bengio, Y. (2010). Theano: a CPU and GPU math expression compiler. In Proceedings of the Python for Scientific Computing Conference (SciPy). Oral Presentation.
Boulanger-Lewandowski, N., Bengio, Y., and Vincent, P. (2013). Audio chord recognition with recurrent neural networks. In ISMIR.
Cho, K., van Merriënboer, B., Gulcehre, C., Bougares, F., Schwenk, H., and Bengio, Y. (2014a). Learning phrase representations using RNN encoder-decoder for statistical machine translation. In Proceedings of the Empirical Methods in Natural Language Processing (EMNLP 2014). To appear.
Cho, K., van Merriënboer, B., Bahdanau, D., and Bengio, Y. (2014b). On the properties of neural machine translation: Encoder–Decoder approaches. In Eighth Workshop on Syntax, Semantics and Structure in Statistical Translation. To appear.
Devlin, J., Zbib, R., Huang, Z., Lamar, T., Schwartz, R., and Makhoul, J. (2014). Fast and robust neural network joint models for statistical machine translation. In Association for Computational Linguistics.
Forcada, M. L. and Ñeco, R. P. (1997). Recursive hetero-associative memories for translation. In J. Mira, R. Moreno-Díaz, and J. Cabestany, editors, Biological and Artificial Computation: From Neuroscience to Technology, volume 1240 of Lecture Notes in Computer Science, pages 453–462. Springer Berlin Heidelberg.
Goodfellow, I., Warde-Farley, D., Mirza, M., Courville, A., and Bengio, Y. (2013). Maxout networks. In Proceedings of the 30th International Conference on Machine Learning, pages 1319–1327.
Graves, A. (2012). Sequence transduction with recurrent neural networks. In Proceedings of the 29th International Conference on Machine Learning (ICML 2012).
Graves, A. (2013). Generating sequences with recurrent neural networks. arXiv:1308.0850 [cs.NE].
Graves, A., Jaitly, N., and Mohamed, A.-R. (2013). Hybrid speech recognition with deep bidirectional LSTM. In Automatic Speech Recognition and Understanding (ASRU), 2013 IEEE Workshop on, pages 273–278.
Hermann, K. and Blunsom, P. (2014). Multilingual distributed representations without word alignment. In Proceedings of the Second International Conference on Learning Representations (ICLR 2014).
Hochreiter, S. (1991). Untersuchungen zu dynamischen neuronalen Netzen. Diploma thesis, Institut für Informatik, Lehrstuhl Prof. Brauer, Technische Universität München.
Hochreiter, S. and Schmidhuber, J. (1997). Long short-term memory. Neural Computation, 9(8), 1735–1780.
Kalchbrenner, N. and Blunsom, P. (2013). Recurrent continuous translation models. In Proceedings of the ACL Conference on Empirical Methods in Natural Language Processing (EMNLP), pages 1700–1709. Association for Computational Linguistics.
Koehn, P. (2010). Statistical Machine Translation. Cambridge University Press, New York, NY, USA.
Koehn, P., Och, F. J., and Marcu, D. (2003). Statistical phrase-based translation. In Proceedings of the 2003 Conference of the North American Chapter of the Association for Computational Linguistics on Human Language Technology - Volume 1, NAACL '03, pages 48–54, Stroudsburg, PA, USA. Association for Computational Linguistics.
Pascanu, R., Mikolov, T., and Bengio, Y. (2013a). On the difficulty of training recurrent neural networks. In ICML'2013.
Pascanu, R., Mikolov, T., and Bengio, Y. (2013b). On the difficulty of training recurrent neural networks. In Proceedings of the 30th International Conference on Machine Learning (ICML 2013).
Pascanu, R., Gulcehre, C., Cho, K., and Bengio, Y. (2014). How to construct deep recurrent neural networks. In Proceedings of the Second International Conference on Learning Representations (ICLR 2014).
Pouget-Abadie, J., Bahdanau, D., van Merriënboer, B., Cho, K., and Bengio, Y. (2014). Overcoming the curse of sentence length for neural machine translation using automatic segmentation. In Eighth Workshop on Syntax, Semantics and Structure in Statistical Translation. To appear.
Schuster, M. and Paliwal, K. K. (1997). Bidirectional recurrent neural networks. IEEE Transactions on Signal Processing, 45(11), 2673–2681.
Schwenk, H. (2012). Continuous space translation models for phrase-based statistical machine translation. In M. Kay and C. Boitet, editors, Proceedings of the 24th International Conference on Computational Linguistics (COLING), pages 1071–1080. Indian Institute of Technology Bombay.
Schwenk, H., Déchelotte, D., and Gauvain, J.-L. (2006). Continuous space language models for statistical machine translation. In Proceedings of the COLING/ACL on Main conference poster sessions, pages 723–730. Association for Computational Linguistics.
Sutskever, I., Vinyals, O., and Le, Q. (2014). Sequence to sequence learning with neural networks. In Advances in Neural Information Processing Systems (NIPS 2014).
Zeiler, M. D. (2012). ADADELTA: An adaptive learning rate method. arXiv:1212.5701 [cs.LG].

A MODEL ARCHITECTURE

A.1 ARCHITECTURAL CHOICES

The proposed scheme in Section 3 is a general framework where one can freely define, for instance, the activation functions $f$ of recurrent neural networks (RNN) and the alignment model $a$. Here, we describe the choices we made for the experiments in this paper.

A.1.1 RECURRENT NEURAL NETWORK

For the activation function $f$ of an RNN, we use the gated hidden unit recently proposed by Cho et al. (2014a). The gated hidden unit is an alternative to conventional simple units such as an element-wise $\tanh$. This gated unit is similar to a long short-term memory (LSTM) unit proposed earlier by Hochreiter and Schmidhuber (1997), sharing with it the ability to better model and learn long-term dependencies. This is made possible by having computation paths in the unfolded RNN for which the product of derivatives is close to 1. These paths allow gradients to flow backward easily without suffering too much from the vanishing gradient effect (Hochreiter, 1991; Bengio et al., 1994; Pascanu et al., 2013a). It is therefore possible to use LSTM units instead of the gated hidden unit described here, as was done in a similar context by Sutskever et al. (2014).

The new state $s_i$ of the RNN employing $n$ gated hidden units$^{8}$ is computed by

$$s_i = f(s_{i-1}, y_{i-1}, c_i) = (1 - z_i) \circ s_{i-1} + z_i \circ \tilde{s}_i,$$

where $\circ$ is an element-wise multiplication, and $z_i$ is the output of the update gates (see below). The proposed updated state $\tilde{s}_i$ is computed by

$$\tilde{s}_i = \tanh\left(W e(y_{i-1}) + U\left[r_i \circ s_{i-1}\right] + C c_i\right),$$

where $e(y_{i-1}) \in \mathbb{R}^m$ is an $m$-dimensional embedding of a word $y_{i-1}$, and $r_i$ is the output of the reset gates (see below). When $y_i$ is represented as a 1-of-$K$ vector, $e(y_i)$ is simply a column of an embedding matrix $E \in \mathbb{R}^{m \times K}$. Whenever possible, we omit bias terms to make the equations less cluttered.

The update gates $z_i$ allow each hidden unit to maintain its previous activation, and the reset gates $r_i$ control how much and what information from the previous state should be reset. We compute them by

$$z_i = \sigma\left(W_z e(y_{i-1}) + U_z s_{i-1} + C_z c_i\right),$$
$$r_i = \sigma\left(W_r e(y_{i-1}) + U_r s_{i-1} + C_r c_i\right),$$

where $\sigma(\cdot)$ is a logistic sigmoid function.

At each step of the decoder, we compute the output probability (Eq. (4)) as a multi-layered function (Pascanu et al., 2014). We use a single hidden layer of maxout units (Goodfellow et al., 2013) and normalize the output probabilities (one for each word) with a softmax function (see Eq. (6)).

A.1.2 ALIGNMENT MODEL

The alignment model should be designed considering that the model needs to be evaluated $T_x \times T_y$ times for each sentence pair of lengths $T_x$ and $T_y$. In order to reduce computation, we use a single-layer multilayer perceptron such that

$$a(s_{i-1}, h_j) = v_a^\top \tanh\left(W_a s_{i-1} + U_a h_j\right),$$

where $W_a \in \mathbb{R}^{n \times n}$, $U_a \in \mathbb{R}^{n \times 2n}$ and $v_a \in \mathbb{R}^{n}$ are the weight matrices. Since $U_a h_j$ does not depend on $i$, we can pre-compute it in advance to minimize the computational cost.

$^{8}$ Here, we show the formula of the decoder.
The same formula can be used in the encoder by simply ignoring the context vector $c_i$ and the related terms.

A.2 DETAILED DESCRIPTION OF THE MODEL

A.2.1 ENCODER

In this section, we describe in detail the architecture of the proposed model (RNNsearch) used in the experiments (see Sec. 4–5). From here on, we omit all bias terms in order to increase readability.

The model takes a source sentence of 1-of-K coded word vectors as input

$$x = (x_1, \ldots, x_{T_x}), \quad x_i \in \mathbb{R}^{K_x}$$

and outputs a translated sentence of 1-of-K coded word vectors

$$y = (y_1, \ldots, y_{T_y}), \quad y_i \in \mathbb{R}^{K_y},$$

where $K_x$ and $K_y$ are the vocabulary sizes of the source and target languages, respectively. $T_x$ and $T_y$ respectively denote the lengths of the source and target sentences.

First, the forward states of the bidirectional recurrent neural network (BiRNN) are computed:

$$\overrightarrow{h}_i = \begin{cases} (1 - \overrightarrow{z}_i) \circ \overrightarrow{h}_{i-1} + \overrightarrow{z}_i \circ \underline{\overrightarrow{h}}_i, & \text{if } i > 0 \\ 0, & \text{if } i = 0 \end{cases}$$

where

$$\underline{\overrightarrow{h}}_i = \tanh\left(\overrightarrow{W} E x_i + \overrightarrow{U}\left[\overrightarrow{r}_i \circ \overrightarrow{h}_{i-1}\right]\right)$$
$$\overrightarrow{z}_i = \sigma\left(\overrightarrow{W}_z E x_i + \overrightarrow{U}_z \overrightarrow{h}_{i-1}\right)$$
$$\overrightarrow{r}_i = \sigma\left(\overrightarrow{W}_r E x_i + \overrightarrow{U}_r \overrightarrow{h}_{i-1}\right).$$

$E \in \mathbb{R}^{m \times K_x}$ is the word embedding matrix. $\overrightarrow{W}, \overrightarrow{W}_z, \overrightarrow{W}_r \in \mathbb{R}^{n \times m}$ and $\overrightarrow{U}, \overrightarrow{U}_z, \overrightarrow{U}_r \in \mathbb{R}^{n \times n}$ are weight matrices. $m$ and $n$ are the word embedding dimensionality and the number of hidden units, respectively. $\sigma(\cdot)$ is, as usual, a logistic sigmoid function.

The backward states $(\overleftarrow{h}_1, \ldots, \overleftarrow{h}_{T_x})$ are computed similarly. We share the word embedding matrix $E$ between the forward and backward RNNs, unlike the weight matrices.

We concatenate the forward and backward states to obtain the annotations $(h_1, h_2, \ldots, h_{T_x})$, where

$$h_i = \begin{bmatrix} \overrightarrow{h}_i \\ \overleftarrow{h}_i \end{bmatrix} \tag{7}$$

A.2.2 DECODER

The hidden state $s_i$ of the decoder given the annotations from the encoder is computed by

$$s_i = (1 - z_i) \circ s_{i-1} + z_i \circ \tilde{s}_i,$$

where

$$\tilde{s}_i = \tanh\left(W E y_{i-1} + U\left[r_i \circ s_{i-1}\right] + C c_i\right)$$
$$z_i = \sigma\left(W_z E y_{i-1} + U_z s_{i-1} + C_z c_i\right)$$
$$r_i = \sigma\left(W_r E y_{i-1} + U_r s_{i-1} + C_r c_i\right)$$

$E$ is the word embedding matrix for the target language. $W, W_z, W_r \in \mathbb{R}^{n \times m}$, $U, U_z, U_r \in \mathbb{R}^{n \times n}$, and $C, C_z, C_r \in \mathbb{R}^{n \times 2n}$ are weights. Again, $m$ and $n$ are the word embedding dimensionality and the number of hidden units, respectively. The initial hidden state $s_0$ is computed by $s_0 = \tanh\left(W_s \overleftarrow{h}_1\right)$, where $W_s \in \mathbb{R}^{n \times n}$.

The context vector $c_i$ is recomputed at each step by the alignment model:

$$c_i = \sum_{j=1}^{T_x} \alpha_{ij} h_j,$$

where

$$\alpha_{ij} = \frac{\exp(e_{ij})}{\sum_{k=1}^{T_x} \exp(e_{ik})}, \qquad e_{ij} = v_a^\top \tanh\left(W_a s_{i-1} + U_a h_j\right),$$

and $h_j$ is the $j$-th annotation in the source sentence (see Eq. (7)). $v_a \in \mathbb{R}^{n'}$, $W_a \in \mathbb{R}^{n' \times n}$ and $U_a \in \mathbb{R}^{n' \times 2n}$ are weight matrices. Note that the model reduces to the RNN Encoder–Decoder (Cho et al., 2014a) if we fix $c_i$ to $\overrightarrow{h}_{T_x}$.

Model | Updates (×10^5) | Epochs | Hours | GPU | Train NLL | Dev. NLL
RNNenc-30 | 8.46 | 6.4 | 109 | TITAN BLACK | 28.1 | 53.0
RNNenc-50 | 6.00 | 4.5 | 108 | Quadro K-6000 | 44.0 | 43.6
RNNsearch-30 | 4.71 | 3.6 | 113 | TITAN BLACK | 26.7 | 47.2
RNNsearch-50 | 2.88 | 2.2 | 111 | Quadro K-6000 | 40.7 | 38.1
RNNsearch-50⋆ | 6.67 | 5.0 | 252 | Quadro K-6000 | 36.7 | 35.2

Table 2: Learning statistics and relevant information. Each update corresponds to updating the parameters once using a single minibatch. One epoch is one pass through the training set. NLL is the average negative conditional log-probability of the sentences in either the training set or the development set. Note that the lengths of the sentences differ.
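To make the decoder equations of A.2.2 concrete, here is a minimal sketch of one RNNsearch decoder step in plain Python, using lists of floats in place of Theano tensors. It assumes matrices are stored as lists of rows and, as in the text, omits all bias terms. The helper names (`matvec`, `vadd`, `precompute_keys`, `attention_context`, `gated_decoder_step`) and the keys of the weight dictionary `P` are hypothetical and chosen only for illustration; this is not the authors' implementation. `precompute_keys` reflects the remark in A.1.2 that $U_a h_j$ does not depend on $i$ and can therefore be computed once per source sentence; `n_att` below plays the role of $n'$.

```python
import math


def matvec(M, v):
    """Multiply a matrix stored as a list of rows by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def vadd(*vs):
    """Element-wise sum of equally long vectors."""
    return [sum(xs) for xs in zip(*vs)]


def softmax(xs):
    """Softmax over a list; subtracting the max keeps exp from overflowing."""
    top = max(xs)
    exps = [math.exp(x - top) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(x):
    """Logistic sigmoid, written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def precompute_keys(annotations, U_a):
    """U_a h_j does not depend on i, so compute it once per source sentence."""
    return [matvec(U_a, h_j) for h_j in annotations]


def attention_context(s_prev, annotations, keys, W_a, v_a):
    """One step of the alignment model; returns (alpha_i, c_i).

    e_ij = v_a^T tanh(W_a s_{i-1} + U_a h_j), alpha_ij = softmax over j,
    c_i = sum_j alpha_ij h_j.
    """
    query = matvec(W_a, s_prev)
    scores = [sum(v * math.tanh(q + k) for v, q, k in zip(v_a, query, key))
              for key in keys]
    alpha = softmax(scores)
    context = [sum(a * h_j[k] for a, h_j in zip(alpha, annotations))
               for k in range(len(annotations[0]))]
    return alpha, context


def gated_decoder_step(s_prev, ey_prev, c, P):
    """Gated hidden unit of the decoder (biases omitted, as in the text).

    ey_prev is the embedding E y_{i-1}; P is a dict of weight matrices with
    hypothetical keys "W", "U", "C", "W_z", "U_z", "C_z", "W_r", "U_r", "C_r".
    """
    z = [sigmoid(x) for x in vadd(matvec(P["W_z"], ey_prev),
                                  matvec(P["U_z"], s_prev),
                                  matvec(P["C_z"], c))]
    r = [sigmoid(x) for x in vadd(matvec(P["W_r"], ey_prev),
                                  matvec(P["U_r"], s_prev),
                                  matvec(P["C_r"], c))]
    reset_state = [r_k * s_k for r_k, s_k in zip(r, s_prev)]
    s_tilde = [math.tanh(x) for x in vadd(matvec(P["W"], ey_prev),
                                          matvec(P["U"], reset_state),
                                          matvec(P["C"], c))]
    # s_i = (1 - z_i) o s_{i-1} + z_i o s~_i
    return [(1 - z_k) * s_k + z_k * t_k
            for z_k, s_k, t_k in zip(z, s_prev, s_tilde)]
```

Two quick sanity checks follow from the equations: setting $v_a$ to zero makes every score $e_{ij}$ equal, so $\alpha_{ij} = 1/T_x$ and $c_i$ reduces to the mean annotation; with all decoder weights set to zero, both gates output 0.5 and the candidate state is 0, so $s_i = 0.5\, s_{i-1}$.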
With the decoder state $s_{i-1}$, the context $c_i$ and the last generated word $y_{i-1}$, we define the probability of a target word $y_i$ as

$$p(y_i \mid s_i, y_{i-1}, c_i) \propto \exp\left(y_i^\top W_o t_i\right),$$

where

$$t_i = \left[\max\left\{\tilde{t}_{i,2j-1}, \tilde{t}_{i,2j}\right\}\right]_{j=1,\ldots,l}^\top$$

and $\tilde{t}_{i,k}$ is the $k$-th element of a vector $\tilde{t}_i$, which is computed by

$$\tilde{t}_i = U_o s_{i-1} + V_o E y_{i-1} + C_o c_i.$$

$W_o \in \mathbb{R}^{K_y \times l}$, $U_o \in \mathbb{R}^{2l \times n}$, $V_o \in \mathbb{R}^{2l \times m}$ and $C_o \in \mathbb{R}^{2l \times 2n}$ are weight matrices. This can be understood as having a deep output (Pascanu et al., 2014) with a single maxout hidden layer (Goodfellow et al., 2013).

A.2.3 MODEL SIZE

For all the models used in this paper, the size of a hidden layer $n$ is 1000, the word embedding dimensionality $m$ is 620, and the size of the maxout hidden layer in the deep output $l$ is 500. The number of hidden units in the alignment model $n'$ is 1000.

B TRAINING PROCEDURE

B.1 PARAMETER INITIALIZATION

We initialized the recurrent weight matrices $U, U_z, U_r, \overleftarrow{U}, \overleftarrow{U}_z, \overleftarrow{U}_r, \overrightarrow{U}, \overrightarrow{U}_z$ and $\overrightarrow{U}_r$ as random orthogonal matrices. For $W_a$ and $U_a$, we initialized them by sampling each element from the Gaussian distribution of mean 0 and variance $0.001^2$. All the elements of $v_a$ and all the bias vectors were initialized to zero. Any other weight matrix was initialized by sampling from the Gaussian distribution of mean 0 and variance $0.01^2$.

B.2 TRAINING

We used the stochastic gradient descent (SGD) algorithm. Adadelta (Zeiler, 2012) was used to automatically adapt the learning rate of each parameter ($\epsilon = 10^{-6}$ and $\rho = 0.95$). Whenever the $L_2$-norm of the gradient of the cost function exceeded a predefined threshold of 1, we explicitly rescaled the gradient so that its norm equaled the threshold (Pascanu et al., 2013b). Each SGD update direction was computed with a minibatch of 80 sentences.
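The update rule in B.2 combines gradient-norm rescaling (Pascanu et al., 2013b) with Adadelta (Zeiler, 2012). A minimal sketch follows, assuming all parameters are flattened into a single list of floats and that the norm is taken over the whole gradient vector. The names `clip_by_global_norm` and `Adadelta` are hypothetical; the recurrences follow Zeiler (2012) with the $\epsilon$ and $\rho$ values quoted above.

```python
import math


def clip_by_global_norm(grads, threshold=1.0):
    """Rescale a flat gradient so that its L2 norm is at most `threshold`.

    Gradients whose norm is already within the threshold are returned unchanged.
    """
    norm = math.sqrt(sum(g * g for g in grads))
    if norm > threshold:
        return [g * threshold / norm for g in grads]
    return list(grads)


class Adadelta:
    """Adadelta (Zeiler, 2012) over a flat list of parameters.

    No global learning rate is needed: rho is the decay rate of the running
    averages and eps is the conditioning constant.
    """

    def __init__(self, size, rho=0.95, eps=1e-6):
        self.rho = rho
        self.eps = eps
        self.avg_sq_grad = [0.0] * size   # running average E[g^2]
        self.avg_sq_delta = [0.0] * size  # running average E[dx^2]

    def step(self, params, grads):
        """Return updated parameters; running averages are updated in place."""
        rho, eps = self.rho, self.eps
        updated = []
        for k, (p, g) in enumerate(zip(params, grads)):
            self.avg_sq_grad[k] = rho * self.avg_sq_grad[k] + (1 - rho) * g * g
            # dx = -(RMS[dx]_{t-1} / RMS[g]_t) * g
            delta = (-math.sqrt(self.avg_sq_delta[k] + eps)
                     / math.sqrt(self.avg_sq_grad[k] + eps) * g)
            self.avg_sq_delta[k] = (rho * self.avg_sq_delta[k]
                                    + (1 - rho) * delta * delta)
            updated.append(p + delta)
        return updated
```

At every update one would first call `clip_by_global_norm` on the minibatch gradient and then pass the result to `Adadelta.step`. Because Adadelta scales each step by the ratio of two running RMS values, only $\epsilon$ and $\rho$ need to be specified, which matches the two hyperparameters reported in B.2.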
At each update, our implementation requires time proportional to the length of the longest sentence in a minibatch. Hence, to minimize wasted computation, before every 20th update we retrieved 1600 sentence pairs, sorted them according to their lengths and split them into 20 minibatches. The training data was shuffled once before training and was traversed sequentially in this manner.

In Table 2 we present the statistics related to training all the models used in the experiments.

C TRANSLATIONS OF LONG SENTENCES

Source: An admitting privilege is the right of a doctor to admit a patient to a hospital or a medical centre to carry out a diagnosis or a procedure, based on his status as a health care worker at a hospital.
Reference: Le privilège d’admission est le droit d’un médecin, en vertu de son statut de membre soignant d’un hôpital, d’admettre un patient dans un hôpital ou un centre médical afin d’y délivrer un diagnostic ou un traitement.
RNNenc-50: Un privilège d’admission est le droit d’un médecin de reconnaître un patient à l’hôpital ou un centre médical d’un diagnostic ou de prendre un diagnostic en fonction de son état de santé.
RNNsearch-50: Un privilège d’admission est le droit d’un médecin d’admettre un patient à un hôpital ou un centre médical pour effectuer un diagnostic ou une procédure, selon son statut de travailleur des soins de santé à l’hôpital.
Google Translate: Un privilège admettre est le droit d’un médecin d’admettre un patient dans un hôpital ou un centre médical pour effectuer un diagnostic ou une procédure, fondée sur sa situation en tant que travailleur de soins de santé dans un hôpital.

Source: This kind of experience is part of Disney’s efforts to “extend the lifetime of its series and build new relationships with audiences via digital platforms that are becoming ever more important,” he added.
Reference: Ce type d’expérience entre dans le cadre des efforts de Disney pour “étendre la durée de vie de ses séries et construire de nouvelles relations avec son public grâce à des plateformes numériques qui sont de plus en plus importantes”, a-t-il ajouté.
RNNenc-50: Ce type d’expérience fait partie des initiatives du Disney pour “prolonger la durée de vie de ses nouvelles et de développer des liens avec les lecteurs numériques qui deviennent plus complexes.
RNNsearch-50: Ce genre d’expérience fait partie des efforts de Disney pour “prolonger la durée de vie de ses séries et créer de nouvelles relations avec des publics via des plateformes numériques de plus en plus importantes”, a-t-il ajouté.
Google Translate: Ce genre d’expérience fait partie des efforts de Disney à “étendre la durée de vie de sa série et construire de nouvelles relations avec le public par le biais des plates-formes numériques qui deviennent de plus en plus important”, at-il ajouté.

Source: In a press conference on Thursday, Mr Blair stated that there was nothing in this video that might constitute a “reasonable motive” that could lead to criminal charges being brought against the mayor.
Reference: En conférence de presse, jeudi, M. Blair a affirmé qu’il n’y avait rien dans cette vidéo qui puisse constituer des “motifs raisonnables” pouvant mener au dépôt d’une accusation criminelle contre le maire.
RNNenc-50: Lors de la conférence de presse de jeudi, M. Blair a dit qu’il n’y avait rien dans cette vidéo qui pourrait constituer une “motivation raisonnable” pouvant entraîner des accusations criminelles portées contre le maire.
RNNsearch-50: Lors d’une conférence de presse jeudi, M. Blair a déclaré qu’il n’y avait rien dans cette vidéo qui pourrait constituer un “motif raisonnable” qui pourrait conduire à des accusations criminelles contre le maire.
Google Translate: Lors d’une conférence de presse jeudi, M. Blair a déclaré qu’il n’y avait rien dans cette vido qui pourrait constituer un “motif raisonnable” qui pourrait mener à des accusations criminelles portes contre le maire.

Table 3: The translations generated by RNNenc-50 and RNNsearch-50 from long source sentences (30 words or more) selected from the test set. For each source sentence, we also show the gold-standard translation. The translations by Google Translate were made on 27 August 2014.
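Appendix B.2 notes that each update costs time proportional to the longest sentence in the minibatch, which motivates sorting chunks of 1600 sentence pairs by length before splitting them into 20 minibatches of 80. A minimal sketch of that procedure in Python follows. The text does not say which length was used as the sort key, so sorting by source length and then target length is an assumption here, as are the function names and the `padded_source_cost` proxy for the per-update cost.

```python
import random


def length_sorted_minibatches(pairs, batch_size=80, batches_per_chunk=20, seed=None):
    """Yield minibatches of (source, target) token lists of similar length.

    The corpus is shuffled once and then traversed sequentially in chunks
    of batch_size * batches_per_chunk pairs (1600 with the defaults). Each
    chunk is sorted by length and cut into batches_per_chunk minibatches.
    """
    data = list(pairs)
    random.Random(seed).shuffle(data)  # shuffle once, before training
    chunk_size = batch_size * batches_per_chunk
    for start in range(0, len(data), chunk_size):
        chunk = data[start:start + chunk_size]
        # Assumption: sort by source length, breaking ties by target length.
        chunk.sort(key=lambda pair: (len(pair[0]), len(pair[1])))
        for b in range(0, len(chunk), batch_size):
            yield chunk[b:b + batch_size]


def padded_source_cost(batch):
    """Hypothetical cost proxy: batch size times the longest source sentence."""
    return len(batch) * max(len(src) for src, _ in batch)
```

Because each chunk is sorted before it is cut, the sum of per-batch maximum lengths is never larger than it would be if the same chunk were cut into consecutive unsorted batches of the same size, so the padded cost per chunk can only go down.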

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

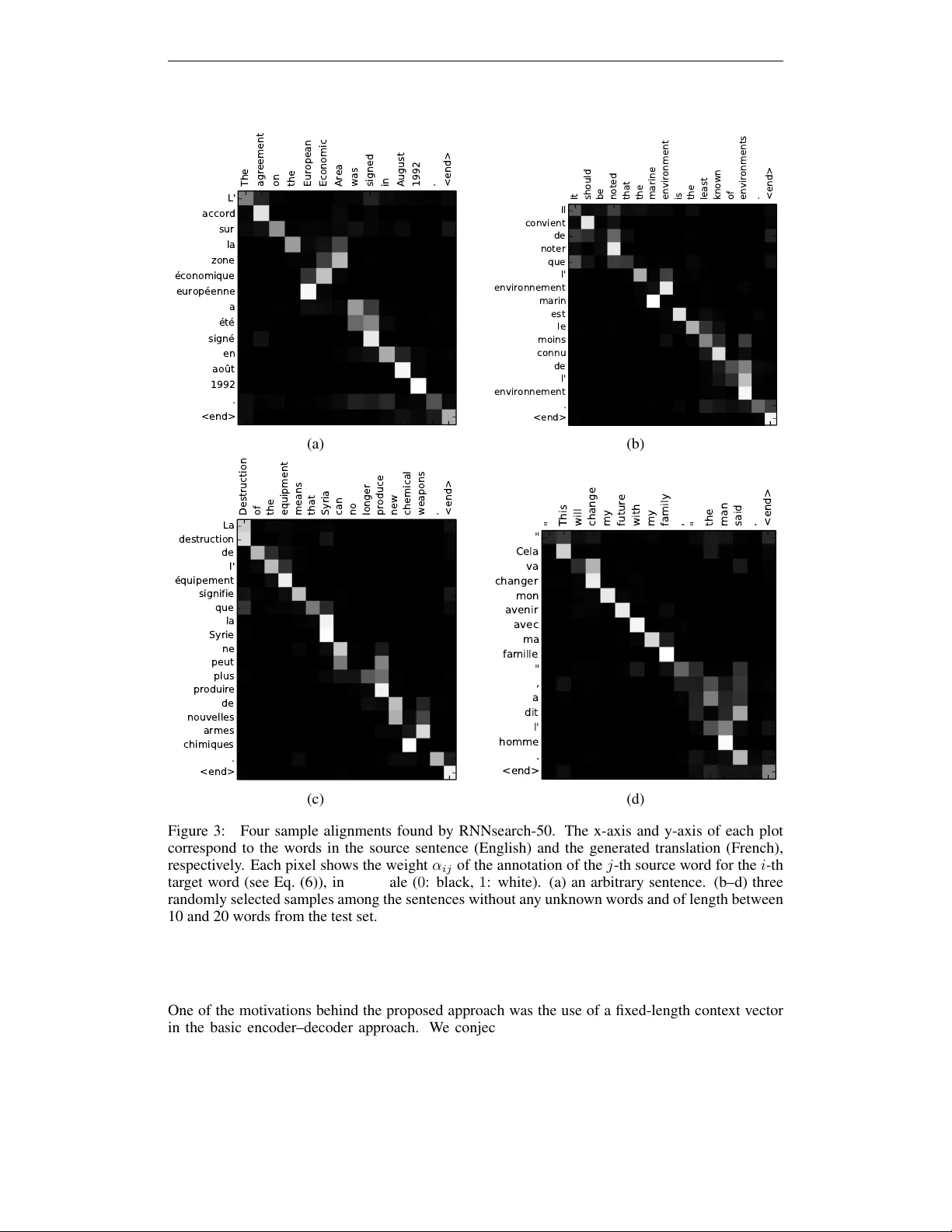

Leave a Comment