Convex Optimization for Linear Query Processing under Approximate Differential Privacy

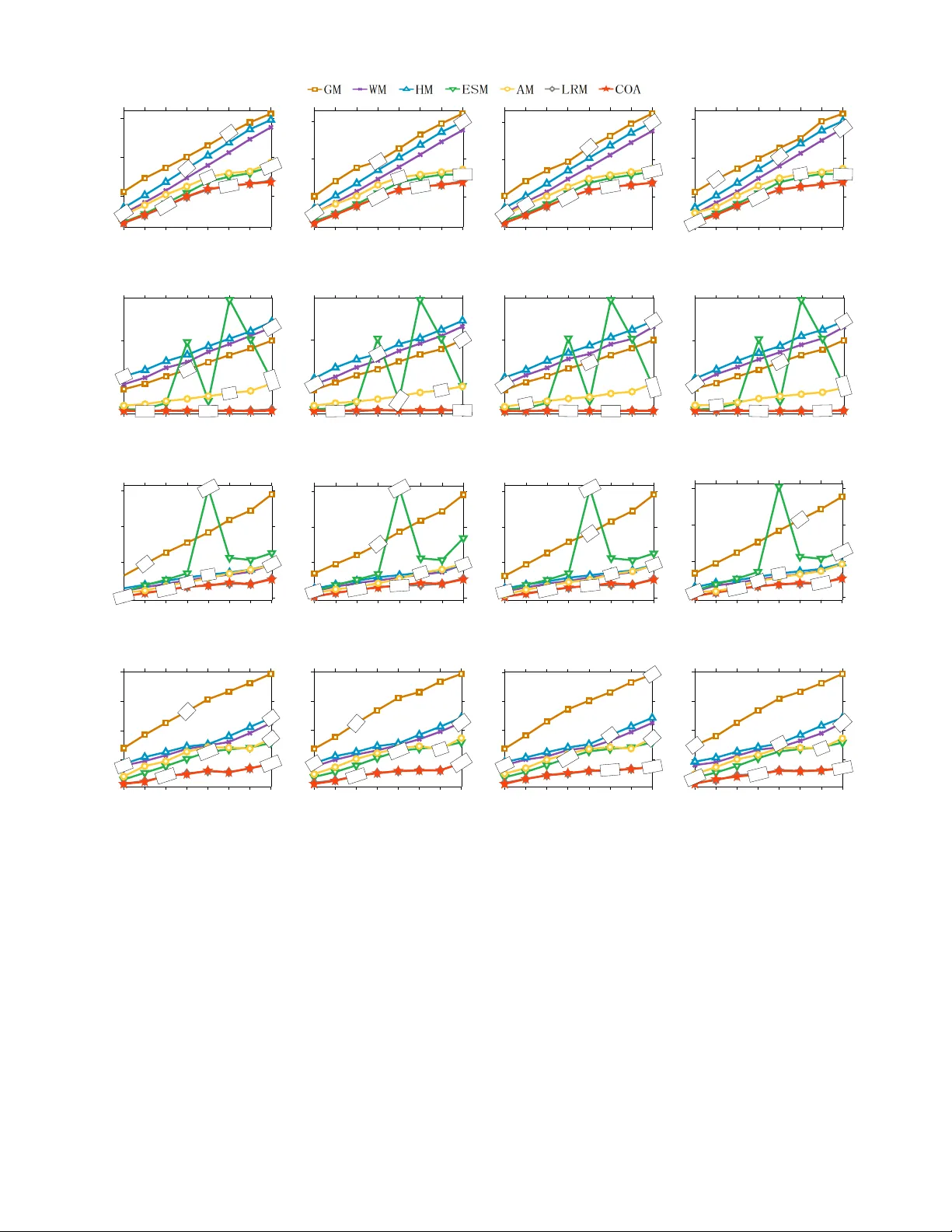

Differential privacy enables organizations to collect accurate aggregates over sensitive data with strong, rigorous guarantees on individuals' privacy. Previous work has found that under differential privacy, computing multiple correlated aggregates …

Authors: Ganzhao Yuan, Yin Yang, Zhenjie Zhang